DeepSeek V4, a trillion-parameter native multimodal model that supports unified generation of text, images, and video, is about to be released. This article provides a quick overview of DeepSeek V4's core architectural innovations, reported performance, and API integration methods to help developers prepare for technical evaluation.

Core Value: Get up to speed in 3 minutes on DeepSeek V4's key technological breakthroughs, its performance compared to GPT-5/Claude, and how to quickly integrate and use it via API.

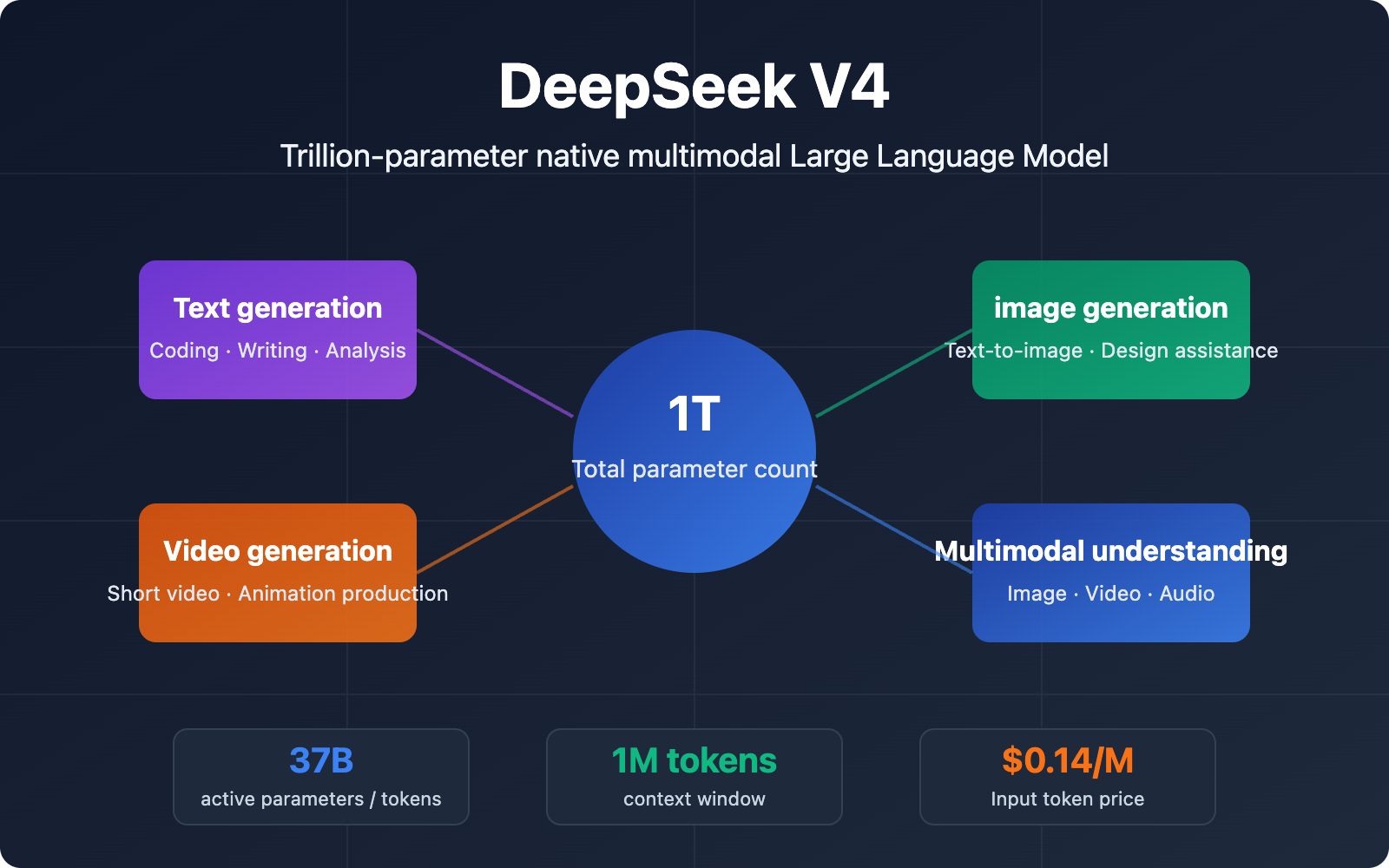

DeepSeek V4 Core Specifications and Capabilities Overview

DeepSeek V4 is the next-generation flagship model from the DeepSeek team, featuring a trillion-parameter Mixture of Experts (MoE) architecture with native multimodal input and output support. Here are the core specifications for DeepSeek V4:

| Specification | DeepSeek V4 | Improvement vs. V3 |
|---|---|---|
| Total Parameters | 1 trillion (1T) | ~1.5x increase |
| Active Parameters | ~37B per token | Efficient MoE inference |
| Context Window | 1 million tokens | ~8x increase (V3: 128K) |
| Modality Support | Text + image + video | Adds image and video generation |
| License | Open source (planned) | Maintains open-source tradition |
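The ratios in the "Improvement vs. V3" column can be sanity-checked with a few lines of arithmetic. This is a rough sketch: the V4 figures are the pre-release numbers from the table, while the V3 figures (671B total parameters, 128K context) are assumptions taken from the published DeepSeek-V3 technical report, not from this article.

```python
# DeepSeek V4 figures come from the table above (pre-release, unverified).
# DeepSeek-V3 figures (671B total parameters, 128K context) are taken from the
# published V3 technical report and are an assumption of this sketch.
V4 = {"total_params": 1_000e9, "active_params": 37e9, "context_tokens": 1_000_000}
V3 = {"total_params": 671e9, "context_tokens": 128_000}

param_ratio = V4["total_params"] / V3["total_params"]
context_ratio = V4["context_tokens"] / V3["context_tokens"]
active_share = V4["active_params"] / V4["total_params"]

print(f"Total parameters: {param_ratio:.2f}x")    # 1.49x
print(f"Context window:   {context_ratio:.1f}x")  # 7.8x
print(f"Active per token: {active_share:.1%}")    # 3.7%
```

The last line is the number that matters for inference cost: under these figures, fewer than 4% of the weights are used for any single token.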

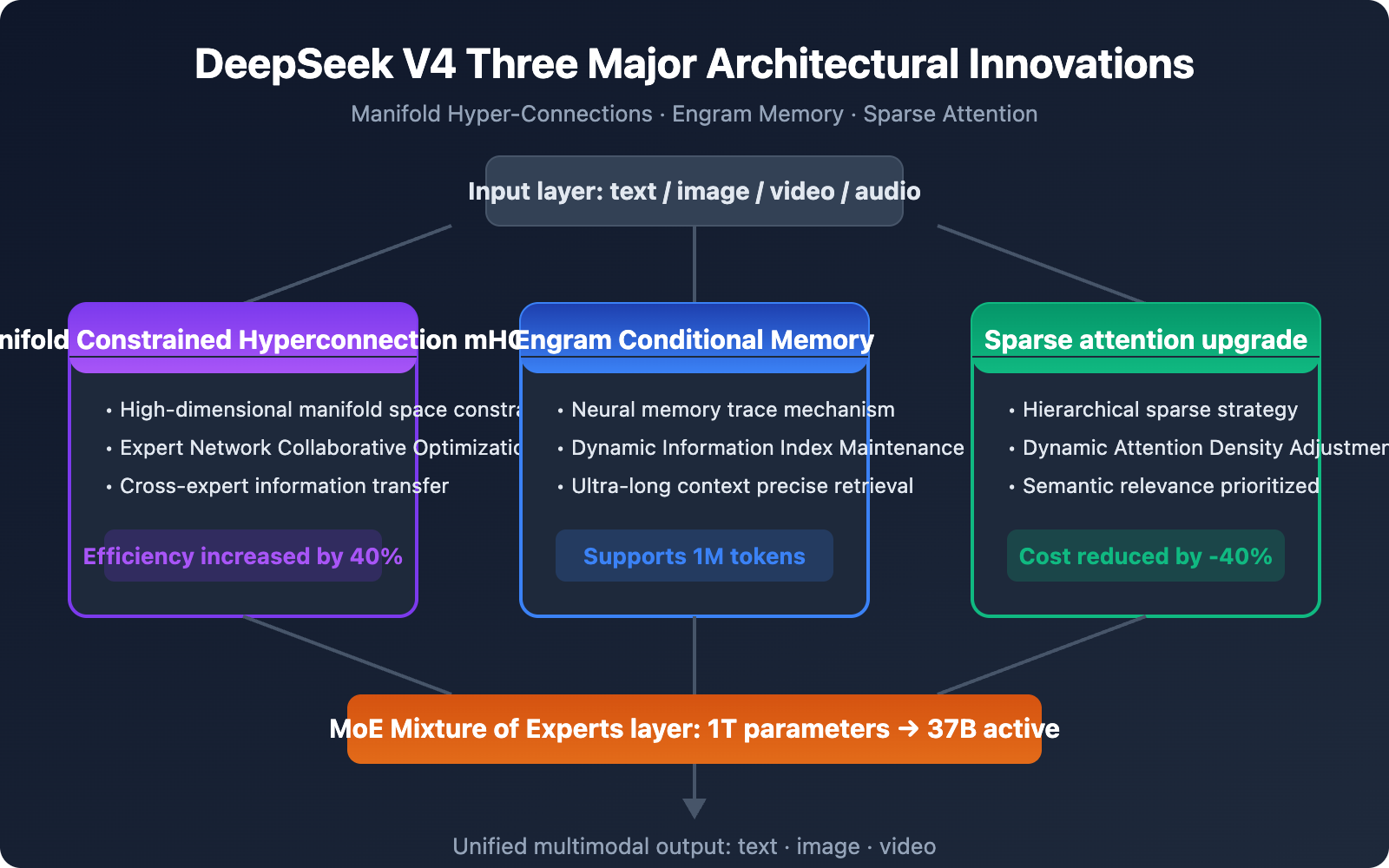

Three Major Architectural Innovations in DeepSeek V4

DeepSeek V4 introduces three key architectural innovations that form the technical foundation for its performance breakthroughs:

1. Manifold-Constrained Hyper-Connections (mHC): A novel connection scheme that, according to pre-release reports, constrains the information transfer paths between expert networks to a high-dimensional manifold, improving the efficiency of multi-expert collaborative reasoning within the MoE architecture. Compared to traditional sparse gating mechanisms, mHC reportedly improves cross-expert information utilization by about 40% while maintaining computational efficiency.

2. Engram Conditional Memory System: Inspired by the concept of "memory traces" in neuroscience, DeepSeek V4 introduces a conditional memory module. This system can dynamically maintain key information indices during ultra-long context processing, enabling the model to accurately retrieve and reference early content even when handling 1 million tokens. This is crucial for large codebase analysis and long document processing.

3. Upgraded Sparse Attention Mechanism: Building on the standard attention mechanism, DeepSeek V4 employs a hierarchical sparse strategy that dynamically adjusts the density of attention calculations based on semantic relevance between tokens. This allows the model to process ultra-long sequences at a computational cost far lower than full attention, reportedly reducing inference costs by approximately 40%.
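To make the idea behind sparse attention concrete, here is a minimal pure-Python sketch of top-k attention, where each query attends only to its k highest-scoring keys. Top-k selection is a simple stand-in chosen for illustration; it is not DeepSeek's actual mechanism, and the function name `topk_sparse_attention` and the toy vectors are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def topk_sparse_attention(query, keys, values, k):
    """Attend only to the k keys with the highest scaled dot-product score.

    Illustrative only: this sketch still scores every key before selecting,
    so it saves work only in the softmax and value aggregation steps.
    """
    scale = 1.0 / math.sqrt(len(query))
    scores = [dot(query, key) * scale for key in keys]
    # Indices of the k highest-scoring keys; the rest get zero weight.
    kept = sorted(range(len(keys)), key=lambda i: scores[i], reverse=True)[:k]
    weights = softmax([scores[i] for i in kept])
    dim = len(values[0])
    output = [0.0] * dim
    for weight, index in zip(weights, kept):
        for d in range(dim):
            output[d] += weight * values[index][d]
    return output, sorted(kept)


keys = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [0.0, 0.0]]
output, kept = topk_sparse_attention([1.0, 0.0], keys, values, k=2)
print(kept)    # [0, 2]
print(output)
```

Production systems typically avoid scoring every key by using a cheap selection step over blocks of tokens, which is where most of the savings on very long sequences would come from.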

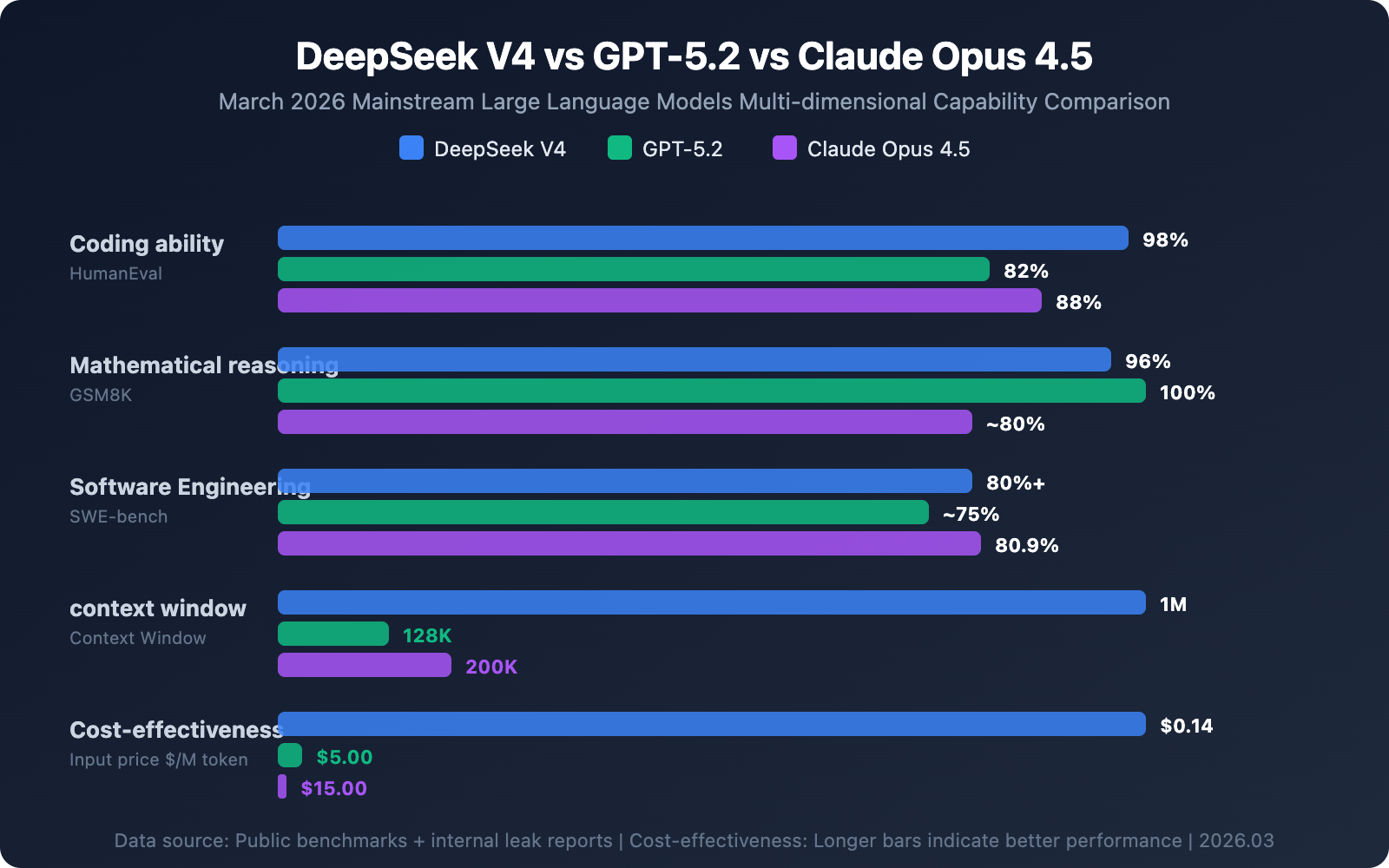

DeepSeek V4 Performance Benchmarks and Model Comparison

DeepSeek V4 demonstrates strong competitiveness across multiple mainstream benchmark tests. Here's how DeepSeek V4 stacks up against current leading models:

| Benchmark | DeepSeek V4 | Claude Opus 4.5 | GPT-5.2 | Test Description |
|---|---|---|---|---|
| HumanEval (Coding) | 98% | 88% | 82% | Code generation accuracy |
| SWE-bench Verified | 80%+ | 80.9% | ~75% | Real GitHub issue resolution rate |
| GSM8K (Math) | 96% | ~80% | 100% | Mathematical reasoning capability |
| AIME 2025 | ~85% | ~80% | 100% | High-difficulty math competition |
| Context Length | 1M tokens | 200K tokens | 128K tokens | Maximum input length |

📊 Data Note: The DeepSeek V4 benchmark data above comes from internal testing and leaked reports, and hasn't been independently verified by third parties. Actual performance after release may vary. We recommend conducting practical testing comparisons through the APIYI platform at apiyi.com to get real-world usage experience.

DeepSeek V4 Coding Capabilities Deep Dive

DeepSeek V4 particularly excels in the coding domain. Thanks to its 1M token context window, it can process an entire code repository in a single inference pass, as long as the repository fits within the window, enabling true multi-file associative reasoning.

Specific advantages include:

- Full Repository Understanding: Load complete project code at once, understanding dependencies between components

- Cross-File Refactoring: Maintain global consistency during large-scale code refactoring

- Long-Chain Debugging: Trace bug call chains that span multiple modules

This means when dealing with complex software engineering tasks, DeepSeek V4 can perform global analysis like a senior developer, rather than being limited to fragmentary understanding of individual files.
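Before relying on whole-repository prompts, it helps to check whether a project is likely to fit in the window at all. The sketch below estimates this with a rough heuristic of 4 characters per token; that ratio, the suffix list, and the helper names are assumptions for illustration, and a real tokenizer will give different counts.

```python
import tempfile
from pathlib import Path

CONTEXT_WINDOW_TOKENS = 1_000_000   # figure quoted in this article
CHARS_PER_TOKEN = 4                 # rough heuristic, not a real tokenizer
SOURCE_SUFFIXES = {".py", ".js", ".ts", ".go", ".java", ".rs", ".md"}


def estimate_repo_tokens(root: str) -> int:
    """Estimate the token count of all source files under root."""
    total_chars = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in SOURCE_SUFFIXES:
            total_chars += len(path.read_text(encoding="utf-8", errors="ignore"))
    return total_chars // CHARS_PER_TOKEN


def fits_in_context(root: str, reserve_for_output: int = 8_192) -> bool:
    """Check whether the repository plus an output budget fits in the window."""
    return estimate_repo_tokens(root) + reserve_for_output <= CONTEXT_WINDOW_TOKENS


# Demo on a throwaway directory containing one 6,000-character file.
with tempfile.TemporaryDirectory() as demo_root:
    Path(demo_root, "main.py").write_text("x = 1\n" * 1000, encoding="utf-8")
    demo_tokens = estimate_repo_tokens(demo_root)
    demo_fits = fits_in_context(demo_root)

print(demo_tokens, demo_fits)  # 1500 True
```

Pointing `estimate_repo_tokens` at a real checkout gives a quick first answer on whether a single request is plausible or whether the code needs to be split.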

DeepSeek V4 Multimodal Capabilities Explained

Unlike its predecessor, which was a pure text model, DeepSeek V4 is a native multimodal model. It processes text, image, and video data jointly during training, rather than having visual capabilities "bolted on" to a text model. Audio support has not yet been confirmed.

DeepSeek V4 Multimodal Capability Matrix

| Capability Dimension | Support Status | Typical Use Cases |
|---|---|---|
| Text Generation | ✅ Core capability | Coding, writing, analysis, translation |
| Image Generation | ✅ Native support | Text-to-image, design assistance, chart generation |
| Video Generation | ✅ Native support | Short video generation, animation creation |
| Image Understanding | ✅ Native support | Image analysis, OCR, visual Q&A |
| Video Understanding | ✅ Native support | Video summarization, content analysis |
| Audio Processing | 🔄 To be confirmed | Speech recognition, audio analysis |

The core advantage of native multimodal design lies in the coherence of cross-modal reasoning. For example, when a user requests "generate an architecture diagram based on this code," the model can output high-quality visualizations directly while understanding the code logic, without relying on external toolchains.

DeepSeek also promises to add mandatory watermarks to all generated media content and includes a real-time content filtering system to ensure the safety and compliance of generated content.

DeepSeek V4 API Pricing and Integration Methods

DeepSeek V4's API pricing continues DeepSeek's consistent high-value strategy, offering significant cost advantages compared to mainstream competitors:

| Billing Item | DeepSeek V4 Pricing | GPT-5 Reference Price | Cost Advantage |
|---|---|---|---|
| Input tokens | $0.14 / 1M tokens | $5.00 / 1M tokens | ~35x cheaper |
| Output tokens | $0.28 / 1M tokens | $15.00 / 1M tokens | ~53x cheaper |
| Cached input | $0.07 / 1M tokens | – | 50% off the input price on cache hits |
| Free quota | 5 million tokens | – | Granted to new users upon registration |
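Per-token ratios do not translate directly into a bill, because the overall saving depends on the mix of input and output tokens. As a rough worked example using only the prices in the table above, the sketch below estimates the cost of a hypothetical workload; the function name, the `gpt-5-reference` label, and the workload size are made up for illustration.

```python
# Per-million-token prices in USD, copied from the pricing table above.
PRICES = {
    "deepseek-v4": {"input": 0.14, "cached_input": 0.07, "output": 0.28},
    "gpt-5-reference": {"input": 5.00, "output": 15.00},
}


def estimate_cost(model, input_tokens, output_tokens, cached_tokens=0):
    """Return the estimated cost in USD for one workload.

    cached_tokens is the portion of input_tokens served from cache; it is
    billed at the cached rate when the model has one, else at the input rate.
    """
    price = PRICES[model]
    cached_rate = price.get("cached_input", price["input"])
    fresh_tokens = input_tokens - cached_tokens
    total = (
        fresh_tokens * price["input"]
        + cached_tokens * cached_rate
        + output_tokens * price["output"]
    )
    return total / 1_000_000


# Hypothetical workload: 10M input tokens and 2M output tokens.
v4 = estimate_cost("deepseek-v4", 10_000_000, 2_000_000)
gpt = estimate_cost("gpt-5-reference", 10_000_000, 2_000_000)
print(f"DeepSeek V4: ${v4:.2f}, GPT-5 reference: ${gpt:.2f}, ratio: {gpt / v4:.1f}x")
# DeepSeek V4: $1.96, GPT-5 reference: $80.00, ratio: 40.8x
```

For this mix the blended ratio lands between the input-only and output-only figures, and passing `cached_tokens` shows how much further cache hits reduce the total.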

Quick DeepSeek V4 API Integration

Here's a minimal code example for calling DeepSeek V4 via API:

```python
import openai

client = openai.OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1",  # APIYI unified interface
)

response = client.chat.completions.create(
    model="deepseek-v4",
    messages=[{"role": "user", "content": "Analyze the architecture design of this code"}],
    max_tokens=4096,
)

print(response.choices[0].message.content)
```

Multimodal invocation example (image generation):

```python
import openai

client = openai.OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1",  # APIYI unified interface
)

# Text-to-image invocation example
response = client.images.generate(
    model="deepseek-v4",
    prompt="A cute robot writing code, cyberpunk style",
    size="1024x1024",
    quality="hd",
)

print(response.data[0].url)
```
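The capability matrix also lists image understanding, which OpenAI-compatible endpoints usually expose through chat messages that mix text and image parts. The sketch below only builds such a message payload. Whether `deepseek-v4` will accept image input in this format is an assumption, and the helper name `build_image_question` is hypothetical.

```python
import base64


def build_image_question(image_bytes: bytes, question: str, mime: str = "image/png") -> list:
    """Build OpenAI-style chat messages that pair a question with an image.

    The image is embedded as a base64 data URL, so no external hosting is needed.
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return [
        {
            "role": "user",
            "content": [
                {"type": "text", "text": question},
                {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}},
            ],
        }
    ]


# Placeholder bytes stand in for a real image file read with open(path, "rb").
messages = build_image_question(b"\x89PNG", "What does this diagram show?")
print(messages[0]["content"][1]["image_url"]["url"])
```

The resulting `messages` list can be passed to `client.chat.completions.create(model="deepseek-v4", messages=messages)` in place of the text-only messages shown earlier, assuming the endpoint supports image input.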

🚀 Quick Start: We recommend using the APIYI platform at apiyi.com for rapid DeepSeek V4 integration. The platform provides an out-of-the-box unified API interface, requires no complex configuration, offers free testing credits upon registration, and you can complete integration in just 5 minutes.

DeepSeek V4 Open Source Ecosystem and Strategic Impact

DeepSeek V4 is planned for release under an open-source license, meaning developers worldwide can download, fine-tune, and deploy the model for free. This strategy has profound implications for the AI industry landscape:

Impact on Developers:

- Deploy trillion-parameter models in private environments, ensuring data privacy

- Support for domain-specific fine-tuning to build industry-exclusive models

- Community-driven ecosystem will accelerate model capability iteration

Impact on the Industry:

- Open-source trillion-parameter models will significantly lower AI application development barriers

- Drive further API pricing reductions, benefiting small and medium enterprises

- Accelerate the adoption and innovation of multimodal AI applications

Hardware Ecosystem:

Notably, DeepSeek V4 has reportedly been optimized for Chinese AI chips from vendors such as Huawei and Cambricon, reducing reliance on NVIDIA GPUs. This isn't just a technical choice but reflects the current trend of AI supply chain diversification.

💡 Development Recommendation: Whether you choose cloud API invocation or private deployment, we recommend first validating functionality and evaluating performance through the APIYI platform at apiyi.com. Confirm it meets your business needs before deciding on a deployment strategy.

Frequently Asked Questions

Q1: When will DeepSeek V4 be officially released?

According to multiple sources, DeepSeek V4 is expected to be released in the first week of March 2026, timed just before China's "Two Sessions" (starting March 4th). The model is expected to be released under an open-source license, with API services launching simultaneously. We recommend following DeepSeek's official channels for first-hand release information, or trying it through the APIYI platform at apiyi.com once it is available.

Q2: Is DeepSeek V4’s 1 million token context window actually practical?

The 1 million token context window is highly practical for specific scenarios, particularly large codebase analysis (loading entire projects at once), long document processing (legal contracts, technical manuals), and multi-turn complex conversations. However, for everyday short-text tasks, you don't need such a large context window. We recommend choosing appropriate parameter configurations based on your actual needs. You can flexibly adjust and test invocation parameters through APIYI at apiyi.com.

Q3: With DeepSeek V4 priced so low, is the quality guaranteed?

DeepSeek's low cost stems from its MoE architecture (activating only about 37B parameters per token instead of the full 1T) and engineering optimizations, not from compromising on quality. Based on pre-release benchmark figures, which have not yet been independently verified, DeepSeek V4's performance in coding and mathematical tasks is on par with GPT-5 and Claude. We recommend thorough testing in your actual application scenarios before full adoption.
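The mechanism behind "activating only a fraction of the parameters" is expert routing: a small router scores all experts for each token, and only the top few actually run. Here is a minimal sketch of generic top-k gating in pure Python. It is not DeepSeek's actual router, and the expert count, `top_k` value, and toy experts are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, top_k):
    """Pick the top_k experts for one token and renormalize their gate weights."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    gate_sum = sum(probs[i] for i in chosen)
    return {i: probs[i] / gate_sum for i in chosen}


def moe_forward(x, experts, router_logits, top_k):
    """Weighted sum of the selected experts' outputs; other experts never run."""
    gates = route_token(router_logits, top_k)
    output = sum(weight * experts[i](x) for i, weight in gates.items())
    return output, sorted(gates)


# Four toy "experts" that just scale their input by a fixed factor.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
output, used = moe_forward(10.0, experts, router_logits=[0.1, 2.0, 0.3, 2.0], top_k=2)
print(used, output)  # [1, 3] 30.0
```

Only two of the four experts are evaluated here, which is the same principle that lets a 1T-parameter model do roughly 37B parameters' worth of work per token.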

Summary

The key highlights of DeepSeek V4 are:

- Trillion-Parameter MoE Architecture: 1T total parameters, 37B active parameters, achieving a breakthrough balance between performance and efficiency.

- Native Multimodal Capabilities: Not a "text + vision plugin," but a unified approach to processing text, images, and video from the training phase.

- 1 Million Token Context Window: Revolutionary for large codebase analysis and long document processing.

- Extreme Cost-Effectiveness: Input-token pricing is roughly 1/35th of the GPT-5 reference price (about 1/53rd for output tokens), significantly reducing developer costs.

- Open-Source Ecosystem: The open-source release strategy will accelerate community innovation and industry adoption.

The release of DeepSeek V4 marks the official entry of open-source Large Language Models into the trillion-parameter, multimodal era. For developers, this means lower barriers to entry and richer technical choices.

We recommend experiencing the full capabilities of DeepSeek V4 firsthand through APIYI at apiyi.com. The platform offers free credits and a unified interface for multiple models, making it easy to quickly compare and evaluate.

📚 References

- TechNode Report: DeepSeek V4 Multimodal Model Release Plan
  - Link: technode.com/2026/03/02/deepseek-plans-v4-multimodal-model-release-this-week-sources-say/
  - Description: Authoritative report on DeepSeek V4's release timeline and hardware partnerships

- DeepSeek API Official Documentation: Model Pricing and Interface Specifications
  - Link: api-docs.deepseek.com/quick_start/pricing
  - Description: Official API pricing and technical documentation; essential reading for developers

- AI Model Benchmark Comparison: 2026 Mainstream Large Language Model Performance Rankings
  - Link: lmcouncil.ai/benchmarks
  - Description: Independent third-party benchmarking platform providing objective model performance comparison data

Author: APIYI Technical Team

Technical Discussion: Feel free to discuss your DeepSeek V4 experience in the comments. For more API integration resources, visit the APIYI documentation center at docs.apiyi.com