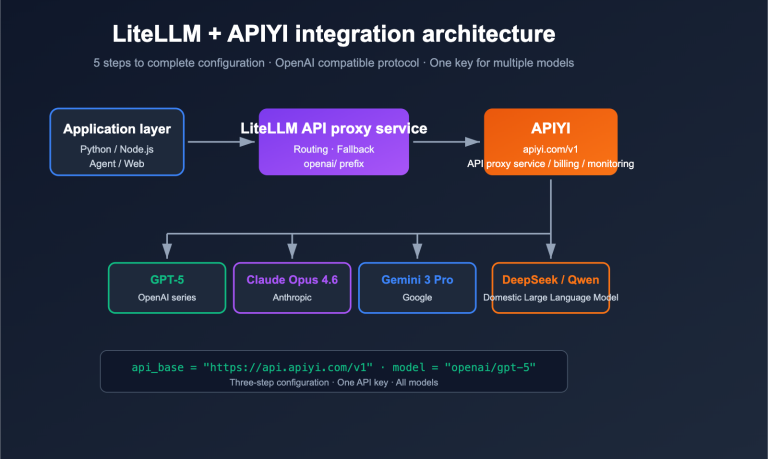

Complete tutorial for configuring LiteLLM with a third-party API proxy service: 5 steps to connect to APIYI

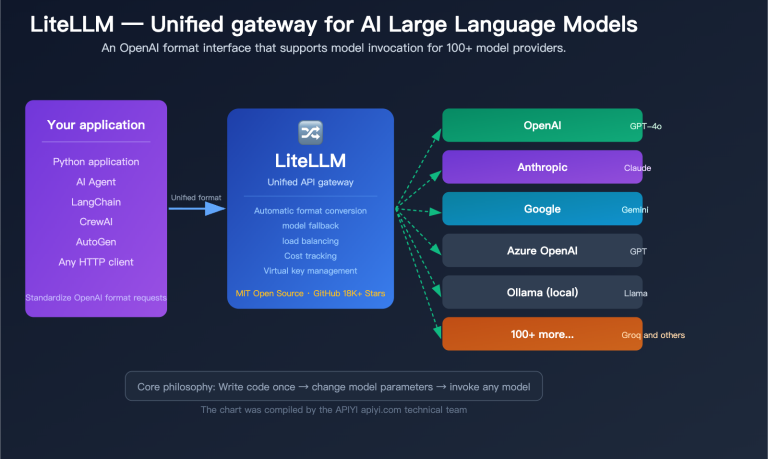

How can you use LiteLLM to orchestrate multiple large language models, such as OpenAI's GPT models, Claude, Gemini, and DeepSeek, side by side without being blocked by overseas account, network, or payment issues? The answer is to connect LiteLLM to an OpenAI-compatible API proxy service. In this article, we’ll use LiteLLM + APIYI (apiyi.com) as an example to walk you through…