Author's Note: GPT-5.4 has been officially released and its API is now available. It supports a 1 million token context window, native computer control, and full-resolution vision. APIYI has already integrated it; register now to start calling it.

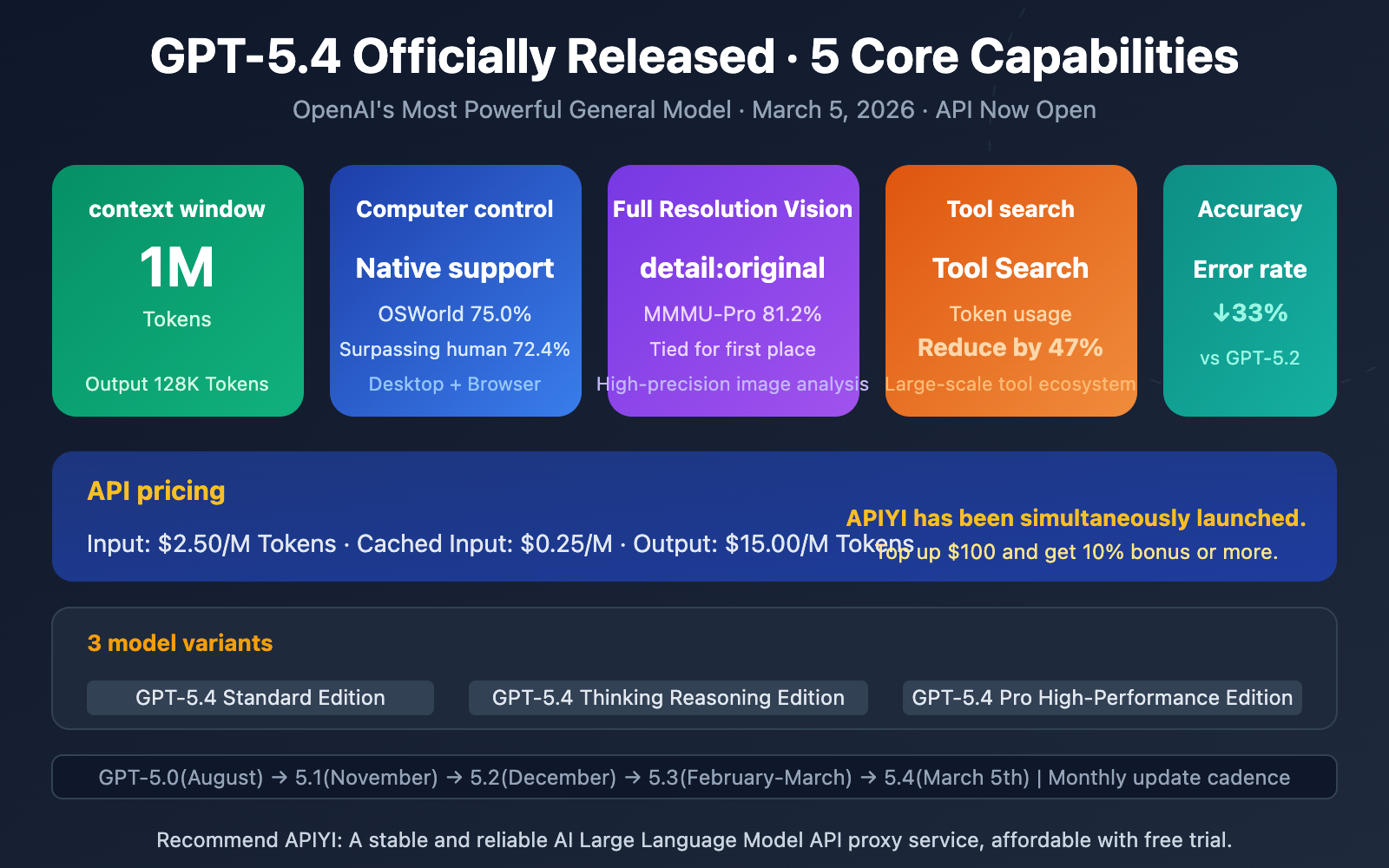

GPT-5.4 was officially launched on March 5, 2026. It's OpenAI's most powerful general-purpose model to date. For the first time, it combines a 1 million token context window, native computer control, and full-resolution vision into a single model. Its GDPval benchmark score jumped from 70.9% with GPT-5.2 to 83.0%, and it even surpassed human performance on the OSWorld computer control test.

Core Value: By reading this article, you'll understand GPT-5.4's 5 core capabilities, benchmark performance, API access methods, and pricing details, and learn how to call it immediately through the APIYI platform.

GPT-5.4 API Key Points

| Key Point | Specification | Developer Value |
|---|---|---|
| 1 Million Token Context | Input 1,050K, Output 128K | Process an entire novel or a complete codebase in one go |
| Native Computer Control | Screenshot + keyboard/mouse control, OSWorld 75.0% | Automate cross-application workflows |
| Full-Resolution Vision | `detail: "original"` parameter | High-precision scenarios such as architectural blueprints and medical imaging |
| Tool Search | Token usage reduced by 47% | Sharply cuts costs in large-scale tool ecosystems |
| Accuracy Improvement | Single-claim error rate reduced by 33% | More reliable for high-risk fields such as finance and law |
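Before sending a very large prompt, it can help to check it against these limits. The sketch below is a minimal pre-flight check, assuming the 1,050K input and 128K output limits from the table (treated independently, as the table lists them) and the 272K long-context pricing threshold from the pricing section. The `estimate_tokens` helper is a hypothetical rough heuristic of about 4 characters per token, not the model's real tokenizer, so its counts are approximate.

```python
# Limits as listed in the key-points table; the 272K threshold comes from the pricing table.
MAX_INPUT_TOKENS = 1_050_000
MAX_OUTPUT_TOKENS = 128_000
LONG_CONTEXT_THRESHOLD = 272_000


def estimate_tokens(text: str) -> int:
    """Hypothetical rough estimate: ~4 characters per token (not the real tokenizer)."""
    return max(1, len(text) // 4) if text else 0


def preflight(prompt: str, max_output_tokens: int) -> list[str]:
    """Return problems/warnings for a planned request; an empty list means it looks fine."""
    issues = []
    input_tokens = estimate_tokens(prompt)
    if input_tokens > MAX_INPUT_TOKENS:
        issues.append(f"input ~{input_tokens:,} tokens exceeds the {MAX_INPUT_TOKENS:,}-token limit")
    elif input_tokens > LONG_CONTEXT_THRESHOLD:
        issues.append(
            f"input ~{input_tokens:,} tokens is above {LONG_CONTEXT_THRESHOLD:,}; "
            "long-context pricing applies"
        )
    if max_output_tokens > MAX_OUTPUT_TOKENS:
        issues.append(f"max_output_tokens {max_output_tokens:,} exceeds the {MAX_OUTPUT_TOKENS:,}-token limit")
    return issues


print(preflight("x" * 2_000_000, max_output_tokens=4_000))
```

An empty list means the request fits; a long-context warning is not an error, only a signal that the higher rates apply.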

Three GPT-5.4 API Model Variants

For the first time, GPT-5.4 offers three variants tailored for different needs:

GPT-5.4 Standard (gpt-5.4): The general-purpose flagship model, supporting a 1 million token context, native computer control, and full-resolution vision processing. Ideal for professional scenarios requiring a balance of capability and cost.

GPT-5.4 Thinking (gpt-5.4-thinking): The reasoning-enhanced version, supporting five reasoning levels: none/low/medium/high/xhigh. Available to all paying users in ChatGPT, it's suitable for complex analysis, financial modeling, and deep research.

GPT-5.4 Pro (gpt-5.4-pro): The highest-performance version, aimed at enterprise-level tasks requiring ultimate accuracy. Available in ChatGPT Pro ($200/month) and Enterprise plans.
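Because the three variants differ mainly in model ID and reasoning depth, one way to keep that choice in one place is a small request builder. This sketch assumes the model IDs given above and the five effort levels listed for GPT-5.4 Thinking; it only assembles keyword arguments for `client.chat.completions.create` (passing the level as `reasoning_effort`, the Chat Completions parameter name) and makes no network call. Whether every variant accepts every effort level is not confirmed here.

```python
from typing import Optional

# Model IDs as named in this article.
VARIANTS = {
    "standard": "gpt-5.4",
    "thinking": "gpt-5.4-thinking",
    "pro": "gpt-5.4-pro",
}
EFFORT_LEVELS = ("none", "low", "medium", "high", "xhigh")


def build_request(prompt: str, variant: str = "standard", effort: Optional[str] = None) -> dict:
    """Assemble kwargs for client.chat.completions.create(**kwargs)."""
    if variant not in VARIANTS:
        raise ValueError(f"unknown variant {variant!r}; expected one of {sorted(VARIANTS)}")
    kwargs = {
        "model": VARIANTS[variant],
        "messages": [{"role": "user", "content": prompt}],
    }
    if effort is not None:
        if effort not in EFFORT_LEVELS:
            raise ValueError(f"unknown effort {effort!r}; expected one of {EFFORT_LEVELS}")
        kwargs["reasoning_effort"] = effort
    return kwargs


req = build_request("Build a three-statement financial model", variant="thinking", effort="xhigh")
print(req["model"], req["reasoning_effort"])
```

The result is meant to be unpacked into the call, for example `client.chat.completions.create(**req)`, so switching variants becomes a one-argument change.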

🎯 Access Recommendation: APIYI (apiyi.com) has already integrated the full GPT-5.4 series, with pricing aligned with OpenAI's official website. You can start calling it immediately upon registration. Top-ups of $100 or more get a 10% bonus, making it a convenient gateway for domestic developers to quickly access the GPT-5.4 API.

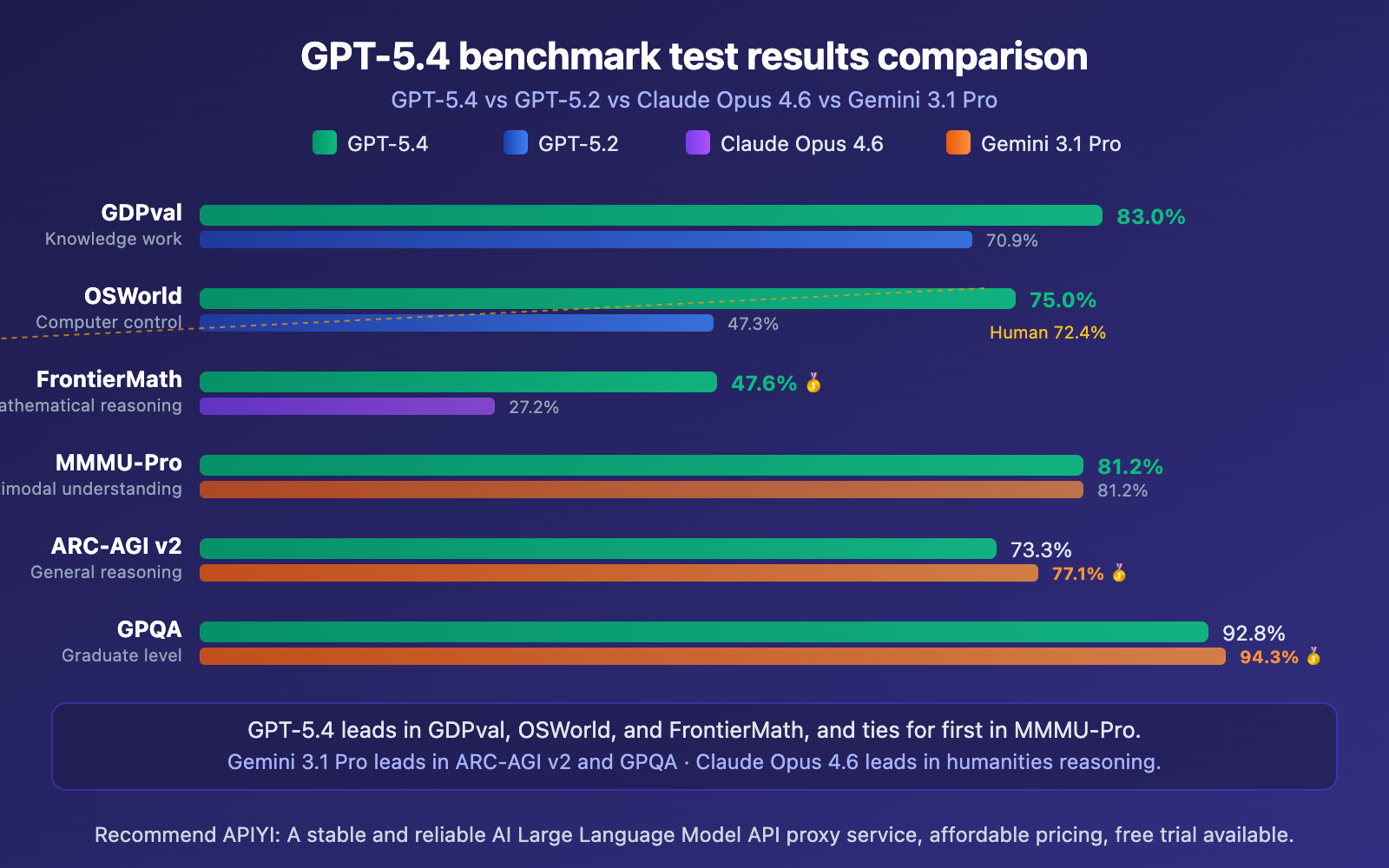

GPT-5.4 API Benchmark Test Results

GPT-5.4 API Benchmark Detailed Data

| Benchmark | GPT-5.4 | GPT-5.2 | Claude Opus 4.6 | Gemini 3.1 Pro |
|---|---|---|---|---|
| GDPval (Knowledge Work) | 83.0% 🥇 | 70.9% | — | — |
| OSWorld (Computer Control) | 75.0% 🥇 | 47.3% | — | — |
| FrontierMath (Mathematics) | 47.6% 🥇 | — | 27.2% | — |
| MMMU-Pro (Multimodal) | 81.2% 🥇 | — | — | 81.2% 🥇 |
| ARC-AGI v2 (General Reasoning) | 73.3% | — | — | 77.1% 🥇 |
| GPQA (Graduate-Level) | 92.8% | — | — | 94.3% 🥇 |
| BrowseComp (Web Browsing) | 82.7% | — | — | 85.9% 🥇 |

A few key takeaways:

GPT-5.4 leads clearly on professional knowledge work (GDPval), matching or surpassing industry experts in 83% of comparisons, up from 70.9% for GPT-5.2. In practice, this puts it close to, and in some cases beyond, professional-level performance in real-world tasks such as financial analysis, spreadsheet processing, and PowerPoint creation.

The computer control result (OSWorld 75.0%) is the most notable breakthrough: it surpasses the 72.4% human performance baseline. GPT-5.4 recognizes screen content from screenshots and uses keyboard and mouse input to complete complex, cross-application workflows.

🎯 Practical Advice: Benchmarks are just a reference; real-world performance varies by scenario. We recommend getting free test credits through APIYI at apiyi.com to compare GPT-5.4's actual performance against other models in your specific use cases.
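One way to run such a comparison is to send the same prompt to several models through one OpenAI-compatible client and record each answer with its wall-clock latency. This is a minimal sketch, assuming `client` is an `openai.OpenAI` instance pointed at `https://vip.apiyi.com/v1` as in the minimal integration example later in this article; the model list is whatever IDs your account can access.

```python
import time
from typing import Any


def compare_models(client: Any, models: list[str], prompt: str) -> dict[str, dict]:
    """Send one prompt to each model and collect the reply text, any error, and latency."""
    results = {}
    for model in models:
        start = time.perf_counter()
        try:
            response = client.chat.completions.create(
                model=model,
                messages=[{"role": "user", "content": prompt}],
            )
            text, error = response.choices[0].message.content, None
        except Exception as exc:  # keep going if one model is unavailable
            text, error = None, str(exc)
        results[model] = {
            "text": text,
            "error": error,
            "seconds": round(time.perf_counter() - start, 2),  # includes network time
        }
    return results


# Example (the "gpt-5.2" ID is an assumption; use the IDs your provider lists):
# results = compare_models(client, ["gpt-5.4", "gpt-5.2"], "Summarize this contract clause: ...")
```

Catching exceptions per model keeps one unavailable or misspelled model ID from aborting the whole comparison.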

GPT-5.4 API Pricing and Access Methods

GPT-5.4 API Official Pricing

| Pricing Item | Price (USD / 1M tokens) | Description |
|---|---|---|
| Standard Input | $2.50 | Input ≤ 272K tokens |
| Cached Input | $0.25 | 90% discount; large savings on repeated content |
| Standard Output | $15.00 | Maximum output 128K tokens |
| Long-Context Input (>272K) | $5.00 | Portion exceeding 272K tokens billed at 2x rate |
| Long-Context Output (input >272K) | $22.50 | Output billed at 1.5x rate when input exceeds 272K |

The APIYI platform has been updated with pricing consistent with OpenAI's official site: Input $2.50/M, Output $15.00/M. Recharge $100 or more and get a 10% bonus. Register now to access the full GPT-5.4 model series.
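To see how these rates combine, here is a minimal cost estimator built from the table above. It is a sketch, not an official calculator, and two points are assumptions: the pricing table says only the portion above 272K is billed at the long rate, while the FAQ below says the whole input price doubles, so the function supports both readings through a `whole_request_long_rate` flag; and cached tokens are treated as a prefix of the input that is always billed at $0.25/M.

```python
# USD per 1M tokens, from the pricing table above.
PRICE_INPUT = 2.50
PRICE_CACHED_INPUT = 0.25
PRICE_OUTPUT = 15.00
PRICE_LONG_INPUT = 5.00
PRICE_LONG_OUTPUT = 22.50
LONG_THRESHOLD = 272_000
PER_M = 1_000_000


def estimate_cost(input_tokens: int, output_tokens: int, cached_tokens: int = 0,
                  whole_request_long_rate: bool = True) -> float:
    """Estimate the USD cost of one GPT-5.4 request.

    Assumptions (not confirmed by the source):
    - cached_tokens is the cached prefix of input_tokens, always billed at the cached rate.
    - Once input exceeds LONG_THRESHOLD, all output is billed at the long-output rate.
    - whole_request_long_rate=True bills all uncached input at the long rate (FAQ wording);
      False bills only tokens past the threshold at the long rate (pricing-table wording).
    """
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    uncached = input_tokens - cached_tokens
    is_long = input_tokens > LONG_THRESHOLD

    if not is_long:
        input_cost = uncached * PRICE_INPUT
    elif whole_request_long_rate:
        input_cost = uncached * PRICE_LONG_INPUT
    else:
        # Uncached tokens follow the cached prefix; only those past the threshold get the long rate.
        below = max(0, min(uncached, LONG_THRESHOLD - cached_tokens))
        input_cost = below * PRICE_INPUT + (uncached - below) * PRICE_LONG_INPUT

    output_rate = PRICE_LONG_OUTPUT if is_long else PRICE_OUTPUT
    total = input_cost + cached_tokens * PRICE_CACHED_INPUT + output_tokens * output_rate
    return total / PER_M


print(f"${estimate_cost(100_000, 10_000):.4f}")  # short request: $0.4000
print(f"${estimate_cost(300_000, 10_000):.4f}")  # long, whole-request reading: $1.7250
print(f"${estimate_cost(300_000, 10_000, whole_request_long_rate=False):.4f}")  # portion reading: $1.0450
```

The gap between the last two lines shows why it is worth confirming which reading your provider's billing actually uses before planning long-context workloads.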

GPT-5.4 API Minimal Integration Example

Here's the simplest way to call it, up and running in just 10 lines of code:

```python
import openai

client = openai.OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1"
)

response = client.chat.completions.create(
    model="gpt-5.4",
    messages=[{"role": "user", "content": "Analyze the key metrics in this quarterly financial report"}]
)

print(response.choices[0].message.content)
```
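For long answers you may prefer to print text as it arrives. The openai Python SDK supports this with `stream=True`, which yields chunks whose new text is in `chunk.choices[0].delta.content`. The sketch below assumes the `client` from the example above and that the APIYI endpoint passes streaming through unchanged (not confirmed here); it echoes each piece and returns the assembled reply.

```python
from typing import Any


def stream_reply(client: Any, prompt: str, model: str = "gpt-5.4") -> str:
    """Stream a chat completion, echoing text as it arrives, and return the full reply."""
    stream = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
        stream=True,
    )
    parts = []
    for chunk in stream:
        # Some chunks carry no choices or no text (e.g. role or finish markers); skip them.
        if not chunk.choices:
            continue
        delta = chunk.choices[0].delta.content
        if delta:
            print(delta, end="", flush=True)
            parts.append(delta)
    print()
    return "".join(parts)
```

Usage: `full_text = stream_reply(client, "Analyze the key metrics in this quarterly financial report")`.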

Complete Implementation Code (Full-Resolution Vision & Reasoning Effort)

```python
import base64
import mimetypes

import openai

client = openai.OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1"
)

# Full-resolution image analysis
def analyze_image(image_path: str, prompt: str) -> str:
    # Encode the local file as a base64 data URL; fall back to PNG if the type can't be guessed
    mime_type = mimetypes.guess_type(image_path)[0] or "image/png"
    with open(image_path, "rb") as f:
        image_data = base64.b64encode(f.read()).decode()

    response = client.chat.completions.create(
        model="gpt-5.4",
        messages=[{
            "role": "user",
            "content": [
                {"type": "text", "text": prompt},
                {"type": "image_url", "image_url": {
                    "url": f"data:{mime_type};base64,{image_data}",
                    "detail": "original"  # full-resolution mode
                }}
            ]
        }]
    )
    return response.choices[0].message.content

# Deep analysis with a reasoning effort level
def deep_analysis(prompt: str, effort: str = "high") -> str:
    response = client.chat.completions.create(
        model="gpt-5.4",
        messages=[{"role": "user", "content": prompt}],
        # Chat Completions takes a flat reasoning_effort string;
        # the nested reasoning={"effort": ...} form belongs to the Responses API
        reasoning_effort=effort  # none/low/medium/high/xhigh
    )
    return response.choices[0].message.content

result = analyze_image("financial_report.png", "Analyze the anomalous data in this financial statement")
print(result)
```

Recommendation: Register an account via APIYI at apiyi.com to get your API key. Recharge $100 or more to enjoy a 10% bonus credit. The platform supports the full GPT-5.4 series and other mainstream models through a unified interface, making it easy to quickly compare and switch.

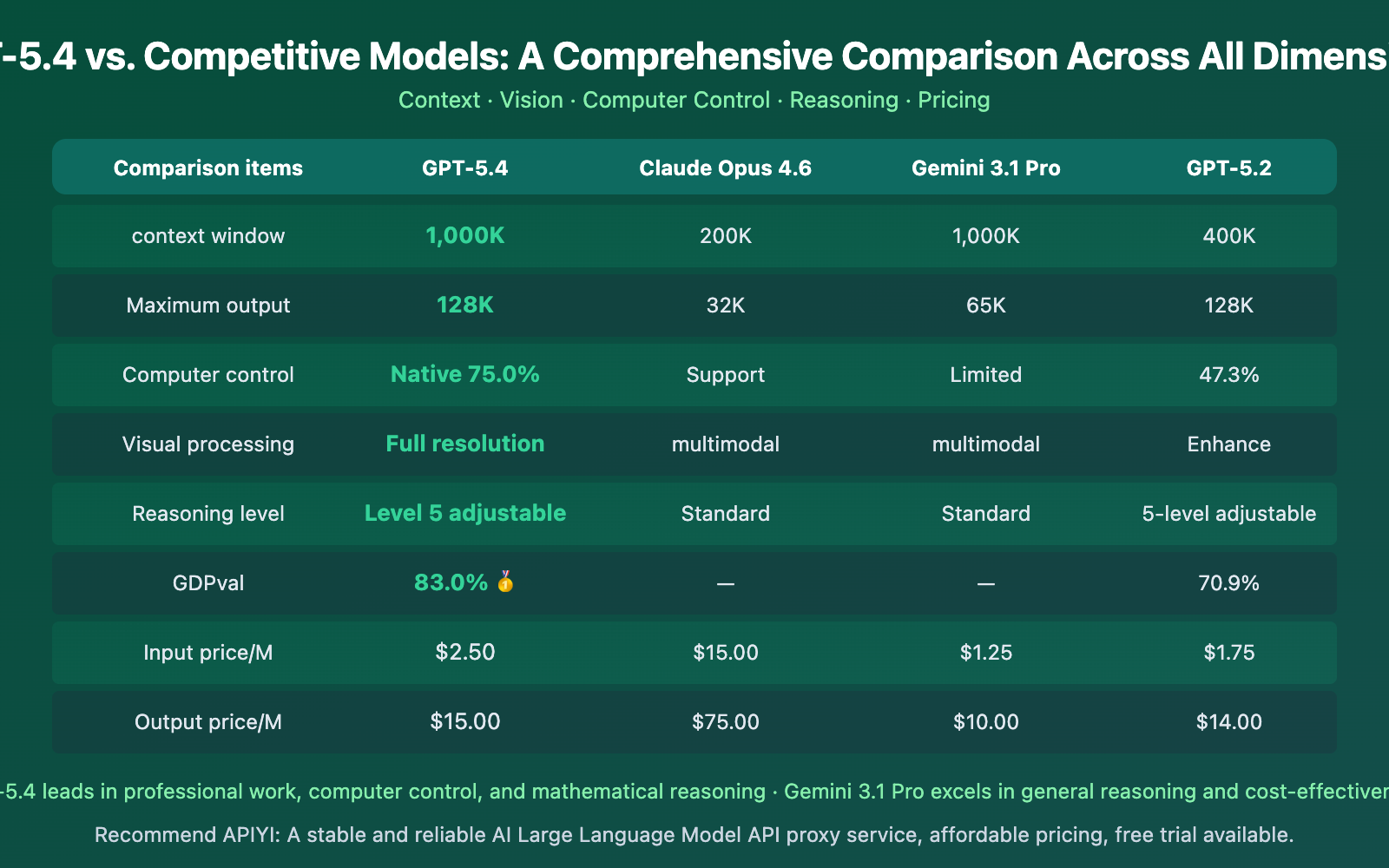

GPT-5.4 vs. Competitor Models

GPT-5.4 API Model Selection Guide by Use Case

| Use Case | Recommended Model | Reason | Available Platform |
|---|---|---|---|
| Daily Chat & Writing | GPT-5.3 Instant | Lowest cost (~$0.30/M input) | APIYI, etc. |
| Complex Analysis & Research | GPT-5.4 Thinking | GDPval 83.0%, xhigh reasoning | APIYI, etc. |
| Automated Workflows | GPT-5.4 Standard | Native computer control, OSWorld 75.0% | APIYI, etc. |
| Large Codebase Refactoring | GPT-5.3 Codex | Optimized for programming, 1M context | APIYI, etc. |
| Real-time Coding Assistance | GPT-5.3 Codex Spark | 1000+ tokens/s ultra-fast response | APIYI, etc. |
| Ultimate Accuracy Needs | GPT-5.4 Pro | Enterprise-grade highest performance | APIYI, etc. |

Selection Advice: Not sure which model to choose? Start with GPT-5.3 Instant for daily tasks (lowest cost), and switch to GPT-5.4 for complex jobs. All models are accessible via the unified APIYI apiyi.com interface, so you don't need to switch platforms.
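The "start cheap, escalate when needed" advice can be automated as a simple model cascade: ask the low-cost model first, and re-ask GPT-5.4 only when a check you define rejects the answer. This is a minimal sketch under several assumptions: `client` is an OpenAI-compatible client such as the one in the integration example above; `gpt-5.3-instant` is a hypothetical placeholder ID (the article does not give GPT-5.3 Instant's API model ID); and `looks_complete` is a hypothetical acceptance check you would replace with your own.

```python
from typing import Any, Callable

CHEAP_MODEL = "gpt-5.3-instant"  # hypothetical ID; check your provider's model list
STRONG_MODEL = "gpt-5.4"


def ask(client: Any, model: str, prompt: str) -> str:
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content or ""


def looks_complete(answer: str) -> bool:
    """Hypothetical acceptance check: non-trivial length and no 'I'm not sure' hedge."""
    return len(answer.strip()) > 20 and "i'm not sure" not in answer.lower()


def cascade(client: Any, prompt: str,
            accept: Callable[[str], bool] = looks_complete) -> tuple[str, str]:
    """Try the cheap model first; escalate if `accept` rejects its answer.

    Returns (model_used, answer).
    """
    answer = ask(client, CHEAP_MODEL, prompt)
    if accept(answer):
        return CHEAP_MODEL, answer
    return STRONG_MODEL, ask(client, STRONG_MODEL, prompt)
```

The trade-off is that rejected prompts are paid for twice, so a cascade saves money only when the cheap model's answers are accepted most of the time.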

Frequently Asked Questions

Q1: Is the full 1M-token context billed at the standard rate?

No. When a request's input exceeds 272K tokens, the input price doubles ($5.00/M) and the output price rises by 50% ($22.50/M). For most use cases, keep input under 272K tokens where possible. If you genuinely need an ultra-long context, structure requests so that repeated content can be billed as cached input ($0.25/M) to reduce costs.
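Keeping input under the threshold can be partly automated by splitting large material across several requests. The sketch below packs paragraphs greedily into chunks, assuming a rough 4-characters-per-token heuristic (hypothetical; use a real tokenizer for exact counts) and an arbitrary 8K-token headroom for instructions. Paragraphs larger than the budget are hard-split by character count so every chunk fits.

```python
LONG_CONTEXT_THRESHOLD = 272_000
CHARS_PER_TOKEN = 4  # rough heuristic, not the model's tokenizer


def approx_tokens(text: str) -> int:
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def chunk_text(text: str, budget_tokens: int = LONG_CONTEXT_THRESHOLD - 8_000) -> list[str]:
    """Greedily pack paragraphs into chunks whose approximate size stays within budget_tokens."""
    max_chars = budget_tokens * CHARS_PER_TOKEN
    pieces = []
    for para in text.split("\n\n"):
        # Hard-split oversize paragraphs so every piece fits on its own.
        for start in range(0, len(para), max_chars):
            pieces.append(para[start:start + max_chars])

    chunks, current = [], ""
    for piece in pieces:
        candidate = f"{current}\n\n{piece}" if current else piece
        if approx_tokens(candidate) <= budget_tokens:
            current = candidate
        else:
            chunks.append(current)
            current = piece
    if current:
        chunks.append(current)
    return chunks
```

Each chunk can then be sent as its own request, with a final request combining the partial results; whether that beats one long-context call depends on how much the task needs cross-chunk context.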

Q2: How do I choose between GPT-5.4 and GPT-5.2?

Choose GPT-5.4 if you need computer control capabilities or full-resolution vision processing. If your tasks are primarily text-based reasoning and you're cost-sensitive, GPT-5.2 ($1.75/M input) remains the more cost-effective option. We recommend testing both models in your real-world scenarios via APIYI at apiyi.com, where you can switch between them with one click.

Q3: How can I quickly integrate GPT-5.4 via APIYI?

You can get started in just 3 steps:

- Visit APIYI at apiyi.com to register an account and obtain your API key.

- Top up your balance; top-ups of $100 or more receive a 10% bonus credit.

- Set your `base_url` to `https://vip.apiyi.com/v1` and your `model` to `gpt-5.4` to begin making calls.

Summary

Here are the key takeaways from the official launch of the GPT-5.4 API:

- Five Core Capabilities in One Model: 1M-token context window, native computer control (75.0% on OSWorld, above the human baseline), full-resolution vision, tool search (47% lower token usage), and a 33% lower single-claim error rate.

- Reasonable Pricing with Major Cache Discounts: Input at $2.50/M, output at $15.00/M, with cached input as low as $0.25/M (a 90% discount).

- Three Variants for All Scenarios: Standard for general use, Thinking for deep reasoning, and Pro for peak performance.

GPT-5.4 is currently OpenAI's most powerful general-purpose model, especially well-suited for tasks requiring computer control, ultra-long document processing, and high-precision visual analysis.

We recommend using APIYI at apiyi.com for quick and easy access to GPT-5.4. The platform offers pricing in sync with the official rates, provides a 10% bonus on top-ups of $100 or more, and gives you instant access to the full model suite upon registration.

📚 References

- OpenAI GPT-5.4 Official Announcement: Details on the GPT-5.4 release and its core capabilities
  - Link: openai.com/index/introducing-gpt-5-4/
  - Description: Official documentation for understanding GPT-5.4's new features such as computer control and visual processing.

- OpenAI GPT-5.4 API Documentation: Model specifications, pricing, and interface parameter details
  - Link: developers.openai.com/api/docs/models/gpt-5.4
  - Description: Essential reading for developers, covering technical details such as model IDs, context limits, and rate limits.

- TechCrunch GPT-5.4 Coverage: In-depth analysis of the Pro and Thinking versions
  - Link: techcrunch.com/2026/03/05/openai-launches-gpt-5-4-with-pro-and-thinking-versions/
  - Description: Explains the differences and suitable use cases for the three GPT-5.4 variants.

- LLM Stats GPT-5.4 Benchmark Tests: Complete benchmark data and competitor comparisons
  - Link: llm-stats.com/models/gpt-5.4
  - Description: GPT-5.4's rankings and specific scores across various benchmark tests.

Author: APIYI Technical Team

Technical Discussion: Feel free to discuss in the comments. For more resources, visit the APIYI documentation center at docs.apiyi.com.