The most dramatic AI event of March 2026: An anonymous model dubbed "Hunter Alpha" quietly appeared on OpenRouter, burning through 500 billion tokens a week with performance rivaling GPT-5.2 and Claude Opus 4.6. Developers worldwide were buzzing, asking, "Is this DeepSeek V4?"

The answer caught everyone off guard—it was Xiaomi's MiMo V2 Pro. A smartphone company managed to build a trillion-parameter, world-class Large Language Model in less than a year.

Also released at the same time was MiMo V2 Omni—a multimodal model capable of natively processing text, images, video, and over 10 hours of continuous audio. Both models are now live on the APIYI platform, ready for developers to integrate.

Core Value: By the end of this article, you'll understand the true capabilities of MiMo V2 Pro and Omni, how they stack up against the competition, and why they're currently among the most cost-effective AI models available.

The Hunter Alpha Saga: How Xiaomi Shocked the AI World

Timeline

| Date | Event |
|---|---|
| Early 2026 | A model codenamed "Hunter Alpha" launches anonymously on OpenRouter |
| Several weeks | Consumes 500 billion tokens per week; developers flock to it |
| Community buzz | Performance nears top-tier closed models; widely assumed to be DeepSeek V4 |
| March 18-19, 2026 | Xiaomi officially reveals: Hunter Alpha = MiMo V2 Pro |
| Same day | MiMo V2 Omni and MiMo V2 Flash are released simultaneously |
| Launch day | Xiaomi stock rises by approximately 4% |

Why this is so shocking: A company known for smartphones and smart home devices managed to train a trillion-parameter Large Language Model in under a year, with performance landing it in the global top 10. Even more surprising, the lead researcher, Luo Fuli, was a key contributor to the breakthrough models at DeepSeek.

🎯 Available Information: MiMo V2 Pro and MiMo V2 Omni are now available on the APIYI (apiyi.com) platform for direct model invocation. Given MiMo V2 Pro's performance level and its 1/3 pricing, it is currently one of the most cost-effective reasoning models on the market.

MiMo V2 Pro: A Trillion-Parameter Reasoning Model

Core Specifications

| Parameter | Details |
|---|---|
| Model Name | MiMo V2 Pro (formerly Hunter Alpha) |
| Release Date | March 18-19, 2026 |
| Total Parameters | Approx. 1 trillion (MoE architecture) |
| Active Parameters | 42B (per inference) |
| Context Window | 1,048,576 tokens (1M) |
| Max Output | 131,072 tokens (128K) |
| Input/Output | Text-only |
| Reasoning Capability | Supports extended thinking (`<think>` tag) |
| Open Source Status | Not yet open source (API access only) |
| Lead Developer | Luo Fuli (former core member of DeepSeek) |
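
The specifications mention extended thinking via a `<think>` tag, but not how the reasoning text is delivered. The following is a minimal sketch that assumes the reasoning arrives inline in the message content, wrapped in `<think>...</think>`; `split_thinking` is a hypothetical helper name, not part of any SDK.

```python
import re

# Assumption: the reasoning trace is inlined in the reply as <think>...</think>.
_THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_thinking(content: str) -> tuple[str, str]:
    """Return (reasoning, answer) from a reply that may contain <think> blocks."""
    reasoning = "\n".join(part.strip() for part in _THINK_RE.findall(content))
    answer = _THINK_RE.sub("", content).strip()
    return reasoning, answer


reasoning, answer = split_thinking("<think>2 + 2 is 4.</think>The answer is 4.")
print(answer)
```

If the platform instead returns reasoning in a separate response field, this helper is unnecessary; check the actual response before relying on it.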

Benchmark Performance: 8th Globally, 2nd in China

| Benchmark | MiMo V2 Pro | Ranking |
|---|---|---|
| Artificial Analysis Intelligence Index | 49 | Global #8 |
| PinchBench | 84.0 | Global #3 |
| ClawEval (Agentic Capability) | 61.5 | Global #3 |
| GDPval-AA | 1434 Elo | China Model #1 |
| Math Accuracy | 94.0% | Top-tier |
| Coding Accuracy | 92.5% | Surpasses Claude Sonnet 4.6 |
| Hallucination Rate | 30% | Better than peers |

Key Findings: MiMo V2 Pro ranks 3rd globally in agentic tasks (ClawEval)—trailing only Claude Opus 4.6 (66.3) and one other model. This means it excels at multi-step reasoning, tool calling, and autonomous task execution.

Pricing: 1/5 to 1/25 the Output Cost of Comparable Models

| Context Range | Input (per million tokens) | Output (per million tokens) |
|---|---|---|
| ≤ 256K | $1.00 | $3.00 |
| 256K – 1M | $2.00 | $6.00 |
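
To make the two pricing tiers concrete, here is a rough cost estimator. It is a sketch under two assumptions that the table does not state: that "256K" means 262,144 tokens, and that the whole request is billed at the tier its input length falls into. `request_cost` is a hypothetical helper.

```python
# (input-length limit in tokens, input USD/MTok, output USD/MTok), from the table above.
# Assumption: "256K" is 262,144 tokens, matching the 1,048,576-token context window.
TIERS = [
    (262_144, 1.00, 3.00),
    (1_048_576, 2.00, 6.00),
]


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one MiMo V2 Pro request.

    Assumption: the tier is chosen by input length and applies to the whole request.
    """
    for limit, in_price, out_price in TIERS:
        if input_tokens <= limit:
            return (input_tokens * in_price + output_tokens * out_price) / 1_000_000
    raise ValueError("input exceeds the 1M-token context window")


print(f"short context: ${request_cost(100_000, 4_000):.3f}")
print(f"long context:  ${request_cost(500_000, 4_000):.3f}")
```

Under these assumptions, crossing the 256K boundary doubles both rates, so trimming a prompt to fit the lower tier can matter more than trimming output.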

Price Comparison with Competitors:

| Model | Input (per million tokens) | Output (per million tokens) | Relative to MiMo V2 Pro |
|---|---|---|---|
| MiMo V2 Pro | $1.00 | $3.00 | Baseline |
| Claude Sonnet 4.6 | $3.00 | $15.00 | 3x input, 5x output |
| Claude Opus 4.6 | $15.00 | $75.00 | 15x input, 25x output |
| GPT-5.2 | ~$7.50 | ~$30.00 | ~7.5x input, ~10x output |

MiMo V2 Pro's coding ability surpasses Claude Sonnet 4.6 at one-fifth the output price (one-third the input price). Its agentic capability is close to Claude Opus 4.6 at 1/25 the output price (1/15 the input price).
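
The price multiples can be reproduced directly from the comparison table. The short sketch below computes input and output ratios separately, since the two differ; the GPT-5.2 figures are the approximate values quoted in the table.

```python
# (input, output) in USD per million tokens, copied from the comparison table.
PRICES = {
    "MiMo V2 Pro": (1.00, 3.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Claude Opus 4.6": (15.00, 75.00),
    "GPT-5.2": (7.50, 30.00),  # approximate
}


def price_ratios(model: str, baseline: str = "MiMo V2 Pro") -> tuple[float, float]:
    """Return (input ratio, output ratio) of `model` relative to `baseline`."""
    in_price, out_price = PRICES[model]
    base_in, base_out = PRICES[baseline]
    return in_price / base_in, out_price / base_out


for name in PRICES:
    in_ratio, out_ratio = price_ratios(name)
    print(f"{name}: {in_ratio:g}x input, {out_ratio:g}x output")
```

The 5x, 25x, and 10x figures correspond to output pricing; on input tokens the gap is smaller, so the real-world saving depends on a workload's input/output mix.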

💡 Best Value Recommendation: MiMo V2 Pro is currently one of the most powerful low-cost models on the market. You can access it directly via the APIYI (apiyi.com) API proxy service—it's perfect for cost-sensitive development projects that don't compromise on quality.

MiMo V2 Omni: A Multimodal AI Model

MiMo V2 Omni is Xiaomi's flagship multimodal model—a unified architecture that natively supports text, images, video, and audio.

Core Specifications

| Parameter | Details |
|---|---|
| Model Name | MiMo V2 Omni |
| Release Date | March 18-19, 2026 |
| Context Window | 256K tokens |
| Input Modalities | Text + Image + Video + Audio |
| Output Modality | Text |
| Audio Processing | Supports 10+ hours of continuous audio (industry first) |
| Pricing | Input $0.40/MTok · Output $2.00/MTok |

Multimodal Capability Highlights

1. Visual Reasoning Surpasses Claude Opus 4.6

On the MMMU-Pro (multidisciplinary visual reasoning) and CharXiv RQ (complex chart analysis) benchmarks, MiMo V2 Omni outperforms Claude Opus 4.6 and approaches Gemini 3 levels.

2. 10-Hour Continuous Audio Understanding

Xiaomi describes this as an industry-first capability: Omni can process over 10 hours of continuous audio in a single request, reportedly without quality degradation. Ideal for:

- Full-length meeting analysis and summaries

- Podcast/interview content extraction

- Long-form voice conversation understanding

- Joint audio-visual analysis
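
How audio is submitted is not specified in this article. As a sketch only, the helper below builds a chat message using the OpenAI-style `input_audio` content part; whether `mimo-v2-omni` accepts this exact shape through the APIYI endpoint is an assumption, and `audio_message` is a hypothetical name.

```python
import base64


def audio_message(prompt: str, audio_bytes: bytes, audio_format: str = "wav") -> dict:
    """Build one user message carrying a text prompt plus base64-encoded audio."""
    encoded = base64.b64encode(audio_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": prompt},
            # Assumption: the endpoint accepts OpenAI-style "input_audio" parts.
            {"type": "input_audio", "input_audio": {"data": encoded, "format": audio_format}},
        ],
    }


message = audio_message("Summarize the decisions made in this meeting.", b"\x00\x01placeholder")
print(message["content"][1]["type"])
```

For multi-hour recordings, an inline base64 payload may be impractically large, so check the platform documentation for upload or URL-based alternatives before building on this.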

3. Native Tool Calling and UI Positioning

The Omni model features built-in structured tool calling, function execution, and UI element positioning—it can be used directly in AI Agent frameworks without extra wrappers.
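
As an illustration of structured tool calling, the sketch below declares one tool in the OpenAI-compatible `tools` format and dispatches a tool call locally. The `get_weather` tool, its stub implementation, and the `run_tool_call` helper are all hypothetical; that Omni honors the `tools` parameter through this endpoint is assumed rather than confirmed here.

```python
import json

# Hypothetical tool for illustration; the schema follows the OpenAI "tools" format.
TOOLS = [{
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Look up the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}]


def get_weather(city: str) -> str:
    return f"Sunny in {city}"  # stub; a real tool would call a weather service


HANDLERS = {"get_weather": get_weather}


def run_tool_call(name: str, arguments_json: str) -> str:
    """Execute one tool call given its function name and JSON-encoded arguments."""
    handler = HANDLERS[name]
    return handler(**json.loads(arguments_json))


print(run_tool_call("get_weather", '{"city": "Beijing"}'))
```

In a real request you would pass `tools=TOOLS` to `client.chat.completions.create(...)`, then feed each entry of `message.tool_calls` (its `function.name` and `function.arguments`) into `run_tool_call` and return the result to the model as a `tool` role message.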

4. Real-world Demo

At the launch event, Xiaomi showcased a complete workflow using Omni:

User provides a simple request

↓

Omni autonomously writes the script

↓

Shoots 4 scenes

↓

Edits, synthesizes voice, and fixes rendering errors

↓

Uploads and publishes a 15-second short video

The entire process was completed autonomously.

Pricing: Ultimate Value for Multimodal

| Billing Item | Price |
|---|---|
| Input | $0.40 / million tokens |
| Output | $2.00 / million tokens |

This is one of the lowest-priced multimodal models available. Compared to Gemini 3.1 Pro ($2/$12) and Claude Opus 4.6 ($15/$75), Omni offers a massive price advantage.

🚀 Use Cases: If your application needs to handle images, video, or long-form audio, MiMo V2 Omni is a highly cost-effective choice. You can call it directly via APIYI (apiyi.com), with full support for standard OpenAI-compatible formats.

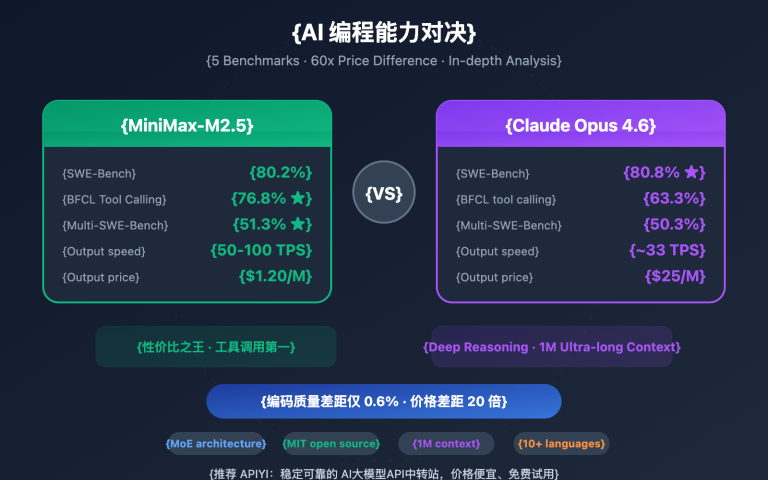

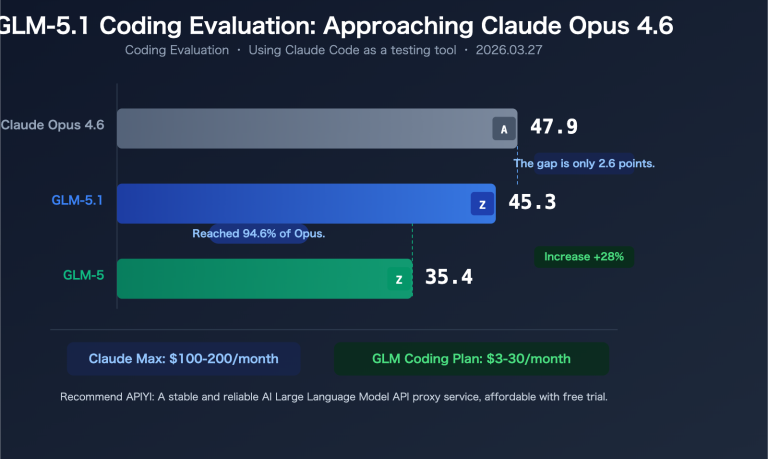

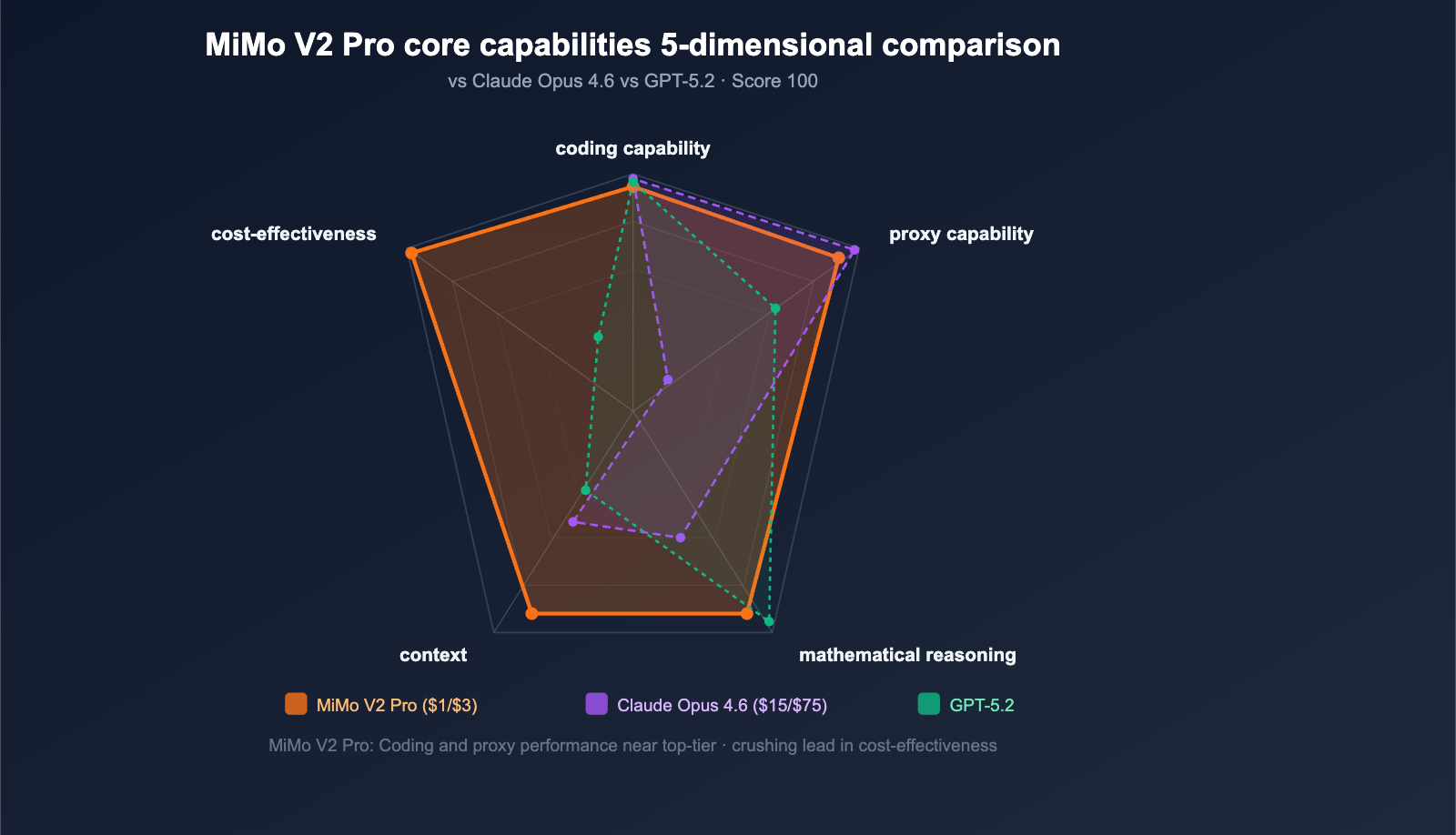

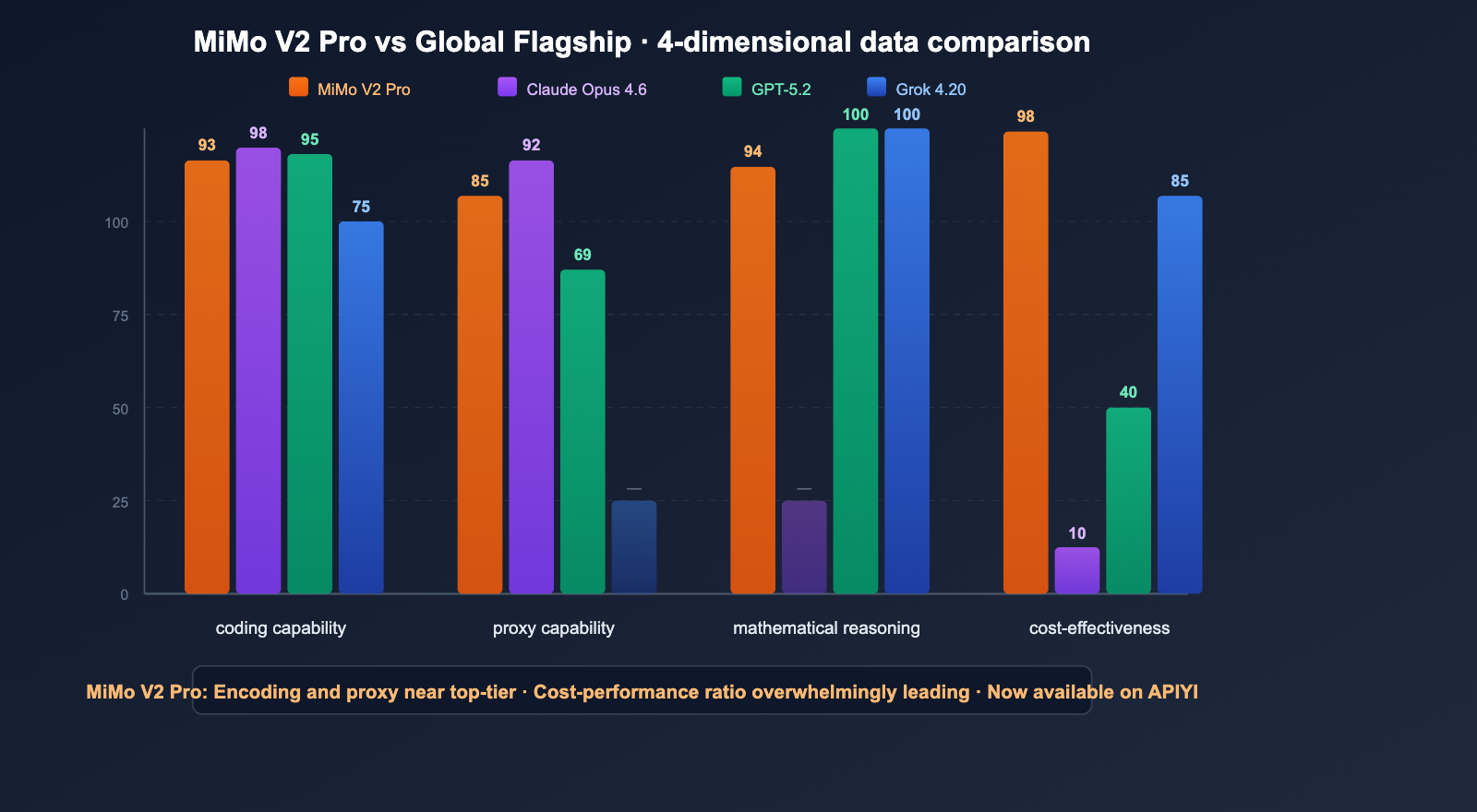

MiMo V2 Pro vs. Global Leading Models: A Comparative Analysis

Comprehensive Comparison

| Dimension | MiMo V2 Pro | Claude Opus 4.6 | GPT-5.2 | Grok 4.20 |
|---|---|---|---|---|
| Architecture | 1T MoE (42B active) | Closed-source | Closed-source | Closed-source MoE |
| Coding Accuracy | 92.5% | Strongest (SWE 81.4%) | Strong (SWE ~80%) | SWE ~75% |
| Agent Capability (ClawEval) | 61.5 (#3) | 66.3 (#1) | 50.0 | N/A |
| Math | 94.0% | N/A | AIME 100% | AIME 100% |
| Context Window | 1M | 1M | Varies by model | 2M |
| Input Price | $1.00 | $15.00 | ~$7.50 | $2.00 |
| Output Price | $3.00 | $75.00 | ~$30.00 | $6.00 |
| Reasoning Mode | `<think>` tags | Adaptive Thinking | Extended reasoning | Reasoning/Non-reasoning |
| Multimodal | ❌ (Pro is text-only) | ✅ | ✅ | ✅ Limited |

Positioning of MiMo V2 Pro

Performance: Close to Claude Opus 4.6 (a 4.8-point gap on ClawEval, 61.5 vs. 66.3)

Price: Approximately 1/25th of Opus

↓

Positioning: "The Opus for the rest of us" / King of cost-effectiveness

Best Use Cases for MiMo V2 Pro:

- Cost-sensitive applications requiring strong reasoning capabilities

- Agentic tasks (multi-step reasoning, tool invocation)

- Large-scale code generation and analysis

- Mathematical and logical reasoning

- Text-only scenarios where multimodal features aren't required

Scenarios where Claude Opus 4.6 still excels:

- Extremely complex software engineering (SWE-bench gap of ~6 percentage points)

- Projects requiring 128K+ ultra-long outputs

- Enterprise-grade security and compliance requirements

- Tasks requiring Adaptive Thinking

💰 Selection Advice: For daily development and batch tasks, using MiMo V2 Pro ($1/$3) can save you a significant amount of money. Reserve Claude Opus 4.6 for security-critical and architectural-level tasks. You can use an API proxy service like APIYI (apiyi.com) to call both models with a single API key and switch between them as needed.
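
To put a number on the split described above, the sketch below estimates spend when only a share of output tokens goes to Claude Opus 4.6 and the rest to MiMo V2 Pro. It is deliberately simplified: it counts output tokens only, and the 10% Opus share is a hypothetical workload mix, not a measured one.

```python
# Output prices in USD per million tokens, from the comparison table.
MIMO_OUT, OPUS_OUT = 3.00, 75.00


def blended_output_cost(million_tokens: float, opus_share: float) -> float:
    """Cost when `opus_share` of output tokens go to Opus and the rest to MiMo V2 Pro."""
    if not 0.0 <= opus_share <= 1.0:
        raise ValueError("opus_share must be between 0 and 1")
    return million_tokens * (opus_share * OPUS_OUT + (1 - opus_share) * MIMO_OUT)


all_opus = blended_output_cost(100, 1.0)
mixed = blended_output_cost(100, 0.1)  # hypothetical 10% Opus / 90% MiMo split
print(f"all Opus: ${all_opus:,.0f}, mixed: ${mixed:,.0f}")
```

Because Opus output is 25x more expensive, even a small Opus share dominates the bill, which is why reserving it for the tasks that need it pays off.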

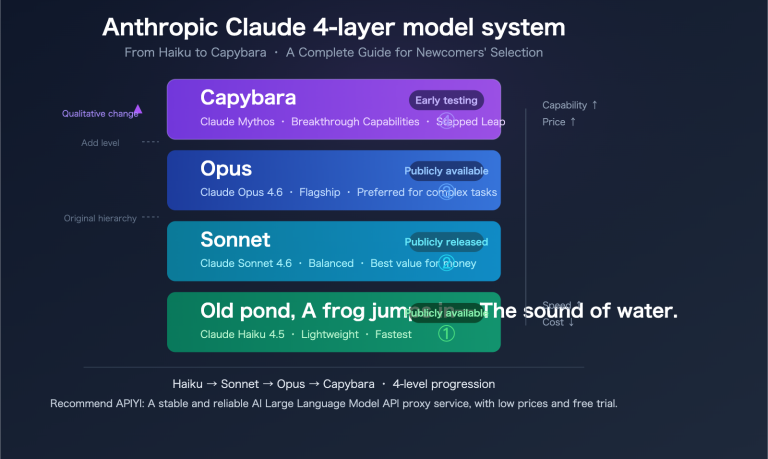

A Quick Look at the MiMo V2 Family

Xiaomi has just dropped three new models, covering every scenario from ultra-lightweight to flagship performance.

| Model | Parameters | Positioning | Input Price (per million tokens) | Output Price (per million tokens) | Open Source |
|---|---|---|---|---|---|
| MiMo V2 Flash | 309B (15B active) | Lightweight & Fast | $0.09 | $0.29 | ✅ MIT |
| MiMo V2 Pro | ~1T (42B active) | Reasoning Flagship | $1.00 | $3.00 | ❌ API |
| MiMo V2 Omni | N/A | Multimodal | $0.40 | $2.00 | ❌ API |

MiMo V2 Flash Notes:

- Fully open-source under the MIT license; weights are available for download on HuggingFace.

- SWE-bench Verified: 73.4% (Top-ranked open-source model).

- AIME 2025: 94.1%.

- Inference speed: 150+ tokens/second.

- Outperforms DeepSeek-R1-0528 in 7 out of 8 test categories.

🎯 Family Strategy: Use Flash for simple tasks (at an ultra-low $0.09/$0.29), Pro for reasoning tasks (best value at $1/$3), and Omni for multimodal tasks ($0.40/$2.00). You can access the entire MiMo V2 lineup in one place via APIYI at apiyi.com.
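
The family strategy can be written down as a small selection function. This is a sketch: the IDs `mimo-v2-pro` and `mimo-v2-omni` appear in this article's API examples, while `mimo-v2-flash` is an assumed ID for Flash, and `pick_mimo_model` is a hypothetical helper.

```python
def pick_mimo_model(has_media: bool = False, needs_deep_reasoning: bool = False) -> str:
    """Map a task to a MiMo V2 model ID following the Flash/Pro/Omni split.

    Assumption: "mimo-v2-flash" is the platform's ID for MiMo V2 Flash.
    """
    if has_media:
        return "mimo-v2-omni"   # images, video, or audio in the request
    if needs_deep_reasoning:
        return "mimo-v2-pro"    # multi-step reasoning, agentic work, large code tasks
    return "mimo-v2-flash"      # simple, high-volume text tasks


print(pick_mimo_model(needs_deep_reasoning=True))
```

Media input takes priority here because Pro is text-only, so a request with an image or audio attachment has to go to Omni regardless of how hard the reasoning is.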

API Invocation in Action

Invoking MiMo V2 Pro

    import openai

    # OpenAI-compatible client pointed at the APIYI endpoint
    client = openai.OpenAI(
        api_key="YOUR_API_KEY",
        base_url="https://api.apiyi.com/v1",  # Unified APIYI endpoint
    )

    response = client.chat.completions.create(
        model="mimo-v2-pro",
        messages=[
            {"role": "system", "content": "You are a senior software engineer specializing in code review and architecture design."},
            {"role": "user", "content": "Review the following Python code for concurrency safety..."},
        ],
        max_tokens=8192,
    )

    # Print the assistant's reply text
    print(response.choices[0].message.content)
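
For batch workloads, transient failures such as rate limits or network errors are worth handling. The wrapper below is a minimal sketch of retry with exponential backoff around the same `chat.completions.create` call; `create_with_retry` is a hypothetical helper, and catching every exception is a simplification (in practice you would likely narrow it to the SDK's rate-limit and connection errors).

```python
import time


def create_with_retry(client, max_attempts: int = 3, base_delay: float = 1.0, **kwargs):
    """Call client.chat.completions.create(**kwargs), retrying on failure.

    Waits base_delay, then 2x, then 4x, ... between attempts and re-raises
    the last error once max_attempts is reached.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return client.chat.completions.create(**kwargs)
        except Exception:
            if attempt == max_attempts:
                raise
            time.sleep(base_delay * 2 ** (attempt - 1))
```

Usage mirrors the direct call: `create_with_retry(client, model="mimo-v2-pro", messages=[...], max_tokens=8192)`.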

Invoking MiMo V2 Omni (Multimodal)

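    # Image understanding example (reuses the `client` created in the previous example)
    response = client.chat.completions.create(
        model="mimo-v2-omni",
        messages=[
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": "Analyze the data flow in this architecture diagram"},
                    # The image is passed inline as a base64 data URL
                    {"type": "image_url", "image_url": {"url": "data:image/png;base64,..."}},
                ],
            }
        ],
    )

    print(response.choices[0].message.content)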
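
The `data:image/png;base64,...` value in the example has to be built from a real file. A small helper along these lines can do it; `image_data_url` is a hypothetical name, and the sketch relies only on the Python standard library.

```python
import base64
import mimetypes
from pathlib import Path


def image_data_url(path: str) -> str:
    """Read a local image and return it as a base64 data URL."""
    mime, _ = mimetypes.guess_type(path)
    if mime is None or not mime.startswith("image/"):
        raise ValueError(f"not a recognized image file: {path}")
    encoded = base64.b64encode(Path(path).read_bytes()).decode("ascii")
    return f"data:{mime};base64,{encoded}"
```

The result can be used directly as the `url` value of an `image_url` content part.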

View MiMo V2 Pro vs. Claude Sonnet 4.6 Benchmark Code

    import time

    import openai

    client = openai.OpenAI(
        api_key="YOUR_API_KEY",
        base_url="https://api.apiyi.com/v1",
    )

    models = ["mimo-v2-pro", "claude-sonnet-4-6"]
    prompt = "Implement a thread-safe LRU cache in Python that supports concurrency"

    for model in models:
        # Time one request per model; a single sample is indicative only
        start = time.perf_counter()
        response = client.chat.completions.create(
            model=model,
            messages=[{"role": "user", "content": prompt}],
            max_tokens=4096,
        )
        elapsed = time.perf_counter() - start
        usage = response.usage

        print(f"\n{'=' * 50}")
        print(f"Model: {model}")
        print(f"Time taken: {elapsed:.1f}s")
        print(f"Tokens: Input {usage.prompt_tokens} / Output {usage.completion_tokens}")
        print(f"Preview: {response.choices[0].message.content[:200]}...")

🚀 Get Started Quickly: Register at APIYI (apiyi.com) to get your API key and start calling MiMo V2 Pro and Omni. One key gives you access to over 200 models, including Xiaomi, Claude, GPT, and more.

FAQ

Q1: Does MiMo V2 Pro really have a trillion parameters? Why is it so cheap?

Yes, it has a total of about 1 trillion parameters, but it uses a Mixture-of-Experts (MoE) architecture, activating only about 42B parameters per inference. This means the inference cost is significantly lower than that of a dense model with the same parameter count. This is the same technical approach used by models like DeepSeek and Grok. You can access this trillion-parameter model at 1/3 the price via APIYI (apiyi.com).
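
The arithmetic behind this answer is simple enough to check. The sketch below computes the share of parameters active per inference from the figures quoted in this article (Pro's total is approximate); `active_fraction` is a hypothetical helper.

```python
def active_fraction(total_params: float, active_params: float) -> float:
    """Share of a Mixture-of-Experts model's parameters used per inference step."""
    return active_params / total_params


# Figures quoted in this article.
pro = active_fraction(1e12, 42e9)      # ~1T total, 42B active
flash = active_fraction(309e9, 15e9)   # 309B total, 15B active
print(f"Pro: {pro:.1%} active, Flash: {flash:.1%} active")
```

Both models activate under 5% of their weights per step, which is where the compute saving comes from. Note that all weights still have to be stored and served, so memory requirements follow the total parameter count rather than the active one.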

Q2: Can MiMo V2 Pro replace Claude for code reviews?

In some scenarios, yes. MiMo V2 Pro's coding accuracy (92.5%) and agentic capabilities (ClawEval 61.5) are very strong. For daily code reviews and bug analysis, it's a highly cost-effective choice. However, for security-critical audits and large-scale architectural refactoring, Claude Opus 4.6 remains more reliable. We recommend using APIYI (apiyi.com) to integrate both models and switch between them flexibly based on the task.

Q3: Is the 10-hour audio processing capability of MiMo V2 Omni reliable?

Xiaomi claims this is an industry-first capability—supporting 10+ hours of continuous audio understanding in a single request without performance degradation. It's well-suited for long-form audio tasks like meeting transcript analysis and podcast content extraction. However, as it's a newly released model, we suggest testing it on non-critical tasks first. You can test it at a low cost ($0.40/$2.00) via APIYI (apiyi.com).

Q4: Will MiMo V2 Pro be open-sourced?

Xiaomi has stated that it plans to open-source the model "once the model is stable enough." MiMo V2 Flash, from the same series, has already been open-sourced under the MIT license on HuggingFace. Given Xiaomi's proactive stance on open source (MiMo V1 was also open-sourced), it is likely only a matter of time before V2 Pro's weights are released.

Q5: How should I choose between MiMo V2 Pro, Flash, and Omni?

Choose based on your needs: Select Pro ($1/$3, strongest reasoning) for pure text reasoning tasks; choose Flash ($0.09/$0.29, open-source and self-deployable) for extreme cost efficiency or local deployment; and go with Omni ($0.40/$2.00) if you need to process images, videos, or audio. You can access all three models with a single key via APIYI (apiyi.com).

Conclusion: Xiaomi's AI Ambitions Are Not to Be Underestimated

The release of the MiMo V2 series marks Xiaomi's official transition from a "mobile phone company doing AI" to a "global cutting-edge AI player." The anonymous launch of Hunter Alpha was a textbook product release—letting the performance speak for itself before revealing the identity.

3 Key Takeaways:

- MiMo V2 Pro is currently among the most cost-effective reasoning models: It ranks #3 globally in agentic capabilities (ClawEval), outperforms Sonnet 4.6 in coding, and costs 1/25th of Opus on output tokens.

- MiMo V2 Omni's multimodal capabilities are worth watching: The 10-hour audio processing is a genuine, differentiating advantage.

- Xiaomi's AI team has incredible execution: They went from zero to a trillion-parameter model in less than a year, led by a former core member of DeepSeek.

We recommend experiencing the full MiMo V2 model series via APIYI (apiyi.com) to access near-top-tier AI reasoning capabilities at the lowest prices in the industry.


Author: APIYI Team | We launch the latest AI models as soon as they drop. Feel free to visit APIYI at apiyi.com to experience the full Xiaomi MiMo V2 model series.