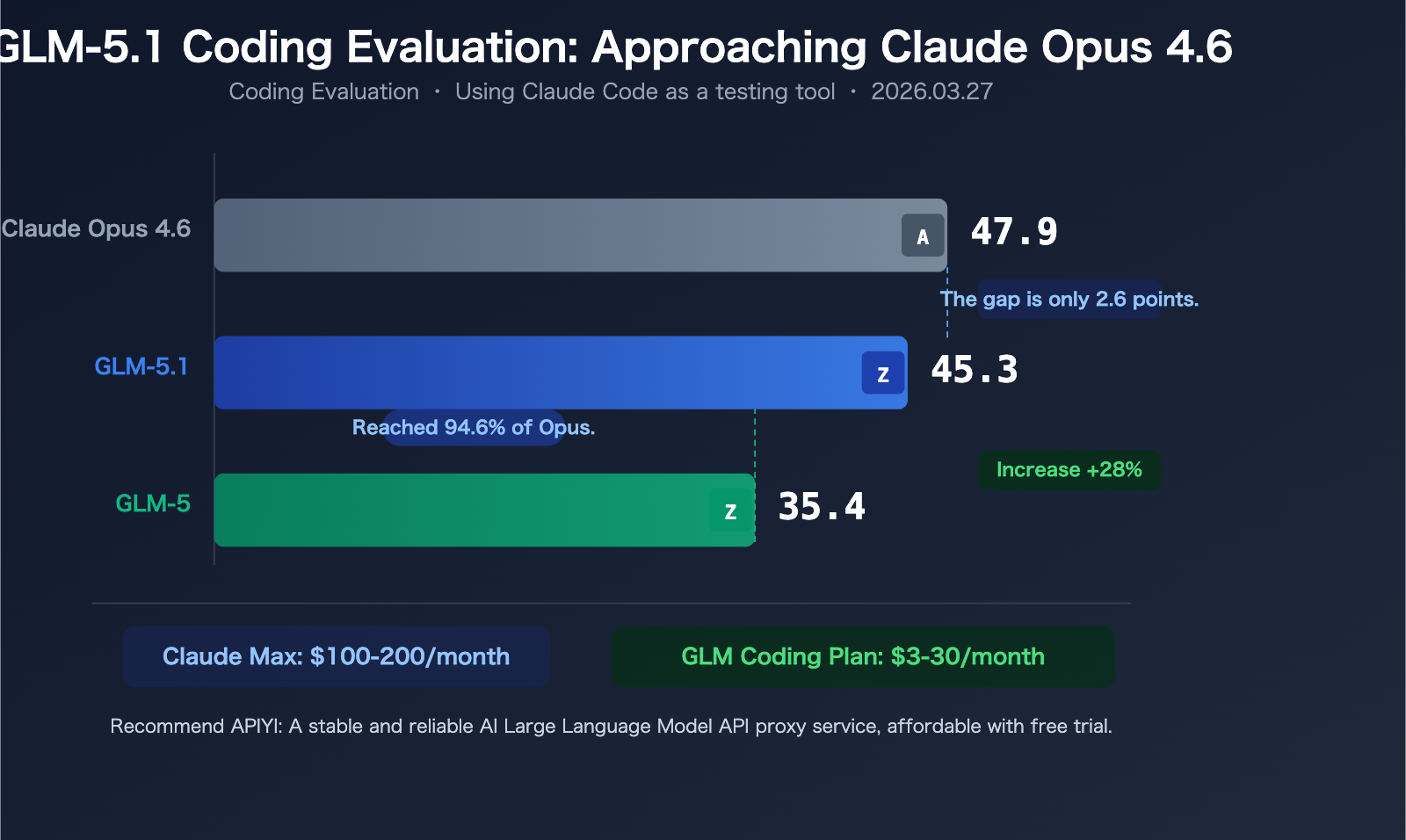

On March 27, 2026, Z.ai (formerly known as Zhipu AI) officially announced that GLM-5.1 is live and available to all GLM Coding Plan users. In a coding evaluation that used Claude Code as the testing tool, GLM-5.1 scored 45.3 points, just 2.6 points behind Claude Opus 4.6's 47.9, which works out to 94.6% of Opus's score.

Even more impressive is that GLM-5.1 shows a 28% improvement over its predecessor GLM-5's score of 35.4—a massive generational leap.

And what's the price of entry for all this? The GLM Coding Plan starts at $3 for the first month (promotional price), with the standard price beginning at just $10/month.

Core Value: This article will break down GLM-5.1's enhanced coding capabilities, the details and usage of the Coding Plan packages, and its practical value as a high-performance, cost-effective alternative when Claude's official pricing is high or its compute is occasionally unstable. APIYI will integrate it as soon as the API is available.

Core Data: Using Claude Code as the Testing Tool

The coding evaluation released by Z.ai uses Claude Code as the testing framework, which is a noteworthy detail: GLM-5.1 demonstrated capabilities close to Opus within Claude's own testing environment.

| Model | Coding Evaluation Score | Gap to Opus | Improvement vs GLM-5 |

|---|---|---|---|

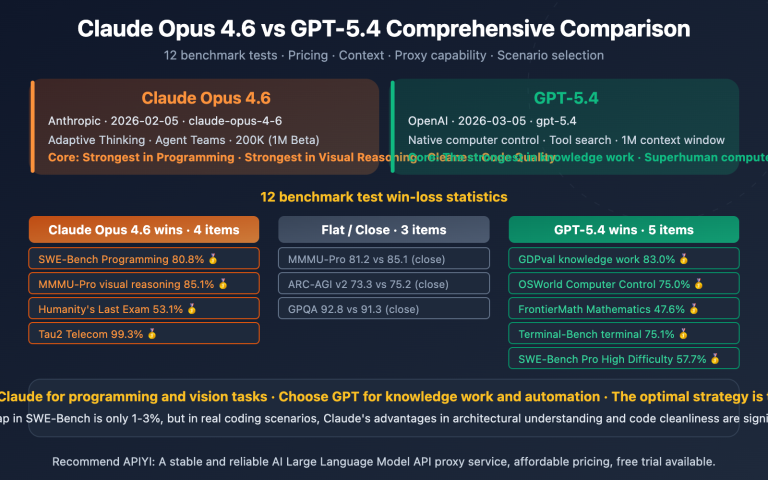

| Claude Opus 4.6 | 47.9 | — | — |

| GLM-5.1 | 45.3 | -2.6 (94.6%) | +28% |

| GLM-5 | 35.4 | -12.5 (73.9%) | Baseline |
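The percentages in the table follow directly from the three published scores. Here is a minimal sketch that reproduces them; the helper names `ratio_to_opus` and `relative_gain` are ours, introduced only for illustration.

```python
# Scores as reported in Z.ai's coding evaluation (from the table above).
scores = {"Claude Opus 4.6": 47.9, "GLM-5.1": 45.3, "GLM-5": 35.4}
opus = scores["Claude Opus 4.6"]


def ratio_to_opus(score: float) -> float:
    """Score expressed as a percentage of the Opus score, one decimal place."""
    return round(score / opus * 100, 1)


def relative_gain(new: float, old: float) -> float:
    """Relative improvement of `new` over `old`, in percent, one decimal place."""
    return round((new - old) / old * 100, 1)


glm51_ratio = ratio_to_opus(scores["GLM-5.1"])
glm5_ratio = ratio_to_opus(scores["GLM-5"])
gain = relative_gain(scores["GLM-5.1"], scores["GLM-5"])

print(f"GLM-5.1 vs Opus: {glm51_ratio}%")
print(f"GLM-5 vs Opus:   {glm5_ratio}%")
print(f"GLM-5.1 vs GLM-5: +{gain}%")
```

Note that the 28% figure is a relative gain over GLM-5's score, not a 28-point jump; the absolute difference is 9.9 points.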

Three key findings:

1. GLM-5.1 reached 94.6% of Claude Opus 4.6's score

A 2.6-point gap is almost negligible for most tasks in practical coding scenarios. This means that for daily coding assistance, the experience with GLM-5.1 is already very close to that of Opus.

2. The leap from GLM-5 to GLM-5.1 is astonishing

From 35.4 to 45.3 is a 28% improvement. Considering GLM-5 was only released in February 2026 (with an SWE-bench Verified score of 77.8%, already a leading level among open-source models), achieving such a significant iteration in just over a month is a testament to Z.ai's impressive engineering capabilities.

3. Fairness of the testing environment

Using Claude Code as the testing framework plausibly gives Claude models a home advantage, since Claude Code is a tool optimized for the Claude series. That GLM-5.1 reaches 94.6% of Opus's score in this "away game" suggests its actual capability might be even stronger.

Technical Improvements of GLM-5.1 Compared to GLM-5

Although the full technical report for GLM-5.1 hasn't been released yet, we can make educated guesses based on the foundation of GLM-5 and known information:

| Technical Dimension | GLM-5 | GLM-5.1 (Known/Estimated) |
|---|---|---|
| Total Parameters | 744B | Not disclosed (Estimated ≥ GLM-5) |
| Activated Parameters | 40B | Not disclosed |
| Architecture | MoE | MoE (Estimated improvements) |
| Context Window | 200K tokens | Not disclosed (Estimated ≥200K) |
| Training Data | 28.5T tokens | Not disclosed (Estimated more) |
| License | MIT Open Source | Confirmed to be open source |
| Coding Evaluation | 35.4 | 45.3 (+28%) |

Z.ai's global head, Zixuan Li, confirmed on Twitter on March 20th: "Don't panic. GLM-5.1 will be open source." The license itself has not been announced; if Z.ai follows the GLM-5 precedent, the GLM-5.1 weights would eventually be released under an MIT license.

🎯 Industry Perspective: GLM-5.1's open-source commitment is very important. It means third-party inference platforms (including APIYI apiyi.com) can quickly integrate it after the official API launches, potentially even offering services at lower prices than the official ones.

GLM Coding Plan Explained: A Coding AI Solution Starting at $3

GLM Coding Plan is a subscription-based coding AI service launched by Z.ai. Its core selling point is a coding experience close to Claude's level at a price far lower than Claude's.

Comparison of the Three Tiers

| Tier | Monthly Fee (Regular) | Promotional Price | Requests per 5 Hours | Monthly Searches |
|---|---|---|---|---|
| Lite | $10/month | $3 for the first month | 120 | 100 |
| Pro | $30/month | $15 for the first month | 600 | 1,000 |
| Max | Higher | - | More | 4,000 |
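If you script against the plan, it can help to track the "requests per 5 hours" quota on the client side so you don't hit the limit mid-task. The sketch below is hypothetical: it assumes the limit is a rolling 5-hour window (the table does not say whether the window is rolling or fixed), and the `WindowQuota` class is our own helper, not part of any Z.ai SDK.

```python
import time
from collections import deque


class WindowQuota:
    """Client-side tracker for an 'N requests per rolling window' limit.

    Assumption: the window is rolling. If the provider uses fixed windows,
    this tracker will be conservative rather than exact.
    """

    def __init__(self, max_requests: int, window_seconds: float, clock=time.monotonic):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._clock = clock          # injectable so the class can be tested
        self._stamps = deque()       # timestamps of requests still inside the window

    def _evict(self, now: float) -> None:
        # Drop timestamps that have aged out of the window.
        while self._stamps and now - self._stamps[0] >= self.window_seconds:
            self._stamps.popleft()

    def try_acquire(self) -> bool:
        """Record one request if quota remains; return False otherwise."""
        now = self._clock()
        self._evict(now)
        if len(self._stamps) >= self.max_requests:
            return False
        self._stamps.append(now)
        return True

    def remaining(self) -> int:
        """Number of requests still available in the current window."""
        self._evict(self._clock())
        return self.max_requests - len(self._stamps)


# Lite tier from the table: 120 requests per 5 hours.
lite_quota = WindowQuota(max_requests=120, window_seconds=5 * 3600)
print(f"Lite requests remaining: {lite_quota.remaining()}")
```

Call `try_acquire()` before each request and back off when it returns `False`. For the Pro tier, the same class would be created with `max_requests=600`.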

Models and Tools Supported by the Coding Plan

Included Models:

- GLM-5.1 (Latest, coding benchmark score 45.3)

- GLM-5 (coding benchmark score 35.4)

- GLM-5-Turbo (faster version)

- GLM-4.7

Compatible Coding Tools:

- Claude Code (via API compatibility layer)

- Cline

- Kilo Code

- OpenCode

- Clawdbot / OpenClaw

Additional Features:

- Vision Understanding

- Web Search MCP

- Web Reader MCP

- Zread MCP

- 55+ tokens/sec generation speed

- No network restrictions, no account ban risk

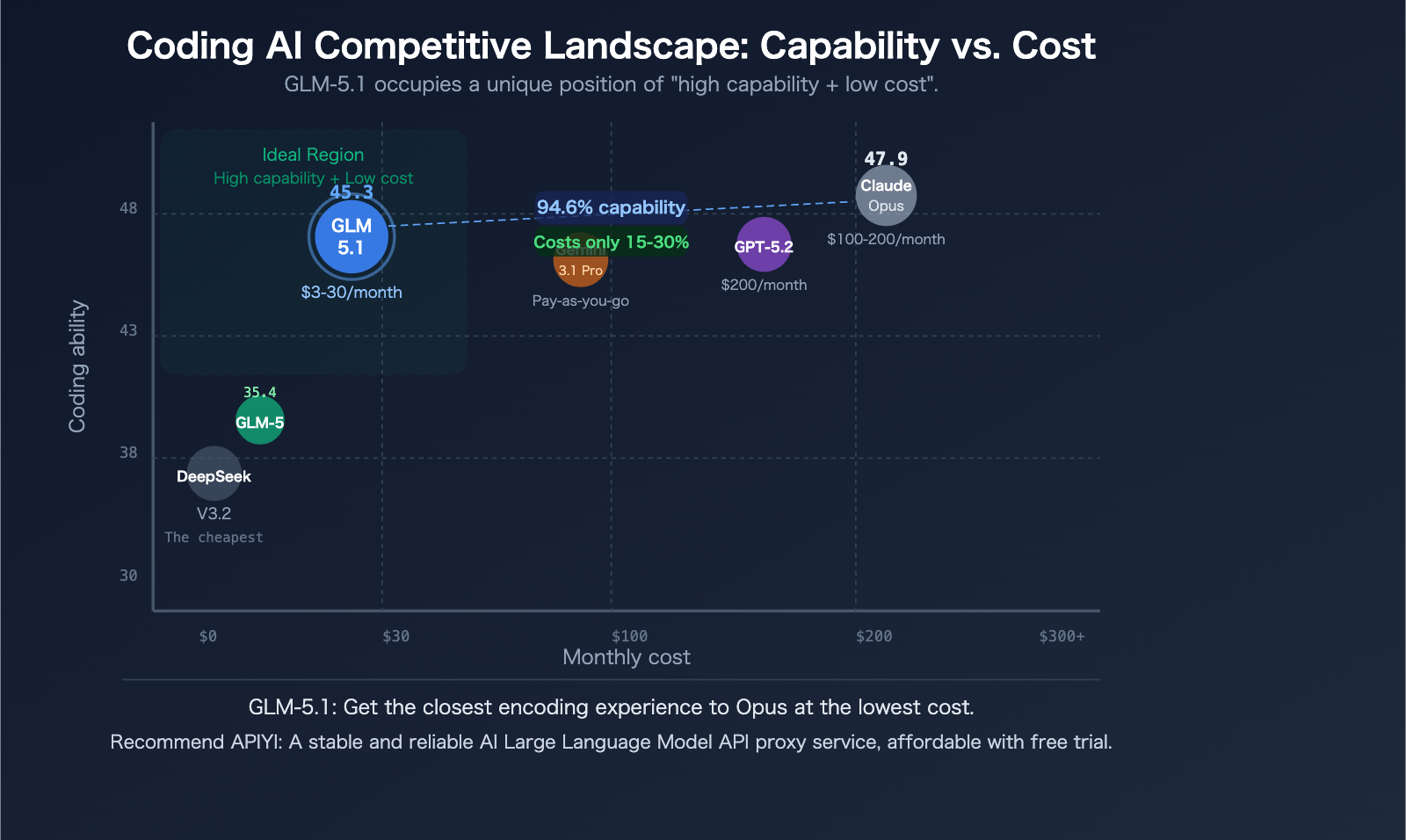

Cost Comparison: Coding Plan vs. Claude Official Subscription

This is the most straightforward cost-performance comparison:

| Comparison Dimension | GLM Coding Lite | GLM Coding Pro | Claude Pro | Claude Max |
|---|---|---|---|---|
| Monthly Fee | $10 (Promo $3) | $30 (Promo $15) | $20 | $100-200 |
| Coding Capability | 45.3 (GLM-5.1) | 45.3 (GLM-5.1) | Sonnet-level | Opus-level (47.9) |
| Ratio to Opus | 94.6% | 94.6% | ~80% | 100% |
| Cost/Capability Ratio | Very High | High | Medium | Medium-Low |

A developer shared on Medium: "GLM Coding Plan lets me get 3x the usage of Claude Max for $30/month"—while subjective, this reflects the real community experience.

💰 Cost Breakdown: If you spend over $30/month on the Claude API, primarily for coding tasks, the GLM Coding Pro plan is worth serious consideration. Getting a model with 94.6% of Opus's coding capability for $30/month offers genuinely high value.
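To put a rough number on the "Cost/Capability Ratio" row, one lens is the regular monthly fee divided by the "Ratio to Opus" percentage. The sketch below uses only figures from the table. The metric itself is our illustrative assumption, the "~80%" for Claude Pro is approximate, and request quotas are ignored entirely.

```python
# (plan name, regular monthly fee in USD, ratio to Opus in percent) from the table.
plans = [
    ("GLM Coding Lite", 10, 94.6),
    ("GLM Coding Pro", 30, 94.6),
    ("Claude Pro", 20, 80.0),          # "~80%" in the table, an approximate figure
    ("Claude Max (low end)", 100, 100.0),
    ("Claude Max (high end)", 200, 100.0),
]


def dollars_per_point(monthly_fee: float, ratio_pct: float) -> float:
    """Monthly USD paid per percentage point of Opus-relative capability."""
    return round(monthly_fee / ratio_pct, 3)


for name, fee, ratio in plans:
    print(f"{name:<22} ${dollars_per_point(fee, ratio):.3f} per point")
```

Because this lens ignores how many requests each plan allows, treat it as one input to the decision rather than a ranking.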

Our Perspective: A Cost-Effective Alternative When Claude's Compute is Unstable

As an API aggregation platform, APIYI observes a real pain point in daily operations: Claude's official compute occasionally becomes unstable. During peak hours especially, Opus 4.6's response latency increases and request queue times lengthen, which hurts developer productivity.

Why is GLM Coding Plan a Good Alternative?

Reason 1: Coding Capability is Already Close Enough

45.3 vs. 47.9—a 2.6-point gap is almost imperceptible in daily coding. Unless you're handling extremely complex code architecture design or deep reasoning tasks, GLM-5.1 is fully capable.

Reason 2: The Price Gap is Huge

Claude Max costs $100-200/month, while GLM Coding Pro is only $30. If you put the money saved toward the scenarios that truly require Opus (via on-demand API calls), your total cost might be even lower.

Reason 3: No Worries About Compute Instability

GLM Coding Plan promises no network restrictions and no account ban risk. Although Z.ai itself faced compute capacity issues in late February, its subscription model's capacity management is relatively more predictable.

Reason 4: Good Tool Compatibility

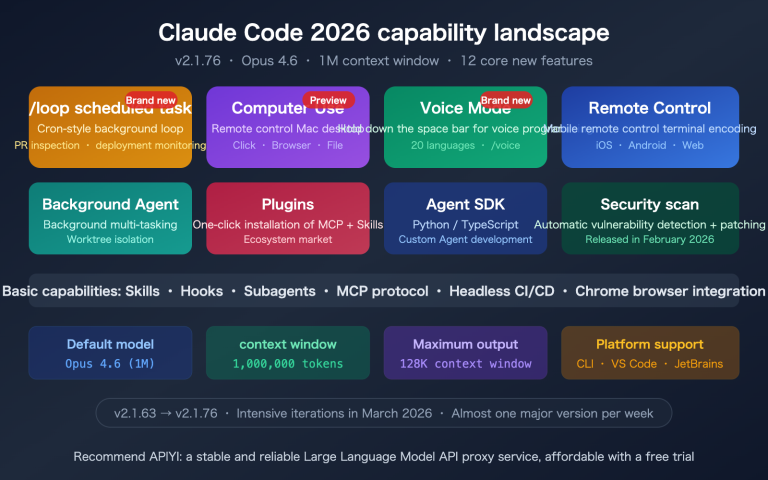

Directly compatible with Claude Code—you don't need to switch coding tools, just change the API endpoint to switch from Claude to GLM. For developers accustomed to the Claude Code workflow, the migration cost is almost zero.

When Should You Stick with Claude?

GLM-5.1 is a good alternative, but not the optimal solution for all scenarios:

- Need a 1M Context Window: Claude Opus 4.6 supports 1M tokens, while GLM-5 supports 200K (GLM-5.1's window has not been disclosed)

- Need Extreme Reasoning Depth: Opus still has an advantage in deep reasoning and complex analysis

- Need Stronger Multimodal or Long-Form Work: Claude's image understanding and long-form text creation are stronger in certain scenarios

- Enterprise Compliance Requirements: Some companies have specific requirements for model vendors

🎯 Recommended Strategy: Use GLM Coding Plan as your mainstay for daily coding, and call Claude Opus on-demand via APIYI (apiyi.com) for complex tasks. This "GLM for daily use + Claude for heavy artillery" combination can significantly reduce total costs while ensuring effectiveness.
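The "GLM for daily use + Claude for heavy artillery" rule, combined with the context limits listed above, can be sketched as a small routing function. This is a minimal sketch under stated assumptions: the 4-characters-per-token estimate is a rough heuristic rather than a real tokenizer, `reserve_for_output` is a hypothetical safety margin, and the model IDs are the ones used in this article's APIYI examples.

```python
GLM_CONTEXT_TOKENS = 200_000       # GLM-5 series, per the list above
OPUS_CONTEXT_TOKENS = 1_000_000    # Claude Opus 4.6, per the list above
CHARS_PER_TOKEN = 4                # rough heuristic, an assumption


def estimate_tokens(text: str) -> int:
    """Very rough token estimate; use a real tokenizer for anything precise."""
    return len(text) // CHARS_PER_TOKEN + 1


def pick_model(prompt: str, needs_deep_reasoning: bool = False,
               reserve_for_output: int = 8_000) -> str:
    """Route to GLM by default; escalate to Opus for long or hard prompts."""
    needed = estimate_tokens(prompt) + reserve_for_output
    if needed > OPUS_CONTEXT_TOKENS:
        raise ValueError("Prompt exceeds both context windows; split it first.")
    if needed > GLM_CONTEXT_TOKENS or needs_deep_reasoning:
        return "claude-opus-4-6"
    return "glm-5"  # change to "glm-5.1" once its API is available


print(pick_model("Write a Python implementation of quicksort"))
print(pick_model("Design a distributed transaction scheme", needs_deep_reasoning=True))
```

The `needs_deep_reasoning` flag is left to the caller on purpose: deciding whether a task is "complex" is a judgment call that a length check alone cannot make.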

GLM-5.1 API Access: Current Status and Preparations

Current Status

As of March 27, 2026, the status of GLM-5.1 is as follows:

| Channel | Status | Notes |
|---|---|---|
| GLM Coding Plan (z.ai) | ✅ Available | Available to all Coding Plan users |
| Z.ai Official API | ⏳ Not yet available | Awaiting official release |
| APIYI apiyi.com | ⏳ Awaiting integration | Will be supported immediately upon API release |
| Open Source Weights | ⏳ Open source promised | Specific timeline not yet determined |

Preparations You Can Make Now

Option 1: Use GLM Coding Plan Directly

Go to z.ai/subscribe to subscribe to the Coding Plan, and you can use GLM-5.1 within Claude Code.

Option 2: Call GLM-5 via API (Currently Available)

The GLM-5 API is already available, priced at $1.00/M tokens for input and $3.20/M tokens for output. While its coding benchmark score (35.4) is lower than GLM-5.1's (45.3), it remains strong on other tasks (SWE-bench Verified 77.8%).

```python
import openai

client = openai.OpenAI(
    api_key="your-api-key",
    base_url="https://api.apiyi.com/v1",  # APIYI unified interface
)

# Currently available: GLM-5
response = client.chat.completions.create(
    model="glm-5",
    messages=[
        {"role": "user", "content": "Optimize the performance of this Python code"}
    ],
)

# Switch after GLM-5.1 API launches
# model="glm-5.1"

print(response.choices[0].message.content)
```

Full code (including GLM + Claude hybrid calling strategy):

```python
import time

import openai

# Call multiple vendor models via the APIYI unified interface
client = openai.OpenAI(
    api_key="your-api-key",
    base_url="https://api.apiyi.com/v1",
)


# Model selection strategy: use GLM for simple tasks, Claude for complex ones
def smart_route(prompt, complexity="normal"):
    """
    Automatically selects a model based on task complexity

    - simple: GLM-5 (lowest cost)
    - normal: GLM-5.1 (switch after launch)
    - complex: Claude Opus 4.6 (strongest reasoning)
    """
    model_map = {
        "simple": "glm-5",
        "normal": "glm-5",  # Change to "glm-5.1" after GLM-5.1 launches
        "complex": "claude-opus-4-6",
    }
    # Unknown complexity labels fall back to GLM-5
    model = model_map.get(complexity, "glm-5")

    start = time.time()
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
        max_tokens=2000,
        temperature=0.7,
    )
    elapsed = time.time() - start

    return {
        "model": model,
        "content": response.choices[0].message.content,
        "time": f"{elapsed:.2f}s",
        "tokens": response.usage.total_tokens,
    }


# Example: Use GLM for daily coding, Claude for deep reasoning
tasks = [
    ("Write a Python implementation of quicksort", "simple"),
    ("Refactor the database connection pool for this microservice", "normal"),
    ("Design a compensation mechanism for a distributed transaction", "complex"),
]

for prompt, complexity in tasks:
    result = smart_route(prompt, complexity)
    print(f"[{complexity}] Model: {result['model']}")
    print(f"  Time: {result['time']} | Tokens: {result['tokens']}")
    print(f"  Reply: {result['content'][:100]}...\n")
```
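Since the GLM-5 API is billed per token, it is worth estimating what a call costs before deciding between pay-as-you-go and a subscription. The sketch below uses the GLM-5 prices quoted above ($1.00/M input, $3.20/M output); the function name `estimate_cost_usd` and the example token counts are ours, and prices through an aggregator may differ.

```python
# GLM-5 API prices quoted in this article, in USD per million tokens.
GLM5_INPUT_USD_PER_M = 1.00
GLM5_OUTPUT_USD_PER_M = 3.20


def estimate_cost_usd(prompt_tokens: int, completion_tokens: int) -> float:
    """Estimated cost of one call, given input and output token counts."""
    return (
        prompt_tokens * GLM5_INPUT_USD_PER_M
        + completion_tokens * GLM5_OUTPUT_USD_PER_M
    ) / 1_000_000


# Hypothetical request: 1,500 prompt tokens and 500 completion tokens.
single_call = estimate_cost_usd(1_500, 500)
print(f"One call: ${single_call:.4f}")

# How many such calls would add up to the $30/month Pro plan fee?
calls_for_30_usd = int(30 / single_call)
print(f"Calls per $30: {calls_for_30_usd}")
```

With the OpenAI Python SDK, the two inputs are available on each response as `response.usage.prompt_tokens` and `response.usage.completion_tokens`.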

🚀 Quick Start: APIYI apiyi.com currently supports GLM-5 API calls. APIYI will integrate GLM-5.1 immediately upon its official API release. Registration includes free credits and supports unified calls to models from multiple vendors like Claude, GPT, Gemini, and GLM.

GLM-5.1 and the Competitive Landscape of Coding AIs

The launch of GLM-5.1 makes the competitive landscape for coding AIs even more interesting:

| Model | Coding Capability Positioning | Price Positioning | API Status | Open Source Status |

|---|---|---|---|---|

| Claude Opus 4.6 | Strongest (47.9) | Highest | Publicly available | Closed source |

| GLM-5.1 | Close to Opus (45.3) | Low ($3-30/month) | Available via Coding Plan | Will be open source |

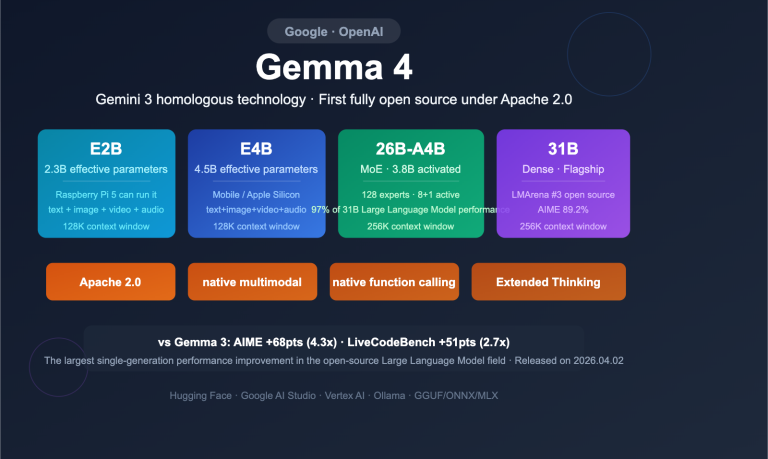

| Gemini 3.1 Pro | Strong (leads in multiple benchmarks) | Medium | Available in Preview | Closed source |

| GPT-5.2 | Strong | High | Publicly available | Closed source |

| DeepSeek V3.2 | Medium | Lowest | Publicly available | Open source |

| GLM-5 | Upper-Mid (35.4) | Low | Publicly available | MIT Open source |

GLM-5.1's unique positioning is: coding capabilities close to closed-source flagship models, a commitment to open-source weights, and the low price of a subscription plan. If the weights ship as promised, it would be the first time an open-source model has come this close to the closed-source champion on this coding evaluation.

Frequently Asked Questions

Q1: When will the GLM-5.1 API be available?

There's no confirmed date yet. GLM-5.1 is available within the Coding Plan, but a standalone API endpoint hasn't been released. Looking at the time between GLM-5's release and its API launch, the GLM-5.1 API might be available within a few weeks. APIYI (apiyi.com) will integrate it as soon as the API officially opens, allowing you to call GLM-5.1 directly through a unified interface.

Q2: How do I use GLM Coding Plan with Claude Code?

Z.ai's API is compatible with the Anthropic API format, so Claude Code can route requests to Z.ai by changing the API endpoint. The specific step is to change the API endpoint in Claude Code's configuration from the official Anthropic one to the endpoint provided by Z.ai—no other settings need to be modified. For detailed configuration, refer to the Z.ai developer documentation: docs.z.ai.
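As a rough illustration of the endpoint switch, the sketch below launches Claude Code with an overridden endpoint and token. It assumes Claude Code picks these up from the `ANTHROPIC_BASE_URL` and `ANTHROPIC_AUTH_TOKEN` environment variables and that the CLI is installed as `claude`. The endpoint string is a placeholder, so copy the real value from docs.z.ai; the helper names are ours.

```python
import os
import shutil
import subprocess

# Placeholder only: take the real Anthropic-compatible endpoint from docs.z.ai.
ZAI_ANTHROPIC_ENDPOINT = "https://<endpoint-from-docs.z.ai>"


def build_claude_env(endpoint: str, api_key: str, base_env=None) -> dict:
    """Return a copy of the environment with the endpoint and token overridden."""
    env = dict(os.environ if base_env is None else base_env)
    env["ANTHROPIC_BASE_URL"] = endpoint     # assumed variable name
    env["ANTHROPIC_AUTH_TOKEN"] = api_key    # assumed variable name
    return env


def launch_claude_code(endpoint: str, api_key: str) -> int:
    """Start the Claude Code CLI against the given endpoint; return its exit code."""
    exe = shutil.which("claude")
    if exe is None:
        raise FileNotFoundError("The 'claude' CLI was not found on PATH.")
    return subprocess.call([exe], env=build_claude_env(endpoint, api_key))


if __name__ == "__main__":
    # Reads the key from a hypothetical ZAI_API_KEY variable to keep it out of code.
    launch_claude_code(ZAI_ANTHROPIC_ENDPOINT, os.environ.get("ZAI_API_KEY", ""))
```

Building the environment per launch, rather than exporting the variables globally, makes it easy to switch back to the official Anthropic endpoint by starting `claude` normally.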

Q3: Can GLM-5.1 completely replace Claude Opus 4.6?

On coding tasks, GLM-5.1 (45.3 points) is already very close to Opus (47.9 points), with almost no noticeable difference in everyday coding scenarios. However, Opus still has clear advantages in these areas: 1M token ultra-long context, extreme-depth reasoning, and complex multi-step Agent workflows. Our recommended strategy is "GLM for daily use + Claude for heavy artillery"—use GLM for daily coding to save costs, and call Opus via APIYI (apiyi.com) on-demand for complex tasks.

Summary: GLM-5.1 Changes the Cost-Performance Game for Coding AI

The launch of GLM-5.1 is a significant milestone in the 2026 coding AI landscape: it's the first model with an open-source commitment to reach 94.6% of Claude Opus 4.6's score on this coding evaluation, while costing only a fraction of Opus's price.

Three Key Takeaways:

- Coding performance nears the ceiling: 45.3 vs. 47.9, a gap of just 2.6 points—barely noticeable in daily coding.

- Overwhelming price advantage: Coding Plan at $3-30/month vs. Claude Max at $100-200/month.

- API coming soon: APIYI will integrate the GLM-5.1 API as soon as it's available, allowing calls through a unified interface.

With Claude's official prices remaining high and occasional compute instability, GLM Coding Plan has proven itself a reliable, high-value alternative. Whether you choose the Coding Plan subscription or wait for the pay-as-you-go API, GLM-5.1 deserves a spot in your coding AI toolkit.

Author: APIYI Team | APIYI (apiyi.com) will support GLM-5.1 as soon as its API launches. Visit to get free testing credits and experience unified calling for Claude + GLM + GPT + Gemini models.

📚 References

- Z.ai Official Announcement: GLM-5.1 Coding Plan Launch
  - Link: z.ai/subscribe
  - Description: Subscribe to the GLM Coding Plan to use GLM-5.1

- Z.ai Official Blog: GLM-5 Technical Introduction
  - Link: z.ai/blog/glm-5
  - Description: Details on GLM-5's architecture, training data, and benchmark tests

- Z.ai Developer Documentation: API Integration and Pricing
  - Link: docs.z.ai/guides/overview/pricing
  - Description: Model pricing, API call specifications, and tool integration guides

- Artificial Analysis: Independent GLM-5 Evaluation
  - Link: artificialanalysis.ai/models/glm-5
  - Description: Third-party benchmark test data and model comparisons

- GLM-5 GitHub Repository: Open-Source Model Weights
  - Link: github.com/zai-org/GLM-5
  - Description: Open-sourced under MIT license, includes model weights and usage guides