title: "OpenAI Unveils GPT-5.4-Cyber: A New Standard for Cybersecurity Research"

description: "Exploring the release of GPT-5.4-Cyber, OpenAI's specialized model for defensive cybersecurity, featuring binary reverse engineering capabilities."

tags: [AI, Cybersecurity, GPT-5.4-Cyber, OpenAI]

On April 14, 2026, OpenAI officially launched GPT-5.4-Cyber, a "cyber-permissive" fine-tuned variant of GPT-5.4 engineered specifically for defensive cybersecurity tasks. It differs from standard consumer-grade models in two main ways: a lower refusal threshold for security-related research and, for the first time, publicly offered binary reverse engineering, which lets it analyze compiled executables for vulnerabilities and malicious code without needing source code.

This model is restricted exclusively to security researchers and organizations that have obtained the highest level of certification through OpenAI's Trusted Access for Cyber program. It is not available as a public API for general developers. The timing of this release is also quite strategic: it arrived exactly one week after Anthropic’s comparable product, Claude Mythos, signaling that the race to dominate the "AI × Cybersecurity" space is heating up between the two giants.

What is GPT-5.4-Cyber? A Quick Fact Sheet

Setting the technical details aside for a moment, here’s a table to help you grasp the key information about this release in under 30 seconds:

| Dimension | Fact |
|---|---|
| Launch Date | April 14, 2026 |
| Provider | OpenAI |
| Model Positioning | "Cyber-permissive" fine-tuned variant of GPT-5.4 |
| Core Goal | Defensive Cybersecurity |
| Key New Capability | Binary reverse engineering (no source code required) |
| Access Method | Highest-level certification via Trusted Access for Cyber |
| Individual Entry | chatgpt.com/cyber (requires identity verification) |
| Enterprise Entry | Application via OpenAI representative |
| Scale | Thousands of individual defenders, hundreds of security teams |
| Pricing | Not publicly disclosed |
| Main Competitor | Anthropic Claude Mythos (released one week earlier) |

This model is unlike any GPT version developers are accustomed to—it's not meant for chatting, writing code, or general assistance; it's a "compliance key" for security researchers, designed to unlock specific scenarios that are otherwise restricted by the standard GPT-5.4 safety policies.

📌 Bottom Line: GPT-5.4-Cyber is a narrow door opened by OpenAI specifically for "high-end security researchers." It is not an open model for general developers. Later in this article, we'll address the critical question of whether it can be accessed through third-party API platforms.

Breaking Down the Five Key Capabilities of GPT-5.4-Cyber

Based on official statements from OpenAI and third-party reports, the differences between this model and the standard GPT-5.4 center on five key dimensions.

Capability 1: Cyber-Permissive Security Policy

This is the core philosophy of the entire model. During training, the standard GPT-5.4 is set to reject requests that "look like malicious attacks or exploit attempts" by default. While this "better safe than sorry" approach protects the average user, it has been a major headache for legitimate security researchers. They often find themselves blocked by the model saying, "I'm sorry, I cannot assist with that," while performing penetration testing, vulnerability research, or malware analysis.

GPT-5.4-Cyber lowers this wall specifically for "verified security researchers," allowing it to analyze exploit concepts, discuss exploit principles, and assist in writing PoCs—scenarios where the standard version would refuse to cooperate.

Capability 2: Binary Reverse Engineering

This is the most technically significant new capability in this release. Traditionally, binary reverse engineering has relied on professional tools like IDA Pro, Ghidra, and Binary Ninja, combined with manual analysis by security researchers. GPT-5.4-Cyber can directly analyze compiled binary files, enabling it to:

- Identify potential vulnerabilities (buffer overflows, format strings, UAF, etc.) without source code.

- Analyze malicious sample behavior (C2 communication, persistence, anti-debugging).

- Reconstruct the control flow logic of executable files.

- Assist in generating or verifying YARA rules.

This means security teams can offload the initial screening to AI, allowing human researchers to focus on the most complex aspects.
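To make "initial screening" concrete, here is a minimal sketch of the kind of first-pass triage that is commonly automated before a human opens a disassembler: extracting printable strings from a binary and flagging a few indicators. It is not how GPT-5.4-Cyber works internally, which OpenAI has not described. The indicator patterns, function names, and sample bytes are all assumptions made up for illustration.

```python
import re

# Hypothetical indicator patterns for illustration only; a real triage
# pipeline would rely on curated signatures (for example, YARA rules).
INDICATORS = {
    "url": re.compile(rb"https?://[\x21-\x7e]+"),
    "registry_run_key": re.compile(rb"CurrentVersion\\Run", re.IGNORECASE),
    "debugger_check": re.compile(rb"IsDebuggerPresent"),
}


def printable_strings(data: bytes, min_len: int = 4) -> list[bytes]:
    """Return runs of printable ASCII that are at least min_len bytes long."""
    return re.findall(rb"[\x20-\x7e]{%d,}" % min_len, data)


def triage(data: bytes) -> dict[str, list[str]]:
    """Map each indicator name to the extracted strings that matched it."""
    hits: dict[str, list[str]] = {}
    for s in printable_strings(data):
        for name, pattern in INDICATORS.items():
            if pattern.search(s):
                hits.setdefault(name, []).append(s.decode("ascii"))
    return hits


# Synthetic bytes standing in for a compiled sample (not a real binary).
sample = (
    b"\x00\x01MZ\x90\x00http://c2.example.invalid/beacon\x00\xff"
    b"Software\\Microsoft\\Windows\\CurrentVersion\\Run\x00"
    b"IsDebuggerPresent\x00\x02\x03"
)
report = triage(sample)
print(report)
```

Each of the three indicators here corresponds to one of the behaviors listed above (C2 communication, persistence, anti-debugging). String matching of this kind only narrows down where to look; it does not replace control-flow reconstruction or manual analysis.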

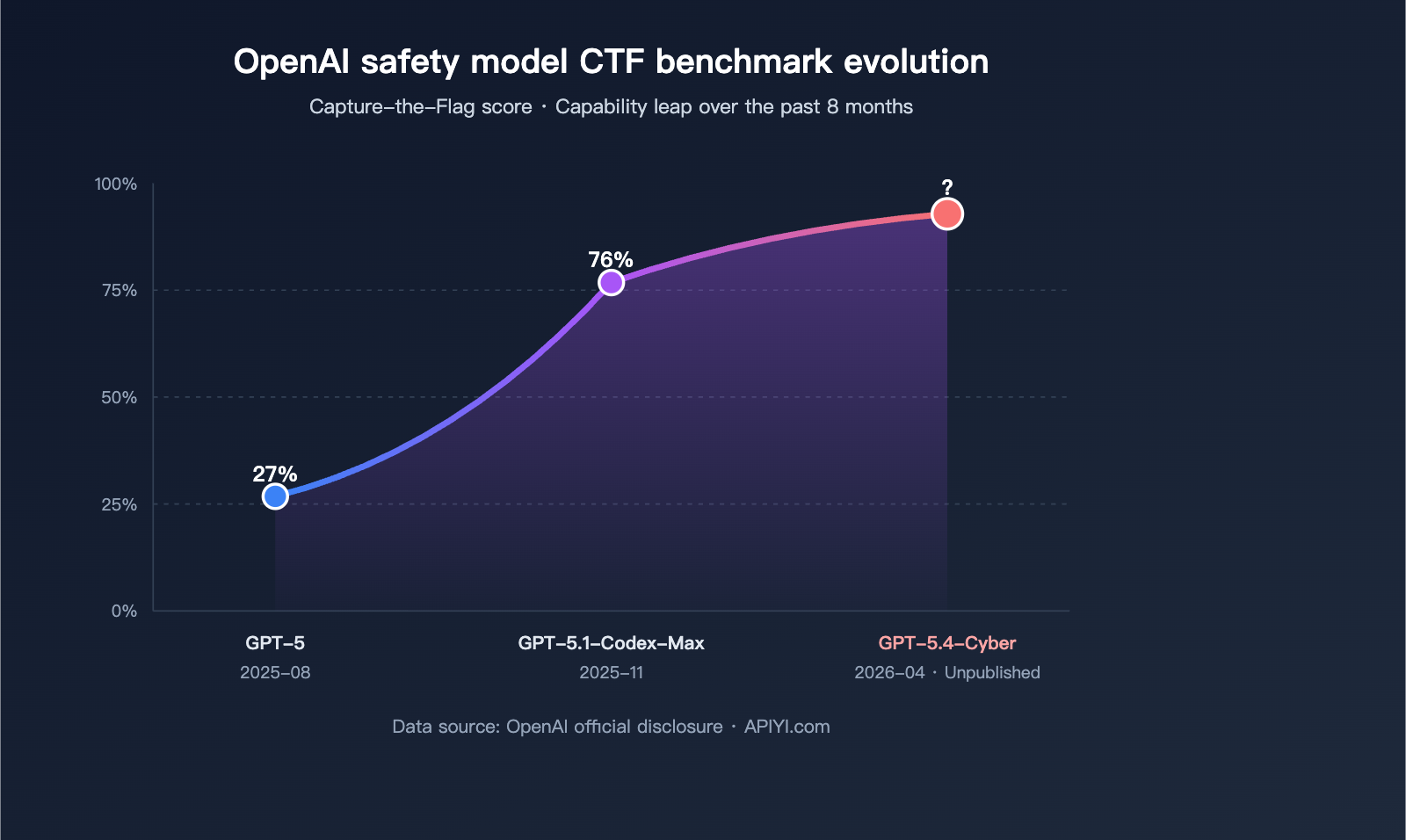

Capability 3: Rapid Leap in CTF Benchmarks

OpenAI disclosed a set of valuable performance data in their release materials, reflecting the model's evolution in cybersecurity scenarios:

- GPT-5 (August 2025) Capture-the-Flag benchmark score: 27%

- GPT-5.1-Codex-Max (November 2025) CTF benchmark score: 76%

While the specific CTF score for GPT-5.4-Cyber itself hasn't been made public, these two data points suggest how much training and alignment effort OpenAI has put into security capabilities over the past year.
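For a sense of scale, the two published scores can be compared directly. The short calculation below uses only the figures quoted above; the variable names are ours.

```python
# Scores as quoted in this article, in percent.
gpt5_ctf = 27.0        # GPT-5, August 2025
codex_max_ctf = 76.0   # GPT-5.1-Codex-Max, November 2025

point_gain = codex_max_ctf - gpt5_ctf      # absolute change in percentage points
relative_gain = codex_max_ctf / gpt5_ctf   # ratio of the later score to the earlier one

print(f"{point_gain:.0f} percentage points, {relative_gain:.2f}x")
```

That is a gain of 49 percentage points, or roughly 2.8 times the earlier score, between the two releases.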

Capability 4: Lowered Refusal Boundary

Traditional GPT safety guardrails have a very high false-refusal rate in security research scenarios. The key product decision in GPT-5.4-Cyber is to tie the refusal threshold to the result of identity verification. For verified users, the model handles security topics with a more calibrated threshold: it does not become a "jailbroken GPT," but it also does not refuse every request that contains a risk-related term, as the standard version tends to.

Behind this is a complete identity trust chain: Persona identity document verification → Trusted Access tiered authentication → Model adjusts response strategy based on the authentication level.
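The trust chain above can be pictured as two small mappings: verification results determine a tier, and the tier determines a response policy. The sketch below is purely illustrative. The tier names are borrowed from the tier comparison table later in this article, while the `Account` fields, the policy labels, and the decision order are assumptions, since OpenAI has not published its actual logic.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    """Tier names follow this article's tier table; numeric values are arbitrary."""
    BASIC = 0
    INTERMEDIATE = 1
    ADVANCED = 2
    HIGHEST = 3


@dataclass(frozen=True)
class Account:
    """Hypothetical verification results for one account."""
    id_verified: bool       # passed identity document verification
    org_verified: bool      # institution verified as well
    openai_approved: bool   # passed the additional OpenAI review


def resolve_tier(account: Account) -> Tier:
    """Hypothetical mapping from verification results to a tier."""
    if not account.id_verified:
        return Tier.BASIC
    if not account.org_verified:
        return Tier.INTERMEDIATE
    if not account.openai_approved:
        return Tier.ADVANCED
    return Tier.HIGHEST


def response_policy(tier: Tier) -> str:
    """Hypothetical policy labels, one per tier."""
    return {
        Tier.BASIC: "default-refusals",
        Tier.INTERMEDIATE: "fewer-security-restrictions",
        Tier.ADVANCED: "relaxed-security-tasks",
        Tier.HIGHEST: "may-request-gpt-5.4-cyber",
    }[tier]


researcher = Account(id_verified=True, org_verified=True, openai_approved=False)
tier = resolve_tier(researcher)
print(tier.name, response_policy(tier))
```

The point of the design is that the policy is selected by the account's verified tier rather than by anything in the prompt, so a request cannot talk its way into a looser policy.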

Capability 5: Defensive Only

In all of its release materials, OpenAI repeatedly emphasizes "defensive cybersecurity" rather than "offensive." This is a deliberate positioning choice: the model is intended for defenders (Blue Teams, security vendors, research institutions), not for attackers. It also means access reviews will be extremely strict, as covered in the application section later in this article.

| Capability Dimension | Standard GPT-5.4 | GPT-5.4-Cyber |
|---|---|---|
| Security Policy Strictness | High (Default refusal of risky requests) | Controlled relaxation (Based on auth level) |
| Binary Reverse Engineering | Limited support | Deep support (Core selling point) |
| Exploit Principle Discussion | Mostly refused | In-depth discussion after verification |
| Malicious Sample Analysis | Shallow description | Can assist with full analysis |
| Access Barrier | Public paid API | Requires highest Trusted Access tier |
| Target Audience | All developers | Verified security researchers |

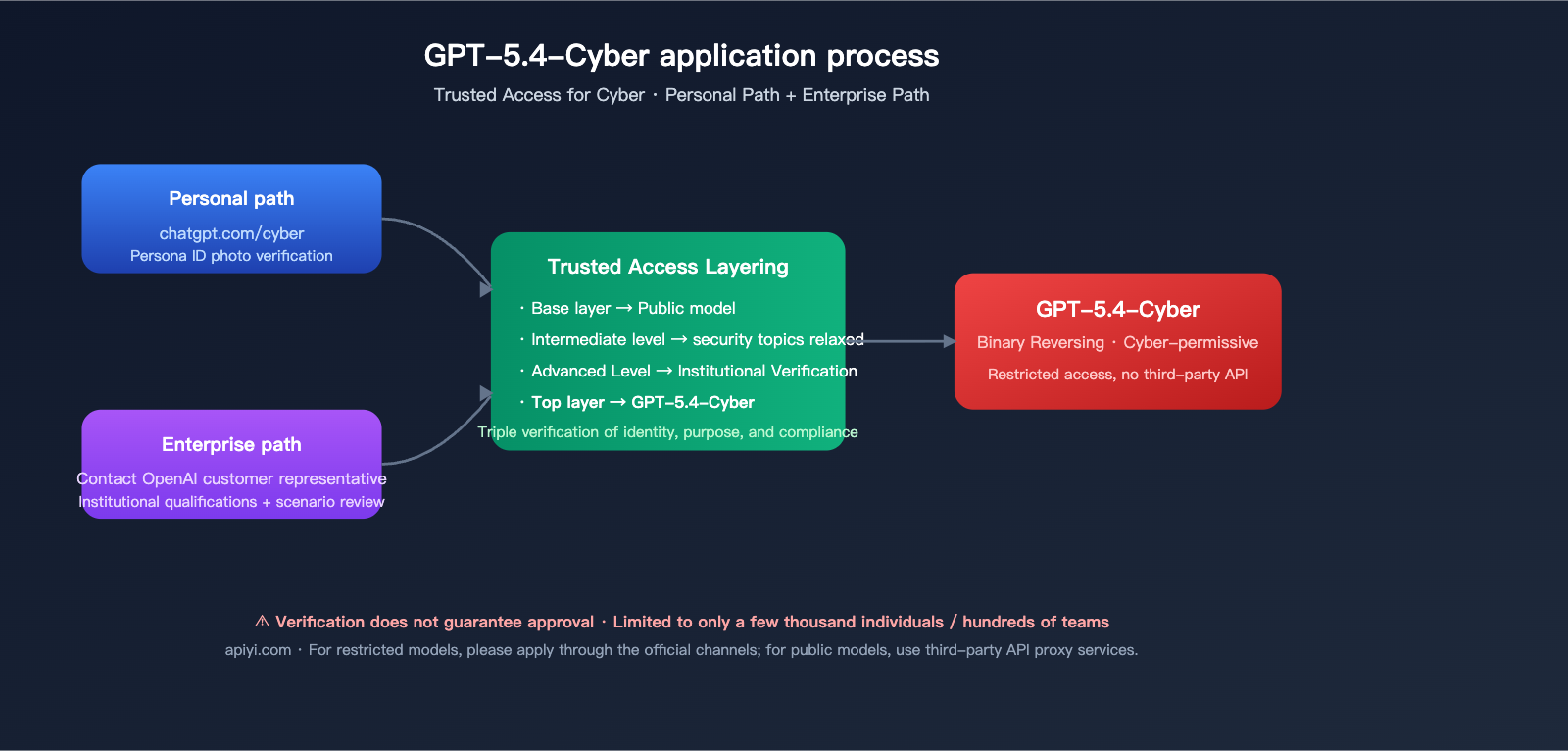

How to Apply for GPT-5.4-Cyber? Trusted Access Tiers and Process

Trusted Access for Cyber is a program that OpenAI launched in February 2026 alongside a $10 million cybersecurity grant fund. The major update on April 14 replaced the earlier coarse-grained "allow or deny" model with tiered access and tied the highest tier to GPT-5.4-Cyber.

Tiered Authentication Comparison

| Tier | Target Audience | Available Capabilities | Verification Method |
|---|---|---|---|
| Basic | Registered ChatGPT users | Standard GPT-5.4 and other public models | Standard account |
| Intermediate | Verified security practitioners | Fewer restrictions on security topics | Persona ID photo |
| Advanced | Verified security teams & institutions | More relaxed security task handling | Institutional + Personal dual verification |
| Highest | Core researchers in Trusted Access orgs | Can request GPT-5.4-Cyber | Requires OpenAI review |

Individual Application Process

- Visit chatgpt.com/cyber.

- Use the Persona identity verification service to upload a photo of a government-issued ID.

- The system determines your authentication tier.

- If you qualify for the highest tier, you can apply to join the GPT-5.4-Cyber canary list.

Enterprise Application Process

- Contact your company's OpenAI Account Manager.

- Submit your team background, use case description, and compliance commitments.

- Once approved, enable the highest Trusted Access tier for specific members in your workspace.

- Members can then request GPT-5.4-Cyber access within the workspace.

⚠️ Note: OpenAI has explicitly stated that it will expand access very cautiously at first. Completing identity verification does not guarantee access to GPT-5.4-Cyber. The target coverage is "thousands of individual defenders and hundreds of security teams," a small number relative to the size of the global security industry.

Can you call GPT-5.4-Cyber via third-party API platforms? An honest answer

This is the question most of our readers are asking. Based on OpenAI's current public product design, here is a straightforward answer.

🚨 Current Verdict: No

Access to GPT-5.4-Cyber is deeply tied to your specific OpenAI account identity and is not available through standard API channels. This means:

- ❌ It cannot be accessed by the public or general developers via the official OpenAI API.

- ❌ It cannot be called through any third-party API proxy service, including APIYI (apiyi.com).

- ❌ You cannot gain access by purchasing an API key, as it requires an "identity credential" rather than a "payment credential."

- ✅ It is only available to accounts with Trusted Access for Cyber top-level verification, accessible via ChatGPT or dedicated enterprise workspaces.

This is determined by the model's product positioning: if GPT-5.4-Cyber could be accessed via a proxy API by bypassing identity verification, its "cyber-permissive" features would immediately become a tool for abuse by attackers. OpenAI has intentionally closed off this path by design.
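One practical way to see the difference between an "identity credential" and a "payment credential" is to check which model IDs a given API key can actually see. The helper below is a small sketch of that check. The function name is ours, and the model IDs (including `gpt-5.4-cyber` as an identifier) are illustrative assumptions rather than confirmed values. With the official `openai` Python SDK, the listed IDs would typically come from `client.models.list()`.

```python
from typing import Iterable


def split_by_availability(
    requested: Iterable[str], listed_ids: Iterable[str]
) -> tuple[list[str], list[str]]:
    """Split requested model IDs into (available, unavailable) for one key."""
    listed = set(listed_ids)
    available: list[str] = []
    unavailable: list[str] = []
    for model_id in requested:
        (available if model_id in listed else unavailable).append(model_id)
    return available, unavailable


# Illustrative IDs only. With the openai SDK, this list would be built with
# something like: [m.id for m in client.models.list()]
listed_ids = ["gpt-5.4", "gpt-5"]

ok, missing = split_by_availability(["gpt-5.4", "gpt-5.4-cyber"], listed_ids)
print("available:", ok)
print("unavailable:", missing)
```

If a model does not appear in the list returned for your key, buying more quota or switching proxy providers will not change that, because the gate is account verification rather than billing.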

Available Alternatives

For developers and researchers with different needs, here is our recommended path forward:

| Your Goal | Recommended Solution |
|---|---|
| I'm a verified high-level security researcher needing the authentic GPT-5.4-Cyber experience | Apply via the official chatgpt.com/cyber portal; avoid any third-party proxies |
| I need a standard GPT-5.4 model for general AI development | Use the unified interface at APIYI (apiyi.com) to call GPT-5.4 |
| I'm developing security-related scripts but don't meet the criteria for GPT-5.4-Cyber | Use APIYI to call public models like GPT-5, GPT-5.4, or Claude |
| I just want to learn security concepts and perform non-sensitive analysis | A standard GPT-5.4 is more than enough; any public API platform will work |

💡 Important Honest Reminder: APIYI (apiyi.com) does not provide access to GPT-5.4-Cyber. This is due to OpenAI's restricted release policy, not a business choice by APIYI. If any third-party platform claims to "provide access to GPT-5.4-Cyber," it is likely a gray-market service that violates OpenAI's terms of service or may even involve identity fraud. Please be cautious.

What can APIYI do for you?

For the vast majority of use cases that do not require GPT-5.4-Cyber, APIYI (apiyi.com) can still help you:

```python
from openai import OpenAI

# Point the OpenAI SDK at APIYI's unified interface.
client = OpenAI(
    api_key="YOUR-APIYI-KEY",
    base_url="https://api.apiyi.com/v1",
)

# Both standard GPT-5.4 / GPT-5 are available
response = client.chat.completions.create(
    model="gpt-5.4",
    messages=[
        {"role": "user", "content": "Help me analyze this code for potential SQL injection risks"}
    ],
)

print(response.choices[0].message.content)
```

The code above runs reliably on APIYI's unified interface and connects to the public version of GPT-5.4, not GPT-5.4-Cyber. It is perfectly capable of handling routine code reviews, document generation, and business integrations.

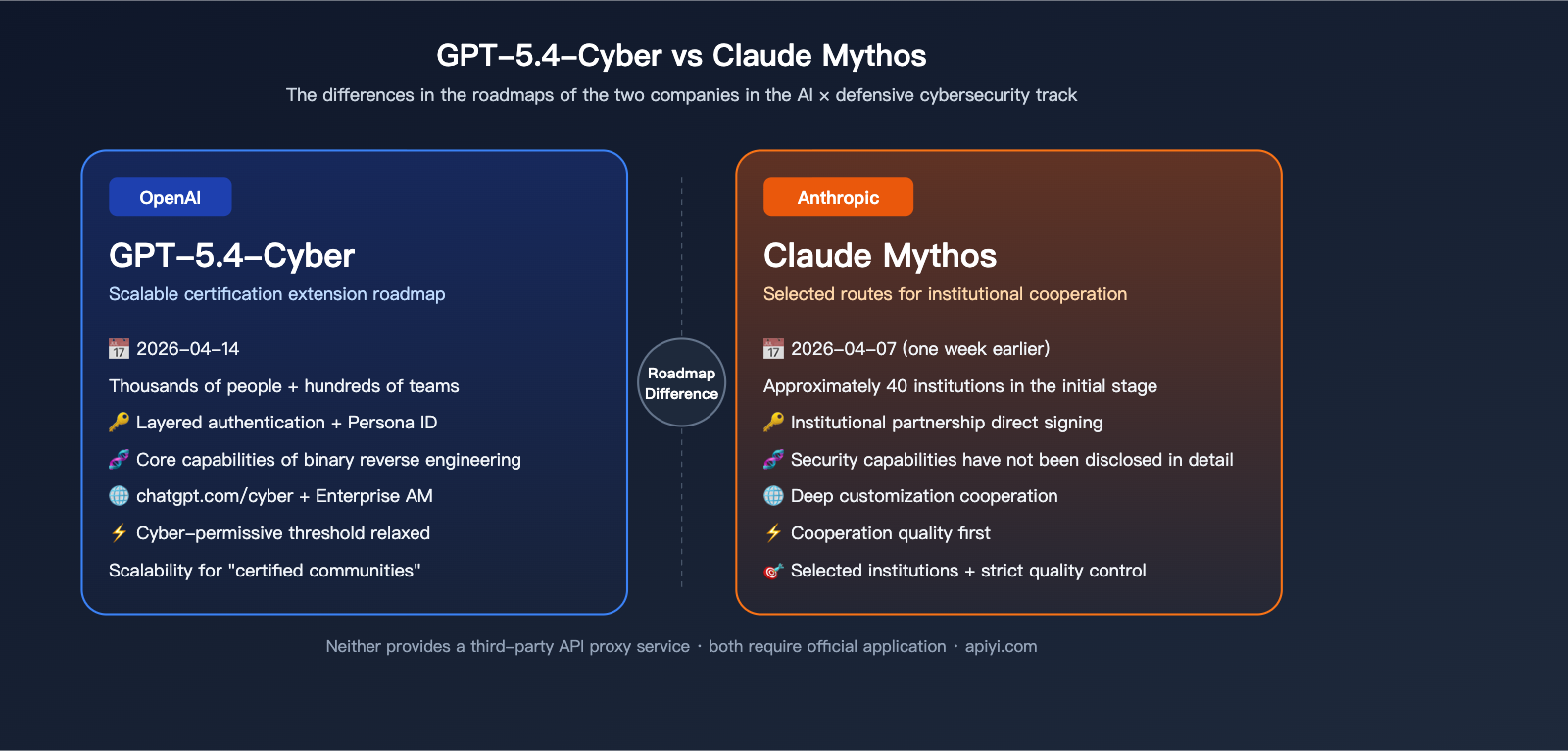

GPT-5.4-Cyber vs. Anthropic Claude Mythos: Two Different Paths

Claude Mythos was released one week before GPT-5.4-Cyber, and the two represent different approaches to "AI × Defensive Cybersecurity."

| Comparison Dimension | OpenAI GPT-5.4-Cyber | Anthropic Claude Mythos |
|---|---|---|
| Release Date | 2026-04-14 | 2026-04-07 (approx. one week earlier) |
| Base Model | GPT-5.4 fine-tuned variant | Claude variant |
| Access Policy | Tiered access, top-tier by request | Deep institutional partnership |
| Initial Scale | Thousands of individuals + hundreds of teams | Approx. 40 institutions |
| Core Selling Point | Binary reverse engineering + cyber-permissive | Not publicly disclosed |
| Distribution Channel | chatgpt.com/cyber + Enterprise AM | Institutional partnerships |
| Strategic Focus | Scalable access for "verified communities" | Curated deep institutional collaboration |

As you can see, the two companies have chosen completely different paths: OpenAI has opted for a scalable "verification-based access" model, hoping to benefit as many verified security professionals as possible; Anthropic has chosen a "curated institutional partnership" model, sacrificing reach for stricter quality control. In the long run, both approaches have their own markets—they will likely coexist for many years to come.

Impact Analysis for the Industry and Developers

Impact 1: AI’s Role in the Security Toolchain is Now "Official"

In the past, security researchers used ChatGPT for code reviews and vulnerability analysis on their own, often running into security policy blocks. The release of GPT-5.4-Cyber marks the first time OpenAI has treated "security researchers" as a distinct user group. We can expect to see more industry-specific vertical models like this in the future.

Impact 2: Identity Verification + Capability Unlocking is the New Paradigm

GPT-5.4-Cyber uses a combination of Persona identity verification and tiered access to unlock its capabilities. This is a fairly fresh product model for general-purpose AI products. We should expect to see more of these "identity + tiered model capabilities" combinations emerging in highly regulated sectors like finance, healthcare, and law.

Impact 3: A Clearer Positioning for Third-Party API Platforms

For third-party unified API platforms like APIYI (apiyi.com), the GPT-5.4-Cyber release actually draws a clear line in the sand: restricted-access models are handled via official direct channels, while public models are served by proxy services to ensure developer convenience. These two markets are complementary rather than competitive.

Impact 4: A Tangible Boost for Blue Team Efficiency

If the binary reverse engineering capabilities of GPT-5.4-Cyber are as mature as the official claims suggest, blue teams will see a massive increase in efficiency for tasks like malicious sample analysis, firmware auditing, and patch diff analysis. This is great news for the industry as a whole.

FAQ

Q1: Can individual developers get access to GPT-5.4-Cyber?

If you are a registered security researcher with a well-defined business use case, you have a chance of reaching the highest Trusted Access tier through Persona identity verification. If you are just curious and want to try it out, you have essentially no chance. We recommend that most developers focus on the publicly accessible GPT-5.4, which is available through the unified interface at APIYI (apiyi.com).

Q2: Can I bypass the restrictions using a VPN or by faking my identity?

No, and we strongly advise against trying. If you are flagged for identity fraud or abuse, your account will be permanently banned, and your entire organization's eligibility could be jeopardized. Trusted Access has very strict risk control measures.

Q3: Is there any pricing information?

OpenAI has not publicly disclosed pricing for GPT-5.4-Cyber. Based on common industry practice, such highly restricted vertical models are likely to be offered through enterprise agreements or seat-based subscriptions rather than the standard per-token API billing.

Q4: Can I use GPT-5.4-Cyber for offensive attacks?

Both the design and the Terms of Use strictly forbid this. The model is positioned strictly for "defensive cybersecurity," and any violation of these terms will lead to account suspension and potential legal action. OpenAI’s Trusted Access requires you to sign an agreement pledging to follow these usage guidelines.

Q5: When will APIYI (apiyi.com) add support for GPT-5.4-Cyber?

It is not expected to be added, because the model's distribution mechanism is designed to exclude third-party API proxy services. In the future, APIYI will continue to expand support for publicly available models like the standard GPT-5, GPT-5.4, the o-series, and Claude to meet the daily needs of most developers.

Q6: What is the relationship between GPT-5.4-Cyber, Codex, and the o-series?

While all three are OpenAI product lines, they serve different purposes: Codex focuses on code generation, the o-series focuses on deep reasoning, and GPT-5.4-Cyber is a fine-tuned variant specifically for security scenarios. While there may be some overlap in capabilities, their access mechanisms are completely different.

Q7: How can small and medium-sized security teams apply?

Teams should submit their credentials, use cases, and compliance commitments through OpenAI’s sales representatives or official business channels. Initially, OpenAI will prioritize organizations with clear credentials and well-defined purposes. If you don't have a direct channel, it’s best to gather your compliance documentation before applying.

Q8: Can companies in mainland China use it?

OpenAI services are subject to regional restrictions, so direct access by entities in mainland China faces both compliance and availability challenges. If you only need standard GPT-5.4 for general AI development, you can call it through the interfaces available on APIYI (apiyi.com). For high-sensitivity capabilities like GPT-5.4-Cyber, we recommend engaging with OpenAI's international business division through compliant channels.

Summary: A "Niche Yet Highly Impactful" Model

Back to our original question: What does GPT-5.4-Cyber really mean?

- For security researchers: This is an officially sanctioned key that unlocks analytical tasks other models can't handle. Its binary reverse engineering capabilities, in particular, will genuinely transform the daily workflows of blue teams.

- For general developers: You likely won't need this specific model, but don't feel anxious—the public version of GPT-5.4 is more than enough to cover 95% of your daily AI development needs. You can access it reliably and easily via APIYI at apiyi.com.

- For the industry: The product model of "identity verification + tiered access" will be adopted by more vertical scenarios, and the division of labor between third-party API platforms and official restricted models will become increasingly clear.

- For the competitive landscape: The head-to-head clash between OpenAI and Anthropic in cybersecurity models has begun, with their differing roadmaps reflecting their unique takes on "AI safety."

📢 Bottom Line Recommendation: Compliant security researchers should apply officially at chatgpt.com/cyber; general developers can stick with the standard GPT-5.4 via APIYI at apiyi.com. Stay wary of any third-party services claiming they can "proxy invoke GPT-5.4-Cyber"—they are either violating terms of service or outright scamming you.

Author: APIYI Team · Continuously tracking Large Language Model release and integration trends · apiyi.com