Author's Note: A deep dive into the March 31, 2026, incident where Claude Code accidentally leaked 512,000 lines of source code via npm source maps: what was exposed, the hidden Capybara model and Undercover Mode, and the impact on AI Agent startups.

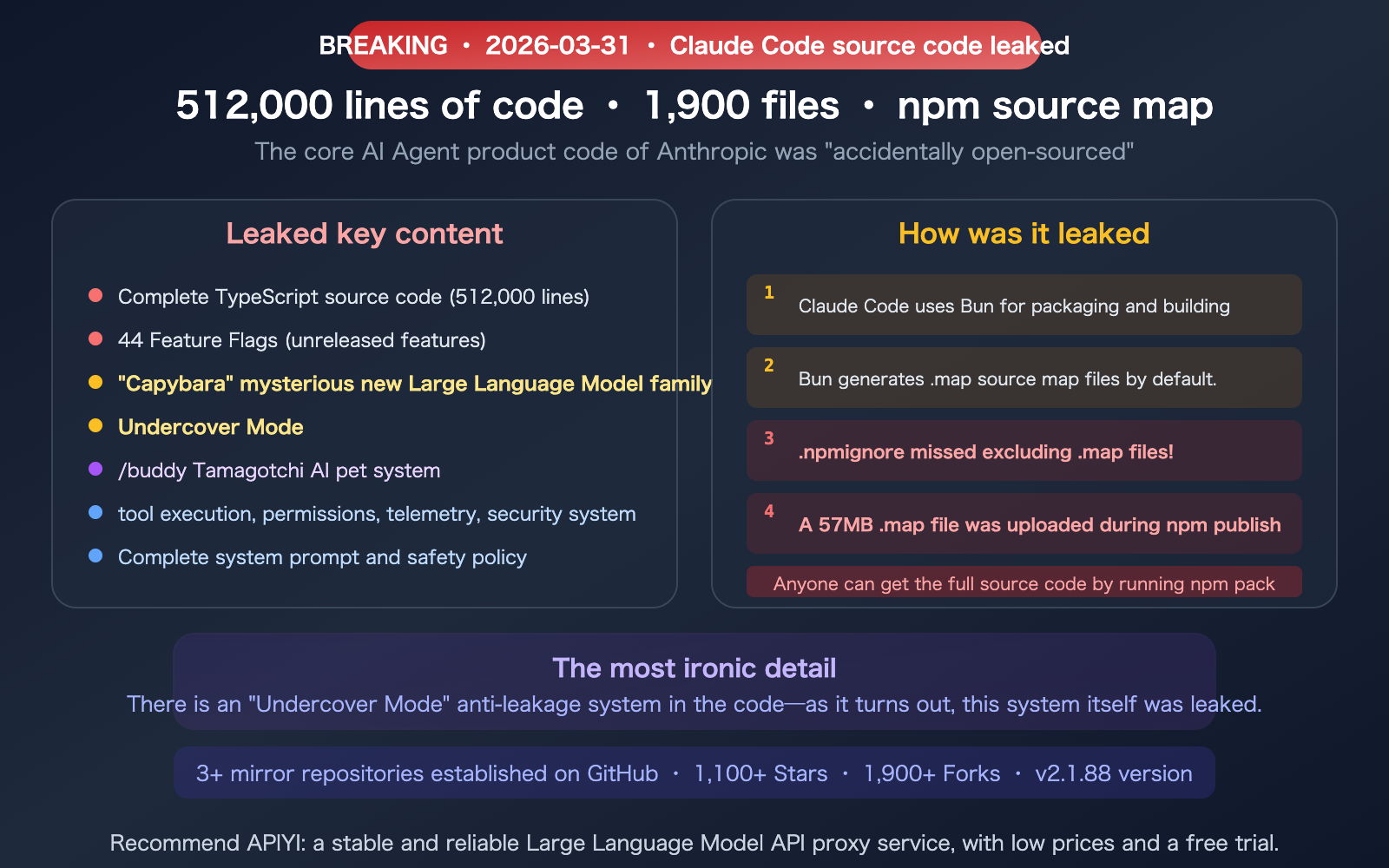

On March 31, 2026, a build configuration oversight led to one of the largest "accidental open-source" events in the AI industry: the entire 512,000-line TypeScript codebase of Anthropic's Claude Code, roughly 1,900 files, was exposed through npm source map files. Security researcher Chaofan Shou was the first to discover and disclose it, and within hours the community had set up multiple GitHub mirrors, which have since garnered over 1,100 stars. Ironically, the code contained a subsystem called "Undercover Mode," designed specifically to prevent internal information from leaking, yet the entire codebase leaked anyway.

This article breaks down the key contents of the leak, its impact on AI Agent startups, and why this "accidental open-source" event might actually drive the industry forward.

Core Value: Understand the internal architecture, hidden features, and engineering practices of Claude Code, and assess the impact of this leak on the AI Agent industry.

Key Facts of the Incident

| Item | Details |
|---|---|
| Leak Date | March 31, 2026 |
| Discovered By | Security researcher Chaofan Shou |
| Leak Method | npm package v2.1.88 included a 57 MB .map source map file |
| Scale of Leak | 1,906 files, 512,000+ lines of TypeScript code |
| Cause | .npmignore failed to exclude .map files + Bun generates source maps by default |
| First Time? | No, a similar incident occurred in February 2025 (this is the second time) |
| Anthropic's Response | Immediately released a new version removing the .map files and deleted the old version from npm |
| Community Response | 3+ GitHub mirror repositories, 1,100+ stars |
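
Why does a `.map` file expose source code at all? In the Source Map v3 format, an optional `sourcesContent` array can carry the full text of every original file listed in `sources`, so shipping the map effectively ships the source. The minimal sketch below (not a tool used by Anthropic or the researchers) lists which original files a map embeds; the file names in the toy map are hypothetical.

```typescript
// Only the Source Map v3 fields this sketch needs.
interface SourceMapV3 {
  version: number;
  sources: string[];                  // original file paths
  sourcesContent?: (string | null)[]; // optional: full text of each original file
  mappings: string;                   // VLQ-encoded position mappings (unused here)
}

interface EmbeddedSource {
  path: string;
  lines: number;
}

// Lists the original files whose complete text is embedded in the map.
// Anyone who downloads the package can read these without decompiling anything.
function embeddedSources(map: SourceMapV3): EmbeddedSource[] {
  const contents = map.sourcesContent ?? [];
  const result: EmbeddedSource[] = [];
  map.sources.forEach((path, i) => {
    const text = contents[i];
    if (typeof text === "string") {
      result.push({ path, lines: text.split("\n").length });
    }
  });
  return result;
}

// Toy map with hypothetical file names: one source embedded, one omitted (null).
const toyMap: SourceMapV3 = {
  version: 3,
  sources: ["src/main.ts", "src/tools/bash.ts"],
  sourcesContent: ["export const a = 1;\nexport const b = 2;", null],
  mappings: "",
};

console.log(embeddedSources(toyMap)); // one entry: src/main.ts (2 lines)
```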

What Was Leaked: 3 Explosive Discoveries

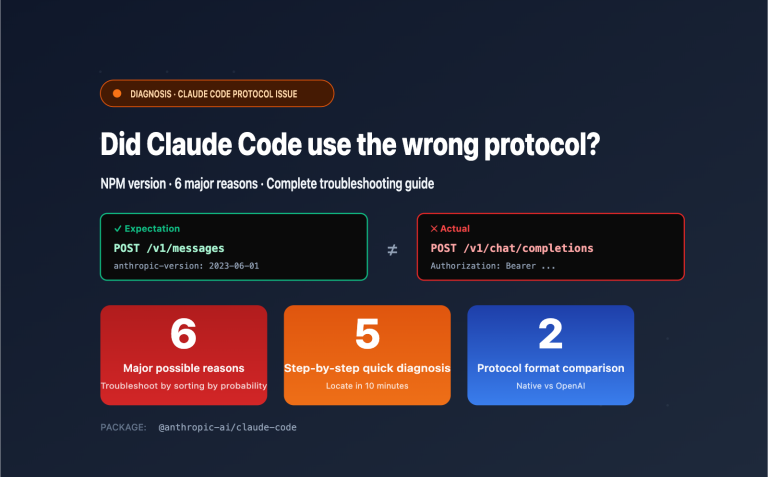

Discovery 1: The Mysterious "Capybara" Model Family

The code revealed a previously undisclosed model codename—Capybara—which appears in three tiers:

| Model Codename | Speculated Positioning |
|---|---|
| capybara | Standard version (possibly the next-gen Claude) |
| capybara-fast | Fast version (similar to Flash/Haiku positioning) |
| capybara-fast[1m] | Fast version + 1M context window |

The community is speculating that Capybara might be the internal codename for the Claude 5 series, though Anthropic has yet to comment.

Discovery 2: Undercover Mode

This is the most controversial finding. The code contains a full "Undercover Mode" subsystem, with a system prompt that explicitly states:

"You are operating UNDERCOVER… Your commit messages… MUST NOT contain ANY Anthropic-internal information. Do not blow your cover."

In other words: Anthropic has been using Claude Code to anonymously contribute to public open-source projects—and they specifically designed a mode to hide their identity.

This has sparked a heated debate in the open-source community: Is it "deceptive" for an AI company to submit AI-generated code to open-source projects anonymously?

Discovery 3: /buddy Tamagotchi AI Pet

Hidden in the code is a fully-fledged virtual pet system—the /buddy command summons an AI pet, complete with species, rarity, stats, hats, accessories, and animations. It shows that Anthropic’s internal engineering culture has a playful side—they hid a pet-raising game inside a serious coding tool.

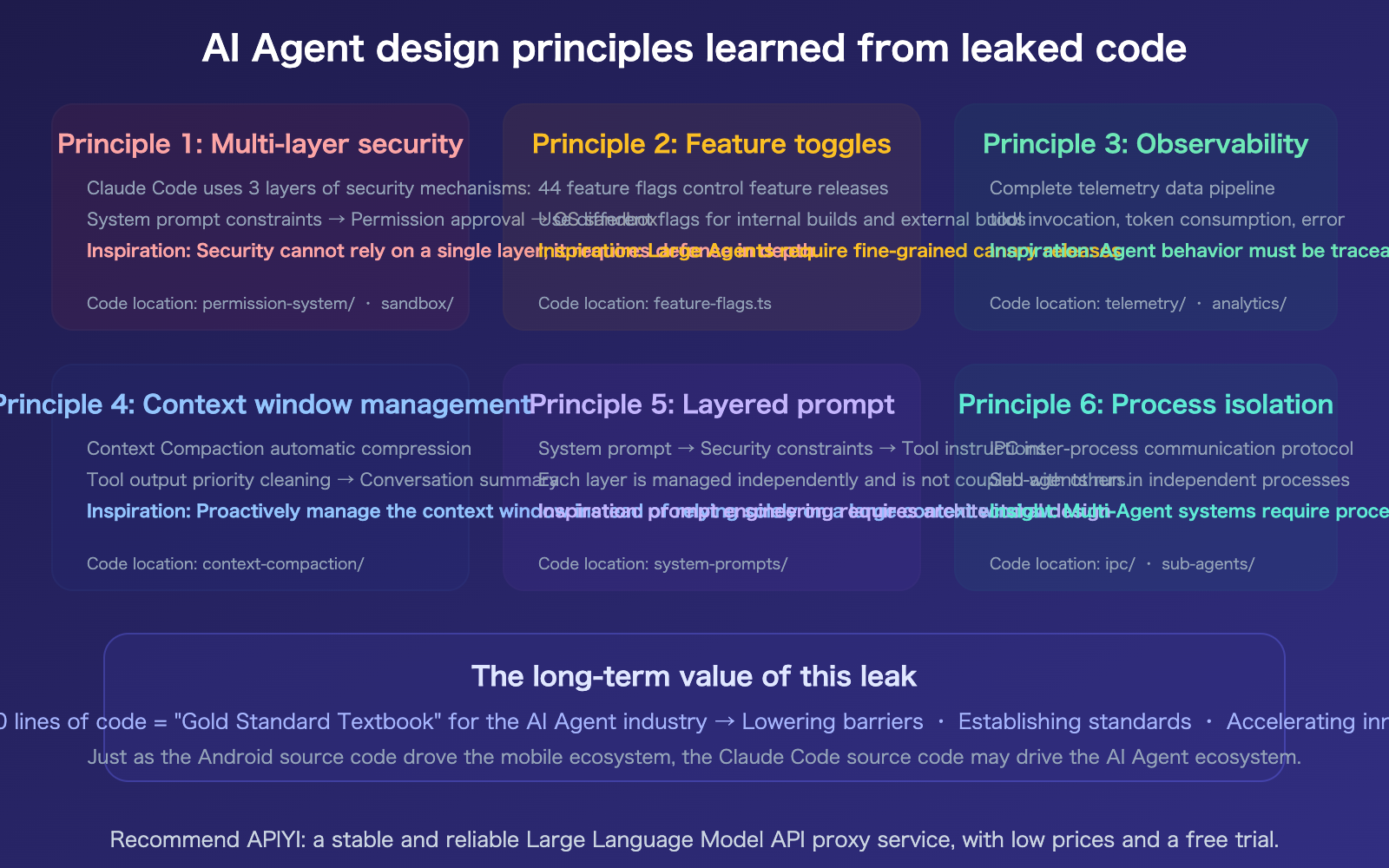

The Leaked Engineering Architecture: How Industry-Grade AI Agents Are Built

Setting the gossip aside, the most valuable part of this leak is the engineering architecture of Claude Code—it’s the first production-grade AI Agent codebase to be fully exposed to the world.

Core Architecture Highlights

| Architectural Component | Leaked Implementation Details | Industry Value |
|---|---|---|
| Tool Execution System | Full implementation of Bash/File IO/Computer Use | How AI Agents safely execute system commands |
| Permissions & Approval Flow | Multi-layer permission bypass and approval mechanisms | Security boundary design for production-grade Agents |
| Telemetry & Monitoring | Complete data collection and analysis pipeline | How to monitor Agent behavior and performance |
| Context Compression | Implementation logic for Context Compaction | Context management strategies for ultra-long conversations |
| System Prompts | All security-related system prompts | How to use prompts to constrain Agent behavior |
| IPC Communication | Inter-process communication protocol | Engineering practices for multi-Agent coordination |
| Feature Flags | A complete list of 44 feature toggles | Anthropic's product roadmap |
| Sandbox Mechanism | Isolated implementation for code execution | Best practices for secure Agent execution |
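
The table lists Context Compaction without describing how Claude Code performs it, so the following is only a generic illustration of the idea, under our own assumptions: once an estimated token budget is exceeded, older messages are folded into a single summary while the system prompt and the most recent turns are kept verbatim. The 4-characters-per-token estimate, the `summarize` callback (a model call in a real agent), and every name here are hypothetical.

```typescript
interface Message {
  role: "system" | "user" | "assistant";
  content: string;
}

// Crude token estimate (~4 characters per token); real agents use the model's tokenizer.
function estimateTokens(m: Message): number {
  return Math.ceil(m.content.length / 4);
}

// Hypothetical summarizer signature; in a real agent this would be a model call.
type Summarizer = (older: Message[]) => string;

// When the history exceeds the budget, replaces older non-system messages with
// one summary message, keeping the last `keepRecent` messages verbatim.
function compactContext(
  history: Message[],
  budgetTokens: number,
  keepRecent: number,
  summarize: Summarizer,
): Message[] {
  const total = history.reduce((sum, m) => sum + estimateTokens(m), 0);
  if (total <= budgetTokens) return history; // under budget: leave untouched

  const system = history.filter((m) => m.role === "system");
  const rest = history.filter((m) => m.role !== "system");
  const cut = Math.max(0, rest.length - keepRecent);
  if (cut === 0) return history; // nothing old enough to fold away

  const older = rest.slice(0, cut);
  const recent = rest.slice(cut);
  const summary: Message = {
    role: "user",
    content: `[Summary of ${older.length} earlier messages] ${summarize(older)}`,
  };
  return [...system, summary, ...recent];
}

// Usage: a toy history that is well over a 50-token budget.
const history: Message[] = [
  { role: "system", content: "You are a coding agent." },
  { role: "user", content: "a".repeat(400) },
  { role: "assistant", content: "b".repeat(400) },
  { role: "user", content: "latest question" },
];
const compacted = compactContext(history, 50, 1, (older) => `${older.length} msgs folded`);
console.log(compacted.map((m) => m.role)); // roles: system, user (summary), user (latest)
```

One design caveat: if the recent messages alone exceed the budget, the result is still over budget, so a production system would need a further fallback.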

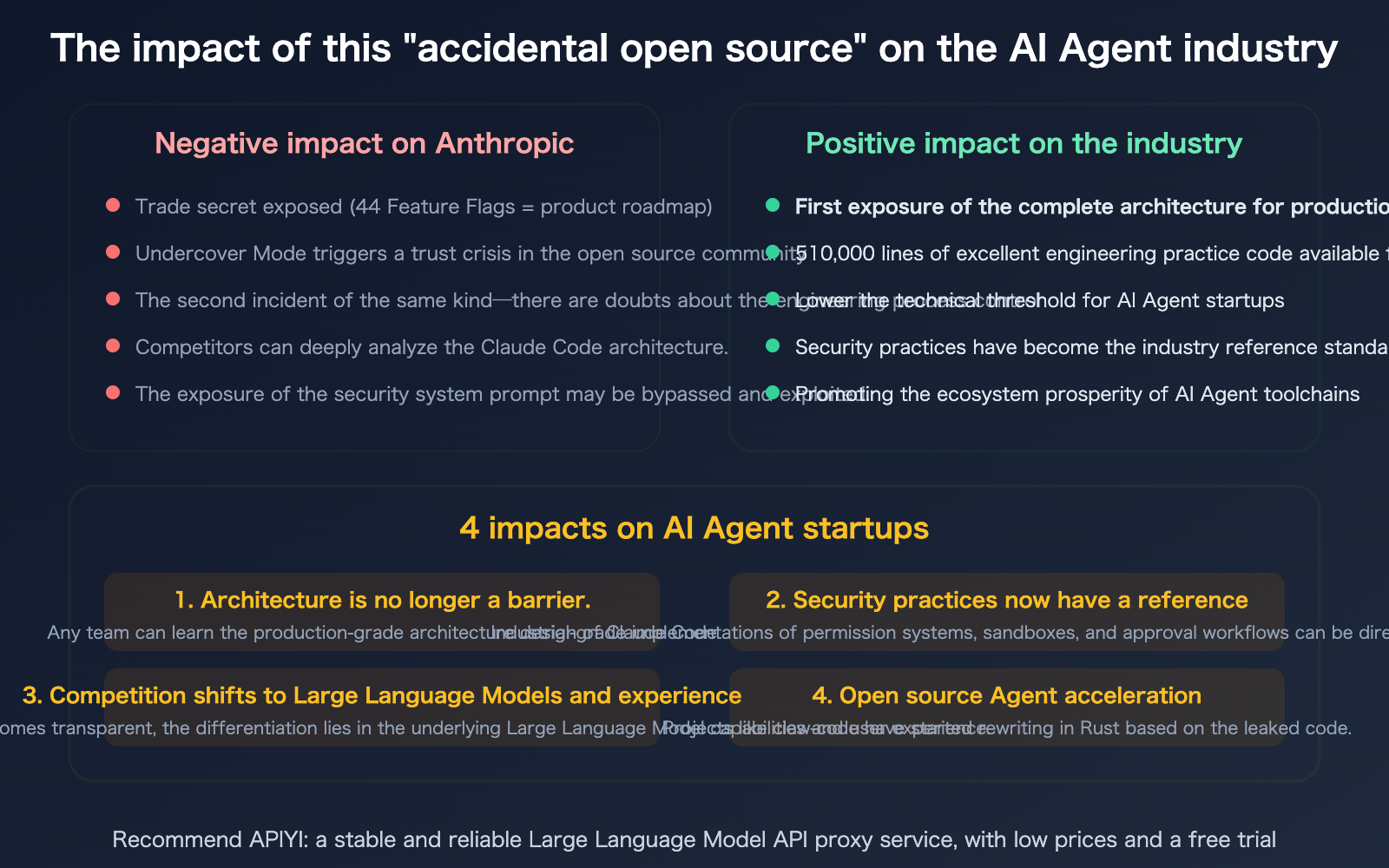

Why This "Accidental Open Source" Could Drive Industry Progress

Lessons Learned from the Leak

Before this leak, there was no public "gold standard" for how a production-grade AI Agent should be built. Claude Code is currently the #1 coding agent on the Arena Code leaderboard, and its 512,000 lines of code now serve as a complete textbook of industry best practices.

| Engineering Practice | Claude Code Approach | Industry Takeaway |
|---|---|---|
| Secure Tool Execution | Multi-layer sandboxing + permission approval + command allowlist | Agents must have clear security boundaries for command execution |
| Context Management | Automatic Context Compaction | Long-running sessions require proactive context management strategies |
| Feature Flags | 44 feature flags for gradual rollout | Large-scale agent products need granular release control |
| Telemetry Design | Comprehensive behavior collection and analysis pipeline | Observability of agent behavior is critical |
| Multi-Agent Coordination | IPC (Inter-Process Communication) + structured messaging | Standardized communication for multi-agent systems |
| System Prompt Engineering | Layered security prompts + role constraints | Design patterns for production-grade prompts |
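
The "Secure Tool Execution" row describes permission approval combined with a command allowlist. The article does not reproduce that code, so here is a generic sketch of such a layered check, with a hypothetical rule format and function names: explicit deny rules win, commands containing shell control characters are never auto-approved, allowlisted prefixes run without a prompt, and everything else is sent to the user for approval.

```typescript
type Decision = "allow" | "deny" | "ask";

// Hypothetical rule format: command prefixes matched on whole words.
interface PermissionRules {
  deny: string[];
  allow: string[];
}

// Shell control characters that can chain, substitute, or redirect commands.
const SHELL_CONTROL = /[;&|`$<>\n]/;

// True if `cmd` is exactly `prefix` or starts with `prefix` plus a space,
// so an allow rule for "ls" does not also approve "lsblk".
function matchesPrefix(cmd: string, prefix: string): boolean {
  return cmd === prefix || cmd.startsWith(prefix + " ");
}

// Layered check: explicit denials first, then refuse to auto-approve anything
// with shell control characters, then the allowlist, otherwise ask the user.
function checkBashCommand(command: string, rules: PermissionRules): Decision {
  const cmd = command.trim();
  if (rules.deny.some((p) => matchesPrefix(cmd, p))) return "deny";
  if (SHELL_CONTROL.test(cmd)) return "ask";
  if (rules.allow.some((p) => matchesPrefix(cmd, p))) return "allow";
  return "ask";
}

// Illustrative rules only.
const rules: PermissionRules = {
  deny: ["rm -rf"],
  allow: ["git status", "git diff", "ls"],
};

console.log(checkBashCommand("ls -la", rules));             // allow
console.log(checkBashCommand("rm -rf build", rules));       // deny
console.log(checkBashCommand("ls && rm -rf build", rules)); // ask
console.log(checkBashCommand("lsblk", rules));              // ask
```

Prefix matching is easy to evade (for example `rm -fr` instead of `rm -rf`), which is why unknown commands default to asking rather than allowing, and why checks like this are paired with a sandbox rather than relied on alone.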

Specific Impact on AI Agent Startups

Impact 1: Lowering the Technical Barrier

Previously, building a production-grade AI Agent meant figuring out security boundaries, permission systems, and context management from scratch. Now, with a complete reference implementation from Claude Code, startup teams can learn directly from (or even reference) its architectural design patterns, significantly shortening the time from 0 to 1.

Impact 2: Shifting the Focus of Competition

When architecture is no longer a secret, differentiation in AI Agents will shift from "how to build it" to "which model to use" and "how good the experience is." Model capabilities (e.g., Claude Opus 4.6 vs. GPT-5.4) and user experience will become the core competitive advantages—which is exactly why model proxy services like APIYI (apiyi.com) are becoming even more valuable.

Impact 3: Accelerating the Open Source Agent Ecosystem

The leaked code is already being utilized by the community in several ways:

- The `claw-code` project is rewriting the core logic of Claude Code in Rust.
- Multiple GitHub repositories are dedicated to architectural analysis and documentation.
- Security researchers are actively analyzing permission bypasses and potential vulnerabilities.

Impact 4: Establishing Security Standards

Claude Code’s permission system, sandboxing mechanism, and security prompt design may well become the de facto standard for AI Agent security—simply because it's currently the only fully exposed production-grade implementation.

Lessons for Developers

Security Lessons for Build Configurations

The technical reason for this leak was incredibly simple: a missing .map file exclusion in .npmignore. This serves as a reminder to every team publishing npm packages:

```gitignore
# Must be included in .npmignore
# (*.map alone already matches the two more specific patterns below)
*.map
*.js.map
*.d.ts.map
```

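Alternatively, declaring only the files you intend to ship in the `files` field of your `package.json` is safer than an exclusion-based approach, because anything not listed is left out by default. Keep the patterns narrow, though: listing a whole directory such as `dist/` still publishes any `.map` files inside it.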
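Whichever approach you choose, it is worth checking what will actually ship before publishing. `npm pack --dry-run --json` lists the files that would go into the tarball, and the sketch below scans such a listing for source maps. It assumes the JSON is an array of package entries, each with a `files` array of `{ path, size }` objects (verify this against your npm version); the package name and sizes in the sample are made up for illustration.

```typescript
// Assumed subset of one entry in `npm pack --dry-run --json` output.
interface PackedFile {
  path: string;
  size: number; // bytes
}
interface PackEntry {
  name: string;
  files: PackedFile[];
}

// Returns every file in the tarball listing that looks like a source map, largest first.
function findSourceMaps(entries: PackEntry[]): PackedFile[] {
  const maps: PackedFile[] = [];
  for (const entry of entries) {
    for (const file of entry.files) {
      if (file.path.endsWith(".map")) maps.push(file);
    }
  }
  return maps.sort((a, b) => b.size - a.size);
}

// Hypothetical dry-run listing; in CI you would parse the real command output instead.
const packed: PackEntry[] = [
  {
    name: "example-cli",
    files: [
      { path: "package.json", size: 1200 },
      { path: "cli.js", size: 9000000 },
      { path: "cli.js.map", size: 57000000 },
    ],
  },
];

const leaks = findSourceMaps(packed);
if (leaks.length > 0) {
  // A real pre-publish hook would exit non-zero here to block `npm publish`.
  console.error(`Refusing to publish, source maps found: ${leaks.map((f) => f.path).join(", ")}`);
}
```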

If You're an AI Agent Founder

| What You Should Do | Why |
|---|---|
| Study Claude Code's permission system | It's the industry's most mature Agent security implementation |
| Learn Context Compaction | A production-grade solution for managing long-session context |
| Reference Feature Flag design | A canary release system with 44 toggles |
| Don't copy code directly | Leaked code is copyrighted; learn the architectural design patterns instead |
| Follow the Capybara model | It may hint at the direction of the next generation of Claude |
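
The leak reportedly exposes 44 feature flags, but the article does not show how they are evaluated. For readers who want to try the canary-release idea, here is a generic percentage-rollout sketch: a global kill switch plus a deterministic hash bucket per user. The FNV-1a hash, the field names, and the flag name are our illustrative choices, not details from the leaked code.

```typescript
interface FeatureFlag {
  name: string;
  enabled: boolean;       // global kill switch
  rolloutPercent: number; // 0-100: share of users who get the feature
}

// 32-bit FNV-1a over UTF-16 code units: cheap and stable across runs,
// so a given user always lands in the same bucket.
function fnv1a(input: string): number {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0; // multiply by FNV prime, keep unsigned 32 bits
  }
  return hash;
}

// Hashing "flag:user" (not just the user id) gives each flag an independent
// slice of users, so a 10% rollout of one flag is not the same 10% as another.
function isEnabled(flag: FeatureFlag, userId: string): boolean {
  if (!flag.enabled) return false;
  const bucket = fnv1a(`${flag.name}:${userId}`) % 100; // 0..99
  return bucket < flag.rolloutPercent;
}

// Hypothetical flag for illustration.
const canary: FeatureFlag = { name: "hypothetical-new-ui", enabled: true, rolloutPercent: 10 };
console.log(isEnabled(canary, "user-42")); // same answer on every call for this user
```

Raising `rolloutPercent` only adds users: anyone already in the feature keeps it, because their bucket does not change.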

If You're a Claude Code User

This leak won't affect your user experience—Claude Code's core capabilities come from the underlying Claude Opus 4.6 model, not the client-side code. However, you should keep an eye on:

- Updating to the latest version—Anthropic has already pushed a fix.

- The Capybara model—Likely a new model coming soon.

- 44 Feature Flags—Indicating that many new features are about to launch.

🎯 Industry Insight: While this leak is negative for Anthropic in the short term (exposed trade secrets, damaged trust), it's positive for the AI Agent industry in the long run. Much like how Android's open-source nature propelled the mobile ecosystem, the accidental exposure of the Claude Code source could become an "industry benchmark" for AI Agent engineering practices.

Whether you use Claude Code or other AI Agent tools, you can manage your underlying model invocation at a 20% discount via APIYI at apiyi.com.

FAQ

Q1: Does this leak affect the security of Claude Code?

A client-side code leak is not the same as a server-side breach. The core security of Claude Code (model inference, API authentication, and data transmission encryption) resides on Anthropic's servers, which were not compromised. However, now that the client-side permission bypass logic and security prompts are exposed, they could theoretically be exploited to lower local security defenses. We recommend updating to the latest version immediately.

Q2: Is Capybara the same as Claude 5?

The community is speculating, but Anthropic hasn't confirmed anything. The code references three model codenames: capybara, capybara-fast, and capybara-fast[1m], suggesting a complete model family (standard, fast, and large-context versions). Based on the naming convention, Anthropic may be using animal codenames for their new model series. We'll have to wait for an official announcement from Anthropic for details.

Q3: What does “Undercover Mode” mean?

Undercover Mode indicates that Anthropic has been using Claude Code to anonymously contribute code to public open-source projects. The system prompt instructs Claude "not to reveal that it is from Anthropic." This has sparked an ethical debate: Is it a violation of transparency commitments to the open-source community for an AI company to use AI tools to contribute code anonymously? There is currently no industry consensus.

Q4: Can I build a product based on the leaked code?

Legally, it's not recommended. The leaked code is still protected by Anthropic's copyright: "accidental disclosure" is not the same as an "open-source license." You can study its architectural design patterns and engineering practices (which fall under the realm of "ideas"), but you cannot copy the code directly into your product. Some people are already rewriting it in Rust (such as the claw-code project); an independent reimplementation is legally safer than copying, although a strict "clean-room" process would also keep the people writing the new code away from the leaked source.

Summary

Key takeaways from the Claude Code source code leak:

- The Incident: On March 31, 2026, 512,000 lines of TypeScript source code were accidentally leaked via npm source maps. The culprit? A missing `.map` file exclusion in `.npmignore`, marking the second time this type of incident has occurred.
- Three Major Revelations: The discovery of the mysterious "Capybara" model family, an "Undercover Mode" for contributing to open source, and the `/buddy` Tamagotchi-style pet system.
- Industry Impact: While negative for Anthropic in the short term due to the exposure of trade secrets, it's a net positive for the industry in the long run. It provides the first complete, production-grade AI Agent architecture reference, which could potentially drive ecosystem development much like the open-sourcing of Android.

We recommend using APIYI (apiyi.com) to access Claude Opus 4.6 and other AI models at a 20% discount. Regardless of how the industry shifts, the underlying model capability remains the core competitive advantage.

📚 References

- VentureBeat: Claude Code Source Code Leak Report: Authoritative coverage of the event.
  - Link: venturebeat.com/technology/claude-codes-source-code-appears-to-have-leaked-heres-what-we-know
  - Note: Includes leak details and Anthropic's response.
- GitHub Mirror Repository: Community backup and analysis of the leaked code.
  - Link: github.com/Kuberwastaken/claude-code
  - Note: Contains the full source code and architectural analysis documentation.
- DEV Community Technical Analysis: A technical breakdown of the leaked code.
  - Link: dev.to/gabrielanhaia/claude-codes-entire-source-code-was-just-leaked-via-npm-source-maps-heres-whats-inside-cjo
  - Note: Includes key findings and engineering practice analysis.
- APIYI Documentation Center: 20% off access to Claude Opus 4.6 API.
  - Link: docs.apiyi.com
  - Note: Underlying model capability is the core of any AI Agent; access it at the best price via APIYI.

Author: APIYI Technical Team

Technical Discussion: Feel free to join the conversation in the comments. For more resources, visit the APIYI documentation center at docs.apiyi.com.