Author's Note: This deep dive covers the core capabilities, performance benchmarks, and API integration for the MiniMax-M2.7 and M2.7-highspeed models, helping you secure flagship-level AI performance at a fraction of the cost.

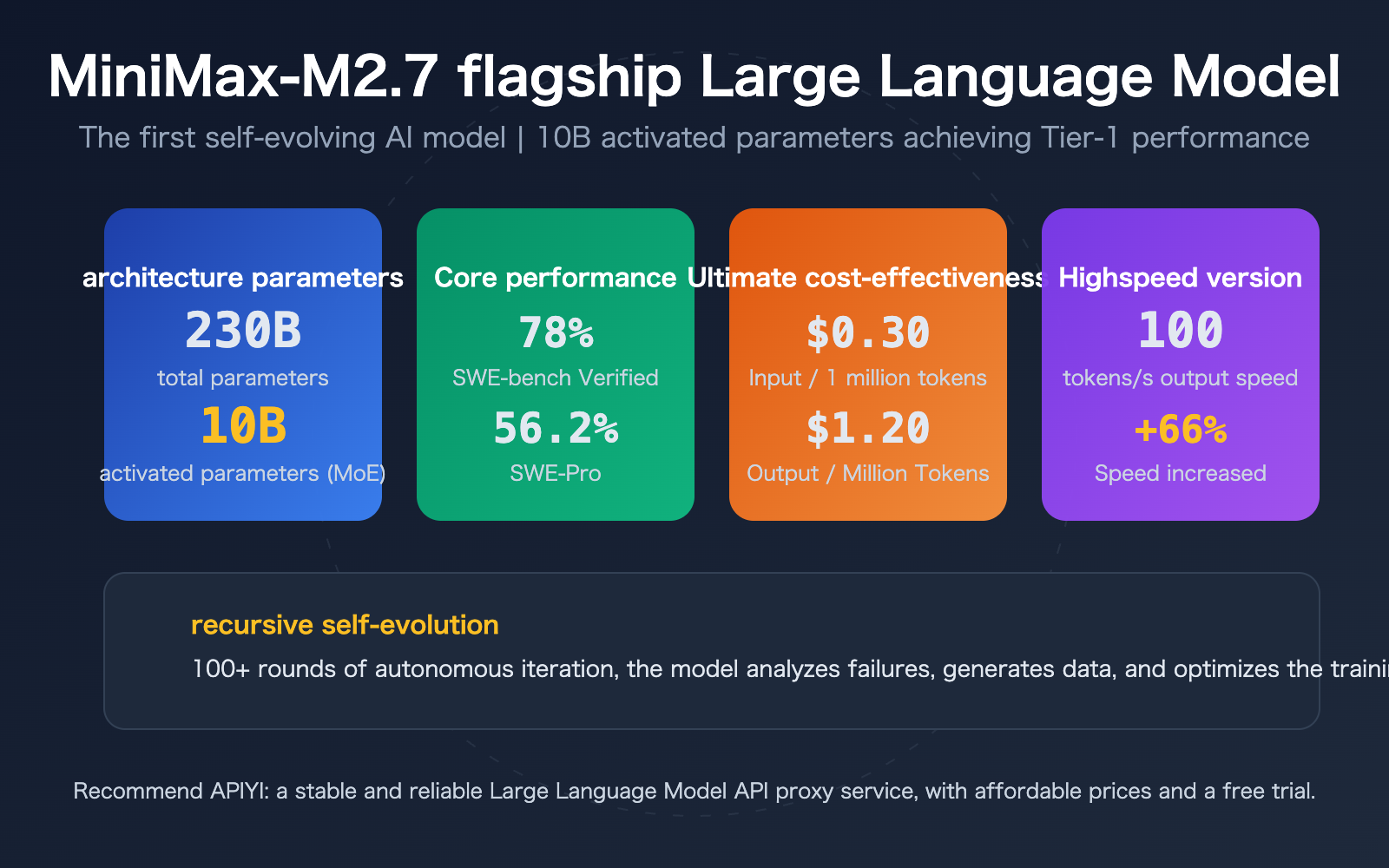

On March 18, 2026, MiniMax launched the MiniMax-M2.7 flagship Large Language Model, the first AI model to actively participate in its own evolutionary process. With only 10B active parameters, it achieves Tier-1 performance on par with Claude Opus 4.6 and GPT-5, all while costing just 1/50th of mainstream flagship models. The simultaneously released MiniMax-M2.7-highspeed version boosts output speed by 66%, reaching 100 tps.

Core Value: Through real-world benchmark data and integration tutorials, this guide will help you decide if the MiniMax-M2.7 is the most cost-effective flagship model for your needs.

MiniMax-M2.7 Key Highlights

| Feature | Description | Value |

|---|---|---|

| 230B Total Params / 10B Active | Sparse Mixture-of-Experts (MoE) architecture; only 10B parameters activated per inference | Flagship performance + ultra-low inference costs |

| Recursive Self-Evolution | Model autonomously runs 100+ iterations to optimize its own training process | 30% performance boost without human intervention |

| SWE-bench 78% | Software engineering benchmark significantly outperforms Opus 4.6's 55% | Top choice for coding and engineering tasks |

| 1/50th the Price of Opus | $0.30/M input, $1.20/M output tokens | Drastic reduction in enterprise-scale deployment costs |

MiniMax-M2.7 Technical Architecture Deep Dive

MiniMax-M2.7 utilizes a Sparse Mixture-of-Experts (MoE) Transformer architecture. While the total parameter count is 230B, only 10B parameters are activated per token. This design makes M2.7 the most compact model in its performance class—delivering Tier-1 results comparable to Claude Opus 4.6 and GPT-5 with minimal computational resources.

The context window reaches 205K tokens (approximately 307 A4 pages), supporting scenarios like long-document analysis and large codebase comprehension. In the Artificial Intelligence Index evaluation, M2.7 ranked first among 136 peer models with a perfect score of 50.

MiniMax-M2.7 Recursive Self-Evolution Mechanism

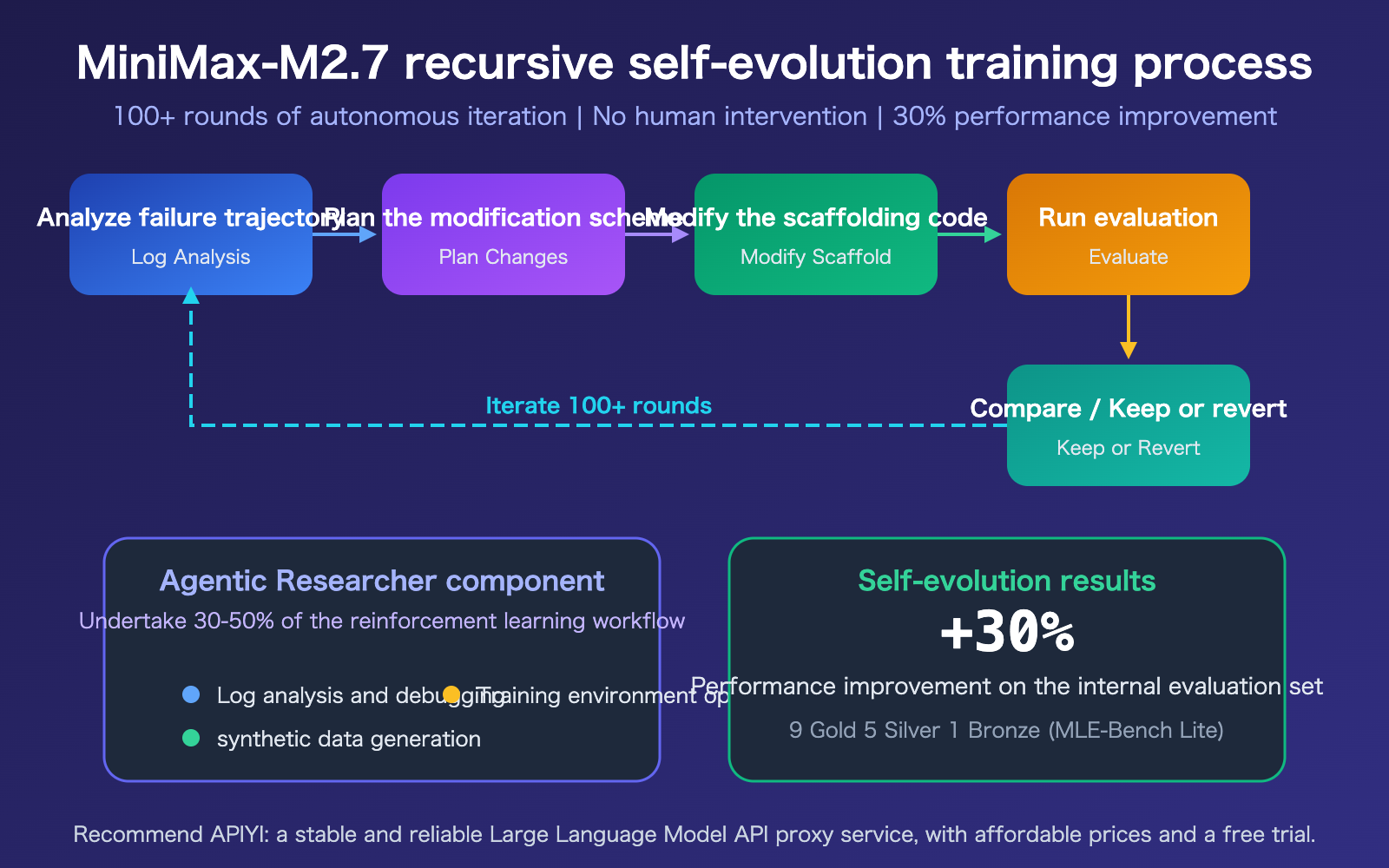

"Recursive self-evolution" is the most groundbreaking technical highlight of M2.7. During training, the model autonomously executes a complete iterative loop: analyzing failure trajectories → planning modifications → modifying training scaffold code → running evaluations → comparing results → deciding whether to keep or revert changes. This process ran autonomously for over 100 rounds.

Its core component, the "Agentic Researcher," handles 30-50% of the reinforcement learning workflow, including log analysis and debugging, synthetic data generation, and training environment optimization. This ultimately resulted in a 30% performance improvement without any human intervention.

MiniMax-M2.7 Performance Benchmarks and Model Comparison

MiniMax-M2.7 Benchmark Results

| Benchmark | M2.7 Score | Claude Opus 4.6 | GPT-5 Series | Notes |

|---|---|---|---|---|

| SWE-bench Verified | 78% | 55% | — | Software engineering tasks, leading by a wide margin |

| SWE-Pro | 56.2% | ~57% | 56.2% (Codex) | Near flagship level |

| VIBE-Pro | 55.6% | — | — | End-to-end project delivery |

| Terminal Bench 2 | 57.0% | — | — | Complex engineering systems |

| MLE-Bench Lite | 66.6% | 75.7% | 71.2% (5.4) | ML competitions, 9 Gold, 5 Silver, 1 Bronze |

| GDPval-AA ELO | 1495 | — | — | #1 in office productivity |

MiniMax-M2.7 Price Comparison

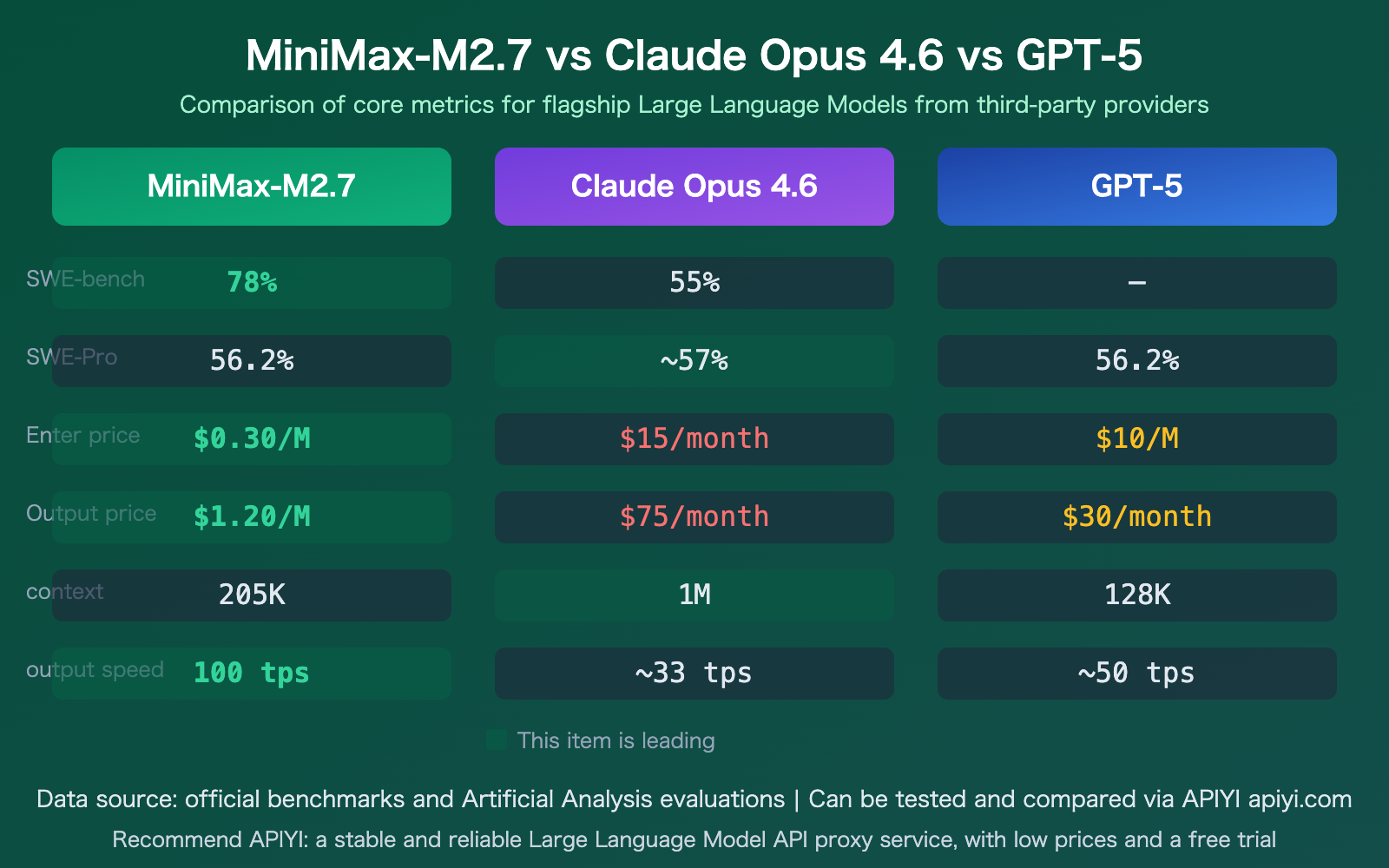

M2.7’s pricing strategy is highly disruptive. At nearly the same performance level, the cost is just a fraction of mainstream flagship models:

| Metric | MiniMax-M2.7 | Claude Opus 4.6 | GPT-5 | Cost Difference |

|---|---|---|---|---|

| Input Price | $0.30/M | $15/M | $10/M | 50x / 33x cheaper |

| Output Price | $1.20/M | $75/M | $30/M | 62x / 25x cheaper |

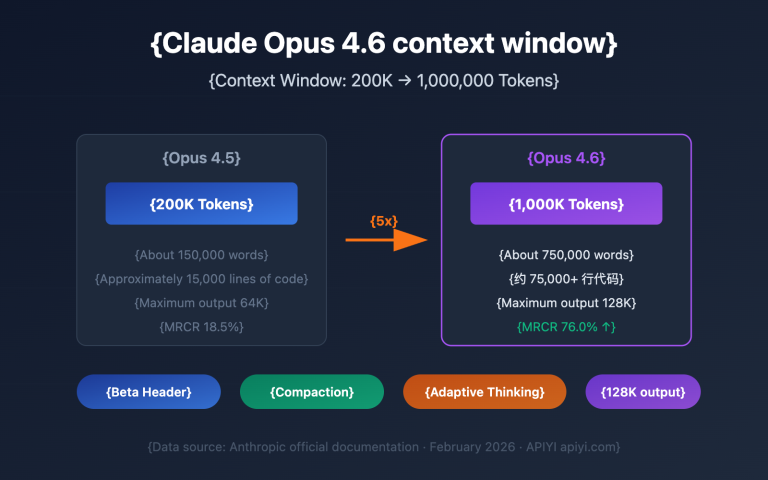

| Context Window | 205K | 1M | 128K | Between the two |

| Active Parameters | 10B | — | — | Smallest Tier-1 model |

🎯 Recommendation: MiniMax-M2.7 excels in programming and engineering tasks with an incredible price-to-performance ratio. We recommend testing it via the APIYI (apiyi.com) platform, which supports unified model invocation for both MiniMax-M2.7 and M2.7-highspeed, making it easy to compare against other flagship models.

MiniMax-M2.7-highspeed Overview

MiniMax-M2.7-highspeed is the performance-optimized version of the M2.7 flagship series. It delivers the exact same results as the standard version—the intelligence level is identical, but the highspeed version is specifically designed for latency-sensitive applications.

Key Advantages of MiniMax-M2.7-highspeed

- Output Speed: Reaches 100 tokens/s, a 66% improvement over the standard version

- Sub-second Latency: Optimized time-to-first-token, perfect for real-time interaction

- Enhanced Inference Backbone: The underlying inference engine is specially optimized, not just a simple quantization downgrade

- Result Consistency: Produces identical outputs to the standard version without sacrificing intelligence

Use Cases for MiniMax-M2.7-highspeed

| Scenario | Description | Why choose highspeed |

|---|---|---|

| Interactive Coding Assistants | Real-time code completion and refactoring in IDEs | Sub-second response improves coding flow |

| Real-time Agent Loops | Multi-step reasoning for Agent Loops | Reduces wait time per step, accelerating the overall process |

| High-throughput Enterprise Pipelines | Batch document processing, data extraction | 100 tps significantly reduces turnaround time |

| Online Customer Support | Real-time conversation and Q&A | Fast, seamless responses for a better user experience |

Recommendation: If your application has strict requirements for response speed, MiniMax-M2.7-highspeed is one of the fastest flagship-level models available today. You can invoke this model directly via APIYI (apiyi.com).

Getting Started with the MiniMax-M2.7 API

Minimal Example

Here is the simplest code to invoke MiniMax-M2.7 via the APIYI platform. You can get it running in just 10 lines:

import openai

client = openai.OpenAI(

api_key="YOUR_API_KEY",

base_url="https://vip.apiyi.com/v1"

)

response = client.chat.completions.create(

model="MiniMax-M2.7",

messages=[{"role": "user", "content": "Analyze the performance bottlenecks in this code and provide optimization suggestions"}]

)

print(response.choices[0].message.content)

View full implementation (including highspeed version switching)

import openai

from typing import Optional

def call_minimax_m27(

prompt: str,

model: str = "MiniMax-M2.7",

system_prompt: Optional[str] = None,

max_tokens: int = 2000,

use_highspeed: bool = False

) -> str:

"""

Invoke MiniMax-M2.7 or M2.7-highspeed

Args:

prompt: User input

model: Model name

system_prompt: System prompt

max_tokens: Maximum output tokens

use_highspeed: Whether to use the highspeed version

"""

if use_highspeed:

model = "MiniMax-M2.7-highspeed"

client = openai.OpenAI(

api_key="YOUR_API_KEY",

base_url="https://vip.apiyi.com/v1"

)

messages = []

if system_prompt:

messages.append({"role": "system", "content": system_prompt})

messages.append({"role": "user", "content": prompt})

response = client.chat.completions.create(

model=model,

messages=messages,

max_tokens=max_tokens

)

return response.choices[0].message.content

# Standard version invocation

result = call_minimax_m27(

prompt="Implement an efficient LRU cache in Python",

system_prompt="You are a senior Python engineer"

)

# Highspeed version invocation (suitable for real-time scenarios)

fast_result = call_minimax_m27(

prompt="Quickly explain the purpose of this code",

use_highspeed=True

)

Tip: Get free testing credits via APIYI (apiyi.com) to quickly verify how MiniMax-M2.7 performs in your specific business scenarios. The platform supports one-click switching between standard and highspeed versions.

MiniMax-M2.7 vs. Competitor Model Comparison

| Plan | Core Features | Use Cases | Cost-Effectiveness |

|---|---|---|---|

| MiniMax-M2.7 | 10B active parameters, 78% on SWE-bench | Coding, Agent workflows, large-scale deployment | Extremely High ($0.30/$1.20) |

| M2.7-highspeed | 100 tps, 66% speed boost | Real-time interaction, IDE integration, Agent loops | Extremely High + Fast |

| Claude Opus 4.6 | 1M context window, strongest overall capability | Long documents, complex reasoning, general tasks | Medium ($15/$75) |

| GPT-5 | Mature ecosystem, multimodal support | General scenarios, multimodal applications | Medium ($10/$30) |

Note: The data above is derived from official benchmarks and third-party evaluations by Artificial Analysis. You can perform your own comparisons via the APIYI (apiyi.com) platform.

FAQ

Q1: Is there any difference in output between MiniMax-M2.7 and M2.7-highspeed?

The output is identical for both. The highspeed version achieves faster token generation (100 tps) by optimizing the inference engine, but it doesn't change the model's intelligence or output quality. If your use case isn't latency-sensitive, the standard version works just fine.

Q2: Does the “recursive self-evolution” of MiniMax-M2.7 mean the model keeps changing?

Not at all. Recursive self-evolution is a technical methodology MiniMax uses during the training phase—the model autonomously iterates to optimize its training process and parameters. Once released, the model weights are fixed. You'll get stable and consistent outputs from your model invocation.

Q3: How can I quickly start testing MiniMax-M2.7?

We recommend using an API aggregation platform that supports multiple models for testing:

- Visit APIYI at apiyi.com to register an account.

- Obtain your API key and free credits.

- Use the code examples in this article to verify quickly.

- Simply switch the

modelparameter to toggle between the standard and highspeed versions.

Summary

Key takeaways for MiniMax-M2.7 model invocation:

- Extreme Cost-Effectiveness: With 10B active parameters achieving Tier-1 performance at just 1/50th the price of Opus, it's the top choice for large-scale deployment.

- Outstanding Programming Capabilities: With a 78% score on SWE-bench Verified, it significantly outperforms competitors, making it excellent for software engineering tasks.

- Highspeed Version: The 100 tps output speed is perfect for real-time interactions and Agent loop scenarios, with intelligence levels identical to the standard version.

For developers and enterprise users looking for the best value, MiniMax-M2.7 is one of the most noteworthy flagship models on the market right now.

We recommend using APIYI (apiyi.com) to quickly verify the results. The platform provides free credits, a unified interface for multiple models, and supports one-click switching between the MiniMax-M2.7 standard and highspeed versions.

📚 References

-

MiniMax M2.7 Official Release: Details on model architecture and self-evolution technology

- Link:

minimax.io/news/minimax-m27-en - Description: Official technical blog featuring benchmarks and architectural details.

- Link:

-

MiniMax M2.7 Model Page: Technical specifications and API documentation

- Link:

minimax.io/models/text/m27 - Description: Model parameters, pricing, and integration guides.

- Link:

-

Artificial Analysis Evaluation: Independent third-party performance review

- Link:

artificialanalysis.ai/models/minimax-m2-7 - Description: Independent performance data covering speed and intelligence metrics.

- Link:

-

APIYI Platform Documentation: Quick integration guide for MiniMax-M2.7

- Link:

docs.apiyi.com - Description: How to obtain your API key, view the model list, and access code examples for model invocation.

- Link:

Author: APIYI Technical Team

Technical Discussion: Feel free to join the discussion in the comments section. For more resources, visit the APIYI documentation center at docs.apiyi.com.