On April 21, 2026, OpenAI officially released ChatGPT Images 2.0. Its corresponding API model, gpt-image-2, brings a suite of powerful upgrades, including advanced reasoning, real-time web search, multi-image consistency, and high-precision text rendering.

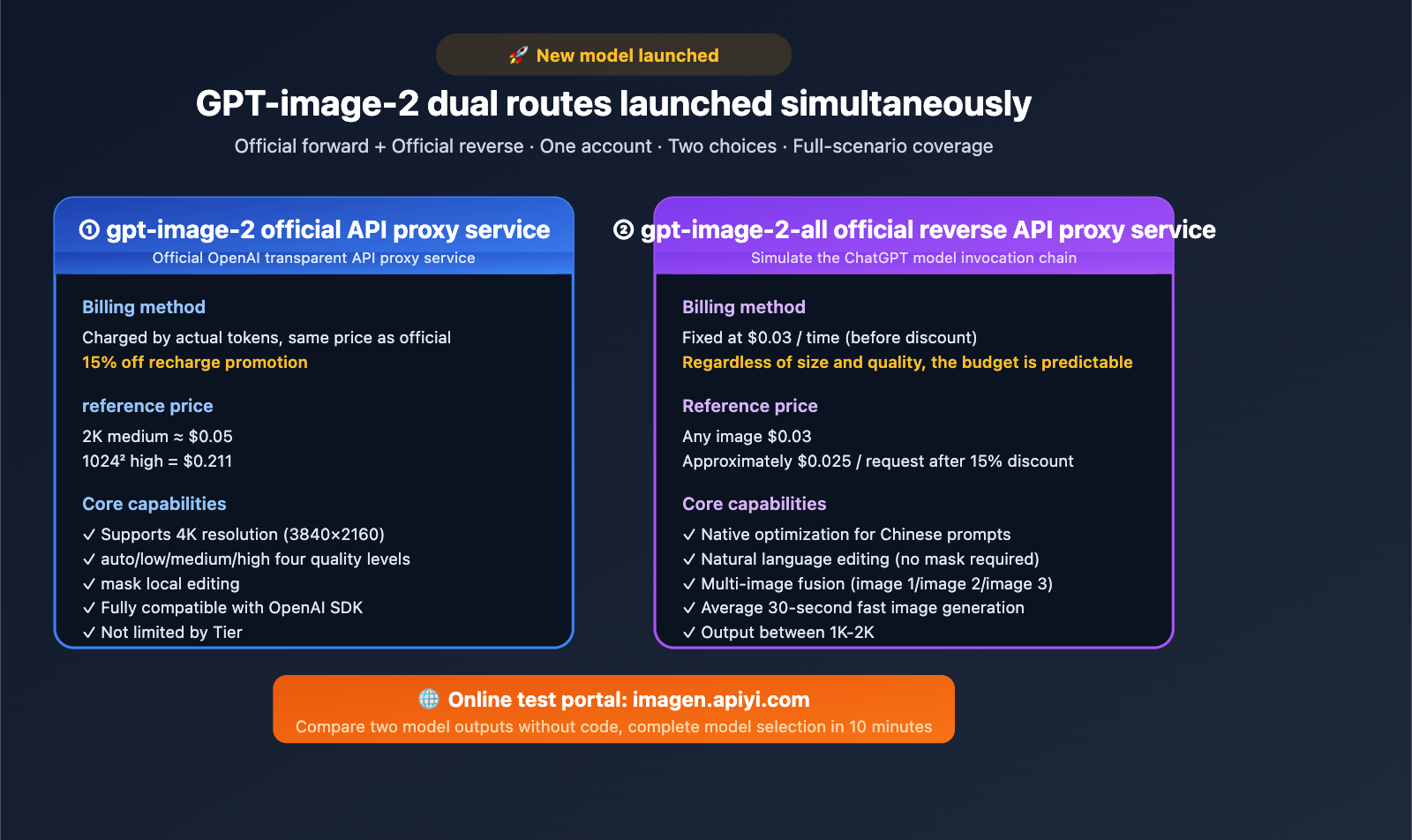

Following this launch, APIYI has simultaneously rolled out two distinct access routes for gpt-image-2:

- ① Official Proxy Version, gpt-image-2: Pay-as-you-go billing with pricing identical to OpenAI’s official rates, featuring a 15% discount on top-ups, stable supply, and unlimited concurrency.

- ② Official Reverse-Engineered Version, gpt-image-2-all: Per-request billing at $0.03/request (pre-discount), offering simple integration and predictable costs.

This means developers can leverage both technical routes under a single account, allowing for flexible choices based on specific business needs to balance quality, cost, and stability. This article breaks down the core differences, pricing structures, parameter support, typical use cases, and quick-start guides for both models.

1. Quick Overview: APIYI GPT-image-2 Dual Model Launch

You can quickly understand the core differences between the two models in the table below.

| Dimension | gpt-image-2 (Official Proxy) | gpt-image-2-all (Official Reverse) |
|---|---|---|
| Positioning | Transparent OpenAI official relay | Simulates ChatGPT web invocation chain |
| Billing | Actual token usage | Fixed $0.03 / request |
| Default Price Ref | 1024² medium ≈ $0.053, 2K medium ≈ $0.05 | $0.03 / request, regardless of size/quality |
| Top-up Discount | 15% off during promotion | 15% off during promotion |
| Resolution | Supports up to 4K (3840×2160) | Outputs between 1K and 2K |
| Quality Levels | auto / low / medium / high | No parameter control |
| Parameter Support | Full support (size, quality, n, mask, etc.) | No traditional parameters; set via prompt |
| Endpoint | /v1/images/generations + /v1/images/edits | /v1/chat/completions (Recommended) |
| Concurrency Limit | Not subject to OpenAI Tier limits | No limits |
| Generation Speed | 100-120s (4K high quality 3-5 mins) | ~30s |
| Native Chinese | Supported | Native Chinese prompt optimization |
| Documentation | docs.apiyi.com/api-capabilities/gpt-image-2/overview | docs.apiyi.com/api-capabilities/gpt-image-2-all/overview |

Both models can be tested online at imagen.apiyi.com, allowing you to intuitively compare the output differences between the two routes without writing a single line of code.

2. Deep Dive into the gpt-image-2 Official Proxy Model

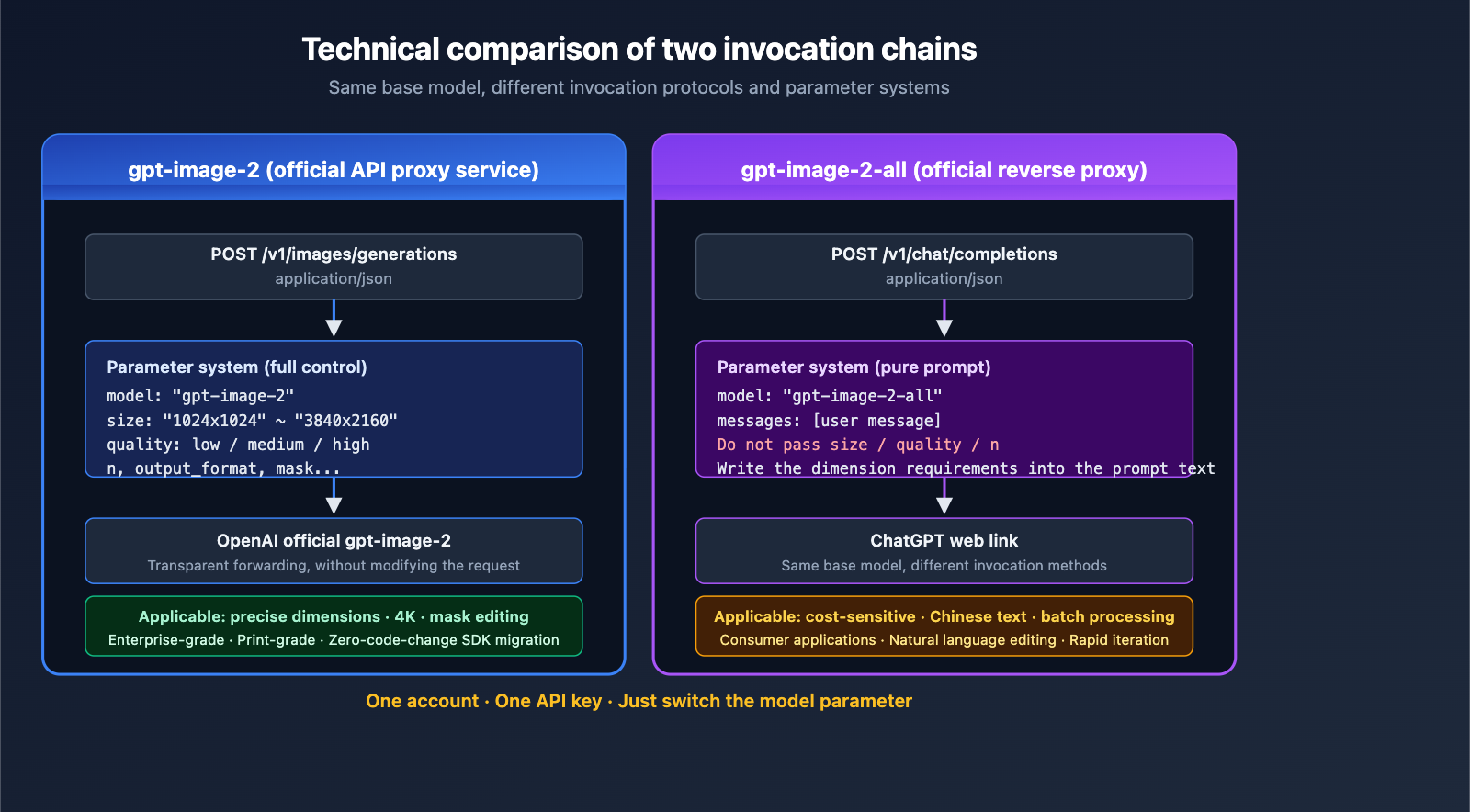

2.1 Technical Positioning of the Official Proxy Model

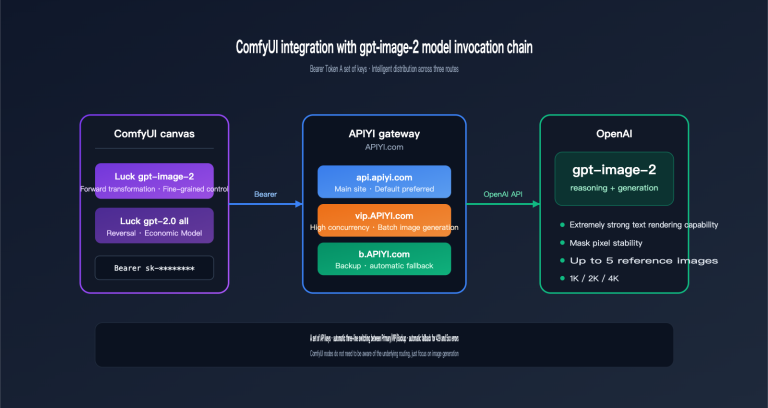

The gpt-image-2 official proxy version acts as a transparent gateway to the official OpenAI API. APIYI only handles the following:

- Protocol Forwarding: Fully compatible with the official OpenAI /v1/images/generations endpoint.

- Authentication Replacement: Developers use an APIYI key, which the backend swaps for OpenAI authorization.

- Usage Metering: Billing is based on actual token consumption.

- Zero Content Processing: No modifications to prompts or filtering of outputs.

The primary benefit here is that output quality remains identical to the official OpenAI standard, while simultaneously bypassing Tier-based concurrency bottlenecks. While official Tier 1 accounts are limited to 5 generations per minute, the APIYI official proxy channel is not subject to this restriction.

2.2 Supported Resolution Matrix for the Official Proxy Model

The official proxy version retains the complete dimension system provided by OpenAI:

| Preset Size | Aspect Ratio | Typical Use Case |
|---|---|---|
| 1024 × 1024 | 1:1 | Social media avatars, Instagram |
| 1536 × 1024 | 3:2 | Blog header images |
| 1024 × 1536 | 2:3 | Mobile posters |
| 2048 × 2048 | 1:1 | High-resolution branding |
| 2048 × 1152 | 16:9 | Video thumbnails |
| 3840 × 2160 | 16:9 (4K) | Print-ready materials |
| 2160 × 3840 | 9:16 (4K) | Large-format vertical ads |
| Custom | Up to 3:1 | Banners, long images |

Custom Size Constraints: The long edge must be ≤ 3840 px, both dimensions must be multiples of 16, and the total pixel count must be between 655,360 and 8,294,400.
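The constraints above are easy to check before sending a request. Below is a minimal sketch of such a pre-flight check; the function name is_valid_custom_size is our own, and the 3:1 aspect limit is taken from the "Custom" row of the table. It only mirrors the rules as stated here, so the API itself remains the final authority.

```python
def is_valid_custom_size(width: int, height: int) -> bool:
    """Check a custom size against the constraints listed in this article."""
    long_edge, short_edge = max(width, height), min(width, height)
    if short_edge <= 0:
        return False
    if long_edge > 3840:                # long edge must be <= 3840 px
        return False
    if width % 16 or height % 16:       # both sides must be multiples of 16
        return False
    if long_edge > 3 * short_edge:      # aspect ratio up to 3:1
        return False
    # Total pixel count must fall inside the allowed window.
    return 655_360 <= width * height <= 8_294_400


for size in [(3840, 2160), (3072, 1024), (1000, 1000), (512, 512)]:
    print(size, is_valid_custom_size(*size))
```

Running the check locally avoids paying the round trip for a request that would be rejected on size alone.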

2.3 Four Quality Tiers and Pricing

Pricing is strictly aligned with official OpenAI rates:

| Resolution × Quality | Unit Price (Official) | 15% Off Price |
|---|---|---|
| 1024² low | $0.006 | $0.0051 |
| 1024² medium | $0.053 | $0.045 |
| 1024² high | $0.211 | $0.179 |
| 2048² medium | ≈ $0.05 | ≈ $0.043 |
| 1024×1536 medium | $0.041 | $0.035 |
| 1024×1536 high | $0.165 | $0.140 |

Token Billing: Input text/image $8/1M, Output image $30/1M, Cached input $2/1M.
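To see how these token rates translate into a per-request charge, here is a minimal sketch. The function name estimate_cost is our own, the token counts in the example are hypothetical and chosen only to illustrate the arithmetic, and we assume cached input tokens are counted separately from regular input tokens.

```python
# Rates from the token billing line above, in USD per 1M tokens.
INPUT_PER_M = 8.00
CACHED_INPUT_PER_M = 2.00
OUTPUT_IMAGE_PER_M = 30.00


def estimate_cost(
    input_tokens: int,
    output_image_tokens: int,
    cached_input_tokens: int = 0,
    discount: float = 0.0,
) -> float:
    """Estimate the USD cost of one request from its token usage."""
    cost = (
        input_tokens * INPUT_PER_M
        + cached_input_tokens * CACHED_INPUT_PER_M
        + output_image_tokens * OUTPUT_IMAGE_PER_M
    ) / 1_000_000
    return cost * (1 - discount)


# Hypothetical usage: 100 prompt tokens and 1,750 output image tokens.
print(f"list price:  ${estimate_cost(100, 1750):.4f}")
print(f"with 15% off: ${estimate_cost(100, 1750, discount=0.15):.4f}")
```

Actual token counts are reported in the usage data of each response, so real costs should be computed from those values rather than from estimates.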

💡 Cost Optimization Strategy: We recommend using 'low' or 'medium' quality for initial exploration and switching to 'high' for the final version. With the 15% top-up discount at APIYI (apiyi.com), your actual cost is 15% lower than connecting directly to OpenAI.

2.4 Ideal Use Cases for the Official Proxy Model

- Precise resolution control required (e-commerce product details, print materials)

- 4K output required (large-screen ads, wallpapers)

- Mask-based local editing required (product retouching, image inpainting)

- High compatibility with official SDKs (migrate existing code with zero changes)

- Enterprise-level SLA requirements (custom agreements available)

3. Deep Dive into the gpt-image-2-all Reverse-Engineered Model

3.1 Technical Positioning of the Reverse-Engineered Model

gpt-image-2-all is a reverse-engineered implementation that simulates the ChatGPT web interface call chain. Its core features are fixed pricing + parameter-free usage.

For developers, the biggest difference in experience is the calling method:

- Does not use /v1/images/generations, but rather /v1/chat/completions.

- No need to pass size, quality, or n parameters (doing so will trigger a validation error).

- Resolution and aspect ratio are specified via natural language in the prompt.

- One call outputs one image.

3.2 Fixed Pricing Logic

gpt-image-2-all uses a fixed price of $0.03 per call, regardless of whether you generate a 1K or 2K image, or how long your prompt is (failed requests are not charged).

The value proposition:

| Scenario | Official Proxy (Usage-based) | Reverse-Engineered (Fixed) | Winner |
|---|---|---|---|
| 1024² medium (small image) | $0.053 | $0.030 | Reverse saves 43% |
| 2048² medium (mid-size image) | ~$0.05 | $0.030 | Reverse saves 40% |
| 1024² high quality | $0.211 | $0.030 | Reverse saves 86% |
| 4K ultra-high quality | > $0.20 | Not supported | Official Proxy only |

In short: For low-to-medium quality images, the reverse-engineered version is much cheaper; however, 4K output and scenarios that need fine-grained control over high quality require the official proxy.

3.3 Output Size Characteristics

The reverse-engineered version uses natural language in the prompt to specify dimensions. The model will output images between 1K and 2K based on your request. Common output sizes include:

| Prompt Aspect Ratio Description | Actual Output Resolution |
|---|---|
| "Square 1:1" | 1254 × 1254 |
| "Landscape 16:9" | 1672 × 941 |
| "Portrait 9:16" | 941 × 1672 |
| "Ultra-wide 3:1" | Model may not strictly follow |

Key Point: The reverse-engineered version does not provide deterministic pixel-level control, making it suitable for scenarios where exact dimensions are not critical.
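Since gpt-image-2-all takes its dimensions from the prompt text, one option is to keep the aspect-ratio wording in a single place. The sketch below is only an illustration: the helper with_aspect and the exact sentence it builds are our own assumptions, reusing the phrases from the table above, and the model may still deviate from the requested ratio.

```python
# Phrases taken from the table above; "Ultra-wide 3:1" is left out
# because the model may not strictly follow it.
ASPECT_PHRASES = {
    "1:1": "Square 1:1",
    "16:9": "Landscape 16:9",
    "9:16": "Portrait 9:16",
}


def with_aspect(prompt: str, ratio: str) -> str:
    """Prefix a prompt with a natural-language aspect ratio description."""
    phrase = ASPECT_PHRASES.get(ratio)
    if phrase is None:
        raise ValueError(f"no phrase defined for ratio {ratio!r}")
    return f"{phrase} image. {prompt}"


print(with_aspect("A modern minimalist living room", "16:9"))
```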

3.4 Unique Capabilities of the Reverse-Engineered Model

While it lacks some parameter controls, the reverse-engineered version offers several features not found in the official proxy:

① Native Chinese Prompt Optimization

The reverse-engineered version features specific optimizations for Chinese prompts, resulting in higher text rendering accuracy for posters, infographics, and menus compared to using Chinese prompts with the official proxy.

② Multi-image Fusion Editing

By referencing uploaded images as "Image 1/Image 2/Image 3" in your prompt, you can perform multi-image synthesis. While the official proxy also supports multiple images, the prompt syntax in the reverse-engineered version is more natural.

③ Natural Language Editing (No Mask Required)

To edit an existing image, you don't need to draw a mask; simply use natural language like "change the person's clothes to red." The official proxy requires an alpha channel upload as a mask.

④ Speed Advantage

Average generation time is ~30 seconds, significantly faster than the 100-120 seconds required by the official proxy. For batch tasks, the cumulative time saved is substantial.

3.5 Limitations of the Reverse-Engineered Model

- Image URL Expiration: The R2 CDN link returned after generation is valid for 24 hours; we recommend saving it to your own storage immediately.

- No Streaming Support: The stream=true parameter is ineffective.

- Single Image per Call: Each call outputs only one image; use concurrent calls for batch processing.

- Timeout Recommendation: Set timeouts to 300 seconds to account for upload/download overhead.
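Because each call returns a single image, batch jobs come down to issuing calls concurrently. The following is a minimal sketch of that pattern using a thread pool; run_batch and fake_generate are hypothetical names, and fake_generate is a stand-in for a real API call so that the example runs offline.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Optional, Tuple

Result = Tuple[str, Optional[str], Optional[Exception]]


def run_batch(
    prompts: List[str],
    generate_one: Callable[[str, float], str],
    max_workers: int = 8,
    timeout_seconds: float = 300.0,  # the timeout recommended above
) -> List[Result]:
    """Run one call per prompt in parallel; results keep the input order."""

    def safe_call(prompt: str) -> Result:
        try:
            return prompt, generate_one(prompt, timeout_seconds), None
        except Exception as exc:  # one failed request should not stop the batch
            return prompt, None, exc

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(safe_call, prompts))


# Hypothetical stand-in for a real request, so the sketch runs offline.
def fake_generate(prompt: str, timeout: float) -> str:
    return f"https://example.com/{prompt.replace(' ', '-')}.png"


for prompt, url, error in run_batch(["red poster", "blue menu"], fake_generate):
    print(prompt, "->", url if error is None else f"failed: {error}")
```

In a real integration, generate_one would wrap the chat/completions call for gpt-image-2-all and pass the timeout on to the HTTP client; how exactly that is done depends on the SDK version you use.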

3.6 Ideal Use Cases for the Reverse-Engineered Model

- Cost-sensitive batch tasks (predictable budget, easy to calculate at $0.03 × N)

- Chinese text rendering (restaurant menus, event posters, infographics)

- Rapid iteration and exploration (30-second output improves workflow efficiency)

- Natural language editing (for users who prefer not to create masks)

- Consumer-facing applications (interactive drawing with elastic size requirements)

4. APIYI GPT-image-2 Dual-Model Quick Start

4.1 Online Testing Portal

Both models are integrated into the APIYI visual testing tool at imagen.apiyi.com, allowing developers and designers to:

- No-Code Comparison: Input the same prompt and see side-by-side image generation results from both models.

- Parameter Adjustment: Adjust size and quality for the official-proxy version to intuitively feel the differences.

- Export Code: Once satisfied with the test, generate curl, Python, or Node.js code snippets directly.

This is the most direct way to familiarize yourself with the capabilities of both models. We highly recommend spending 10 minutes doing a side-by-side comparison at imagen.apiyi.com before your first integration.

4.2 Official-Proxy Model gpt-image-2 Python Example

    from openai import OpenAI
    import base64

    # Point the standard OpenAI SDK at the APIYI gateway.
    client = OpenAI(
        api_key="YOUR_APIYI_KEY",
        base_url="https://api.apiyi.com/v1"
    )

    response = client.images.generate(
        model="gpt-image-2",
        prompt="Modern minimalist living room, large floor-to-ceiling windows, natural light streaming in",
        size="2048x1152",  # 16:9 preset from the resolution matrix
        quality="medium",
        n=1,
        output_format="png"
    )

    # The image comes back as base64; decode it and write it to disk.
    image_bytes = base64.b64decode(response.data[0].b64_json)
    with open("output.png", "wb") as f:
        f.write(image_bytes)

Key point: The official-proxy version uses the standard OpenAI SDK. The code is identical to connecting directly to OpenAI, requiring only a change to the base_url and api_key.

4.3 Official-Reverse Model gpt-image-2-all Python Example

    from openai import OpenAI

    client = OpenAI(
        api_key="YOUR_APIYI_KEY",
        base_url="https://api.apiyi.com/v1"
    )

    response = client.chat.completions.create(
        model="gpt-image-2-all",
        messages=[
            {
                "role": "user",
                # Adjacent string literals are joined as-is, so each part ends with a space.
                "content": (
                    "Generate a 16:9 landscape poster of a modern minimalist living room, "
                    "large floor-to-ceiling windows, natural light streaming in, "
                    'render "Nordic Life" in bold Chinese characters in the top right corner'
                )
            }
        ]
    )

    # The message content carries the image URL or base64 data.
    print(response.choices[0].message.content)

Key point: The official-reverse version uses the chat/completions endpoint. The response will contain an image URL or base64 data. Note: Do not pass size, quality, or n parameters, as this will cause an error.
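Since the reply may carry either a URL or base64 data, client code needs to tell the two apart. The sketch below shows one way to do that with regular expressions. It assumes the content is plain text containing either an http(s) link (for example inside a Markdown image tag) or a data:image/...;base64 URI; that layout is our assumption, not a documented format, so check a real response before relying on it. The name extract_image is hypothetical.

```python
import re
from typing import Optional, Tuple

# Assumed formats: a base64 data URI, or a plain http(s) link.
_DATA_URI_RE = re.compile(r"data:image/[\w.+-]+;base64,([A-Za-z0-9+/=]+)")
_URL_RE = re.compile(r"https?://[^\s)\"'>]+")


def extract_image(content: str) -> Optional[Tuple[str, str]]:
    """Return ("base64", data) or ("url", link), or None if nothing matches."""
    match = _DATA_URI_RE.search(content)
    if match:
        return "base64", match.group(1)
    match = _URL_RE.search(content)
    if match:
        return "url", match.group(0)
    return None


print(extract_image("![image](https://example.com/a.png)"))
print(extract_image("no image here"))
```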

4.4 Hybrid Architecture Example

A recommended production practice is to use both models in a hybrid setup, routing tasks based on their specific requirements:

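    # Assumes `client` is the OpenAI client configured in the examples above.
    def generate_image(prompt: str, task_type: str):
        if task_type in ["batch", "draft", "chinese_text"]:
            # Cost-sensitive or Chinese-text tasks: fixed-price route.
            return client.chat.completions.create(
                model="gpt-image-2-all",
                messages=[{"role": "user", "content": prompt}]
            )
        elif task_type in ["print", "4k", "precise_size"]:
            # Print and 4K tasks: official proxy at the highest quality.
            return client.images.generate(
                model="gpt-image-2",
                prompt=prompt,
                size="3840x2160",
                quality="high"
            )
        else:
            # Everything else: official proxy with default-grade settings.
            return client.images.generate(
                model="gpt-image-2",
                prompt=prompt,
                size="1024x1024",
                quality="medium"
            )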

With this routing strategy, you can balance cost and quality under a single APIYI (apiyi.com) account.

5. Impact Analysis of APIYI GPT-image-2 Dual Models on Product Teams

5.1 Impact on Startup Teams and Individual Developers

Core Value: Lowering trial-and-error costs and integration barriers.

Previously, the main pain point for new developers integrating OpenAI gpt-image-2 was the Tier 1 limit of only 5 images/minute, plus the wait time for account qualification after the first top-up. Now, through APIYI:

- Ready to use immediately upon registration, zero barriers.

- Official-reverse version at a fixed price of $0.03/image, making budgets extremely predictable.

- Use the official-reverse version for prototyping to get the workflow running, then switch to the official-proxy version for production as needed.

This means the time from "wanting to try gpt-image-2" to "running the first demo" is compressed to 5 minutes.

5.2 Impact on E-commerce and Content Production Teams

Core Value: Batch image generation costs reduced by 40-85%.

Suppose an e-commerce team needs to generate 5,000 product images per month (1024×1024 resolution, medium quality):

- OpenAI Direct: 5,000 × $0.053 = $265/month + Tier rate limits slowing down the pace.

- APIYI Official-Proxy: 5,000 × $0.053 × 0.85 = $225/month + no concurrency limits.

- APIYI Official-Reverse: 5,000 × $0.03 × 0.85 = $128/month + 30-second fast generation.

If the business doesn't have strict requirements for precise dimensions, switching entirely to the official-reverse version can save 50%+ in costs.
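The comparison above is simple enough to reproduce for your own volume. Here is a minimal sketch of the same arithmetic; the helper name monthly_cost is our own, and the prices and the 15% discount are the figures quoted in this article.

```python
def monthly_cost(images: int, unit_price: float, discount: float = 0.0) -> float:
    """Monthly spend in USD for a given volume, unit price, and discount."""
    return images * unit_price * (1 - discount)


IMAGES_PER_MONTH = 5_000

direct = monthly_cost(IMAGES_PER_MONTH, 0.053)          # OpenAI direct
proxy = monthly_cost(IMAGES_PER_MONTH, 0.053, 0.15)     # official-proxy
reverse = monthly_cost(IMAGES_PER_MONTH, 0.03, 0.15)    # official-reverse

for label, cost in [("direct", direct), ("proxy", proxy), ("reverse", reverse)]:
    saving = 1 - cost / direct
    print(f"{label:8s} ${cost:8.2f}/month  saves {saving:.0%} vs direct")
```

Changing IMAGES_PER_MONTH or the unit price lets you rerun the comparison for other resolutions and quality tiers.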

5.3 Impact on Enterprise Clients

Core Value: Flexibility in technical selection.

In the past, enterprise clients had to choose between OpenAI official and third-party alternative models. Now they can:

- Critical Business Flows: Use the official-proxy to maintain quality and SLA consistency with the official version.

- Batch Task Flows: Use the official-reverse to maximize cost advantages.

- A/B Testing: Perform batch comparisons at imagen.apiyi.com before deciding on model investment.

APIYI's enterprise service channel also provides large clients with customized independent channels, SLA commitments, and compliant invoicing.

5.4 Impact on AI Tool Products

Core Value: A win-win for user experience and cost control.

Many consumer-facing AI image generation products previously struggled to balance "giving users good image quality" and "controlling costs." Now, with the dual-model matrix:

- Free users → Official-reverse at $0.03/image to ensure basic usability.

- Paid users → Official-proxy at high quality to provide a differentiated experience.

- Enterprise users → Official-proxy at 4K to meet print-grade requirements.

6. APIYI GPT-image-2 Dual-Model FAQ

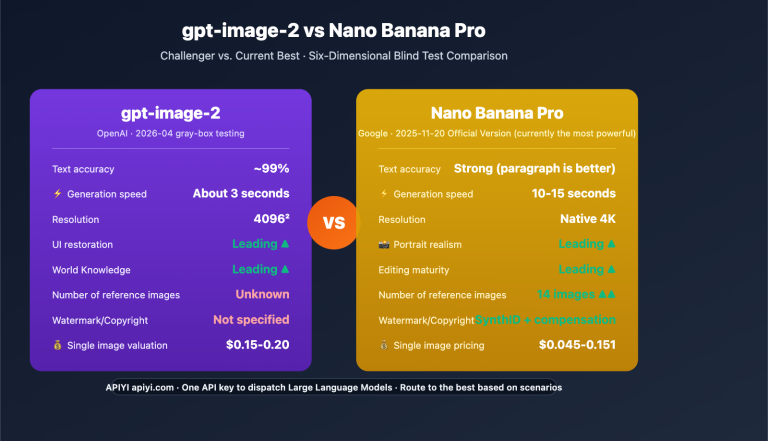

Q1: Is there a significant difference in image quality between the two models?

For low-to-medium quality scenarios (1024 medium and below), the difference is negligible and hard to distinguish with the naked eye. In high-quality scenarios (1024 high / 2K / 4K), the official proxy service has a clear advantage because it allows you to explicitly specify quality="high" and precise resolutions. We recommend running a few tests with the same prompt at imagen.apiyi.com to compare them yourself.

Q2: Is the reverse-engineered gpt-image-2-all version worse than the official model?

Not at all. gpt-image-2-all still uses OpenAI's underlying gpt-image-2 model; it simply completes the request via the ChatGPT web interaction chain. The core differences lie in parameter control and the pricing model, not in the model weights themselves.

Q3: Can both models be used under one APIYI account?

Yes, absolutely. Your API key from APIYI (apiyi.com) can call both gpt-image-2 and gpt-image-2-all simultaneously; just switch the model parameter. Billing is consolidated under a single account.

Q4: Images generated by the reverse-engineered version expire in 24 hours. What's the best way to handle this?

The best practice is to download the image to your own object storage (OSS / S3 / R2) immediately after receiving the response, rather than relying on the URL returned by APIYI. If you use response_format="b64_json", you'll get the base64 data directly, which avoids expiration issues entirely.
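As a rough illustration of this practice, the sketch below saves an image from either form of response. A local file path stands in for your object storage, the function names are hypothetical, and the demo only exercises the base64 path so that it runs without network access.

```python
import base64
import tempfile
import urllib.request
from pathlib import Path


def save_base64_image(b64_data: str, dest: Path) -> int:
    """Decode base64 image data and write it to dest; returns bytes written."""
    raw = base64.b64decode(b64_data)
    dest.write_bytes(raw)
    return len(raw)


def save_url_image(url: str, dest: Path, timeout: float = 300.0) -> int:
    """Download the image right away, before the 24-hour link expires."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        raw = resp.read()
    dest.write_bytes(raw)
    return len(raw)


# Offline demo with a few placeholder bytes instead of a real image.
sample = base64.b64encode(b"not-a-real-png").decode()
with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp) / "output.png"
    print(save_base64_image(sample, target), "bytes written")
```

In production, the write to dest would be replaced by an upload to your own bucket (OSS, S3, or R2) using that provider's SDK.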

Q5: How do I migrate code I previously wrote using the official OpenAI SDK?

- Switching to official proxy gpt-image-2: Just update the base_url and api_key; the rest of your code remains the same.

- Switching to reverse-engineered gpt-image-2-all: You'll need to change to the chat/completions endpoint, remove the size/quality parameters, and include your dimension requirements directly in the prompt.

We recommend testing at imagen.apiyi.com to confirm the output quality meets your needs before moving to production.

Q6: Do both models support Chinese prompts?

Both do, but their performance varies slightly. The reverse-engineered gpt-image-2-all is natively optimized for Chinese prompts, which is especially noticeable when rendering Chinese text. The official proxy version supports Chinese but is more aligned with the training distribution of English prompts. For production, we suggest testing based on your specific use case.

Q7: Does the 15% off (85% price) recharge promotion apply to both models?

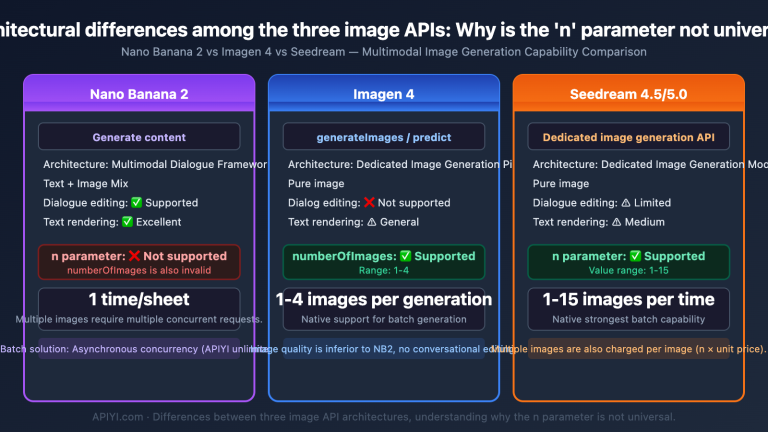

Yes. Your recharged balance can be used for all model invocations on APIYI, including the official proxy gpt-image-2, the reverse-engineered gpt-image-2-all, and other image models like Nano Banana Pro/2 and Imagen. Please refer to the current announcements on apiyi.com for specific promotion rules.

Q8: Can enterprise customers get lower prices or dedicated channels?

Yes. APIYI provides a business channel for large clients. Depending on your monthly usage, you can apply for custom discounts, dedicated high-concurrency channels, SLA guarantees, compliant invoicing, and dedicated technical support. Please contact the APIYI business team at apiyi.com directly for a custom solution.

7. Summary of the APIYI GPT-image-2 Dual-Model Launch

Here is the core value of this launch in a nutshell:

One account, two paths, three choices:

- Quality-first → gpt-image-2 (official proxy), pay-as-you-go + 15% discount.

- Cost-first → gpt-image-2-all (reverse-engineered), fixed price at $0.03/request.

- Hybrid strategy → Use the official proxy for critical business and the reverse-engineered version for batch tasks.

For teams currently evaluating or already using gpt-image-2, here is our recommended action plan:

- Visit imagen.apiyi.com to test both models online.

- Compare output by running a few typical prompts through both to evaluate quality and speed differences.

- Plan your routing by designing a hybrid strategy based on your business needs.

- Control costs by taking advantage of the 15% off recharge promotion and using the reverse-engineered version for batch exploration.

- Enterprise onboarding for large clients to get custom solutions via the APIYI business channel.

Image generation has entered a new phase of "parallel paths + business layering." A single model with a single pricing structure can no longer cover every scenario. By launching these two models simultaneously, APIYI is essentially returning the power of choice to developers, allowing you to flexibly combine these paths to find the optimal solution for your business.

About the Author: The APIYI technical team is dedicated to providing stable, transparent, and comprehensive AI model API services for developers and enterprise clients. Visit the official APIYI website at apiyi.com for the latest documentation and enterprise service details for gpt-image-2, gpt-image-2-all, Nano Banana Pro, and other mainstream image models.