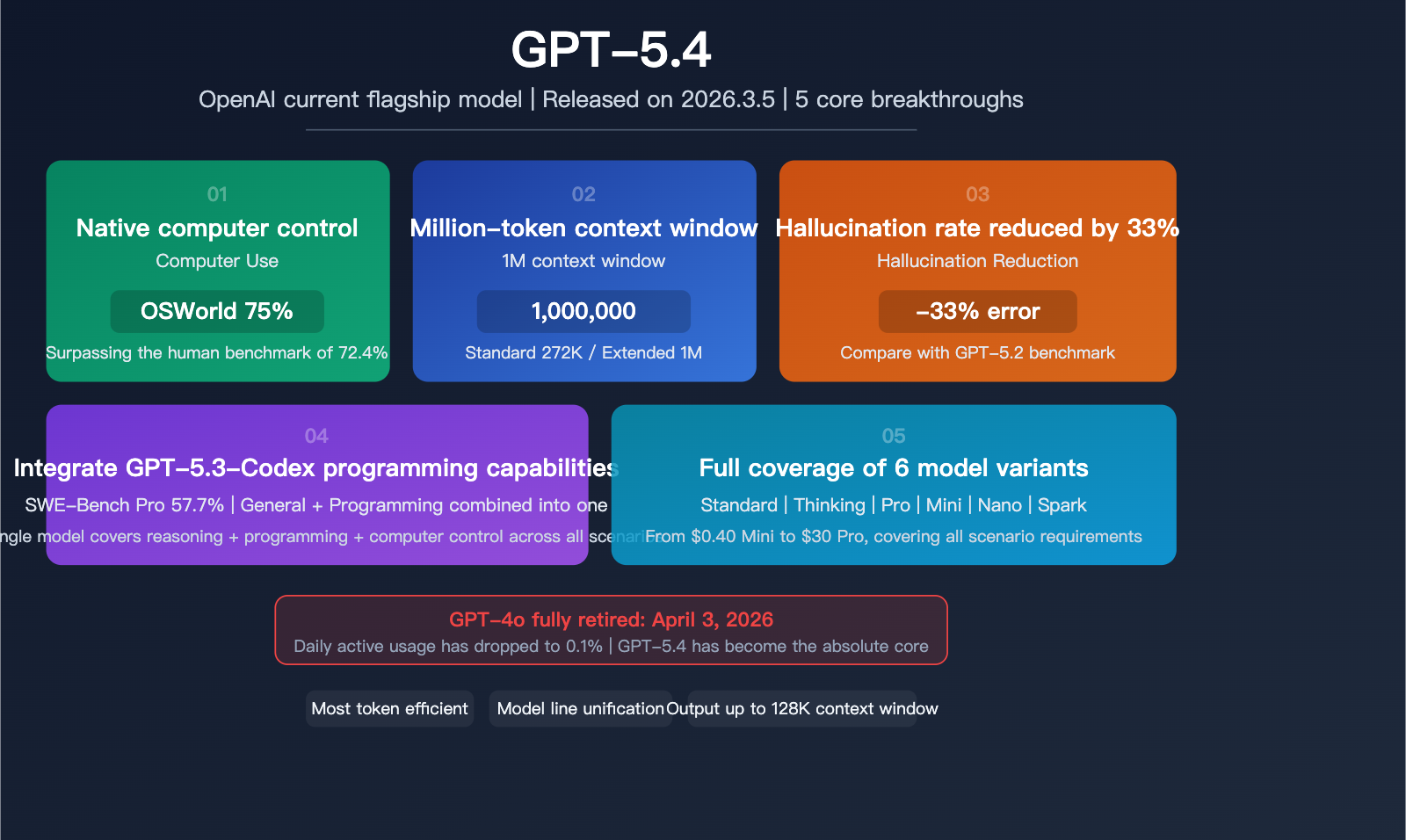

Author's Note: GPT-5.4 has officially become OpenAI's active flagship model, featuring native computer control that surpasses human benchmarks, a million-token context window, integrated Codex programming capabilities, and a 33% reduction in hallucination rates. This article provides an in-depth analysis of the technical details, evaluation data, and the impact of the GPT-4o retirement.

On March 5, 2026, OpenAI officially released GPT-5.4, the first unified flagship model to integrate native computer control, a million-token context window, and Codex programming capabilities. Meanwhile, GPT-4o is set to be fully retired on April 3, marking the end of an era. This article provides an in-depth analysis of the 5 core breakthroughs brought by GPT-5.4, covering technical architecture, evaluation data, and practical applications.

Core Value: Get up to speed in 5 minutes on all of GPT-5.4's core capabilities, pricing plans, competitor comparisons, and migration strategies following the retirement of GPT-4o.

Quick Overview of GPT-5.4

| Feature | Details |
|---|---|
| Release Date | March 5, 2026 |
| Developer | OpenAI |
| Positioning | Active flagship model, replacing the GPT-5.2 series |
| Core Breakthroughs | Native computer control, million-token context window, Codex integration |
| Hallucination Rate | 33% lower than GPT-5.2 |
| OSWorld Benchmark | 75% (surpassing the human benchmark of 72.4%) |
| SWE-Bench Pro | 57.7% (surpassing GPT-5.3-Codex's 56.8%) |
| Model Variants | Standard / Thinking / Pro / Mini / Nano / Spark |
| GPT-4o Retirement | Fully retired on April 3, 2026 |

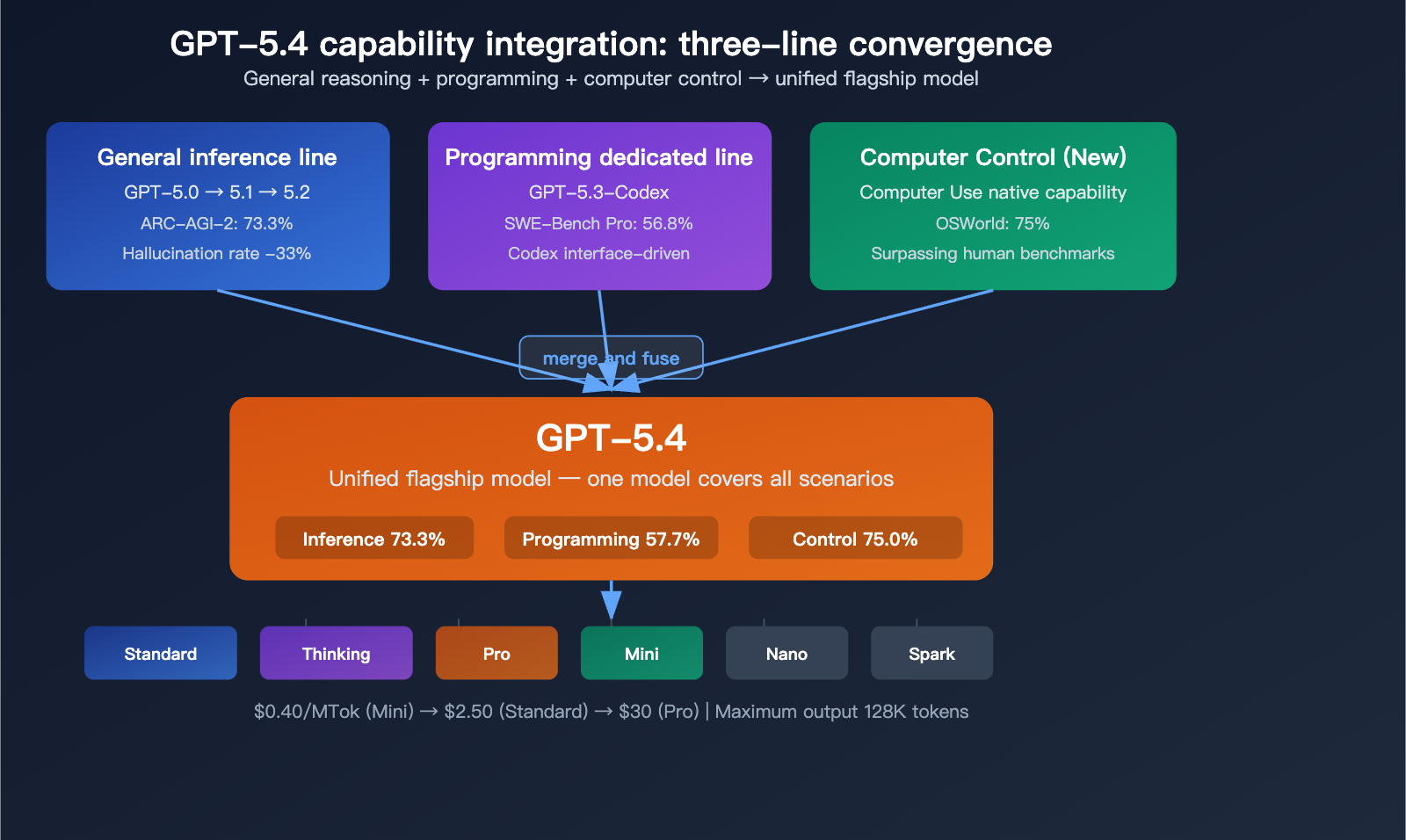

The Historical Positioning of GPT-5.4

GPT-5.4 isn't just another routine version update; it’s a major consolidation of OpenAI's model lineup. Previously, OpenAI maintained two separate model lines: general reasoning (GPT-5.x) and programming-specific (GPT-5.3-Codex). GPT-5.4 marks the first time these two lines have been merged into a single flagship model—it's now both the most powerful general reasoning model and the most capable programming model, while also being the first to feature native computer control.

This means developers no longer need to switch back and forth between "using GPT-5.2 for reasoning" and "using Codex for programming." A single GPT-5.4 model now covers all scenarios.

A Deep Dive into the 5 Core Breakthroughs of GPT-5.4

Breakthrough 1: Native Computer Use

The most eye-catching new capability in GPT-5.4 is Computer Use. This isn't achieved through plugins or external tools; it’s a native, built-in feature. GPT-5.4 can directly "see" the screen, move the mouse, click buttons, and type text, allowing it to operate a computer just like a human to complete complex workflows.

| Benchmark | GPT-5.4 | Human Expert Baseline | Rating |
|---|---|---|---|
| OSWorld-Verified | 75.0% | 72.4% | Surpasses Human |

In the OSWorld-Verified evaluation, GPT-5.4 scored 75.0%, above the human expert baseline of 72.4%. On this benchmark's desktop tasks, the model now completes a higher share of tasks than the human baseline, though a 2.6-point lead on a single benchmark doesn't by itself show the same reliability across every real-world workflow.

Practical use cases for this capability include:

- Automated Office Workflows: Automatically entering data and generating reports in Excel, CRM, or ERP systems.

- Cross-Application Workflows: Extracting information from emails, creating tasks in project management tools, and notifying the relevant team members.

- Web Automation: Automatically browsing websites, filling out forms, and submitting applications.

- Software Testing: Automatically interacting with GUIs to perform end-to-end testing.
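To make the "see the screen, then act" idea concrete, here is a minimal sketch of the observe-decide-act loop that computer-use agents generally follow. Everything in it is hypothetical: `FakeDesktop`, `Action`, and `scripted_policy` are illustration-only names, and the scripted policy stands in for the point where a real agent would send the screenshot to the model. It is not OpenAI's implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Action:
    kind: str          # "click", "type", or "done"
    x: int = 0
    y: int = 0
    text: str = ""


@dataclass
class FakeDesktop:
    """Stand-in for a real screen so the loop runs without a GUI."""
    log: list = field(default_factory=list)

    def screenshot(self) -> str:
        return f"screen after {len(self.log)} actions"

    def execute(self, action: Action) -> None:
        self.log.append(action)


def scripted_policy(observation: str, step: int) -> Action:
    """Hypothetical policy; a real agent would ask the model here."""
    plan = [
        Action("click", x=120, y=48),
        Action("type", text="Q1 report"),
        Action("done"),
    ]
    return plan[min(step, len(plan) - 1)]


def run_agent(desktop: FakeDesktop, max_steps: int = 10) -> int:
    """Observe, decide, act; stop on 'done' or when the step budget runs out."""
    for step in range(max_steps):
        action = scripted_policy(desktop.screenshot(), step)
        if action.kind == "done":
            return step
        desktop.execute(action)
    return max_steps


desktop = FakeDesktop()
steps = run_agent(desktop)
print(steps, [a.kind for a in desktop.log])
```

The step budget (`max_steps`) is a design choice worth keeping in any real loop, since it bounds how long an agent can act without supervision.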

Breakthrough 2: 1 Million Token Context Window

The context window for GPT-5.4 has been expanded to 1 million tokens (in API mode), with a standard mode of 272K tokens. This allows the model to handle massive documents, entire codebases, and complex, multi-step Agent tasks.

| Context Mode | Capacity | Use Case |
|---|---|---|
| Standard Mode | 272K tokens | Daily conversations and general tasks |
| Extended Mode | 1M tokens | Long document analysis, codebase processing |
| Max Output | 128K tokens | Long-form text generation |

The core value of a million-token context is that it supports long-range Agent planning—the model can complete a full loop of planning, execution, and verification within a single session without losing critical information due to context overflow.
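As a rough illustration of how the limits above might drive a routing decision, the sketch below picks a context mode from an estimated token count. The limits come from the table; the 4-characters-per-token estimate and the idea that reserved output counts against the window are assumptions, and the function names are hypothetical.

```python
STANDARD_LIMIT = 272_000    # standard-mode context, from the table above
EXTENDED_LIMIT = 1_000_000  # extended-mode context (API only)
MAX_OUTPUT = 128_000        # maximum output tokens


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rough estimate only; real counts depend on the tokenizer."""
    return int(len(text) / chars_per_token) + 1


def pick_context_mode(prompt_tokens: int, reserved_output: int = 8_000) -> str:
    """Return 'standard', 'extended', or 'too_large' for a request.

    Assumes the reserved output budget counts against the context window.
    """
    if reserved_output > MAX_OUTPUT:
        raise ValueError("reserved_output exceeds the 128K output limit")
    total = prompt_tokens + reserved_output
    if total <= STANDARD_LIMIT:
        return "standard"
    if total <= EXTENDED_LIMIT:
        return "extended"
    return "too_large"


print(pick_context_mode(100_000))
print(pick_context_mode(270_000))
print(pick_context_mode(995_000))
```

For production use, replace `estimate_tokens` with a count from the actual tokenizer, since a character-based estimate can be far off for code or non-English text.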

Breakthrough 3: 33% Reduction in Hallucinations

OpenAI has achieved a significant boost in factual accuracy with GPT-5.4:

- Single-Claim Error Rate: Reduced by 33% compared to GPT-5.2.

- Overall Response Error Rate: Reduced by 18% compared to GPT-5.2.

This makes GPT-5.4 much more reliable when handling factual queries. This is a crucial advancement for enterprise applications, medical consultations, legal analysis, and other scenarios where accuracy is paramount.
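Both figures are relative reductions, so the absolute gain depends on the baseline you start from. The short sketch below works through the arithmetic; the baseline error rates are hypothetical placeholders chosen only for illustration, not published figures.

```python
def apply_relative_reduction(baseline_rate: float, reduction: float) -> float:
    """Return the new error rate after a relative reduction (0.33 means 33%)."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    return baseline_rate * (1.0 - reduction)


# Hypothetical baselines, for illustration only.
claim_baseline = 0.06      # 6% of individual claims wrong
response_baseline = 0.20   # 20% of responses contain at least one error

new_claim_rate = apply_relative_reduction(claim_baseline, 0.33)
new_response_rate = apply_relative_reduction(response_baseline, 0.18)

print(f"per-claim error rate:    {claim_baseline:.2%} -> {new_claim_rate:.2%}")
print(f"per-response error rate: {response_baseline:.2%} -> {new_response_rate:.2%}")
```

In other words, a 33% relative reduction turns a hypothetical 6% per-claim error rate into roughly 4%, not into 6% minus 33 points.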

Breakthrough 4: Integrated GPT-5.3-Codex Programming Capabilities

GPT-5.4 comes with the full programming power of GPT-5.3-Codex built-in, with further improvements on top:

| Programming Benchmark | GPT-5.4 | GPT-5.3-Codex | Change |
|---|---|---|---|
| SWE-Bench Pro | 57.7% | 56.8% | +0.9 pp |
| SWE-Bench Verified | ~80% | – | Top-tier |

GPT-5.4 scored 57.7% on SWE-Bench Pro, slightly edging out the 56.8% score of GPT-5.3-Codex. This means you no longer need to use a separate Codex model for programming tasks—GPT-5.4 can handle reasoning, coding, and computer control all in one.

The Codex interface remains available, but it is now powered by GPT-5.4 under the hood.

Breakthrough 5: Intelligent Tool Search

GPT-5.4 introduces Tool Search, allowing the model to automatically discover and invoke the most appropriate tool from a vast ecosystem without requiring humans to pre-configure every single integration. This significantly boosts the autonomy of Agents in complex workflows.
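The general idea behind tool discovery can be sketched with a small registry lookup: instead of wiring every tool into every request, the agent searches a catalog and loads only what matches the task. The example below is a toy keyword matcher with hypothetical tool names; it illustrates the concept only and says nothing about how OpenAI's Tool Search actually ranks tools.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    description: str


# Hypothetical catalog; a real ecosystem could hold thousands of entries.
REGISTRY = [
    Tool("create_ticket", "create a task or ticket in the project tracker"),
    Tool("send_email", "send an email message to a recipient"),
    Tool("query_crm", "look up customer records in the crm"),
]


def search_tools(query: str, registry=REGISTRY, top_k: int = 1) -> list:
    """Rank tools by how many query words appear in their description."""
    words = set(query.lower().split())
    scored = [(len(words & set(t.description.split())), t) for t in registry]
    scored = [(score, tool) for score, tool in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [tool for _, tool in scored[:top_k]]


matches = search_tools("create a task for the team")
print([t.name for t in matches])
```

A production system would more likely use embeddings or a dedicated search index than word overlap, but the flow is the same: query the catalog first, then expose only the selected tools to the model.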

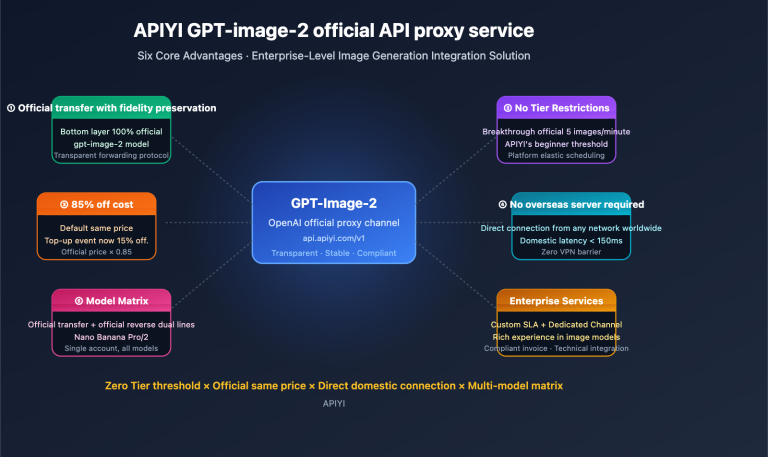

🎯 Developer Tip: These breakthroughs mean you can now cover reasoning, programming, and automation with a single model. Through the APIYI (apiyi.com) platform, you can access all variants of GPT-5.4 with a single API key, while also having the flexibility to switch to competing models like Claude or Gemini for performance comparisons.

GPT-5.4 Model Variants and Pricing

The Full GPT-5.4 Model Family

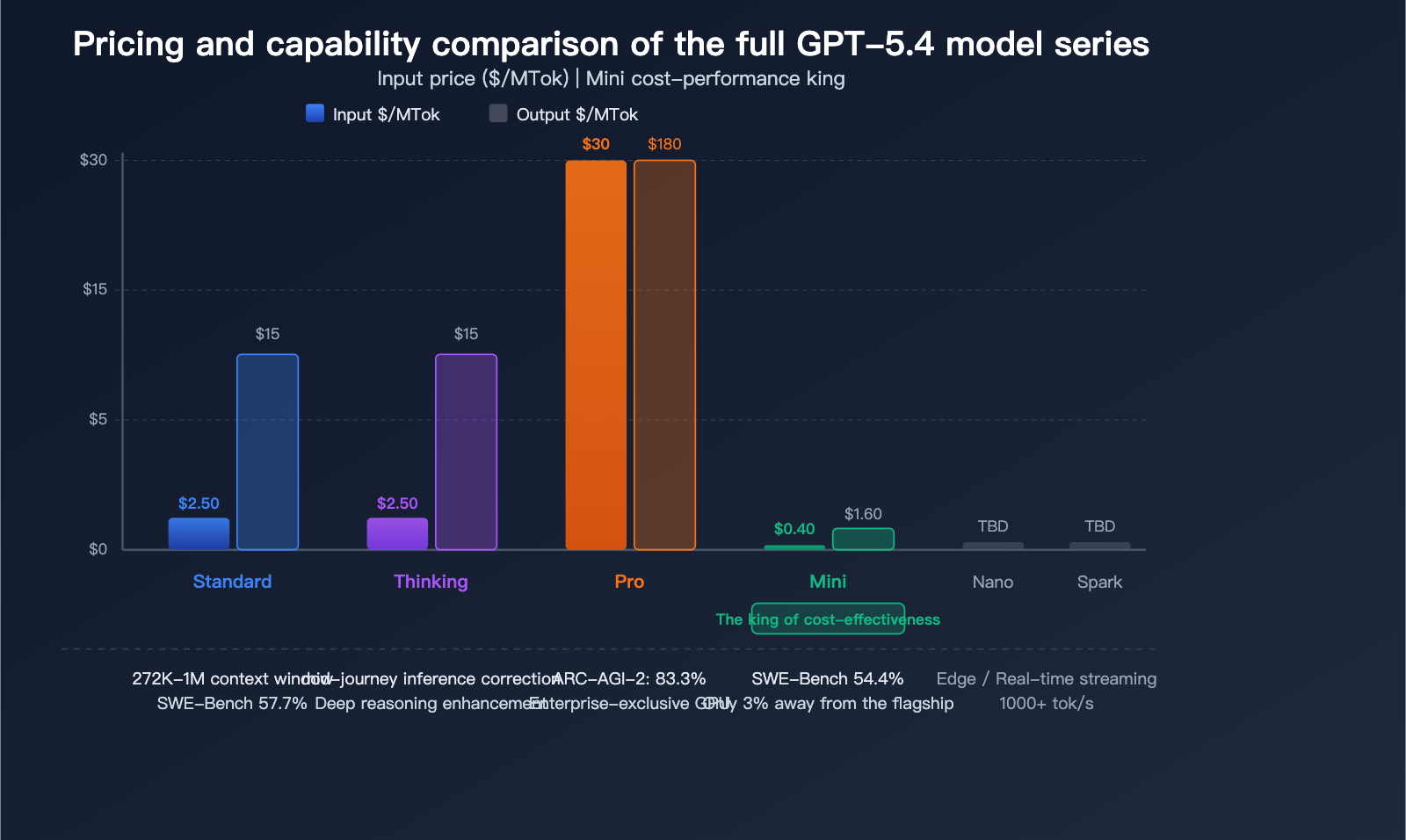

GPT-5.4 comes in 6 variants (Standard, Thinking, Pro, Mini, Nano, and Spark), covering everything from high-end performance to lightweight, cost-effective needs. The table below lists the pricing currently available; the ">272K" row is the long-context pricing tier of the standard model rather than a separate variant, and Nano is not listed.

| Model Variant | Positioning | Input Price ($/MTok) | Output Price ($/MTok) | Key Features |
|---|---|---|---|---|
| GPT-5.4 | General Flagship | $2.50 | $15.00 | Standard 272K context window |
| GPT-5.4 (>272K) | Long Context | $5.00 | $15.00 | Extended to 1M context window |
| GPT-5.4 Thinking | Deep Reasoning | – | – | Supports mid-process reasoning correction |
| GPT-5.4 Pro | Enterprise | $30.00 | $180.00 | Dedicated GPU, highest precision |
| GPT-5.4 Mini | Lightweight & Efficient | ~$0.40 | ~$1.60 | Excellent cost-performance ratio |
| GPT-5.4 Spark | Real-time Streaming | – | – | 1000+ tokens/second |

Pricing Analysis: The standard GPT-5.4 is priced at $2.50/MTok for input and $15.00/MTok for output. GPT-5.4 Mini is significantly more affordable at roughly $0.40/$1.60, making it a great choice for large-scale deployments. GPT-5.4 Pro is designed for enterprise tasks requiring maximum precision, though it comes at a premium.
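To see what these prices mean per request, here is a small cost calculator built from the table above. The dictionary keys are labels for this sketch rather than confirmed API model IDs, and the assumption that the whole prompt is billed at the long-context rate once it exceeds 272K tokens is ours; check your provider's billing rules before relying on it.

```python
# USD per million tokens, taken from the pricing table above.
PRICES = {
    "gpt-5.4": {"input": 2.50, "output": 15.00},
    "gpt-5.4-long": {"input": 5.00, "output": 15.00},   # prompts above 272K
    "gpt-5.4-pro": {"input": 30.00, "output": 180.00},
    "gpt-5.4-mini": {"input": 0.40, "output": 1.60},    # approximate
}
LONG_CONTEXT_THRESHOLD = 272_000


def request_cost(variant: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for one request."""
    key = variant
    # Assumption: the entire prompt moves to the long-context rate.
    if variant == "gpt-5.4" and input_tokens > LONG_CONTEXT_THRESHOLD:
        key = "gpt-5.4-long"
    price = PRICES[key]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


for name in ("gpt-5.4", "gpt-5.4-mini", "gpt-5.4-pro"):
    print(name, round(request_cost(name, 100_000, 10_000), 4))
```

With a 100K-token prompt and a 10K-token reply, the standard model comes to about $0.40 per request, which makes it easy to project monthly spend from request volume.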

💰 Cost Optimization: For most development scenarios, GPT-5.4 Mini is more than enough and offers incredible value. By using the APIYI (apiyi.com) platform, you can access more flexible billing options and easily compare the cost-effectiveness of various GPT-5.4 variants against competing models in one place.

The Unique Design of GPT-5.4 Thinking

The most distinct capability of GPT-5.4 Thinking is its mid-process reasoning correction—the model can identify its own errors during the reasoning phase and correct them in real-time, rather than waiting until the final output to reveal mistakes. This is particularly valuable for complex, multi-step reasoning tasks.

The Impressive Performance of GPT-5.4 Mini

Released on March 17, GPT-5.4 Mini scored 54.38% on SWE-Bench Pro, trailing the flagship model's 57.7% by about 3.3 percentage points. At roughly $0.40/$1.60 versus $2.50/$15.00 per million tokens, it is about 6 times cheaper on input and about 9 times cheaper on output. This makes Mini one of the most cost-effective programming models currently available.
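How much cheaper Mini is in practice depends on the mix of input and output tokens in your workload. The sketch below computes the flagship-to-Mini cost ratio for a given input share, using the list prices from the pricing table; the function names are hypothetical and the Mini prices are the approximate figures quoted above.

```python
FLAGSHIP = {"input": 2.50, "output": 15.00}  # USD per million tokens
MINI = {"input": 0.40, "output": 1.60}       # approximate


def blended_price(prices: dict, input_share: float) -> float:
    """Average price per million tokens for a given share of input tokens."""
    if not 0.0 <= input_share <= 1.0:
        raise ValueError("input_share must be between 0 and 1")
    return prices["input"] * input_share + prices["output"] * (1.0 - input_share)


def cost_ratio(input_share: float) -> float:
    """How many times more the flagship costs than Mini for this mix."""
    return blended_price(FLAGSHIP, input_share) / blended_price(MINI, input_share)


for share in (1.0, 0.75, 0.0):
    print(f"input share {share:.0%}: flagship costs {cost_ratio(share):.2f}x Mini")
```

The ratio moves between the input-only and output-only extremes, so output-heavy workloads such as long code generation see the larger saving.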

GPT-5.4 Evaluation Data and Competitor Comparison

GPT-5.4 Core Evaluation Performance

| Benchmark | GPT-5.4 | GPT-5.4 Pro | Notes |
|---|---|---|---|
| OSWorld-Verified | 75.0% | – | Computer control, superhuman benchmark |
| SWE-Bench Pro | 57.7% | – | Programming capability |
| SWE-Bench Verified | ~80% | – | Code repair |
| ARC-AGI-2 | 73.3% | 83.3% | General reasoning |
| GDPval | – | 83% | Knowledge work |

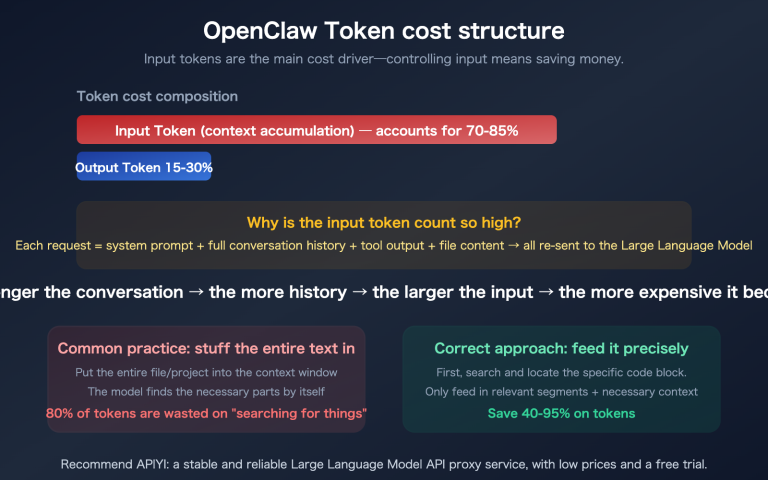

GPT-5.4 Token Efficiency Improvements

OpenAI has dubbed GPT-5.4 the "most token-efficient reasoning model." When solving the same problems, GPT-5.4 uses significantly fewer tokens than GPT-5.2, which directly translates to lower costs and faster speeds.

For production environments with high-frequency model invocation, this means:

- Reduced Costs: Fewer tokens consumed for the same tasks.

- Increased Speed: Fewer tokens mean faster response times.

- Longer Effective Context: The model utilizes context information more efficiently within its million-token context window.

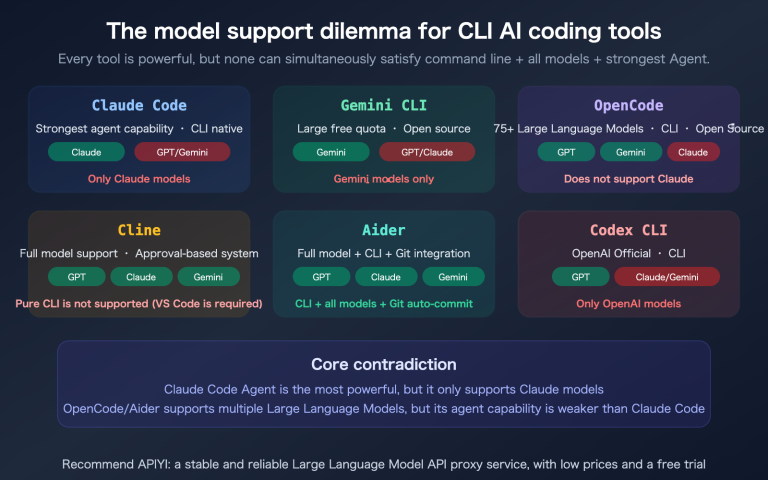

🎯 Comparison Tip: GPT-5.4 excels at computer control and programming, but the Claude series still holds unique advantages in pure reasoning tasks. We recommend using the APIYI (apiyi.com) platform to access both GPT-5.4 and Claude, allowing you to select the optimal model for your specific tasks.

The Retirement of GPT-4o: The End of an Era

GPT-4o Retirement Timeline

The retirement of GPT-4o is a phased process:

| Date | Event |
|---|---|
| February 13, 2026 | GPT-4o retired from most ChatGPT plans |
| February 13, 2026 | Concurrent retirement: GPT-4.1, GPT-4.1 Mini, o4-mini |
| April 3, 2026 | Full retirement of GPT-4o from Enterprise/Education plans |
| API Level | Temporarily retained, but migration is strongly advised |

Impact of GPT-4o Retirement

Prior to the retirement announcement, GPT-4o's share of daily active usage had already dropped below 0.1%. The vast majority of users have naturally migrated to the GPT-5.x series. However, the retirement still impacts the following areas:

Enterprise System Migration: Internal enterprise systems built on GPT-4o will need to be re-adapted to the API format and capability features of GPT-5.4.

Custom GPTs: Custom GPTs built on GPT-4o must complete their model switch before April 3.

Azure Users: Azure AI Foundry maintains an independent retirement schedule that is not perfectly synchronized with OpenAI.

Recommendations for Migrating from GPT-4o to GPT-5.4

| Migration Dimension | GPT-4o | GPT-5.4 | Notes |
|---|---|---|---|
| Context | 128K | 272K-1M | Significant length increase |
| Pricing | Lower | $2.50/$15 | Standard version is slightly pricier |
| Programming | Average | SWE-Bench Pro 57.7% | Significant improvement |
| Computer Control | Not supported | Native support | Brand new capability |
| Accuracy | Baseline | 33% lower hallucination rate than GPT-5.2 | Massive improvement |

💡 Migration Tip: If your system is still using GPT-4o, we recommend completing your migration before April 3. You can start by testing with GPT-5.4 Mini (which is closest in price to GPT-4o) to verify compatibility before choosing the right variant for your needs. Through the APIYI (apiyi.com) platform, you can switch models with a single click without modifying your code, significantly lowering your migration costs.
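One low-risk way to stage the migration is to route model names through a single mapping, so the switch is a configuration change rather than a code change. The sketch below shows that pattern along with a simple deadline countdown. The target ID `gpt-5.4-mini` is an assumption (confirm the exact model ID with your provider), and the April 3 date is the Enterprise/Education retirement from the timeline above, not an API shutdown date.

```python
from datetime import date

# GPT-4o full retirement from Enterprise/Education plans, per the timeline above.
RETIREMENT_DATE = date(2026, 4, 3)

# Old model -> replacement. The target ID is an assumption; verify it first.
MODEL_MIGRATION = {"gpt-4o": "gpt-5.4-mini"}


def resolve_model(requested: str) -> str:
    """Return the replacement for a retired model, or the name unchanged."""
    return MODEL_MIGRATION.get(requested, requested)


def days_until_retirement(today: date) -> int:
    """Days left before the retirement date (negative once it has passed)."""
    return (RETIREMENT_DATE - today).days


print(resolve_model("gpt-4o"))
print(resolve_model("gpt-5.4"))
print(days_until_retirement(date(2026, 2, 13)))
```

Calling `resolve_model` at the single point where requests are built lets you test the new model on a subset of traffic and roll back by editing one dictionary entry.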

Quick Access to GPT-5.4

Minimalist API Invocation Example

import openai

# Point the OpenAI SDK at the APIYI OpenAI-compatible endpoint.
client = openai.OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1"
)

response = client.chat.completions.create(
    model="gpt-5.4",
    messages=[{"role": "user", "content": "Analyze the performance bottlenecks in this code"}]
)

# Print the text of the first completion choice.
print(response.choices[0].message.content)
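If you would rather not hard-code the key or depend on the SDK, the same request can be built with the standard library alone. The sketch below is one way to do that under stated assumptions: the `APIYI_API_KEY` environment variable name is hypothetical, and the `/chat/completions` path follows the OpenAI-compatible convention that the SDK example above relies on.

```python
import json
import os
import urllib.request

BASE_URL = "https://vip.apiyi.com/v1"  # same base URL as the SDK example


def build_chat_request(prompt: str, model: str = "gpt-5.4", api_key=None):
    """Build (but do not send) a chat completion request."""
    # APIYI_API_KEY is a hypothetical variable name used for this sketch.
    key = api_key or os.environ.get("APIYI_API_KEY", "")
    if not key:
        raise ValueError("no API key provided")
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=body,
        headers={
            "Authorization": f"Bearer {key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request, timeout: float = 60.0) -> str:
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]


request = build_chat_request("Analyze the performance bottlenecks in this code",
                             api_key="YOUR_API_KEY")
print(request.full_url)
```

Separating `build_chat_request` from `send` keeps the network call in one place, which makes the request easy to inspect or unit test without spending tokens.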

GPT-5.4 Computer Use Invocation Example

import openai

client = openai.OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1"
)

# GPT-5.4 Computer Use mode
response = client.chat.completions.create(
    model="gpt-5.4",
    messages=[{
        "role": "user",
        "content": "Open the browser, search for the latest AI papers, and organize them into a table"
    }],
    # Declare the computer-use tool and the size of the screen the model will see.
    tools=[{
        "type": "computer_use",
        "display_width": 1920,
        "display_height": 1080
    }]
)

# When the model requests an action, content can be empty and the request sits in tool_calls.
message = response.choices[0].message
print(message.content or message.tool_calls)

🚀 Quick Start: We recommend getting your API key via APIYI (apiyi.com). The platform supports the entire GPT-5.4 variant series, as well as unified API access for competing models like Claude and Gemini. You can switch and compare models using just one key.

FAQ

Q1: Should I choose GPT-5.4 or GPT-5.3-Codex?

Go with GPT-5.4. It has all the programming capabilities of GPT-5.3-Codex built-in and even outperforms it on SWE-Bench Pro with a score of 57.7% compared to 56.8%. While the Codex interface is still available, it's now powered by GPT-5.4 under the hood. You can easily switch between different GPT-5.4 variants for testing via APIYI (apiyi.com).

Q2: Is there an alternative after GPT-4o is retired?

GPT-5.4 Mini is the closest alternative to GPT-4o. Priced at approximately $0.40/$1.60 per million tokens, it scores 54.38% on SWE-Bench Pro, significantly outperforming GPT-4o. If your system relies on GPT-4o, you can seamlessly switch to GPT-5.4 Mini via the APIYI (apiyi.com) platform without needing to modify your code framework.

Q3: Is the GPT-5.4 Computer Use feature safe?

OpenAI has implemented multi-layered security mechanisms for the Computer Use feature, including operation confirmation, interception of sensitive actions, and audit logs. In enterprise environments, we recommend using it in conjunction with proper permission controls. Currently, the Computer Use feature is primarily accessed via API and the Codex interface; it hasn't been fully rolled out to the consumer ChatGPT version yet.
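On the application side, the permission controls recommended above can start as a simple gate that every proposed action passes through before it runs. The sketch below is a hypothetical illustration of confirmation, interception, and audit logging in your own code; the class, the action names, and the policy are all assumptions and do not describe OpenAI's built-in safeguards.

```python
from dataclasses import dataclass, field

# Hypothetical list of actions that always need explicit user approval.
SENSITIVE_KINDS = {"delete_file", "submit_payment", "send_email"}


@dataclass
class ActionGate:
    """Blocks sensitive actions unless approved and records every decision."""
    audit_log: list = field(default_factory=list)

    def review(self, kind: str, approved_by_user: bool = False) -> bool:
        allowed = kind not in SENSITIVE_KINDS or approved_by_user
        self.audit_log.append({"action": kind, "allowed": allowed})
        return allowed


gate = ActionGate()
print(gate.review("click"))
print(gate.review("submit_payment"))
print(gate.review("submit_payment", approved_by_user=True))
print(len(gate.audit_log))
```

Logging denied actions as well as allowed ones matters here, because blocked attempts are often the most useful signal when reviewing what an agent tried to do.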

Summary

The 5 core breakthroughs of the GPT-5.4 flagship model:

- Native Computer Control: Scoring 75% on OSWorld-Verified, above the 72.4% human baseline, it's OpenAI's first general-purpose model with native Computer Use capabilities.

- Million-token Context Window: Supports 272K standard / 1M extended tokens, enabling long-range Agent task planning.

- 33% Reduction in Hallucinations: Significant improvements in factual accuracy, making it more reliable for enterprise scenarios.

- Codex Programming Integration: Achieves 57.7% on SWE-Bench Pro, covering both reasoning and programming within a single model.

- 6 Model Variants: With input pricing ranging from about $0.40 (Mini) to $30 (Pro) per million tokens, covering all use-case requirements.

The release of GPT-5.4 marks a new phase for OpenAI's model lineup, shifting from "parallel development" to a "unified flagship" strategy. With GPT-4o set to retire on April 3rd, GPT-5.4 will become the absolute core of the OpenAI ecosystem. We recommend using APIYI (apiyi.com) to quickly integrate the full GPT-5.4 model series. The platform provides a unified interface and multi-model switching capabilities, helping developers efficiently handle model migration and selection.


Author: APIYI Technical Team

Technical Discussion: Feel free to discuss your experience with GPT-5.4 in the comments. For more information on AI model integration, please visit the APIYI documentation at docs.apiyi.com.