In the first half of 2026, two heavyweights arrived in the global image generation API market. Google's Nano Banana 2 (Gemini 3.1 Flash Image Preview), launched in late February, quickly topped the Artificial Analysis Image Arena with its "Pro-level quality + Flash-level speed." Alibaba Tongyi Lab's Wan 2.7 Image, released on April 6, brought a Thinking Mode and 4K Pro resolution to Chinese image models for the first time.

Both claim to be "industry-leading," but their technical approaches, trade-offs, and use cases are vastly different. Based on official documentation, Artificial Analysis rankings, and real-world tests from the English-speaking community, this article provides a comprehensive comparison across 7 dimensions: technical architecture, generation quality, text rendering, multi-subject consistency, pricing, Chinese-language performance, and API integration, to help you choose the right model for your production environment.

If you want to test both models in parallel using the same API key, you can do so directly via the APIYI (apiyi.com) platform. It’s a great way to run blind tests using your own business prompts.

Nano Banana 2 vs. Wan 2.7 Image: Quick Look at Core Capabilities

Basic Positioning Comparison

| Dimension | Nano Banana 2 | Wan 2.7 Image |
|---|---|---|
| Developer | Google DeepMind | Alibaba Tongyi Lab |
| Base Model | Gemini 3.1 Flash Image | Alibaba Wan Series |
| Release Date | 2026-02-27 | 2026-04-06 |
| Core Focus | High Speed + Pro Quality | Thinking Mode + 4K Pro |
| Max Resolution | Up to 4K (approx. 4096×4096) | Standard 2048×2048 / Pro 4K |
| Official Access | Gemini API / Vertex AI | Alibaba Cloud Model Studio / WaveSpeedAI |
| Arena Ranking | Text-to-Image #1 | Not yet independently ranked |

Fundamental Differences in Technical Approach

Before diving into the details, it's essential to understand the core design philosophy behind each:

- Nano Banana 2 follows a "World Knowledge + Speed" path: It shares the world model and real-time search capabilities of Gemini 3.1. It’s not just an image generation model; it’s a model that "understands the real world behind the prompt."

- Wan 2.7 Image follows a "Reasoning + Precise Control" path: It introduces a Thinking Mode, allowing the model to reason and plan composition, spatial relationships, and semantic intent before generating. It also provides fine-grained control tools like HEX color codes and support for 9 reference images.

Neither path is simply better than the other; each caters to different business needs. This explains why Nano Banana 2 leads the Artificial Analysis aggregate rankings, while Wan 2.7 Image is often preferred by users in China for long-form Chinese copy or strict brand color requirements.

🎯 Selection Criteria: If your business covers multilingual and cross-cultural content, prioritize Nano Banana 2. If your business has strict brand color requirements, long-form Chinese text, or professional layout needs, prioritize Wan 2.7. We recommend integrating both via the APIYI (apiyi.com) platform to route traffic based on specific scenarios.

Technical Architecture Comparison: Nano Banana 2 vs. Wan 2.7 Image

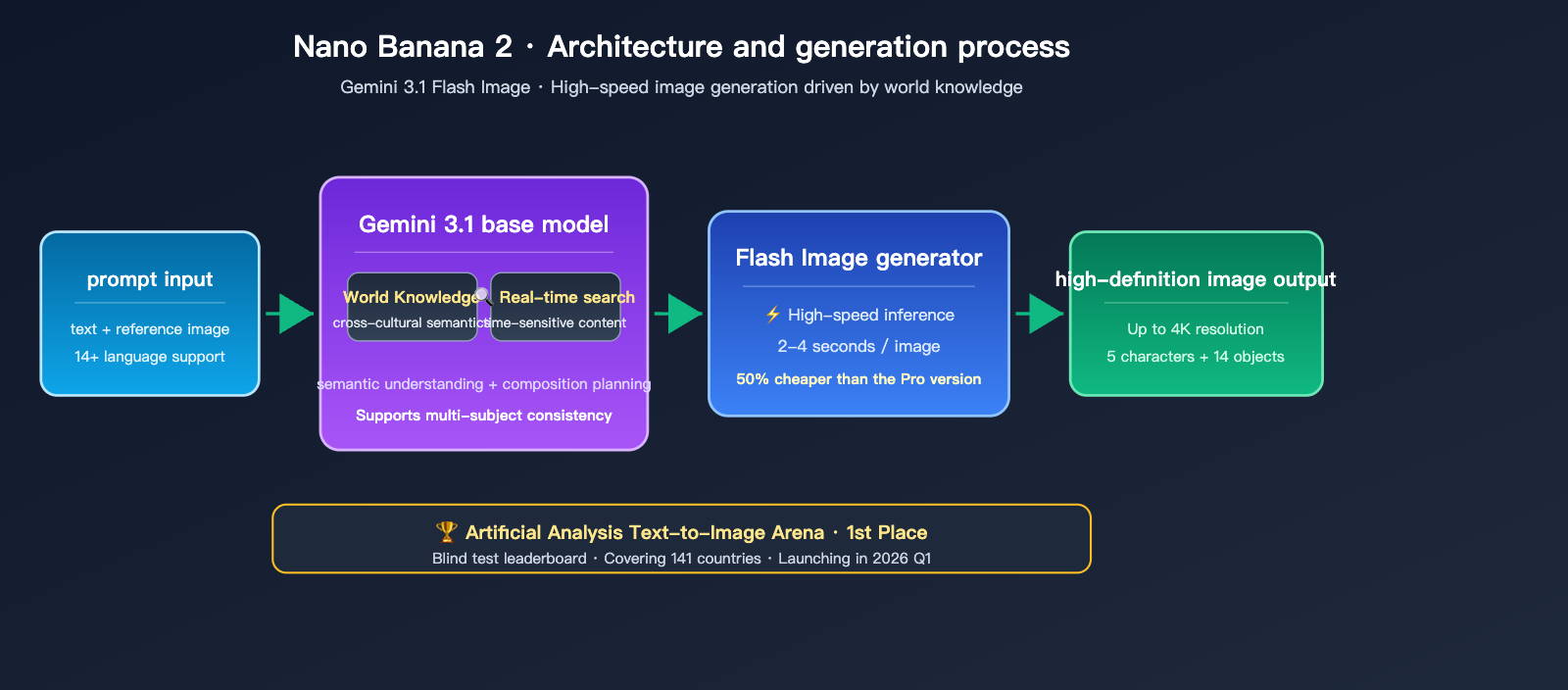

Architectural Features of Nano Banana 2

Nano Banana 2 is built upon the shared world knowledge representation of the Gemini 3.1 Flash model. Here are its three key technical highlights:

- Gemini World Knowledge Base: The model understands cross-cultural concepts (e.g., "What is Tang Dynasty porcelain?" or "What is Bauhaus design?") without needing word-for-word explanations in the prompt.

- Real-time Search Integration: Gemini's real-time information capabilities are integrated into image generation, allowing for more accurate visual representations of time-sensitive content (such as the latest products or trending sports events).

- Flash-level Speed: Compared to Nano Banana Pro, single-image generation speed is 2-3x faster, with costs reduced by approximately 50%, offering a significant advantage in batch generation scenarios.

Google has officially rolled out Nano Banana 2 to the Gemini App, Google Search (across 141 countries), Google Ads, Google Cloud, and Flow, making it the top-tier image model with the widest channel coverage currently available.

Architectural Features of Wan 2.7 Image

Wan 2.7 Image inherits the unified multimodal architecture of the Wan video generation model, where the image component acts as a "single-frame special case" of the video architecture. Its three core differentiators are:

- Thinking Mode: The model first processes the prompt, plans the composition and spatial layout, and then proceeds to actual diffusion generation—similar to the Chain of Thought in Large Language Models, but applied to visual composition.

- 4K Pro Output: Available in two tiers: Standard (2048×2048) and Pro (4096×4096). The Pro version is specifically designed for print advertisements, large-format posters, and similar use cases.

- 12-Language Long-Text Rendering: Supports embedded text blocks of 3,000+ tokens, so formulas, tables, and multilingual poster copy can be generated directly within the image.

Architecturally, Wan 2.7 Image feels more like an "industrial-grade visual production tool," pushing controllability to the forefront of image generation models.

Nano Banana 2 vs. Wan 2.7 Image: Generation Quality Benchmarks

Artificial Analysis Image Arena Performance

According to the Artificial Analysis Image Arena blind test leaderboard updated in March 2026:

| Category | Nano Banana 2 | Wan 2.7 Image |
|---|---|---|
| Text-to-Image (Overall) | #1 | Not yet ranked |
| Text Rendering | Significant Improvement | Excellent (Strong in long text) |
| 3D Imaging | Leading | Good |
| Portrait Details | Good | Leading (Skin texture) |
| Street Scene Composition | Leading | Moderate |
| Complex Spatial Relations | Excellent | Leading (Thinking Mode) |
| Community 6-Scene Test | 5 wins | 1 win |

Data from the English-speaking community's "6-scene real-world test" shows that while Wan 2.7 Image Pro only won 1 out of 6 tests, that specific win was in portrait details. Wan 2.7 avoids the "over-smoothed AI look" by preserving skin textures (pores, color variations, and blemishes), which is currently a noticeable weakness for Nano Banana 2.

Quality Strengths by Use Case

| Quality Dimension | Winner | Advantage Description |
|---|---|---|
| Real-world Street Scenes / Narrative | Nano Banana 2 | Stronger compositional depth + lighting |
| Human Skin Details | Wan 2.7 Image | Avoids plastic look, retains realistic blemishes |
| Multilingual Text (incl. Chinese) | Nano Banana 2 | Improved for 14 languages, strong for posters |
| Long Chinese Text Rendering | Wan 2.7 Image | Stable output for 3000+ tokens |
| Multi-subject Consistency | Nano Banana 2 | Up to 5 characters + 14 objects |
| Spatial Relation Instructions | Wan 2.7 Image | Thinking Mode reasons before drawing |
| Brand Color Precision | Wan 2.7 Image | Native support for HEX color values |

💡 Quality Conclusion: Nano Banana 2 is the "all-around champion," while Wan 2.7 Image is a "specialized tool for niche scenarios." Nano Banana 2 wins in most general use cases, but Wan 2.7 Image holds a clear advantage when it comes to strict brand color compliance, long-form Chinese typesetting, and realistic human skin textures.
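This article's own Wan 2.7 example passes the brand color as a HEX code inside the prompt text, so a small helper can validate codes before they ever reach the model. The sketch below assumes that in-prompt approach. `brand_color_prompt` is a hypothetical helper, and if the Wan 2.7 API exposes a dedicated color parameter, you should use that instead of prompt text.

```python
import re

# Exactly one 6-digit HEX color such as #1E40AF (validated with fullmatch).
HEX_COLOR = re.compile(r"#[0-9A-Fa-f]{6}")


def brand_color_prompt(base_prompt, colors):
    """Append validated, upper-cased HEX brand colors to a prompt (hypothetical helper)."""
    invalid = [c for c in colors if not HEX_COLOR.fullmatch(c)]
    if invalid:
        # Fail fast: a malformed code would silently produce off-brand output.
        raise ValueError(f"invalid HEX color(s): {invalid}")
    normalized = [c.upper() for c in colors]
    return f"{base_prompt}, brand colors {', '.join(normalized)}"


print(brand_color_prompt("Corporate introduction poster", ["#1e40af"]))
```

Normalizing to upper case keeps prompts consistent across requests, which makes A/B comparisons between models easier to read.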

Nano Banana 2 vs. Wan 2.7 Image: Pricing and Cost Comparison

Pricing Structure of the Two Models

| Billing Metric | Nano Banana 2 | Wan 2.7 Image |
|---|---|---|
| Input token price | $0.50 / 1M tokens | From ~$0.075 / 1M tokens |
| Output token price | $3.00 / 1M tokens | Tiered, higher for Pro |
| 1K Image (1024×1024) | ~$0.039 / image | ~$0.020-$0.030 / image |
| 2K Image | ~$0.134 / image | ~$0.050-$0.080 / image |
| 4K Image | ~$0.24 / image | ~$0.10-$0.15 / image (Pro) |
| Bulk Discount | 50% off via Batch API | 50% off for select scenarios |
| Avg. cost per 1K images | ~$67 / 1000 images | ~$30-$60 / 1000 images |
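To turn the table into a monthly figure for your own volume, the arithmetic is price × volume, with the batch discount applied to whatever share of traffic can wait. This is a minimal sketch with some caveats. The per-image prices are the approximate values from the table above, so results inherit their uncertainty. The dictionary keys are illustrative labels, not API model IDs. The 50% batch discount only applies where the provider actually offers it (for Wan 2.7 Image, only in select scenarios).

```python
# Approximate per-image prices in USD, copied from the pricing table above.
# Wan 2.7 Image is quoted as a range, so every entry is a (low, high) pair.
PRICE_PER_IMAGE = {
    "nano-banana-2": {"1k": (0.039, 0.039), "2k": (0.134, 0.134), "4k": (0.24, 0.24)},
    "wan-2.7-image": {"1k": (0.020, 0.030), "2k": (0.050, 0.080), "4k": (0.10, 0.15)},
}


def monthly_cost(model, tier, images_per_month, batch_share=0.0, batch_discount=0.5):
    """Return a (low, high) USD estimate for one month of generation.

    batch_share: fraction of images sent through a batch endpoint (0.0-1.0).
    batch_discount: discount applied to that fraction (0.5 means 50% off).
    """
    if not 0.0 <= batch_share <= 1.0:
        raise ValueError("batch_share must be between 0 and 1")
    low, high = PRICE_PER_IMAGE[model][tier]
    # Blend full-price and discounted images into one effective multiplier.
    factor = (1.0 - batch_share) + batch_share * (1.0 - batch_discount)
    return low * images_per_month * factor, high * images_per_month * factor


for label in PRICE_PER_IMAGE:
    low, high = monthly_cost(label, "1k", 100_000)
    print(f"{label}: ${low:,.0f}-${high:,.0f} per month at 1K resolution")
```

The (low, high) pair is there because the Wan 2.7 Image prices are quoted as ranges. Collapsing them to a single midpoint would hide how wide the estimate really is.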

3 Criteria for Cost-Effective Selection

Simply asking "which one is cheaper" doesn't tell the whole story—different business scenarios weigh quality, speed, and price differently. Here are three criteria to help you decide:

- High-frequency UGC generation (>100k images/month): If you're price-sensitive, the Wan 2.7 Image standard version is a better fit, potentially saving you 30%-50% in monthly costs.

- Brand assets / Advertising design: If quality is your priority, Nano Banana 2 offers superior overall quality. Even if it's 10%-20% more expensive per image, the time saved on manual post-processing makes it worth it.

- 4K print-ready images: Wan 2.7 Image Pro is one of the few models that natively outputs 4K images, and its per-image price (~$0.10-$0.15) is lower than Nano Banana 2's 4K tier (~$0.24).

🎯 Recommendation: If you're still unsure which category your business falls into, we recommend enabling both models on the APIYI (apiyi.com) platform. Generate 100 images per model from the same prompt, and use the platform's dashboard to compare the total invocation cost. Within a week you'll have a data-backed selection decision.

Optimizing Costs via Aggregator Platforms

Pricing for these two models varies significantly across channels—official direct access, Alibaba Cloud, Atlas Cloud, WaveSpeedAI, and various aggregators all have different rates. Here’s a practical cost-optimization strategy:

- Access them through an aggregator (like APIYI at apiyi.com) for unified billing and invoicing.

- Set daily budget alerts in the aggregator's dashboard to prevent runaway spending.

- Leverage the 50% discount offered by Batch API for non-real-time tasks (like batch generation overnight).
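Dashboard alerts are the primary safeguard, but a client-side guard can also stop a runaway loop before the next request goes out. This is a minimal sketch. `DailyBudgetGuard` is a hypothetical class, and the per-call cost you pass in must be your own estimate (for example, a per-image price from the table above), because actual billing may be token-based and differ.

```python
import datetime as dt


class DailyBudgetGuard:
    """Hypothetical client-side spend guard; complements dashboard alerts."""

    def __init__(self, daily_limit_usd, alert_ratio=0.8):
        self.daily_limit_usd = daily_limit_usd
        self.alert_ratio = alert_ratio
        self._day = dt.date.today()
        self._spent = 0.0

    def _reset_if_new_day(self):
        # Start a fresh budget when the local calendar date changes.
        today = dt.date.today()
        if today != self._day:
            self._day, self._spent = today, 0.0

    def charge(self, cost_usd):
        """Record one call's estimated cost before making the call.

        Raises RuntimeError if the call would exceed today's limit; otherwise
        returns True once cumulative spend reaches the alert threshold.
        """
        self._reset_if_new_day()
        if self._spent + cost_usd > self.daily_limit_usd:
            raise RuntimeError("daily image budget exceeded; call blocked")
        self._spent += cost_usd
        return self._spent >= self.alert_ratio * self.daily_limit_usd


guard = DailyBudgetGuard(daily_limit_usd=50.0)
if guard.charge(0.039):  # ~1K Nano Banana 2 image, per the table
    print("Budget alert: 80% of today's limit used")
```

Calling `charge()` before each request, rather than after, means a blocked call never reaches the API.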


Nano Banana 2 vs Wan 2.7 Image: Text Rendering Capabilities

Text rendering has always been a "tough benchmark" for image generation models. Just a few months ago, most models would render "Beautiful Life" as garbled characters. Both of these new models, however, represent a qualitative leap in this area:

| Text Rendering Dimension | Nano Banana 2 | Wan 2.7 Image |
|---|---|---|
| English Short Text | Excellent | Excellent |
| Chinese Short Text | Good | Excellent |
| Long Paragraphs | Good (Stable single line) | Excellent (3000+ tokens) |
| Mathematical Formulas | Good | Excellent |
| Tables / Structured | Good | Excellent |
| Multilingual Mixed | Supports 14+ languages | Supports 12 languages |
| Layout Precision | Moderate | Precise (Positioning supported) |
| Font Variety | Rich | Moderate |

Nano Banana 2 shines in its broad cross-language coverage. You can embed text in Chinese, English, Japanese, Korean, and Arabic all on a single poster, which is incredibly valuable for scenarios like cross-border e-commerce.

Wan 2.7 Image excels in long-form Chinese stability. It can render entire product descriptions, complete recipe steps, or even complex mathematical derivation formulas within a single image—a capability that remains out of reach for most other image models.

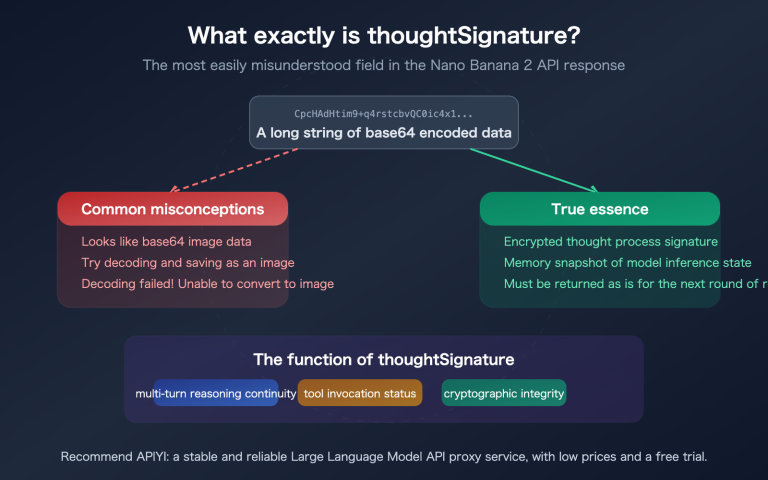

Nano Banana 2 vs Wan 2.7 Image: API Invocation Comparison

API Compatibility and SDK Support

| Access Dimension | Nano Banana 2 | Wan 2.7 Image |
|---|---|---|
| Official SDK | Google Gen AI SDK | Alibaba Cloud DashScope SDK |
| OpenAI Compatibility | Via Vertex AI | Partial third-party support |
| Streaming | Supported on some endpoints | Mostly unsupported |
| Batch Processing | Batch API | Alibaba Cloud batch mode |
| Callbacks / Webhooks | Supported | Supported |
| Multi-image Input | Up to 5 reference subjects | Up to 9 reference images |

Since their native SDKs aren't compatible with each other, you'd typically need to maintain two separate sets of SDK code if you want to use both models, or use an aggregation platform for unified access.

Unified Access via Aggregation Platforms

```python
from openai import OpenAI

# Using APIYI for unified model access (OpenAI-compatible endpoint)
client = OpenAI(
    api_key="your-api-key",
    base_url="https://api.apiyi.com/v1",
)


def generate_image(prompt: str, model: str, size: str = "1024x1024") -> str:
    """Generate one image and return its URL (or base64 data if no URL is returned)."""
    response = client.images.generate(
        model=model,
        prompt=prompt,
        size=size,
        n=1,
    )
    item = response.data[0]
    # Some image models return base64 data instead of a hosted URL
    return item.url or item.b64_json


# Invoke Nano Banana 2
nano_url = generate_image(
    prompt="A tech-style poster, main title 'APIYI', subtitle 'Unified AI Gateway'",
    model="gemini-3.1-flash-image",
)

# Invoke Wan 2.7 Image (Pro tier, 2K output)
wan_url = generate_image(
    prompt="Corporate introduction poster in brand color #1E40AF, including a full paragraph of Chinese product description",
    model="wan-2.7-image-pro",
    size="2048x2048",
)
```

📌 Complete A/B Testing and Statistics Code

```python
import time

from openai import OpenAI

client = OpenAI(
    api_key="your-api-key",
    base_url="https://api.apiyi.com/v1",
)

TEST_PROMPTS = [
    "A minimalist tech product poster, with 'GPT-4' in the center",
    "Ink-wash painting style of the Great Wall in autumn, with the poem 'He who has not been to the Great Wall is not a true man'",
    "A scientist in a laboratory, wearing a white coat, holding a test tube",
    "Retro cyberpunk street scene, neon sign showing '2026 Future City'",
    "Food nutrition poster containing a complete product description paragraph",
]


def run_ab_test(prompt: str) -> dict:
    """Send the same prompt to both models and record URL, latency, and usage."""
    results = {}
    for model in ["gemini-3.1-flash-image", "wan-2.7-image-pro"]:
        start = time.perf_counter()  # monotonic clock, suited to measuring latency
        try:
            response = client.images.generate(
                model=model,
                prompt=prompt,
                size="1024x1024",
            )
            results[model] = {
                "url": response.data[0].url,
                "latency": time.perf_counter() - start,
                # usage may be absent depending on the model and gateway
                "tokens": getattr(response, "usage", None),
            }
        except Exception as e:  # record the failure and keep testing the other model
            results[model] = {"error": str(e)}
    return results


for prompt in TEST_PROMPTS:
    print(f"Prompt: {prompt}")
    print(run_ab_test(prompt))
    print("---")
```

The real value here is that one SDK, one API key, and one base_url are enough to call both models. Switching models only requires changing the model parameter, so you don't have to maintain two separate SDK implementations.
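The A/B script prints raw results but does not aggregate them, so a short summary step helps when comparing models across many prompts. This sketch only assumes the dictionary shape that `run_ab_test` returns above: per model, either `latency` on success or `error` on failure. `summarize` is a hypothetical helper name.

```python
from statistics import mean


def summarize(all_results):
    """Aggregate a list of run_ab_test() results into per-model statistics."""
    buckets = {}
    for results in all_results:
        for model, record in results.items():
            bucket = buckets.setdefault(model, {"ok": 0, "errors": 0, "latencies": []})
            if "error" in record:
                bucket["errors"] += 1
            else:
                bucket["ok"] += 1
                bucket["latencies"].append(record["latency"])
    # Latency stats are None for a model that never succeeded.
    return {
        model: {
            "ok": b["ok"],
            "errors": b["errors"],
            "mean_latency_s": mean(b["latencies"]) if b["latencies"] else None,
            "max_latency_s": max(b["latencies"]) if b["latencies"] else None,
        }
        for model, b in buckets.items()
    }
```

If the A/B script above runs in the same module, `print(summarize([run_ab_test(p) for p in TEST_PROMPTS]))` produces one line of statistics per model. Counting errors separately from latencies keeps failed calls from skewing the averages.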

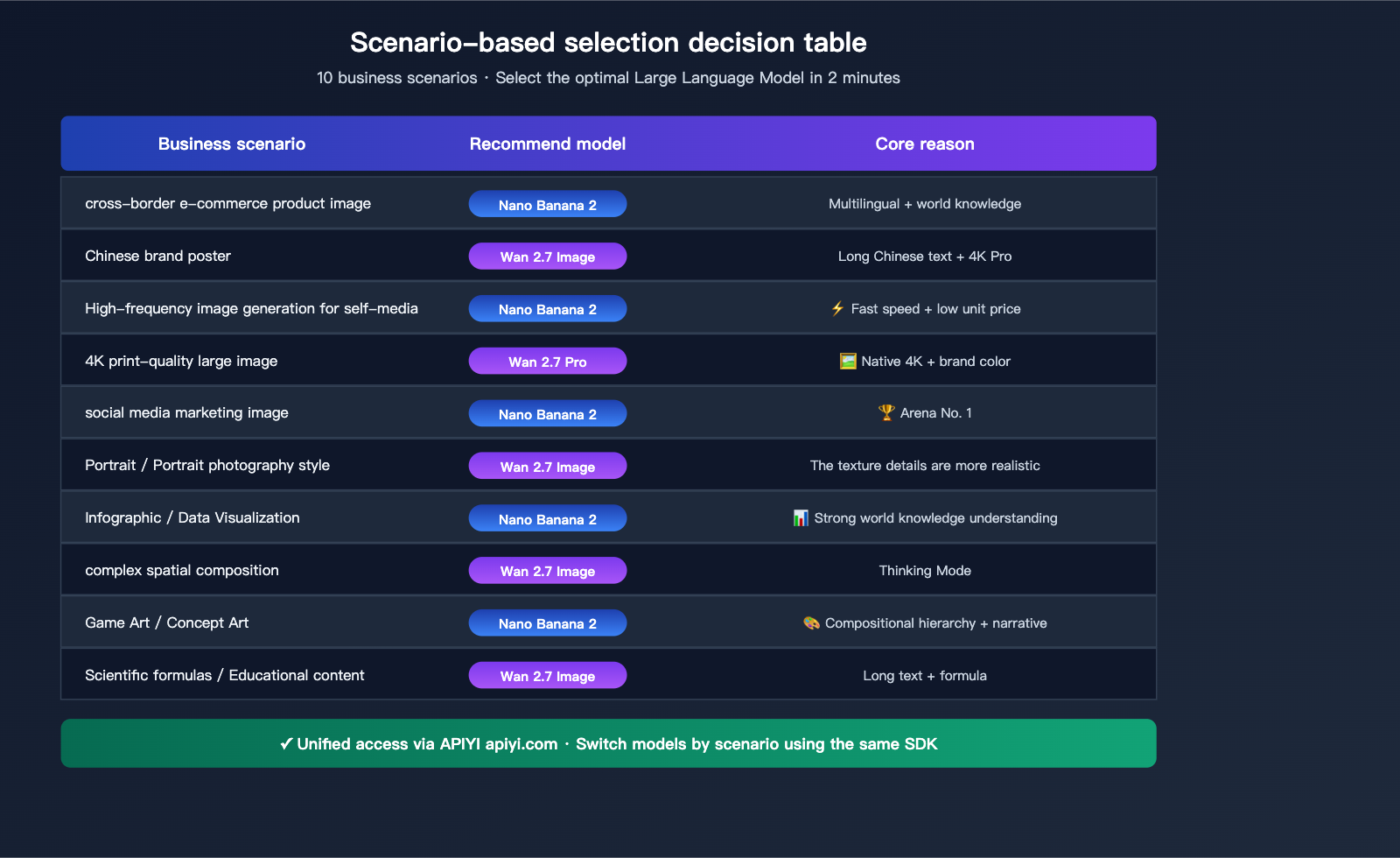

Nano Banana 2 vs. Wan 2.7 Image: Scenario-Based Selection Guide

Precision Recommendations by Business Type

| Business Scenario | Recommended Model | Key Reason |
|---|---|---|
| Cross-border E-commerce Images | Nano Banana 2 | Multilingual + Global Knowledge |
| Chinese Brand Posters | Wan 2.7 Image | Long Chinese text + 4K Pro |
| Social Media Content | Nano Banana 2 | Fast speed + Low cost |
| 4K Print-ready Images | Wan 2.7 Image Pro | Native 4K + Brand color accuracy |
| Social Media Marketing | Nano Banana 2 | Text rendering + Arena #1 |
| Portrait / Photography Style | Wan 2.7 Image | Realistic texture details |
| Infographics / Data Viz | Nano Banana 2 | Strong world knowledge |
| Complex Spatial Composition | Wan 2.7 Image | Thinking Mode reasoning |
| Game Art / Concept Art | Nano Banana 2 | Composition depth + Narrative |
| Scientific Formulas / Education | Wan 2.7 Image | Long text + Formula rendering |

3 Typical Business Combination Strategies

Strategy 1: Nano Banana 2 (Primary) + Wan 2.7 Image (Backup)

Ideal for small-to-medium teams. Route 90% of requests to Nano Banana 2 to ensure speed and overall quality, switching to Wan 2.7 Image only for long-form Chinese or strict brand-color requirements. This keeps token costs predictable without needing constant model switching.
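Strategy 1 can be expressed as a simple rule-based router: default to Nano Banana 2, and fall back to Wan 2.7 Image only when a prompt carries a HEX brand color or a long run of Chinese text. This is a heuristic sketch. The 50-character threshold borrows the "short text" boundary from the FAQ below, and counting every CJK character in the prompt (not only the copy meant to appear in the image) is a simplifying assumption. The resulting traffic split depends on your prompts; nothing here enforces 90/10.

```python
import re

NANO_BANANA_2 = "gemini-3.1-flash-image"
WAN_27_IMAGE = "wan-2.7-image-pro"

# A 6-digit HEX color such as #1E40AF. A lookahead is used instead of \b
# because CJK characters count as word characters in Python's re module.
HEX_COLOR = re.compile(r"#[0-9A-Fa-f]{6}(?![0-9A-Fa-f])")
# CJK Unified Ideographs (basic block) as a rough "Chinese text" signal.
CJK_CHAR = re.compile(r"[\u4e00-\u9fff]")


def pick_model(prompt, long_chinese_threshold=50):
    """Default to Nano Banana 2; route brand-color or long-Chinese prompts to Wan 2.7."""
    if HEX_COLOR.search(prompt):
        return WAN_27_IMAGE  # strict brand color requirement
    if len(CJK_CHAR.findall(prompt)) > long_chinese_threshold:
        return WAN_27_IMAGE  # long-form Chinese copy
    return NANO_BANANA_2


print(pick_model("A tech-style poster, main title 'APIYI'"))
print(pick_model("Corporate poster in brand color #1E40AF"))
```

Keeping the rules explicit makes the routing easy to audit and adjust once you have cost and quality data from both models.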

Strategy 2: Dual-Model Parallelism + Quality Voting

Perfect for brands or design studios. Send the same prompt to both models simultaneously and have a product manager or designer select the final result. While this doubles the cost per request, it significantly raises the quality ceiling.
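For Strategy 2, the two requests can run concurrently so the reviewer waits roughly as long as the slower model, not the sum of both. This is a minimal sketch using a thread pool and any OpenAI-style client exposing `images.generate`, such as the APIYI client configured earlier. The model IDs are the ones used in this article, and `generate_both` is a hypothetical helper name.

```python
from concurrent.futures import ThreadPoolExecutor

# Model IDs as used elsewhere in this article; check the gateway's model list.
DEFAULT_MODELS = ("gemini-3.1-flash-image", "wan-2.7-image-pro")


def generate_both(client, prompt, size="1024x1024", models=DEFAULT_MODELS):
    """Send one prompt to every model concurrently; return {model: url_or_b64}."""

    def call(model):
        response = client.images.generate(model=model, prompt=prompt, size=size, n=1)
        item = response.data[0]
        # Fall back to base64 when the model returns no hosted URL.
        return item.url or item.b64_json

    # One worker per model so wall time is roughly that of the slowest model.
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {model: pool.submit(call, model) for model in models}
        # .result() re-raises any exception raised by that model's call.
        return {model: future.result() for model, future in futures.items()}
```

Threads fit here because the work is almost entirely waiting on network I/O. If you already use `AsyncOpenAI`, `asyncio.gather` achieves the same effect.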

Strategy 3: Wan 2.7 Image (Primary) + Nano Banana 2 (Specialized)

Best for domestic content platforms or e-commerce hubs. Use Wan 2.7 Image for core Chinese content, while dedicating Nano Banana 2 to cross-border, multilingual, or time-sensitive trending content.

🎯 Pro Tip: Regardless of your strategy, we recommend using the APIYI (apiyi.com) aggregation platform. It allows you to unify your access, manage model tagging, set budget alerts, and handle invoicing, which drastically simplifies your operations.

FAQ: Nano Banana 2 vs. Wan 2.7 Image

Q1: Which model has better Chinese language understanding?

Both models offer a significant upgrade over the previous generation. Wan 2.7 Image is more stable with long Chinese passages, classical poetry, and technical terminology, thanks to its extensive training on Chinese corpora. Nano Banana 2 excels in everyday Chinese and mixed-language scenarios, especially when the prompt involves cultural context (e.g., "Song Dynasty porcelain").

Q2: Which model renders text without blurring?

Both models achieve 100% clarity for short text (≤50 characters). The difference lies in long-form text: Wan 2.7 Image supports rendering long passages of 3000+ tokens (great for menus or product descriptions), while Nano Banana 2 is better suited for short, multilingual advertising copy.

Q3: Which model is faster for API calls?

Nano Banana 2 is significantly faster—generating a single image takes about 2-4 seconds, whereas the standard Wan 2.7 Image takes about 5-8 seconds, and the Pro version (4K output) takes about 15-20 seconds. If your business requires real-time performance, prioritize Nano Banana 2.
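These latency figures translate naturally into per-model request timeouts, so a slow 4K Pro call is not cut off by a timeout tuned for Nano Banana 2. This is a minimal sketch that assumes the official openai-python client, whose `with_options()` returns a reconfigured copy. It uses the upper end of each quoted latency range with a 3x headroom factor, which is an arbitrary choice. `wan-2.7-image` for the standard tier is a hypothetical model ID, since this article only shows the Pro ID.

```python
# Upper ends of the latency ranges quoted above, in seconds.
EXPECTED_LATENCY_S = {
    "gemini-3.1-flash-image": 4,  # Nano Banana 2: ~2-4 s
    "wan-2.7-image": 8,           # Wan 2.7 standard: ~5-8 s (hypothetical model ID)
    "wan-2.7-image-pro": 20,      # Wan 2.7 Pro (4K): ~15-20 s
}


def timeout_for(model, headroom=3.0, default_s=60.0):
    """Pick a request timeout with headroom over the model's typical latency."""
    upper = EXPECTED_LATENCY_S.get(model)
    return upper * headroom if upper is not None else default_s


def client_for(client, model, max_retries=2):
    """Return a copy of an OpenAI-style client configured with a per-model timeout."""
    return client.with_options(timeout=timeout_for(model), max_retries=max_retries)
```

Usage: `client_for(client, "wan-2.7-image-pro").images.generate(...)`. Unknown model IDs fall back to a conservative 60-second default.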

Q4: Can both models edit existing images?

Yes, both do. Nano Banana 2 offers powerful image editing and multi-subject consistency (up to 5 characters and 14 objects). Wan 2.7 Image provides style transfer and complex editing based on up to 9 reference images, offering tighter control for local refinement.

Q5: Which is more stable to call within China?

Wan 2.7 Image is served from nodes in China, so no proxy is needed and invoicing is fully compliant. Nano Banana 2 involves cross-border traffic, and calling the official Google API directly from China requires a VPN. If you're deploying production services in China, accessing Nano Banana 2 through a compliant aggregation platform such as APIYI (apiyi.com) is the standard way to avoid network and compliance risks.

Q6: Can I use both models together to improve a single image?

Yes. A typical approach is a "generate + edit" pipeline: use Nano Banana 2 to quickly generate the base image, then use Wan 2.7 Image to perform local refinements (like adjusting brand colors or optimizing Chinese text areas). This hybrid pipeline often yields higher quality than a single model, making it perfect for high-end content production.
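The "generate + edit" pipeline can be sketched with the OpenAI-compatible images endpoints used earlier. This sketch rests on several assumptions: the gateway routes `images.edit` requests to `wan-2.7-image-pro` (not confirmed here), the base image is a PNG, and the first response holds either a hosted URL or base64 data. `generate_then_refine` is a hypothetical helper name.

```python
import base64
import urllib.request


def generate_then_refine(client, prompt, refine_prompt,
                         base_model="gemini-3.1-flash-image",
                         edit_model="wan-2.7-image-pro"):
    """Fast base image from Nano Banana 2, then local refinement with Wan 2.7 Image."""
    # Step 1: generate the base image.
    base = client.images.generate(model=base_model, prompt=prompt, size="1024x1024", n=1)
    item = base.data[0]
    if item.b64_json:
        image_bytes = base64.b64decode(item.b64_json)
    else:
        # Hosted URL: download the bytes so they can be re-uploaded for editing.
        with urllib.request.urlopen(item.url, timeout=60) as resp:
            image_bytes = resp.read()
    # Step 2: refine it. The (filename, bytes, mime type) tuple is a file form
    # accepted by openai-python; the PNG mime type is an assumption.
    edited = client.images.edit(
        model=edit_model,
        image=("base.png", image_bytes, "image/png"),
        prompt=refine_prompt,
        n=1,
    )
    return edited.data[0]
```

A typical refinement prompt targets only the weak spots, for example "keep the composition, change the accent color to #1E40AF and re-typeset the Chinese headline", so the base image's composition survives the edit.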

Q7: Are there any legal or compliance differences?

Both have implemented copyright and content safety filters. Nano Banana 2's Layer 2 policy is very strict regarding celebrity likenesses and well-known IPs. Wan 2.7 Image has more granular filtering rules for sensitive terms within the Chinese cultural context. Before commercial use, we recommend reading the terms of service for both or consulting the legal support team of your aggregation platform.

Q8: If I can only pick one, which one should I choose?

- If your business is primarily overseas / cross-border / multilingual, choose Nano Banana 2.

- If your business is primarily domestic / Chinese-focused / requires precise brand control, choose Wan 2.7 Image.

- If your business demands the absolute highest quality, choose Nano Banana 2 (it has a higher overall win rate).

- If your business prioritizes cost + 4K output, choose Wan 2.7 Image Pro.

Q9: Will there be a next generation in the next 6 months?

Google typically iterates on the Gemini Image series every 4-6 months, with the next-gen Nano Banana 3 expected in Q3-Q4 2026. The Alibaba Wan series usually updates every 3-5 months, with Wan 2.8 expected in Q3 2026. In the short term, the conclusions in this article remain valid.

Summary: How to Choose Between Nano Banana 2 and Wan 2.7 Image

Returning to the original question—Nano Banana 2 vs. Wan 2.7 Image, which one should you choose? The answer is quite clear:

Nano Banana 2 is the overall leader for the first half of 2026. It topped the Artificial Analysis Image Arena, with per-call prices 50% lower than the previous generation and speeds 2-3 times faster. Combined with the cross-cultural semantic understanding provided by Gemini 3.1's world knowledge, it is the optimal choice for most general scenarios. For teams that need speed, competitive pricing, multilingual support, and cross-border capabilities, it is the undisputed default choice.

Wan 2.7 Image is the specialized champion for niche scenarios. Its "Thinking Mode" makes complex spatial composition more stable, its 4K Pro output meets print-grade requirements, its 3000+ token long-text rendering is ideal for long-form Chinese content, and its realistic skin textures avoid the "plastic look." For domestic brands, long-form Chinese content, and precise color control, its advantages are hard for Nano Banana 2 to replace in the short term.

The best strategy is actually a "combination play"—don't force yourself to pick just one. By using an aggregation platform like APIYI (apiyi.com), you can access both models simultaneously and route requests dynamically based on the scenario. This allows you to leverage the overall quality of Nano Banana 2 while calling on the specialized capabilities of Wan 2.7 Image for critical tasks. Features like unified billing, tagging by call, and isolating API keys by business line keep the operational costs of a multi-model architecture to a minimum.

Start testing today: We recommend opening an account on APIYI (apiyi.com) this week, preparing 20-50 representative prompts, and using the same code to call both models. Have your product and design teams perform a blind test—you'll have the data you need to make the right decision for your business within a week.
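For the blind test to actually be blind, reviewers should see outputs under neutral labels in a random order, and the label-to-model key should be kept aside until votes are in. This is a minimal sketch. `make_blind_pair` and `tally` are hypothetical helpers, and the input is assumed to be one `{model_id: image_url}` dictionary per prompt, such as the output of the A/B script.

```python
import random


def make_blind_pair(outputs, rng):
    """Hide model names behind labels A/B in random order.

    outputs: {model_id: image_url} for exactly two models.
    Returns (labeled, key): {label: url} for reviewers, {label: model_id} kept private.
    """
    if len(outputs) != 2:
        raise ValueError("expected outputs from exactly two models")
    models = list(outputs)
    rng.shuffle(models)  # randomize which model appears as "A"
    labeled = {label: outputs[m] for label, m in zip("AB", models)}
    key = {label: m for label, m in zip("AB", models)}
    return labeled, key


def tally(votes, keys):
    """Map each chosen label back to its model (one key per prompt) and count wins."""
    wins = {}
    for label, key in zip(votes, keys):
        model = key[label]
        wins[model] = wins.get(model, 0) + 1
    return wins
```

Passing an explicit `random.Random(seed)` makes the shuffle reproducible, so you can regenerate the same A/B sheets later if a reviewer's result needs to be audited.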

Author: APIYI Team — Focused on AI Large Language Model API proxy services and image generation model aggregation.