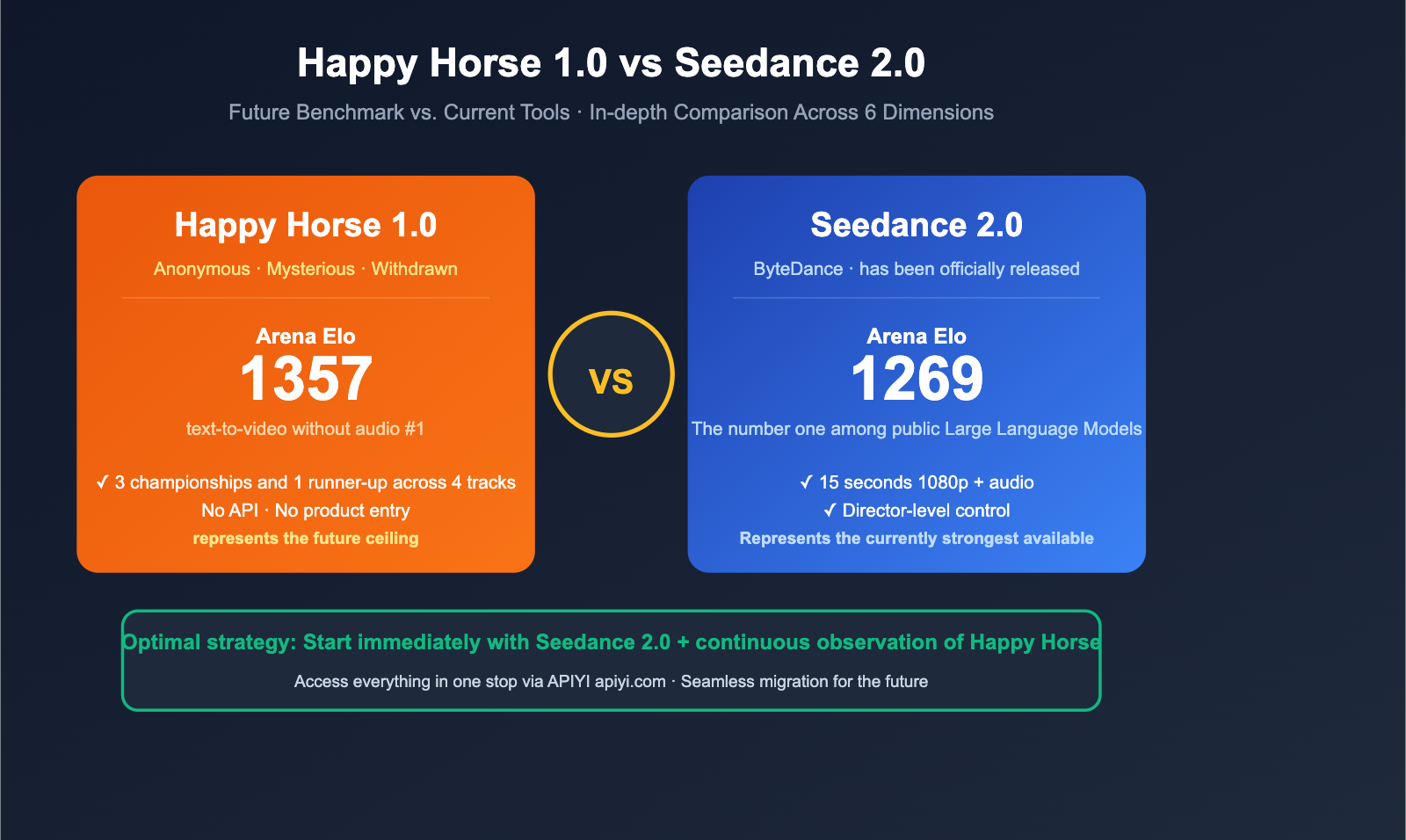

On April 7, 2026, a mysterious video model called Happy Horse 1.0 dropped into the Artificial Analysis Video Arena, instantly dethroning the previous champion, Seedance 2.0. One is an anonymous, "generation-gap" dark horse with almost no public information; the other is a "director-level" tool-oriented model officially released by ByteDance in March. So, which one is actually worth your time today?

Core Value: After reading this, you'll understand the real gap between Happy Horse 1.0 and Seedance 2.0, know which one to choose for different scenarios, and learn how to get your project up and running with Seedance 2.0 while waiting for a public release of Happy Horse.

Quick Look: Happy Horse 1.0 vs. Seedance 2.0 Core Differences

Let's get straight to the point: Happy Horse 1.0 is stronger on the Elo leaderboard, but Seedance 2.0 is the only one of the two you can actually use today. The former disappeared from the Arena after just 72 hours and has no API or product access; the latter is already stable and available on Dreamina, CapCut, and fal.ai.

| Comparison Dimension | Happy Horse 1.0 | Seedance 2.0 |
|---|---|---|
| Developer | Anonymous (pseudonymous) | ByteDance |
| Release Date | April 7, 2026 (Arena drop) | March 2026 (Official release) |
| Current Status | ❌ Removed from leaderboard, no API | ✅ Available on Dreamina / CapCut / fal.ai |
| T2V Elo (no audio) | 1332-1357 🥇 | 1269 (former #1) |
| I2V Elo (no audio) | 1391-1402 🥇 | 1351 |
| Architecture Hypothesis | Transfusion single-stream multimodal | Unified Multimodal Architecture |
| Audio-Video Integration | ✅ Native | ✅ Native + multilingual dialogue |
| Max Video Length | Not disclosed | 15 seconds 1080p |
| Controllability | Chunk-level (speculated) | Director-level (9 images + 3 videos + 3 audio references) |
| Open Source | ❌ Closed source | ❌ Closed source |

💡 Quick Takeaway: If you need to start a project today, Seedance 2.0 is the clear choice. If you're doing model research and want to understand the ceiling of video generation, keep an eye on the upcoming official release of Happy Horse. If you need to integrate Seedance 2.0 into your workflow, you can use the APIYI (apiyi.com) unified interface to avoid switching back and forth between Dreamina, CapCut, and fal.ai.

Happy Horse 1.0 vs Seedance 2.0: A Deep Dive into Key Differences

1. Elo Performance: Happy Horse Leads by 60+ Points

The Artificial Analysis Video Arena is currently the most authoritative blind-test leaderboard for AI video generation, relying on real user votes and Elo ratings. Here’s how the two models stack up:

| Category | Happy Horse 1.0 | Seedance 2.0 | Gap |
|---|---|---|---|
| Text-to-Video (No Audio) | 1332-1357 🥇 | 1269 | +63 ~ +88 |
| Image-to-Video (No Audio) | 1391-1402 🥇 | 1351 | +40 ~ +51 |
| Text-to-Video (With Audio) | 1205-1215 (#2) | — | — |
| Image-to-Video (With Audio) | 1160 🥇 | — | — |

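Under the standard Elo formula, a 60-point lead corresponds to an expected win rate of roughly 58.5% in head-to-head blind tests, and the 63 to 88 point gap in the T2V (no audio) category works out to roughly 59% to 62%. That is a consistent, clearly measurable preference, which is why Happy Horse’s lead over Seedance 2.0 gets described as a "generational gap." However, keep in mind: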

- Seedance 2.0 surpassed Google Veo 3, OpenAI Sora 2, and Runway Gen-4.5 with 1269 points when it launched in March 2026.

- Seedance 2.0 remains the #1 publicly available model.

- Happy Horse is an unreleased model; being theoretically #1 doesn't mean it's practically usable.
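To make the Elo arithmetic above concrete, here is a minimal sketch that converts a rating gap into an expected win rate. It assumes the Arena uses the conventional logistic Elo curve with a 400-point scale; the function name and the `scale` parameter are illustrative choices, not something documented by Artificial Analysis.

```python
def elo_expected_win_rate(rating_gap: float, scale: float = 400.0) -> float:
    """Expected win rate of the higher-rated model under the standard Elo formula.

    Assumption: a 400-point logistic scale, as in conventional Elo systems.
    """
    return 1.0 / (1.0 + 10.0 ** (-rating_gap / scale))


# Gaps taken from the comparison table (Happy Horse 1.0 minus Seedance 2.0).
gaps = {
    "T2V no audio (low end)": 63,
    "T2V no audio (high end)": 88,
    "I2V no audio (low end)": 40,
    "I2V no audio (high end)": 51,
}

for label, gap in gaps.items():
    rate = elo_expected_win_rate(gap)
    print(f"{label}: +{gap} Elo -> {rate:.1%} expected win rate")
```

Read this way, the T2V gap means Happy Horse would be preferred in roughly six out of ten blind matchups, while the smaller I2V gap lands closer to 56% to 57%.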

2. Usability: Seedance 2.0 Wins Hands Down

This is the biggest difference between the two, and the one 90% of users should care about.

| Usability Metric | Happy Horse 1.0 | Seedance 2.0 |
|---|---|---|
| Official API | ❌ None | ✅ Dreamina API |
| Third-party Platforms | ❌ None | ✅ fal.ai / API proxy service |
| Online Access | ❌ Withdrawn | ✅ Dreamina / CapCut |
| Documentation | ❌ None | ✅ Comprehensive |
| Commercial License | ❌ Unclear | ✅ Clear |
| Pricing | ❌ Unknown | ✅ Public |

Happy Horse 1.0 appeared on Artificial Analysis for a fleeting 72 hours and then vanished. It has no API, no product interface, no GitHub, and no Hugging Face presence. Its "top spot" currently exists only in screenshots of the Elo leaderboard.

Seedance 2.0, on the other hand, is a fully released product from ByteDance, providing stable services via Dreamina and CapCut, with API access available through third-party platforms. It’s ready for production today.

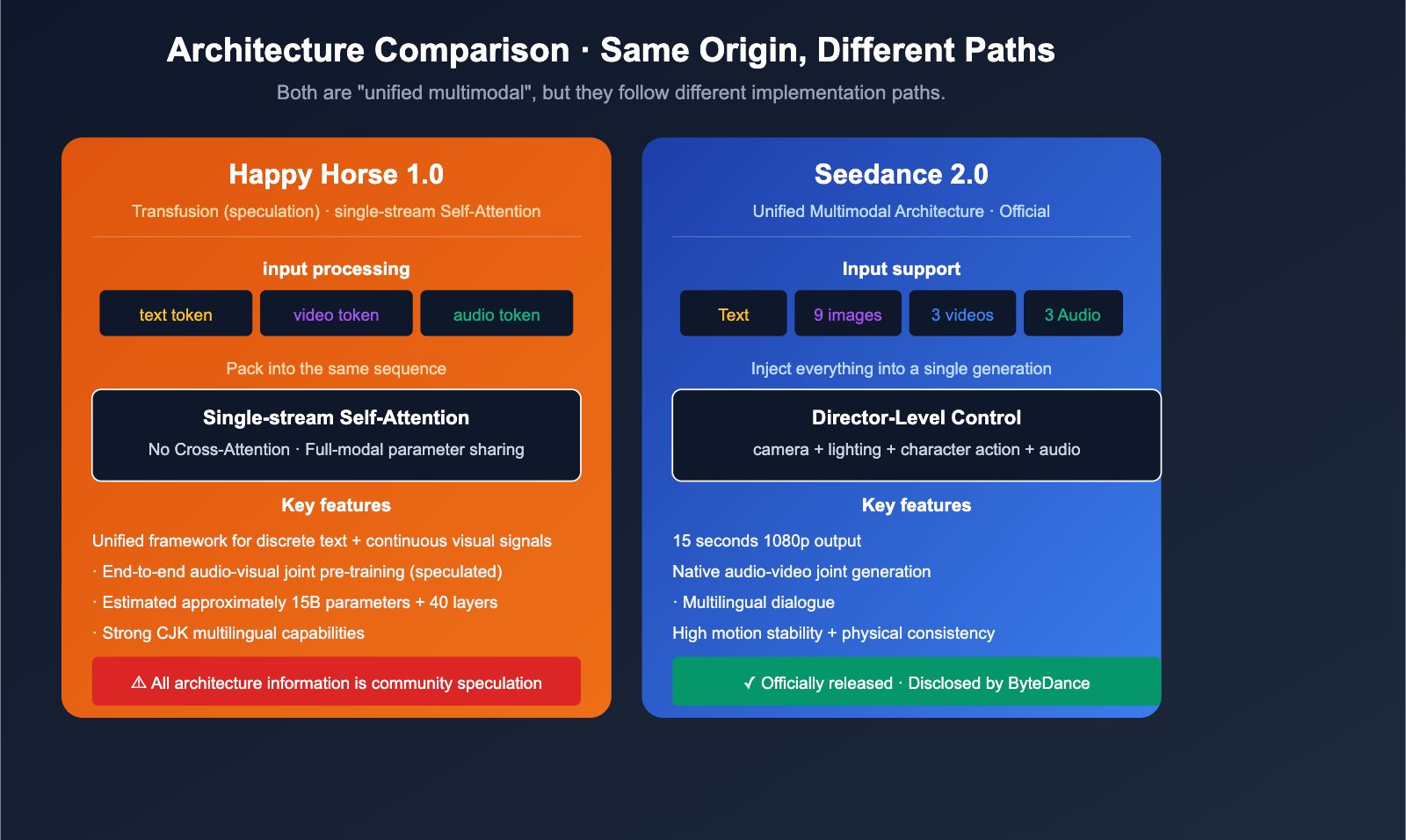

3. Architecture: Both are "Unified Multimodal," but the Paths Differ

Both belong to the "Unified Multimodal Architecture" family, but their implementation paths differ.

Happy Horse 1.0 (Speculated):

- Industry consensus suggests a Transfusion-style architecture.

- Joint processing of discrete text modeling and continuous visual signals in a single framework.

- Single-stream Self-Attention, no Cross-Attention.

- Text/video/audio tokens are packed into a single sequence for joint denoising.

Seedance 2.0 (Officially Disclosed):

- Unified Multimodal Architecture.

- Accepts four types of input: text, image, audio, and video.

- Native support for multi-language dialogue generation.

- "Director-level" control: A single generation can incorporate up to 9 reference images, 3 video clips, and 3 audio clips.

Note: Since Happy Horse has no public research paper, all architectural details are community speculation. Seedance 2.0’s architecture is based on official disclosures from ByteDance and is more reliable.

4. Input Control: Seedance 2.0 is a "Director's Tool"

This is where Seedance 2.0 truly shines. It doesn't position itself as a simple "prompt-to-video" generator, but as a director's workstation:

| Input Type | Seedance 2.0 Support | Happy Horse 1.0 |
|---|---|---|
| Text prompt | ✅ | ✅ |
| Reference images | 9 images | Unknown (likely supported) |
| Reference video clips | 3 clips | Unknown |
| Reference audio clips | 3 clips | Unknown |
| Camera motion control | ✅ | Unknown |
| Lighting control | ✅ | Unknown |
| Character action control | ✅ | Unknown |
| Audio prompts | ✅ | Unknown |

ByteDance calls this "director-level control." It means you're no longer just "describing a scene"—you're "directing a shoot." This is a massive advantage for creators of short dramas, commercials, and music videos.

Because Happy Horse 1.0 lacks any official documentation, these capabilities remain a complete black box.
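If you prepare reference assets in code, it can help to check them against the per-generation limits quoted above (9 images, 3 video clips, 3 audio clips) before submitting anything. The sketch below is only an illustration: `ShotRequest` and its field names are hypothetical and do not mirror any official Seedance 2.0 or Dreamina request schema.

```python
from dataclasses import dataclass, field

# Per-generation reference limits quoted in this article for Seedance 2.0.
MAX_REFERENCES = {"images": 9, "videos": 3, "audio": 3}


@dataclass
class ShotRequest:
    """Hypothetical container for one generation request (not an official schema)."""

    prompt: str
    images: list[str] = field(default_factory=list)
    videos: list[str] = field(default_factory=list)
    audio: list[str] = field(default_factory=list)

    def validate(self) -> list[str]:
        """Return human-readable problems; an empty list means the request fits."""
        problems = []
        if not self.prompt.strip():
            problems.append("prompt is empty")
        for kind, limit in MAX_REFERENCES.items():
            count = len(getattr(self, kind))
            if count > limit:
                problems.append(f"{kind}: {count} supplied, limit is {limit}")
        return problems


shot = ShotRequest(
    prompt="Slow dolly-in on a ceramic mug, warm morning light",
    images=[f"ref_{i}.png" for i in range(10)],  # one too many on purpose
    videos=["motion_ref.mp4"],
)
print(shot.validate())  # ['images: 10 supplied, limit is 9']
```

Catching an over-limit reference set locally is cheaper than discovering it after an upload or a failed generation.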

5. Video Length and Resolution

| Spec | Seedance 2.0 | Happy Horse 1.0 |
|---|---|---|
| Max Duration | 15 seconds | Unreleased |
| Resolution | 1080p | Unreleased |
| Frame Rate | Industry standard | Unreleased |
| Audio Quality | Cinema-grade | Unreleased |

Seedance 2.0’s combination of "15 seconds, 1080p, and native audio" is the most practical spec set for commercial video models today—it's essentially "ready to use" for short-form video, advertising, and e-commerce.

6. Ecosystem and Toolchain

| Ecosystem Metric | Seedance 2.0 | Happy Horse 1.0 |
|---|---|---|
| Official Products | Dreamina + CapCut | None |
| Third-party Platforms | fal.ai, etc. | None |
| API Proxy | Available via APIYI (apiyi.com) | Unavailable |
| Chinese Community | Extensive tutorials | Nearly zero |
| Templates/Presets | Built into Dreamina | None |

🎯 Recommendation: The primary value of Happy Horse 1.0 right now is "showing the industry where the ceiling can go," while Seedance 2.0 is "something you can use to deliver projects today." We recommend using Seedance 2.0 as your primary production tool and keeping an eye on Happy Horse as a future development. If you need stable access to top-tier international video models like Seedance 2.0, Veo 3, or Sora 2 within China, you can access them all via APIYI (apiyi.com).

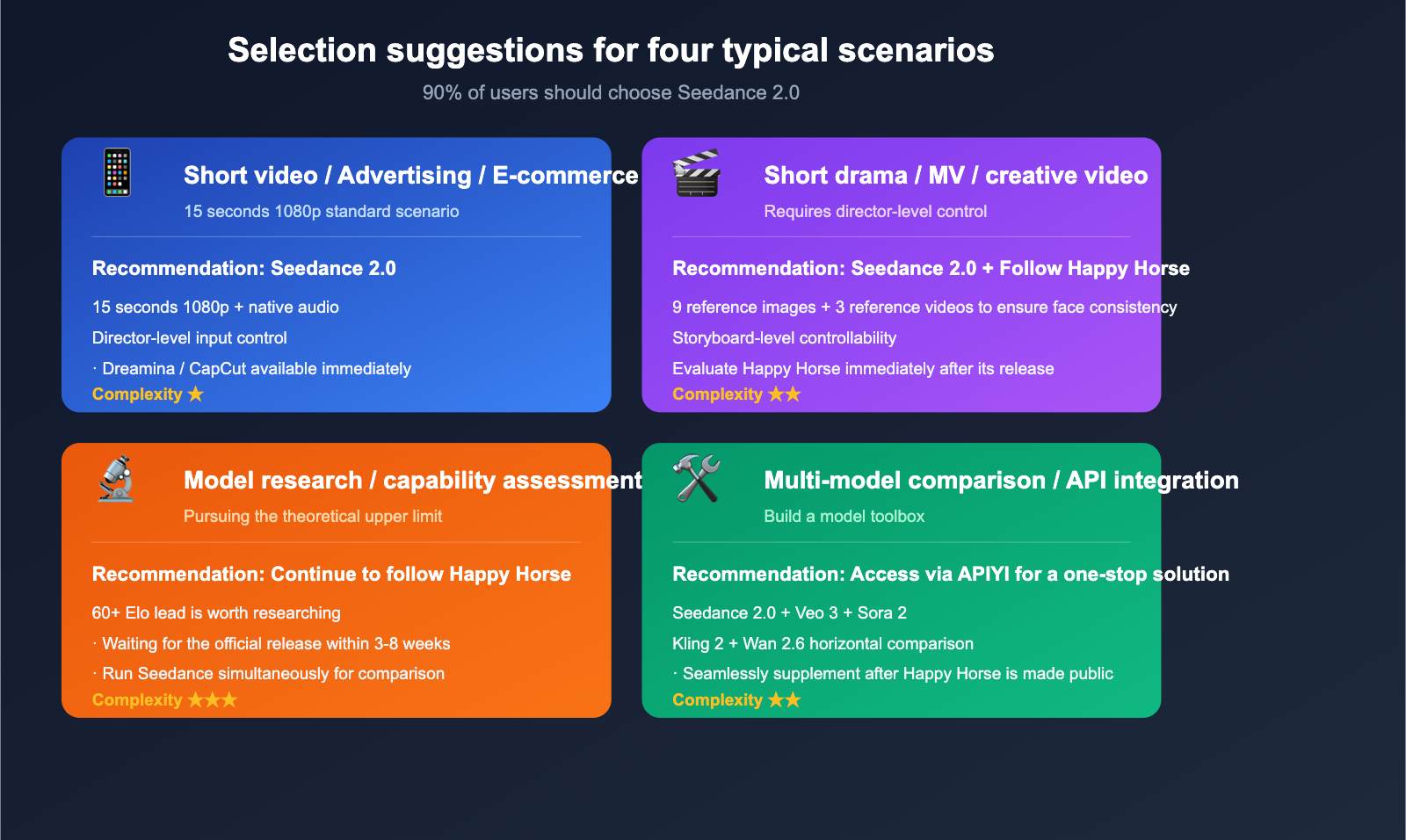

Happy Horse 1.0 vs. Seedance 2.0 Scenario Recommendations

Scenario 1: Short Video / Advertising / E-commerce Assets

Recommendation: Seedance 2.0

Why: With 15-second 1080p output, native audio, and director-level input control, it’s practically tailor-made for this. You can access it directly via Dreamina and CapCut without any complex engineering integration.

Scenario 2: Short Dramas / MV / Creative Video

Recommendation: Seedance 2.0 (Primary) + Keep an eye on Happy Horse

Why: Short dramas demand "director-level control" and scene consistency. Seedance 2.0’s ability to use 9 images and 3 video references is currently the best in class. While Happy Horse boasts superior image quality, it’s not currently available for use, so keep an eye out for its official release.

Scenario 3: Model Research / Pushing the Limits

Recommendation: Keep track of Happy Horse 1.0 release updates

Why: Happy Horse’s 60+ point lead on the Arena suggests it represents the next generation of image quality. For researchers, it’s a must-watch, but you’ll need to wait for its public release. No timeline has been announced; the 3-8 week estimate is based only on how previous anonymous arena models were released.

Scenario 4: Cross-Model Comparative Testing

Recommendation: Run a side-by-side comparison with Seedance 2.0, Veo 3, Sora 2, and Wan 2.6.

Why: Any serious video generation project requires testing 3-5 models to find the best fit for your specific needs. Happy Horse is currently sitting this one out. The most pragmatic approach is to use APIYI (apiyi.com) to integrate models like Seedance 2.0, Veo 3, Sora 2, Kling 2, and Wan 2.6 into a single "model toolkit." Once Happy Horse goes public, you can add it to your stack immediately.

Happy Horse 1.0 vs. Seedance 2.0 Decision Guide

Use this decision table to make your choice in 30 seconds.

| Your Need | Recommended Solution |
|---|---|
| I need to deliver a project today | Seedance 2.0 |
| I need 15s 1080p + audio | Seedance 2.0 |
| I need "director-level" fine-grained control | Seedance 2.0 |
| I need the absolute limit of image quality | Wait for Happy Horse official release |
| I'm doing technical research | Keep following Happy Horse |
| I need to integrate via API into my product | Seedance 2.0 + APIYI (apiyi.com) |
| I need infinite-length video | Neither; check out MAGI-1 |
| I have trouble accessing overseas models | Either + APIYI (apiyi.com) API proxy service |

💡 The Smart Move: 90% of real-world projects should go with Seedance 2.0, while the remaining 10% can wait for the official Happy Horse release. These aren't really competitors—Happy Horse is the "future," while Seedance 2.0 is the "now."

Happy Horse 1.0 vs. Seedance 2.0 FAQ

Q1: Is Happy Horse 1.0 really that much better than Seedance 2.0?

In the blind Elo ratings on the Artificial Analysis Video Arena, Happy Horse 1.0 leads Seedance 2.0 by about 63 to 88 points in the T2V (text-to-video) no-audio category. Under the standard Elo formula, that corresponds to an expected win rate of roughly 59% to 62% in blind tests, which is a significant lead. However, keep three things in mind: (1) Elo is a single-dimensional overall preference score and doesn't necessarily reflect your specific use case; (2) Happy Horse has no product or API, so this "lead" currently only exists on a leaderboard; and (3) Seedance 2.0 has an exclusive advantage in "director-level control," a dimension that the Arena doesn't currently measure.

Q2: When will Happy Horse 1.0 be available?

It's uncertain. It appeared out of nowhere on the Arena on April 7th and vanished from the leaderboard 72 hours later, with no official timeline since. Based on the release patterns of similar anonymous models in the past (like early variants of GPT-4 Turbo or Claude 3.5 Sonnet on LMArena), there is usually an official product release within 3–8 weeks. Until then, there is no public access point.

Q3: Can I use Seedance 2.0 in China?

Yes. Seedance 2.0 is a product from ByteDance and natively supports Chinese environments on platforms like Dreamina and CapCut. If you need to integrate it into your own system via API, you can also access it through third-party API proxy services. We recommend using APIYI (apiyi.com) to access Seedance 2.0, Veo 3, Sora 2, and other video models through a single platform, saving you the hassle of switching between multiple account systems.

Q4: Should I wait for Happy Horse to be released before starting my video project?

We don't recommend waiting. There are two reasons: (1) When Happy Horse will be released is a total black box—it could be 3 weeks or 3 months; (2) The engineering pipeline for video generation (prompt engineering, reference image preparation, and post-processing) is decoupled from the model itself. The workflow you build today with Seedance 2.0 will be almost seamlessly transferable to Happy Horse later. The best strategy is to start working with Seedance 2.0 now and switch later if needed.
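One way to keep a workflow transferable is to put the model call behind a small interface so that prompt construction and post-processing never depend on a specific provider. The sketch below is illustrative only: `VideoBackend`, `StubBackend`, `build_prompt`, and `run_pipeline` are hypothetical names, and the stub returns a fake file path instead of calling any real API.

```python
from typing import Protocol


class VideoBackend(Protocol):
    """Hypothetical interface; real provider SDKs will look different."""

    name: str

    def generate(self, prompt: str, references: list[str]) -> str: ...


class StubBackend:
    """Stand-in that returns a fake output path instead of calling any API."""

    def __init__(self, name: str) -> None:
        self.name = name

    def generate(self, prompt: str, references: list[str]) -> str:
        return f"{self.name}/clip_{len(references)}refs.mp4"


def build_prompt(subject: str, camera: str, lighting: str) -> str:
    """Model-independent step: assemble a structured prompt."""
    return f"{subject}. Camera: {camera}. Lighting: {lighting}."


def run_pipeline(backend: VideoBackend, subject: str, references: list[str]) -> str:
    """Prompt building and post-processing stay the same for every backend."""
    prompt = build_prompt(subject, camera="slow pan", lighting="soft daylight")
    clip = backend.generate(prompt, references)
    return clip  # trimming, grading, and splicing would follow here


print(run_pipeline(StubBackend("seedance-2.0"), "A runner at dawn", ["a.png", "b.png"]))
```

With this shape, trying a different model means writing one new backend class; the prompt templates, reference preparation, and post-processing code stay untouched.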

Q5: Do both models support Chinese prompts?

Seedance 2.0 natively supports Chinese prompts and has a better understanding of Chinese contexts—a natural advantage for a Chinese company like ByteDance. Since Happy Horse 1.0 has no public API, we can't verify its Chinese support, though the community speculates it should support Chinese based on its multilingual capabilities.

Q6: If I need to deliver a 30-second commercial today, how should I use Seedance 2.0?

The most practical approach is: (1) Break the 30 seconds into two 15-second segments and generate them separately using Seedance 2.0, since 15 seconds is its maximum clip length; (2) Prepare up to 9 reference images and 3 reference videos for each segment, using director-level control to keep scenes consistent across both; (3) Use CapCut for post-production splicing and transitions; (4) Automate batch generation by connecting to the Seedance 2.0 API via APIYI (apiyi.com). If you're looking for the absolute best visual quality, you can run Veo 3 or Sora 2 in parallel to compare and choose the best output.
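The splitting step can be sketched as a small planner that divides a target runtime into equal windows no longer than the clip cap. The 15-second default comes from the Seedance 2.0 spec quoted in this article; the function name and the equal-length strategy are assumptions made for illustration.

```python
import math


def plan_segments(total_seconds: float, max_clip_seconds: float = 15.0) -> list[tuple[float, float]]:
    """Split a target runtime into equal (start, end) windows within the clip cap."""
    if total_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    count = math.ceil(total_seconds / max_clip_seconds)
    length = total_seconds / count
    return [(round(i * length, 2), round((i + 1) * length, 2)) for i in range(count)]


print(plan_segments(30))  # two 15-second windows
print(plan_segments(40))  # three windows of about 13.33 seconds each
```

Equal-length windows avoid ending on a very short final clip; if your edit has natural scene breaks, cutting at those points instead is usually the better choice.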

Summary

The comparison between Happy Horse 1.0 and Seedance 2.0 is essentially a conversation between the "benchmark of the future" and the "tool of today."

Two key facts:

- Happy Horse 1.0 truly represents the next generation of visual quality: A 60+ point Elo lead isn't a fluke; it shows that the ceiling for AI video generation is still very high.

- Seedance 2.0 is the top-tier choice that is available, usable, and worth using today: With 15-second 1080p output, native audio, and director-level control, no other publicly available model currently ranks above it in the Arena.

Optimal Strategy:

- For production projects: Use Seedance 2.0 immediately to build your workflow.

- Stay informed: Evaluate a migration as soon as Happy Horse is officially released.

- Cross-comparison: Use APIYI (apiyi.com) to access Seedance 2.0, Veo 3, Sora 2, Kling 2, and Wan 2.6 simultaneously to build your own "model toolbox."

🚀 Actionable Advice: If you want to integrate Seedance 2.0 today, the fastest path is: First, register at APIYI (apiyi.com) and get an API key; second, call the Seedance 2.0 API via the unified OpenAI-compatible interface; third, prepare up to 9 reference images and 3 reference videos to start your first "director-level" creation. If Happy Horse is later offered through the same interface, migrating should mostly be a matter of switching the model name, with little or no code change.

Author: APIYI Team — Dedicated to providing developers with stable access to mainstream AI models. Visit apiyi.com to learn more.

References

- DataLearner – Happy Horse 1.0 Technical Profile
  - Link: datalearner.com/ai-models/pretrained-models/happy-horse
  - Description: A summary of known technical information for Happy Horse.
- ByteDance Seed – Seedance 2.0 Official Page
  - Link: seed.bytedance.com/en/seedance2_0
  - Description: Official introduction to the architecture, capabilities, and positioning.
- MindStudio – Seedance 2.0 Workflow Guide
  - Link: mindstudio.ai/blog/what-is-seedance-2-release-guide
  - Description: A detailed guide on director-level control using 9 images, 3 videos, and 3 audio clips.
- fal.ai – Seedance 2.0 Integration Page
  - Link: fal.ai/seedance-2.0
  - Description: Documentation for third-party API integration.
- BuildFastWithAI – Seedance 2.0 Review
  - Link: buildfastwithai.com/blogs/seedance-2-bytedance-ai-video-2026
  - Description: A comparative analysis against Veo 3, Sora 2, and Runway.
- Artificial Analysis – Video Arena Leaderboard
  - Link: artificialanalysis.ai/video/leaderboard/text-to-video
  - Description: Raw Elo data for Happy Horse and Seedance 2.0.