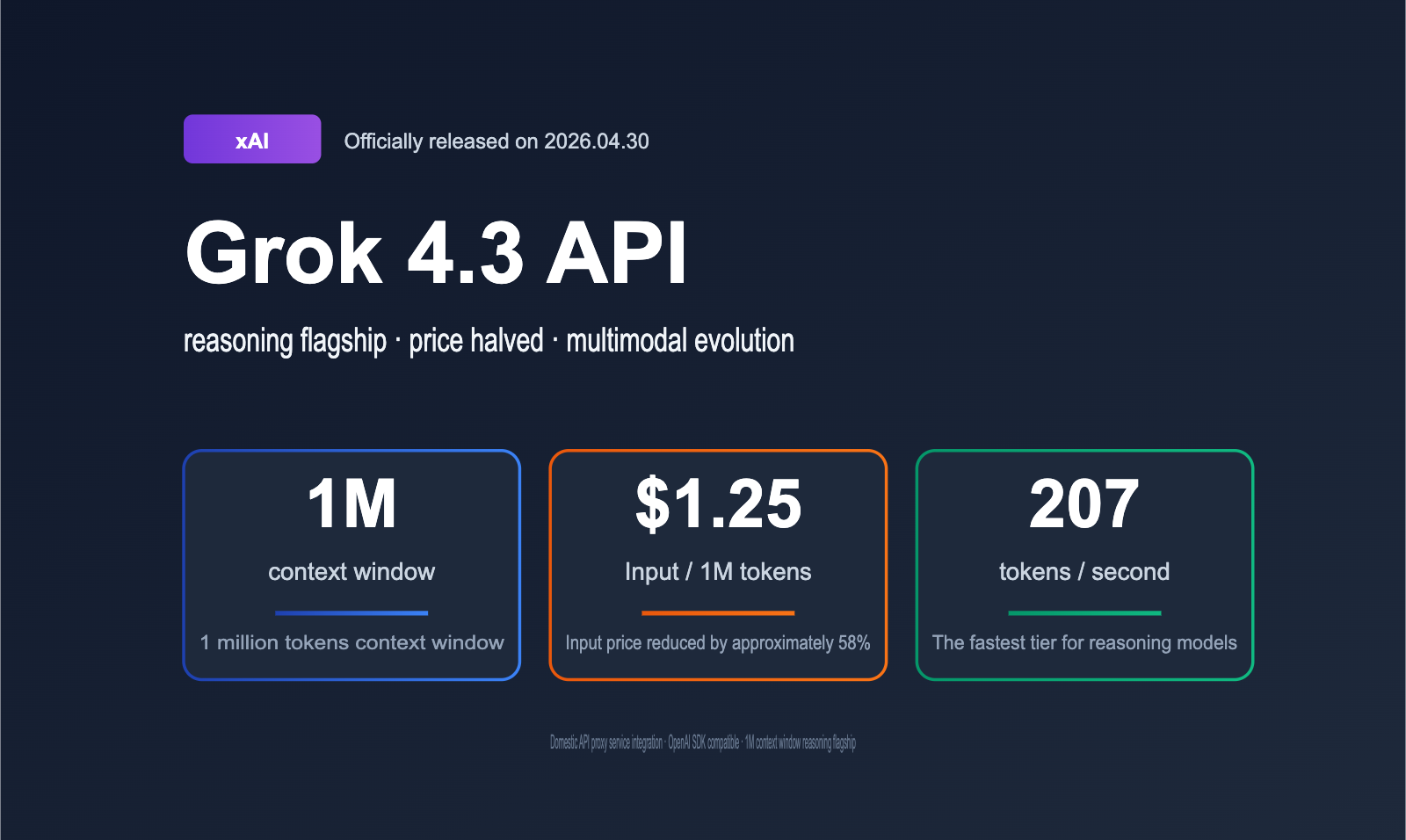

On April 30, 2026, xAI completed the full rollout of the Grok 4.3 API. By cutting input prices by roughly 58% (from $3.00 to $1.25 per million tokens), expanding the context window to 1M tokens, and introducing native video input for the first time, the release has effectively rewritten the cost model for agentic applications. This article breaks down the core upgrades, pricing details, performance benchmarks, and implementation paths for the Grok 4.3 API.

Core Value: Get up to speed in 3 minutes on the key facts about the Grok 4.3 API, its industry significance, and the fastest way to integrate it from within China via an API proxy service.

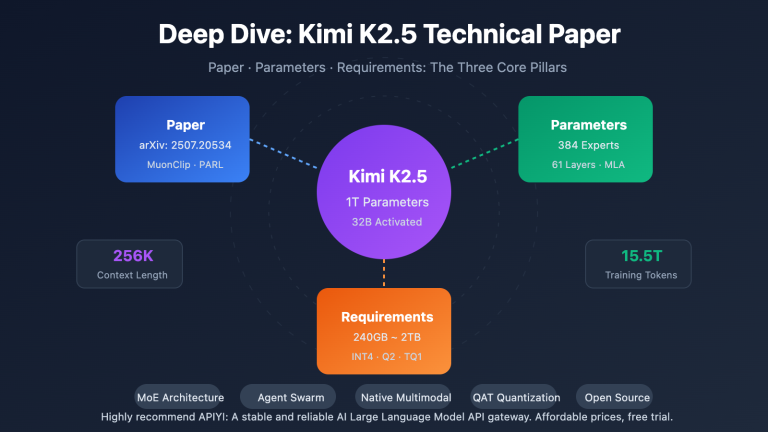

Quick Overview of Grok 4.3 API

xAI's latest update is a "price reduction + capacity expansion + multimodal" power move. Just four months after the previous Grok 4.20, xAI has used a major version iteration to shift the cost curve for reasoning models down a notch. Let's align all the key parameters in a table before we dive into the details.

Before looking at the table, it's worth clarifying where Grok 4.3 sits in the xAI product matrix. Currently, xAI offers three tiers of API models: Grok 4 Fast, which focuses on "extreme cost-effectiveness"; Grok 4.3, the "reasoning flagship"; and Grok Code Fast 1, which is dedicated to coding tasks. Grok 4.3 is the model xAI strongly recommends for all API users as the default, explicitly stated in the documentation as their "smartest and fastest" flagship model.

Key Parameters of Grok 4.3 API

| Feature | Details |
|---|---|
| Official Release | April 30, 2026 (Full API availability) |
| Beta Release | April 17, 2026 (For SuperGrok Heavy users) |
| Model ID | grok-4.3 |
| Context Window | 1,000,000 tokens (1M) |
| Output Speed | ~207 tokens/second |
| Input Price | $1.25 / million tokens |
| Output Price | $2.50 / million tokens |
| Supported Modalities | Text, Image (single image ≤ 20 MiB), Video (New) |
| Reasoning Mode | Enabled by default (Reasoning Always-On) |
| Knowledge Cutoff | November 2024 |
| Batch API Pricing | 50–80% of standard price, i.e. 20–50% off (24-hour processing) |
| AA Intelligence Index | 53 (Significantly higher than the 34 median for this price point) |

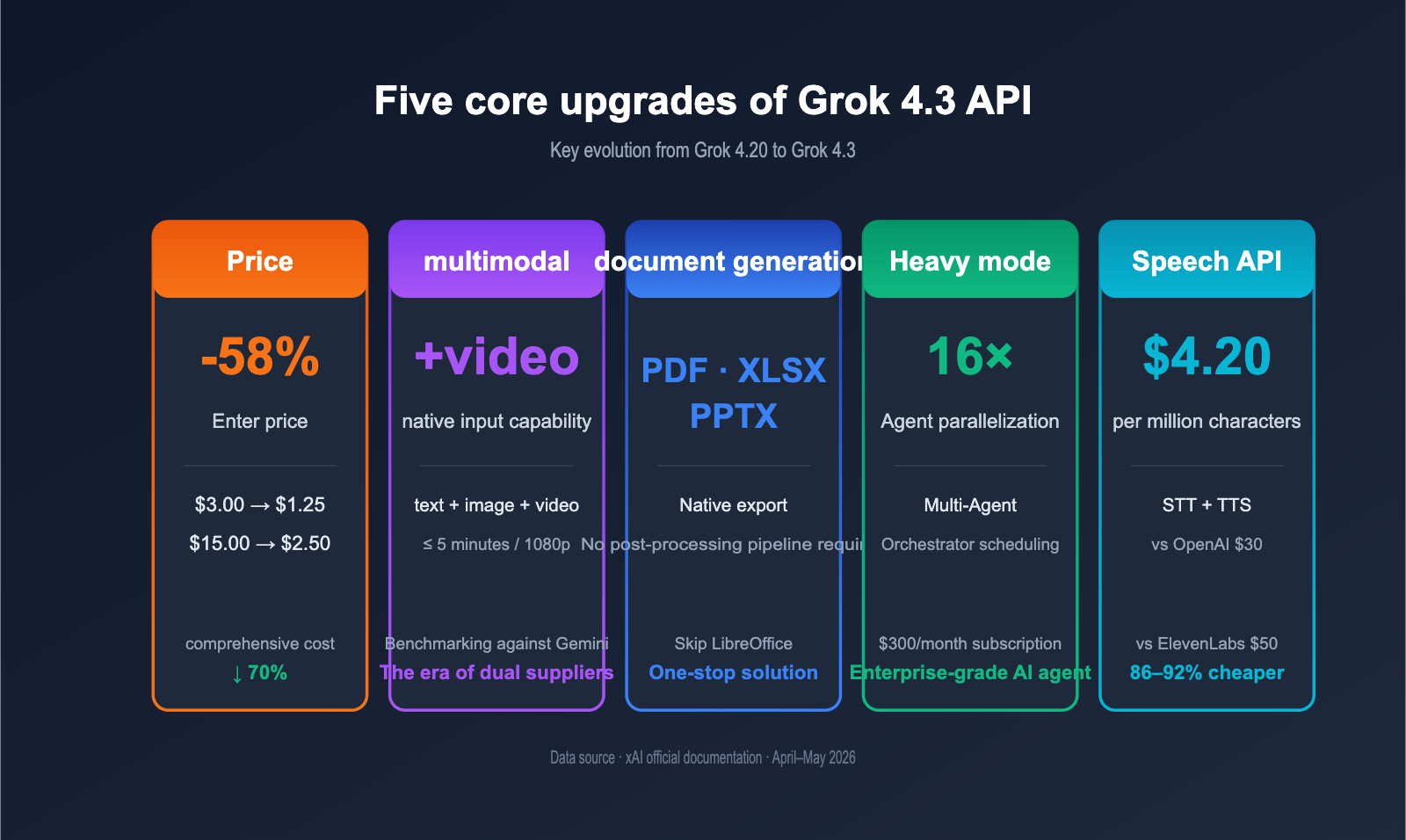

Five Core Upgrades of Grok 4.3 API

xAI's update goes beyond just pricing. We've condensed all the changes into five dimensions to help you get up to speed quickly.

| Upgrade Area | Grok 4.20 | Grok 4.3 | Impact |
|---|---|---|---|
| Pricing (input / output, per million tokens) | $3.00 / $15.00 | $1.25 / $2.50 | ~58% lower input cost, ~83% lower output cost |
| Multimodal | Text + Image | Text + Image + Video | Agents can watch videos directly |
| Document Gen | Text only | Native PDF/XLSX/PPTX output | Eliminates post-processing pipeline |
| Heavy System | Single Agent | 16-Agent Parallel Scheduling | Complex tasks completed in one run |
| Voice API | No standalone API | STT/TTS API $4.20/million chars | 86–92% cheaper than OpenAI / ElevenLabs |

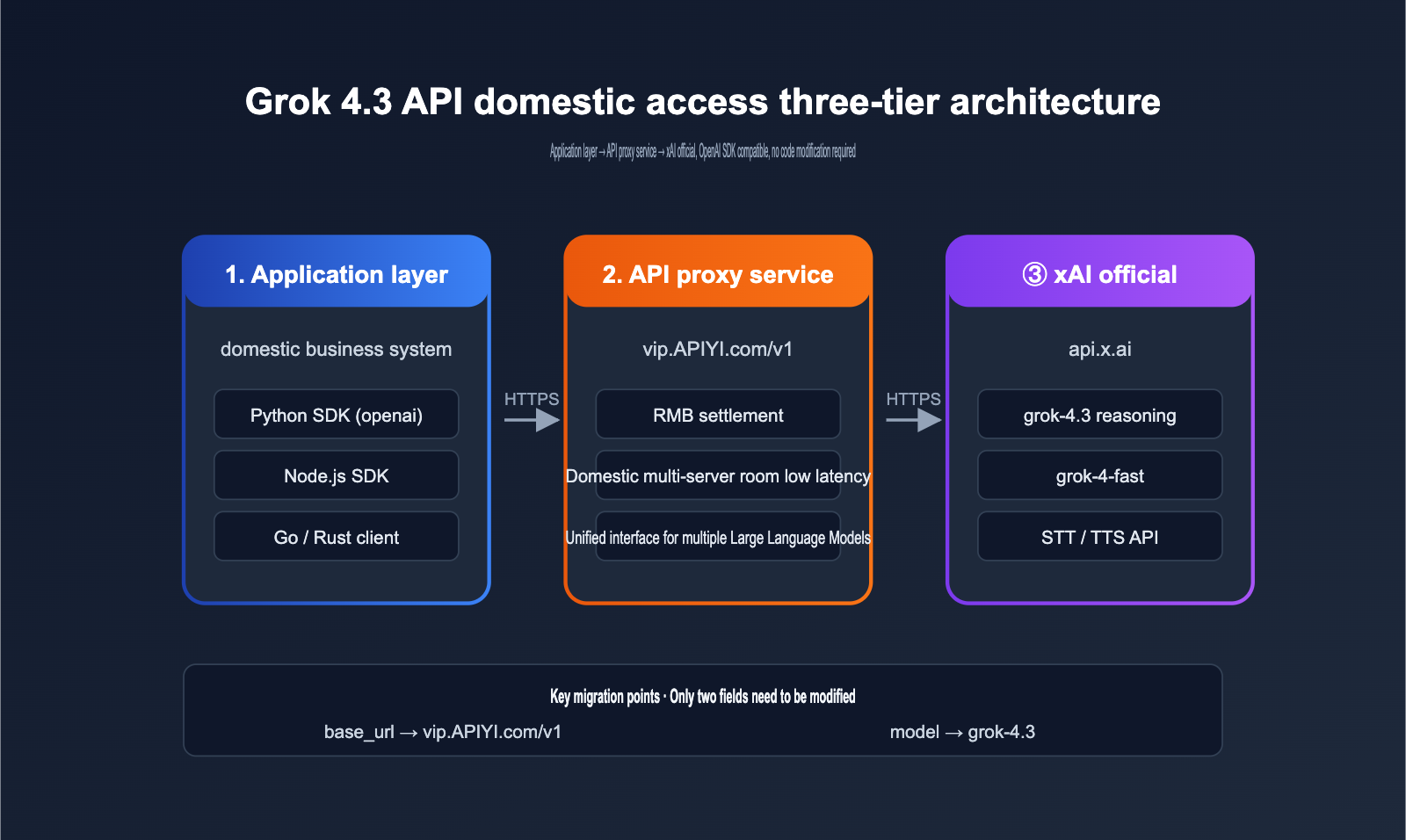

🎯 Quick Trial Tip: For developers in China, using the APIYI (apiyi.com) API proxy service is one of the most reliable ways to access the Grok 4.3 API. Simply set the base_url to https://vip.apiyi.com/v1 and the model field to grok-4.3. The response speed matches the official API, and it supports OpenAI SDK-compatible calls.

Deep Dive into Grok 4.3 API Pricing

Pricing is the most talked-about aspect of this release. Let's break down the bill across four key areas: unit price, batch, tool invocation, and subscriptions.

Grok 4.3 API Standard Pricing

xAI's official price list clearly outlines token-level rates. The following data is sourced from official xAI documentation and real-time quotes from OpenRouter.

| Item | Unit Price | Notes |
|---|---|---|
| Input tokens | $1.25 / million | Includes tokenized images and video frames |
| Output tokens | $2.50 / million | Includes output tokens from reasoning steps |
| Cached input tokens | $0.31 / million | Billed at this rate when cache is hit |
| Image input | Token-based | Max 20 MiB per image |
| Video input | Frame-based tokens | New capability |

Assuming a standard 3:1 input-to-output ratio, the blended price for the Grok 4.3 API is approximately $1.56 per million tokens. Compared to the GPT-5 series and Claude Opus 4.7, this sits in the most affordable tier for reasoning models.
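The blended figure is a weighted average of the two list prices. A minimal sketch of that arithmetic follows; the function name and the default 3:1 weights are illustrative choices, not part of any xAI tooling.

```python
def blended_price(input_price: float, output_price: float,
                  input_parts: float = 3.0, output_parts: float = 1.0) -> float:
    """Weighted average price per million tokens for a given input:output mix."""
    total_parts = input_parts + output_parts
    return (input_price * input_parts + output_price * output_parts) / total_parts


# Grok 4.3 list prices quoted in this article (USD per million tokens)
print(round(blended_price(1.25, 2.50), 4))  # 1.5625
```

Changing the weights shows how sensitive the blended price is to output-heavy workloads: at a 1:1 mix the same prices average to $1.875 per million tokens.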

Grok 4.3 Server-Side Tool Invocation Pricing

Grok 4.3 API features built-in server-side tools. The following three tool categories are billed per invocation, separate from token costs.

| Tool Type | Price | Use Case |
|---|---|---|
| Web Search | $5 / 1k calls | Real-time web retrieval |
| X (Twitter) Search | $5 / 1k calls | X platform timeline retrieval |
| Code Execution | $5 / 1k calls | Sandbox code execution |

💡 Cost Optimization Tip: For medium-concurrency scenarios, we recommend mixing Grok 4.3 with Grok 4 Fast. Route simple queries to 4 Fast (at 1/4 the cost of 4.3) and reserve complex reasoning tasks for 4.3. The APIYI (apiyi.com) platform allows you to switch between these two models under the same base_url without needing to rewrite your authentication logic.

Grok 4.3 SuperGrok Heavy Subscription

Beyond token-based API billing, xAI has introduced the SuperGrok Heavy subscription for power users.

| Subscription Tier | Monthly Fee | Includes |
|---|---|---|
| Grok Free | $0 | Rate-limited access to Grok 4.3 |
| SuperGrok | $30 / month | Higher limits + video input |
| SuperGrok Heavy | $300 / month | 16-Agent Heavy mode + priority rate limits + early access features |

While the price is 50% higher than ChatGPT Pro ($200/month) or Claude Max ($200/month), the 16-Agent Heavy mode is arguably the closest thing to an "enterprise-grade agent cluster" currently available in a public model.

Grok 4.3 API Real-World Cost Estimation

Many teams want to know exactly how much they'll save by switching to Grok 4.3. We've estimated costs for three typical business scales, assuming a 3:1 input-to-output ratio.

| Business Scale | Monthly Tokens | Grok 4.3 Monthly Fee | Claude Opus 4.7 Monthly Fee | Savings |
|---|---|---|---|---|
| Individual Developer | 10M | ~$15 | ~$185 | -92% |
| Mid-sized SaaS | 500M | ~$780 | ~$9,200 | -92% |
| Enterprise Support | 5,000M | ~$7,800 | ~$92,000 | -92% |

Note: When Claude Opus 4.7's prompt caching hit rate is high, actual costs can drop by another 30–50%. Grok 4.3 also supports cached input discounts ($0.31 / million tokens). The table above shows "raw prices without caching," so the real-world gap might be slightly smaller, but it remains in the 6–8x range.
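The Grok 4.3 column of the table can be reproduced from the list prices. The sketch below assumes the same 3:1 split (75% input tokens) and no caching; the function and constant names are illustrative.

```python
GROK_INPUT = 1.25   # USD per million input tokens, from the pricing table
GROK_OUTPUT = 2.50  # USD per million output tokens, from the pricing table


def monthly_cost(total_tokens_m: float, input_share: float = 0.75) -> float:
    """Uncached monthly cost in USD for a volume given in millions of tokens."""
    input_m = total_tokens_m * input_share
    output_m = total_tokens_m - input_m
    return input_m * GROK_INPUT + output_m * GROK_OUTPUT


for label, volume in [("Individual", 10), ("Mid-sized SaaS", 500), ("Enterprise", 5000)]:
    print(f"{label}: ${monthly_cost(volume):,.2f}")
```

The three volumes come out at about $15.6, $781, and $7,812, which match the rounded figures in the table.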

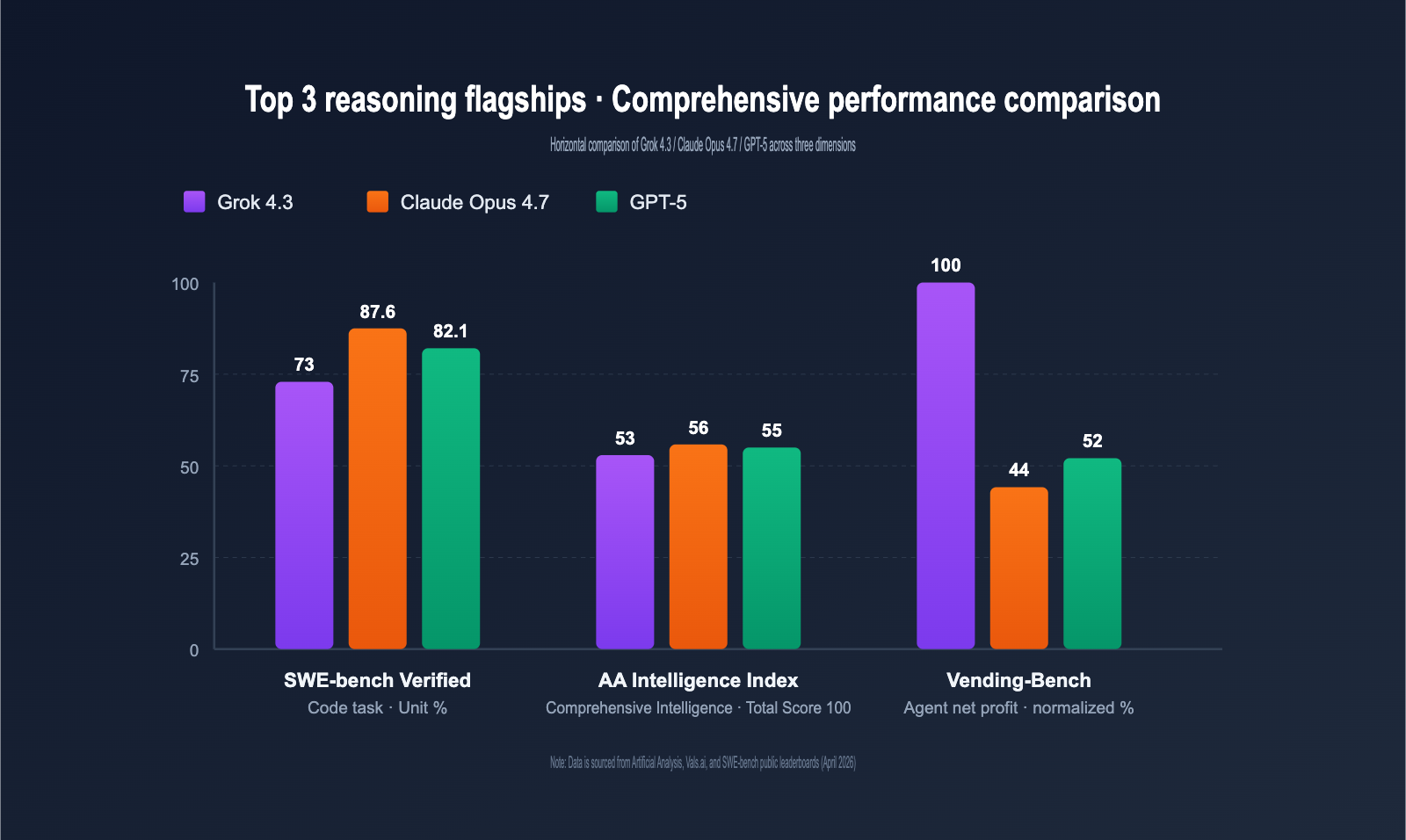

Grok 4.3 API Performance Benchmarks

Price isn't everything. We've used recent public data from mainstream leaderboards to evaluate where Grok 4.3 truly stands in reasoning, coding, and agentic tasks.

Comprehensive Comparison with Peers

The table below summarizes benchmark data available as of late April 2026. Prices reflect API list prices.

| Model | AA Intelligence Index | SWE-bench Verified | Output Speed | Input Price ($ / million tokens) | Context Window |
|---|---|---|---|---|---|
| Grok 4.3 | 53 | ~73% | 207 tps | $1.25 | 1M |
| Claude Opus 4.7 | 56 | 87.6% | 78 tps | $15.00 | 200k |
| GPT-5 (High Reasoning) | 55 | 82.1% | 95 tps | $5.00 | 400k |
| Gemini 3 Pro | 54 | 79.4% | 130 tps | $3.50 | 2M |
| Grok 4 Fast | 41 | 58.2% | 235 tps | $0.30 | 256k |

A few clear conclusions emerge:

- Coding isn't Grok 4.3's strongest suit: It trails Claude Opus 4.7 by about 14 percentage points on SWE-bench.

- Agentic tasks are Grok 4.3's home turf: In long-sequence simulation tasks like Vending-Bench, Grok 4.3 outperforms Opus 4.7 by approximately 1.26x.

- Unbeatable throughput + price combo: With 207 tps speed and a $1.25 input price, it's in a league of its own among reasoning models.

Grok 4.3 Performance by Task Type

Broken down by task granularity, here's how it performs in practice:

| Task Type | Grok 4.3 Rating | Notes |
|---|---|---|
| Long Context Summarization | ⭐⭐⭐⭐⭐ | 1M window + high throughput; handles entire books with ease |
| Agentic Workflows | ⭐⭐⭐⭐⭐ | Top-tier performance for long-chain tasks like Vending-Bench |
| Code Generation & Refactoring | ⭐⭐⭐⭐ | Not quite Opus 4.7 level, but price advantage offsets the gap |
| Complex Math Reasoning | ⭐⭐⭐⭐ | AIME series performance is close to GPT-5 |
| Multimodal Understanding | ⭐⭐⭐⭐ | Video input is a new capability with decent accuracy |
| Long-term Memory | ⭐⭐ | Still lacks native persistent memory; requires an external memory layer |

🎯 Selection Advice: Choosing the right model depends on your specific application and quality requirements. We recommend testing on the APIYI (apiyi.com) platform, which supports unified interface calls for major reasoning models like Grok 4.3, Claude Opus 4.7, and GPT-5, making it easy to perform side-by-side comparisons with your real business data.

Deep Dive into the Three New Capabilities of the Grok 4.3 API

Beyond the pricing adjustments, Grok 4.3 introduces three significant new capabilities that were completely absent in the Grok 4.20 era.

Grok 4.3 Video Input Capability

Grok 4.3 is the first xAI API model to natively support video input. It doesn't rely on "transcribing first and feeding text"; instead, it processes video frames directly through a vision encoder.

Supported video parameters:

| Parameter | Limit |
|---|---|
| Single video duration | ≤ 5 minutes (officially recommended) |
| Video resolution | ≤ 1080p |
| Frame rate sampling | Automatically samples at 1–4 fps |
| File formats | mp4, mov, webm |
| Billing method | Billed by image tokens after frame sampling |

In practice, there are two primary use cases: extracting key events from surveillance/security footage, and structured summarization of educational or meeting videos. For the latter, a recording longer than the recommended 5 minutes has to be sliced into segments first; the 1M context window then leaves room to combine the per-segment results, so you can "feed in a 4-hour lecture and output a complete chapter-by-chapter summary."

The table below summarizes typical video input applications we've observed and their key technical requirements.

| Use Case | Key Technical Requirements | Implementation Difficulty |
|---|---|---|
| Surveillance event detection | Set system prompt to focus on events, set fps sampling to 2 | Low |
| Meeting minutes | Combine with STT to extract speech; use video frames to identify speaker changes | Medium |
| Educational video notes | Slice long videos into 5-minute segments, then summarize | Low |
| Product demo documentation | Identify UI operation steps via frame sampling, generate illustrated tutorials | Medium |
| Short video content moderation | Single video ≤ 60 seconds, use batch concurrent calls | Low |
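Slicing a long recording into 5-minute segments starts with computing the cut points. The sketch below covers only that planning step and a rough frame count at a given sampling rate; the function names are illustrative, and the actual cutting would be done with a video tool, which is not shown.

```python
def segment_bounds(duration_s: float, max_len_s: float = 300.0) -> list[tuple[float, float]]:
    """Split [0, duration_s] into consecutive (start, end) windows of at most max_len_s seconds."""
    if duration_s <= 0 or max_len_s <= 0:
        raise ValueError("duration_s and max_len_s must be positive")
    bounds = []
    start = 0.0
    while start < duration_s:
        end = min(start + max_len_s, duration_s)
        bounds.append((start, end))
        start = end
    return bounds


def sampled_frames(duration_s: float, fps: float) -> int:
    """Approximate number of frames sampled from a clip at the given sampling rate."""
    return int(duration_s * fps)


# A 12-minute clip becomes two full 5-minute windows plus a 2-minute remainder
print(segment_bounds(720))  # [(0.0, 300.0), (300.0, 600.0), (600.0, 720.0)]
```

By this calculation a 4-hour lecture yields 48 segments, and one 5-minute segment sampled at 2 fps contributes about 600 frames to the image-token bill.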

Grok 4.3 Document Generation Capability

The most underrated feature of the new version is document generation. Grok 4.3 can directly generate downloadable PDF, Excel (XLSX), and PowerPoint (PPTX) files within the conversation, with content populated by the model in real-time.

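```python
# Minimal example: have Grok 4.3 generate a financial report Excel file
from openai import OpenAI

client = OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1",
)

response = client.chat.completions.create(
    model="grok-4.3",
    messages=[{
        "role": "user",
        "content": "Generate a 2025 Q4 SaaS industry financial report comparison in XLSX format, including revenue, growth rate, and gross margin columns.",
    }],
    # output_format is a non-standard field, passed through as described in this article
    extra_body={"output_format": "xlsx"},
)

# The response is expected to carry the URL of the downloadable file.
# attachments is not part of the standard OpenAI schema, so the SDK may expose
# each entry as a plain dict rather than an object; handle both.
attachments = getattr(response.choices[0].message, "attachments", None) or []
if attachments:
    first = attachments[0]
    print(first["url"] if isinstance(first, dict) else first.url)
else:
    print("No attachment returned")
```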

Full document generation code (including PDF/PPTX format switching):

```python
import os

import requests
from openai import OpenAI

client = OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1",
)

OUTPUT_FORMATS = ["pdf", "xlsx", "pptx"]


def _field(obj, name):
    """Read a field from an attachment that may be a dict or an object."""
    return obj.get(name) if isinstance(obj, dict) else getattr(obj, name, None)


def generate_doc(prompt: str, fmt: str, save_dir: str = "./outputs") -> str:
    """Call Grok 4.3 to generate a document in the given format and save it locally."""
    if fmt not in OUTPUT_FORMATS:
        raise ValueError(f"format must be one of {OUTPUT_FORMATS}")

    response = client.chat.completions.create(
        model="grok-4.3",
        messages=[{"role": "user", "content": prompt}],
        extra_body={"output_format": fmt},  # non-standard field, as described in this article
    )

    # attachments is outside the standard OpenAI schema, so guard against it being absent
    attachments = getattr(response.choices[0].message, "attachments", None) or []
    if not attachments:
        raise RuntimeError("The response did not include a file attachment")
    attachment = attachments[0]
    file_url = _field(attachment, "url")
    # basename() keeps a server-supplied name from escaping save_dir
    file_name = os.path.basename(_field(attachment, "filename") or "") or f"output.{fmt}"

    os.makedirs(save_dir, exist_ok=True)
    save_path = os.path.join(save_dir, file_name)

    file_resp = requests.get(file_url, timeout=60)
    file_resp.raise_for_status()
    with open(save_path, "wb") as f:
        f.write(file_resp.content)
    return save_path


if __name__ == "__main__":
    pdf_path = generate_doc(
        "Write a 5-page industry trend report for 2026 AI Large Language Models",
        "pdf",
    )
    print(f"PDF saved: {pdf_path}")

    ppt_path = generate_doc(
        "Create a 10-page product launch PPT for the Grok 4.3 API",
        "pptx",
    )
    print(f"PPTX saved: {ppt_path}")
```

💡 Integration Tip: The document generation feature can be passed through directly via the APIYI (apiyi.com) API proxy service without additional parameter adjustments. Both Python and Node.js SDKs can call it as-is.

Grok 4.3 Voice API

Often overlooked is the fact that on the same day xAI released Grok 4.3, they also launched independent STT (Speech-to-Text) and TTS (Text-to-Speech) APIs. These two APIs are billed separately from the main model.

| Voice API | xAI Price | Comparison Price | Price Difference |
|---|---|---|---|
| STT (Whisper equivalent) | $4.20 / million characters | ~$30 / million characters (OpenAI) | 86% cheaper |
| TTS (High expressiveness) | $4.20 / million characters | ~$50 / million characters (ElevenLabs) | 92% cheaper |

This pricing puts xAI's voice APIs at roughly one-seventh to one-twelfth of the comparison prices above. For high-volume audio scenarios like customer service bots, podcast generation, and in-car voice assistants, the cost curve has been completely rewritten.

Impact Analysis of Grok 4.3 API for Developers

Direct Impact on AI Application Developers

| Dimension | Specific Change | Recommendation |
|---|---|---|
| Cost Structure | Reasoning application costs ↓ 40–60% | Re-evaluate the usage ratio of advanced models |
| Architecture | 1M context window reduces RAG necessity | Use long-context to replace some retrieval in the short term |
| Multimodal Products | Video input lowers the barrier for visual AI | Projects for monitoring/education/medical video are worth starting |
| Agent Products | 16-Agent Heavy increases complexity ceiling | Multi-Agent architecture is now practical |
| Voice Products | TTS/STT costs plummet | Voice front-ends can be fully AI-powered |

Mid-to-Long-Term Impact on the AI Industry

First, the price war for reasoning models has officially begun. Grok 4.3 brings the price of the 1M context + reasoning combination to about 1/12th of Claude Opus 4.7. This isn't a minor test; it shatters the industry consensus that "reasoning models must be expensive." This will force OpenAI and Anthropic to respond in the second half of 2026, either by following suit or by differentiating through other dimensions (stronger coding, longer memory).

Second, "native video input" is moving from demo to production. Previously, Gemini was the only commercial API providing video input at scale. With Grok 4.3, video multimodal enters a true dual-supplier era. This means video AI products that were previously stalled due to "single-supplier risk" can now be scheduled. Whether it's an education company wanting to "upload classroom recordings to generate notes" or an automotive company wanting "semantic search for dashcam event playback," the technical path is now ready.

Third, Multi-Agent enters the "subscription" era. The 16-Agent architecture of SuperGrok Heavy is provided as a subscription. If this business model succeeds, it will pave the way for products billed by the "number of Agents." Previously, Multi-Agent was primarily implemented at the application layer via open-source frameworks (LangGraph, AutoGen, CrewAI). Now that xAI has moved this layer down to the model platform, the "Agent-as-a-Service" paradigm is starting to take hold.

Fourth, the lack of persistent memory in xAI is a genuine weakness. ChatGPT and Claude have supported cross-session memory for over a year, but Grok 4.3 has yet to catch up. This is a significant disadvantage for "personal assistant" products. In the short term, the only solution is to build a memory layer at the application level (vector database + RAG). Community solutions like Mem0 and Letta are mature, but official native memory is required to unlock deeper product forms.

Fifth, reasoning speed has become a new competitive dimension. An output speed of 207 tokens/second is the fastest in the industry for reasoning models. This allows tasks that previously required batch processing to move to real-time interaction, such as code reviews, long-document Q&A, and dynamic content generation. Combined with low prices, this speed advantage will spawn a new class of applications: high-frequency, low-latency reasoning microservices.

Grok 4.3 API Selection Decision Matrix

Not every scenario is a perfect fit for switching to Grok 4.3. We’ve compiled the real-world business inquiries from the past two weeks into a decision matrix to help you with your selection.

Scenarios Where Grok 4.3 API Is Highly Recommended

| Scenario | Reason for Recommendation |
|---|---|
| Long document summary & analysis | 1M context window + 207 tps output; handles entire books or 200-page reports with ease |
| Customer service / Complaint handling agent | Reasoning is enabled by default, and the price is low enough to give "every user their own agent" |
| Video content understanding | The only video-native input alongside Gemini, but at a lower price point |
| Large-scale offline data labeling | Batch API costs drop to nearly $0.65 / million tokens after discounts |
| Multimodal meeting minutes | Integrates video, audio, and text; generates documents directly into PDF/PPTX |
| Long-chain Agentic tasks | Vending-Bench data proves this is a core strength of Grok 4.3 |

Scenarios Where Grok 4.3 API Is Not Recommended

| Scenario | Reason for Non-recommendation |
|---|---|
| Top-tier coding agent | SWE-bench scores still trail Claude Opus 4.7 by about 14 percentage points |
| Personal assistant (strong memory) | Lacks persistent memory; requires building your own Memory layer |
| Ultra-low latency interaction | 207 tps is fast, but Grok 4 Fast (235 tps) is cheaper and better suited |
| Extremely sensitive to native Chinese | Chinese performance is good but still slightly behind top-tier Claude / GPT-5 |
| Strictly compliant legal / medical writing | Knowledge cutoff is November 2024, not as current as Claude Opus 4.7 |

Internal Task Allocation: Grok 4.3 vs. Grok 4 Fast

Many teams ask a practical question: Should we use Grok 4.3 or Grok 4 Fast for the same project? Our advice is to route tasks based on complexity.

| Task Type | Recommended Model | Reason |
|---|---|---|
| Simple FAQ answering | Grok 4 Fast | Unit price is 1/4 of 4.3, and it's faster |
| Content moderation/classification | Grok 4 Fast | Doesn't require reasoning; Fast is sufficient |
| Complex planning generation | Grok 4.3 | Reasoning must be enabled; 4.3 is the default choice |
| Multi-step tool invocation | Grok 4.3 | Server-side tool chain requires reasoning support |
| Long document (>256k) processing | Grok 4.3 | Fast context is limited to 256k; only 4.3 has 1M |

💡 Architecture Implementation Tip: Using the APIYI (apiyi.com) API proxy service, you can automatically route requests to either Grok 4 Fast or Grok 4.3 based on token length or task tags. With the same SDK code and API key, you only need to change one field to switch models, significantly reducing engineering overhead.
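The routing rules in this section can be sketched as a small function that picks a model from a task tag and an estimated prompt size. This is an application-side illustration only: the task tags are made up for the example, and the Grok 4 Fast model ID is a placeholder, since this article gives an ID only for grok-4.3.

```python
FAST_MODEL = "grok-4-fast"    # placeholder ID; check your provider's model list
FLAGSHIP_MODEL = "grok-4.3"   # model ID given in this article
FAST_CONTEXT_LIMIT = 256_000  # Grok 4 Fast context window per the comparison table

# Hypothetical task tags for work that does not need reasoning
SIMPLE_TASKS = {"faq", "moderation", "classification"}


def choose_model(task_tag: str, est_tokens: int) -> str:
    """Pick a model from a task tag and an estimated prompt size in tokens."""
    if est_tokens > FAST_CONTEXT_LIMIT:
        return FLAGSHIP_MODEL  # only the 1M-window model can hold the prompt
    if task_tag in SIMPLE_TASKS:
        return FAST_MODEL      # cheap path for tasks that need no reasoning
    return FLAGSHIP_MODEL      # default to the reasoning model


print(choose_model("faq", 1_200))       # grok-4-fast
print(choose_model("planning", 1_200))  # grok-4.3
```

Checking the context limit before the task tag means an oversized FAQ prompt still goes to the larger model instead of failing on the smaller window.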

Complete Guide to Accessing Grok 4.3 API in China

Accessing xAI's official interface from within China faces two main hurdles: network connectivity and payment. Here is the most reliable path for integration.

Grok 4.3 API Integration Steps (OpenAI SDK Compatible)

```python
# Complete integration example for developers in China, using the official OpenAI SDK
from openai import OpenAI

client = OpenAI(
    api_key="Your APIYI API key",
    base_url="https://vip.apiyi.com/v1",  # APIYI API proxy service base_url
)

response = client.chat.completions.create(
    model="grok-4.3",
    messages=[
        {"role": "system", "content": "You are a senior AI product analyst"},
        {"role": "user", "content": "Analyze the three long-term impacts of Grok 4.3 on the agent industry"},
    ],
    temperature=0.7,
    max_tokens=2048,
)

print(response.choices[0].message.content)
```

Full code for streaming output + image input:

```python
import base64
import mimetypes

from openai import OpenAI

client = OpenAI(
    api_key="Your APIYI API key",
    base_url="https://vip.apiyi.com/v1",
)


# 1. Streaming output
def stream_chat(prompt: str) -> None:
    stream = client.chat.completions.create(
        model="grok-4.3",
        messages=[{"role": "user", "content": prompt}],
        stream=True,
    )
    for chunk in stream:
        # Some chunks (for example a trailing usage chunk) carry no choices
        if not chunk.choices:
            continue
        content = chunk.choices[0].delta.content
        if content:
            print(content, end="", flush=True)
    print()


# 2. Image input
def vision_chat(image_path: str, question: str) -> str:
    # Guess the MIME type from the file extension; fall back to PNG
    mime = mimetypes.guess_type(image_path)[0] or "image/png"
    with open(image_path, "rb") as f:
        b64 = base64.b64encode(f.read()).decode()
    response = client.chat.completions.create(
        model="grok-4.3",
        messages=[{
            "role": "user",
            "content": [
                {"type": "text", "text": question},
                {"type": "image_url", "image_url": {
                    "url": f"data:{mime};base64,{b64}"
                }},
            ],
        }],
    )
    return response.choices[0].message.content


if __name__ == "__main__":
    stream_chat("Explain the impact of a 1M context window on RAG architecture in three paragraphs")
    answer = vision_chat(
        "./screenshot.png",
        "Where is Grok 4.3 located in this architecture diagram?",
    )
    print(answer)
```

Grok 4.3 API Tool Use in Practice

Beyond the OpenAI-compatible protocol, Grok 4.3 natively supports three types of server-side tools. Simply declare them via the tools field, and the model will autonomously decide when and which tool to call—no extra orchestration required at the application layer.

```python
# Grok 4.3 server-side tool invocation example:
# let the model perform autonomous web search + sandbox code execution
from openai import OpenAI

client = OpenAI(
    api_key="Your APIYI API key",
    base_url="https://vip.apiyi.com/v1",
)

response = client.chat.completions.create(
    model="grok-4.3",
    messages=[{
        "role": "user",
        "content": "Check xAI's official token pricing for Grok 4.3 today, and use Python to calculate the total cost for 1 million invocations",
    }],
    tools=[
        {"type": "web_search"},      # Web search
        {"type": "code_execution"},  # Sandbox execution
    ],
)

print(response.choices[0].message.content)
```

The model will chain tool calls as needed—for example, performing a web_search to grab the latest pricing, followed by code_execution to run the math, and finally providing a structured answer. This "autonomous tool chain" capability, which required manual orchestration in the Grok 4.20 era, can now be completed in a single request with Grok 4.3.

Best Practices for Migrating from OpenAI to Grok 4.3 API

Many teams have built systems based on OpenAI interfaces. When migrating to Grok 4.3, keep these key points in mind.

| Migration Item | Original OpenAI Syntax | Recommended Grok 4.3 Syntax |
|---|---|---|
| base_url | https://api.openai.com/v1 | https://vip.apiyi.com/v1 |
| Model field | gpt-5 | grok-4.3 |
| Reasoning config | reasoning_effort="high" | Enabled by default; no config needed |
| Tool declaration | tools=[{"type": "function", ...}] | Same; server-side tools use built-in types like web_search |
| Streaming output | stream=True | Fully compatible |
| JSON mode | response_format={"type": "json_object"} | Fully compatible |

For actual projects, we suggest a three-step approach: First, switch only the base_url and model in the test environment to ensure basic chat works. Second, route high-value reasoning requests to Grok 4.3 while keeping standard chats on the original model for A/B testing. Third, decide whether to switch fully or adopt a hybrid architecture based on real data.
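For the A/B step, one common approach is to assign each user or request to an arm deterministically, so the same key always sees the same model. The sketch below is one way to do that with a hash; the function name, the "baseline" label, and the 20% default share are assumptions for illustration.

```python
import hashlib


def ab_bucket(request_key: str, grok_share: float = 0.2) -> str:
    """Deterministically assign a key to the 'grok-4.3' arm or the 'baseline' arm."""
    if not 0.0 <= grok_share <= 1.0:
        raise ValueError("grok_share must be between 0 and 1")
    digest = hashlib.sha256(request_key.encode("utf-8")).digest()
    # Compare the first 8 bytes of the hash against the share of the 64-bit range
    value = int.from_bytes(digest[:8], "big")
    return "grok-4.3" if value < grok_share * 2**64 else "baseline"


# The same key always lands in the same arm
assert ab_bucket("user-42") == ab_bucket("user-42")
```

Hashing a stable key such as a user ID, instead of drawing a random number per request, keeps each user's experience consistent and makes the comparison easier to analyze afterwards.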

🎯 Hybrid Architecture Suggestion: On the APIYI (apiyi.com) platform, all mainstream models (Grok 4.3, Claude Opus 4.7, GPT-5, Gemini 3 Pro) share the same base_url and API key. You only need to change the model field in your application code to switch, making hybrid architecture implementation seamless.

Grok 4.3 API Integration Notes

| Note | Description |
|---|---|
| Model field | Use grok-4.3 strictly (not grok4.3 or Grok-4.3) |
| base_url | We recommend https://vip.apiyi.com/v1 for stable, low-latency access |
| Reasoning field | Enabled by default; no extra parameters required |
| Ultra-long context | Recommend input ≤ 800k tokens to leave headroom for reasoning |
| Video input | Pass via the video_url field; currently recommended for videos under 5 minutes |
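The 800k-token headroom recommendation is easy to enforce with a pre-flight check before sending a request. The sketch below uses a crude four-characters-per-token heuristic, which is an assumption for illustration and not the model's real tokenizer; swap in an accurate count where one is available.

```python
MAX_RECOMMENDED_INPUT = 800_000  # headroom recommendation from the notes table
CHARS_PER_TOKEN = 4              # rough heuristic for English text, NOT the real tokenizer


def estimate_tokens(text: str) -> int:
    """Crude token estimate; replace with a real tokenizer count when available."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def fits_budget(text: str, limit: int = MAX_RECOMMENDED_INPUT) -> bool:
    """Return True when the estimated prompt size stays within the input budget."""
    return estimate_tokens(text) <= limit


print(fits_budget("A short prompt"))  # True
```

Text in other languages, Chinese in particular, can tokenize very differently from this heuristic, so treat the result as a coarse guard and not a guarantee.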

🎯 Practical Usage Tip: We suggest applying for a test key on APIYI (apiyi.com) to complete a minimal viable loop before switching to production quotas. The platform supports prepaid balances and pay-as-you-go, with no need to bind foreign credit cards, making it ideal for domestic team financial workflows.

Grok 4.3 API FAQ

Q1: Is the Grok 4.3 API really cheaper than Grok 4.20, and what’s the price drop?

Yes, and the drop is significant. Grok 4.20 was previously priced at approximately $3.00 / $15.00 (input/output), while Grok 4.3 has been adjusted to $1.25 / $2.50. This represents a reduction of about 58% for input and 83% for output. At a 3:1 input-to-output ratio, the blended price falls from $6.00 to about $1.56 per million tokens, an overall reduction of roughly 74%. This is a clear signal from xAI that they are aggressively targeting the reasoning model market.

Q2: Is the 1M context window of the Grok 4.3 API available in China?

Yes, it is. The 1M context window is a native capability of the model itself and is not subject to regional restrictions. When developers in China access it via an API proxy service like APIYI (apiyi.com), the long context is fully supported. However, keep in mind: the higher the number of tokens in a single request, the higher the latency (end-to-end can take over 30 seconds). We recommend handling ultra-long context asynchronously or using segmented scheduling in production environments.

Q3: How should I choose between Grok 4.3 and Claude Opus 4.7?

Choose based on your task type: If your core business involves code generation or long-chain coding agents, go with Claude Opus 4.7, as it still leads in SWE-bench by about 14 percentage points. If your business focuses on long-context summarization, Vending-Bench style agents, or multimodal video understanding, choose Grok 4.3; it's 12 times cheaper and offers better task alignment. A hybrid architecture is the mainstream approach for 2026, where a unified API proxy service is used to route between both models.

Q4: What is the 16-Agent Heavy system in Grok 4.3? Can it be called via API?

The 16-Agent Heavy is a parallel scheduling system built on top of the main model by xAI. It uses an orchestrator to coordinate up to 16 worker agents to process sub-tasks in parallel, making it ideal for complex planning and long-duration simulations. Currently, the Heavy mode is only available to SuperGrok Heavy ($300/month) subscribers. The standard API does not yet expose a direct 16-agent entry point, but you can implement multi-agent orchestration at the application layer using Grok 4.3 to achieve results close to the native Heavy experience.
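Application-layer orchestration of this kind usually follows a split, fan-out, merge pattern. The sketch below shows that pattern with a thread pool capped at 16 workers; it is not xAI's Heavy implementation, and the split, worker, and merge callables are placeholders you would supply (the worker would typically wrap a Grok 4.3 chat call).

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

MAX_WORKERS = 16  # mirrors the worker count this article attributes to Heavy mode


def run_parallel(subtasks: list[str], worker: Callable[[str], str]) -> list[str]:
    """Run each subtask through `worker` concurrently; results keep the input order."""
    if not subtasks:
        return []
    with ThreadPoolExecutor(max_workers=min(MAX_WORKERS, len(subtasks))) as pool:
        return list(pool.map(worker, subtasks))


def orchestrate(task: str,
                split: Callable[[str], list[str]],
                worker: Callable[[str], str],
                merge: Callable[[list[str]], str]) -> str:
    """Split a task, fan the pieces out to workers, then merge the partial answers."""
    return merge(run_parallel(split(task), worker))


# Stand-in callables so the sketch runs without any API access
print(orchestrate("a,b,c", lambda t: t.split(","), str.upper, "|".join))  # A|B|C
```

Threads suit this case because each worker mostly waits on network I/O; each worker call is billed as an ordinary API request.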

Q5: Grok 4.3 API lacks persistent memory; what are the alternatives?

You'll need to build a memory module at the application layer. A common practice is to store user conversation history in a vector database and use RAG to retrieve top-k fragments to prepend to the context before making a model invocation. There are mature community solutions like Mem0 or Letta that can directly interface with OpenAI-compatible APIs, making them compatible with Grok 4.3 as well. We suggest setting your base_url to APIYI (apiyi.com) to get basic conversations running first, then layering on the memory component to minimize iteration costs.
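The store, retrieve, and prepend flow can be illustrated without any external service. In the sketch below, a toy bag-of-words similarity stands in for real embeddings and an in-memory list stands in for the vector database; all class and function names are hypothetical, and a production system would use an embedding model plus a proper store such as the community solutions mentioned above.

```python
import math
import re
from collections import Counter


def _vectorize(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a real system would use an embedding model."""
    return Counter(re.findall(r"\w+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class MemoryStore:
    """In-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._items.append((text, _vectorize(text)))

    def top_k(self, query: str, k: int = 3) -> list[str]:
        q = _vectorize(query)
        ranked = sorted(self._items, key=lambda item: _cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_messages(store: MemoryStore, user_input: str, k: int = 3) -> list[dict]:
    """Prepend retrieved memories as a system message before the new user turn."""
    context = "\n".join(f"- {m}" for m in store.top_k(user_input, k))
    return [
        {"role": "system", "content": f"Relevant notes from earlier sessions:\n{context}"},
        {"role": "user", "content": user_input},
    ]
```

The list returned by build_messages can be passed as the messages argument of a chat completion call, so the memory layer stays independent of whichever model sits behind the base_url.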

Q6: What video input scenarios does Grok 4.3 support, and are there duration limits?

The official recommendation is for single videos to be ≤ 5 minutes, ≤ 1080p, and in mp4/mov/webm format. These are billed based on image tokens after frame extraction. Typical applications include extracting key events from surveillance footage, generating structured minutes for meeting videos, creating chapter notes for educational videos, and automatically documenting product demo videos. For longer videos, we recommend slicing them on the client side and then calling Grok 4.3 in parallel to process them.

Q7: Do I need to change my code to migrate from OpenAI / Claude to Grok 4.3?

You only need to change two fields. The Grok 4.3 API is fully compatible with OpenAI's Chat Completions protocol. Simply change the model field from gpt-5 or claude-opus-4-7 to grok-4.3, and update the base_url from the original provider's domain to https://vip.apiyi.com/v1. Streaming output, tool calling, and JSON mode all retain the same field names as OpenAI, so there's no need to rewrite your client-side logic. Video input is unique to Grok 4.3 and is passed via the video_url field, which won't interfere with your existing image input workflows.

Q8: Which scenarios is the Grok 4.3 API Batch mode suitable for?

It's suitable for non-real-time, bulk tasks that can tolerate a 24-hour turnaround, such as offline data labeling, historical log analysis, large-scale document pre-processing, and content moderation archiving. The Batch API can save an additional 20–50% on top of standard pricing. For batch processing tasks with high input and low output, the actual cost can be pushed down to an extremely low range, approaching $0.65 per million tokens. If your business is not latency-sensitive, moving traffic to Batch is the most cost-effective migration path.

Key Points for Developers in China Using Grok 4.3 API

Finally, here is a checklist for Chinese teams, covering technology, compliance, and cost.

Technical Integration

First, prioritize using a stable API proxy service rather than building your own proxy. The official xAI interface for Grok 4.3 requires a stable overseas network connection, and self-built proxies are prone to connection jitter under high concurrency. API proxy services deployed across multiple domestic data centers offer better latency and stability. Second, once you switch the base_url to https://vip.apiyi.com/v1, your SDK requires no changes—whether you're using the Python OpenAI SDK, the Node.js openai package, or Go's go-openai, everything will work out of the box.

Compliance and Billing

First, Chinese teams can use API proxy services to settle payments in RMB, avoiding issues with overseas credit cards and cross-border payment compliance. Second, proxy platforms generally offer pay-as-you-go or prepaid balance models, which are more finance-friendly for domestic companies. Third, regarding data export compliance, we recommend performing sensitive information desensitization at the application layer; avoid feeding raw customer privacy data directly into reasoning models.

Cost Control

First, take advantage of Grok 4.3's cached_input discount; for scenarios with long and fixed system prompts, the actual unit price can be pushed down to $0.31 per million tokens. Second, route all non-real-time business to Batch to save another 20–50%. Third, route simple tasks to Grok 4 Fast and reserve Grok 4.3 for reasoning tasks; this can reduce overall costs by 60–70%.
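How far caching pushes the input price depends on the share of input tokens served from cache. A minimal sketch of that weighted average, using the two list prices quoted in this article, is shown below; the function name is illustrative.

```python
STANDARD_INPUT = 1.25  # USD per million input tokens (list price in this article)
CACHED_INPUT = 0.31    # USD per million cached input tokens (list price in this article)


def effective_input_price(cache_hit_rate: float) -> float:
    """Average input price per million tokens for a given share of cached input tokens."""
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    return cache_hit_rate * CACHED_INPUT + (1.0 - cache_hit_rate) * STANDARD_INPUT


for rate in (0.0, 0.5, 0.8, 1.0):
    print(f"hit rate {rate:.0%}: ${effective_input_price(rate):.3f} per million input tokens")
```

The $0.31 floor applies only when every input token is a cache hit; at an 80% hit rate the average input price is about $0.50 per million tokens.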

🎯 Summary for Domestic Implementation: We recommend that Chinese teams adopt the following path for Grok 4.3: API proxy service (APIYI apiyi.com) + OpenAI SDK + model hybrid routing + Batch API priority. This combination balances stability with cost control and has already been validated across multiple domestic SaaS products.

Summary: The True Significance of the Grok 4.3 API

Returning to our initial assessment, Grok 4.3 isn't just an update that brings a "smarter model"; it's an update that "redefines the cost curve for reasoning models." Three numbers tell the story best: $1.25 input price, 1M context window, and 207 tokens/second output speed. Combined, this is a unique proposition in the reasoning model tier.

The best use cases for the Grok 4.3 API center on: long-context summarization and analysis, structured processing of multiple video streams, multi-agent collaborative workflows, and high-throughput reasoning where real-time performance is critical. It’s not a direct replacement for Claude Opus 4.7, but for many tasks previously handled by Opus 4.7, Grok 4.3 offers a new option that is "12 times cheaper with a 5 times larger context window."

For developers in China, the integration path for the Grok 4.3 API is already very mature. We recommend accessing and testing it directly through the APIYI (apiyi.com) platform. The base_url is compatible with the OpenAI SDK, and you can simply use grok-4.3 as the model field—no code modifications required. On the same platform, you can also call Claude Opus 4.7, GPT-5, and Gemini 3 Pro, making horizontal comparisons and hybrid orchestration incredibly convenient.

The real test for Grok 4.3 will come in the second half of 2026: Will OpenAI and Anthropic follow suit with price cuts? Can xAI bridge the gap in persistent memory? And can the 16-Agent Heavy mode break out of its subscription-only walls? Until then, it remains one of the most cost-effective reasoning APIs available, and it's well worth every agent application developer running it against their own real-world data.

References

- xAI Official Model Documentation: Model IDs, pricing, and capability specifications
  - Link: docs.x.ai/developers/models
  - Note: Contains full API parameters and billing rules for Grok 4.3.
- xAI Official Updates: Product releases and announcements
  - Link: x.ai/news
  - Note: Grok 4.3 launch event and feature introductions.
- OpenRouter Real-time Price List: Multi-model comparison and historical pricing
  - Link: openrouter.ai/x-ai/grok-4.3
  - Note: Real-time pricing and latency monitoring.
- Artificial Analysis Leaderboard: Comprehensive intelligence index and speed data
  - Link: artificialanalysis.ai/models/grok-4-3
  - Note: Comparisons across dimensions like AA index, speed, and context window.
- APIYI Integration Documentation: Complete tutorial for accessing Grok 4.3 via domestic API proxy services
  - Link: help.apiyi.com
  - Note: Includes Python/Node.js SDK examples and billing instructions.

Author: APIYI Team — Focused on AI Large Language Model API proxy services, helping domestic developers call mainstream models like Grok 4.3, Claude Opus 4.7, and GPT-5 with one click. Visit APIYI (apiyi.com) to get free testing credits.