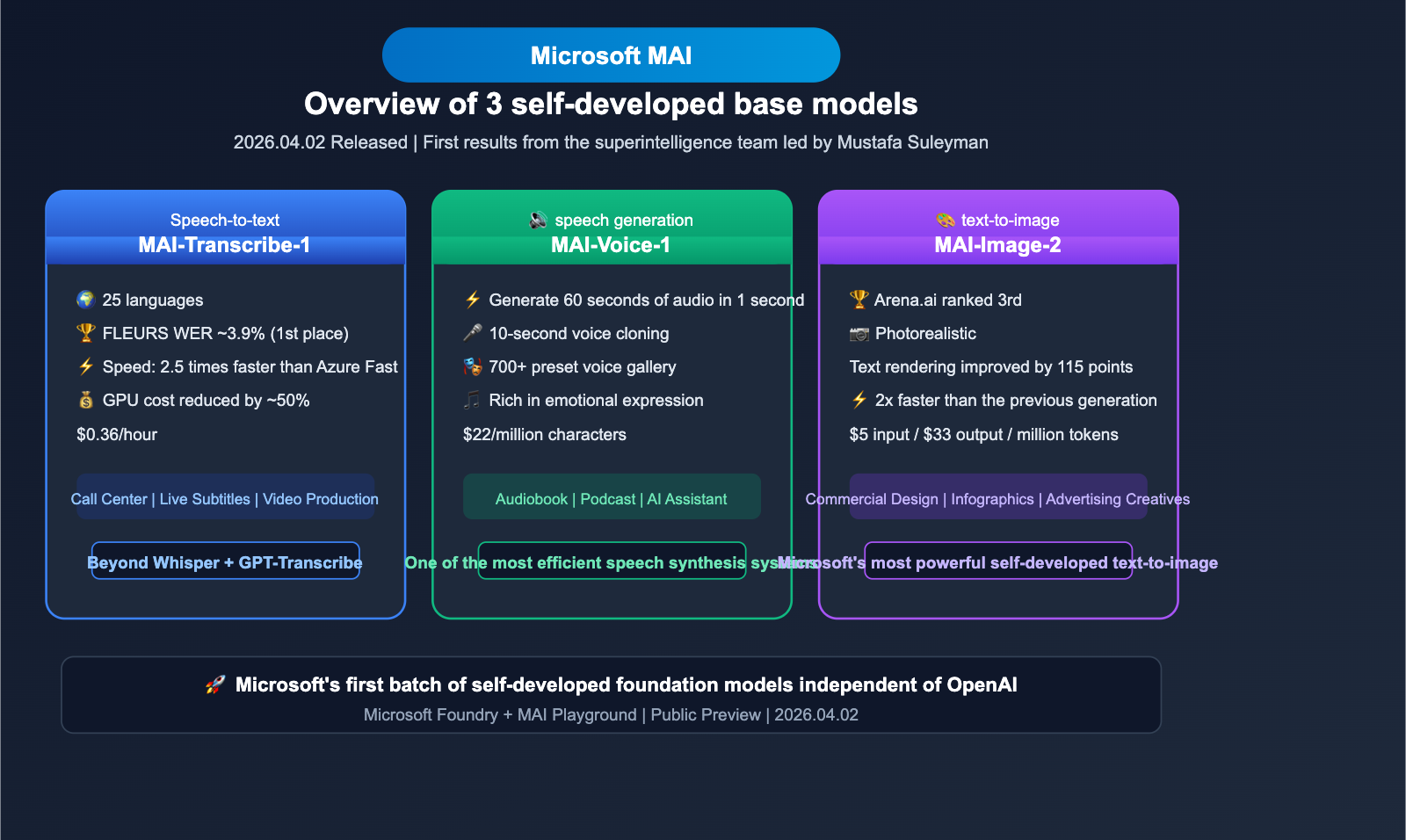

On April 2, 2026, the Microsoft MAI Super Intelligence team officially released 3 proprietary foundation models: MAI-Transcribe-1 (speech-to-text), MAI-Voice-1 (speech generation), and MAI-Image-2 (text-to-image). This marks the first major product launch since the formation of the MAI team under Mustafa Suleyman, signaling Microsoft's move to build AI capabilities independent of OpenAI.

Core Value: Get up to speed in 3 minutes on the technical specs, benchmark performance, API pricing, and the impact of these new Microsoft MAI models on the AI industry landscape.

Quick Overview of the 3 New Microsoft MAI Models

| Feature | Details |
|---|---|
| Release Date | April 2, 2026 |
| Publisher | Microsoft MAI Super Intelligence Team (CEO: Mustafa Suleyman) |
| Models Released | MAI-Transcribe-1 / MAI-Voice-1 / MAI-Image-2 |
| Platform Access | Microsoft Foundry + MAI Playground |
| Strategic Significance | Microsoft's first proprietary multimodal foundation models, reducing reliance on OpenAI |
| Current Status | Public Preview |

These three models cover speech recognition, speech generation, and image generation, respectively. They are the first competitive foundation models Microsoft has launched independently since renegotiating its partnership terms with OpenAI.

MAI-Transcribe-1: A Deep Dive into Microsoft's Speech-to-Text Model

MAI-Transcribe-1 Core Technical Specs

MAI-Transcribe-1 is Microsoft's most powerful speech recognition model to date, securing the top spot overall in the FLEURS benchmark.

| Parameter | MAI-Transcribe-1 |
|---|---|
| Supported Languages | 25 languages |
| FLEURS Benchmark WER | ~3.9% (Ranked #1) |
| Processing Speed | 2.5x faster than Azure Fast |
| GPU Costs | ~50% lower than competitors |
| API Pricing | $0.36/hour |
| Key Advantage | Lowest WER in 11 core languages |

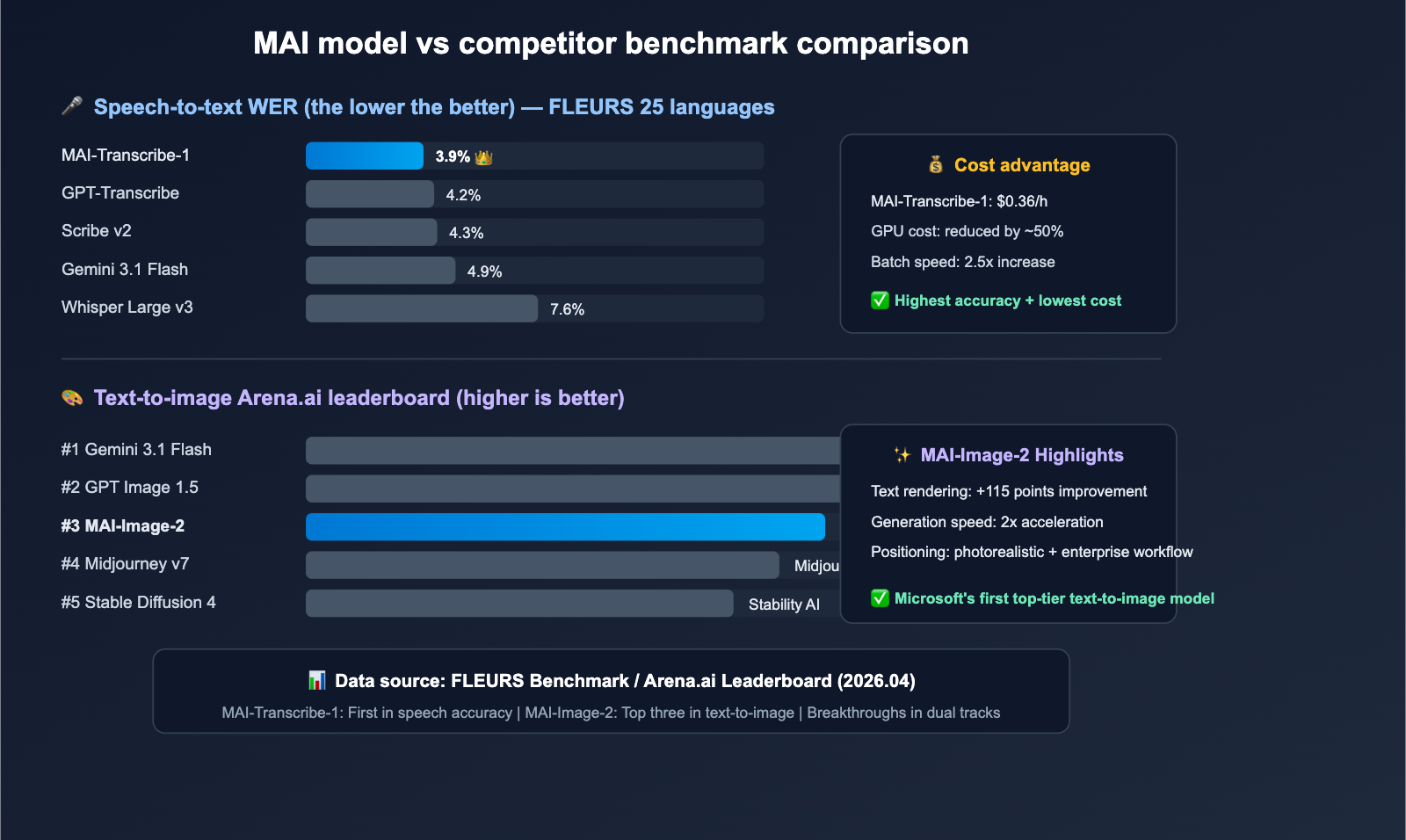

MAI-Transcribe-1 vs. Competitors: WER Comparison

In the FLEURS 25-language benchmark, MAI-Transcribe-1 leads the pack in Word Error Rate (WER):

| Model | FLEURS WER | Languages Ranked #1 | Pricing Reference |
|---|---|---|---|
| MAI-Transcribe-1 | ~3.9% | 11 of 25 | $0.36/hour |
| GPT-Transcribe (OpenAI) | ~4.2% | — | Token-based |
| Scribe v2 (ElevenLabs) | ~4.3% | — | From $0.40/hour |
| Gemini 3.1 Flash | ~4.9% | — | Token-based |
| Whisper Large v3 | ~7.6% | — | Open source/Free |
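For readers less familiar with the metric: WER is the word-level edit distance (substitutions, deletions, and insertions) between a reference transcript and the model output, divided by the number of reference words. The snippet below is a minimal Python sketch of that standard definition. It is not the FLEURS scoring pipeline, and the function name `word_error_rate` and the sample sentences are illustrative assumptions. Real evaluations usually normalize casing and punctuation first, which this sketch skips.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] = edit distance between the reference words seen so far
    # (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # i deletions turn i reference words into an empty hypothesis
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# Illustrative sentences (not benchmark data): one substitution, one deletion.
reference = "the quick brown fox jumps over the lazy dog"
hypothesis = "the quick brown fox jumped over lazy dog"
print(f"WER: {word_error_rate(reference, hypothesis):.1%}")  # 2 errors / 9 words -> 22.2%
```

On this scale, a WER of ~3.9% corresponds to roughly 4 word errors per 100 reference words.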

5 Key Advantages of MAI-Transcribe-1

1. Enterprise-Grade Multilingual Accuracy

MAI-Transcribe-1 ranks first overall across 25 languages, achieving the industry's lowest WER for 11 core languages (including English, Chinese, Japanese, and Spanish). It also outperforms Whisper Large v3 across the remaining 14 languages and beats Gemini 3.1 Flash in 11 of them.

2. 2.5x Faster Batch Processing

Compared to Microsoft's previous Azure Fast transcription solution, MAI-Transcribe-1 delivers a 2.5x boost in batch processing speed. This translates to significant efficiency gains for use cases like call center log analysis, automated meeting minutes, and video subtitle generation.

3. ~50% Reduction in GPU Costs

Through architectural optimizations, MAI-Transcribe-1 maintains top-tier accuracy while cutting GPU inference costs by about half, drastically lowering the total cost of ownership for large-scale transcription tasks.

4. Versatile Use Cases

- IVR Systems: Real-time transcription for interactive voice response.

- Call Centers: Automated transcription and analysis of customer service calls.

- Live Subtitling: Real-time captioning for events and meetings.

- Video Production: Automated subtitle generation for video content.

- Market Research: Batch transcription of interview recordings.

5. Competitive API Pricing

The $0.36/hour price point offers a clear competitive edge in the enterprise transcription market, especially when considering its superior WER performance.

🎯 Developer Tip: For developers looking to integrate speech-to-text capabilities, MAI-Transcribe-1 is available via Microsoft Foundry. If you need to orchestrate multiple AI models (e.g., speech-to-text + text generation + image generation), you can use the APIYI (apiyi.com) platform to unify API calls from different providers and simplify integration.

MAI-Voice-1: Technical Overview of Microsoft's Speech Generation Model

MAI-Voice-1 Core Specs

MAI-Voice-1 is Microsoft's high-efficiency speech generation model, designed with a focus on extreme performance.

| Parameter | MAI-Voice-1 |
|---|---|
| Generation Efficiency | < 1 second for 60 seconds of audio (single GPU) |
| Voice Cloning | Custom voice creation with just 10s of audio |
| Voice Library | 700+ preset voices |
| API Pricing | $22/million characters |
| Integration | Azure Speech / Microsoft Foundry |
| Existing Apps | Copilot audio expression and podcast features |

MAI-Voice-1 Technical Highlights

1. Extreme Generation Efficiency

Generating 60 seconds of high-quality audio in under a second on a single GPU makes MAI-Voice-1 one of the most efficient speech synthesis systems available, perfect for real-time feedback applications.

2. 10-Second Voice Cloning

The "Personal Voice" feature allows users to create highly accurate custom voices using only 10 seconds of audio samples. Note that this feature requires approval through Microsoft's Responsible AI process.

3. 700+ Voice Gallery

Through Azure Speech integration, developers gain access to over 700 preset voices covering various languages, accents, and styles to suit any application.

4. Emotionally Expressive Output

MAI-Voice-1 doesn't just generate clear speech; it also conveys emotional depth, including tone shifts, pacing, and inflection, which makes the output sound natural and engaging.
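To put the "60 seconds of audio in under a second" figure in perspective, speech synthesis speed is often expressed as a real-time factor (RTF): generation time divided by audio duration. The Python sketch below only works through that arithmetic. The function name is an illustrative assumption, the 1.0-second value is an upper bound taken from the quoted claim rather than a measurement, and the linear scaling to longer audio is an assumption the source does not confirm.

```python
def real_time_factor(generation_seconds: float, audio_seconds: float) -> float:
    """Seconds of compute spent per second of audio produced (lower is faster)."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return generation_seconds / audio_seconds


# Quoted figure: under 1 second of generation for 60 seconds of audio,
# so 1.0 is an upper bound on generation time, not a measured value.
rtf_upper_bound = real_time_factor(generation_seconds=1.0, audio_seconds=60.0)
speedup_lower_bound = 1.0 / rtf_upper_bound

# Assumption: generation time scales linearly with audio length.
one_hour_audio_seconds = 3600
estimated_generation_seconds = one_hour_audio_seconds * rtf_upper_bound

print(f"RTF upper bound: {rtf_upper_bound:.4f}")
print(f"At least {speedup_lower_bound:.0f}x faster than real time")
print(f"One hour of audio: at most about {estimated_generation_seconds:.0f} s of generation")
```

An RTF well below 1 is what makes real-time use cases plausible, since audio is produced much faster than it is played back.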

💡 Use Cases: MAI-Voice-1 is ideal for audiobook production, automated podcast generation, customer service voice responses, and accessibility tools. Developers can combine Large Language Models to generate text, then use MAI-Voice-1 to convert it into speech, building a complete AI voice assistant pipeline. You can easily integrate the LLM text generation step via the APIYI (apiyi.com) platform.

A Deep Dive into MAI-Image-2: Microsoft's Most Powerful Text-to-Image Model

MAI-Image-2 Key Specifications

MAI-Image-2 is Microsoft's first self-developed text-to-image model to achieve top-tier competitiveness on industry leaderboards.

| Parameter | MAI-Image-2 |
|---|---|
| Arena.ai Ranking | 3rd Place (only behind Gemini 3.1 Flash and GPT Image 1.5) |
| Generation Speed | Over 2x faster than the previous generation |
| Text Rendering Improvement | +115 points over the previous generation |
| Input Price | $5/million tokens |
| Output Price | $33/million tokens |
| Core Strengths | Photorealism, robust text rendering, high-precision complex layouts |

MAI-Image-2's Position on the Arena.ai Leaderboard

| Rank | Model | Vendor | Core Strength |
|---|---|---|---|
| 1 | Gemini 3.1 Flash Image | Google | Best overall multimodal performance |
| 2 | GPT Image 1.5 | OpenAI | Leading creative diversity |
| 3 | MAI-Image-2 | Microsoft | Text rendering + Photorealism |
| 4 | Midjourney v7 | Midjourney | Outstanding artistic style |
| 5 | Stable Diffusion 4 | Stability AI | Open-source ecosystem |

4 Key Technical Highlights of MAI-Image-2

1. Photorealistic Quality

MAI-Image-2 has reached new heights in generating photorealistic imagery. Details like volumetric lighting, material textures, and light-shadow transitions are near-photographic, making it ideal for commercial advertising and product showcases.

2. Significantly Enhanced Text Rendering

Compared to its predecessor, MAI-Image-2’s in-image text rendering capability has improved by 115 points. This means significantly better clarity and accuracy when generating infographics, posters, signage, and other images containing text elements.

3. Precision in Complex Layouts

In generation tasks involving multiple objects, complex spatial relationships, and detailed scenes, MAI-Image-2 demonstrates higher compositional precision than its competitors, reducing issues like object overlap and scale distortion.

4. Enterprise-Grade Workflow Integration

WPP, the world's largest advertising group, is already using MAI-Image-2 at scale for creative production. Microsoft has positioned this model as a productivity tool for designers and marketers, deeply integrated into the Microsoft 365 ecosystem.

🔧 Technical Practice: In real-world AI image generation applications, developers often need to compare the output of multiple models. Through the APIYI (apiyi.com) platform, you can unify access to various image generation model APIs like DALL-E and Stable Diffusion, making it easy to switch between models and compare results quickly.

Microsoft's MAI Strategy: The First Step Toward Reducing OpenAI Dependency

Why Microsoft is Developing Its Own Models

The relationship between Microsoft and OpenAI is undergoing a subtle shift. The release of the three MAI models is a clear strategic signal.

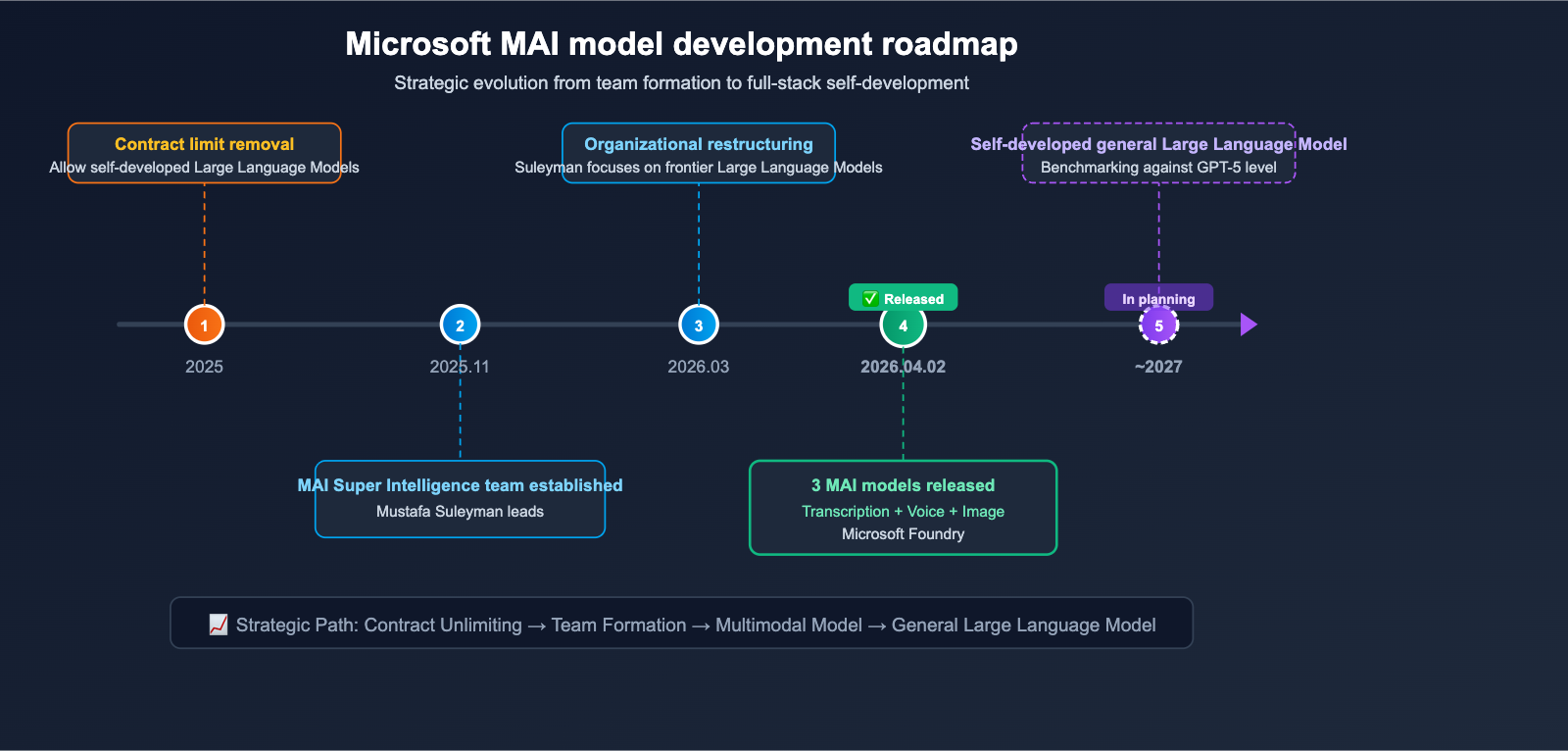

Key Timeline:

- 2025: Microsoft and OpenAI renegotiated partnership terms, removing contractual constraints that previously limited Microsoft from developing its own general-purpose AI models.

- November 2025: Mustafa Suleyman formed the MAI Super Intelligence team, focusing on cutting-edge model R&D.

- March 2026: Satya Nadella announced an organizational restructuring; Suleyman now focuses entirely on frontier models and is no longer responsible for day-to-day Copilot operations.

- April 2, 2026: The MAI team released its first three self-developed foundation models.

- 2027 Goal: Plans to launch a general-purpose Large Language Model to compete at the GPT-5 level.

Current State of Microsoft's AI Model Matrix

| Model Category | Provided by OpenAI | Microsoft Self-Developed (MAI) |

|---|---|---|

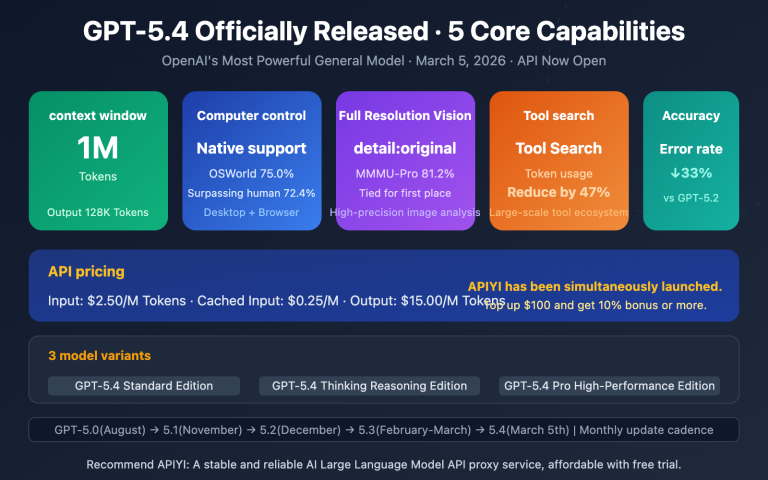

| General LLM | GPT-5.4 (Copilot Core) | Planned (2027) |

| Speech Recognition | Whisper / GPT-Transcribe | MAI-Transcribe-1 ✅ |

| Speech Generation | — | MAI-Voice-1 ✅ |

| Text-to-Image | DALL-E 3 | MAI-Image-2 ✅ |

| Code Model | Codex | Planned |

What This Means for Developers

Microsoft is building a "dual-track" AI model supply system: continuing to use OpenAI's general LLMs (GPT-5.4) while simultaneously launching self-developed alternatives in the speech and image sectors. This means developers will have more choices within the Microsoft ecosystem.

🎯 Industry Insight: The launch of Microsoft's self-developed models means competition in the AI model market will further intensify. For developers, choosing which model to use and through which channel to access it has become even more critical. By using the APIYI (apiyi.com) platform to unify access to AI model APIs from multiple vendors, you can flexibly switch underlying models without modifying your code, allowing you to adapt to the rapidly changing market landscape.

Microsoft MAI Model API Pricing and Integration

Pricing Overview

| Model | Billing Method | Price | Integration Platform |
|---|---|---|---|
| MAI-Transcribe-1 | Per audio hour | $0.36/hour | Microsoft Foundry / Azure Speech |
| MAI-Voice-1 | Per character | $22/million characters | Microsoft Foundry / Azure Speech |
| MAI-Image-2 | Per token | Input $5/million tokens + Output $33/million tokens | Microsoft Foundry |
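Because the three models are billed in different units (audio hours, characters, and tokens), a quick estimate helps when budgeting. The following Python sketch applies the list prices from the table above. The `MonthlyUsage` structure, the function name, and the sample volumes are hypothetical, and the calculation ignores any free tiers, volume discounts, or preview-period terms, none of which are described here.

```python
from dataclasses import dataclass

# List prices quoted in the pricing table above (USD).
TRANSCRIBE_USD_PER_AUDIO_HOUR = 0.36
VOICE_USD_PER_MILLION_CHARS = 22.0
IMAGE_USD_PER_MILLION_INPUT_TOKENS = 5.0
IMAGE_USD_PER_MILLION_OUTPUT_TOKENS = 33.0


@dataclass
class MonthlyUsage:
    """Hypothetical usage volumes for one month."""
    audio_hours: float
    tts_characters: int
    image_input_tokens: int
    image_output_tokens: int


def estimate_cost(usage: MonthlyUsage) -> dict:
    """Return a per-model cost breakdown plus the total, in USD."""
    transcription = usage.audio_hours * TRANSCRIBE_USD_PER_AUDIO_HOUR
    speech = usage.tts_characters / 1_000_000 * VOICE_USD_PER_MILLION_CHARS
    image = (
        usage.image_input_tokens / 1_000_000 * IMAGE_USD_PER_MILLION_INPUT_TOKENS
        + usage.image_output_tokens / 1_000_000 * IMAGE_USD_PER_MILLION_OUTPUT_TOKENS
    )
    return {
        "transcription": transcription,
        "speech": speech,
        "image": image,
        "total": transcription + speech + image,
    }


# Sample volumes are made up for illustration.
usage = MonthlyUsage(
    audio_hours=1_000,
    tts_characters=5_000_000,
    image_input_tokens=2_000_000,
    image_output_tokens=10_000_000,
)
for item, usd in estimate_cost(usage).items():
    print(f"{item:>13}: ${usd:,.2f}")
```

With these sample volumes, image output tokens and transcription hours dominate the bill, so those are the inputs worth estimating most carefully.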

Integration Methods

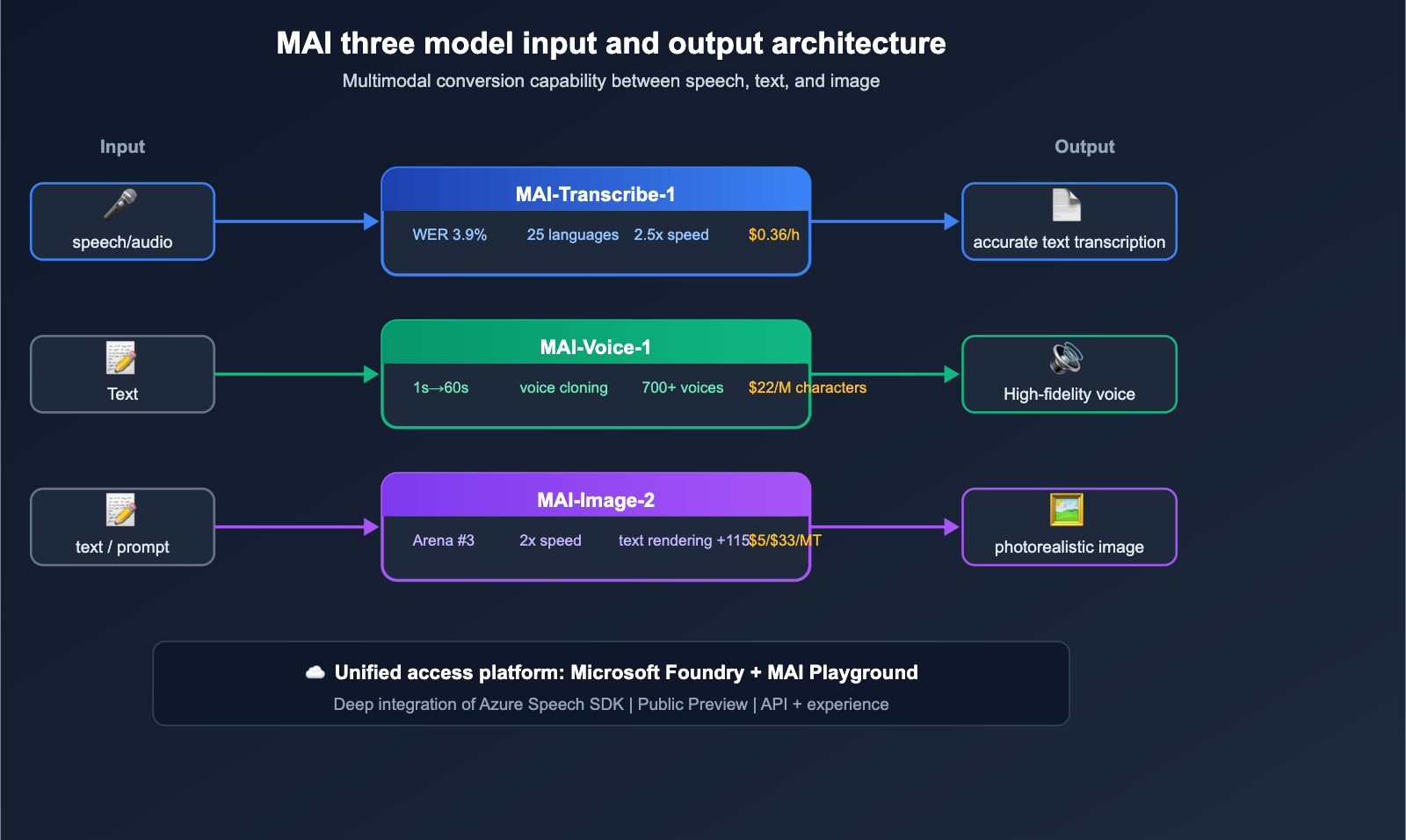

Method 1: Microsoft Foundry

All three models are available for API access via the Microsoft Foundry developer platform in public preview. Developers can call these models directly through the Foundry API endpoints.

Method 2: MAI Playground

MAI Playground is Microsoft's new model experience platform. Developers can use it to test MAI-Transcribe-1 and MAI-Voice-1 for free and quickly evaluate whether the models fit their use cases.

Method 3: Azure Speech Integration

MAI-Transcribe-1 and MAI-Voice-1 are deeply integrated into the Azure Speech service. Existing Azure users can call them directly via the Azure Speech SDK.

💰 Cost Optimization: When building multimodal AI applications, you'll often need to combine models from different providers for tasks like transcription, text generation, and image generation. Using the APIYI (apiyi.com) platform allows you to manage your API keys and usage centrally, saving you the hassle of registering and managing multiple platforms individually. The platform supports model access from various providers, including Microsoft, OpenAI, Anthropic, Alibaba Cloud, and more.

Analysis: The Impact of Microsoft's MAI Models on the AI Industry

Impact on the AI Model Market

1. Shifts in the Speech Recognition Landscape

With a Word Error Rate (WER) of ~3.9%, MAI-Transcribe-1 directly challenges OpenAI's GPT-Transcribe (~4.2%) and ElevenLabs' Scribe v2 (~4.3%). Combined with roughly 50% lower GPU costs, it's poised to quickly capture market share in the enterprise transcription sector.

2. Intensifying Competition in Text-to-Image

MAI-Image-2 has climbed into the top three on Arena.ai, solidifying a "Big Three" landscape in text-to-image generation: Google (Gemini 3.1 Flash), OpenAI (GPT Image 1.5), and Microsoft (MAI-Image-2). This puts even greater pressure on independent providers like Midjourney and Stability AI.

3. The Trend Toward "Full-Stack In-House" AI

Following in the footsteps of Google (Gemini series) and Meta (Llama series), Microsoft has begun building its own full-stack AI model capabilities. This signals that future competition in the AI market will increasingly concentrate among a few major tech giants.

Impact on Developers

- More Model Choices: The Microsoft ecosystem is no longer limited to just OpenAI.

- Increased Price Competition: Competition among multiple vendors will continue to drive API prices down.

- Multimodal Model Orchestration: Developers need to learn how to flexibly select and combine models from different providers based on specific scenarios.

🚀 Development Tip: With the rapid growth in AI model options, we recommend that developers use a unified access platform like APIYI (apiyi.com) to manage model invocations and avoid vendor lock-in. The platform provides a standard, OpenAI-compatible interface, meaning you can switch models simply by updating the `model` parameter.
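As a rough illustration of what switching models through the `model` parameter looks like, the Python sketch below builds two request bodies in the OpenAI Chat Completions shape (`model` plus `messages`) and confirms that only one field differs. The model identifiers are placeholders, not real model names, and no endpoint URL or authentication scheme is assumed; check the gateway's own documentation for those details.

```python
import json


def build_chat_request(model: str, prompt: str) -> dict:
    """Build a request body in the OpenAI Chat Completions format."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }


prompt = "Summarize this meeting transcript in three bullet points."

# Placeholder identifiers: substitute the names your provider actually exposes.
request_a = build_chat_request("placeholder-model-a", prompt)
request_b = build_chat_request("placeholder-model-b", prompt)

# Only the `model` field changes; the rest of the payload stays identical.
changed_fields = sorted(key for key in request_a if request_a[key] != request_b[key])

print(json.dumps(request_a, indent=2))
print("Fields that differ between the two requests:", changed_fields)
```

Keeping the request construction in one helper like this means a model swap is a configuration change rather than a code change.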

Microsoft MAI Model FAQ

Q1: What is the relationship between MAI models and OpenAI models?

MAI models are independently developed by Microsoft's MAI Super Intelligence team and have no connection to OpenAI. Microsoft is currently adopting a "dual-track" strategy: general-purpose Large Language Models will continue to use OpenAI's GPT-5.4, while the self-developed MAI series is being introduced for voice and image tasks. Following the renegotiation between Microsoft and OpenAI in 2025, the contractual clauses that restricted Microsoft from developing its own models were removed.

Q2: How much better is MAI-Transcribe-1 compared to Whisper?

In the FLEURS 25-language benchmark, MAI-Transcribe-1 achieves a Word Error Rate (WER) of approximately 3.9%, compared to about 7.6% for Whisper Large v3, showing a clear lead in accuracy. Additionally, the batch processing speed of MAI-Transcribe-1 is 2.5 times faster than the Azure Fast solution, with GPU costs reduced by about 50%. However, Whisper's advantage lies in being open-source and free, making it suitable for scenarios that are extremely cost-sensitive.
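The gap is easier to judge as a relative reduction in errors than as a difference in percentage points. The short Python sketch below computes that from the approximate WER figures quoted in this article. The function name is an illustrative assumption, and because the inputs are rounded, the results are indicative only.

```python
def relative_reduction(baseline_wer: float, new_wer: float) -> float:
    """Fraction of the baseline's word errors that the new model avoids."""
    if baseline_wer <= 0:
        raise ValueError("baseline_wer must be positive")
    return (baseline_wer - new_wer) / baseline_wer


MAI_TRANSCRIBE_WER = 3.9  # approximate FLEURS WER (%) quoted in this article

# Approximate FLEURS WER (%) for the other models, as quoted in this article.
quoted_wer = {
    "Whisper Large v3": 7.6,
    "Gemini 3.1 Flash": 4.9,
    "Scribe v2": 4.3,
    "GPT-Transcribe": 4.2,
}

for model_name, wer in quoted_wer.items():
    reduction = relative_reduction(wer, MAI_TRANSCRIBE_WER)
    print(f"vs {model_name}: {reduction:.1%} fewer word errors")
```

Against Whisper Large v3, the 3.7-point gap works out to roughly 49% fewer word errors, while the lead over GPT-Transcribe is closer to 7%.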

Q3: Can MAI-Image-2 replace DALL-E?

According to Arena.ai rankings, MAI-Image-2 (ranked 3rd) holds a higher overall position than DALL-E 3. It has a distinct advantage, particularly in text rendering and photorealism. However, DALL-E still offers unique performance in certain creative styles. For enterprise users, the deep integration of MAI-Image-2 within the Microsoft ecosystem may be a more compelling draw.

Q4: How can I quickly try out these three MAI models?

The fastest way is to visit the MAI Playground (Microsoft's new model experience platform) for a free trial. Formal API access requires going through the Microsoft Foundry developer platform. If your application needs to call multiple AI models simultaneously, you can use the APIYI (apiyi.com) platform to centrally manage API access from different providers, simplifying your development workflow.

Q5: When does Microsoft plan to release its own general-purpose Large Language Model?

According to public information, Microsoft is currently deploying an Nvidia GB200 chip cluster and plans to build frontier-level computing power within the next 12–18 months. It is expected that a self-developed general-purpose Large Language Model capable of competing with GPT-5 will be launched around 2027. Until then, the core Large Language Model for Copilot will continue to use OpenAI's GPT-5.4.

Summary of Microsoft MAI's 3 New Models

The Microsoft MAI team has delivered an impressive debut just five months after its inception:

- MAI-Transcribe-1: Ranks #1 on the FLEURS benchmark with a WER of ~3.9%, delivers a 2.5x speed boost, cuts costs by 50%, and is priced at $0.36/hour.

- MAI-Voice-1: Generates 60 seconds of audio in under 1 second on a single GPU, supports 10-second voice cloning, and includes 700+ preset voices.

- MAI-Image-2: Ranks #3 on the Arena.ai text-to-image leaderboard, features a 115-point improvement in text rendering, and supports complex layouts with photorealistic quality.

The release of these three models not only showcases Microsoft's in-house R&D capabilities but also signals an accelerating trend of "full-stack in-house development" among AI giants. For developers, as the variety of models continues to grow, using unified access platforms like APIYI (apiyi.com) to manage model invocation across multiple vendors will become a key strategy for boosting development efficiency and reducing switching costs.

📝 Author: APIYI Team | For more technical insights on AI models and API integration guides, please visit the APIYI Help Center: help.apiyi.com