Author's Note: An objective comparison of Claude Opus 4.6 and GPT-5.4 across 12 benchmarks, pricing, context windows, agent capabilities, and use cases to help developers make the right choice.

February and March 2026 saw the arrival of two heavyweight flagship models in the AI space: Anthropic's Claude Opus 4.6 (February 5th) and OpenAI's GPT-5.4 (March 5th). Both are the most powerful general-purpose models ever released by their respective companies, but their design philosophies and areas of strength are distinctly different.

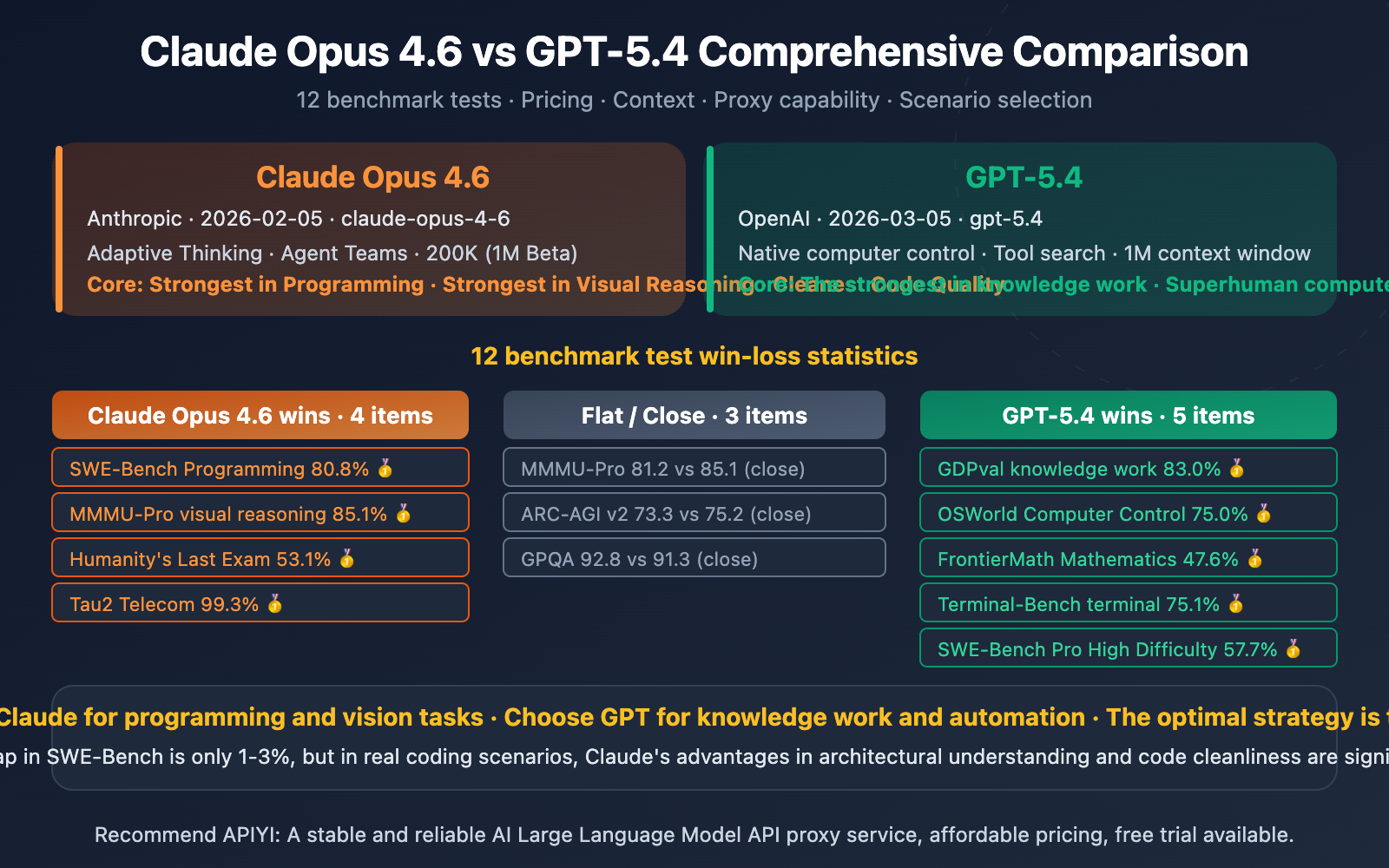

Benchmark results show: GPT-5.4 wins 5 categories, Claude Opus 4.6 wins 3 categories—however, Claude's lead in core dimensions like programming, reasoning, and code quality holds more practical value.

Core Value: After reading this article, you'll know exactly which model to choose for different scenarios like programming, reasoning, automation, and vision.

Claude Opus 4.6 vs GPT-5.4 Core Data Comparison

| Comparison Dimension | Claude Opus 4.6 | GPT-5.4 | Notes |

|---|---|---|---|

| Release Date | 2026-02-05 | 2026-03-05 | 1 month apart |

| Model ID | claude-opus-4-6 | gpt-5.4 | — |

| Context Window | 200K (1M Beta) | 1,000K | GPT officially supports 1M |

| Max Output | 128K | 128K | Same |

| Input Price | $5.00/M | $2.50/M | GPT is 50% cheaper |

| Output Price | $25.00/M | $15.00/M | GPT is 40% cheaper |

| Cached Input | $0.50/M | $0.25/M | GPT is 50% cheaper |

| Reasoning Mode | Adaptive Thinking (Adaptive) | 5-level reasoning (none→xhigh) | Each has its own characteristics |

| Computer Control | ✅ (72.7%) | ✅ (75.0%) | GPT surpasses human |

| Agent Teams | ✅ Agent Teams | ❌ | Claude exclusive |

| Tool Search | ❌ | ✅ Token reduction 47% | GPT exclusive |

| Finance Plugins | ❌ | ✅ Excel/Sheets | GPT exclusive |

Design Philosophy Differences Between Claude Opus 4.6 and GPT-5.4

The design philosophies of these two models are completely different:

Claude Opus 4.6 follows a "Deep Intelligence" path. Adaptive Thinking allows the model to automatically determine reasoning depth based on problem complexity, without manual budget setting. The Agent Teams feature enables a main Claude instance to spawn multiple independent sub-agents for parallel work, coordinating through shared task lists and messaging systems. This architectural design is better suited for complex programming tasks requiring deep understanding and long-chain reasoning.

GPT-5.4 follows a "Versatile Tool User" path. It's the first to integrate programming (inherited from GPT-5.3 Codex), computer control, full-resolution vision, and tool search into a single general-purpose model. The tool search mechanism lets the model look up tool definitions on-demand, reducing Token usage by 47%. Finance plugins (Moody's, MSCI, etc.) and ChatGPT for Excel target enterprise-level professional work.

🎯 Selection Tip: Their areas of strength are almost complementary. Through APIYI apiyi.com, you can use one API key to call both Claude Opus 4.6 and GPT-5.4, switching flexibly based on the scenario.

Claude Opus 4.6 vs GPT-5.4 Benchmark Detailed Analysis

Claude Opus 4.6 vs GPT-5.4 Complete Benchmark Table

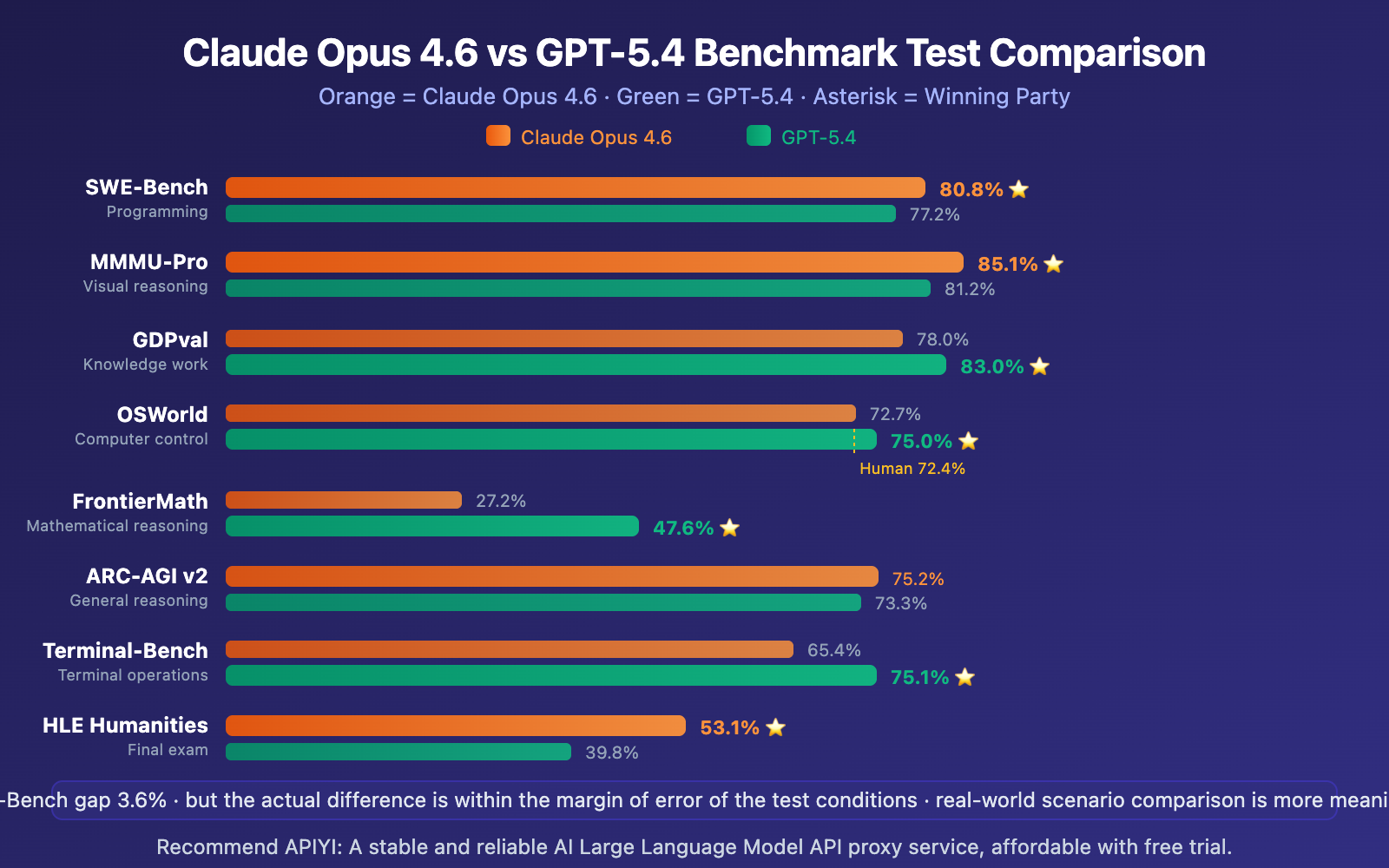

| Benchmark | Claude Opus 4.6 | GPT-5.4 | Gap | Winner |

|---|---|---|---|---|

| SWE-Bench Verified | 80.8% | 77.2% | +3.6% | Claude |

| SWE-Bench Pro (High Difficulty) | ~45.9% | 57.7% | +11.8% | GPT |

| MMMU-Pro Visual Reasoning | 85.1% | 81.2% | +3.9% | Claude |

| GDPval Knowledge Work | 78.0% | 83.0% | +5.0% | GPT |

| OSWorld Computer Control | 72.7% | 75.0% | +2.3% | GPT |

| FrontierMath Mathematics | 27.2% | 47.6% | +20.4% | GPT |

| ARC-AGI v2 General Reasoning | 75.2% | 73.3% | +1.9% | Claude |

| Terminal-Bench Terminal | 65.4% | 75.1% | +9.7% | GPT |

| Humanity's Last Exam | 53.1% | 39.8% | +13.3% | Claude |

| Tau2 Telecom | 99.3% | 98.9% | +0.4% | Claude |

| GPQA Graduate Reasoning | 91.3% | 92.8% | +1.5% | GPT |

| BrowseComp Web Browsing | 84.0% | 82.7% | +1.3% | Claude |

It's important to note: The differences between 80.0%, 80.6%, and 80.8% on SWE-Bench are actually within the margin of error of the testing conditions. In other words, on standardized programming benchmarks, the two models are already converging. The real differences lie in code quality, architectural understanding, and actual development experience.

🎯 Practical Testing Advice: Benchmarks are just a starting point for reference. We recommend getting free credits through APIYI apiyi.com to compare the actual performance of both models in your own projects—that's more valuable than any benchmark.

Claude Opus 4.6 vs GPT-5.4 Unique Capabilities Comparison

Claude Opus 4.6 Unique Advantages

1. Agent Teams

The Agent Teams feature introduced in Claude Opus 4.6 is currently unique in the AI field. A main Claude instance (Lead) can spawn multiple independent sub-agents (Teammates), each with its own complete, independent context window, collaborating in parallel through a shared task list and messaging system.

For deep research tasks, this multi-agent technology boosts performance by about 15 percentage points. This architecture is particularly well-suited for parallel refactoring of large codebases—the main agent handles planning while sub-agents work on different modules.

2. Adaptive Thinking

Unlike GPT-5.4's manual 5-level reasoning scale, Claude's Adaptive Thinking lets the model automatically judge problem complexity and dynamically allocate reasoning depth. At the default high level, Claude almost always activates chain-of-thought reasoning; for simple problems, it skips this automatically, saving tokens and reducing latency.

Adaptive Thinking also supports Interleaved Thinking—sprinkling reasoning steps between tool calls—which is especially effective for agent-based workflows.

GPT-5.4 Unique Advantages

1. Native Computer Control

GPT-5.4 is OpenAI's first general-purpose model with built-in native computer control capabilities. Its OSWorld score of 75.0% directly surpasses the human baseline of 72.4%. It can operate browsers and desktop applications through both Playwright code and direct keyboard/mouse instructions.

2. Tool Search

In systems with many tools, the traditional approach requires sending all tool definitions to the model at once. GPT-5.4's Tool Search lets the model look up tool definitions on-demand, reducing token usage by 47% while maintaining accuracy.

3. Deep Financial Industry Integration

The integration of ChatGPT for Excel/Google Sheets with Moody's/MSCI/FactSet data gives GPT-5.4 an ecosystem advantage in financial analysis that Claude currently can't match. Internal investment banking benchmarks improved from 43.7% to 87.3%.

🎯 API Access: Both Claude Opus 4.6 and GPT-5.4 can be called through the unified interface at APIYI apiyi.com. GPT-5.4 pricing matches the official rates ($2.50/$15.00), with a 10% bonus on top-ups of $100 or more.

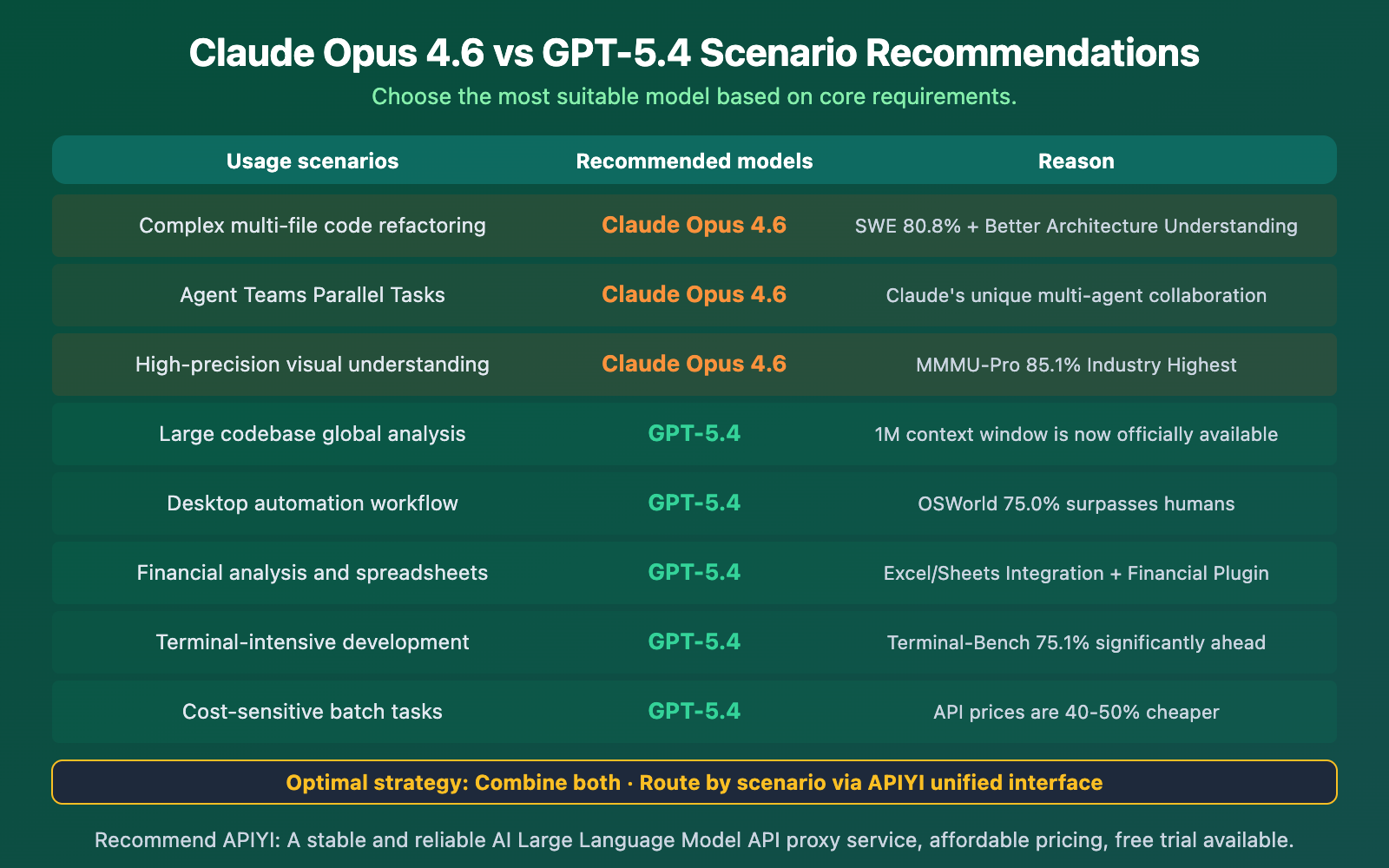

Claude Opus 4.6 vs GPT-5.4 Scenario Selection Guide

Claude Opus 4.6 vs GPT-5.4 API Integration Examples

import openai

client = openai.OpenAI(

api_key="YOUR_API_KEY",

base_url="https://vip.apiyi.com/v1"

)

# Complex code refactoring → Claude Opus 4.6

refactor = client.chat.completions.create(

model="claude-opus-4-6",

messages=[{"role": "user", "content": "Refactor this module's dependency injection"}]

)

# Large-scale project analysis → GPT-5.4

analysis = client.chat.completions.create(

model="gpt-5.4",

messages=[{"role": "user", "content": "Analyze security vulnerabilities across the entire project"}]

)

Recommendation: Register an account at APIYI apiyi.com to access both flagship models through a single platform. GPT-5.4 pricing matches the official rates, with a 10% bonus on top-ups of $100 or more. Switching models is as simple as changing one parameter.

Frequently Asked Questions

Q1: Which is better for programming, Claude Opus 4.6 or GPT-5.4?

It depends on the dimension. On the standard SWE-Bench programming benchmark, Claude leads with 80.8% vs 77.2%, and it also has superior code quality and multi-file refactoring capabilities. However, GPT-5.4 overtakes it on the high-difficulty SWE-Bench Pro with 57.7% vs ~45.9%, and also leads significantly in terminal operation tasks (75.1% vs 65.4%). For most developers, the programming capabilities of the two models are already converging.

Q2: Is the price difference significant? How should I choose?

GPT-5.4 is comprehensively cheaper: Input is $2.50 vs $5.00/M (50% less), and output is $15.00 vs $25.00/M (40% less). If cost is the primary consideration, GPT-5.4 is more suitable. If your project demands extremely high code quality and architectural understanding, Claude's premium is worth it. We recommend using APIYI (apiyi.com) to mix and match both models based on your scenarios to optimize costs.

Q3: How can I use both models through a single platform?

Register an account on APIYI (apiyi.com):

- Get a unified API key

- Set the

base_urltohttps://vip.apiyi.com/v1 - For refactoring tasks:

model="claude-opus-4-6" - For large project analysis:

model="gpt-5.4" - For daily tasks:

model="gpt-5.3-chat-latest"(most cost-effective)

Top up $100 and get 10% bonus. One account to call all mainstream models.

Conclusion

The core takeaways for Claude Opus 4.6 vs GPT-5.4:

- Choose Claude for Programming & Visual Reasoning: Industry-leading scores of 80.8% on SWE-Bench and 85.1% on MMMU-Pro. Produces cleaner code, and its Agent Teams multi-agent collaboration is a unique advantage.

- Choose GPT for Knowledge Work & Automation: Surpasses human performance with 83.0% on GPQAval and 75.0% on OSWorld. Its 1M context window is now officially usable, and its API pricing is 40-50% cheaper.

- The Smartest Strategy is to Combine Them: Their strengths are almost complementary—use Claude for refactoring, GPT for large project analysis and automation, and GPT-5.3 Instant for daily tasks to save money.

The 80.8% vs 77.2% gap on SWE-Bench might seem small, but in real-world development, Claude's advantages in architectural understanding and code cleanliness are still noticeable. GPT-5.4, on the other hand, has built its advantage in another dimension with its 1M context, computer control capabilities, and lower pricing.

We recommend accessing both flagship models through APIYI (apiyi.com). Use one API key to call them all, with a 10% bonus on top-ups of $100 or more.

📚 References

-

GPT-5.4 vs Claude Opus 4.6 Programming Comparison: SWE-Bench, Code Quality, and Agent Capabilities from a Developer's Perspective

- Link:

blog.getbind.co/gpt-5-4-vs-claude-opus-4-6-which-one-is-better-for-coding/ - Description: The most detailed programming comparison, including SWE-Bench Pro and Terminal-Bench data.

- Link:

-

GPT-5.4 vs Opus 4.6 vs Gemini 3.1 Pro Three-Way Comparison: Comprehensive 12-Benchmark Analysis

- Link:

digitalapplied.com/blog/gpt-5-4-vs-opus-4-6-vs-gemini-3-1-pro-best-frontier-model - Description: Covers pricing, context, benchmark tests, and strengths/weaknesses.

- Link:

-

Claude Opus 4.6 Official Release Announcement: Details on New Features like Agent Teams and Adaptive Thinking

- Link:

anthropic.com/news/claude-opus-4-6 - Description: First-hand information on Claude's unique features.

- Link:

-

Claude Opus 4.6 Adaptive Thinking API Documentation: Developer Integration Guide

- Link:

platform.claude.com/docs/en/build-with-claude/adaptive-thinking - Description: Learn the specifics of using Adaptive Thinking, including parameters and setup.

- Link:

Author: APIYI Technical Team

Technical Discussion: Feel free to discuss in the comments. For more resources, visit the APIYI Documentation Center at docs.apiyi.com.