Author's Note: A deep dive into the real reasons behind the shutdown of OpenAI's Sora, the competitive pressure from Seedance 2.0, and an analysis of why Anthropic’s strategy of avoiding image and video models—focusing instead on compute concentration—offers a vital lesson for the industry.

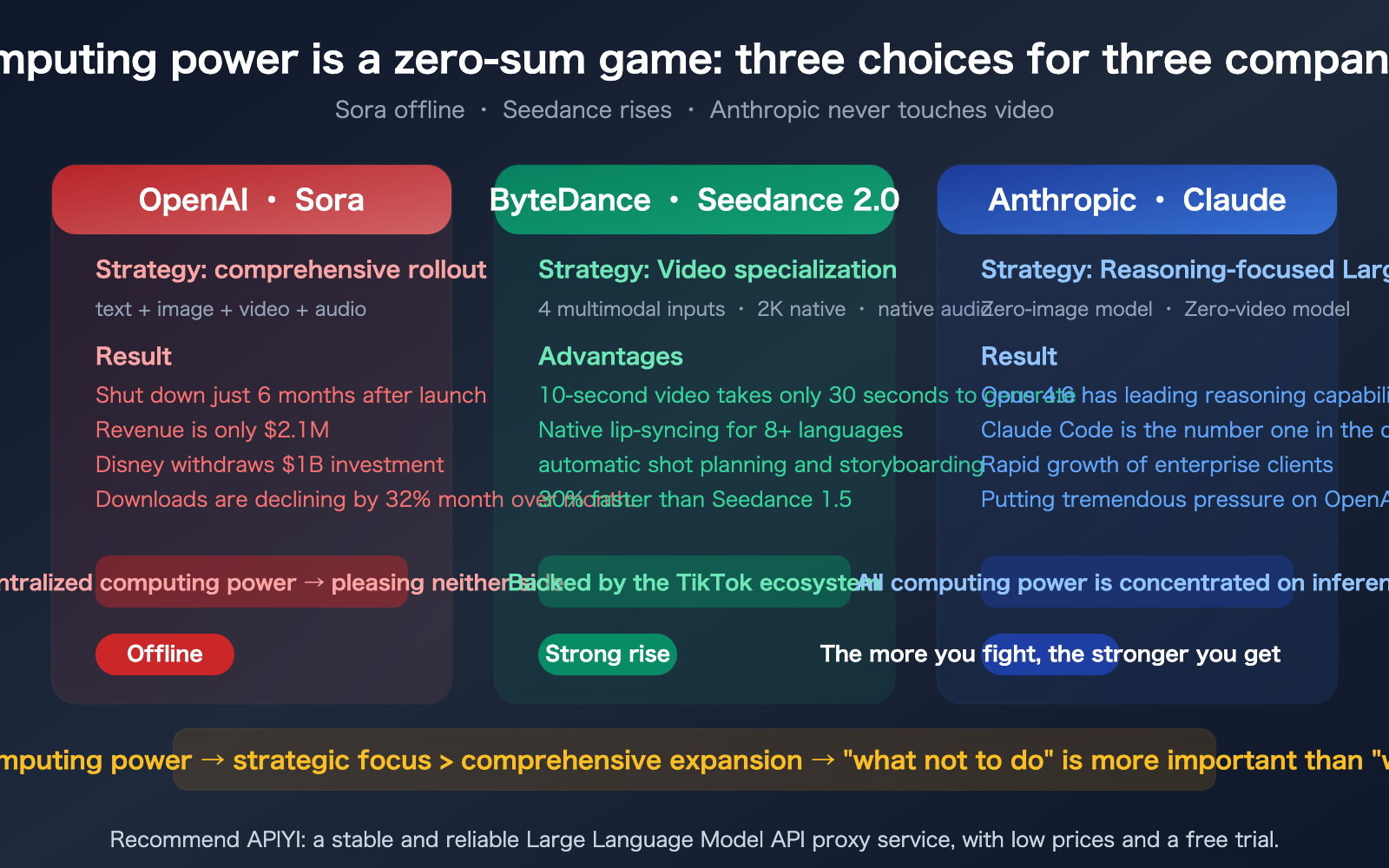

On March 24, 2026, OpenAI officially announced the shutdown of Sora. In roughly six months on the market, the app generated only about $2.1 million in total revenue, and Disney dropped its plan to invest $1 billion. Meanwhile, ByteDance’s Seedance 2.0 has rapidly captured the market with native 2K resolution, four input modalities, and generation times of around 30 seconds. But there is a deeper point to consider: Anthropic has never built an image or video generation model, yet it continues to gain momentum in the AI race. This article uses the lens of compute economics to explain the logic behind Sora’s shutdown, the rise of Seedance, and Anthropic’s strategic focus.

Core Value: Understand the compute dilemma in the AI video generation market in 3 minutes, and why "what you don't do" is a more critical strategic decision than "what you do."

Key Facts Behind the Shutdown of Sora 2

Let’s take a clear look at what happened before we dive into the "why."

| Fact | Details | Impact |
|---|---|---|
| Shutdown Date | Officially announced March 24, 2026 | Only 6 months after launch |
| Cumulative Revenue | ~$2.1M from in-app purchases | Nowhere near enough to cover compute costs |
| Download Trend | Peak of 3.33M in Nov → 1.13M in Feb | 32% month-over-month decline |
| Disney Exit | Withdrew $1B investment plan | Lost the most important content partner |
| Official Stance | "Rising compute demand, focusing on world simulation to advance robotics" | Compute reallocated to text/reasoning models |
| CEO Stance | Sam Altman told staff that shutting down Sora frees up resources for next-gen models | Video is clearly not a priority |

In their farewell statement, the Sora team said: "We’re saying goodbye to Sora. Thank you to everyone who used Sora to create, share, and build community. What you did with Sora matters, and we know this news is disappointing."

Meanwhile, CNBC pointed out that OpenAI is cutting high-cost projects ahead of its IPO, making the shutdown of Sora part of a broader cost-control strategy.

3 Deep Reasons for the Sora 2 Shutdown

Reason 1: The Compute Black Hole of Video Generation

This is the fundamental issue. Per request, video generation consumes orders of magnitude more compute than text generation:

| Task Type | Compute Time per Request | Output per Dollar | GPU Utilization Efficiency |
|---|---|---|---|
| Text Chat | Millisecond-level | Thousands of chats | Extremely High |
| Image Generation | Second-level | Hundreds of images | High |
| Video Generation (10 s) | Minute-level | Dozens of videos | Low |

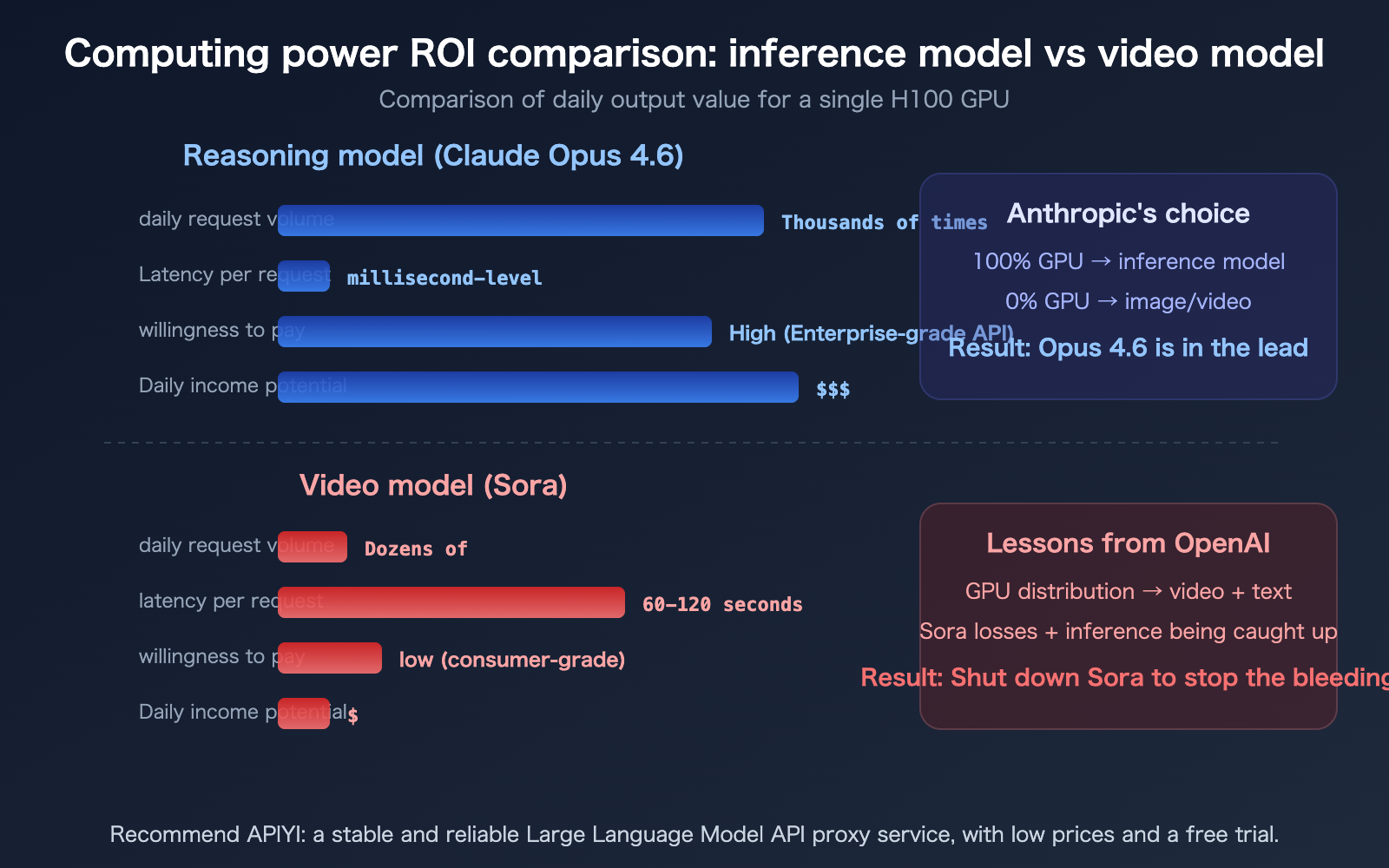

Every Sora request placed a heavy load on OpenAI's GPU infrastructure, and subscription revenue came nowhere close to covering the cost. More importantly, the same GPUs could generate significantly more commercial value serving text and reasoning models such as GPT-5 or o3.
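To make the "Output per Dollar" column concrete, here is a minimal Python sketch of the arithmetic behind it: outputs per dollar is simply the inverse of (GPU-seconds per request × GPU price per second). Every number in it, including the $2.00/hour GPU price and the per-request GPU-seconds, is a hypothetical placeholder chosen only to match the table's orders of magnitude; none is a measured cost for Sora or any other model.

```python
GPU_COST_PER_HOUR = 2.00  # hypothetical GPU rental price in USD, not a quoted rate

# Hypothetical GPU-seconds per request, picked only to match the table's
# orders of magnitude (sub-second, seconds, about a minute).
GPU_SECONDS_PER_REQUEST = {
    "text_chat": 0.5,
    "image": 5.0,
    "video_10s": 60.0,
}


def outputs_per_dollar(gpu_seconds: float, gpu_cost_per_hour: float) -> float:
    """Requests that one dollar of GPU time buys (ignores batching and idle time)."""
    cost_per_request = gpu_seconds * gpu_cost_per_hour / 3600.0
    return 1.0 / cost_per_request


if __name__ == "__main__":
    for task, seconds in GPU_SECONDS_PER_REQUEST.items():
        print(f"{task:>10}: ~{outputs_per_dollar(seconds, GPU_COST_PER_HOUR):,.0f} per dollar")
```

With these placeholders the script reports roughly 3,600, 360, and 30 outputs per dollar. The 120x gap between a chat and a video depends only on the ratio of GPU-seconds, so changing the price placeholder shifts the absolute numbers but not the comparison.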

Reason 2: Being Outclassed by Competitors like Seedance 2.0

ByteDance released Seedance 2.0 in February 2026, and it outperformed Sora in almost every dimension:

| Comparison Dimension | Sora | Seedance 2.0 |
|---|---|---|
| Output Resolution | 1080p | Native 2K (2048×1080) |
| Generation Time (10 s clip) | ~60-120 s | ~30 s |
| Input Modalities | Text + image | Text + up to 9 images + up to 3 videos + up to 3 audio clips |
| Native Audio | Supported (synchronized dialogue and sound effects) | Supported (lip-sync in 8+ languages) |
| Camera Control | Basic | Automatic storyboarding and camera planning |
| Ecosystem Advantage | Standalone app | Backed by the TikTok / Douyin content ecosystem |
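Seedance's multi-reference input (9 images, 3 videos, 3 audio clips per request, per the table) is the kind of constraint worth checking client-side before a request goes out. The sketch below is hypothetical throughout: VideoRequest, MAX_REFERENCES, and check_limits are illustrative names, and the request shape is not ByteDance's actual API schema.

```python
from dataclasses import dataclass, field

# Reference-input limits as listed in the comparison table above
# (9 images, 3 videos, 3 audio clips). The request shape below is
# hypothetical and is not ByteDance's actual API schema.
MAX_REFERENCES = {"images": 9, "videos": 3, "audio": 3}


@dataclass
class VideoRequest:
    prompt: str
    images: list[str] = field(default_factory=list)  # reference image URLs
    videos: list[str] = field(default_factory=list)  # reference video URLs
    audio: list[str] = field(default_factory=list)   # reference audio URLs


def check_limits(req: VideoRequest) -> list[str]:
    """Return one message per exceeded limit; an empty list means the request fits."""
    problems = []
    for kind, limit in MAX_REFERENCES.items():
        count = len(getattr(req, kind))
        if count > limit:
            problems.append(f"too many {kind}: {count} > {limit}")
    return problems


if __name__ == "__main__":
    ok = VideoRequest("A drone shot over a coastline", images=["ref1.png"], audio=["voice.mp3"])
    bad = VideoRequest("Same shot", videos=["a.mp4", "b.mp4", "c.mp4", "d.mp4"])
    print(check_limits(ok))   # []
    print(check_limits(bad))  # ['too many videos: 4 > 3']
```

Returning a list of messages rather than raising on the first problem lets a UI show every violation at once.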

Seedance 2.0 can draw on the TikTok ecosystem, which gives it ready-made video consumption scenarios and a massive user base. Sora, as a standalone app, lacked a content distribution channel, leaving users with no clear outlet for the videos they generated.

Beyond Seedance, competitors such as Google’s Veo 3, Kuaishou’s Kling 2.0, and Luma’s Ray 2 have all been on the market since 2025. The video generation track is now extremely crowded: Sora’s first-mover advantage has been erased, and in some respects rivals have pulled ahead.

Reason 3: OpenAI’s Strategic Pivot

OpenAI is facing immense competitive pressure from Anthropic. Reports from Bloomberg and CNBC have noted that Anthropic’s AI systems are gaining rapid popularity among enterprise clients and software engineers, posing a direct commercial threat to OpenAI.

Under this pressure, OpenAI must concentrate its compute power on the core battlefield: text reasoning and coding capabilities. Every GPU used to render a Sora video is one less GPU available to train GPT-5 or optimize o3.

🎯 Industry Insight: The shutdown of Sora doesn't mean "video generation is dead," but rather "it’s not cost-effective for OpenAI to do video generation." Spreading compute across too many product lines prevents any single one from reaching its full potential.

If your AI application needs to call both text and video models, we recommend managing them in one place through APIYI (apiyi.com), a single platform that covers Seedance, Kling, Claude, and more.

Why Anthropic Never Touches Image and Video Models

This is a strategic choice that deserves a deeper look. Since its founding, Anthropic has never released an image or video generation model. It has not even taken the route Google took with Nano Banana (Gemini image generation), which adds image capabilities to a text model as a "sidecar."

Anthropic’s Strategy of Concentrated Compute

Anthropic’s logic is straightforward: Compute is finite, and it must be concentrated on the most valuable directions.

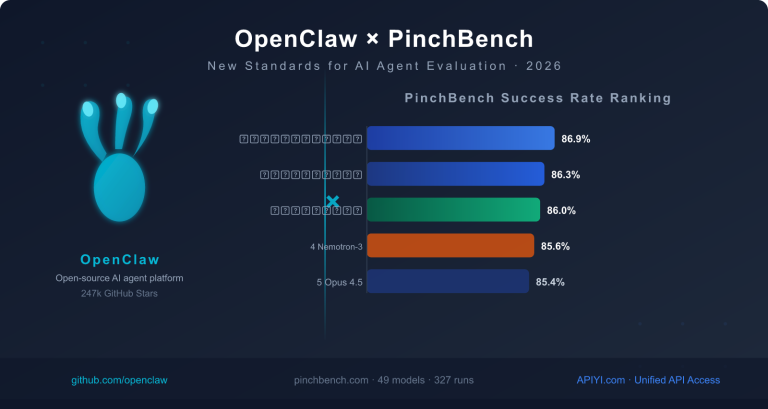

For Anthropic, the most valuable directions are reasoning and coding: these are the core capabilities enterprise clients are willing to pay a premium for. Opus 4.6’s lead in reasoning owes much to the fact that Anthropic has not diverted its GPUs toward image and video models.

This stands in stark contrast to OpenAI:

| Strategic Dimension | OpenAI (Distributed) | Anthropic (Concentrated) |
|---|---|---|
| Product Line | GPT + DALL·E + Sora + Whisper + Jukebox | Claude (text and code output only) |
| Compute Allocation | Text + Image + Video + Audio | 100% Text Reasoning |
| Core Competitiveness | "Can do everything" | "Best-in-class reasoning and code" |
| Business Model | Consumer + API + Enterprise | API + Enterprise focus (High ARPU) |
| Sora Result | Shut down after 6 months, $2.1M revenue | N/A |
| Claude Code Result | N/A | Market leader in coding agents |

Why the ROI of Reasoning Models Far Exceeds Video Models

From a business perspective, the return on compute for reasoning models versus video models isn't even in the same league:

- Reasoning Models: Enterprise clients pay per token, offering high profit margins, high usage frequency, and easy integration into workflows.

- Video Models: Consumer willingness to pay is low (as proven by Sora), compute consumption is massive, and usage frequency is low.

An H100 GPU running Claude Opus 4.6 can serve thousands of enterprise-level reasoning requests a day, generating substantial API revenue. The same GPU used for video generation might produce only a few dozen videos, and most users are not willing to pay for them.
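The comparison above is easy to turn into a back-of-envelope revenue model: requests per GPU-day × price per request × the share of requests that are actually paid for. This Python sketch is illustrative only. The volumes (2,000 for "thousands," 36 for "a few dozen") and the 30% paying share are hypothetical placeholders; the $0.06 per response and $1.00 per 10-second Sora clip reuse figures quoted elsewhere in this article.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    requests_per_day: float   # hypothetical output of one GPU per day
    price_per_request: float  # USD per request
    paying_fraction: float    # hypothetical share of requests that generate revenue

    def daily_revenue(self) -> float:
        return self.requests_per_day * self.price_per_request * self.paying_fraction


# Placeholders: 2,000 stands in for "thousands" of requests, 36 for "a few dozen"
# videos. $0.06 is the ~1,000-token response cost and $1.00 the 10 s Sora price,
# both as quoted in this article; the 30% paying share is purely illustrative.
reasoning = Workload("reasoning API", 2000, 0.06, 1.0)
video = Workload("10 s video", 36, 1.00, 0.3)

if __name__ == "__main__":
    for w in (reasoning, video):
        print(f"{w.name:>13}: ${w.daily_revenue():,.2f} per GPU-day")
```

Under these assumptions the reasoning workload earns about $120 per GPU-day against about $11 for video; even if every video were paid for, the video line would reach only $36.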

The Signal Behind Anthropic’s Lack of Image Generation

Despite leaked configuration files showing internal function names like create_image and edit_image, Anthropic has yet to officially release any image generation capabilities. Manifold’s prediction market regarding "Will Anthropic release an image generation model by 2026?" continues to trend downward.

Anthropic’s institutional culture and resource allocation strategy are both pushing in the same direction: perfecting reasoning capabilities rather than spreading resources thin to chase images and video.

🎯 Developer Tip: Just because Anthropic doesn't do image/video doesn't mean your application can't have these capabilities. Through APIYI (apiyi.com), you can simultaneously access Claude (reasoning), Nano Banana 2 (image generation), and Seedance (video generation), leveraging the complementary strengths of different models.
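A simple way to apply this "complementary models" idea in your own code is a task-to-model routing table, so each request goes to the model best suited to it. The Python sketch below assumes hypothetical model identifiers (claude-opus-4-6, nano-banana-2, seedance-2.0); check your provider's model list for the exact names.

```python
# Hypothetical task-to-model routing table. The model identifiers are
# placeholders; check your provider's model list for the exact names.
ROUTES = {
    "reasoning": "claude-opus-4-6",
    "code": "claude-opus-4-6",
    "image": "nano-banana-2",
    "video": "seedance-2.0",
}


def pick_model(task: str) -> str:
    """Return the model configured for a task, failing loudly on unknown tasks."""
    try:
        return ROUTES[task]
    except KeyError:
        raise ValueError(f"no model configured for task {task!r}") from None


if __name__ == "__main__":
    for task in ("reasoning", "image", "video"):
        print(task, "->", pick_model(task))
```

Keeping the mapping in one table means that when a model is retired, as Sora was, the fix is a one-line change rather than a hunt through the codebase.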

The Computational Economics of the AI Video Generation Sector

The shutdown of Sora has served as a wake-up call for the entire AI video generation industry: there is a massive gap between the computational costs of video models and their commercial viability.

AI Video Generation API Pricing Comparison

| Model | Price per Second | 10 s Video Cost | Generation Time | Resolution |
|---|---|---|---|---|
| Sora | $0.10/sec | $1.00 | 60-120 s | 1080p |
| Kling 2.0 | $0.07/sec | $0.70 | Medium | 1080p |
| Ray 2 | ~$0.05-0.16/sec | $0.50-1.62 | 47-167 s | 1080p |
| Ray 2 Flash | ~$0.02-0.05/sec | $0.17-0.54 | 30-53 s | 720p |
| Seedance 2.0 | Undisclosed | Tiered pricing | ~30 s | 2K |
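For budgeting, the per-second prices in the table can be turned into a small clip-cost calculator. This is a minimal Python sketch using the table's listed rates; Seedance 2.0 is left out because its pricing is undisclosed. The Ray 2 and Ray 2 Flash per-second rates appear to be rounded, so computed 10-second costs can differ by a few cents from the table's 10 s column (for example $0.20-0.50 versus $0.17-0.54 for Ray 2 Flash).

```python
# Per-second list prices (low, high) in USD, as shown in the pricing table.
# Seedance 2.0 is omitted because its pricing is undisclosed.
PRICE_PER_SECOND = {
    "Sora": (0.10, 0.10),
    "Kling 2.0": (0.07, 0.07),
    "Ray 2": (0.05, 0.16),
    "Ray 2 Flash": (0.02, 0.05),
}


def clip_cost(model: str, seconds: float) -> tuple[float, float]:
    """Return the (low, high) USD cost of a clip of the given length."""
    low, high = PRICE_PER_SECOND[model]
    return round(low * seconds, 2), round(high * seconds, 2)


def cheapest(seconds: float) -> str:
    """Model with the lowest worst-case (high-end) price for this clip length."""
    return min(PRICE_PER_SECOND, key=lambda m: clip_cost(m, seconds)[1])


if __name__ == "__main__":
    for model in PRICE_PER_SECOND:
        low, high = clip_cost(model, 10)
        print(f"{model:>11}: ${low:.2f}-{high:.2f} for 10 s")
    print("cheapest worst case for 10 s:", cheapest(10))
```

Ranking by the high end of each range is a conservative choice; rank by the low end instead if your typical settings sit at the cheaper tier.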

To put this in perspective with text model costs: Claude Opus 4.6 output tokens cost approximately $60 per million tokens. A complete response of about 1,000 output tokens therefore costs about $0.06, the same as just 0.6 seconds of Sora video at $0.10/sec.
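The same comparison, worked in Python. The prices are the figures quoted in this article ($60 per million output tokens, $0.10 per second of Sora video), not values looked up from a live price list, and input tokens are ignored for simplicity.

```python
OPUS_OUTPUT_USD_PER_MTOK = 60.0  # output-token price as quoted in this article
SORA_USD_PER_SECOND = 0.10       # Sora API price from the pricing table
RESPONSE_TOKENS = 1_000          # size of one complete response, as assumed above


def text_cost(output_tokens: int, usd_per_mtok: float) -> float:
    """USD cost of a response, counting output tokens only."""
    return output_tokens / 1_000_000 * usd_per_mtok


def video_seconds_for(usd: float, usd_per_second: float) -> float:
    """Seconds of video the same money buys."""
    return usd / usd_per_second


if __name__ == "__main__":
    cost = text_cost(RESPONSE_TOKENS, OPUS_OUTPUT_USD_PER_MTOK)
    seconds = video_seconds_for(cost, SORA_USD_PER_SECOND)
    responses_per_clip = 10 * SORA_USD_PER_SECOND / cost  # responses costing as much as one 10 s clip
    print(f"${cost:.2f} per response = {seconds:.1f} s of Sora video")
    print(f"one 10 s clip costs as much as ~{responses_per_clip:.0f} responses")
```

Put the other way around, one 10-second Sora clip costs about as much as 17 full Opus responses at these prices.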

Who Will Survive in the Video Sector?

The shutdown of Sora doesn't mean AI video generation has no future; rather, it highlights a critical requirement: only companies with an established content ecosystem can survive in the video sector long-term.

- ByteDance (Seedance): Backed by TikTok and Douyin, it naturally possesses video consumption scenarios and a massive user base.

- Google (Veo): Backed by YouTube, the world's largest video platform.

- Kuaishou (Kling): A major Chinese short-video platform with a closed-loop ecosystem.

In contrast, OpenAI lacks its own content platform—videos generated by Sora lack a consumption outlet, leaving users unsure of where to post their creations.

Note: Even though Sora is offline, the video generation API market remains active. Models like Kling, Seedance, and Ray 2 can be accessed uniformly through APIYI (apiyi.com), allowing you to leverage multiple video generation capabilities with a single API key.

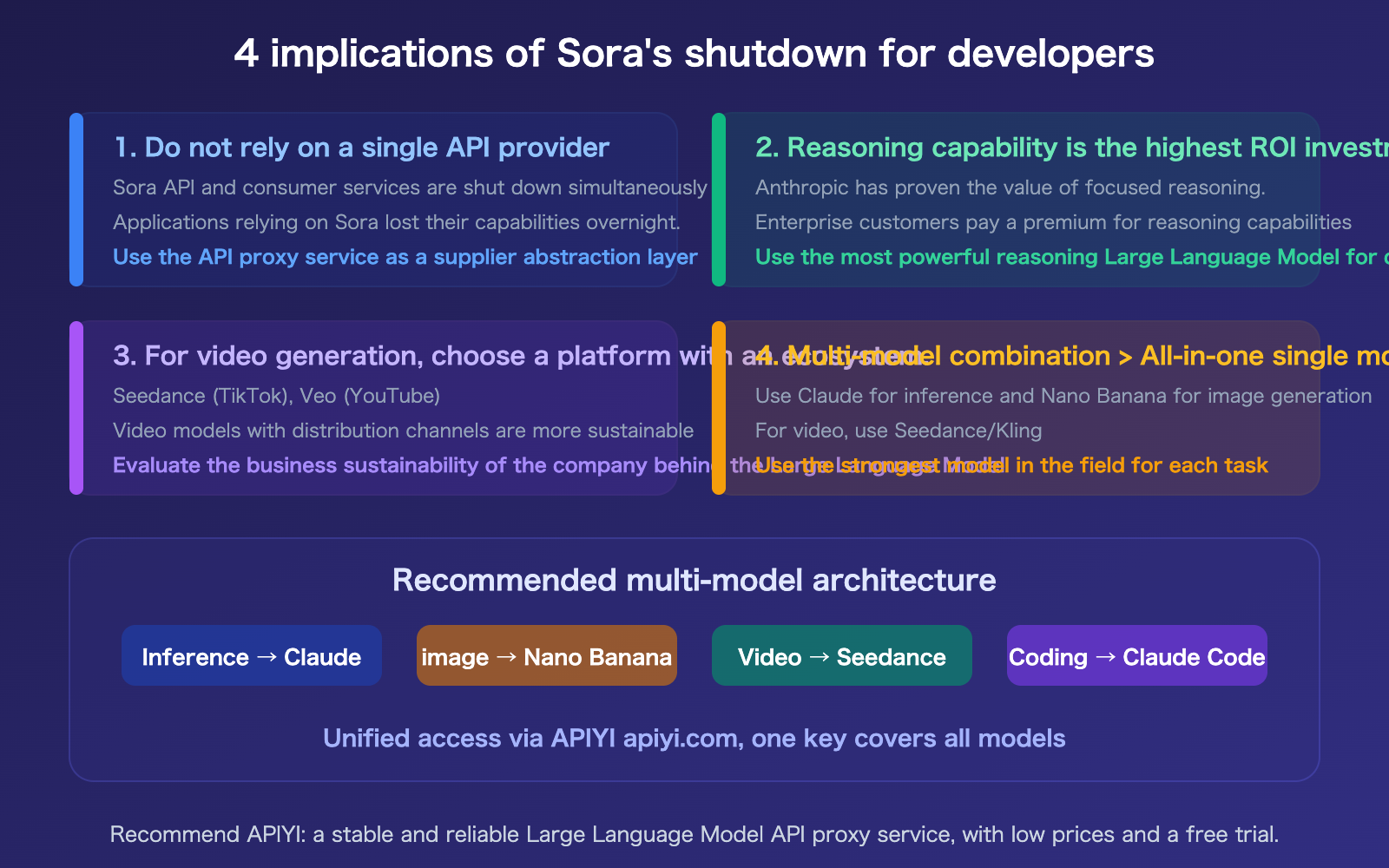

Insights for Developers

FAQ

Q1: What happens to my Sora API integration now that Sora is offline?

Since both the Sora API and consumer-facing services have been shut down, all existing API integrations will stop working. We recommend migrating immediately to alternatives: Seedance 2.0 (best quality and speed), Kling 2.0 (best value at $0.07/sec), or Ray 2 Flash (most affordable, starting at $0.02/sec). You can quickly switch to these models via APIYI (apiyi.com) by simply updating the model name in your code.
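When a model can disappear on short notice, it helps to call video models through an ordered fallback chain instead of hard-coding one. The Python sketch below follows the preference order in the answer above, but everything else is hypothetical: the model identifiers are placeholders, and the generate callable stands in for whatever client function your provider actually exposes.

```python
from typing import Callable, Sequence

# Hypothetical signature: a provider function takes (model, prompt) and returns
# a job or video identifier, raising an exception when the model is unavailable.
Generate = Callable[[str, str], str]

# Preference order from the FAQ answer; the identifiers are placeholders.
FALLBACK_ORDER = ("seedance-2.0", "kling-2.0", "ray-2-flash")


def generate_with_fallback(generate: Generate, prompt: str,
                           models: Sequence[str] = FALLBACK_ORDER) -> tuple[str, str]:
    """Try each model in order; return (model, result) from the first that succeeds."""
    errors = []
    for model in models:
        try:
            return model, generate(model, prompt)
        except Exception as exc:  # a retired or overloaded model should not stop the chain
            errors.append(f"{model}: {exc}")
    raise RuntimeError("all models failed: " + "; ".join(errors))


def fake_generate(model: str, prompt: str) -> str:
    """Stand-in provider for illustration: the first model is 'down'."""
    if model == "seedance-2.0":
        raise ConnectionError("quota exceeded")
    return f"{model}-job-001"


if __name__ == "__main__":
    print(generate_with_fallback(fake_generate, "a paper boat in the rain"))
    # ('kling-2.0', 'kling-2.0-job-001')
```

Collecting every failure before raising makes it clear from one log line which models were tried and why each one was skipped.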

Q2: Will Anthropic develop image or video generation in the future?

It's unlikely in the near term. While leaked files show a create_image function name within Claude, Anthropic’s corporate culture and resource allocation strategy prioritize reasoning capabilities above all else. Manifold prediction markets also suggest a low probability. Anthropic’s competitive edge actually stems from this "strategic discipline of what not to do"—focusing all their compute power on reasoning.

Q3: Which is better for API integration: Seedance 2.0 or Kling 2.0?

It depends on your needs. Seedance 2.0 is superior in quality and features (2K native, native audio, 4-modality input), but its API pricing isn't fully transparent yet. Kling 2.0 offers more transparent API pricing ($0.07/sec) and a more open ecosystem. If you're chasing the highest quality, go with Seedance; if you're looking for the best value, choose Kling. Both are available via APIYI (apiyi.com).

Q4: Does OpenAI shutting down Sora affect the development of GPT-5?

If anything, it helps: shutting down Sora is specifically intended to free up compute for GPT-5. Sam Altman has told employees that the resources previously allocated to Sora will be redirected toward the next-generation model. Competitively, OpenAI faces strong pressure from Anthropic’s Claude in the enterprise market and must concentrate its compute on text and reasoning to keep its edge.

Summary

The key takeaways from the Sora shutdown are:

- Compute is a zero-sum game: OpenAI’s experience suggests that pursuing text, images, video, and audio at the same time leaves too little compute to excel in any one of them. Anthropic avoids images and video entirely and focuses 100% of its compute on reasoning, which helps explain Opus 4.6’s lead in reasoning capabilities.

- The video sector requires a content ecosystem: Sora lacked a distribution channel, leading to low user retention and revenue of only $2.1M. Seedance benefits from its connection to TikTok, and Veo from YouTube, providing them with natural video consumption scenarios.

- Multi-model orchestration is the optimal solution: because each lab decides what not to build, developers get the best results by combining the strongest model in each domain rather than relying on a single "all-in-one" model.

We recommend using APIYI (apiyi.com) to manage your model invocations—use Claude for reasoning, Nano Banana for images, and Seedance/Kling for video. The platform offers free credits and a unified interface, allowing you to access all these models with a single API key.

📚 References

- TechCrunch: OpenAI's Sora is shutting down (full report on the shutdown of Sora)
  - Link: techcrunch.com/2026/03/24/openais-sora-was-the-creepiest-app-on-your-phone-now-its-shutting-down/
  - Note: Includes download statistics, revenue data, and OpenAI's official statement.

- CNBC: OpenAI shutters Sora as company reels in costs (analysis from the perspective of compute costs and the IPO)
  - Link: cnbc.com/2026/03/24/openai-shutters-short-form-video-app-sora-as-company-reels-in-costs.html
  - Note: Includes financial data and an analysis of competitive pressures.

- Variety: Disney Drops Plans for $1 Billion Investment (report on Disney's withdrawal of investment)
  - Link: variety.com/2026/digital/news/openai-shutting-down-sora-video-disney-1236698277/
  - Note: Detailed background on Disney's exit from the partnership.

- Seedance 2.0 Official Website (comprehensive feature overview of ByteDance's video generation model)
  - Link: seedance2.ai
  - Note: Includes detailed information on 4-modal input, 2K output, and native audio capabilities.

- APIYI Documentation Center (unified API access for multiple models)
  - Link: docs.apiyi.com
  - Note: Supports one-stop integration for Claude, Nano Banana, and various video generation models.

Author: APIYI Technical Team

Technical Discussion: Feel free to join the discussion in the comments section. For more resources, visit the APIYI documentation center at docs.apiyi.com.