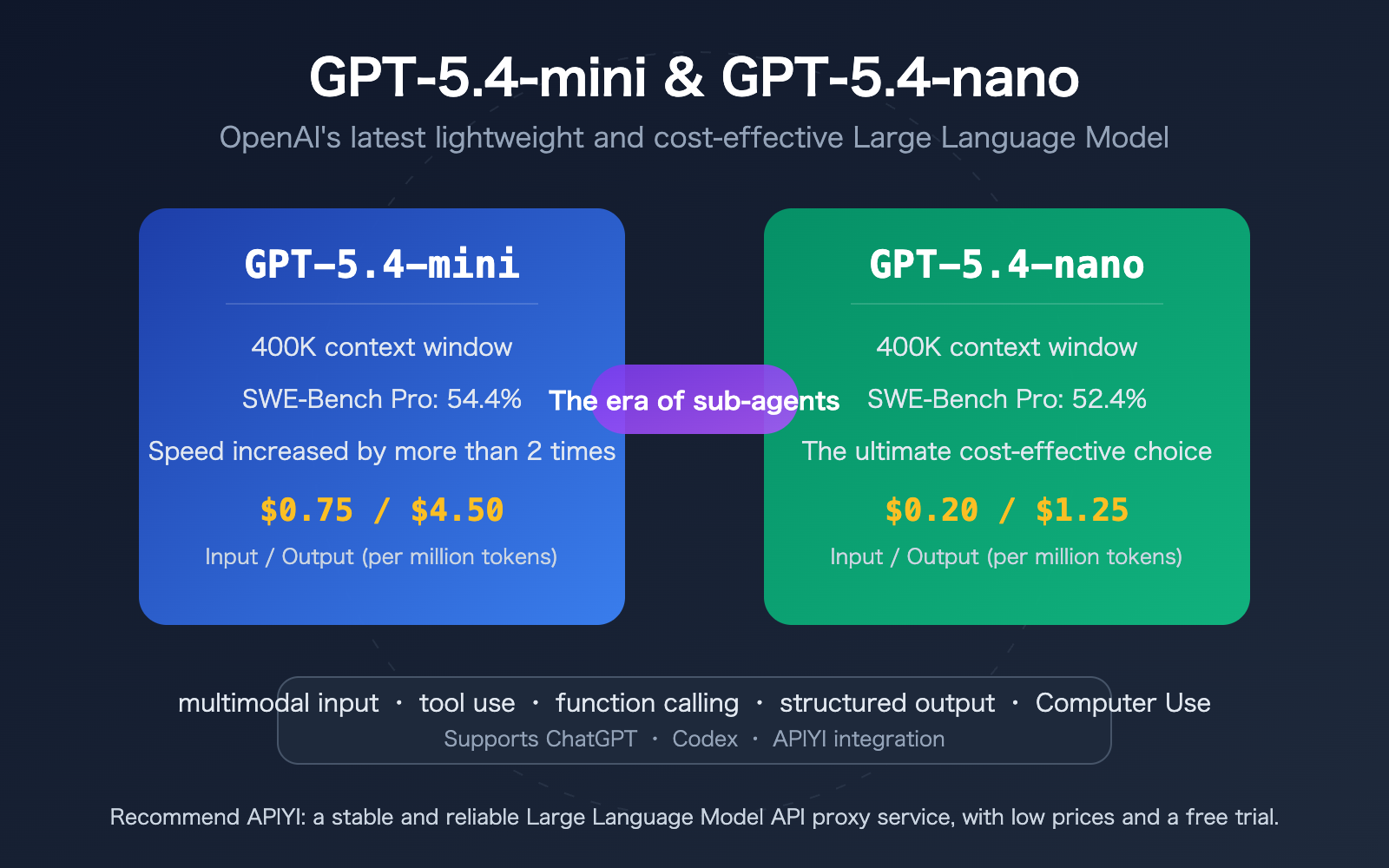

Author's Note: GPT-5.4-mini and GPT-5.4-nano are OpenAI's latest lightweight models. GPT-5.4-mini runs more than twice as fast as GPT-5 mini, and GPT-5.4-nano input pricing starts at $0.20 per million tokens. This article breaks down their specifications, pricing, benchmarks, and API integration methods.

On March 17, 2026, OpenAI officially released the GPT-5.4-mini and GPT-5.4-nano lightweight models. GPT-5.4-mini comprehensively outperforms GPT-5 mini in coding, reasoning, multimodal understanding, and tool use, with speeds more than twice as fast. Meanwhile, GPT-5.4-nano, priced at $0.20 per million input tokens, has become the most affordable model in the GPT-5.4 series to date.

Core Value: Spend 3 minutes learning the key specifications, performance, and integration methods for GPT-5.4-mini and GPT-5.4-nano to find the best lightweight solution for your business needs.

GPT-5.4-mini and GPT-5.4-nano Core Specifications

| Specification | GPT-5.4-mini | GPT-5.4-nano | GPT-5.4 (Flagship) |
|---|---|---|---|
| Context Window | 400K Tokens | 400K Tokens | 400K Tokens |
| Input Price | $0.75/1M Tokens | $0.20/1M Tokens | Higher |
| Output Price | $4.50/1M Tokens | $1.25/1M Tokens | Higher |
| Multimodal Input | Text + Image | Text + Image | Text + Image |
| Tool Use | Supported | Supported | Supported |
| Availability | ChatGPT + Codex + API | API Only | ChatGPT + Codex + API |

Deep Dive: GPT-5.4-mini Capabilities

GPT-5.4-mini is the lightweight model in the GPT-5.4 series that comes closest to the flagship's performance. It scored 54.4% on the SWE-Bench Pro coding benchmark (flagship: 57.7%), 72.1% on the OSWorld-Verified computer operation benchmark (flagship: 75.0%), and 88.0% on the GPQA Diamond graduate-level scientific reasoning test (flagship: 93.0%). On these three benchmarks GPT-5.4-mini stays within 5 percentage points of the flagship, while running more than twice as fast as GPT-5 mini.

In ChatGPT, GPT-5.4-mini is available to free and Plus users via the "Thinking" feature. At the API level, it supports a full suite of capabilities, including text and image input, tool use, function calling, web search, file search, Computer Use, and Skills.
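
As a minimal sketch of the text-plus-image input mentioned above, the helper below builds one user message in the Chat Completions content-part layout (`text` and `image_url` parts). The function name `build_image_message` and the placeholder bytes are assumptions made for illustration; whether a given endpoint accepts base64 data URLs for this model should be checked against its documentation.

```python
import base64


def build_image_message(prompt: str, image_bytes: bytes, mime: str = "image/png") -> dict:
    """Build one user message that carries a text prompt plus an inline image.

    Hypothetical helper: the image is embedded as a base64 data URL, using
    the Chat Completions content-part layout ("text" and "image_url" parts).
    """
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": prompt},
            {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}},
        ],
    }


# Placeholder bytes stand in for a real screenshot file.
message = build_image_message("Describe this screenshot", b"fake-image-bytes")
print(message["content"][1]["image_url"]["url"][:22])
```

The returned dictionary can be passed as one element of the `messages` list in a chat completion call.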

Deep Dive: GPT-5.4-nano's Ultimate Cost-Effectiveness

GPT-5.4-nano is designed specifically for speed- and cost-sensitive scenarios. With an input price of just $0.20 per million tokens and an output price of $1.25 per million tokens, it’s the most affordable option in the GPT-5.4 series. In his tests, Simon Willison found that using GPT-5.4-nano to describe 76,000 images cost only $52. GPT-5.4-nano is available exclusively via the API and is best suited to high-frequency, low-complexity automated tasks such as classification, data extraction, sorting, and coding sub-agents.
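
The per-image cost implied by that test is simple arithmetic. The sketch below only divides the two figures quoted above and makes no assumption about how many tokens each image consumed.

```python
# Figures quoted above from Simon Willison's test.
total_cost_usd = 52.0
image_count = 76_000

cost_per_image = total_cost_usd / image_count
cost_per_thousand = cost_per_image * 1_000

print(f"per image: ${cost_per_image:.5f}")
print(f"per 1,000 images: ${cost_per_thousand:.2f}")
```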

🎯 Integration Tip: Both GPT-5.4-mini and GPT-5.4-nano are now available on the APIYI (apiyi.com) platform. Pricing is consistent with OpenAI's official site, with official proxy API top-ups starting at a 10% discount, making it easy for domestic users to integrate and test quickly.

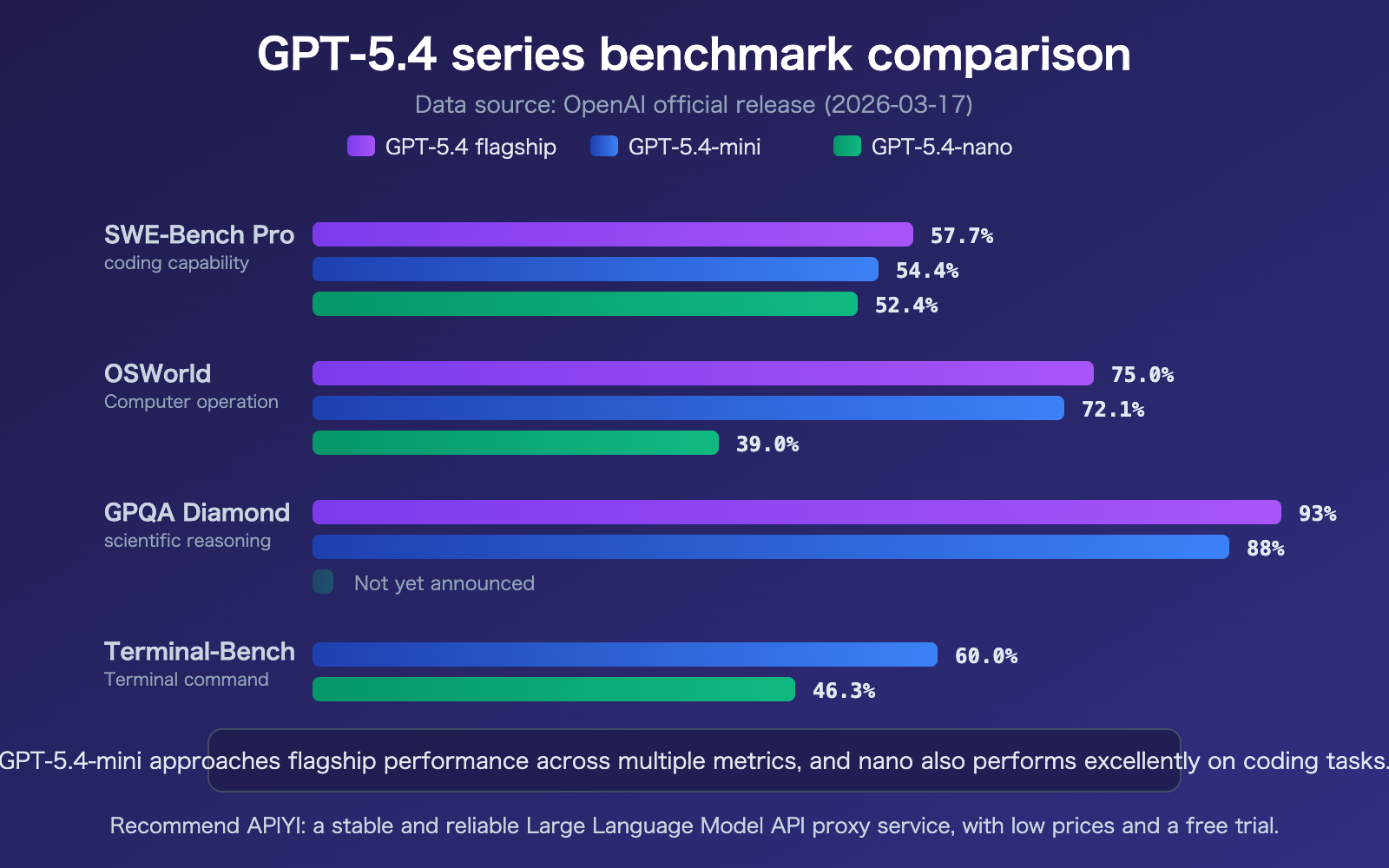

GPT-5.4-mini and GPT-5.4-nano Benchmark Comparison

| Benchmark | GPT-5.4 Flagship | GPT-5.4-mini | GPT-5.4-nano | Description |
|---|---|---|---|---|
| SWE-Bench Pro | 57.7% | 54.4% | 52.4% | Coding capability |
| OSWorld-Verified | 75.0% | 72.1% | 39.0% | Computer operation |
| GPQA Diamond | 93.0% | 88.0% | — | Graduate-level reasoning |
| Terminal-Bench 2.0 | — | 60.0% | 46.3% | Terminal command capability |

GPT-5.4-mini scores 54.4% on SWE-Bench Pro, 8.7 percentage points above GPT-5 mini (45.7%) and 3.3 points below the flagship (57.7%). GPT-5.4-nano reaches 52.4%, which also beats the previous generation's mini model. GPT-5.4-mini is strong in Computer Use scenarios as well (OSWorld-Verified 72.1%), where it can quickly interpret dense user interface screenshots to complete tasks.
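
To make the gaps between tiers easier to compare, here is a small sketch that recomputes them from the table. The scores are copied from the table above; the dictionary layout and the `gap` helper are illustrative choices, not part of any benchmark tooling.

```python
# Scores (percent) copied from the benchmark table above; None marks a missing entry.
scores = {
    "SWE-Bench Pro": {"flagship": 57.7, "mini": 54.4, "nano": 52.4},
    "OSWorld-Verified": {"flagship": 75.0, "mini": 72.1, "nano": 39.0},
    "GPQA Diamond": {"flagship": 93.0, "mini": 88.0, "nano": None},
    "Terminal-Bench 2.0": {"flagship": None, "mini": 60.0, "nano": 46.3},
}


def gap(benchmark: str, a: str, b: str):
    """Return score[a] - score[b] in percentage points, or None if either is missing."""
    first, second = scores[benchmark][a], scores[benchmark][b]
    if first is None or second is None:
        return None
    return round(first - second, 1)


for name in scores:
    print(name, "| flagship - mini:", gap(name, "flagship", "mini"),
          "| mini - nano:", gap(name, "mini", "nano"))
```

The largest mini-to-nano gap is on OSWorld-Verified, which matches the recommendation to keep Computer Use workloads on GPT-5.4-mini.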

GPT-5.4-mini and GPT-5.4-nano Pricing and Cost Analysis

| Model | Input Price | Output Price | Est. Cost per 10k Conversations | Use Cases |
|---|---|---|---|---|
| GPT-5.4-nano | $0.20/M | $1.25/M | ~$2-5 | Classification, extraction, sub-agents |
| GPT-5.4-mini | $0.75/M | $4.50/M | ~$8-15 | General chat, coding, reasoning |
| GPT-5.4 Flagship | Higher | Higher | ~$30+ | Complex reasoning, research tasks |
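
The table does not state the conversation size behind its estimates, so the sketch below shows how such a figure can be computed from the listed per-token prices. The workload of 500 input tokens and 200 output tokens per conversation is an assumption chosen for illustration; under it, both results happen to fall inside the ranges shown in the table.

```python
# USD per 1M tokens, taken from the pricing table above.
PRICES = {
    "gpt-5.4-nano": {"input": 0.20, "output": 1.25},
    "gpt-5.4-mini": {"input": 0.75, "output": 4.50},
}


def estimate_cost(model: str, conversations: int, input_tokens: int, output_tokens: int) -> float:
    """Estimate total USD cost for a batch of conversations of uniform size."""
    price = PRICES[model]
    total_input = conversations * input_tokens
    total_output = conversations * output_tokens
    return (total_input * price["input"] + total_output * price["output"]) / 1_000_000


# Assumed workload: 10,000 conversations, 500 input and 200 output tokens each.
for model in PRICES:
    print(model, round(estimate_cost(model, 10_000, 500, 200), 2))
```

Because output tokens cost roughly six times as much as input tokens on both models, the output length usually dominates the estimate.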

OpenAI automatically applies Prompt Caching for repeated system instructions and large documents, further reducing actual usage costs. For high-frequency invocation scenarios, input costs can drop significantly after a cache hit.
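
The effect of a cache hit on input cost can be sketched as a weighted average. The cached-token price is not given in this article, so `cached_price_factor` below is a hypothetical parameter, and the 80% hit ratio and 10% factor in the example call are illustrative numbers only; real values should come from the official pricing page.

```python
def effective_input_price(base_price: float, cache_hit_ratio: float, cached_price_factor: float) -> float:
    """Blend cached and uncached input pricing into one USD-per-1M-token figure.

    cache_hit_ratio: fraction of input tokens served from the cache (0..1).
    cached_price_factor: cached-token price as a fraction of the base price
    (hypothetical here; take the real value from the provider's pricing page).
    """
    if not 0.0 <= cache_hit_ratio <= 1.0:
        raise ValueError("cache_hit_ratio must be between 0 and 1")
    uncached_share = 1.0 - cache_hit_ratio
    return base_price * (uncached_share + cache_hit_ratio * cached_price_factor)


# Illustrative numbers only: 80% of input tokens cached, cached tokens billed at 10% of base.
print(round(effective_input_price(0.75, 0.8, 0.1), 4))
```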

🎯 Cost Optimization Tip: Integrate GPT-5.4-mini and GPT-5.4-nano via the APIYI (apiyi.com) platform, where official proxy API top-ups are available at about a 10% discount. For developers with high-volume usage, combining Prompt Caching with platform discounts can further reduce actual costs.

Getting Started with GPT-5.4-mini and GPT-5.4-nano

Minimal Example

Here’s the simplest way to get started. You can invoke GPT-5.4-mini with just 10 lines of code:

```python
import openai

# Point the official OpenAI SDK at the APIYI endpoint
client = openai.OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1",
)

response = client.chat.completions.create(
    model="gpt-5.4-mini",
    messages=[{"role": "user", "content": "Explain what a sub-agent architecture is in one sentence"}],
)

print(response.choices[0].message.content)
```
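
For environments where the SDK is not installed, the same call can be expressed as a plain HTTPS POST. The sketch below builds the request with the standard library but does not send it. It assumes the endpoint follows the OpenAI-compatible `/chat/completions` path under the base URL; `build_chat_request` is a hypothetical helper name.

```python
import json
import urllib.request


def build_chat_request(api_key: str, model: str, prompt: str,
                       base_url: str = "https://vip.apiyi.com/v1") -> urllib.request.Request:
    """Build (but do not send) a POST request for the chat completions endpoint."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


request = build_chat_request("YOUR_API_KEY", "gpt-5.4-mini", "Hello")
print(request.get_method(), request.full_url)
# To actually send it: urllib.request.urlopen(request), then json.loads() the response body.
```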

GPT-5.4-nano invocation example:

```python
import openai

client = openai.OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1",
)

# GPT-5.4-nano is ideal for classification and data extraction tasks
response = client.chat.completions.create(
    model="gpt-5.4-nano",
    messages=[
        {"role": "system", "content": "You are a text classifier, return the result in JSON format"},
        {"role": "user", "content": "Please classify the following feedback as positive/neutral/negative: This product is pretty good, but the delivery was a bit slow"},
    ],
    # JSON mode: the reply content is a JSON string
    response_format={"type": "json_object"},
)

print(response.choices[0].message.content)
```
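
Since the reply arrives as a JSON string, downstream code usually needs to parse and validate it before acting on the label. The following is a minimal sketch of that step. The key name `sentiment` is an assumption, because the prompt above does not fix a schema; in practice the system prompt should name the key explicitly.

```python
import json

ALLOWED_LABELS = {"positive", "neutral", "negative"}


def parse_sentiment(raw: str, key: str = "sentiment"):
    """Return the label from a JSON reply, or None if the reply is unusable.

    The key name "sentiment" is an assumption; the prompt above does not fix
    a schema, so the real key depends on what the model is asked to emit.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    label = str(data.get(key, "")).strip().lower()
    return label if label in ALLOWED_LABELS else None


print(parse_sentiment('{"sentiment": "Positive"}'))
print(parse_sentiment("not json"))
```

Returning `None` instead of raising lets a batch job count and retry unusable replies without stopping.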

Tip: Get your API key from APIYI (apiyi.com) to quickly test GPT-5.4-mini and GPT-5.4-nano. The platform supports a unified interface for the entire OpenAI model series, so there's no need to switch your base_url.

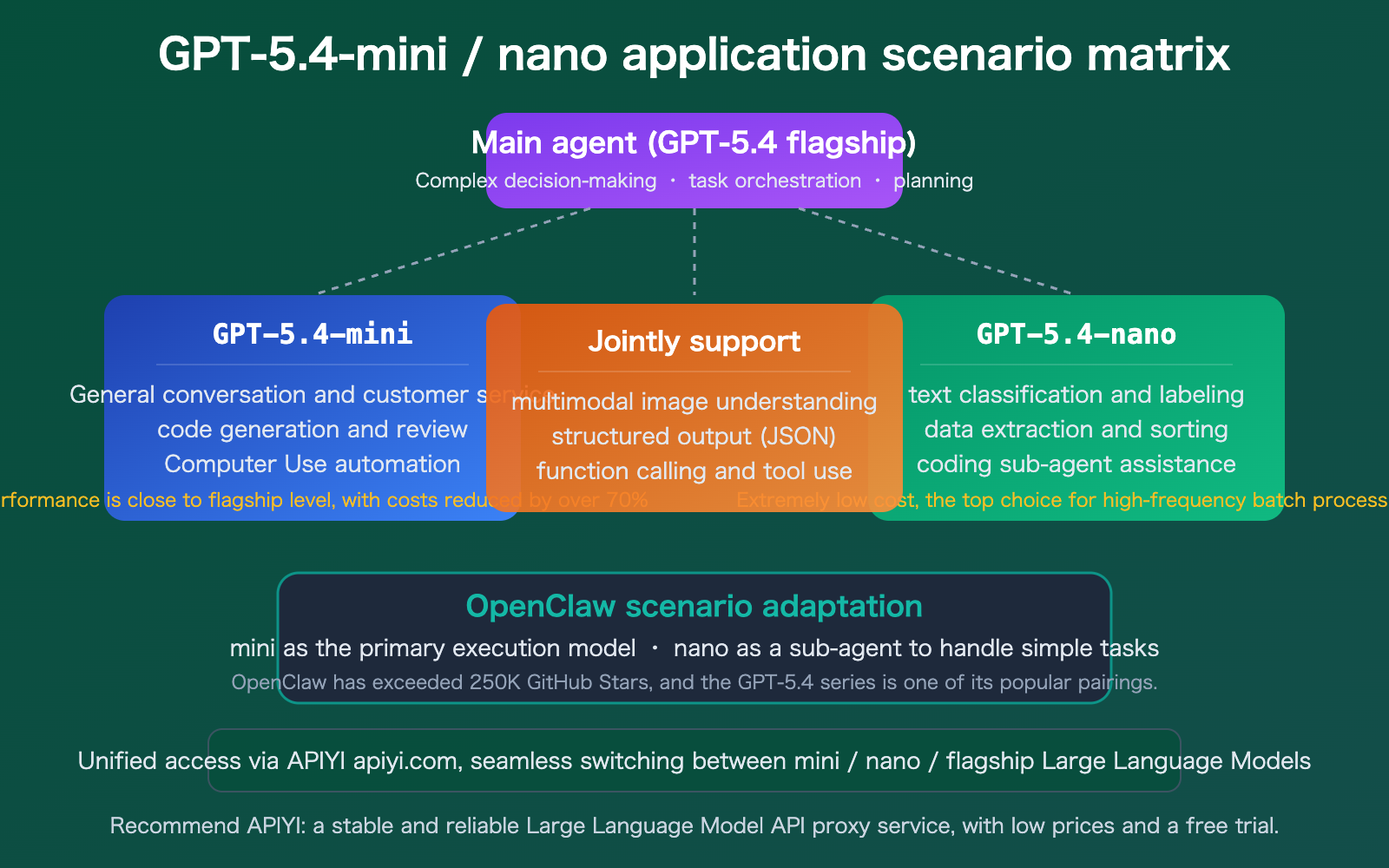

Use Cases for GPT-5.4-mini and GPT-5.4-nano

Using GPT-5.4-mini and GPT-5.4-nano in OpenClaw

OpenClaw is the fastest-growing open-source AI agent tool of 2026, with over 250,000 stars on GitHub. It lets you connect large language models such as GPT and Claude to real-world software, enabling automated workflow management, file operations, and API calls.

In the OpenClaw ecosystem, the combination of GPT-5.4-mini and GPT-5.4-nano is a perfect match:

- GPT-5.4-mini as the primary model: Handles conversational interaction, code generation, and complex instruction comprehension, with light monthly usage costing around $12–25.

- GPT-5.4-nano as a sub-agent: Handles simple decision-making tasks like classification, extraction, and routing at an extremely low cost.

- Multi-model collaboration: In OpenClaw’s multi-agent architecture, the "mini" model does the "thinking," while the "nano" model handles the "execution."
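
The mini/nano split described above can be sketched as a simple routing function. This is plain Python for illustration and does not use OpenClaw's configuration or API; the task-type strings and the `pick_model` name are assumptions.

```python
# Task types named in the list above as suitable for the nano sub-agent.
NANO_TASKS = {"classification", "extraction", "routing"}


def pick_model(task_type: str) -> str:
    """Send simple, high-frequency task types to nano and everything else to mini."""
    if task_type.strip().lower() in NANO_TASKS:
        return "gpt-5.4-nano"
    return "gpt-5.4-mini"


for task in ("classification", "code_generation", "routing"):
    print(task, "->", pick_model(task))
```

Defaulting unknown task types to the more capable model is a deliberate choice here: a misrouted complex task costs more in quality than a misrouted simple task costs in tokens.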

🎯 Recommended Approach: Connect to OpenClaw via APIYI (apiyi.com) to manage calls for the entire GPT-5.4 series under a single API key, simplifying your configuration and cost management.

FAQ

Q1: Should I choose GPT-5.4-mini or GPT-5.4-nano?

If you need general-purpose capabilities (chat, coding, reasoning, Computer Use), go with GPT-5.4-mini. If you're handling high-frequency, batch-processed simple tasks (classification, extraction, sorting), GPT-5.4-nano is your best bet. You can even use them together in the same project: let mini handle the complex decision-making while nano takes care of the simple execution.

Q2: How much of an improvement is GPT-5.4-mini over the previous GPT-5 mini?

SWE-Bench Pro performance jumped from 45.7% to 54.4% (+8.7 percentage points), and it's over twice as fast. Plus, it adds new capabilities like Computer Use and Skills. You're getting significantly better performance at the same price, making it a much better value.

Q3: How can I quickly integrate via the APIYI platform?

We recommend using APIYI (apiyi.com) for a quick setup:

- Visit APIYI at apiyi.com to register your account.

- Get your API key; official proxy top-ups are available at about a 10% discount.

- Set your base_url to https://vip.apiyi.com/v1.

- Simply call the gpt-5.4-mini or gpt-5.4-nano model names directly.
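
One way to keep these settings out of source code is to read them from environment variables. The sketch below assumes the variable names `APIYI_API_KEY`, `APIYI_BASE_URL`, and `APIYI_MODEL`, which are hypothetical names invented for this example rather than anything the platform defines.

```python
import os
from dataclasses import dataclass

SUPPORTED_MODELS = ("gpt-5.4-mini", "gpt-5.4-nano")


@dataclass(frozen=True)
class ApiConfig:
    api_key: str
    base_url: str
    model: str


def load_config(env=None) -> ApiConfig:
    """Read connection settings from environment-style variables.

    APIYI_API_KEY, APIYI_BASE_URL and APIYI_MODEL are hypothetical names
    chosen for this sketch, not variables the platform defines.
    """
    env = os.environ if env is None else env
    api_key = env.get("APIYI_API_KEY", "")
    if not api_key:
        raise ValueError("APIYI_API_KEY is not set")
    model = env.get("APIYI_MODEL", "gpt-5.4-mini")
    if model not in SUPPORTED_MODELS:
        raise ValueError(f"unsupported model: {model}")
    return ApiConfig(api_key, env.get("APIYI_BASE_URL", "https://vip.apiyi.com/v1"), model)


# A plain dict stands in for os.environ so the example runs anywhere.
config = load_config({"APIYI_API_KEY": "YOUR_API_KEY", "APIYI_MODEL": "gpt-5.4-nano"})
print(config.base_url, config.model)
```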

Summary

Here are the key takeaways for GPT-5.4-mini and GPT-5.4-nano:

- GPT-5.4-mini offers near-flagship performance: With 54.4% on SWE-Bench Pro and 72.1% on OSWorld, plus 2x faster speeds, it's the go-to lightweight solution for most developers.

- GPT-5.4-nano delivers extreme cost-efficiency: At $0.20 per million input tokens, it's designed specifically for high-frequency scenarios like classification, extraction, and sub-agent tasks.

- Core components of sub-agent architecture: In agent tools like OpenClaw, the mini + nano combo is the sweet spot for balancing cost and performance.

These models mark OpenAI's official entry into the "sub-agent era": you don't need a flagship model for every task. By mixing and matching these lightweight models, you can keep quality high while cutting costs.

We recommend using APIYI (apiyi.com) to quickly integrate GPT-5.4-mini and GPT-5.4-nano. The platform offers pricing consistent with the official site, provides about a 10% discount on official proxy top-ups, and gives you a unified interface for multiple models.

📚 References

- OpenAI Official Announcement: Details on the release of GPT-5.4 mini and nano
  - Link: openai.com/index/introducing-gpt-5-4-mini-and-nano/
  - Description: Official specifications, pricing, and capability overview

- OpenAI API Pricing Page: Latest model pricing information
  - Link: openai.com/api/pricing/
  - Description: Official pricing reference, including cache pricing

- OpenAI Developer Documentation – GPT-5.4-mini: Model API documentation
  - Link: developers.openai.com/api/docs/models/gpt-5.4-mini
  - Description: Detailed API parameters and usage instructions

- Simon Willison's Review: Practical analysis of GPT-5.4 mini and nano
  - Link: simonwillison.net/2026/Mar/17/mini-and-nano/
  - Description: In-depth usage review and cost analysis from an independent developer

- OpenClaw Official Documentation – OpenAI Configuration: OpenClaw integration guide
  - Link: docs.openclaw.ai/providers/openai
  - Description: How to configure GPT-5.4 series models within OpenClaw

Author: APIYI Technical Team

Technical Discussion: Feel free to share your experiences with GPT-5.4-mini and GPT-5.4-nano in the comments. For more resources, visit the APIYI documentation center at docs.apiyi.com.