The bottom line: Using an Official Relay to access gpt-image-2 carries no additional legal risk beyond what you’d face by calling OpenAI’s official API directly, provided you use it reasonably. However, if your API proxy service uses Reverse-engineered channels, those compliance risks will flow directly down the call chain to your enterprise. This article provides a rigorous assessment method and an 8-point legal checklist.

Since OpenAI officially released gpt-image-2 on April 21, 2026, it has been widely adopted in B2B scenarios across China. The three questions corporate legal teams ask most often are: Can we use it directly? Are there compliance risks? Who owns the generated images?

These questions seem simple, but they actually touch on OpenAI’s Service Terms, Usage Policies, indemnification clauses, content safety mechanisms, commercial ownership, and the "differences in API proxy service types"—a point often overlooked by domestic enterprises. Without reading the original English terms from OpenAI, legal teams can easily arrive at a conservative but incorrect conclusion.

This article breaks down the compliance landscape of gpt-image-2 in the order of an enterprise’s actual approval process.

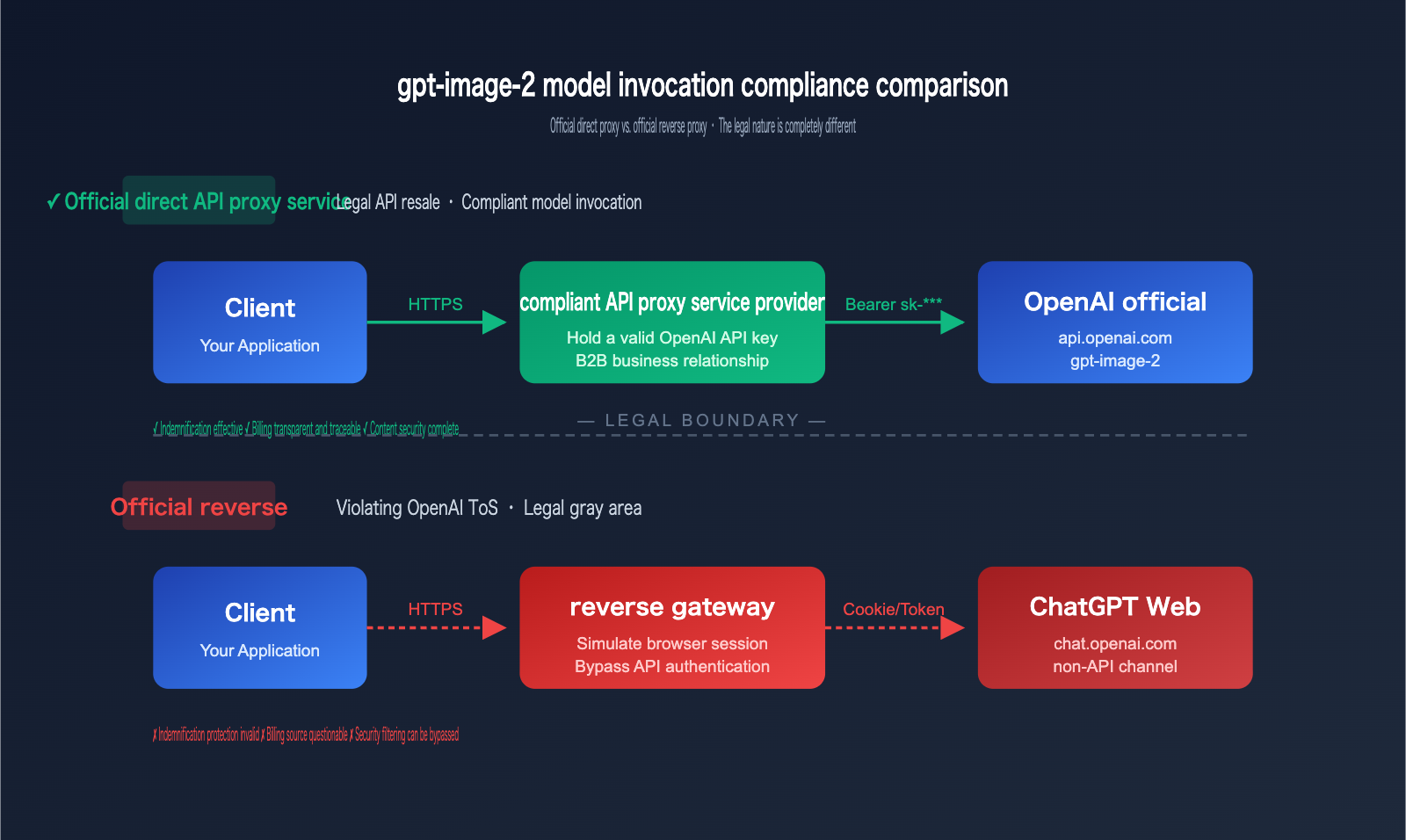

I. Two Channels You Must Distinguish Before Integrating gpt-image-2

For domestic legal teams, the core of compliance review for AI services isn't the model itself, but whether the "call chain can be traced back to legitimate authorization." This is why we must first clarify the role of the "API proxy service."

1.1 Official Relay vs. Reverse-engineered: Fundamental Legal Differences

| Channel Type | Technical Implementation | OpenAI Authentication | Billing Source | Legal Characterization |

|---|---|---|---|---|

| Official Relay | Provider holds a legitimate OpenAI API key, forwards requests | Standard Authorization: Bearer sk-xxx | Paid via official OpenAI billing | Legitimate API resale |

| Reverse-engineered | Reverse-engineered ChatGPT web sessions/accounts | Simulates browser Session/Cookie | Multi-account polling/bypassing billing | Violates OpenAI ToS, legal gray area |

| Self-hosted | Enterprise holds its own API key | Own key | Self-paid | Standard user, fully compliant |

Key Judgment: When you send a request to an endpoint like https://api.example.com/v1/images/generations, you need to know how the provider is reaching OpenAI on the backend.

🎯 Important Note: When choosing an API proxy service, be sure to confirm that the provider uses an Official Relay. For example, the APIYI (apiyi.com) platform connects to the official OpenAI API and uses legitimately held enterprise-grade API keys, maintaining a standard B2B commercial relationship with OpenAI. This determines the compliance boundary for downstream enterprise users.

1.2 Why "Reverse-engineered" Channels Pose Liability Risks

OpenAI’s Service Terms explicitly state that API users shall not "circumvent, disable, or interfere with OpenAI’s access restrictions." If a provider uses reverse-engineered ChatGPT channels:

- Breach of contract liability flows down the chain: The provider is in breach; as a downstream user, even if you can prove you were unaware, you may still be deemed to have "indirectly breached" the terms.

- OpenAI’s Indemnification does not apply: We’ll explain this in detail later, but simply put, you won't get the protection of OpenAI’s "indemnification" against third-party intellectual property infringement claims when using reverse-engineered channels.

- Content safety mechanisms may be bypassed: Reverse-engineered channels often modify safety parameters like moderation, which may lead to your enterprise generating images that violate OpenAI’s prohibited categories without your knowledge.

1.3 How to Quickly Identify the Proxy Channel Type

When conducting due diligence on a service provider, corporate legal teams can use the following questions to quickly identify the type:

✅ Three Questions to Determine Compliance

Q1: "Please provide proof of your API service relationship with OpenAI (e.g., OpenAI account type, enterprise account screenshots, monthly bills)."

Q2: "Are the endpoints you call api.openai.com or interfaces derived from chat.openai.com?"

Q3: "If our request triggers OpenAI’s content moderation, are the error codes returned as-is? Or have they been rewritten by you?"

Official Relay providers can usually answer the first two questions directly, and the answer to the third question is "returns 400/safety_violations as-is." Reverse-engineered providers often provide vague answers or refuse to provide documentation.
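One way to spot-check Q3 yourself is to inspect the error body the provider returns when a request is rejected. The sketch below assumes the provider forwards OpenAI's standard error envelope (a top-level `error` object with `message`, `type`, and `code` fields). The function name `looks_like_passthrough_error` and both sample payloads are hypothetical, and a matching shape is only a hint, not proof of an Official Relay.

```python
import json

# Fields we expect in OpenAI's standard error envelope:
# {"error": {"message": ..., "type": ..., "param": ..., "code": ...}}
EXPECTED_ERROR_FIELDS = {"message", "type", "code"}


def looks_like_passthrough_error(raw_body: str) -> bool:
    """Return True if an HTTP error body has the shape of an OpenAI error envelope.

    Heuristic only: a rewritten error could still imitate this shape.
    """
    try:
        body = json.loads(raw_body)
    except json.JSONDecodeError:
        return False
    if not isinstance(body, dict):
        return False
    error = body.get("error")
    if not isinstance(error, dict):
        return False
    return EXPECTED_ERROR_FIELDS.issubset(error.keys())


# Hypothetical sample bodies, for illustration only
passthrough = json.dumps({
    "error": {
        "message": "Request rejected by the safety system.",
        "type": "invalid_request_error",
        "param": None,
        "code": "safety_violations",  # code name as used in this article
    }
})
rewritten = json.dumps({"status": "fail", "msg": "generation error"})

print(looks_like_passthrough_error(passthrough))
print(looks_like_passthrough_error(rewritten))
```

A body that fails this check is worth raising with the provider, since rewritten errors make it harder to log OpenAI-side rejections accurately.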

II. Commercial Boundaries for gpt-image-2 Under Official OpenAI Terms

Once you've confirmed your channel is compliant, the next legal concern is: "Can we use the generated images for commercial purposes?" This is defined by three documents: OpenAI's Terms of Use, Service Terms, and Usage Policies.

2.1 Ownership of Output: OpenAI Explicitly Assigns Rights to Users

Section 3 of the OpenAI Terms of Use, "Content," clearly states:

"OpenAI hereby assigns to you all its right, title and interest in and to Output."

Key takeaways:

- You retain ownership of your Input.

- You hold full rights to the Output, as OpenAI proactively assigns these rights to you.

- This means that for images generated by gpt-image-2, you are the legal rights holder.

2.2 Scope of Commercial Use: All Business Activities, Including Resale

| Commercial Activity | Permitted? | OpenAI Terms Basis |

|---|---|---|

| Internal company use (internal marketing/PPTs/prototypes) | ✅ Yes | Terms §3 |

| Marketing and advertising | ✅ Yes | Terms §3 |

| Product packaging/printed materials | ✅ Yes | Terms §3 |

| E-commerce product images/direct sales (printed) | ✅ Yes | Terms §3 |

| Resale as a SaaS service (calling API for downstream) | ✅ Yes | Terms §3 + Service Terms |

| Training competing models | ❌ No | Service Terms §2(c) |

The Single Red Line: You must not use gpt-image-2 output to train any model that competes with OpenAI's services. Of the commercial activities listed above, this is the only one OpenAI prohibits outright; every use still has to stay within the Usage Policies.

🎯 Enterprise Integration Tip: If your business involves providing an "AI image generation SaaS" to downstream clients, this path is permitted under OpenAI's terms. We recommend using the APIYI (apiyi.com) platform for multi-model testing. It supports a unified interface for mainstream image models like gpt-image-2, Imagen 4, and Flux 1.1 Pro, making it easier for enterprises to conduct technical evaluations while maintaining compliance.

2.3 The Reality of Copyright Protection: You "Own" It, But Can't Easily "Assert" It

This is where legal teams often get confused. OpenAI assigning you "rights" does not mean the image automatically enjoys "copyright protection."

| Dimension | OpenAI Terms | Copyright Law (US/China Judicial Practice) |

|---|---|---|

| Ownership | OpenAI assigns all rights to the user | Varies by case |

| Full Copyright | Not guaranteed | US Copyright Office: Pure AI-generated content lacks copyright; some Chinese cases recognize human creative contribution |

| Commercial Rights | Fully permitted, including resale | Unaffected—these are contractual rights, independent of copyright |

| Preventing Theft | No guarantee of exclusivity | May be unable to claim copyright infringement relief |

Practical Conclusion: You can safely use gpt-image-2 output for commercial purposes, but don't assume it will receive the same copyright protection as a hand-drawn design. For high-value IP assets (e.g., brand logos, core mascots), we recommend having a human designer perform substantial secondary creation on the AI output to ensure the copyright is enforceable.

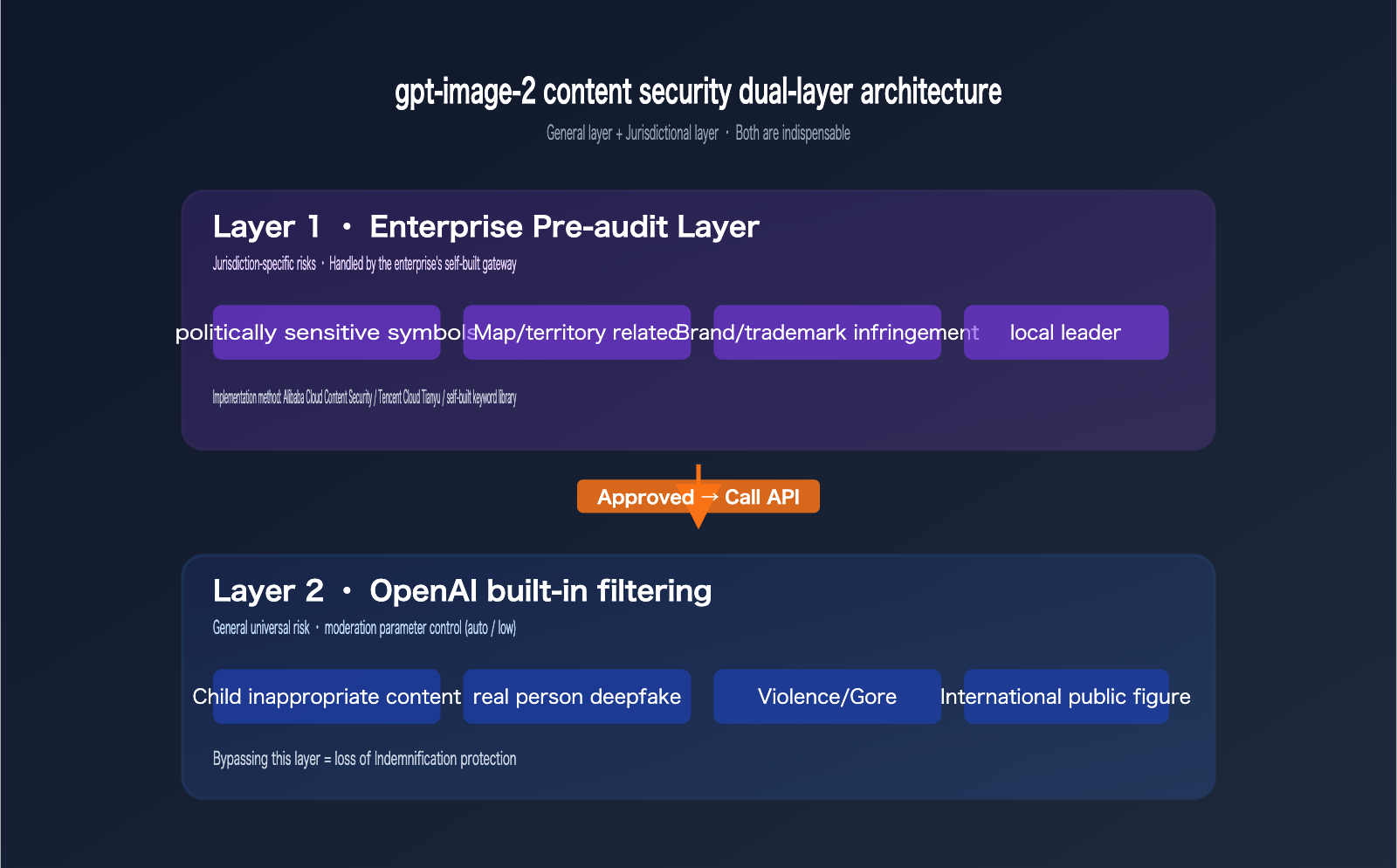

III. gpt-image-2 Content Safety Mechanisms and Enterprise Pre-screening Obligations

OpenAI's content safety is an underrated part of the compliance spectrum. Many corporate legal teams mistakenly believe that "the model's safety filter is enough," which is a dangerous misconception.

3.1 Two-Layer Built-in Safety Mechanisms for gpt-image-2

The gpt-image-2 API includes two layers of safety checks when generating images:

```python
# Layer 1: Prompt input check (before generation)
# Layer 2: Output image check (before returning)

# Enterprise-controllable parameters:
request_params = {
    "model": "gpt-image-2",
    "prompt": "your prompt here",
    "moderation": "auto",  # Default, standard filtering
    # "moderation": "low",  # Optional, lenient filtering, but cannot be disabled
    "size": "1024x1024",
    "quality": "high",
}
```

Important: The moderation parameter only allows a choice between auto (default) and low; there is no "off" option. This means OpenAI always retains baseline filtering—for example, content harmful to minors or deepfakes of real public figures will be rejected in any mode.
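Because only two values exist, an internal gateway can enforce a moderation policy before any request leaves the company. The following is a minimal sketch under our own assumptions: the function name `resolve_moderation` and the `exception_ticket` argument (a reference to a written approval) are hypothetical and not part of any OpenAI SDK.

```python
from typing import Optional

# Per the article, these are the only two values; there is no "off".
ALLOWED_MODERATION = {"auto", "low"}


def resolve_moderation(requested: Optional[str],
                       exception_ticket: Optional[str] = None) -> str:
    """Return the moderation value to send, enforcing an internal policy.

    - A missing value falls back to "auto".
    - "low" is accepted only with a documented exception reference.
    - Any other value is rejected before the request is sent.
    """
    value = requested or "auto"
    if value not in ALLOWED_MODERATION:
        raise ValueError(f"Unsupported moderation value: {value!r}")
    if value == "low" and not exception_ticket:
        raise PermissionError('moderation="low" requires a written exception reference')
    return value


print(resolve_moderation(None))                                  # falls back to "auto"
print(resolve_moderation("low", exception_ticket="LEGAL-0001"))  # hypothetical ticket id
```

Rejecting unknown values locally also means a typo such as "off" fails inside your gateway, where it can be logged, rather than at the provider.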

3.2 Content Categories Not Covered by OpenAI Safety Filters

OpenAI's filters focus on universal risks and do not cover jurisdiction-specific risks in the enterprise's location:

| Risk Category | OpenAI Filtering | Mainland China Legal Requirements |

|---|---|---|

| Inappropriate content for children | ✅ Strong filtering | ✅ Consistent |

| Deepfakes of real people | ✅ Strong filtering | ✅ Consistent |

| Violence/Gore | ⚠️ Depends on severity | ✅ Strictly regulated |

| Politically sensitive symbols | ⚠️ Partially covered | ❗️ Requires enterprise pre-screening |

| Images of national leaders | ⚠️ Only covers international figures | ❗️ Requires enterprise pre-screening |

| Maps/Territorial boundaries | ❌ Not covered | ❗️ Requires enterprise pre-screening |

| Commercial logos/Brand infringement | ❌ Not covered | ❗️ Requires enterprise pre-screening |

Key Insight: OpenAI's content safety is the universal layer, while enterprises in mainland China have jurisdictional-layer compliance obligations. Even when accessing gpt-image-2 through a compliant channel, enterprises must still perform "pre-content screening" on the client side or their own gateway.

🎯 Integration Tip: In practice, we recommend that enterprises use a domestic content safety API (such as Alibaba Cloud Content Security or Tencent Cloud Tianyu) to filter keywords in the prompt before calling gpt-image-2. After receiving the generated image, perform an output-side check using an image content moderation API. API proxy services like APIYI (apiyi.com) return OpenAI's safety_violations error codes as-is, making it easy for enterprises to unify logs for both layers of screening.

3.3 Safety Compliance and the Strong Binding of Indemnification

This is the legal detail most easily overlooked. Section 7 of OpenAI's Service Terms provides an Indemnification clause:

When API users utilize the output within the scope of legal use and are sued by a third party for intellectual property infringement, OpenAI will cover the costs of defense and reasonable damages.

However, this protection has two exclusions:

- The user knows or should know that the output might be infringing, yet uses it anyway.

- The user disables or bypasses the safety mechanisms provided by OpenAI.

Practical Implication: If you insist on using moderation: low or bypass OpenAI's safety filters through technical means, you voluntarily waive your Indemnification protection. Even if you receive a perfect API output, if you are sued for infringement, OpenAI will not step in to defend you.

IV. gpt-image-2 Data Export Compliance: PIPL and Data Security Law

When companies in mainland China integrate gpt-image-2, they face a challenge that is more fundamental and occurs earlier than "choosing an API proxy service": data export compliance. While this is a major focus for legal teams, many technical teams overlook it during the initial selection process.

4.1 What Data Is Considered "Exported"?

OpenAI's API processing servers are located in the United States, so any request sent to gpt-image-2 involves data export. Specifically, for image generation scenarios, the following data is transmitted:

| Data Type | Exported? | PIPL Sensitivity |

|---|---|---|

| Prompt text | ✅ Yes | Depends on content |

| Reference image (image-to-image) | ✅ Yes | High (may contain faces/scenes) |

| Generated image | ✅ Yes (returned) | Medium |

| User IP/UA | ✅ Yes (if direct) | Low |

| Business-related data (user_id, session_id) | ⚠️ Depends on implementation | Medium |

🎯 Recommendation for Data Transmission Path: Use a compliant domestic API proxy service (such as APIYI at apiyi.com). The prompt first reaches a domestic server before being routed abroad, which mitigates the compliance risks associated with "user terminals connecting directly to overseas servers." The domestic entry architecture of this platform aligns with PIPL requirements for "data processors."

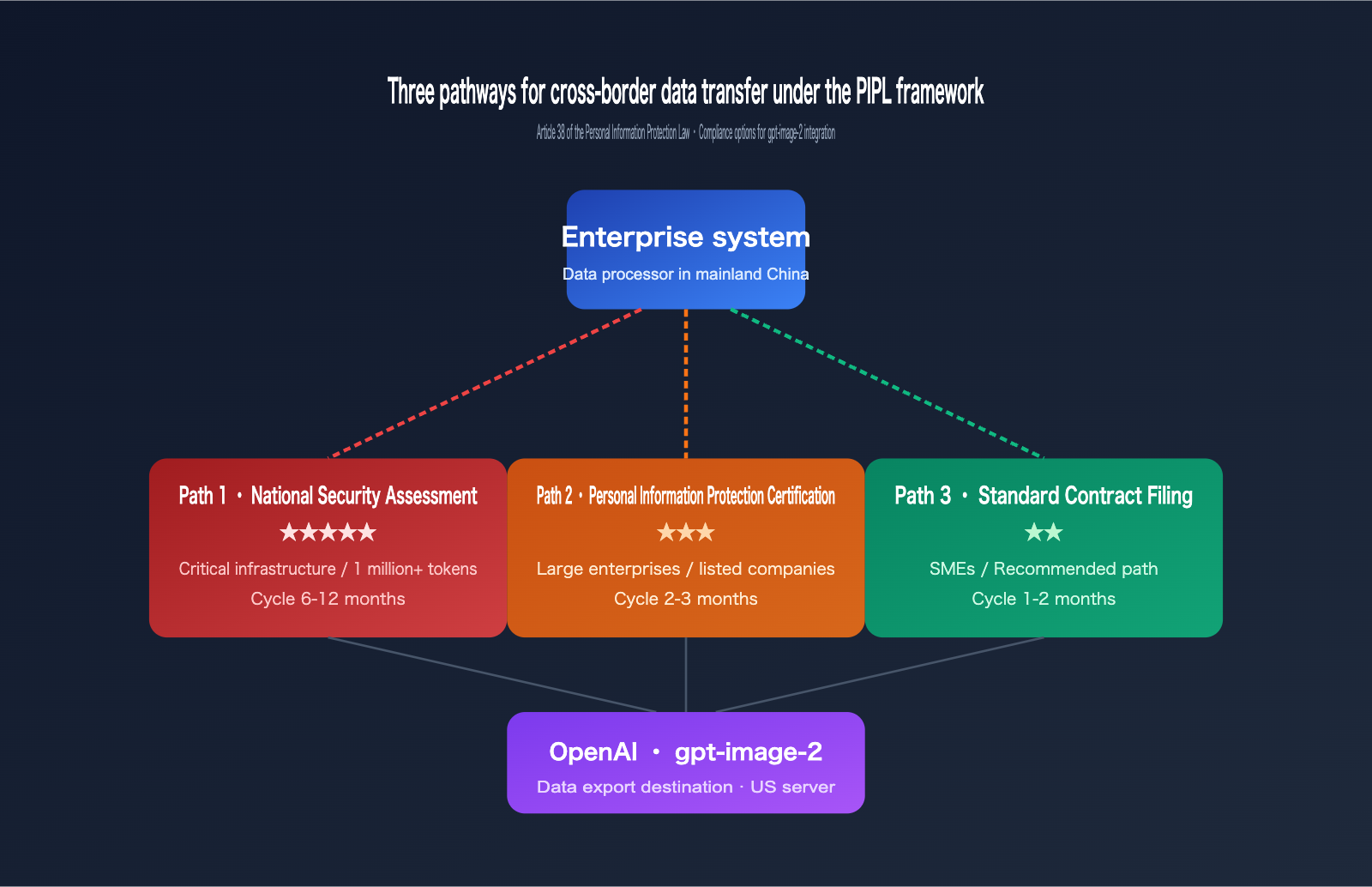

4.2 Three Compliance Paths Under the PIPL Framework

Article 38 of the Personal Information Protection Law (PIPL) defines three paths for data export compliance:

Path 1: Security Assessment by the National Cyberspace Administration

- Applicable to: Critical information infrastructure operators, entities processing personal info of 1M+ users

- Difficulty: ★★★★★ (Government assessment, 6-12 month cycle)

Path 2: Personal Information Protection Certification

- Applicable to: General enterprises, via professional institution certification

- Difficulty: ★★★ (Institutional certification, 2-3 month cycle)

Path 3: Standard Contract Filing (SCC)

- Applicable to: Most enterprises, signing and filing standard contracts

- Difficulty: ★★ (Relatively simple, but requires ongoing supervision)

For gpt-image-2 integration, the vast majority of enterprises fall under Path 3 (Standard Contract). This means you need to:

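- Sign the standard contract with the overseas recipient of the data, and reflect the transfer in your agreements with downstream users.

- Carry out a personal information protection impact assessment covering the types and sensitivity of the exported data.

- File the signed contract and the impact assessment report with the provincial-level Cyberspace Administration office.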

4.3 Best Practices for Reducing Data Sensitivity

There are several ways to reduce the complexity of compliance without impacting your business operations:

| Practice | Implementation Cost | Compliance Benefit |

|---|---|---|

| Standardized prompt templates (avoid dynamic user privacy) | Low | High |

| Reference image desensitization (face blurring/background replacement) | Medium | High |

| User ID hashing (do not pass raw user_id) | Low | Medium |

| Local preprocessing (sensitive keyword filtering) | Medium | High |

| Compliant proxy service (handled by domestic providers) | Low | High |
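Two of the low-cost practices in the table, user ID hashing and standardized prompt templates, can be sketched with the Python standard library alone. The key `PSEUDONYM_KEY`, the template text, and the field names below are hypothetical placeholders; in a real deployment the key would come from a secrets manager.

```python
import hashlib
import hmac
from string import Template

# Hypothetical secret; load it from a secrets manager in practice, not source code.
PSEUDONYM_KEY = b"replace-with-a-secret-key"

# Fixed template: only allow-listed, non-personal fields can be filled in.
PRODUCT_PROMPT = Template("A studio photo of a $product on a $background background")
ALLOWED_FIELDS = {"product", "background"}


def pseudonymize_user_id(user_id: str) -> str:
    """Keyed hash (HMAC-SHA256) so the raw user_id never leaves the company."""
    return hmac.new(PSEUDONYM_KEY, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def build_prompt(**fields: str) -> str:
    """Fill the template, rejecting any field outside the allow-list."""
    unexpected = set(fields) - ALLOWED_FIELDS
    if unexpected:
        raise ValueError(f"Fields not allowed in prompt: {sorted(unexpected)}")
    return PRODUCT_PROMPT.substitute(**fields)


print(build_prompt(product="ceramic mug", background="white"))
print(pseudonymize_user_id("user-12345")[:16], "...")
```

A keyed hash is used instead of a plain SHA-256 so that someone who sees the exported value cannot confirm a guessed user ID without the key.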

4.4 PIPL Compliance and gpt-image-2 Business Mapping

| Business Scenario | Data Export Complexity | Recommended Compliance Path |

|---|---|---|

| Internal corporate document illustration | Low (no personal info) | Standard Contract Filing |

| Marketing material generation (no faces) | Low | Standard Contract Filing |

| User profile generation (with faces) | High | PI Protection Certification + SCC |

| E-commerce product images (with models) | Medium | Standard Contract Filing + Model Authorization |

| Social media avatar generation (with user photos) | High | PI Protection Certification + Individual Consent |

V. 8-Point Legal Compliance Checklist for Enterprise Integration of gpt-image-2

I've consolidated the analysis above into a checklist you can hand directly to your legal or compliance team.

5.1 Complete Checklist (by approval order)

| No. | Item | Compliance Standard | Risk Level |

|---|---|---|---|

| 1 | API proxy service channel | Must be official direct routing; OpenAI billing proof must be available | High |

| 2 | Service provider qualifications | Legally registered company (domestic/overseas) with ICP/business licenses | High |

| 3 | Data transmission path | Clear documentation on whether prompts/images use SSL and if they are logged/stored | Medium |

| 4 | Pre-processing content moderation | Dual-layer mechanism: internal prompt review + output image review | High |

| 5 | moderation parameter policy | Default to auto; written exception required if low is used | Medium |

| 6 | Commercial boundary statement | Terms of Service (ToS) must state no usage for training competing models | Medium |

| 7 | Image ownership transfer | Clear ownership agreements with downstream clients/end-users | Medium |

| 8 | Emergency response plan | Defined procedure for handling complaints/lawsuits arising from generated images | High |

5.2 Priority Differences by Enterprise Role

| Enterprise Role | Key Checklist Items | Legal Focus |

|---|---|---|

| Direct Call (Internal) | 1, 4, 5 | Proxy channel compliance, content safety |

| SaaS Provider (Reseller) | 1, 6, 7 | Commercial boundaries, client rights agreements |

| Public/State-owned Co. | All 8 items | Complete audit trail, traceable proof |

| Cross-border E-commerce | 1, 4, 7 | Multi-jurisdictional compliance, ownership handoff |
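If the checklist is tracked in an internal tool, the two tables above can be kept as plain data so each team sees only its priority items. This is a small sketch; the role keys such as `direct_call` are our own hypothetical identifiers for the roles in the table.

```python
from typing import Dict, List

# Checklist items from section 5.1, keyed by their number
CHECKLIST: Dict[int, str] = {
    1: "API proxy service channel",
    2: "Service provider qualifications",
    3: "Data transmission path",
    4: "Pre-processing content moderation",
    5: "moderation parameter policy",
    6: "Commercial boundary statement",
    7: "Image ownership transfer",
    8: "Emergency response plan",
}

# Priority items per enterprise role from section 5.2 (role keys are hypothetical)
PRIORITY_BY_ROLE: Dict[str, List[int]] = {
    "direct_call": [1, 4, 5],
    "saas_provider": [1, 6, 7],
    "public_or_state_owned": list(CHECKLIST),
    "cross_border_ecommerce": [1, 4, 7],
}


def priority_items(role: str) -> List[str]:
    """Return the checklist item names a given role should review first."""
    if role not in PRIORITY_BY_ROLE:
        raise KeyError(f"Unknown role: {role}")
    return [CHECKLIST[number] for number in PRIORITY_BY_ROLE[role]]


for item in priority_items("saas_provider"):
    print("-", item)
```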

🎯 Integration Path Recommendation: For public and state-owned enterprises, we recommend choosing an API proxy service that provides official invoices, corporate payment options, and SLA agreements. The APIYI (apiyi.com) platform supports corporate invoicing and formal service agreements, meeting all requirements for enterprise compliance audits.

5.3 A Template for a "Service Provider Compliance Commitment"

To translate this checklist into a contractual obligation, we suggest requiring the API proxy service provider to sign off on the following clauses:

Key Clauses for Compliance Commitment (For Legal Reference)

1. Channel Compliance: The provider guarantees all API requests are routed through the official OpenAI API endpoint (api.openai.com) and does not use reverse engineering or authentication bypass methods.

2. Qualification Retention: The provider commits to retaining proof of their service relationship with OpenAI for at least 36 months and providing it during client compliance audits.

3. Parameter Transparency: The provider commits not to modify the moderation parameters passed by the client; any changes require prior written notice.

4. Error Code Transparency: The provider commits to passing through all safety violation and policy violation error codes from OpenAI directly to the client.

5. No Data Retention: The provider commits to storing prompts and images only temporarily during request processing and clearing them within N hours after completion.

VI. gpt-image-2 Integration: A Minimal Compliance Code Example

Now that we've covered the legal side, here is a minimal, runnable code example featuring pre-processing moderation to help your technical team establish a compliance baseline.

6.1 Minimalist Call Code

```python
# pip install openai
from openai import OpenAI

# Use a compliant API proxy service (e.g., APIYI apiyi.com) while keeping standard OpenAI SDK calls
client = OpenAI(
    api_key="sk-your-key",
    base_url="https://api.apiyi.com/v1",  # Replace with your compliant proxy endpoint
)

response = client.images.generate(
    model="gpt-image-2",
    prompt="A modern minimalist office workspace, natural lighting",
    size="1024x1024",
    quality="high",
    moderation="auto",  # Default value, maintains Indemnification protection
)

image = response.data[0]
if image.url:
    print(image.url)
else:
    # OpenAI's GPT image models may return base64 data (b64_json) instead of a URL
    print(f"Received base64 image data ({len(image.b64_json or '')} characters)")
```

📦 Full Version with Dual-Layer Moderation

```python
import logging
import os
from typing import Optional

from openai import BadRequestError, OpenAI

# Replace with your compliant proxy endpoint, e.g., APIYI apiyi.com
client = OpenAI(
    api_key=os.environ["OPENAI_API_KEY"],
    base_url="https://api.apiyi.com/v1",
)


def pre_check_prompt(prompt: str) -> bool:
    """
    Pre-check: call a local content safety API.
    Placeholder example; integrate with Alibaba Cloud/Tencent Cloud content security.
    """
    forbidden_keywords = [
        # Jurisdiction-specific keywords
        "political leaders", "sensitive political terms",
        # Commercial risk keywords
        "famous brand + knockoff", "competitor logo",
    ]
    lowered = prompt.lower()  # Match keywords case-insensitively
    return not any(kw in lowered for kw in forbidden_keywords)


def post_check_image(image_ref: str) -> bool:
    """
    Post-check: call an image moderation API.
    Placeholder example; integrate with image content security services.
    """
    # In a real environment, call Alibaba Cloud/Tencent Cloud image moderation
    return True


def generate_compliant_image(prompt: str) -> Optional[str]:
    """Return the image URL (or base64 payload), or None if any check fails."""
    # Step 1: Pre-check
    if not pre_check_prompt(prompt):
        logging.warning("Prompt failed pre-check")
        return None

    # Step 2: Call gpt-image-2
    try:
        response = client.images.generate(
            model="gpt-image-2",
            prompt=prompt,
            size="1024x1024",
            quality="high",
            moderation="auto",  # Must keep default to retain Indemnification
        )
    except BadRequestError as e:
        # OpenAI safety_violations will be passed through here
        logging.error(f"OpenAI refused generation: {e}")
        return None

    # OpenAI's GPT image models may return base64 data (b64_json) instead of a URL
    image = response.data[0]
    image_ref = image.url or image.b64_json
    if image_ref is None:
        logging.error("Response contained no image data")
        return None

    # Step 3: Post-check
    if not post_check_image(image_ref):
        logging.warning("Image failed post-check")
        return None
    return image_ref


if __name__ == "__main__":
    result = generate_compliant_image(
        "A modern minimalist office workspace, natural lighting"
    )
    print("Image generated" if result else "Generation blocked or failed")
```

6.2 Three-Tier Logging: Preparing for Legal Audits

One detail often overlooked in compliant integration is log retention. Here are the three tiers of logs we recommend recording:

[L1] Request-level logs

- request_id, timestamp, user_id

- prompt (anonymized or hashed)

- moderation parameter value

[L2] Response-level logs

- status_code returned by OpenAI

- Error type if safety_violations occur

- URL and hash of the generated image

[L3] Moderation-level logs

- Pre-check pass/fail result

- Post-check pass/fail result

- Reason for rejection and matched keywords
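The three tiers can be written as one structured JSON line per call, which keeps them searchable by request_id. The sketch below uses only the standard library; the function name `build_audit_record` and the field layout are our own assumptions, and the prompt is stored as a SHA-256 hash rather than in clear text. Only the image hash is recorded here; add the URL field if your endpoint returns one.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest."""
    return hashlib.sha256(data).hexdigest()


def build_audit_record(
    request_id: str,
    user_id_hash: str,
    prompt: str,
    moderation: str,
    status_code: int,
    error_type: Optional[str],
    image_bytes: Optional[bytes],
    pre_check_passed: bool,
    post_check_passed: Optional[bool],
    rejection_reason: Optional[str] = None,
) -> str:
    """Serialize one call into a single JSON line covering the three log tiers."""
    record = {
        "request": {  # [L1] request-level
            "request_id": request_id,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user_id": user_id_hash,
            "prompt_sha256": sha256_hex(prompt.encode("utf-8")),
            "moderation": moderation,
        },
        "response": {  # [L2] response-level
            "status_code": status_code,
            "error_type": error_type,
            "image_sha256": sha256_hex(image_bytes) if image_bytes else None,
        },
        "moderation_checks": {  # [L3] moderation-level
            "pre_check_passed": pre_check_passed,
            "post_check_passed": post_check_passed,
            "rejection_reason": rejection_reason,
        },
    }
    return json.dumps(record, ensure_ascii=False)


print(build_audit_record("req-1", "hashed-user", "a red mug", "auto",
                         200, None, b"png-bytes", True, True))
```

Hashing the image bytes also gives you a stable fingerprint to cite later when you need to show that a specific image came from a specific logged call.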

🎯 Log Retention Recommendation: Legal audits typically require keeping complete call logs for at least 6-12 months. APIYI (apiyi.com) provides enterprise-grade request-level log search capabilities, allowing you to filter by user_id, time range, or error type, making it easier to support your company's internal compliance audits.

VII. gpt-image-2 Risk Matrix: Compliance Priorities for Different Business Scenarios

Not every gpt-image-2 application scenario requires "maximum compliance." Providing your legal team with a risk matrix helps business units allocate compliance resources based on priority.

7.1 Risk Matrix (Based on "Data Sensitivity × Commercial Use")

| Business Scenario | Data Sensitivity | Commercial Use | Overall Risk | Recommended Compliance Level |

|---|---|---|---|---|

| Internal PPT Illustrations | Low | Low (Internal) | 🟢 Low | Basic: SCC Filing |

| Marketing Assets (No faces) | Low | Medium (Public) | 🟡 Medium | Standard: SCC + General Content Moderation |

| E-commerce Product Images | Medium | High (Direct Sales) | 🟡 Medium | Standard: SCC + Trademark/IP Screening |

| User Avatar Gen (w/ faces) | High | Medium (User-facing) | 🟠 High | Advanced: PIPL Certification + Separate Consent |

| Celebrity Image Generation | High | Any | 🔴 Extreme | Not Recommended: Excessive legal risk |

| Government/Public Projects | High | Low | 🟠 High | Advanced: Security Assessment + Domestic Alternatives |

| SaaS Reselling | Medium | High | 🟠 High | Advanced: Full Compliance Chain + User Agreement |

7.2 Compliance Paths for Three Typical Enterprise Types

Type A: Small to Medium Internet Companies (< 100 employees)

Compliance Strategy: Pragmatic

- Channel: Choose a reputable domestic compliant API proxy service

- Documentation: Standard Contractual Clauses (SCC) filing is sufficient

- Content Moderation: Alibaba Cloud/Tencent Cloud Content Security API

- Logs: 6-month retention

- Budget: 50k - 100k RMB / year

Type B: Large Enterprises / Publicly Listed Companies

Compliance Strategy: Rigorous

- Channel: Prioritize providers with formal qualifications (Xinchuang/MLPS)

- Documentation: Standard Contract + PIPL Certification + Internal Compliance Manual

- Content Moderation: Dual-layer moderation + manual spot checks

- Logs: 12-month retention, auditable

- Budget: 300k - 500k RMB / year

Type C: State-Owned Enterprises / Government Projects

Compliance Strategy: Risk-Averse

- Channel: Use only Xinchuang-certified providers; prioritize domestic models

- Documentation: CAC Security Assessment

- Content Moderation: Three-layer moderation (Pre/Mid/Post)

- Logs: 36+ months, complete audit trail

- Budget: 1M+ RMB / year

7.3 Risk Incident Response Plan

Regardless of how robust your compliance is, you need an emergency plan. Risks related to gpt-image-2 generally fall into these four categories:

| Incident Type | Trigger Scenario | Immediate Response | Action Within 7 Days |

|---|---|---|---|

| User IP Infringement Complaint | Customer service receives third-party claim | Take down the image immediately | Initiate OpenAI Indemnification process |

| Content Violation Leak | Regulatory inquiry | Suspend service, take down all generated content | Cooperate with investigation, submit full logs |

| API Proxy Provider Issues | Provider flagged / goes out of business | Switch to backup endpoint | Assess data risk, notify users |

| Prompt Injection Attack | User bypasses prompt template for harmful content | Temporarily disable user-input | Upgrade pre-moderation mechanism |
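The "switch to backup endpoint" response in the table is easier to execute if failover is already built into the calling code. The following is a generic sketch, not tied to any SDK: `call_with_failover`, the `send` callable, and the example.com URLs are hypothetical, and the demo uses a fake sender so that it runs without network access.

```python
from typing import Callable, Optional, Sequence


def call_with_failover(endpoints: Sequence[str], send: Callable[[str], str]) -> str:
    """Try each endpoint in order and return the first successful result.

    Only connectivity errors trigger failover. Content rejections should
    surface to the caller instead of being retried on another endpoint.
    """
    last_error: Optional[Exception] = None
    for base_url in endpoints:
        try:
            return send(base_url)
        except ConnectionError as exc:
            last_error = exc
    raise RuntimeError("All endpoints failed") from last_error


# Hypothetical endpoints and a fake sender, for illustration only
ENDPOINTS = ["https://primary.example.com/v1", "https://backup.example.com/v1"]


def fake_send(base_url: str) -> str:
    if "primary" in base_url:
        raise ConnectionError("primary endpoint unreachable")
    return f"served by {base_url}"


print(call_with_failover(ENDPOINTS, fake_send))
```

In a real integration, `send` would wrap the image generation call for one base URL. Any backup endpoint should pass the same channel due diligence as the primary one.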

🎯 Emergency Capability Building: A complete response requires your API proxy service to provide "request-level log search" capabilities. APIYI (apiyi.com) provides enterprise-grade log interfaces, supporting real-time search by request_id, user_id, time range, and error type, significantly shortening response times during regulatory audits or user complaints.

7.4 Balancing Compliance Costs and Business Value

Finally, let's look at the ROI of compliance investment from a decision-maker's perspective:

| Investment Area | One-time Cost | Ongoing Cost | Business Value |

|---|---|---|---|

| Choosing the right API proxy | 0 | Near 0 | ⭐⭐⭐⭐⭐ |

| Deploying dual-layer content security | 50k-150k | 10k-20k/mo | ⭐⭐⭐⭐⭐ |

| SCC Standard Contract Filing | 20k-50k | 0 | ⭐⭐⭐⭐ |

| PIPL Certification | 100k-300k | 0 | ⭐⭐⭐ |

| Security Assessment | 500k-1M | 0 | ⭐⭐⭐ |

| Full Audit Log System | 50k-100k | 10k/mo | ⭐⭐⭐⭐⭐ |

Conclusion: Choosing the right API proxy is the highest-ROI compliance decision—the cost is near zero, yet it covers over 60% of your compliance risks.

VIII. gpt-image-2 Legal & Compliance FAQ

Q1: If our company is in China, will using gpt-image-2 be considered "illegal access to overseas services"?

No. China's "Cybersecurity Law" and "Data Security Law" do not prohibit companies from accessing overseas APIs for business purposes. The real compliance points are: (a) Is the cross-border data transfer compliant (for example, does the prompt contain personal information)? (b) Does the service provider have a legal entity in China? When you access the model through a compliant domestic API proxy service, requests first pass through the domestic provider's infrastructure, which significantly reduces the compliance friction of direct connections.

Q2: Legal asks, "If something goes wrong, who is liable?" How should we answer?

Define responsibilities layer by layer in the contract chain:

- OpenAI: Third-party IP infringement claims (via Indemnification, subject to compliant use).

- API Proxy Provider: Channel compliance, API key authenticity, and billing accuracy.

- Your Enterprise: Legality of input content, compliance of final use, and downstream user agreements.

- Downstream Users: Compliance of secondary usage (if you are a SaaS provider).

These four layers of responsibility should be clearly defined in your contracts, rather than just signing a "Standard Service Agreement."

Q3: gpt-image-2 vs gpt-image-1: What has changed legally?

There are no changes at the API terms level—both are subject to the same OpenAI Service Terms and Usage Policies. Technically, gpt-image-2 introduces agentic reasoning, performing more planning before generation. This means content safety filtering will be smarter but stricter—some edge-case prompts that passed on gpt-image-1 might be rejected on gpt-image-2. We recommend that legal teams ask the technical team to perform a regression test on historical prompts during version upgrades.

Q4: Can we register copyright for images generated by gpt-image-2?

Theoretically, you can try, but the success rate varies by jurisdiction:

- US Copyright Office: Explicitly does not accept copyright registration for purely AI-generated content.

- China: Some court precedents acknowledge "creative contributions by prompt engineers," but it still requires case-by-case judgment.

- EU: Generally conservative, leaning towards non-protection.

Practical Advice: If it's a core IP asset (e.g., brand logo, IP character), use gpt-image-2 for the draft, then have a designer perform substantial secondary creation to increase the success rate of copyright registration.

Q5: If the API proxy provider goes out of business, can we still use the images we generated?

Yes. OpenAI has assigned image ownership to the "user calling the API" (i.e., your enterprise). This is a contractual right, independent of the existence of the API proxy provider. The prerequisite is that you have saved complete request/response logs as proof of ownership.

Q6: Should the company mention gpt-image-2 in our ToS / Privacy Policy?

Highly recommended. Disclose three things:

- Your product/service uses the OpenAI gpt-image-2 model to generate images.

- User-provided prompts are transmitted to OpenAI/the API proxy provider.

- The scope of use for generated images (commercial/non-commercial, exclusive/non-exclusive).

Clear disclosure in your privacy policy is required by China's "Personal Information Protection Law" and helps the company claim "informed user consent" in the event of a dispute.

Q7: Can government/public institutions use gpt-image-2?

Yes, but they must do two extra things:

- Choose an API proxy provider with "Xinchuang/MLPS" qualifications to ensure the technical stack is compliant.

- Perform strict desensitization on prompt content; never transmit classified or politically sensitive information.

Q8: Do we need to label images generated by gpt-image-2 as "AI-generated"?

Highly recommended. Article 12 of the "Interim Measures for the Management of Generative Artificial Intelligence Services" by the CAC states:

"Providers shall label generated content such as images and videos in accordance with the 'Provisions on the Administration of Deep Synthesis of Internet Information Services'."

There are two ways to implement this:

- Explicit Labeling: Embed "AI-generated" text in the corner or metadata of the image.

- Implicit Watermarking: Add an invisible digital watermark (Provenance Watermark) to the image.

Images generated by gpt-image-2 embed C2PA metadata by default. C2PA (Coalition for Content Provenance and Authenticity) is an open industry standard for recording content provenance. However, companies must still perform explicit labeling based on local regulatory requirements.

Q9: If a user uploads an image for i2i (image-to-image), how is copyright handled?

This is a common gpt-image-2 scenario that requires special attention:

| Scenario | Input Image Copyright | Output Image Copyright |

|---|---|---|

| User uploads their own photo | User-owned | User (secondary creation) |

| User uploads someone else's work | Third-party owned | High legal risk |

| User uploads a photo with a celebrity's face | Involves portrait rights | Extremely high legal risk |

Best Practice: In your product ToS, require users to warrant that they hold the copyright and portrait rights to any images they upload, and keep logs of user behavior.

Q10: What if OpenAI suddenly restricts access from China?

Historically, OpenAI has adjusted access policies for mainland China several times. Compliant domestic API proxy providers usually have overseas nodes and multi-endpoint configurations, allowing for seamless switching when policies change. This is one of the core reasons why enterprises prioritize proxy solutions over self-built direct connections. We recommend writing "endpoint high-availability SLA" into your contract with the API proxy provider.

IX. Summary: Three Core Judgments for gpt-image-2 Legal Compliance

Returning to the question at the beginning of this article, if you need to address the concerns of corporate legal teams as concisely as possible, you only need these three points:

9.1 Three Core Judgments

✅ Judgment 1: There is no additional legal risk in using gpt-image-2 for commercial purposes.

Prerequisites: Use official direct channels + do not bypass safety mechanisms + do not use it to train competing models.

✅ Judgment 2: Ownership of generated images belongs to the corporate user invoking the API.

Note: Ownership does not equate to full copyright; for core IP assets, we recommend human-led secondary creation.

✅ Judgment 3: Enterprises have an obligation for "pre-content moderation."

Reason: OpenAI only provides general filtering; enterprises are responsible for jurisdiction-specific risks.

9.2 Three Actionable Recommendations for Corporate Legal Teams

- Establish a Due Diligence Checklist for API proxy services: Include at least three core areas: channel type, entity qualifications, and data retention policies.

- Implement a Two-Layer Content Security Mechanism: Combine OpenAI's safety filtering with local content security APIs to ensure coverage of jurisdiction-specific risks.

- Build a Comprehensive Invocation Logging System: Retain request, response, and moderation logs for at least 12 months to facilitate audits.

9.3 Best Practice Recommendations

🎯 Overall Recommendation: gpt-image-2 is perfectly safe to use in enterprise scenarios, provided you choose the right access method and implement supporting compliance mechanisms. We recommend that enterprises connect via an API proxy service like APIYI (apiyi.com), which offers a complete compliance chain. This platform supports corporate invoicing, provides full invocation logs, and passes through security error codes exactly as they are, meeting the verification standards of most corporate legal teams.

Compliance isn't about blocking business; it's about putting risks on the table. When you can present your legal team with a complete solution—including "channel compliance proof + two-layer content security + 12-month audit logs"—the approval process for gpt-image-2 is usually very smooth.

gpt-image-2 is currently one of OpenAI's most powerful image generation models, excelling in 2K resolution, text rendering, and complex composition. By doing your homework on legal compliance, your subsequent product iteration and commercialization path will be much smoother.

A final word for corporate legal teams: "The goal of compliance review isn't to say 'no,' but to figure out 'how to use it safely.'" We hope this article serves as a starting point for your decision on whether or not to use gpt-image-2.

If you encounter specific issues during the compliance review process—such as service provider qualification checklists, SCC contract templates, or content moderation API selection—these are topics that can be covered in future articles, and we will continue to provide practical guides.

Author: APIYI Team — An enterprise-grade Large Language Model API access platform (apiyi.com). We support unified API calls for 200+ mainstream models, including gpt-image-2, Claude 4.7, and Gemini 3 Pro, serving the compliance-driven access needs of listed companies, state-owned enterprises, and cross-border businesses.

Reference Terms: OpenAI Terms of Use, OpenAI Service Terms, OpenAI Usage Policies, OpenAI Indemnification Policy. This article does not constitute legal advice; please consult your company's legal team or a professional attorney for specific compliance decisions.