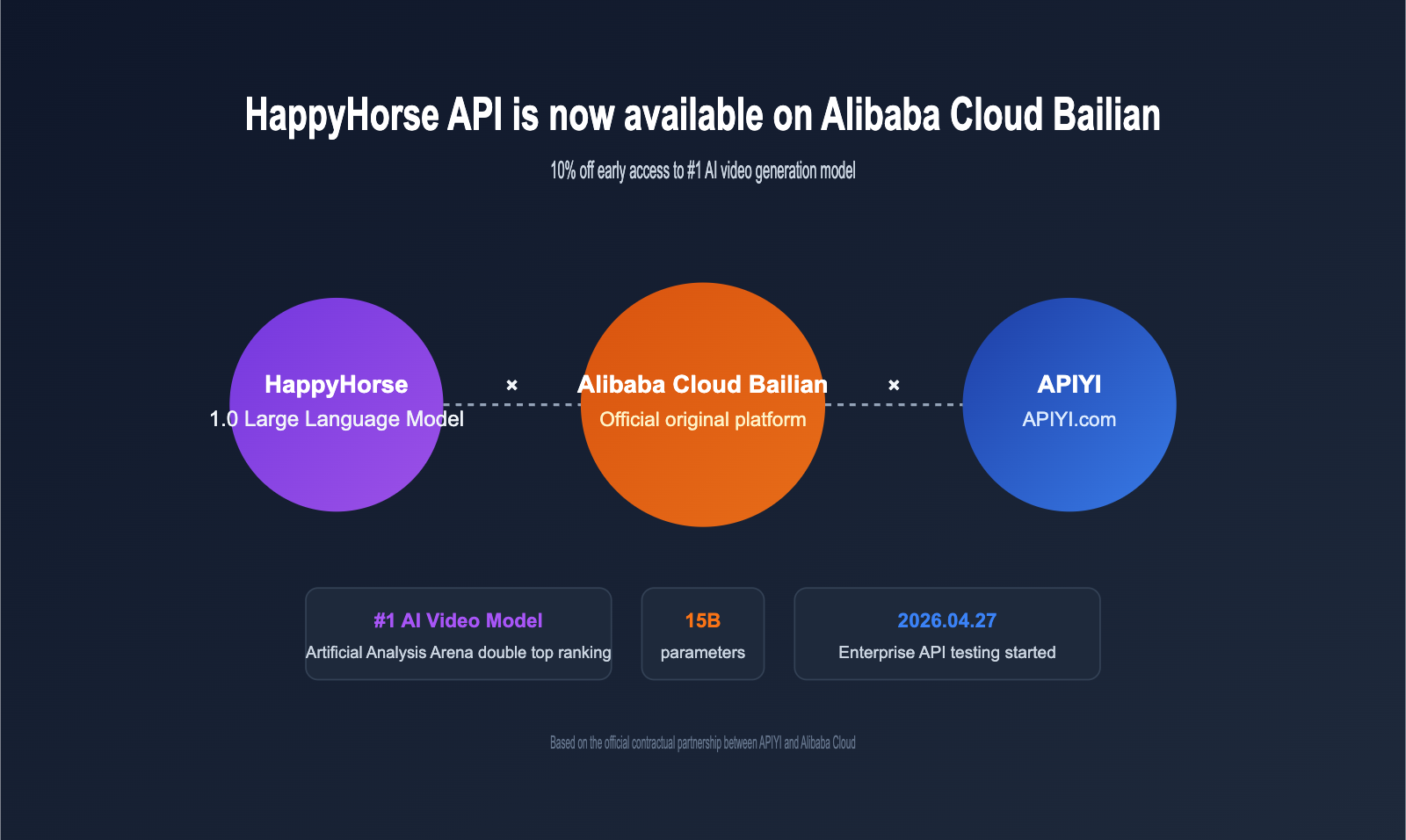

On April 27, 2026, Alibaba officially confirmed that the HappyHorse API has entered enterprise-level testing on the Alibaba Cloud Bailian platform. The video generation model, which holds the #1 spot on both the text-to-video and image-to-video leaderboards of the Artificial Analysis Video Arena, is moving from anonymous testing toward commercial availability, with the official commercial release scheduled for May 2026. With its 15B-parameter unified multimodal architecture, native 1080p HD output, and synchronized audio generation, the HappyHorse API has quickly become one of the most anticipated video models for developers and content teams alike.

As an official partner of Alibaba Cloud, APIYI (apiyi.com) has signed a formal cooperation agreement and is currently fast-tracking the integration of the HappyHorse API. Once launched, pricing for HappyHorse API calls on APIYI will match the official Alibaba Cloud Bailian portal. Additionally, we're offering a promotion where you get a $10 bonus with every $100 top-up (a 10% bonus, equivalent to roughly 9% off), allowing developers to use this top-tier video generation capability at a lower effective cost.

Quick Overview of HappyHorse API

Before we dive into the technical details of the HappyHorse API, let's break down the key facts of this release in the table below. This information is compiled from public English-language sources including Alibaba Cloud, Bloomberg, CNBC, and Artificial Analysis.

| Dimension | HappyHorse API Key Facts |
|---|---|
| Model Version | HappyHorse-1.0 |
| Development Team | Alibaba ATH Innovation Division (including Tongyi Lab, Alibaba Platform Technology, Taotian Tech) |
| Model Scale | ~15B parameters, 40-layer Transformer |
| Architecture Type | Unified single-stream Transformer |
| Core Capabilities | Text-to-video (T2V) / Image-to-video (I2V) / Native audio synchronization |
| Output Specs | Native 1080p HD, average ~10 seconds per generation |
| Visual Styles | 50+ (Cinematic, Anime, Documentary, Commercial, Sci-Fi, etc.) |
| Prompt Length | Up to 2,500 characters |
| Invocation Mode | Pro / Std (Standard mode) |
| Task Mode | Asynchronous task (POST /api/generate → GET /api/status) |
| Bailian Launch | Enterprise API testing started 2026-04-27 |
| Commercial Launch | Official commercial release in May 2026 |
| Leaderboard Results | T2V (no audio) Elo 1333, I2V (no audio) Elo 1392, both ranked #1 globally |

🎯 Integration Tip: If you're planning to complete your technical integration before the official commercial launch in May, we recommend keeping an eye on the HappyHorse API launch notification at APIYI (apiyi.com). Our platform's integration with Alibaba Cloud Bailian is based on an official partnership, ensuring pricing parity with the official Bailian service. Plus, we support switching between multiple video models under a unified interface, making it easier for you to evaluate the differences between the HappyHorse API and your existing video generation solutions.

HappyHorse API Technical Architecture Analysis

Unified Multimodal Design of HappyHorse API

The primary difference between HappyHorse-1.0 and previous-generation video models lies in its architecture. According to technical white paper details reported by media outlets such as Bloomberg, Heise, and TechNode, the model behind the HappyHorse API uses a unified single-stream Transformer architecture, which processes video tokens and audio tokens within the same sequence for joint training.

This design has three direct impacts:

- Native Audio-Visual Synchronization: Audio is not aligned post-generation; it is generated alongside the visuals. Consequently, lip-syncing, ambient sounds, and action sound effects naturally align on the physical timeline.

- Cross-Modal Semantic Sharing: When generating video, the model can "hear" the audio it is about to produce, ensuring that the visuals and sound remain consistent at the narrative level.

- Unified Inference Path: Text-to-video, image-to-video, and both audio-enabled and non-audio modes follow the same inference path. You only need to toggle parameters during API invocation.

The 40-layer Transformer uses a "sandwich" layout: the first and last 4 layers use modality-specific projections, while the middle 32 layers share parameters. This structure allows the HappyHorse API to retain unique representations for each modality while fully integrating cross-modal information in the backbone.
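The layer split described above can be checked with a few lines of Python. This is only an illustration of the 4 + 32 + 4 arithmetic, not the model's implementation; the function name and the labels "modality_specific" and "shared" are made up for this sketch.

```python
from collections import Counter

NUM_LAYERS = 40   # total depth stated in the article
EDGE_LAYERS = 4   # modality-specific layers at each end of the stack


def layer_plan(num_layers: int = NUM_LAYERS, edge: int = EDGE_LAYERS) -> list:
    """Label each layer index as modality-specific or shared."""
    plan = []
    for i in range(num_layers):
        if i < edge or i >= num_layers - edge:
            plan.append("modality_specific")  # first and last `edge` layers
        else:
            plan.append("shared")             # middle layers share parameters
    return plan


plan = layer_plan()
counts = Counter(plan)
print(counts["modality_specific"], counts["shared"])  # 8 32
```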

Asynchronous Task Model of HappyHorse API

The HappyHorse API operates on an asynchronous task model rather than returning results instantly. Developers first create a task via a POST request to obtain a task_id, then poll for the status via a GET request (or use a callback mechanism to receive completion notifications). This differs significantly from traditional synchronous conversational APIs.

Below is a minimal example of a HappyHorse API call:

```python
import time

import requests

# APIYI unified access endpoint (post-launch)
API_BASE = "https://vip.apiyi.com/v1"
API_KEY = "your_APIYI_key"

headers = {
    "Authorization": f"Bearer {API_KEY}",
    "Content-Type": "application/json",
}

# 1. Create video generation task
create_payload = {
    "model": "happyhorse-1.0/video",
    "prompt": "A white horse running in a golden wheat field, sunset glow, cinematic camera movement",
    "mode": "pro",        # pro or std
    "duration": 5,        # video duration (seconds)
    "with_audio": True,   # whether to generate synchronized audio
}

create_resp = requests.post(
    f"{API_BASE}/happyhorse/generate",
    headers=headers,
    json=create_payload,
    timeout=30,
)
create_resp.raise_for_status()  # stop early on HTTP errors
task_id = create_resp.json()["task_id"]

# 2. Poll task status, giving up after about 5 minutes
status = "pending"
result = {}
deadline = time.monotonic() + 300
while time.monotonic() < deadline:
    status_resp = requests.get(
        f"{API_BASE}/happyhorse/status/{task_id}",
        headers=headers,
        timeout=30,
    )
    status_resp.raise_for_status()
    result = status_resp.json()
    status = result["status"]
    if status in ("succeeded", "failed"):
        break
    time.sleep(3)

# 3. Get video URL
if status == "succeeded":
    video_url = result["output"]["video_url"]
    print(f"Video generated: {video_url}")
else:
    print(f"Task {task_id} did not succeed (last status: {status})")
```

💡 Code Note: The API_BASE in the example above uses the unified endpoint https://vip.apiyi.com/v1 provided by APIYI. Based on official partnerships, the HappyHorse API is fully compatible with the official Alibaba Cloud Bailian interface. You can use the same request structure without needing to rewrite your invocation logic for different video models.

Key Parameters for HappyHorse API

| Parameter | Type | Required | Description |
|---|---|---|---|
| model | string | Yes | Fixed as happyhorse-1.0/video |
| prompt | string | Yes | Text description, max 2500 characters |
| mode | string | No | pro or std, defaults to std |
| duration | int | No | Video duration (seconds), typically 3-10s |
| with_audio | boolean | No | Whether to generate synced audio, default false |
| image_url | string | No | Input image URL for image-to-video |
| style | string | No | Visual style tag (cinematic / anime / etc.) |
| aspect_ratio | string | No | Aspect ratio, e.g., 16:9 / 9:16 / 1:1 |
| seed | int | No | Random seed for reproducibility |
| webhook_url | string | No | Callback URL for task completion |
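Because the request body is a plain JSON object, it can help to validate it on the client before spending a call. The helper below is a sketch based only on the parameter table above; build_payload is a hypothetical name, and the checks (prompt length, allowed modes) reflect the limits stated in this article rather than a published schema.

```python
MAX_PROMPT_CHARS = 2500          # prompt limit from the parameter table
VALID_MODES = {"pro", "std"}     # invocation modes from the parameter table


def build_payload(prompt, *, mode="std", with_audio=False, duration=None,
                  image_url=None, style=None, aspect_ratio=None,
                  seed=None, webhook_url=None):
    """Build a request body, omitting optional fields that were not set."""
    if not prompt:
        raise ValueError("prompt is required")
    if len(prompt) > MAX_PROMPT_CHARS:
        raise ValueError(f"prompt exceeds {MAX_PROMPT_CHARS} characters")
    if mode not in VALID_MODES:
        raise ValueError(f"mode must be one of {sorted(VALID_MODES)}")

    payload = {
        "model": "happyhorse-1.0/video",
        "prompt": prompt,
        "mode": mode,
        "with_audio": with_audio,
    }
    optional = {
        "duration": duration,
        "image_url": image_url,      # set this for image-to-video
        "style": style,
        "aspect_ratio": aspect_ratio,
        "seed": seed,
        "webhook_url": webhook_url,
    }
    payload.update({k: v for k, v in optional.items() if v is not None})
    return payload


# Text-to-video draft: std mode, no audio, 5-second clip
draft = build_payload("A white horse running in a wheat field", duration=5)
print(draft)
```

Switching between text-to-video and image-to-video then comes down to whether image_url is passed, which matches the single-inference-path description earlier in the article.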

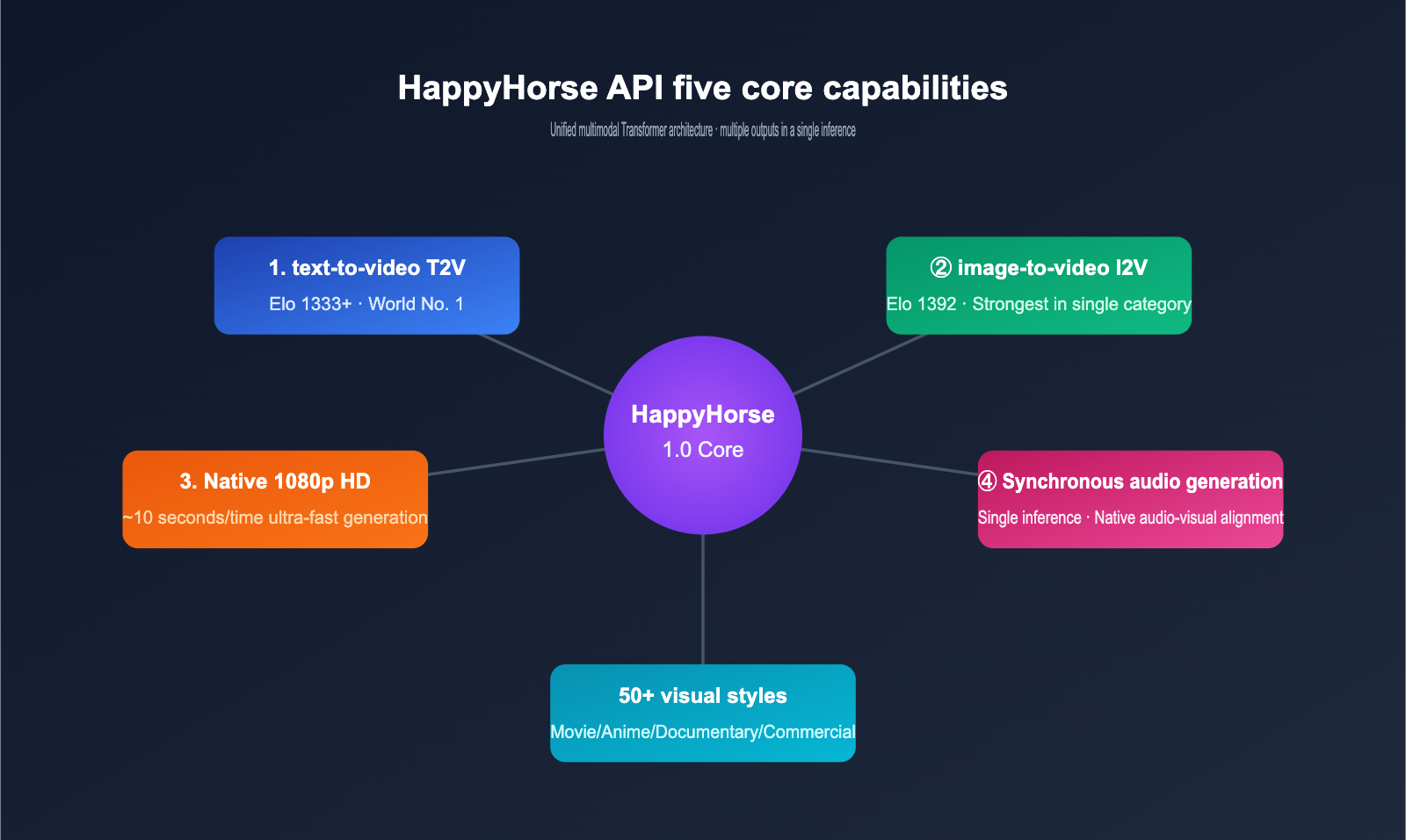

5 Core Capabilities of HappyHorse API

Capability 1: World-Leading Text-to-Video (T2V)

In blind tests on the Artificial Analysis Video Arena, the HappyHorse API's text-to-video mode (without audio) achieved an Elo score in the 1333-1357 range, ahead of the long-standing leader, ByteDance Seedance 2.0 (~1273), by approximately 60 points at the lower bound. At the top of a blind-vote leaderboard, that is a clear lead rather than a marginal one.
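To put a roughly 60-point gap in perspective, the sketch below converts an Elo difference into an expected head-to-head win rate. It assumes the standard logistic Elo formula with a 400-point scale; the Arena's exact rating method is not described in this article and may differ, and elo_expected_score is an illustrative name.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected win rate of A over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


# Ratings quoted in this article: HappyHorse T2V 1333, Seedance 2.0 ~1273
p = elo_expected_score(1333, 1273)
print(f"Expected win rate: {p:.1%}")
```

Under that assumption, a 60-point lead means the higher-rated model would be preferred in somewhat under 60% of pairwise votes.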

Regarding generation quality, the HappyHorse API excels in:

- Complex Motion Capture: High-speed actions like running, jumping, or vehicle steering avoid the "jelly effect" common in traditional video models.

- Physical Consistency: Physical processes such as gravity, fluids, and fabric movement closely mimic real-world laws.

- Long Prompt Adherence: The 2500-character prompt limit allows developers to describe scenes using complete scripts, with significantly higher model adherence than previous generations.

Capability 2: Best-in-Class Image-to-Video (I2V)

Image-to-video (I2V) is the HappyHorse API's most prominent strength, with an Elo score of 1392. In comparative tests published by fal.ai and WaveSpeedAI, the HappyHorse API leads in:

- Subject Identity Preservation: After inputting a portrait, the character's facial features, hairstyle, and clothing remain highly consistent throughout the generated video.

- Reasonable Scene Extension: After inputting a static scene, the model can logically infer the spatial structure beyond the frame.

- Controllable Camera Movement: Professional camera movements like zooming, panning, and orbiting can be specified via prompts.

Capability 3: Native Audio Synchronization

This is the most distinctive feature of the HappyHorse API. While traditional video models require generating audio separately and stitching it together, the HappyHorse API uses a unified Transformer to place audio tokens and visual tokens in the same sequence, outputting video and audio simultaneously in a single inference pass.

Supported audio types include:

- Character dialogue (with lip-syncing)

- Ambient sound (wind, flowing water, city backgrounds)

- Foley sound effects (footsteps, door closing, clinking tableware)

- Multilingual dubbing (based on language cues in the prompt)

Capability 4: Native 1080p HD and High-Speed Generation

The HappyHorse API outputs 1920×1080 native resolution by default, requiring no post-processing upscaling. Average generation time is approximately 10 seconds (for short video scenarios), making it one of the fastest in its class.

| Output Metric | HappyHorse API Performance |
|---|---|
| Default Resolution | 1920×1080 (1080p HD) |
| Frame Rate | 24 / 30 fps selectable |
| Generation Time (3s video) | ~10 seconds |
| Generation Time (5s video) | ~25 seconds |
| Generation Time (10s video) | ~60 seconds |
| Audio Sample Rate | 48 kHz synchronized generation |

Capability 5: 50+ Visual Style Presets

The HappyHorse API includes over 50 built-in visual style presets, covering mainstream categories such as cinematic, anime, documentary, commercial advertising, fashion, sci-fi, and vintage film. Developers can simply add style keywords to their prompt or specify them via the style parameter to achieve the corresponding aesthetic.

🎯 Style Usage Tip: For commercial content creation, we recommend comparing the actual performance of different HappyHorse API style presets on the APIYI (apiyi.com) platform. The platform allows a single account to call both the HappyHorse API and other video models, making it easy to run quick side-by-side evaluations before committing to large-scale production.

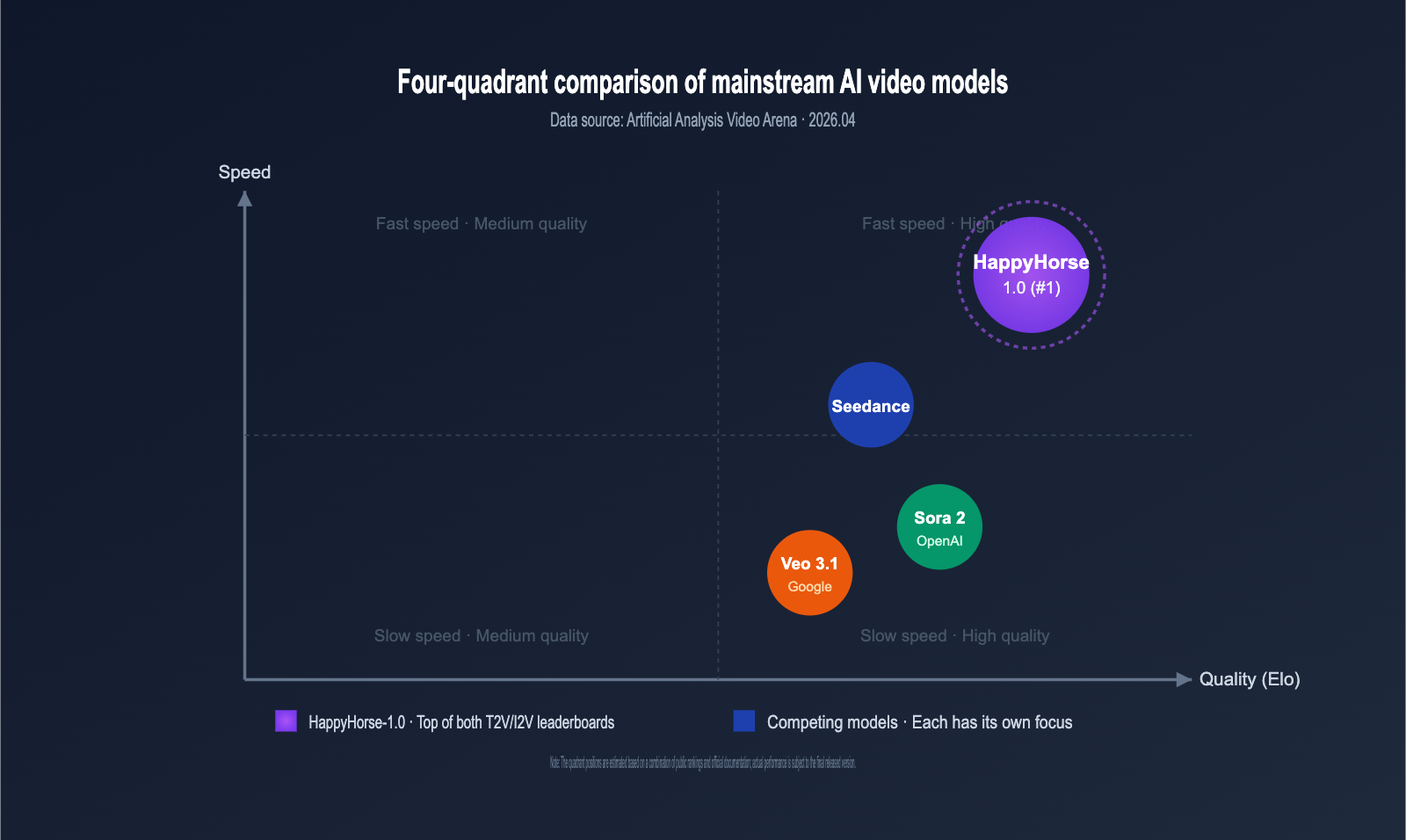

HappyHorse API vs. Mainstream Video Models

To help developers build a clear understanding of the landscape, we’ve compiled a comparison of the HappyHorse API against current mainstream video generation models. Data is sourced from Artificial Analysis, fal.ai, WaveSpeedAI, and official model documentation.

HappyHorse API vs. ByteDance Seedance 2.0

| Comparison Dimension | HappyHorse API | ByteDance Seedance 2.0 |
|---|---|---|
| Artificial Analysis T2V Elo | 1333-1357 (#1) | ~1273 (#2) |
| Artificial Analysis I2V Elo | 1392 (#1) | ~1331 (#2) |
| Native Audio Generation | ✅ Single-pass inference | ❌ Requires secondary synthesis |
| Default Resolution | 1080p HD | 1080p HD |
| Prompt Limit | 2500 characters | 1800 characters |
| Style Presets | 50+ | 30+ |
| Commercial Status | Commercialized May 2026 | Commercialized |

HappyHorse API vs. OpenAI Sora 2

| Comparison Dimension | HappyHorse API | OpenAI Sora 2 |
|---|---|---|
| Physical Consistency | Strong (Unified Transformer) | Strong (DiT architecture) |
| Audio-Video Sync | ✅ Native single-pass inference | ⚠️ Requires separate generation |
| Long Video Limit | Currently ~10 seconds | Up to 60 seconds |
| Chinese Prompt Adherence | Excellent (Native training) | Average (English-focused) |
| Domestic Access | ✅ Direct via Alibaba Cloud Bailian | ❌ Requires international routing |
| Price Transparency | High (Public Bailian pricing) | Medium (Subscription-based) |

HappyHorse API vs. Google Veo 3.1

| Comparison Dimension | HappyHorse API | Google Veo 3.1 |
|---|---|---|
| Text-to-Video Elo | 1333+ (#1) | ~1290 |
| 4K Output | Not supported yet | ✅ Supported |
| Multi-shot Narrative | Primarily single-shot | ✅ Supports multi-shot switching |
| Character Consistency | Strong | Medium |
| Ecosystem Availability | Primarily Alibaba Cloud Bailian | Google Cloud ecosystem |
| Overall Cost-Effectiveness | High (Domestic access advantage) | Medium (Requires international access) |

💡 Selection Advice: Different video models have their own strengths in physical realism, long-form video, 4K output, and multi-shot switching. We recommend using APIYI (apiyi.com) to switch between HappyHorse API, Sora, and Veo under a single interface for A/B testing. The platform has an official partnership with Alibaba Cloud, ensuring that HappyHorse API pricing remains consistent with Bailian, keeping your comparative costs under control.

HappyHorse API Access Paths: Alibaba Cloud Bailian vs. APIYI

Direct Access via Alibaba Cloud Bailian

Alibaba Cloud Bailian is the official platform for the HappyHorse API. Since April 27, 2026, Bailian has opened enterprise-level API testing, with full commercialization beginning in May. Direct access is ideal for:

- Enterprise users already deployed within the Alibaba Cloud ecosystem.

- Clients with strict requirements for domestic compliance and data localization.

- Teams that already have an Alibaba Cloud enterprise account and invoicing entity.

Access via APIYI

APIYI (apiyi.com) has signed an official partnership agreement with Alibaba Cloud and is currently finalizing the integration of the HappyHorse API. Once launched, the HappyHorse API call pricing on APIYI will be identical to the official Alibaba Cloud Bailian site, with added recharge bonuses. This is ideal for:

- Users who need to call multiple video models (HappyHorse, Sora, Veo, Runway, etc.) under a single interface.

- Individual developers or small teams looking to reduce the management overhead of multiple platform accounts.

- Users who value recharge incentives and promotional offers.

- Teams requiring USD billing, overseas invoicing, and multi-channel customer support.

Full Comparison of Access Paths

| Dimension | Direct Access (Alibaba Cloud Bailian) | Unified Access (APIYI) |
|---|---|---|
| HappyHorse API Pricing | Official list price | Same as Bailian official site |
| Recharge Promotions | Internal Alibaba Cloud events | $10 bonus for every $100 (10% bonus, approx. 9% off) |
| Access Difficulty | Medium (Requires enterprise credentials) | Low (Available to individuals/enterprises) |
| Video Model Variety | Primarily Alibaba-affiliated | HappyHorse + Sora + Veo + Runway, etc. |
| Customer Support | Primarily via ticketing | 24/7 WeChat/Ticketing dual-channel |
| Target Audience | Deep Alibaba Cloud ecosystem users | Cross-platform developers, content teams |
| Interface Compatibility | Alibaba native | OpenAI compatible + Alibaba native dual-protocol |
| Billing Method | RMB/Deduction packages | USD recharge with 10% bonus |

🎯 Path Recommendation: If you are already a heavy Alibaba Cloud user, connecting directly to Bailian is the natural choice. If you need to switch flexibly between multiple video models or want to deploy the HappyHorse API at a lower effective cost, we recommend keeping an eye on the launch progress at APIYI (apiyi.com). The platform integrates the HappyHorse API based on an official contract with Alibaba Cloud, offering pricing consistent with Bailian plus a 10% recharge bonus.

HappyHorse API Recharge Promotions and Pricing Strategy

Cost Calculation for HappyHorse API on APIYI

While the specific unit price for the HappyHorse API is determined by the official Alibaba Cloud Bailian pricing, APIYI's recharge bonus reduces your actual unit cost by approximately 9% (you pay $100 for $110 of balance). Taking a $100 recharge as an example:

| Item | Amount |
|---|---|
| Actual Recharge | $100 |
| Platform Bonus | $10 |
| Actual Account Balance | $110 |
| Equivalent Discount | Approx. 9.1% off (you pay 90.9% of the balance received) |
| Recharge Limit | No single-transaction limit; stackable |

For example, assuming the standard (std) mode cost for a single HappyHorse API generation on Bailian is $0.10 (an illustrative figure, not a published price), a $100 payment buys:

- Direct via Bailian: $100 ÷ $0.10 = approx. 1,000 generations

- Via APIYI with the bonus: $110 ÷ $0.10 = approx. 1,100 generations

The difference is equivalent to 100 extra free generations. For mid-sized content teams, this can significantly lower the marginal cost of video generation in just one month.
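The same arithmetic can be reproduced for any top-up amount or unit price. The sketch below uses the $0.10 figure from the example, which is an assumption for illustration and not a published Bailian price; generations_for is a hypothetical helper name.

```python
from decimal import Decimal


def generations_for(top_up: Decimal, unit_price: Decimal,
                    bonus_rate: Decimal = Decimal("0")) -> int:
    """Number of whole generations a top-up buys, including any bonus."""
    balance = top_up * (1 + bonus_rate)
    return int(balance // unit_price)


top_up = Decimal("100")
unit_price = Decimal("0.10")   # assumed price per std generation
bonus = Decimal("0.10")        # $10 bonus per $100 top-up

direct = generations_for(top_up, unit_price)
with_bonus = generations_for(top_up, unit_price, bonus)
# Effective discount: share of the received balance you did not pay for
effective_discount = 1 - top_up / (top_up * (1 + bonus))

print(direct, with_bonus)                 # 1000 1100
print(f"{float(effective_discount):.1%}")  # 9.1%
```

A 10% bonus on the balance therefore corresponds to about 9.1% off the unit price, which is the figure to use when comparing against a straight percentage discount.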

Estimated Cost Structure for Different HappyHorse API Modes

| Invocation Mode | Use Case | Relative Cost | Recommended Usage |
|---|---|---|---|
| std (Standard) | Internal testing, prototyping | Lower | Bulk material generation |
| pro (Professional) | Commercial content, final output | Higher | Key shots, client delivery |
| With Audio | Complete audiovisual products | Slightly higher than pro | Short videos, commercials |
| Without Audio | Independent post-production dubbing | Lowest | Visual asset library building |

💡 Cost Control Tip: We recommend using std mode without audio to generate a large number of drafts during the prototyping phase. Once you've selected the best options, switch to pro mode with audio for the final delivery version. This tiered invocation strategy is especially easy to implement on the APIYI (apiyi.com) platform, which supports flexible switching between different HappyHorse API modes under the same API key, with the 10% recharge bonus further reducing your unit costs.
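One way to encode the draft-then-final strategy is a small lookup from production stage to request options. This is a sketch: the stage names "draft" and "final" and the helper payload_for_stage are invented for illustration, while mode and with_audio come from the parameter table earlier in the article.

```python
# Request options per production stage (stage names are illustrative)
STAGE_OPTIONS = {
    "draft": {"mode": "std", "with_audio": False},  # cheap, high-volume drafts
    "final": {"mode": "pro", "with_audio": True},   # delivery-quality output
}


def payload_for_stage(prompt: str, stage: str) -> dict:
    """Return a request body using the options for the given stage."""
    if stage not in STAGE_OPTIONS:
        raise ValueError(f"unknown stage: {stage!r}")
    return {
        "model": "happyhorse-1.0/video",
        "prompt": prompt,
        **STAGE_OPTIONS[stage],
    }


prompt = "Close-up of a ceramic mug on a wooden table, morning light"
draft_payload = payload_for_stage(prompt, "draft")
final_payload = payload_for_stage(prompt, "final")
print(draft_payload["mode"], final_payload["mode"])  # std pro
```

If the seed parameter behaves as described in the table, reusing the seed from a chosen draft in the final request may help keep the two versions similar, though the article does not confirm reproducibility across modes.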

Typical Use Cases for HappyHorse API

HappyHorse API Use Case 1: Short Video Content Production

Short videos are the core battlefield for the HappyHorse API. The combination of a roughly 10-second average generation time, native audio, and 50+ style presets makes it possible to produce hundreds of short videos per day.

Typical workflow:

- Break down the script into 5-10 second segments.

- Use the HappyHorse API for text-to-video generation for each segment.

- Stitch together + simple editing + subtitles.
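The first two steps of this workflow can be sketched as follows. The example assumes the script is written with one shot per paragraph (separated by blank lines) and assigns every shot the same duration; both choices, and the name script_to_payloads, are assumptions for illustration.

```python
import re


def script_to_payloads(script: str, duration: int = 5, mode: str = "std") -> list:
    """Turn a script with one shot per paragraph into one request body per shot."""
    if not 5 <= duration <= 10:
        raise ValueError("duration should be 5-10 seconds for this workflow")
    # Paragraphs are separated by one or more blank lines
    shots = [p.strip() for p in re.split(r"\n\s*\n", script) if p.strip()]
    return [
        {
            "model": "happyhorse-1.0/video",
            "prompt": shot,
            "mode": mode,
            "duration": duration,
        }
        for shot in shots
    ]


script = """A barista opens a small cafe at dawn, warm interior light.

Steam rises from an espresso machine, close-up, shallow depth of field.

The first customer walks in, smiling, handheld camera."""

payloads = script_to_payloads(script)
print(len(payloads))  # 3
```

Each payload can then be submitted as a separate asynchronous task, and the resulting clips stitched together in the final step.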

HappyHorse API Use Case 2: E-commerce Product Videos

Image-to-video (I2V) is incredibly valuable in e-commerce. Merchants can upload a main product image, and the HappyHorse API automatically generates a showcase video, eliminating the need for re-shooting.

```python
ecommerce_payload = {
    "model": "happyhorse-1.0/video",
    "image_url": "https://cdn.example.com/product.jpg",
    "prompt": "360-degree product display, indoor soft lighting, e-commerce style",
    "mode": "pro",
    "duration": 5,
    "style": "commercial",
    "aspect_ratio": "1:1",
}
```

HappyHorse API Use Case 3: Advertising and Marketing Materials

Commercial advertisements require high visual quality and brand consistency. The combination of pro mode and cinematic style presets best showcases the cinematic feel of the HappyHorse API.

HappyHorse API Use Case 4: Education and Training Videos

Educational content requires precise expression of knowledge points. The HappyHorse API's prompt limit of up to 2,500 characters is perfect for describing teaching scenarios using a complete script.

HappyHorse API Use Case 5: Game and Film Storyboarding

During pre-production, directors often need to preview scripts with dynamic visuals. The physical consistency and camera control of the HappyHorse API make it a low-cost alternative for storyboarding.

HappyHorse API Integration FAQ

Q1: When will the HappyHorse API be officially available for commercial use?

According to the official announcement from Alibaba Cloud, the HappyHorse API entered enterprise-level testing on April 27, 2026, and is scheduled for official commercial release in May. APIYI has signed an official partnership agreement with Alibaba Cloud and is currently finalizing the integration. Our launch will align with Alibaba Cloud's commercial rollout. Please keep an eye on announcements at apiyi.com for specific dates.

Q2: Will the HappyHorse API be more expensive on APIYI than on Alibaba Cloud Bailian?

No. Because APIYI has an official partnership with Alibaba Cloud, our pricing for the HappyHorse API will be identical to the official Alibaba Cloud Bailian platform. Furthermore, APIYI offers a promotion where you get $10 extra for every $100 deposited. That 10% bonus works out to roughly 9% off the official rate, making your actual costs lower than connecting directly to Bailian.

Q3: Does the HappyHorse API support Chinese prompts?

Yes, fully. HappyHorse was developed by Alibaba's ATH innovation department, with core contributions from the Tongyi Lab. The model has undergone deep training on Chinese corpora, resulting in semantic understanding and scene reconstruction capabilities that significantly outperform international competitors primarily focused on English.

Q4: How long can the videos generated by the HappyHorse API be?

During the testing phase, video durations are primarily limited to 10 seconds or less, consistent with the test samples found on the Artificial Analysis leaderboard. Whether this will be extended to longer videos after the commercial launch depends on future announcements from Alibaba Cloud Bailian. If you need long, multi-shot videos, we recommend using a "segment-and-stitch" approach.
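For the stitching half of "segment-and-stitch", one common option is FFmpeg's concat demuxer, which reads a text file listing the clips in order. The sketch below only builds that list file's contents; it assumes the clips have already been downloaded locally, and the file names are placeholders.

```python
def concat_list_text(clip_paths: list) -> str:
    """Build the contents of an FFmpeg concat-demuxer list file."""
    lines = []
    for path in clip_paths:
        # Inside single quotes, a literal quote is written as '\''
        escaped = str(path).replace("'", "'\\''")
        lines.append(f"file '{escaped}'")
    return "\n".join(lines) + "\n"


clips = ["shot_01.mp4", "shot_02.mp4", "shot_03.mp4"]  # placeholder file names
list_text = concat_list_text(clips)
print(list_text)
```

After saving the text as clips.txt, running `ffmpeg -f concat -safe 0 -i clips.txt -c copy output.mp4` joins the clips without re-encoding. Stream copy only works when all clips share the same codec, resolution, and frame rate, so generating every segment with identical settings is advisable; otherwise drop `-c copy` and let FFmpeg re-encode.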

Q5: Should I choose HappyHorse API, Sora, or Veo?

If your primary focus is on Chinese-language scenarios, native audio-video synchronization, and cost transparency, the HappyHorse API is currently your best choice. If you require long-form video (60 seconds+) or native 4K output, you might consider Sora or Veo. We recommend running a side-by-side test using the unified interface at apiyi.com. Our platform supports HappyHorse API, Sora, and Veo, and our official partnership ensures that pricing remains consistent with official rates, keeping your evaluation costs under control.

Q6: Does the HappyHorse API support callback notifications to avoid polling?

Yes. The HappyHorse API provides a webhook callback mechanism. When creating a task, you can specify a callback address via the webhook_url parameter. Once the task is complete, the API will send a POST request to notify you, eliminating the need for polling.
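A callback receiver can be very small. The sketch below uses only the Python standard library and assumes the callback body is JSON with the same task_id, status, and output.video_url fields as the polling response; the article does not specify the callback format, so treat those field names, the port, and the function names as assumptions to verify against the official documentation.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def parse_callback(body: bytes):
    """Extract (task_id, status, video_url) from an assumed JSON callback body."""
    data = json.loads(body)
    video_url = (data.get("output") or {}).get("video_url")
    return data["task_id"], data["status"], video_url


class CallbackHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        try:
            task_id, status, video_url = parse_callback(self.rfile.read(length))
        except (ValueError, KeyError, TypeError, AttributeError):
            self.send_response(400)  # malformed or unexpected body
            self.end_headers()
            return
        print(f"task {task_id}: {status} {video_url or ''}")
        self.send_response(200)      # acknowledge receipt
        self.end_headers()


def serve(port: int = 8000) -> None:
    """Start the receiver; the public URL of this server goes in webhook_url."""
    HTTPServer(("0.0.0.0", port), CallbackHandler).serve_forever()
```

Calling serve() starts the listener. In production you would also want HTTPS and some way to authenticate the sender, since any client that knows the URL can post to it.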

Q7: What are the rate limits for the HappyHorse API?

During the testing phase, rate limits are governed by Alibaba Cloud Bailian's announcements, typically measured in both RPM (requests per minute) and TPM (tasks per minute). Once the HappyHorse API is live on APIYI, we will provide the same official rate limits as Bailian, and enterprise users can apply for quota increases.

Q8: Can videos generated by the HappyHorse API be used commercially?

During the testing phase, the service is prioritized for enterprise customers, and commercial usage scope is subject to the Alibaba Cloud Bailian user agreement. Generally, users hold commercial rights to content generated through paid API calls, but we recommend confirming specific terms with our customer support team before signing any agreements.

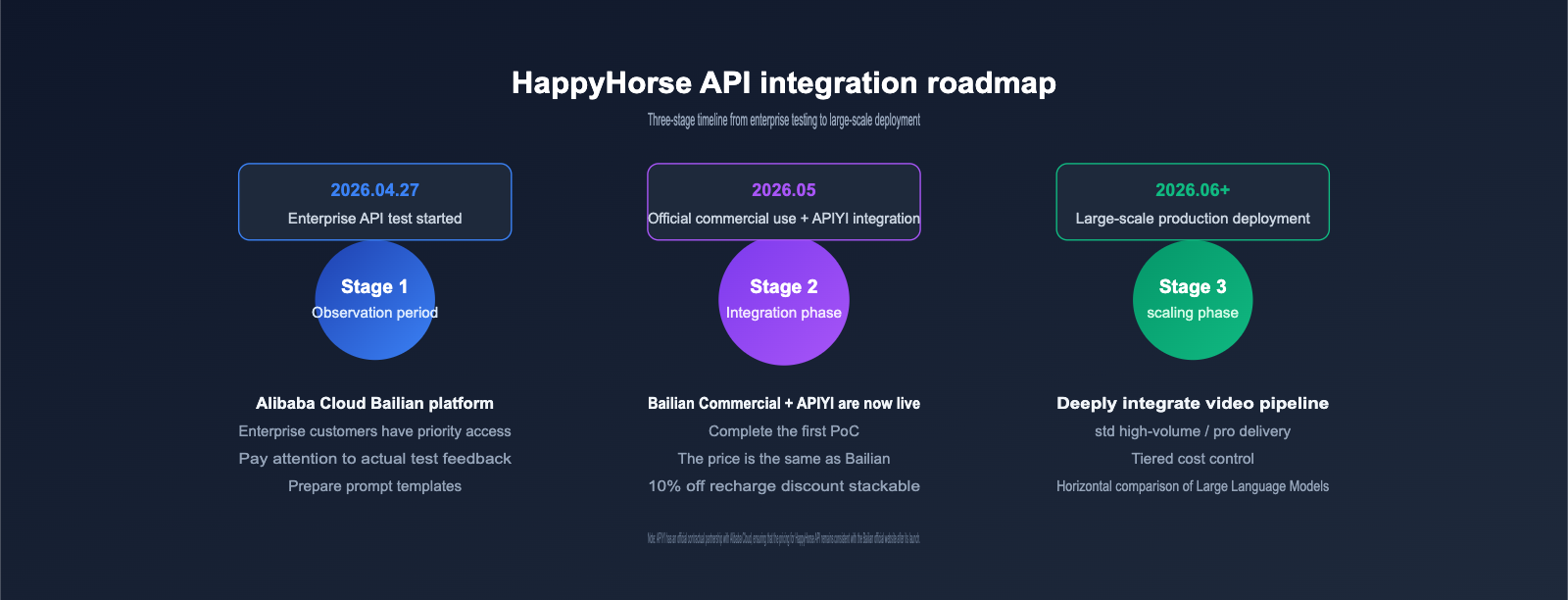

HappyHorse API Integration Roadmap and Recommendations

For developers and teams planning to use the HappyHorse API as soon as it's available, we've put together a phased recommendation:

Phase 1: Observation (2026.04.27 – 2026.05)

- Keep an eye on official Alibaba Cloud Bailian announcements for test feedback on the HappyHorse API.

- Read real-world test cases from English-language communities like Artificial Analysis, fal.ai, and WaveSpeedAI.

- Prepare your prompt templates and asset libraries in anticipation of the commercial window.

Phase 2: Integration (May 2026, First Month of Commercialization)

- For Alibaba Cloud ecosystem users, connect directly to Bailian for quick validation.

- For those needing multi-model comparisons, watch for the HappyHorse API launch announcement at apiyi.com.

- Complete your first Proof of Concept (PoC) to verify the actual performance of the HappyHorse API within your business workflows.

Phase 3: Scaling (From June 2026 onwards)

- Establish your cost baseline on the APIYI platform using the $100 + $10 bonus promotion.

- Use the standard mode for high-volume tasks and the pro mode for final delivery to manage costs effectively.

- Deeply integrate the HappyHorse API into your existing video production pipelines.

🎯 Overall Recommendation: The HappyHorse API is one of the most noteworthy video model releases of 2026. We suggest that technical teams complete a PoC as soon as possible, while content teams should begin building out prompt and style preset libraries. On the apiyi.com platform, you can complete this setup at a lower cost thanks to our 10% deposit bonus. Our official partnership with Alibaba Cloud ensures that pricing remains consistent with the official Bailian site, while also allowing you to perform side-by-side comparative testing with other mainstream video models.

HappyHorse API Launch Summary

The release of the HappyHorse API marks a new phase for video generation models, moving from "single-modal stitching" to "unified multimodal generation." With 15B parameters, a 40-layer unified Transformer, native audio-video synchronization, and a #1 ranking on the Artificial Analysis dual leaderboard, the HappyHorse API is a highly competitive force in the 2026 video model market.

Alibaba Cloud Bailian, as the original platform, opened enterprise testing on April 27, with full commercial availability starting in May. APIYI (apiyi.com), through our official partnership with Alibaba Cloud, is working hard to integrate the HappyHorse API. Once launched, our pricing will remain consistent with the official Bailian website. Plus, with our current promotion of a $10 bonus for every $100 topped up, you effectively pay about 9% less per call, making us a convenient access point for individual developers and cross-platform content teams.

For those looking to get a head start with the HappyHorse API, we recommend the following:

- Register for an APIYI (apiyi.com) account in advance and keep an eye out for the HappyHorse API launch announcement.

- Prepare your prompt templates and test assets so you're ready to go as soon as access opens.

- Take advantage of our top-up promotion to optimize your costs for both PoC and large-scale production.

The next round of competition in video generation has begun. Choosing the right access path means securing a more efficient cost structure for your video content production over the next six months.

Author: APIYI Technical Team

Last Updated: 2026-04-27

Contact: Visit the APIYI website at apiyi.com for the latest HappyHorse API integration progress and top-up promotion details.