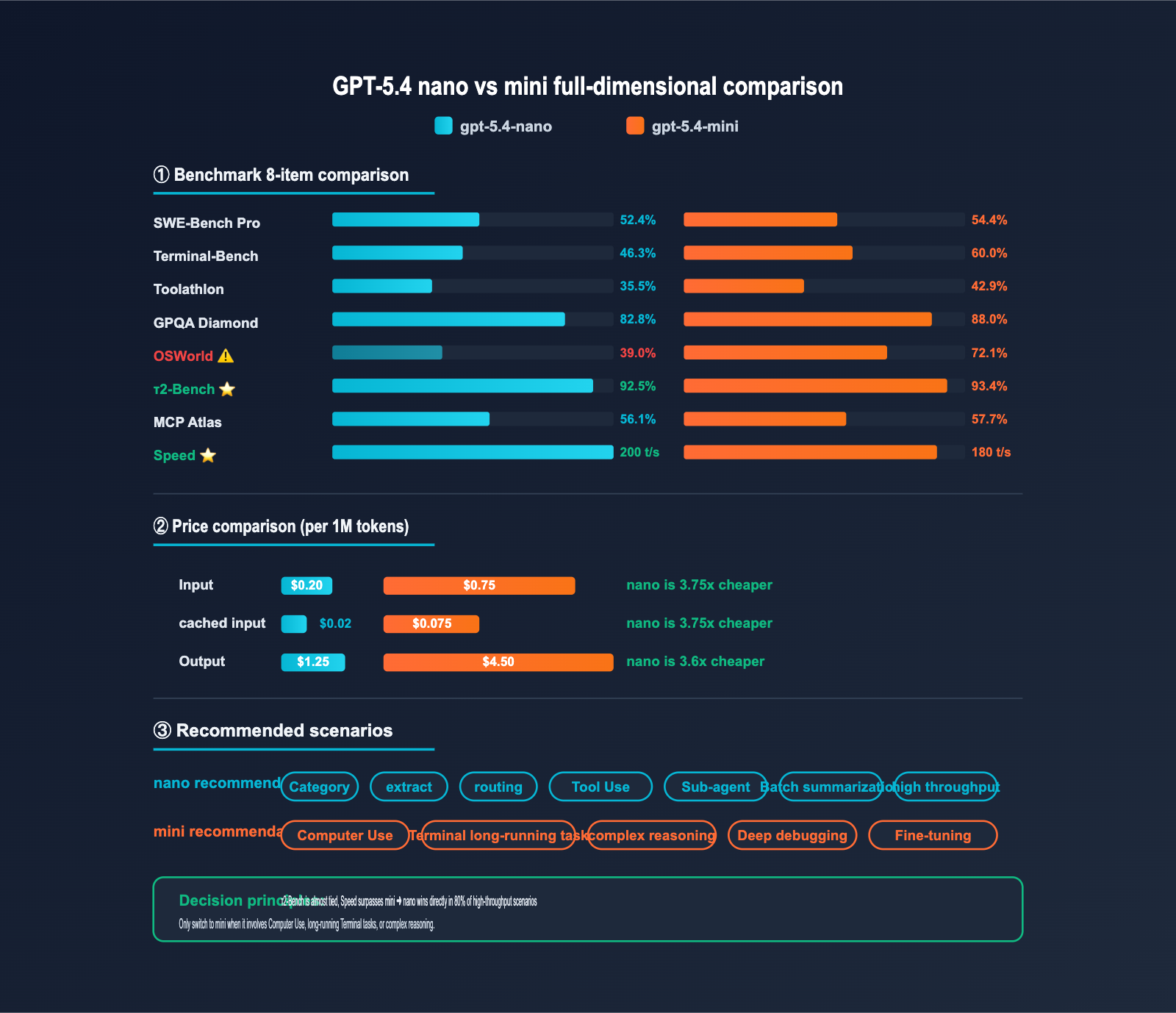

Author's Note: OpenAI's cheapest model, gpt-5.4-nano, is priced at just $0.20/$1.25. With a τ2-Bench score of 92.5%, it’s nearly on par with the mini model. This article provides a detailed breakdown of the 7 best use cases for nano, when you should swap it for mini, and an ultimate optimization strategy using 90% discount caching.

If your application handles over 10,000 calls per day, or if you're selecting models for high-throughput tasks like customer support, classification, or RAG routing, you might have noticed that OpenAI has pushed the "floor price" of the GPT-5.4 series to a new low — gpt-5.4-nano at $0.20 input / $1.25 output per 1M tokens, which is 3.75x cheaper on input than the 5.4-mini.

This isn't just a "stripped-down cheap model." OpenAI's published benchmarks show that nano hits 92.5% in tool calling (τ2-Bench), nearly matching the mini's 93.4%. In general knowledge QA (GPQA Diamond), it scores 82.8%, only 5.2 percentage points behind the mini. This means for a vast number of "high-throughput + low-complexity" scenarios, nano is the true optimal solution.

Core Value: This article dives into 7 specific application scenarios, detailing where nano is "good enough and cheaper," where you "must use mini," and provides code snippets and cost estimates for each.

Core Highlights of GPT-5.4 nano Use Cases

| Feature | Description | Value |

|---|---|---|

| Ultra-low price | $0.20 / $1.25 per 1M tokens | 3.75x cheaper than 5.4-mini |

| Caching -90% | Cached input only $0.02 / 1M | Nearly free for high-frequency context |

| Tool use near mini | τ2-Bench 92.5% vs mini 93.4% | Sufficient for most tool use cases |

| Strong QA | GPQA Diamond 82.8% | Capable of general FAQ and knowledge retrieval |

| 400K context window | 400K input + 128K output | No pressure for bulk document processing |

| Leading speed | ~200 t/s, 10% faster than mini | Top choice for high-throughput pipelines |

How to determine the "sufficiency threshold" for GPT-5.4 nano

To decide if nano is sufficient, you can use a simple "three-zone classification":

Green Zone (Use nano with confidence): Tool calling, structured extraction, classification/labeling, knowledge QA, content routing, bulk translation/summarization — for these tasks, the performance gap between nano and mini is < 10 percentage points, and the price advantage far outweighs the capability gap.

Yellow Zone (Evaluate carefully): Complex multi-step reasoning, long-chain Agent orchestration, code generation — while it can still handle SWE-Bench Pro at 52.4%, we recommend running an A/B test with nano before making a final decision.

Red Zone (Use mini directly): Computer Use (nano is only 39% on OSWorld), long terminal tasks (46.3% is weaker), and custom scenarios requiring fine-tuning — in these cases, nano's performance clearly lags behind, so go with mini or the standard model.

GPT-5.4 nano Use Case 1: Real-time Classification

Scenario Description

Real-time classification is the classic use case for the nano model—this includes sentiment analysis, intent recognition, topic tagging, and content moderation. These tasks typically require only a few hundred tokens for input and a few dozen for output per call, making them extremely sensitive to latency and cost.

Minimal Code Example

import openai

import json

client = openai.OpenAI(

api_key="YOUR_API_KEY",

base_url="https://vip.apiyi.com/v1"

)

def classify_intent(user_query: str) -> dict:

"""Classify user query intent"""

response = client.chat.completions.create(

model="gpt-5.4-nano",

messages=[

{"role": "system", "content": "You are an intent classifier. Return in JSON format: {intent, confidence, sub_category}"},

{"role": "user", "content": user_query}

],

response_format={"type": "json_object"}

)

return json.loads(response.choices[0].message.content)

# Usage

result = classify_intent("I want to cancel my order from last week")

# {"intent": "refund_request", "confidence": 0.95, "sub_category": "subscription_cancel"}

Cost Estimation

| Scenario Scale | Cost per Call | Daily Cost (100k calls) |

|---|---|---|

| Entry-level Support (50 in + 20 out) | $0.000035 | $3.5 |

| Mid-sized SaaS (200 in + 30 out) | $0.000078 | $7.8 |

| Enterprise-level (500 in + 50 out) | $0.000163 | $16.3 |

💡 Optimization Tip: Place your classification labels and examples in the system prompt. Once caching is enabled, input costs can drop by another 90%. When calling via APIYI (apiyi.com), cache discounts are fully synchronized.

GPT-5.4 nano Use Case 2: Data Extraction

Scenario Description

Extracting structured fields from unstructured text (resumes, contracts, news, emails) is where nano shines. When combined with Structured Outputs (strict JSON Schema enforcement), you can achieve a 99%+ success rate for formatting.

Practical Code Example

from pydantic import BaseModel

from typing import Optional

class ContactInfo(BaseModel):

name: str

email: Optional[str]

phone: Optional[str]

company: Optional[str]

role: Optional[str]

def extract_contact(text: str) -> ContactInfo:

response = client.beta.chat.completions.parse(

model="gpt-5.4-nano",

messages=[

{"role": "system", "content": "Extract contact information; return null for missing fields."},

{"role": "user", "content": text}

],

response_format=ContactInfo

)

return response.choices[0].message.parsed

Extraction Tasks Suited for nano

- Resume/CV key field extraction

- Invoice/receipt digit recognition

- Email signature block parsing

- News entity recognition (names, locations, organizations)

- Form data normalization

- Log event categorization

GPT-5.4 nano Use Case 3: Content Reranking

Scenario Description

Reranking search results, recommendation lists, and message queues. The low cost of nano makes using an LLM as a reranker economically viable in production environments.

Reranking Code Example

def rerank_documents(query: str, candidates: list[str], top_k: int = 5) -> list:

"""Rerank candidate documents based on query relevance"""

docs_text = "\n".join([f"[{i}] {doc[:300]}" for i, doc in enumerate(candidates)])

response = client.chat.completions.create(

model="gpt-5.4-nano",

messages=[{

"role": "user",

"content": f"""Rank the following documents by relevance based on the query "{query}".

Documents:

{docs_text}

Return JSON: {{"ranking": [list of document indices, from most relevant to least relevant]}}"""

}],

response_format={"type": "json_object"}

)

ranking = json.loads(response.choices[0].message.content)["ranking"]

return [candidates[i] for i in ranking[:top_k]]

🎯 Scenario Tip: nano reranking offers higher accuracy than traditional BM25 + vector search rerankers, while costing only 27% of GPT-5.4-mini. You can access it directly via APIYI (apiyi.com); no application is required for the Default group.

GPT-5.4 nano Use Case 4: Sub-agent Execution Layer

Scenario Description

In multi-agent architectures, the main agent (usually a mini or standard model) handles planning, while the sub-agent (execution worker) manages specific tool calls, data queries, and status updates. With a 92.5% score on τ2-Bench, nano is perfectly capable of serving as a worker.

Multi-agent Collaboration Example

def execute_subtask(task: dict, available_tools: list) -> dict:

"""nano acting as a Sub-agent to execute a single subtask"""

response = client.chat.completions.create(

model="gpt-5.4-nano",

messages=[

{"role": "system", "content": f"You are an execution worker. Available tools: {available_tools}"},

{"role": "user", "content": f"Execute task: {task['description']}"}

],

tools=task.get("tools", []),

tool_choice="auto"

)

return {

"task_id": task["id"],

"result": response.choices[0].message.content,

"tool_calls": response.choices[0].message.tool_calls

}

# Main Agent uses mini, Sub-agent uses nano — saving 60%+ in costs

GPT-5.4 nano Use Case 5: RAG Routing Layer

Scenario Description

In a RAG system, the nano model acts as a "routing layer" to determine the query type (technical question / pre-sales inquiry / product feedback / small talk) and dispatches it to the appropriate processor. This design ensures that the more expensive mini or standard models are only invoked when truly necessary.

RAG Routing Example

def route_query(query: str) -> str:

"""nano determines which RAG processor to route the query to"""

response = client.chat.completions.create(

model="gpt-5.4-nano",

messages=[

{"role": "system", "content": """Return a routing label based on the query type:

- "technical_docs": Technical documentation query

- "product_faq": Product FAQ

- "code_help": Code assistance

- "small_talk": Small talk (no RAG needed)

- "complex_reasoning": Complex reasoning (forward to mini/standard model)"""},

{"role": "user", "content": query}

],

max_tokens=20

)

return response.choices[0].message.content.strip()

route = route_query(user_input)

if route == "complex_reasoning":

final_model = "gpt-5.4-mini" # Upgrade to mini

else:

final_model = "gpt-5.4-nano" # Continue with nano

💰 Cost Optimization: This "nano routing + mini/standard processing" architecture can typically reduce overall model invocation costs by 60-80%. You can flexibly switch between these models using the same API key via APIYI (apiyi.com) by simply modifying the model parameter.

GPT-5.4 nano Use Case 6: High-Throughput Summarization and Translation

Scenario Description

This is ideal for batch processing tasks like news summarization, document translation, and comment rewriting. With a 400K context window, nano can process entire long documents in one go, and the cost per item is virtually negligible.

Batch API Example

# Prepare batch tasks

batch_requests = []

for doc_id, content in documents.items():

batch_requests.append({

"custom_id": f"summary-{doc_id}",

"method": "POST",

"url": "/v1/chat/completions",

"body": {

"model": "gpt-5.4-nano",

"messages": [

{"role": "system", "content": "Summarize the following content in 100 words"},

{"role": "user", "content": content}

],

"max_tokens": 200

}

})

# Submit Batch API (same price but doesn't consume online quota)

batch = client.batches.create(

input_file_id=file_id,

endpoint="/v1/chat/completions",

completion_window="24h"

)

GPT-5.4 nano Use Case 7: Tool Use

Scenario Description

On the τ2-Bench, the nano model achieved a score of 92.5%, nearly matching the mini model's 93.4%. For standardized function calling scenarios like "checking the weather, tracking orders, or querying documents," nano is more than capable of handling the job.

Function Calling Example

tools = [{

"type": "function",

"function": {

"name": "get_order_status",

"description": "Query the status of an order",

"parameters": {

"type": "object",

"properties": {

"order_id": {"type": "string"}

},

"required": ["order_id"]

}

}

}]

response = client.chat.completions.create(

model="gpt-5.4-nano",

messages=[{"role": "user", "content": "What is the status of my order #12345?"}],

tools=tools,

tool_choice="auto"

)

# nano accurately identifies the need to call get_order_status and extracts order_id="12345"

GPT-5.4 nano Pricing Breakdown

Official Pricing Structure

| Billing Type | Price (per 1M tokens) | Notes |

|---|---|---|

| Input | $0.20 | Standard pricing |

| Cached Input | $0.02 | 90% discount |

| Output | $1.25 | Includes reasoning tokens |

| Batch API | $0.20 / $1.25 | Same price, does not count against online quota |

| Regional Data Residency | +10% | For data compliance scenarios |

nano vs. mini Price Comparison

| Dimension | gpt-5.4-nano | gpt-5.4-mini | Ratio |

|---|---|---|---|

| Input | $0.20 | $0.75 | nano is 3.75x cheaper |

| Cached Input | $0.02 | $0.075 | nano is 3.75x cheaper |

| Output | $1.25 | $4.50 | nano is 3.6x cheaper |

| Response Speed | ~200 t/s | ~180 t/s | nano is ~10% faster |

| Context Window | 400K | 400K | Same |

| Max Output | 128K | 128K | Same |

💰 Cost Optimization: For high-throughput scenarios with millions of requests per day, the price difference between nano and mini can add up to thousands of dollars per month. By accessing via APIYI (apiyi.com), you can also enjoy a 10% bonus on $100+ top-ups, which is equivalent to 15% off the official price, potentially reducing your total costs by up to 25% compared to the official site.

Comprehensive Benchmark Comparison: GPT-5.4 nano vs. mini

| Metric | gpt-5.4-nano | gpt-5.4-mini | Gap | Is nano sufficient? |

|---|---|---|---|---|

| SWE-Bench Pro | 52.4% | 54.4% | -2.0pp | ✅ Nearly tied |

| Terminal-Bench 2.0 | 46.3% | 60.0% | -13.7pp | ⚠️ Use mini for long tasks |

| Toolathlon | 35.5% | 42.9% | -7.4pp | ✅ Good for general tasks |

| GPQA Diamond | 82.8% | 88.0% | -5.2pp | ✅ Capable for Q&A |

| OSWorld-Verified | 39.0% | 72.1% | -33.1pp | ❌ Use mini for Computer Use |

| τ2-Bench(Tool Use) | 92.5% | 93.4% | -0.9pp | ✅ Nearly tied |

| MCP Atlas | 56.1% | 57.7% | -1.6pp | ✅ Nearly tied |

| Response Speed | ~200 t/s | ~180 t/s | +10% | ✅ nano is actually faster |

Selection Recommendations

When to prioritize nano:

- Tasks in the "green zone" (classification, extraction, sorting, routing, tool use, batch processing)

- High volume (>10k requests/day) where cost is a concern

- Need for low-latency responses (<1 second)

- Sub-agent execution layers (use mini for the main agent, nano for workers)

When to upgrade to mini:

- Tasks involving Computer Use (significant performance gap in OSWorld)

- Long terminal tasks (>10 steps)

- Complex multi-step reasoning or deep code debugging

- When task quality is more critical than cost

📊 Trade-off Advice: For 80% of "high-throughput + low-complexity" scenarios, nano offers unbeatable cost-effectiveness compared to mini. You can use the APIYI API proxy service (apiyi.com) to directly compare the performance of both models on your specific tasks by simply swapping the

modelparameter.

Accessing GPT-5.4 nano via APIYI

Available in the Default Group

The APIYI platform applies the same open-access policy to both GPT-5.4 nano and 5.4-mini:

- ✅ Default Group: Fully open; available for new users immediately upon registration.

- ✅ SVIP Group: Fully open; no restrictions.

- ✅ Cache Discount Sync: The $0.02/1M cache pricing is fully supported.

- ✅ Batch API Sync: Batch tasks enjoy the same pricing.

APIYI vs. Official Pricing Comparison

| Item | OpenAI Official | APIYI (apiyi.com) |

|---|---|---|

| Base Price | $0.20 / $1.25 per 1M | $0.20 / $1.25 per 1M (Same) |

| Cache Discount | $0.02 / 1M (90%) | $0.02 / 1M (Fully synced) |

| Top-up Bonus | None | Deposit $100, get $10 free (10%) |

| Actual Cost | 100% standard price | |

| Domestic Access | Requires VPN | Direct access, no VPN needed |

| Payment Methods | International Credit Card | RMB, Alipay, WeChat Pay |

| SDK Compatibility | OpenAI Native | Fully compatible with OpenAI SDK |

| Min. Deposit | $5 | Starts from $1 |

💰 Cost Optimization: For applications with over 1 million calls per month, accessing nano via APIYI (apiyi.com) allows you to stack cache optimization on top of the 15% discount, resulting in a total cost reduction of 25-35% compared to calling OpenAI directly.

FAQ

Q1: What is gpt-5.4-nano? How does it differ from gpt-5.4-mini?

GPT-5.4-nano is the most affordable and fastest lightweight model in the OpenAI GPT-5.4 series ($0.20/$1.25 per 1M tokens), with a response speed of approximately 200 t/s. Key differences from 5.4-mini: 1) It's 3.6-3.75x cheaper; 2) Computer Use (OSWorld 39% vs 72.1%) and long-running Terminal tasks (46.3% vs 60%) are significantly weaker; 3) In other scenarios (classification, extraction, Tool Use, Q&A), the performance gap is usually < 10pp.

Q2: Which use cases are best for nano? Which ones require mini?

Best for nano (Green Zone):

- Real-time classification (sentiment, intent, topic)

- Structured data extraction

- Content ranking and re-ranking

- Sub-agent execution layers

- RAG routing layers

- High-throughput summarization/translation

- Standardized tool invocation (τ2-Bench 92.5%)

Must use mini (Red Zone):

- Computer Use/Desktop automation (OSWorld gap of 33pp)

- Long-running Terminal tasks (>10 steps)

- Complex multi-step reasoning

- Custom scenarios requiring Fine-tuning

Q3: Why is nano not recommended for Computer Use?

In OSWorld-Verified evaluations, nano scored only 39.0%, far below mini's 72.1%. This means nano has a high failure rate in multi-step desktop operations (e.g., open browser → search → click → fill form) and cannot reliably complete the task chain. If your scenario requires Computer Use, you should choose mini or the 5.4 standard version.

Q4: How do I enable the $0.02/1M cache discount for nano?

OpenAI's caching mechanism is triggered automatically; no extra parameters are needed. It automatically hits when the prompt prefix (usually the system prompt + shared context) matches requests from the last 5-10 minutes, granting a 90% discount.

Optimization Tips:

- Place the system prompt at the very beginning of the messages array.

- Follow it immediately with shared context (classification labels, Schema definitions).

- Place the actual user query at the end.

- Maintain call frequency (it expires after >5 minutes).

When calling via APIYI (apiyi.com), the cache discount is fully synced with the official site.

Q5: What are the best practices for handling batch tasks with nano?

Three Key Strategies:

- Use Batch API: Submit batch tasks via the

/v1/batchesendpoint. They complete within 24 hours at the same price and do not consume your online RPM quota. - Share System Prompts: Use the same instructions for all tasks to trigger cache hits.

- Set Reasonable max_tokens: While nano's output is cheap, it adds up. Set a reasonable limit of 50-500 tokens based on the task.

By submitting Batch tasks via APIYI (apiyi.com), you enjoy a 10% top-up bonus, bringing your actual cost to about 15% off the official price.

Q6: How do I call GPT-5.4 nano via APIYI?

APIYI is fully compatible with the OpenAI SDK. Just follow these three steps:

- Visit APIYI (apiyi.com) to register an account (no application needed; Default group is ready to use).

- Get your API key.

- Update your code's

base_urltohttps://vip.apiyi.com/v1and set the model togpt-5.4-nano.

client = openai.OpenAI(

api_key="YOUR_KEY",

base_url="https://vip.apiyi.com/v1"

)

response = client.chat.completions.create(

model="gpt-5.4-nano",

messages=[...]

)

A $100 deposit grants a 10% bonus, equivalent to ~15% off the official price, with cache discounts synced.

Q7: When is nano more cost-effective than mini? How do I calculate it?

Decision Formula:

Is nano cost-effective = (Tolerance for quality degradation) × (Call volume) × (Price difference)

> (Quality improvement gains from upgrading to mini)

Real-world Scenarios:

- Call volume > 10K/day: Savings > $30/day ($1000/month)

- Call volume > 100K/day: Savings > $300/day ($9000/month)

- Call volume > 1M/day: Savings > $3000/day ($90000/month)

For Green Zone tasks (classification, extraction, Tool Use), the quality loss for nano is usually < 5%, while cost savings are 73% (based on the 3.6x price multiplier). The overall ROI almost always favors nano.

Q8: What are the known limitations of GPT-5.4 nano?

Key limitations:

- No Computer Use support: OSWorld score of 39% is too low for reliable desktop automation.

- No Fine-tuning support: Cannot be fine-tuned with custom datasets.

- No Audio/Video input: Text and image input only.

- Weak at long Terminal tasks: Terminal-Bench score of 46.3%; operations exceeding 10 steps are prone to failure.

- Limited complex reasoning: GPQA score of 82.8% is close to mini, but performance on extremely difficult tasks like FrontierMath drops significantly.

Alternative: Switch directly to gpt-5.4-mini or the 5.4 standard version if you encounter these limitations.

GPT-5.4 nano Application Scenarios: Key Takeaways

- Price Floor: $0.20/$1.25 per 1M tokens, which is 3.6–3.75 times cheaper than the 5.4-mini.

- 90% Cache Discount: Input costs as low as $0.02/1M, making high-frequency context scenarios virtually free.

- 7 "Green Zone" Scenarios: Classification, extraction, ranking, sub-agent tasks, routing, batch processing, and tool use.

- τ2-Bench 92.5%: Tool invocation performance is nearly on par with the mini; it's sufficient for 90%+ of function calling scenarios.

- GPQA 82.8%: Strong general knowledge Q&A capabilities, perfect for FAQs and content moderation.

- 200 t/s Speed: 10% faster than the mini, making it the top choice for high-throughput pipelines.

- "Red Zone" Warning: You must switch to the mini for Computer Use or long-running terminal tasks.

Summary

Here are the core takeaways for GPT-5.4 nano application scenarios:

- Scenario Positioning: The nano is the best choice for high-throughput, low-complexity tasks—real-time classification, data extraction, sub-agent workers, RAG routing, and batch processing are its primary battlegrounds.

- Capability Boundaries: While its performance on τ2-Bench, GPQA, and SWE-Bench Pro is nearly on par with the mini, its capabilities for Computer Use and long-running terminal tasks are significantly weaker.

- How to Access: Call it directly via the APIYI (apiyi.com) "Default" group. Cache discounts are synced, and you get a 10% bonus on top-ups.

GPT-5.4 nano isn't just a "cheap model that does everything poorly"; it's a lightweight weapon meticulously optimized by OpenAI for high-throughput + low-complexity scenarios. If your application falls into the 7 "Green Zone" scenarios listed above, the nano is almost always more cost-effective than the mini. However, if your use case involves Computer Use or long-running terminal tasks, switching to the mini is the right move.

We recommend using the APIYI (apiyi.com) platform to quickly integrate GPT-5.4 nano. The "Default" group requires no application, cache discounts are fully synced, you get a 10% bonus on top-ups, and it offers stable, direct connectivity within China.

Further Reading

If you're interested in the GPT-5.4 nano API, we recommend checking out these articles:

- 📘 GPT-5.4 mini API Upgrade Guide – Learn about the capabilities and use cases of the previous mini model tier.

- 📊 Deep Dive into OpenAI Caching: Best Practices for 90% Discounts – Master engineering techniques for cache optimization.

- 🚀 Building a RAG Routing Layer with GPT-5.4 nano – Explore a hybrid architecture using "nano for routing + mini for processing."

📚 References

-

Official OpenAI GPT-5.4 nano Documentation: Model specifications, pricing, and invocation examples.

- Link:

developers.openai.com/api/docs/models/gpt-5.4-nano - Note: Get the latest and most authoritative official technical parameters.

- Link:

-

AI Cost Check Benchmark Analysis: Full-dimensional evaluation of nano vs. mini.

- Link:

aicostcheck.com/blog/gpt-5-4-mini-nano-pricing-benchmarks - Note: Third-party evaluation, perfect for side-by-side capability comparisons.

- Link:

-

APIYI GPT-5.4 nano Integration Guide: Domestic access solutions, group instructions, and recharge discounts.

- Link:

docs.apiyi.com - Note: A practical guide for developers in China to get started.

- Link:

-

OpenAI Pricing Page: Complete price list and details on the caching mechanism.

- Link:

developers.openai.com/api/docs/pricing - Note: The latest billing standards for all models.

- Link:

Author: APIYI Technical Team

Technical Discussion: Feel free to share your experiences with GPT-5.4 nano in the comments section. For more resources on model integration, visit the APIYI documentation center at docs.apiyi.com.