Author's Note: xAI's latest flagship, Grok 4.3, is now available via an official API proxy service. This article breaks down its 1M context window, 159 t/s output speed, first-ever video input, and an access plan for developers in China, with costs about 40% lower than Grok 4.20.

xAI launched Grok 4.3 Beta on April 17, 2026, and opened it for API access on April 30, 2026. The 1M context window, 159 tokens/second output speed, and first-ever video input are notable, but the real standout is the aggressive pricing. Compared to Grok 4.20, input costs have dropped by 37.5% and output costs by 58.3%, an overall reduction of roughly 40% or more depending on the input/output mix (about 48% at a 3:1 blend).

This isn't just marketing hype. The official xAI documentation is live, and Artificial Analysis measured Grok 4.3's Intelligence Index at 53 (versus an average of 35 for models at the same price point), ranking it 10th among 146 models. xAI has also brought video input to the API level for the first time, a significant milestone for the Grok series in multimodal use.

Core Value: This article is a complete guide to integrating the Grok 4.3 API, covering model specifications, pricing, benchmark data, multimodal invocation, and access from China through APIYI across all user groups. It also includes ready-to-run Python, cURL, and video input examples.

Grok 4.3 API Key Highlights

| Feature | Description | Value |
|---|---|---|
| 1M Long Context | 1,000,000 tokens (approx. 1,500 A4 pages) | Process entire books or codebases at once |
| 159 t/s Speed | xAI's official figure, well above the ~80 t/s industry average | Fast streaming, minimal user wait time |
| Video Input Debut | First xAI API model to support native video input | Analyze video content without pre-processing |
| 40% Price Drop | Input -37.5%, output -58.3% vs. Grok 4.20 | Large cost savings for batch tasks |
| Full Group Access | Available in APIYI's Default and SVIP groups | Affordable and accessible to new users |

Key Differences: Grok 4.3 vs. 4.20

Grok 4.3 is the flagship successor to Grok 4.20, with xAI improving both reasoning depth and speed. The most significant changes fall into three areas:

First, reasoning is now always on. Grok 4.3 has built-in, persistent chain-of-thought reasoning that cannot be disabled or adjusted, so every call "thinks" before it answers. This raises time-to-first-token (TTFT) to approximately 19.34 seconds, but it significantly improves factual accuracy and complex instruction following; Grok 4.3 ranks 6th globally in instruction following.

Second, prices have dropped sharply. Grok 4.20 cost about $2 per 1M input tokens and $6 per 1M output tokens; Grok 4.3 cuts these to $1.25 and $2.50. This is a clear move by xAI in the API price war: it aims to win the agentic workflow market through cost leadership. It is also why APIYI has opened Grok 4.3 to all user groups: the model is affordable, the cost risk per call is manageable, and there is no need to restrict it to specific groups.

Third, the multimodal boundaries have expanded. Grok 4.3 is the first model in the xAI API to support native video input. Users no longer need to extract frames or transcode; they can simply pass a video URL to perform content analysis.

Getting Started with the Grok 4.3 API

Minimal Python Example (Text Invocation)

Grok 4.3 is fully compatible with the OpenAI SDK. Here is the simplest way to call it:

```python
import openai

client = openai.OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1",
)

response = client.chat.completions.create(
    model="grok-4.3",
    messages=[
        {"role": "user", "content": "Implement a high-performance LRU cache in Python"}
    ],
)

print(response.choices[0].message.content)
```

Minimal cURL Example

```bash
curl https://vip.apiyi.com/v1/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer YOUR_API_KEY" \
  -d '{
    "model": "grok-4.3",
    "messages": [
      {"role": "user", "content": "Analyze the key points of this long document"}
    ]
  }'
```

Multimodal Invocation Example (Image + Video Input)

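Grok 4.3 is the first xAI API model to support native video input. Image input uses the same `image_url` content-part format as OpenAI's vision models, and video is passed in the same style as a `video_url` part (this part type is not in OpenAI's own schema, so confirm the exact field name in the current xAI or APIYI documentation):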

```python
# Assumes `client` was created as in the minimal Python example above.

# Image input
response = client.chat.completions.create(
    model="grok-4.3",
    messages=[{
        "role": "user",
        "content": [
            {"type": "text", "text": "What system does this architecture diagram describe?"},
            {"type": "image_url", "image_url": {"url": "https://example.com/diagram.png"}},
        ],
    }],
)

# Video input (new in Grok 4.3)
response = client.chat.completions.create(
    model="grok-4.3",
    messages=[{
        "role": "user",
        "content": [
            {"type": "text", "text": "Summarize the core content of this video and extract a timeline"},
            {"type": "video_url", "video_url": {"url": "https://example.com/lecture.mp4"}},
        ],
    }],
)
```

Full production-ready example (with cost estimation, tiered pricing, and error handling):

```python
import openai
from typing import Dict, List

# Grok 4.3 pricing (USD per 1M tokens)
PRICE_INPUT_BASE = 1.25
PRICE_OUTPUT_BASE = 2.50
PRICE_INPUT_HIGH = 2.50    # when input > 200K tokens
PRICE_OUTPUT_HIGH = 5.00   # also triggered by input > 200K tokens
PRICE_CACHE_HIT = 0.20     # cached input tokens
TIER_THRESHOLD = 200_000   # input tokens


def call_grok_43(
    messages: List[Dict],
    api_key: str,
    max_tokens: int = 4096,
) -> Dict:
    """Production-grade Grok 4.3 invocation with tiered cost estimation."""
    client = openai.OpenAI(
        api_key=api_key,
        base_url="https://vip.apiyi.com/v1",
    )

    try:
        response = client.chat.completions.create(
            model="grok-4.3",
            messages=messages,
            max_tokens=max_tokens,
        )
    except openai.RateLimitError:
        return {"error": "Rate limit exceeded, please retry later"}
    except openai.APIError as e:
        return {"error": f"API error: {e}"}

    usage = response.usage
    input_tokens = usage.prompt_tokens
    output_tokens = usage.completion_tokens
    # Cached input tokens, if reported in the OpenAI-style
    # usage.prompt_tokens_details.cached_tokens field (assumed); otherwise 0.
    details = getattr(usage, "prompt_tokens_details", None)
    cached_tokens = getattr(details, "cached_tokens", 0) or 0

    # Tiered pricing: input > 200K bills the whole request (input AND output) at 2x
    if input_tokens <= TIER_THRESHOLD:
        in_price, out_price = PRICE_INPUT_BASE, PRICE_OUTPUT_BASE
    else:
        in_price, out_price = PRICE_INPUT_HIGH, PRICE_OUTPUT_HIGH

    uncached = input_tokens - cached_tokens
    input_cost = (uncached * in_price + cached_tokens * PRICE_CACHE_HIT) / 1_000_000
    output_cost = output_tokens * out_price / 1_000_000
    total_cost = input_cost + output_cost

    print(f"📊 Input: {input_tokens:,} tokens ({cached_tokens:,} cached) | Output: {output_tokens:,} tokens")
    print(f"💰 Cost of this call: ${total_cost:.4f}")

    return {
        "content": response.choices[0].message.content,
        "tokens": {"input": input_tokens, "cached": cached_tokens, "output": output_tokens},
        "cost_usd": total_cost,
    }


# Usage example
result = call_grok_43(
    messages=[
        {"role": "system", "content": "You are a senior architect"},
        {"role": "user", "content": "Design a rate-limiting system that supports tens of millions of QPS"},
    ],
    api_key="YOUR_API_KEY",
)
print(result.get("content") or result.get("error"))
```

🎯 Quick Start Tip: Grok 4.3 is fully open to the Default group on APIYI, so new users can call it directly without applying for access. We recommend accessing it via the APIYI (apiyi.com) platform, where a $100 deposit earns an extra 10% in credit. It also offers direct access from China without a VPN and full compatibility with the OpenAI SDK.

Grok 4.3 API Pricing Details

Official Tiered Pricing Structure

Grok 4.3 adopts a long-context tiered pricing strategy similar to the GPT-5.5 series, but with a lower trigger threshold (200K vs 272K):

| Input Size per Request | Input Price (per 1M) | Output Price (per 1M) | Cache Hit Price (per 1M) |
|---|---|---|---|
| 0 – 200K tokens | $1.25 | $2.50 | $0.20 (84% discount) |
| 200K – ∞ tokens | $2.50 (2x) | $5.00 (2x) | $0.20 |

⚠️ Important: Tiered pricing applies to the entire request, not just the portion above the threshold. As soon as the input exceeds 200K tokens, both the input and the output of the whole request are billed at the higher tier. We recommend splitting long documents into chunks of around 180K tokens to stay below it.
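A minimal sketch of the chunking advice above, assuming roughly 4 characters per token as a stand-in for Grok's actual tokenizer (which this article does not specify). The function names and the heuristic are illustrative only; verify real counts through the `usage` field of each response.

```python
from typing import List

# Rough heuristic (assumption): ~4 characters per token for English text.
CHARS_PER_TOKEN = 4
CHUNK_TOKEN_BUDGET = 180_000  # leaves ~20K tokens of headroom below the 200K tier


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length (not Grok's tokenizer)."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def chunk_document(text: str, budget: int = CHUNK_TOKEN_BUDGET) -> List[str]:
    """Split text on paragraph boundaries so each chunk fits the token budget.

    A single paragraph larger than the budget is hard-split by characters.
    """
    max_chars = budget * CHARS_PER_TOKEN
    chunks: List[str] = []
    current: List[str] = []
    current_len = 0  # always equals len("\n\n".join(current))
    for para in text.split("\n\n"):
        pieces = [para[i:i + max_chars] for i in range(0, len(para), max_chars)] or [""]
        for piece in pieces:
            extra = len(piece) + (2 if current else 0)  # +2 for the "\n\n" joiner
            if current and current_len + extra > max_chars:
                chunks.append("\n\n".join(current))
                current, current_len = [], 0
                extra = len(piece)
            current.append(piece)
            current_len += extra
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Splitting on blank lines keeps paragraphs intact where possible; each chunk then goes out as its own request, for example `[estimate_tokens(c) for c in chunk_document(text)]` to sanity-check sizes before sending.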

Grok 4.3 vs Grok 4.20 Price Comparison

| Dimension | Grok 4.20 | Grok 4.3 | Change |
|---|---|---|---|
| Input Price | ~$2.00 / 1M | $1.25 / 1M | -37.5% |
| Output Price | ~$6.00 / 1M | $2.50 / 1M | -58.3% |
| Blended Rate (3:1) | ~$3.00 / 1M | $1.56 / 1M | -48% |
| Context Window | 256K | 1M | +290% |
| Multimodal | Text+Image | Text+Image+Video | Added Video |
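The "Blended Rate (3:1)" row in the table above weights three input tokens for every output token. A small sketch of that arithmetic, using the table's prices (the function name is illustrative):

```python
def blended_rate(input_price: float, output_price: float,
                 input_weight: int = 3, output_weight: int = 1) -> float:
    """Weighted average price per 1M tokens for a given input:output token mix."""
    total = input_weight + output_weight
    return (input_price * input_weight + output_price * output_weight) / total


old = blended_rate(2.00, 6.00)  # Grok 4.20
new = blended_rate(1.25, 2.50)  # Grok 4.3
reduction = 1 - new / old
print(f"Grok 4.20: ${old:.2f}  Grok 4.3: ${new:.4f}  reduction: {reduction:.1%}")
```

At 3:1 this gives $3.00 vs. $1.5625, a 47.9% reduction. Because input fell less than output, input-heavy mixes move the figure toward the 37.5% input-only reduction, which is why the headline "about 40%" depends on your workload.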

Actual cost calculation examples:

- Simple call (2K input + 1K output): $0.005 (less than a cent at standard rates)

- Medium task (50K input + 5K output): $0.075

- Long document analysis (180K input + 5K output, avoiding the tier): $0.238

- Extra-long document (500K input + 10K output, triggering the tier): $1.30
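The four cost examples above can be reproduced with a small estimator. This sketch assumes "200K" means exactly 200,000 input tokens and ignores cache hits; the names are illustrative. It encodes the rule that the tier is chosen by input size and then applies to the whole request:

```python
from dataclasses import dataclass

TIER_THRESHOLD = 200_000  # input tokens (assumed exact value of "200K")


@dataclass(frozen=True)
class Tier:
    input_price: float   # USD per 1M input tokens
    output_price: float  # USD per 1M output tokens


STANDARD = Tier(1.25, 2.50)
LONG_CONTEXT = Tier(2.50, 5.00)


def grok43_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one request; the tier picked by input size
    applies to both input AND output of that request."""
    tier = STANDARD if input_tokens <= TIER_THRESHOLD else LONG_CONTEXT
    return (input_tokens * tier.input_price
            + output_tokens * tier.output_price) / 1_000_000


examples = {
    "Simple call": (2_000, 1_000),
    "Medium task": (50_000, 5_000),
    "Long document (below tier)": (180_000, 5_000),
    "Extra-long document (tiered)": (500_000, 10_000),
}
for name, (inp, out) in examples.items():
    print(f"{name}: ${grok43_cost(inp, out):.4f}")
```

Note the cliff at the threshold: 200,000 input tokens cost $0.25, while 200,001 cost about $0.50.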

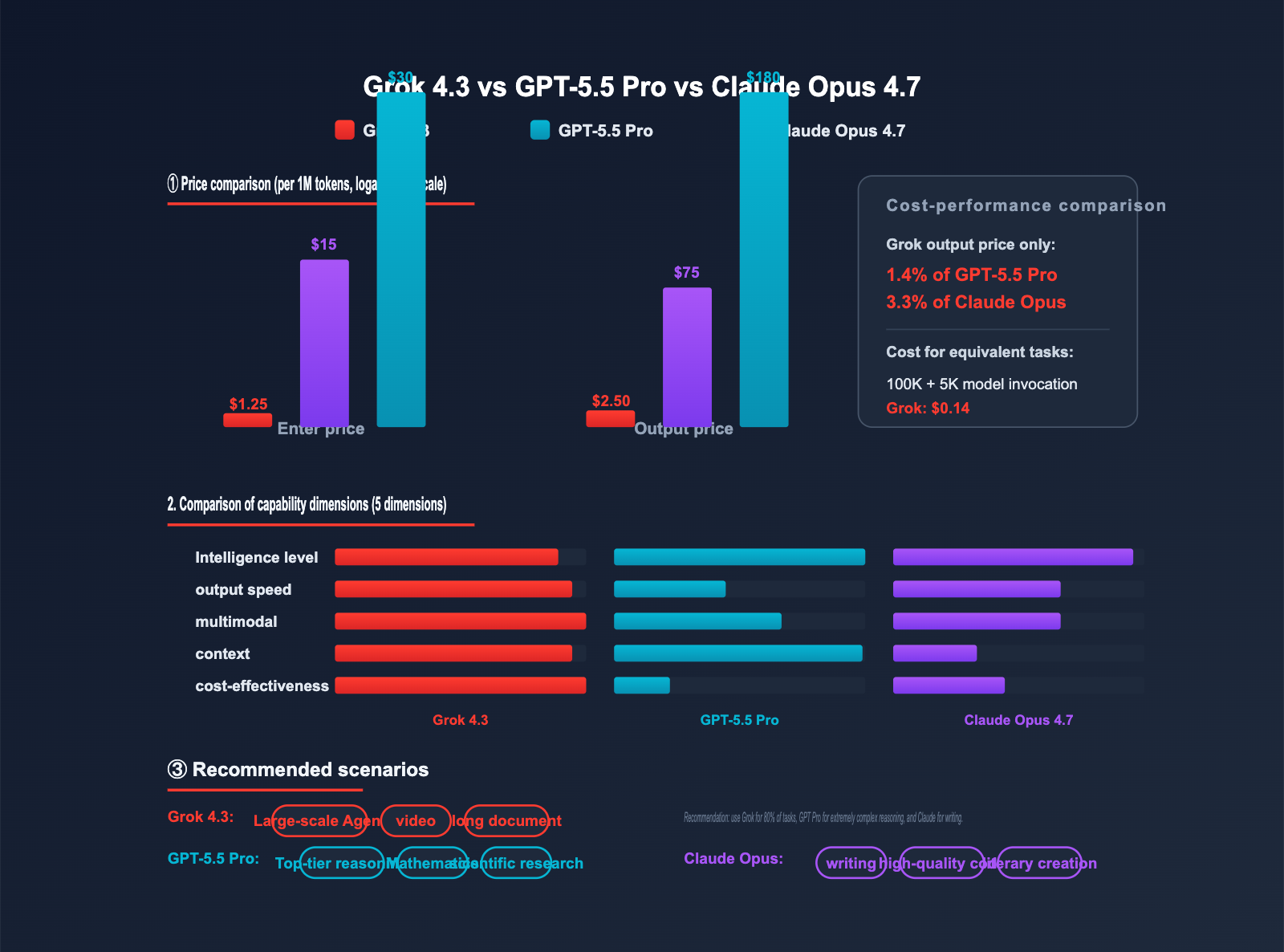

💰 Cost Optimization: Per output token, Grok 4.3 costs only 1.4% of GPT-5.5 Pro ($2.50 vs. $180 per 1M). For large-scale batch tasks, agentic workflows, and long-term production deployments, a price gap this large can reshape your application architecture. The 10% deposit bonus at APIYI (apiyi.com) lowers actual costs further.

Grok 4.3 API Performance Benchmark

Official Test Data

The Artificial Analysis platform has completed a comprehensive evaluation of Grok 4.3. The results show that its intelligence level is significantly higher than the average for its price range:

| Metric | Grok 4.3 | Industry Average | Rank / Note |
|---|---|---|---|
| Intelligence Index | 53 | 35 | #10 / 146 |
| Instruction Following | Excellent | Average | #6 / 146 |
| Output Speed | 147.8 t/s (measured; xAI quotes 159 t/s) | ~80 t/s | #21 / 146 |
| Time to First Token | 19.34s | 8s | Slower (always-on reasoning) |
| End-to-End Latency | Moderate | Moderate | Average for reasoning models |

Benchmark Analysis

Intelligence Index of 53, significantly higher than the 35 average: This means Grok 4.3 ranks in the top tier globally for comprehensive intelligence tasks (math, coding, reasoning, and knowledge), all while being priced far lower than other top-ten models (such as GPT-5.5 Pro at $30/$180 or Claude Opus 4.7 at $15/$75).

Instruction Following #6: This is Grok 4.3's strongest capability. For agentic workflows, complex multi-step tasks, and scenarios requiring strict JSON output, Grok 4.3 is more reliable than other models in its price bracket.

Time to First Token of 19.34 seconds: This is the trade-off for its always-on inference mechanism. If your application is sensitive to initial response times (like customer service chatbots), we recommend using a streaming API to display a "Thinking…" status, or consider using the faster Grok 4 standard version.

Grok 4.3 vs. Leading Flagship Models Comparison

| Model | Input Price (per 1M) | Output Price (per 1M) | Context | Intelligence Index | Multimodal | Recommended Use Case |
|---|---|---|---|---|---|---|
| Grok 4.3 | $1.25 | $2.50 | 1M | 53 | Text+Image+Video | Large-scale Agent / Video Analysis |
| GPT-5.5 Pro | $30 | $180 | 1.05M | ~60 | Text+Image | Top-tier Reasoning / Research |
| Claude Opus 4.7 | $15 | $75 | 200K | ~58 | Text+Image | Writing / High-quality Coding |
| Gemini 2.5 Pro | $1.25 | $10 | 2M | ~55 | Text+Image+Video | Long Documents / Multimodal |
| Grok 4.20 | $2.00 | $6.00 | 256K | ~48 | Text+Image | Superseded by 4.3 |
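To express the comparison table above in ratio terms, the sketch below divides each model's list prices by Grok 4.3's. The prices are copied from the table, so treat them as this article's figures rather than live quotes:

```python
from typing import Dict, Tuple

# USD per 1M tokens (input, output), as listed in the comparison table
PRICES: Dict[str, Tuple[float, float]] = {
    "Grok 4.3": (1.25, 2.50),
    "GPT-5.5 Pro": (30.00, 180.00),
    "Claude Opus 4.7": (15.00, 75.00),
    "Gemini 2.5 Pro": (1.25, 10.00),
    "Grok 4.20": (2.00, 6.00),
}


def price_multiples(baseline: str = "Grok 4.3") -> Dict[str, Tuple[float, float]]:
    """Return (input_multiple, output_multiple) of each model vs. the baseline."""
    base_in, base_out = PRICES[baseline]
    return {
        model: (inp / base_in, out / base_out)
        for model, (inp, out) in PRICES.items()
    }


for model, (in_x, out_x) in price_multiples().items():
    print(f"{model:16} input {in_x:5.1f}x  output {out_x:5.1f}x")
```

By these list prices, GPT-5.5 Pro costs 24x Grok 4.3 for input and 72x for output; Claude Opus 4.7 costs 12x and 30x.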

Selection Strategy Recommendations

Grok 4.3 Analysis: Grok 4.3 leads in cost-effectiveness, speed, and video input capabilities. However, it does have higher time-to-first-token latency and a lower threshold for long-context tier triggering (200K). That said, for large-scale agentic workflows and scenarios requiring video understanding where cost-efficiency is key, Grok 4.3 is currently the best choice.

GPT-5.5 Pro Analysis: GPT-5.5 Pro remains the leader on extremely difficult reasoning tasks such as FrontierMath. However, its list price is 24x Grok 4.3's for input and 72x for output, which makes it suitable only for high-value scenarios. In our assessment, Grok 4.3 delivers similar results on about 80% of routine reasoning tasks at 1/24th of the input price and 1/72nd of the output price, a decisive cost-performance advantage.

Claude Opus 4.7 Analysis: Claude Opus 4.7 excels in prose, long-form writing, and code quality. However, its context window is limited to 200K, and the price is on the higher side. For 1M context window requirements and large-scale batch tasks, Grok 4.3 remains the more reliable choice.

📊 Comparison Tip: You can use the APIYI (apiyi.com) API proxy service to switch between flagship models like Grok 4.3, GPT-5.5, and Claude Opus 4.7 with a single API key; simply update the `model` parameter. This unified access method suits applications that need to dynamically schedule tasks across different model types.
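Because only the `model` string changes, a side-by-side comparison can loop over model IDs with one OpenAI-compatible client, as the tip above suggests. This is a sketch under assumptions: only `grok-4.3` is a model ID confirmed in this article, and the other IDs are hypothetical placeholders to check against APIYI's model list. The client is passed in so the function works with any compatible client object.

```python
from typing import Dict, Sequence

# Only "grok-4.3" is confirmed in this article; the other IDs are hypothetical placeholders.
CANDIDATE_MODELS = ("grok-4.3", "gpt-5.5-pro", "claude-opus-4.7")


def compare_models(client, prompt: str,
                   models: Sequence[str] = CANDIDATE_MODELS) -> Dict[str, str]:
    """Send the same prompt to several models through one OpenAI-compatible client.

    `client` is anything exposing client.chat.completions.create(...), e.g. an
    openai.OpenAI instance pointed at https://vip.apiyi.com/v1.
    """
    answers: Dict[str, str] = {}
    for model in models:
        response = client.chat.completions.create(
            model=model,  # the only field that changes between models
            messages=[{"role": "user", "content": prompt}],
        )
        answers[model] = response.choices[0].message.content
    return answers
```

In practice, pass `openai.OpenAI(api_key="YOUR_API_KEY", base_url="https://vip.apiyi.com/v1")` as `client`.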

Grok 4.3 API Use Cases

The combination of "high intelligence + low price + full modality + long context" makes Grok 4.3 particularly well-suited for the following scenarios:

- Large-scale Agentic Workflows: High instruction following capabilities + affordable pricing, perfect for agent systems with thousands of daily model invocations.

- Ultra-long Document Understanding: With a 1M token context window (approx. 1,500 pages), you can input entire technical books or complete codebases in one go.

- Video Content Analysis: The first xAI model to support native video input, eliminating the need for manual frame extraction.

- Multimodal Hybrid Tasks: Complex applications that process text, images, and video simultaneously.

- Batch API Tasks: Cost-sensitive scenarios such as large-scale data labeling, content generation, and batch translation.

- Enterprise Knowledge Bases: Ultimate cost-efficiency with a 1M context window + $0.20 cache hit pricing.

- Rapid Prototyping and Experimentation: 159 t/s lightning-fast output + affordable pricing, ideal for frequent iterations.

🎯 Scenario Decision: If your application requires a mix of "high intelligence + large scale + cost control," Grok 4.3 is currently the most cost-effective option. You can access it directly via APIYI (apiyi.com); it's available in the Default group without needing a separate application.

Accessing Grok 4.3 via APIYI

Full Group Access Strategy

APIYI has adopted a completely different access strategy for Grok 4.3 compared to GPT-5.5 Pro:

- ✅ Default Group: Fully open; available to new users immediately.

- ✅ SVIP Group: Fully open; no restrictions whatsoever.

- ✅ Official Proxy: Identical to the official xAI API, with no proxy overhead.

Why is Grok 4.3 open to all groups while GPT-5.5 Pro is restricted to SVIP? The core reason is the cost risk per model invocation:

- GPT-5.5 Pro: A single invocation can cost several dollars, posing a high risk of accidental misuse → Restricted to SVIP group.

- Grok 4.3: A single invocation typically costs only a few cents, so even if misused, it won't cause significant financial loss → Fully open to all groups.

This design philosophy reflects APIYI's operational approach of "risk-based model management"—making affordable models easily accessible to all users while protecting beginners from high-cost models.
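The risk reasoning above comes down to a worst-case bound: the prompt tokens plus the full `max_tokens` budget at the model's output price. Below is a sketch of such a pre-flight guard. The `max_cost_usd` cap and function names are illustrative, not an APIYI feature; prices are this article's base-tier list prices (so prompts above 200K would be underestimated for Grok 4.3), and `gpt-5.5-pro` is a placeholder model ID.

```python
from typing import Dict, Tuple

# USD per 1M tokens (input, output), base tier, as quoted in this article
PRICES: Dict[str, Tuple[float, float]] = {
    "grok-4.3": (1.25, 2.50),
    "gpt-5.5-pro": (30.00, 180.00),  # hypothetical model ID
}


def worst_case_cost(model: str, prompt_tokens: int, max_tokens: int) -> float:
    """Upper bound on one call's cost if the model uses its whole output budget."""
    in_price, out_price = PRICES[model]
    return (prompt_tokens * in_price + max_tokens * out_price) / 1_000_000


def check_budget(model: str, prompt_tokens: int, max_tokens: int,
                 max_cost_usd: float = 1.00) -> None:
    """Raise before sending if the worst-case cost exceeds the per-call cap."""
    cost = worst_case_cost(model, prompt_tokens, max_tokens)
    if cost > max_cost_usd:
        raise ValueError(
            f"{model}: worst case ${cost:.2f} exceeds cap ${max_cost_usd:.2f}"
        )
```

For example, a 100K-token prompt with `max_tokens=32000` is bounded at about $0.21 on Grok 4.3 versus about $8.76 on GPT-5.5 Pro, which is the gap behind the group policy.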

APIYI vs. Official Cost Comparison

| Item | xAI Official | APIYI (apiyi.com) |
|---|---|---|
| Base Price | $1.25 / $2.50 per 1M | $1.25 / $2.50 per 1M (same price) |
| Recharge Bonus | None | $10 bonus credit per $100 recharge (10%) |
| Effective Cost | 100% of list price | ~90.9% of list price from the bonus alone ($100 buys $110 of credit); APIYI advertises about 15% off |
| Access from China | Requires VPN | Direct connection, no VPN needed |
| Payment Methods | International credit card | RMB, Alipay, WeChat Pay |
| SDK Compatibility | xAI native SDK | Fully compatible with OpenAI SDK |
| Min. Recharge | $5 | From $1 |
| Group Limits | None | Default + SVIP fully open |

💰 Cost Optimization: Accessing Grok 4.3 via APIYI (apiyi.com) adds a 10% credit bonus on $100 recharges, which APIYI presents as roughly 15% off official rates; the bonus by itself lowers the effective per-token price by about 9%. For teams with high monthly usage, these savings add up over a year.
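For a quick check of what a recharge bonus does to the effective per-token price, here is the arithmetic as a small function. It models only the bonus; any other factors behind APIYI's quoted ~15% figure are not modeled here, and the function name is illustrative.

```python
def effective_discount(paid: float, bonus: float) -> float:
    """Fraction saved per unit of credit when `bonus` extra credit comes with `paid`."""
    return 1 - paid / (paid + bonus)


print(f"{effective_discount(100, 10):.1%}")  # 10% bonus on a $100 recharge
```

This prints 9.1%. Reaching a true 15% discount through a bonus alone would take about $17.65 of bonus credit per $100.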

FAQ

Q1: What is Grok 4.3? What are the core differences from the previous Grok 4.20?

Grok 4.3 is the flagship reasoning model officially launched by xAI on 2026-04-30. Key differences: 1) Context window expanded from 256K to 1M; 2) Input price dropped from $2 to $1.25 (-37.5%), output price from $6 to $2.50 (-58.3%); 3) First-time support for native video input; 4) Always-on reasoning mechanism improves factual accuracy.

Q2: Why is Grok 4.3 open to all groups on APIYI, while GPT-5.5 Pro is SVIP-only?

The core reason is the difference in cost risk per model invocation: GPT-5.5 Pro has an output price of $180/1M, where a single complex request could cost several dollars, creating a high risk of misuse. Therefore, it is restricted to the SVIP group. Grok 4.3 has an output price of only $2.50/1M, meaning a single request usually costs just a few cents; even if a beginner makes a mistake, it won't cause significant loss. Thus, it is fully open to the Default group. This is part of APIYI's "risk-based management" philosophy.

Q3: When should I use Grok 4.3 vs. GPT-5.5 (Standard/Pro)?

Choose Grok 4.3 for: Large-scale agent tasks, video analysis, 1M-token long documents, batch processing, and cost-sensitive applications.

Choose GPT-5.5 Standard for: Routine chat, customer service, translation, and other lightweight tasks that don't need always-on reasoning and would only pay its latency cost.

Choose GPT-5.5 Pro for: FrontierMath-level mathematical problems, ultra-complex agent tasks on the scale of 20 hours, and top-tier scientific reasoning.

Simple rule: 80% of tasks can be handled by Grok 4.3; only switch to GPT-5.5 Pro for extremely complex reasoning.
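The simple rule above can be encoded as a routing function. This is a sketch under explicit assumptions: the `Task` flags are hypothetical descriptors, and only `grok-4.3` is a model ID confirmed in this article (`gpt-5.5` and `gpt-5.5-pro` are placeholders).

```python
from dataclasses import dataclass


@dataclass
class Task:
    # Hypothetical task descriptors; adapt to your own application.
    extreme_reasoning: bool = False  # e.g. FrontierMath-level problems
    latency_sensitive: bool = False  # e.g. live chat, where ~19s TTFT hurts
    has_video: bool = False


def pick_model(task: Task) -> str:
    """Route per the FAQ rule: Grok 4.3 by default, GPT-5.5 Pro only for extreme reasoning."""
    if task.extreme_reasoning:
        return "gpt-5.5-pro"  # placeholder ID
    if task.latency_sensitive and not task.has_video:
        return "gpt-5.5"      # placeholder ID; no always-on reasoning
    return "grok-4.3"         # default, and the only option here for video
```

Video tasks stay on Grok 4.3 even when latency matters, because the comparison table lists GPT-5.5 Pro as text and image only.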

Q4: How do I use Grok 4.3’s video input? What formats are supported?

Video input is passed via the video_url field in the messages array. It supports mainstream formats like mp4, mov, and webm. Example:

```python
messages = [{
    "role": "user",
    "content": [
        {"type": "text", "text": "Summarize the key points of this video"},
        {"type": "video_url", "video_url": {"url": "https://example.com/video.mp4"}},
    ],
}]
```

Note that video content is converted into tokens for billing; it is recommended to keep videos under 10 minutes to avoid triggering tiered pricing.
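A small helper that builds the video message shown above and rejects file extensions outside the formats this FAQ lists (mp4, mov, webm). The helper name is hypothetical. Checking the URL's extension is only a cheap client-side guard, since the server decides what it actually accepts, and it does not check video duration.

```python
from pathlib import PurePosixPath
from typing import Dict, List
from urllib.parse import urlparse

SUPPORTED_VIDEO_EXTENSIONS = {".mp4", ".mov", ".webm"}


def build_video_messages(video_url: str, prompt: str) -> List[Dict]:
    """Return a Chat Completions `messages` list with one text part and one video part."""
    # Look at the URL path only, so query strings like ?sig=... are ignored
    suffix = PurePosixPath(urlparse(video_url).path).suffix.lower()
    if suffix not in SUPPORTED_VIDEO_EXTENSIONS:
        raise ValueError(
            f"Unsupported video format {suffix!r}; expected one of "
            f"{sorted(SUPPORTED_VIDEO_EXTENSIONS)}"
        )
    return [{
        "role": "user",
        "content": [
            {"type": "text", "text": prompt},
            {"type": "video_url", "video_url": {"url": video_url}},
        ],
    }]
```

The result can be passed straight to `client.chat.completions.create(model="grok-4.3", messages=...)`.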

Q5: How do I call Grok 4.3 via APIYI? What code needs to be changed?

APIYI is fully compatible with the OpenAI SDK. Just follow these three steps:

- Visit APIYI apiyi.com to register (no application needed; Default group is available immediately).

- Get your API key.

- Update the `base_url` in your code to `https://vip.apiyi.com/v1` and set the `model` to `grok-4.3`.

```python
import openai

client = openai.OpenAI(
    api_key="YOUR_KEY",
    base_url="https://vip.apiyi.com/v1",
)

response = client.chat.completions.create(
    model="grok-4.3",
    messages=[...],  # your messages here
)
```

Recharging $100 earns a 10% credit bonus, giving you $110 of credit.

Q6: How can I avoid tiered pricing when Grok 4.3 input exceeds 200K?

The tiered pricing threshold for Grok 4.3 is 200K. Beyond this, input and output prices double. Avoidance strategies:

- Chunking: Split long documents into multiple requests of around 180K (leaving a 20K buffer).

- Pre-compression: Use a cheaper model (like Grok 4 Mini) to compress the document before sending it to 4.3 for reasoning.

- Cache Reuse: Enable caching for repetitive content to enjoy an 84% discount ($0.20/1M).

- Accept the Tier: If the task must be processed in one go, simply accept the 2x billing (the cost is still lower than GPT-5.5 Pro's standard price).

Q7: Why is the time-to-first-token (TTFT) for Grok 4.3 so high?

Grok 4.3 has a built-in always-on Chain-of-Thought reasoning mechanism. Every call "thinks" before it outputs, resulting in a TTFT of approximately 19.34 seconds. This is a design trade-off to improve factual accuracy and instruction following. If your scenario is sensitive to initial response time:

- Use streaming mode and display a "Thinking…" prompt.

- Choose Grok 4 Standard (lower TTFT, but slightly less intelligent).

- Choose GPT-5.5 Standard (no always-on reasoning, faster response).
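A sketch of the streaming approach: show a "Thinking…" status until the first content token arrives, then print tokens as they stream and report the measured TTFT. It assumes an OpenAI-compatible client whose `stream=True` chunks expose `chunk.choices[0].delta.content`, as in the OpenAI Python SDK; the function name is illustrative.

```python
import sys
import time
from typing import Dict, List, Optional, TextIO


def stream_with_status(client, messages: List[Dict], model: str = "grok-4.3",
                       out: Optional[TextIO] = None) -> str:
    """Stream a reply, showing a status line until the first content token arrives.

    Returns the full reply text and prints the measured time to first token.
    """
    if out is None:
        out = sys.stdout
    out.write("Thinking…\n")
    out.flush()
    start = time.monotonic()
    ttft = None
    parts: List[str] = []
    stream = client.chat.completions.create(model=model, messages=messages, stream=True)
    for chunk in stream:
        if not chunk.choices:
            continue  # e.g. a trailing usage-only chunk with no choices
        delta = chunk.choices[0].delta.content
        if not delta:
            continue  # role-only or empty deltas
        if ttft is None:
            ttft = time.monotonic() - start
        parts.append(delta)
        out.write(delta)
        out.flush()
    if ttft is not None:
        out.write(f"\n[time to first token: {ttft:.2f}s]\n")
    return "".join(parts)
```

Streaming does not shorten the ~19-second reasoning phase; it only makes the wait visible and lets users read the answer as soon as it starts.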

Q8: What are the known limitations of Grok 4.3?

Key limitations include:

- High TTFT: Approx. 19.34 seconds; not suitable for real-time chat scenarios.

- Reasoning cannot be disabled: The always-on CoT mechanism cannot be turned off or adjusted.

- Verbose output: Generated about 88M tokens over a full benchmark evaluation run, a sign of high verbosity; keep an eye on `max_tokens`.

- Low tier threshold: 200K input tokens triggers 2x pricing (GPT-5.5's threshold is 272K).

- Video length recommendation: Very long videos trigger tiered pricing; keep them under 10 minutes.

- Text-only output: Does not support image/video generation; it is for understanding only.
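Given the verbose output, it helps to detect replies cut off by `max_tokens`; in OpenAI-compatible responses this shows up as `finish_reason == "length"`. A minimal sketch that retries with a doubled budget up to a cap (the helper name and defaults are illustrative, and whether Grok 4.3's reasoning tokens count toward `max_tokens` is an assumption to verify against xAI's documentation):

```python
from typing import Dict, List, Optional, Tuple


def complete_with_budget(client, messages: List[Dict], model: str = "grok-4.3",
                         max_tokens: int = 4096,
                         cap: int = 32_768) -> Tuple[Optional[str], bool]:
    """Retry with a doubled max_tokens while the reply is cut off, up to `cap`.

    Returns (text, truncated). Each retry is a new, fully billed request.
    """
    while True:
        response = client.chat.completions.create(
            model=model, messages=messages, max_tokens=max_tokens
        )
        choice = response.choices[0]
        truncated = choice.finish_reason == "length"  # stopped by max_tokens
        if not truncated or max_tokens >= cap:
            return choice.message.content, truncated
        max_tokens = min(max_tokens * 2, cap)
```

Since every retry re-sends the full prompt, a generous initial `max_tokens` is usually cheaper for long inputs than several retries.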

Grok 4.3 API Key Takeaways

- Pricing Powerhouse: $1.25 for input / $2.50 for output. That’s a 40% overall price drop compared to 4.20, making it incredibly cost-effective compared to similar models.

- 1M Context Window: Roughly equivalent to 1,500 A4 pages. You can feed in entire codebases or complete technical books in one go.

- 159 t/s Blazing Speed: Among the fastest in the industry, significantly cutting down wait times for long-text generation.

- First-Ever Video Input: The first API model from xAI to support native video input, pushing the boundaries of multimodal capabilities.

- Always-on Reasoning: Intelligence Index of 53 (Global #10) and Instruction Following #6.

- Full Access: Available now on APIYI across all groups (Default + SVIP). No application required—you can start calling it immediately.

- Recharge Bonus for Users in China: Recharge $100 via APIYI (apiyi.com) and get $10 in bonus credit.

Summary

Here are the core highlights of the Grok 4.3 API:

- Pricing: At $1.25 / $2.50 per 1M tokens, the roughly 40% price reduction puts it in direct competition with Gemini 2.5 Pro on value.

- Capability: With an Intelligence Index of 53 (Global #10) and an Instruction Following rank of #6, it’s perfect for high-intelligence tasks and large-scale agent workflows.

- Access: Use APIYI (apiyi.com) for direct access across all groups, a 10% bonus on recharges, and stable direct connections from China without a VPN.

Grok 4.3 isn't just "another Pro model"; it's xAI’s flagship weapon for redefining value. If you're looking for the perfect mix of high intelligence, low cost, multimodal support, and a massive context window—whether for large-scale agent systems, video analysis, enterprise knowledge bases, or processing 1M-token documents—Grok 4.3 is currently your best bet. It complements GPT-5.5 Pro perfectly: use Grok 4.3 for your standard complex reasoning tasks, and save GPT-5.5 Pro for those extreme-difficulty challenges.

We recommend getting started with Grok 4.3 via the APIYI (apiyi.com) platform. You get instant access to the Default group, a 10% bonus on recharges, and a stable, direct connection.

Further Reading

If you're interested in the Grok 4.3 API, we recommend checking out these articles:

- 📘 GPT-5.5 Pro API Integration Guide – Learn about OpenAI's flagship reasoning model and how it complements Grok 4.3 in various scenarios.

- 📊 Grok 4.3 vs. Gemini 2.5 Pro: A Deep Dive into Cost-Effectiveness – An analysis of the capability differences between these two flagship models at the same price point.

- 🚀 Grok 4.3 Video Input in Action: Build a Video Understanding Agent in Ten Minutes – Explore production-grade applications of xAI's video capabilities.

📚 References

- xAI Official API Documentation: Grok 4.3 model specifications, pricing, and invocation examples.
  - Link: docs.x.ai/developers/models
  - Note: The latest and most authoritative official technical parameters.

- Artificial Analysis Grok 4.3 Review: Intelligence Index, speed, and latency test data.
  - Link: artificialanalysis.ai/models/grok-4-3
  - Note: Independent third-party evaluation, useful for side-by-side model comparisons.

- APIYI Grok 4.3 Integration Documentation: Access solutions for China, group instructions, and recharge discounts.
  - Link: docs.apiyi.com
  - Note: A practical integration guide for developers in China.

- OpenRouter Grok 4.3 Performance Page: Multi-provider comparison and detailed benchmark breakdowns.
  - Link: openrouter.ai/x-ai/grok-4-3
  - Note: A useful reference for cross-platform performance comparison and pricing transparency.

Author: APIYI Technical Team

Technical Discussion: Feel free to share your experience with Grok 4.3 in the comments. For more model integration resources, visit the APIYI documentation center at docs.apiyi.com.