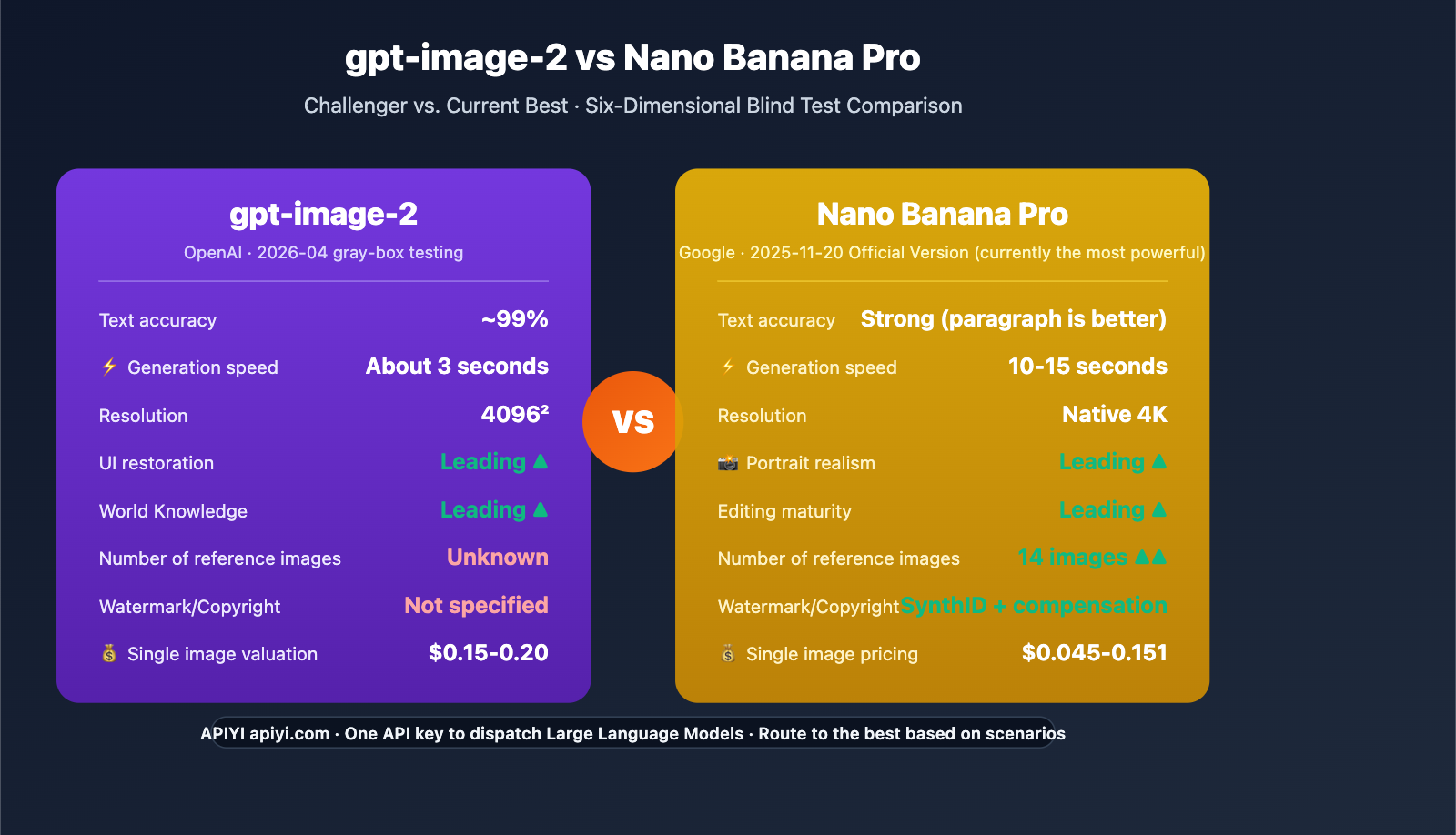

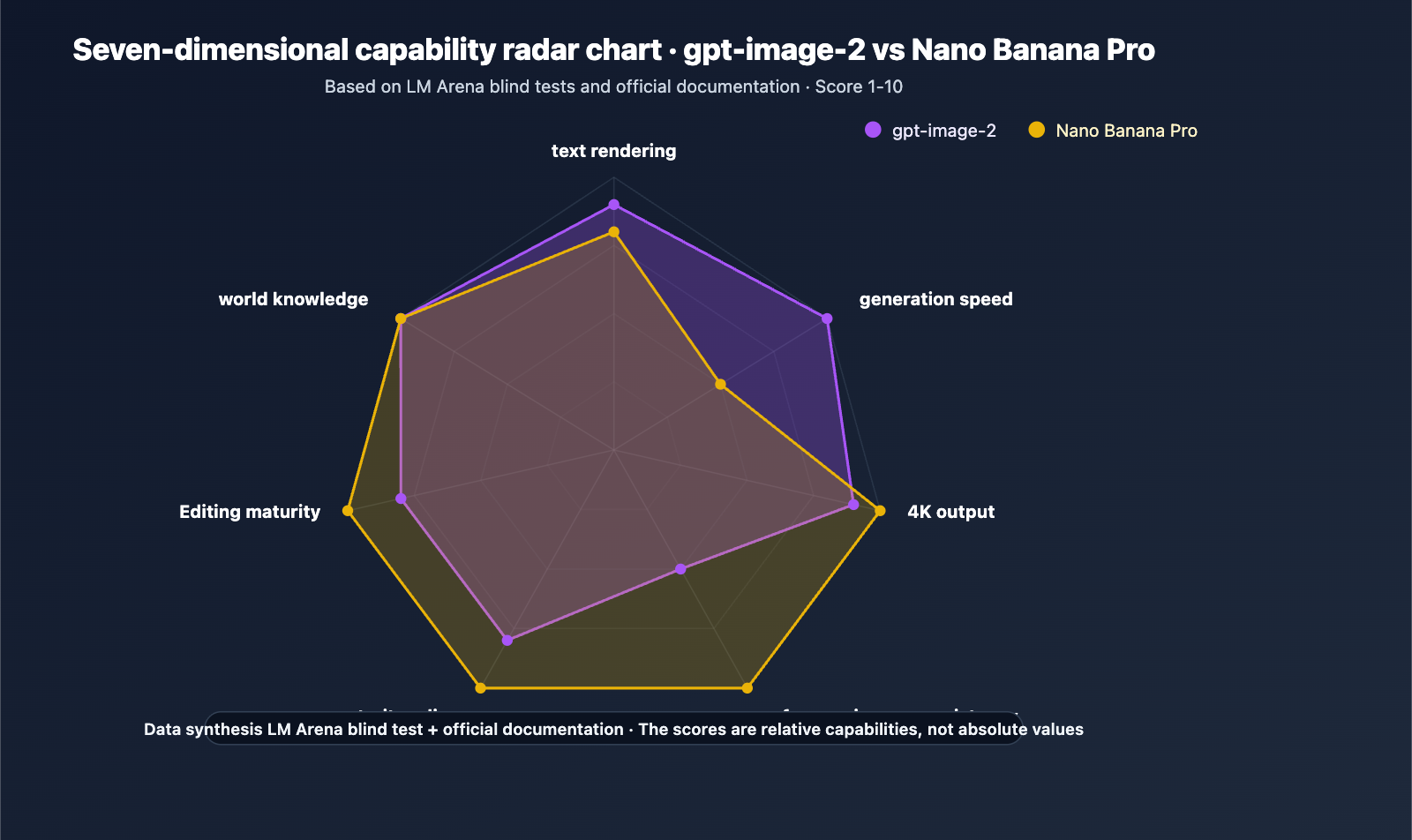

Author's Note: Based on LM Arena blind tests and official documentation, I’ve conducted a deep dive comparing gpt-image-2 and Nano Banana Pro across six dimensions: text rendering, 4K resolution, speed, reference images, pricing, and editing capabilities. This will help you determine if the new model can truly shake the "top-tier" status of the Banana Pro.

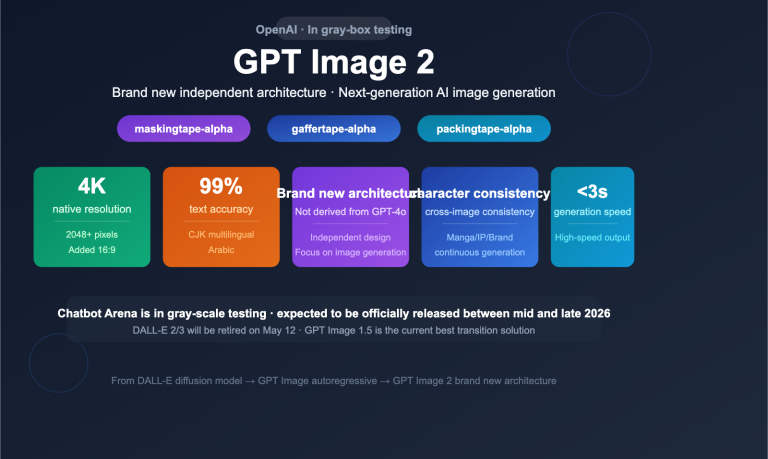

Nano Banana Pro (Gemini 3 Pro Image) has been widely recognized as the industry's most powerful image model since its release on November 20, 2025, thanks to its native 4K support, 14-image reference capability, Search grounding, and SynthID watermarking. Meanwhile, gpt-image-2 has achieved nearly 100% accuracy in text rendering during LM Arena blind tests, with some testers noting, "The gap between it and Nano Banana Pro is as significant as the gap between Nano Banana Pro and DALL-E."

This isn't another "both sides have their merits" compromise analysis. Based on public LM Arena blind test logs, independent tester data, and official technical documentation, this article clearly defines which model you should choose for specific scenarios.

Core Value: After reading this, you'll know exactly where gpt-image-2 outperforms the Banana Pro, where it still falls short, and the most pragmatic tech stack choice for your current needs.

gpt-image-2 vs Nano Banana Pro: Key Takeaways

| Dimension | gpt-image-2 (Preview) | Nano Banana Pro (Released) |

|---|---|---|

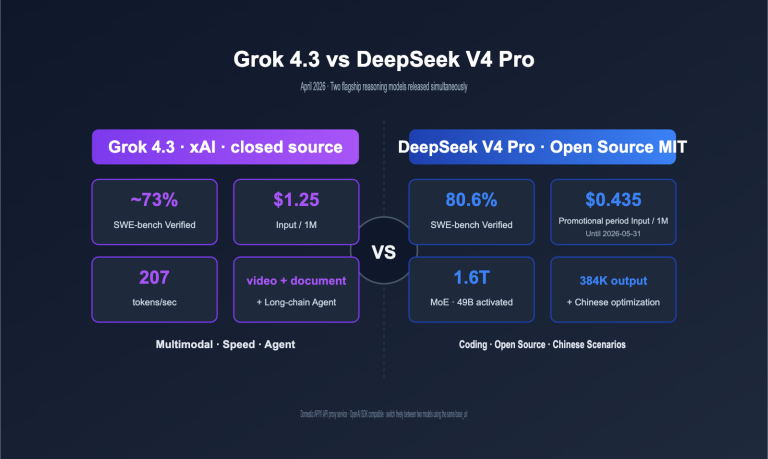

| Vendor | OpenAI | Google DeepMind |

| Status | 2026-04 Beta | 2025-11-20 GA |

| Text Rendering | ~100% (Blind test leader) | Strong (Slightly weaker on dense text) |

| Generation Speed | ~3 seconds | 10-15 seconds |

| Native Resolution | Expected 2048²/4096² | Native 4K |

| Reference Images | Expected support | 14 images (Leader) |

Positioning Differences

Nano Banana Pro remains the current gold standard. This isn't just an emotional conclusion—Google Cloud has opened Nano Banana Pro to enterprise customers, integrating it into core creative tools like Vertex AI, Google Workspace, Adobe Firefly, Photoshop, Figma, and Canva, all while providing copyright protection. It is a production-ready flagship.

gpt-image-2 is a potential challenger. LM Arena blind test data shows it surpasses the Banana Pro in text rendering, UI reconstruction, and world knowledge. However, it still lags behind in spatial reasoning (e.g., Rubik's cube reflections), portrait realism, and multi-reference consistency. Furthermore, it hasn't been officially released, so there's no clear pricing or rate limiting yet.

In-Depth Comparison: gpt-image-2 vs. Nano Banana Pro Across Six Dimensions

Dimension 1: Text Rendering

Blind Test Verdict: gpt-image-2 leads. LM Arena testers report that gpt-image-2 achieves near 100% character-level accuracy, outperforming Nano Banana Pro in scenarios involving UI labels, signage, and short multilingual text.

Nano Banana Pro's Strong Suit: Google officially highlights that it is "the current model best suited for generating images containing correct, clear text." For long-form paragraph text (such as infographics or document-style posters), readability remains a strength for Banana Pro. gpt-image-2 has yet to be rigorously tested on dense paragraph blocks.

| Text Type | gpt-image-2 | Nano Banana Pro |
|---|---|---|
| UI Buttons/Labels | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Short Titles/Slogans | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Product Packaging Text | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Infographic Paragraphs | ⭐⭐⭐⭐ (Unverified) | ⭐⭐⭐⭐⭐ |
| Multilingual Text | ⭐⭐⭐⭐⭐ (CJK/RTL) | ⭐⭐⭐⭐⭐ (Gemini Multilingual) |

Dimension 2: Generation Speed

gpt-image-2 is significantly faster. Arena observers measured a single generation at approximately 3 seconds, while Nano Banana Pro typically takes 10-15 seconds. For interactive experiences and batch pipelines, that is a 3-5x difference.

- Interactive Scenarios: Users are willing to wait 3 seconds, but 10-15 seconds requires a loading animation design.

- Batch Scenarios: In one hour, gpt-image-2 can produce roughly 1,200 images, compared to 240-360 images for Nano Banana Pro.
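The hourly figures above follow directly from the per-image latency. Here is a minimal sketch of that arithmetic, assuming one worker generating images sequentially with no rate limits or retries. The function name `images_per_hour` is illustrative, and the latencies are the community measurements quoted above, not official numbers.

```python
def images_per_hour(seconds_per_image: float) -> int:
    """Sequential throughput: images one worker can finish in an hour."""
    if seconds_per_image <= 0:
        raise ValueError("seconds_per_image must be positive")
    return int(3600 // seconds_per_image)


# Latencies quoted in this article (blind-test observations, not official specs).
gpt_image_2 = images_per_hour(3)    # 1200
nano_fast = images_per_hour(10)     # 360
nano_slow = images_per_hour(15)     # 240

print(gpt_image_2, nano_fast, nano_slow)
# Speed-up of gpt-image-2 over the fast and slow ends of the Nano Banana Pro range
print(round(gpt_image_2 / nano_fast, 2), round(gpt_image_2 / nano_slow, 2))
```

Real pipelines usually run requests concurrently and hit provider rate limits, so treat these numbers as an upper bound per worker rather than a capacity plan.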

Dimension 3: Resolution and Aspect Ratio

Effectively a dead heat. Nano Banana Pro natively supports 4K output, and gpt-image-2 is expected to offer 2048×2048 and 4096×4096. gpt-image-2 explicitly mentions the addition of 16:9 widescreen, while Nano Banana Pro's supported ratios are listed in its Vertex AI documentation.

From a commercial printing perspective, both have overcome the 1536×1024 resolution bottleneck of the gpt-image-1.5 era, so this is no longer a deciding factor for model selection.

Dimension 4: Reference Images and Multi-Subject Consistency

Nano Banana Pro leads. This is currently the most critical gap:

- Nano Banana Pro: Supports input of up to 14 reference images, making it ideal for character locking, multi-subject fusion, and brand visual system generation.

- gpt-image-2: Based on early previews, it only supports standard image-to-image mode; the number of reference images and persistent embedding mechanisms have not yet been disclosed.

Scenario Impact:

| Application | Recommended Model | Reason |
|---|---|---|
| Comic/Animation Character Bible | Nano Banana Pro | Multi-shot character consistency |
| E-commerce Multi-Scene Product Shots | Nano Banana Pro | More stable product consistency |
| Brand Visual System Batching | Nano Banana Pro | 14 reference images lock the style |
| UI/UX Prototype Single Output | gpt-image-2 | 3-second speed + text accuracy |

Dimension 5: API Pricing and Access

| Item | gpt-image-2 | Nano Banana Pro |
|---|---|---|
| Estimated Cost per Image | ~$0.15-$0.20 | ~$0.045-$0.151 (2nd Gen) |
| Subscription Plans | None (Pay-as-you-go) | Gemini Subscription $19.99-$124.99/mo |
| Enterprise Access | OpenAI Direct / API Aggregator | Vertex AI / Google Cloud |
| Ecosystem Integration | OpenAI SDK | Firefly / Photoshop / Figma / Canva |
| Copyright Protection | Not officially specified | Official version provides copyright indemnity |

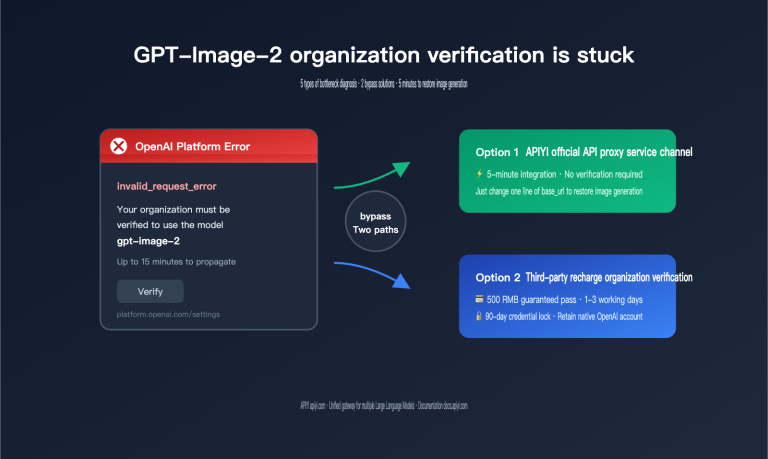

Pricing Note: gpt-image-2 pricing is an industry estimate; please refer to official announcements for accuracy. Both models can be accessed via APIYI (apiyi.com) using a single API key, avoiding the management overhead of multiple accounts.

Dimension 6: Editing Capabilities and Watermarking

Nano Banana Pro offers more mature editing: It claims "industry-best editing capabilities," supporting inpainting, style transfer, and multi-subject fusion. It includes SynthID watermarking, meaning all outputs come with content provenance markers—a hard requirement for compliance-heavy scenarios (legal, news, finance).

gpt-image-2 offers higher editing precision (based on early previews), but the company has not disclosed whether it includes built-in watermarking. During the initial launch phase, enterprise clients with strict compliance needs should prioritize Nano Banana Pro.

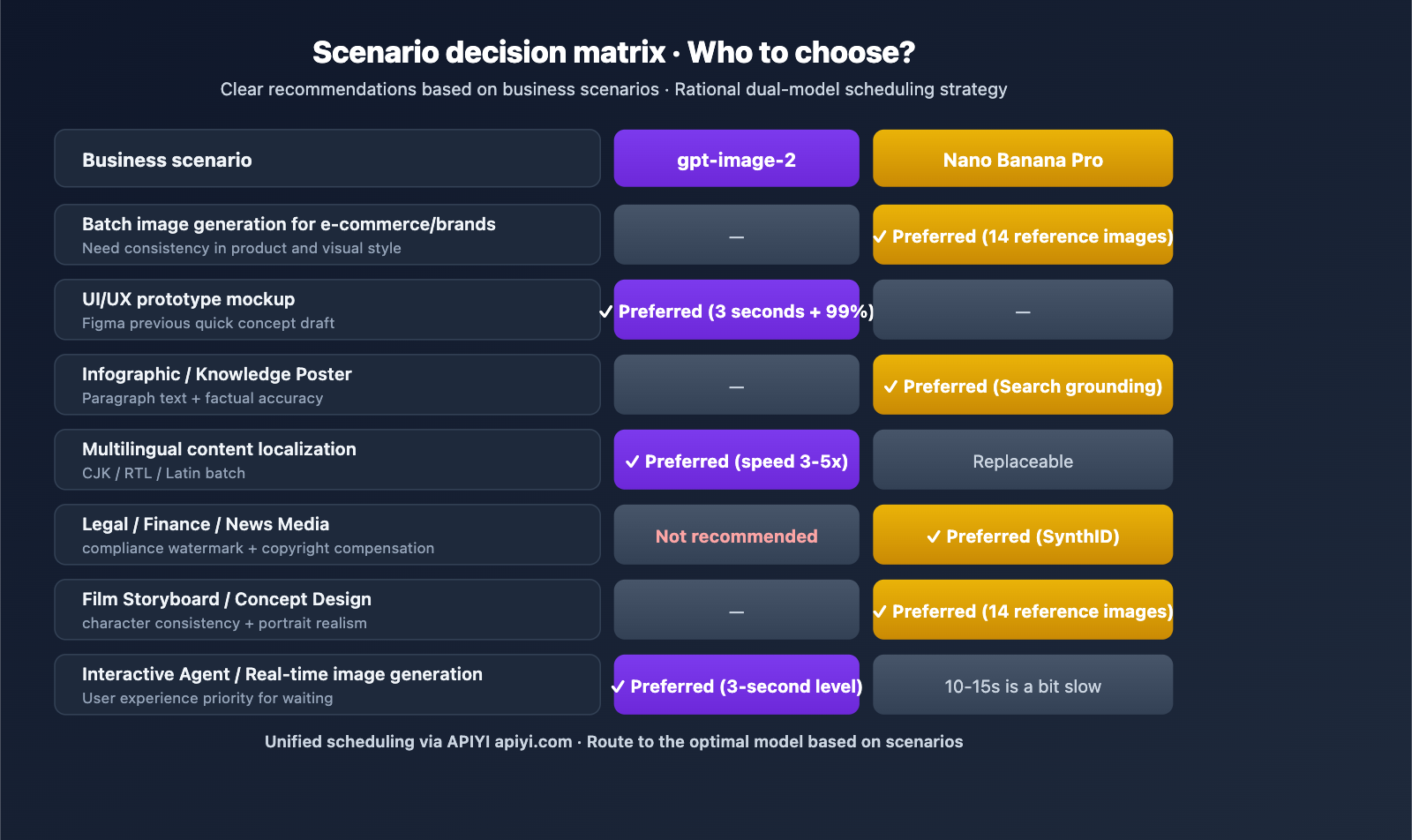

Scenario-Based Recommendations: Which One Should You Choose?

Scenario A: E-commerce/Marketing Bulk Image Generation → Nano Banana Pro

Reasoning: It offers brand consistency through 14 reference images, copyright indemnity, and a complete ecosystem already integrated into Photoshop, Figma, and Canva. It's the top choice for bulk product imagery, brand visual systems, and multi-scenario e-commerce visuals.

Scenario B: UI/UX Prototypes and Developer Agents → gpt-image-2 (Post-release)

Reasoning: The 3-second speed is critical for interactive agents, and its near-100% text accuracy in blind tests means UI mockups are ready for stakeholder approval.

Scenario C: Infographics/Educational Posters → Nano Banana Pro

Reasoning: With search grounding capabilities and paragraph-level text rendering, it's perfect for educational content, data visualization, and science communication posters.

Scenario D: Multilingual Localized Ads → Both, but gpt-image-2 is faster

Reasoning: Both support CJK/RTL/Latin scripts, but the 3-second speed of gpt-image-2 makes it 3-5 times more productive than Banana Pro for bulk localization tasks.

Scenario E: Compliance-Sensitive Content (Legal/News/Finance) → Nano Banana Pro

Reasoning: SynthID watermarking and copyright indemnity are essential for enterprise-grade compliance; gpt-image-2 has yet to make clear commitments in these areas.

Scenario F: Film Storyboards/Concept Design → Nano Banana Pro

Reasoning: Its superior multi-reference image support and hyper-realistic portrait capabilities make it ideal for pre-production work that requires strict character consistency.

gpt-image-2 vs. Nano Banana Pro API Invocation Example

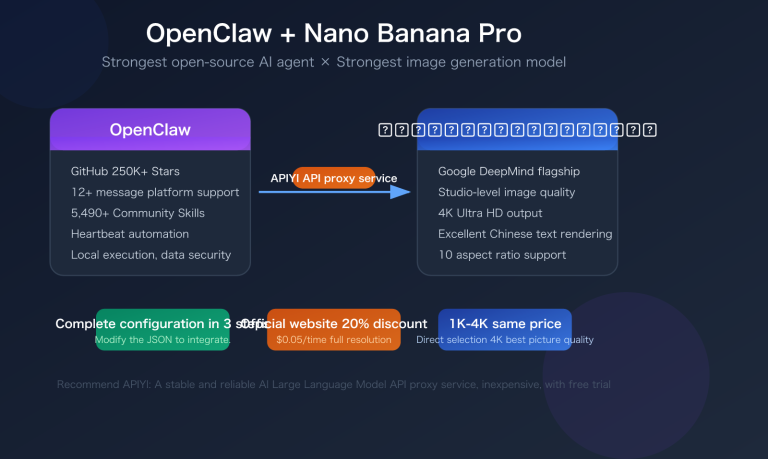

Using the unified interface provided by APIYI (apiyi.com), you can call both models with the same code, which makes A/B testing straightforward:

```python
from openai import OpenAI

client = OpenAI(
    api_key="YOUR_APIYI_KEY",
    base_url="https://vip.apiyi.com/v1"
)

prompt = "A premium coffee cafe menu board with hand-lettered 'Today Special: Flat White $5'"

# Call gpt-image-2 (post-release)
gpt_response = client.images.generate(
    model="gpt-image-1.5",  # Replace after gpt-image-2 is released
    prompt=prompt,
    size="1024x1024",
    quality="high"
)

# Call Nano Banana Pro
nano_response = client.images.generate(
    model="nano-banana-pro",
    prompt=prompt,
    size="1024x1024"
)

print(f"GPT: {gpt_response.data[0].url}")
print(f"Nano: {nano_response.data[0].url}")
```
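One practical caveat when reading the result: entries in `response.data` from the OpenAI SDK carry both a `url` and a `b64_json` field, and which one is populated can depend on the model and the gateway. The helper below is a small sketch that handles either case. The name `image_payload` is illustrative, and the `SimpleNamespace` objects are stand-ins for real response items so the example runs without an API key.

```python
import base64
from types import SimpleNamespace
from typing import Any, Tuple


def image_payload(item: Any) -> Tuple[str, Any]:
    """Return ("url", str) or ("bytes", bytes) for one entry of response.data."""
    url = getattr(item, "url", None)
    if url:
        return ("url", url)
    b64 = getattr(item, "b64_json", None)
    if b64:
        # Decode the base64 payload into raw image bytes
        return ("bytes", base64.b64decode(b64))
    raise ValueError("response item has neither url nor b64_json")


# Stand-in objects that mimic the two possible response shapes (not real API output).
fake_url_item = SimpleNamespace(url="https://example.com/a.png", b64_json=None)
fake_b64_item = SimpleNamespace(url=None, b64_json=base64.b64encode(b"PNG").decode())

print(image_payload(fake_url_item))
print(image_payload(fake_b64_item))
```

In the snippet above, `image_payload(gpt_response.data[0])` could replace the direct `.url` access if a model returns base64 data instead of a link.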

Full A/B comparison code (covers UI, ad, and multilingual prompts, with per-model latency):

```python
import time
from typing import Dict, Sequence

from openai import OpenAI

client = OpenAI(
    api_key="YOUR_APIYI_KEY",
    base_url="https://vip.apiyi.com/v1"
)

# Tuple default avoids the shared-mutable-default pitfall of a list argument
DEFAULT_MODELS = ("gpt-image-1.5", "nano-banana-pro")


def generate_and_benchmark(
    prompt: str,
    models: Sequence[str] = DEFAULT_MODELS,
    size: str = "1024x1024"
) -> Dict[str, dict]:
    """
    Compare generation results and speed across multiple models

    Args:
        prompt: Test prompt
        models: List of models to compare
        size: Output dimensions

    Returns:
        Dictionary containing the URL and latency for each model
    """
    results = {}
    for model in models:
        # perf_counter is a monotonic clock, better suited to timing than time.time
        start = time.perf_counter()
        try:
            response = client.images.generate(
                model=model,
                prompt=prompt,
                size=size,
                quality="high"
            )
            elapsed = time.perf_counter() - start
            results[model] = {
                "url": response.data[0].url,
                "seconds": round(elapsed, 2)
            }
        except Exception as e:
            # Record the failure and keep benchmarking the remaining models
            results[model] = {"error": str(e)}
    return results


test_prompts = [
    "UI: Mobile banking app with 'Transfer $500' button",
    "Ad: Summer sale poster with '50% OFF' slogan",
    "Localization: Japanese coffee menu with 'コーヒー ¥580'"
]

for p in test_prompts:
    result = generate_and_benchmark(p)
    print(f"\nPrompt: {p}")
    for model, data in result.items():
        print(f" [{model}] {data}")
```
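A single run per prompt is noisy, so it can help to aggregate the per-prompt dictionaries into one summary per model. The sketch below assumes the result shape used by `generate_and_benchmark` (either `{"url", "seconds"}` or `{"error"}` per model). The `summarize` function and the sample numbers are illustrative, not measured data.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List


def summarize(runs: List[Dict[str, dict]]) -> Dict[str, dict]:
    """Aggregate per-prompt results into mean latency and error counts per model."""
    latencies = defaultdict(list)
    errors = defaultdict(int)
    for run in runs:
        for model, data in run.items():
            if "error" in data:
                errors[model] += 1
            else:
                latencies[model].append(data["seconds"])
    models = sorted(set(latencies) | set(errors))
    return {
        m: {
            "mean_seconds": round(mean(latencies[m]), 2) if latencies[m] else None,
            "ok": len(latencies[m]),
            "errors": errors[m],
        }
        for m in models
    }


# Made-up sample results, only to show the input and output shapes.
sample_runs = [
    {"model-a": {"url": "u1", "seconds": 3.0}, "model-b": {"error": "timeout"}},
    {"model-a": {"url": "u2", "seconds": 5.0}, "model-b": {"url": "u3", "seconds": 12.0}},
]
print(summarize(sample_runs))
```

To use it with the benchmark above, collect each `generate_and_benchmark(p)` result into a list and pass that list to `summarize`.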

Platform Tip: Use the free testing credits on APIYI (apiyi.com) to quickly compare how these two models perform in your specific business scenarios. The platform supports both OpenAI and Google ecosystems, so you don't have to maintain separate accounts just for testing.

gpt-image-2 vs. Nano Banana Pro: A Comparative Analysis

Nano Banana Pro's Moat: Support for 14 reference images, SynthID watermarking, enterprise-grade ecosystem integration (Photoshop/Figma/Canva/Vertex AI), and copyright indemnification. These production-ready capabilities are not something gpt-image-2 will catch up with easily in the short term.

gpt-image-2's Breakthroughs: A 3-second generation speed, near-100% text accuracy in blind tests, UI rendering capabilities, and precise recreation of real-world brands and interfaces. These were the exact areas where OpenAI lagged behind Nano Banana Pro in the gpt-image-1.5 era; the new version is expected to systematically bridge these gaps.

Conclusion: Nano Banana Pro's dominance won't be fully shaken in the short term, but for specific niche scenarios (UI prototyping, high-speed interactive agents, multilingual batch processing), gpt-image-2 will become the superior choice. The rational strategy is to use both and route based on the specific scenario.

Routing Recommendation: Use APIYI (apiyi.com) to build a multi-model routing layer. This allows your business interface to automatically route requests to either gpt-image-2 or Nano Banana Pro based on the scenario, maximizing cost-effectiveness.
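A routing layer of this kind can start as a plain lookup table. The sketch below maps the scenarios from this article to a model name. The scenario keys, the `pick_model` function, and the `gpt-image-2` model identifier are all assumptions for illustration, since gpt-image-2 has not been released and its final model name is unknown. Until it is available, the sketch falls back to `gpt-image-1.5`, the placeholder used in the examples above.

```python
from typing import Dict

# Scenario -> preferred model, following the recommendations in this article.
# Keys are hypothetical labels; "gpt-image-2" is an assumed future model name.
ROUTES: Dict[str, str] = {
    "ecommerce_batch": "nano-banana-pro",
    "ui_prototype": "gpt-image-2",
    "infographic": "nano-banana-pro",
    "multilingual_ads": "gpt-image-2",
    "compliance": "nano-banana-pro",
    "storyboard": "nano-banana-pro",
}
DEFAULT_MODEL = "nano-banana-pro"
PLACEHOLDER_MODEL = "gpt-image-1.5"


def pick_model(scenario: str, gpt_image_2_available: bool = False) -> str:
    """Choose a model for a scenario, falling back while gpt-image-2 is unreleased."""
    model = ROUTES.get(scenario, DEFAULT_MODEL)
    if model == "gpt-image-2" and not gpt_image_2_available:
        return PLACEHOLDER_MODEL
    return model


print(pick_model("ui_prototype"))
print(pick_model("ui_prototype", gpt_image_2_available=True))
print(pick_model("compliance"))
```

Keeping the availability flag as a parameter means the switch on launch day is a configuration change rather than a code change.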

Frequently Asked Questions (FAQ)

Q1: Can gpt-image-2 really outperform Nano Banana Pro?

In some dimensions, yes; in overall strength, it's difficult in the short term. LM Arena blind tests show that gpt-image-2 leads Nano Banana Pro in four dimensions: text rendering (near 100%), UI rendering, world knowledge, and speed (~3 seconds). However, Nano Banana Pro maintains a clear advantage in six other areas: multi-reference image consistency (14 images), hyper-realistic portraiture, editing maturity, enterprise ecosystem (Photoshop/Figma), compliance watermarking (SynthID), and copyright indemnification.

Q2: How big is the difference in generation speed?

gpt-image-2 takes about 3 seconds, while Nano Banana Pro takes about 10-15 seconds—a difference of roughly 3-5 times. This has a significant impact on interactive agents, real-time creative tools, and batch pipelines (hourly throughput). However, for complex tasks requiring 14 reference images to lock in a character, the extra time spent on Nano Banana Pro is well worth it.

Q3: Should I choose Nano Banana Pro now or wait for gpt-image-2?

Use Nano Banana Pro now, but prepare a migration path. Reasons: (1) gpt-image-2 is expected to be released between late April and mid-May 2026, and quotas will be tight at launch; (2) Nano Banana Pro is already production-ready with copyright protection; (3) By using APIYI (apiyi.com), you can build a dual-model routing layer, allowing you to switch to gpt-image-2 seamlessly on launch day for the tasks that need it, without disrupting your existing business.

Q4: Which one should I choose for e-commerce batch image generation?

Prioritize Nano Banana Pro. The 14 reference images are crucial for product consistency—the same item must maintain visual uniformity across shelves, lifestyle scenes, model shots, and close-ups, which is a core strength of Nano Banana Pro. While gpt-image-2 is faster, its reference image capability is yet to be fully proven. For large-scale brand scenarios, Nano Banana Pro is the top choice.

Q5: How can I call both gpt-image-2 and Nano Banana Pro via API?

We recommend using APIYI (apiyi.com) for unified access:

- Visit apiyi.com to register and obtain your API key.

- Set the `base_url` to `https://vip.apiyi.com/v1` and use the official OpenAI SDK.

- Simply switch the `model` field during invocation: `gpt-image-1.5` / `nano-banana-pro` / or the future `gpt-image-2`.

- A single account supports all models, with unified management for billing, balance, and monitoring.

This approach avoids the hassle of maintaining separate OpenAI and Google Cloud accounts and makes it easy to route to the optimal model in real-time based on your needs.

Q6: What are the differences between the two in compliance-sensitive scenarios?

Nano Banana Pro has a clear advantage. It features built-in SynthID watermarking (all outputs include content provenance), and the official version provides copyright indemnification (via Vertex AI Enterprise). OpenAI has not yet disclosed the watermarking strategy or copyright terms for gpt-image-2. For industries sensitive to legal, financial, or media compliance, Nano Banana Pro is the safer choice.

Q7: Is the $0.15-$0.20 pricing for gpt-image-2 reliable?

These are industry estimates; wait for the official announcement from OpenAI. Historically, gpt-image-1.5 was about 20% cheaper than gpt-image-1. If gpt-image-2 follows a similar strategy, the final price might land in the $0.10-$0.15 range. Nano Banana Pro (Gen 2) is currently $0.045-$0.151. While gpt-image-2 might be slightly more expensive, its higher speed means you should compare the cost per unit of throughput once it's live.
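One way to compare cost against throughput is to estimate both the total spend and the wall-clock time for a fixed batch. The sketch below does that for a single sequential worker. The function name `batch_estimate` is illustrative, and the prices and latencies plugged in are the estimates quoted in this article, not official figures.

```python
from typing import Dict


def batch_estimate(n_images: int, price_per_image: float, seconds_per_image: float) -> Dict[str, float]:
    """Estimate total cost and sequential wall-clock time for a batch of images."""
    return {
        "cost_usd": round(n_images * price_per_image, 2),
        "hours": round(n_images * seconds_per_image / 3600, 2),
    }


# Article estimates: gpt-image-2 at the low end of its estimated price range,
# Nano Banana Pro at both ends of its quoted price and latency ranges.
scenarios = {
    "gpt-image-2 ($0.15, 3 s)": batch_estimate(1000, 0.15, 3),
    "Nano Banana Pro ($0.045, 10 s)": batch_estimate(1000, 0.045, 10),
    "Nano Banana Pro ($0.151, 15 s)": batch_estimate(1000, 0.151, 15),
}
for name, estimate in scenarios.items():
    print(name, estimate)
```

Whether the faster turnaround justifies a higher per-image price depends on how much the saved hours are worth in your pipeline, which is why both numbers are reported side by side.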

Q8: What does it mean when LM Arena blind tests say the “gap is as big as it was with DALL-E”?

This is a subjective evaluation from a senior tester—it means that in the specific dimensions of text rendering and UI restoration, the lead gpt-image-2 has over Nano Banana Pro is comparable to the lead Nano Banana Pro once had over DALL-E. This doesn't mean the overall strength gap is identical—Nano Banana Pro still leads in portrait realism, multi-reference consistency, and editing capabilities. When looking at blind test results, consider the specific dimensions rather than generalizing.

gpt-image-2 vs Nano Banana Pro Key Takeaways

- Not a complete disruption: While gpt-image-2 leads in text rendering, speed, UI, and general world knowledge, Nano Banana Pro maintains its edge in reference images, editing capabilities, ecosystem integration, and compliance.

- Use case dictates the choice: Opt for gpt-image-2 for UI prototyping, rapid Agent development, and multilingual batch tasks; choose Nano Banana Pro for e-commerce batch processing, brand visuals, and compliance-heavy scenarios.

- Significant speed gap: At roughly 3 seconds vs. 10-15 seconds per image, gpt-image-2 is 3-5 times faster, which matters most in high-frequency interactive scenarios.

- Reference images are the moat: Nano Banana Pro’s ability to maintain multi-subject face consistency using 14 reference images will be hard to surpass in the short term.

- Dual-model coexistence strategy: Use the APIYI (apiyi.com) unified interface to route requests to the optimal model based on the specific scenario.

Summary

The core takeaways from the gpt-image-2 vs Nano Banana Pro comparison are:

- gpt-image-2 is a challenger, not a disruptor: It outperforms Nano Banana Pro in text, speed, and UI, but it still trails in reference images, editing maturity, ecosystem integration, and compliance.

- Nano Banana Pro’s moat remains solid: Features like 14-image reference support, SynthID watermarking, deep integration with Photoshop/Figma/Canva, and copyright indemnification are what make it truly production-ready.

- A rational strategy involves dual-model routing: Don't treat this as an "either-or" decision. Route tasks based on the scenario—use gpt-image-2 for UI prototypes and high-speed Agents, and stick with Nano Banana Pro for e-commerce, branding, and compliance needs.

For team decision-making, we recommend integrating Nano Banana Pro via APIYI (apiyi.com) now to handle current production requirements. Meanwhile, you can build your OpenAI ecosystem code framework using gpt-image-1.5; once gpt-image-2 is released, you'll only need to update the model field to seamlessly expand your scheduling pool.


📚 References

- Google DeepMind Official: Gemini 3 Pro Image (Nano Banana Pro) Technical Documentation
  - Link: deepmind.google/models/gemini-image/pro
  - Description: Official specifications and API parameters for Banana Pro.

- Google Cloud Enterprise Announcement: Nano Banana Pro Enterprise Edition Launch
  - Link: cloud.google.com/blog/products/ai-machine-learning/nano-banana-pro-available-for-enterprise
  - Description: Details on Vertex AI access, copyright indemnification, and SynthID watermarking.

- nanobananafree Comparison Report: 5 Major Upgrades: GPT Image 2 vs Nano Banana 2/Pro
  - Link: nanobananafree.org/blog/gpt-image-2-guide-vs-nano-banana-2-pro
  - Description: Comparative data on text rendering, reference image handling, speed, and pricing.

- YouMind LM Arena Blind Test: Hands-on with the Leaked GPT Image 2
  - Link: youmind.com/blog/gpt-image-2-vs-nano-banana-pro
  - Description: First-hand observations from blind testing these two models.

- TechCrunch Report: Google Launches Nano Banana 2 with Faster Image Generation
  - Link: techcrunch.com/2026/02/26/google-launches-nano-banana-2-model-with-faster-image-generation
  - Description: Authoritative coverage on the evolution and market positioning of the Banana series.

Author: APIYI Technical Team

Technical Discussion: We'd love to hear your thoughts in the comments! For more resources, visit the APIYI documentation center at docs.apiyi.com.