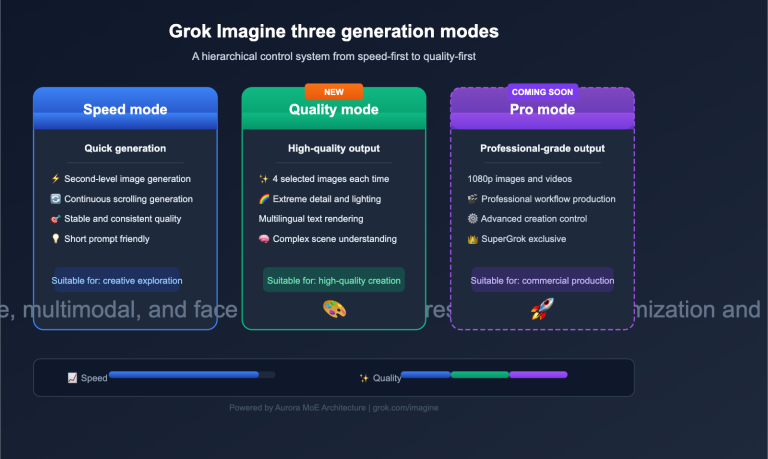

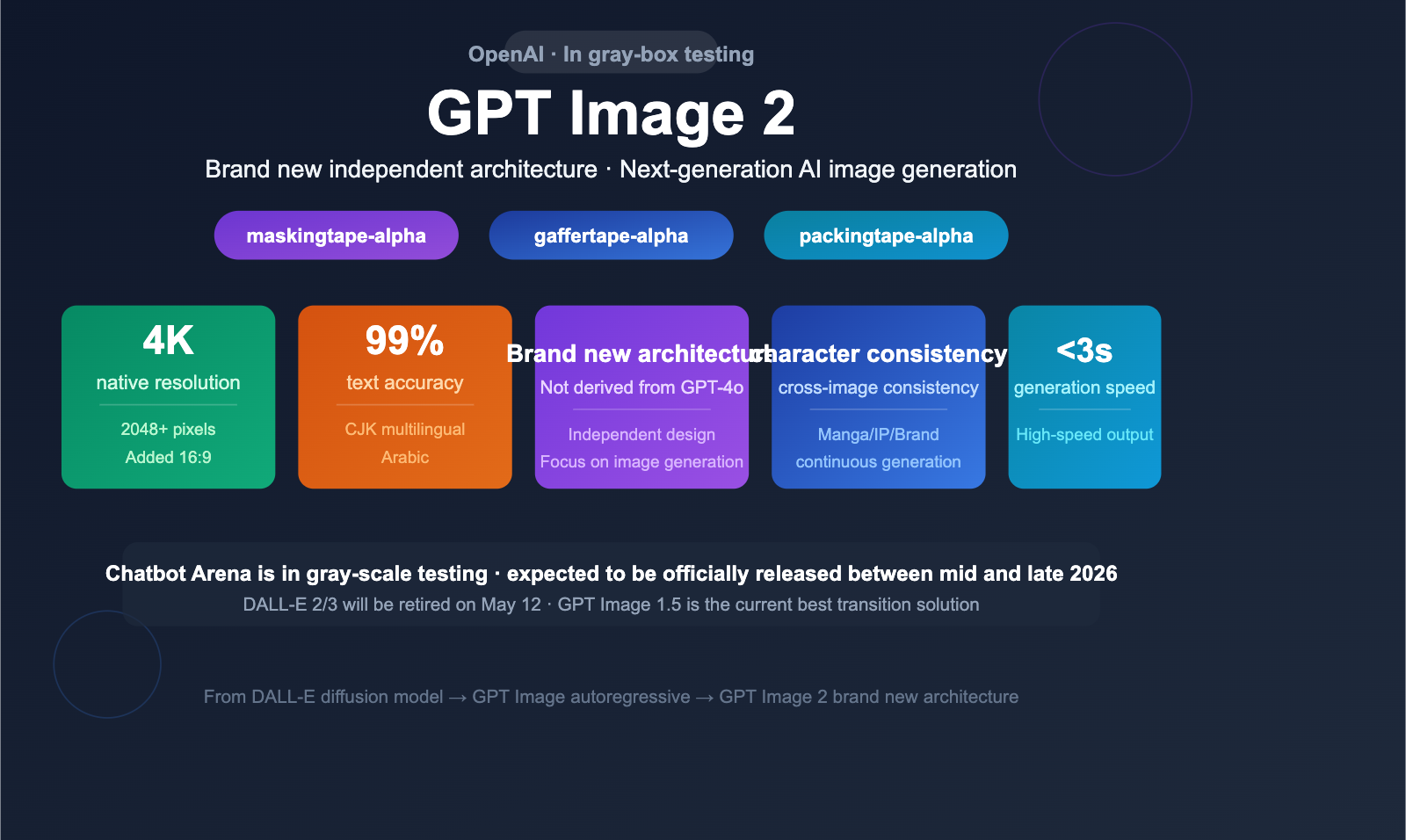

OpenAI's next-generation image generation model, GPT Image 2, has entered limited beta testing, with three codenamed models (maskingtape, gaffertape, and packingtape) appearing in anonymous evaluations on the Chatbot Arena. Although it has not been officially released, leaked information suggests that GPT Image 2 uses a new, independent architecture, with improvements expected in text rendering, resolution, multilingual support, and face consistency.

Core Value: Get up to speed in 3 minutes on the latest intel regarding GPT Image 2, its anticipated capability upgrades, and the complete evolution of OpenAI's image generation product line from DALL-E to GPT Image.

Quick Look at the Latest GPT Image 2 Intel

GPT Image 2 is currently in limited beta testing, and the API has not been officially released. The following information is derived from Arena evaluation leaks and third-party analyses, and has not been confirmed by OpenAI.

| Information Item | Details |

|---|---|

| Current Status | In limited beta testing, not officially released |

| Arena Codename | maskingtape-alpha / gaffertape-alpha / packingtape-alpha |

| Architecture | Brand-new independent architecture, not a derivative of GPT-4o |

| Expected Resolution | Native 4K (2048×2048 or 4096×4096) |

| Text Rendering | Expected 99%+ accuracy, supports non-Latin scripts like CJK/Arabic |

| Generation Speed | Expected under 3 seconds |

| Expected Release | Mid-to-late 2026 |

Decoding the 3 Beta Codenames

During anonymous battles on the Chatbot Arena, three previously unseen image model codenames appeared:

| Codename | Analysis |

|---|---|

| maskingtape-alpha | "Masking tape" — May imply enhanced local editing/masking capabilities |

| gaffertape-alpha | "Gaffer tape" — Likely corresponds to a professional/high-end variant |

| packingtape-alpha | "Packing tape" — Likely corresponds to a batch/bulk generation variant |

All three codenames share the "tape" theme, with the "alpha" suffix indicating they are in an early testing stage. Some ChatGPT users report that these new models have already been served to them at random during their sessions.

🎯 Technical Advice: Once GPT Image 2 is officially released, developers can integrate it immediately via the APIYI platform (apiyi.com). The platform already supports the full line of GPT Image 1.5 models and will quickly adapt once the new models go live.

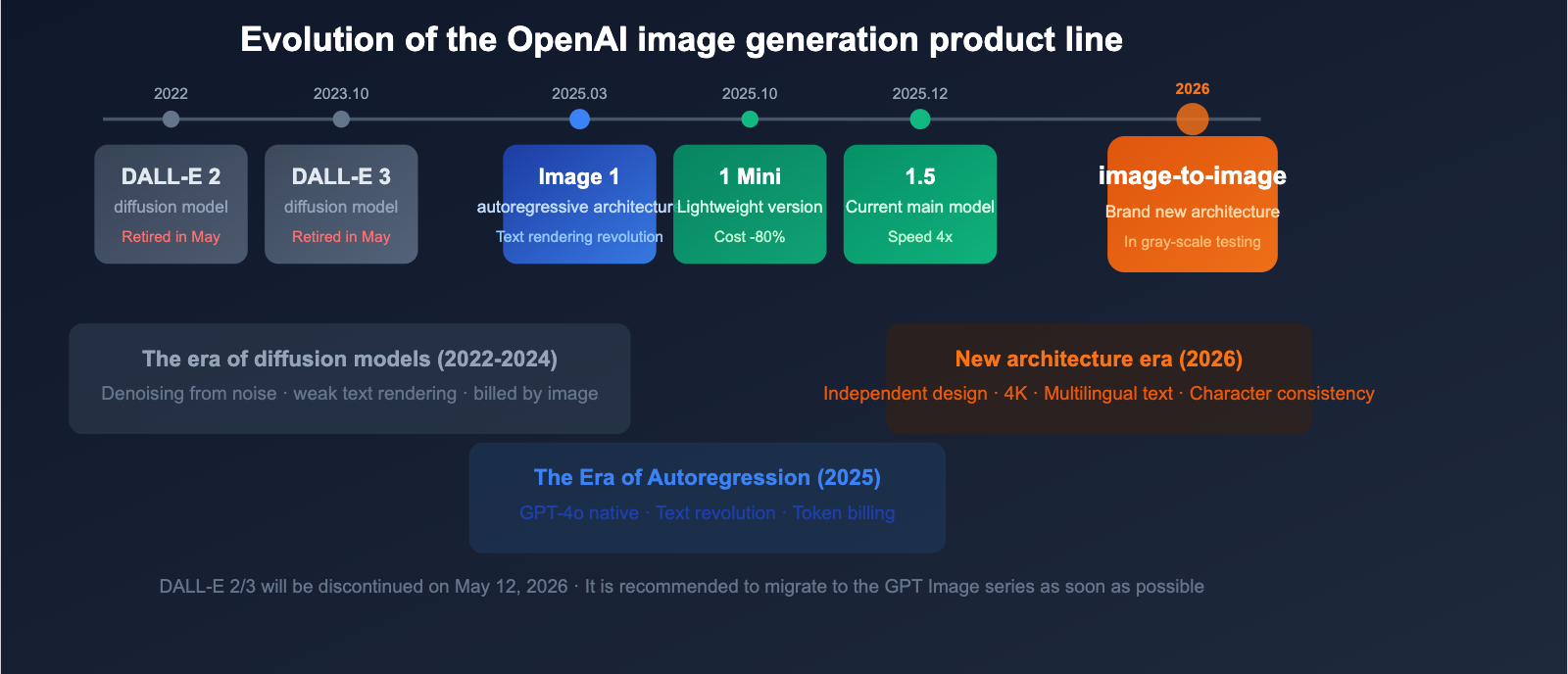

Complete Evolution of the GPT Image Product Line

To understand the positioning of GPT Image 2, you first need to grasp the full evolution of OpenAI's image generation product line.

Product Line Timeline

| Model | Release Date | Architecture | Key Features |

|---|---|---|---|

| DALL-E 2 | 2022 | Diffusion Model | Pioneering AI image generation |

| DALL-E 3 | Oct 2023 | Diffusion Model | Significant improvements in prompt understanding |

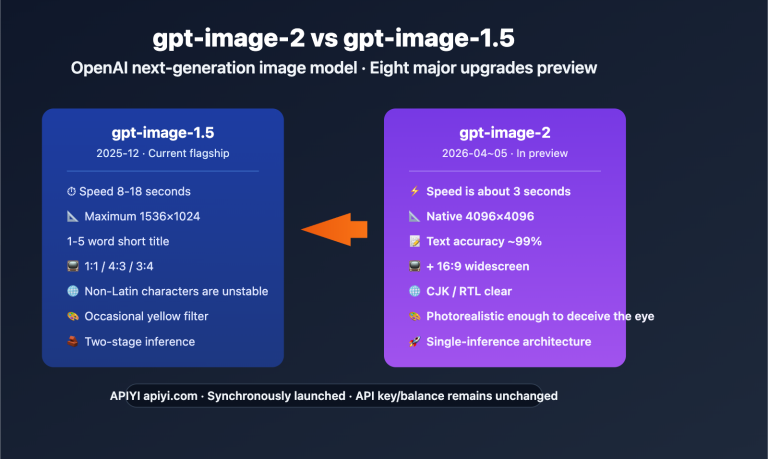

| GPT Image 1 | Mar/Apr 2025 | Autoregressive (Native to GPT-4o) | Revolutionary text rendering, image editing |

| GPT Image 1 Mini | Oct 2025 | Autoregressive (Lightweight) | 80% cost reduction |

| GPT Image 1.5 | Dec 2025 | Autoregressive (Optimized) | 4x speed boost, color shift fixes |

| GPT Image 2 | 2026 (Expected) | New Independent Architecture | 4K/Multilingual text/Face consistency |

Architectural Shift: OpenAI moved from diffusion models in the DALL-E series to autoregressive models in GPT Image 1, and GPT Image 2 is reported to use a new independent architecture. If the leaks hold, each major product generation will have brought a change in the underlying architecture.

DALL-E Series Retirement Countdown

OpenAI has announced that DALL-E 2 and DALL-E 3 will be discontinued on May 12, 2026. This means all applications relying on the DALL-E API must migrate to the GPT Image series before this date.
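To make the deadline concrete, here is a minimal sketch that computes the days remaining before the retirement date stated above. The function name `days_until_retirement` is made up for this example, and the date constant simply restates the article's date.

```python
from datetime import date

# Retirement date as stated in this article.
DALLE_RETIREMENT = date(2026, 5, 12)


def days_until_retirement(today: date) -> int:
    """Days left before the retirement date (zero on the day, negative afterwards)."""
    return (DALLE_RETIREMENT - today).days


remaining = days_until_retirement(date.today())
if remaining > 0:
    print(f"{remaining} days left to migrate off DALL-E")
else:
    print("The DALL-E retirement date has passed")
```

A check like this can run in CI or a scheduled job so that a service still calling a DALL-E model raises a warning well before the cutoff.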

5 Key Upgrades Expected for GPT Image 2

Based on leaks from Arena testing and various analyses, GPT Image 2 is expected to bring major upgrades in the following five areas.

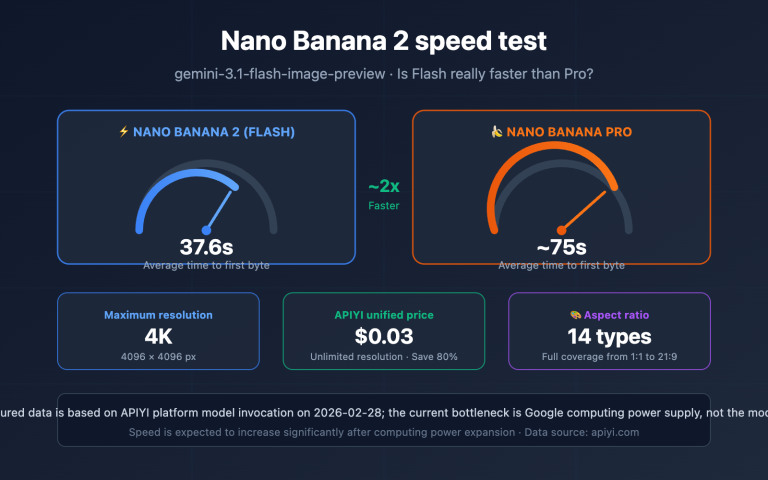

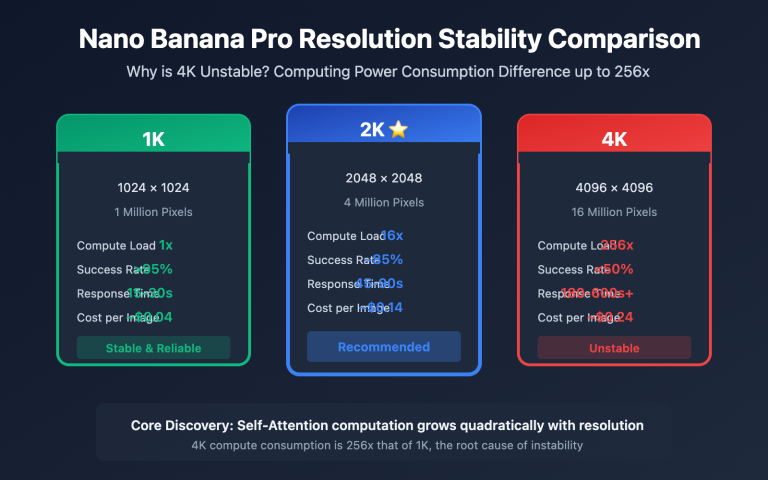

Upgrade 1: Native 4K Resolution

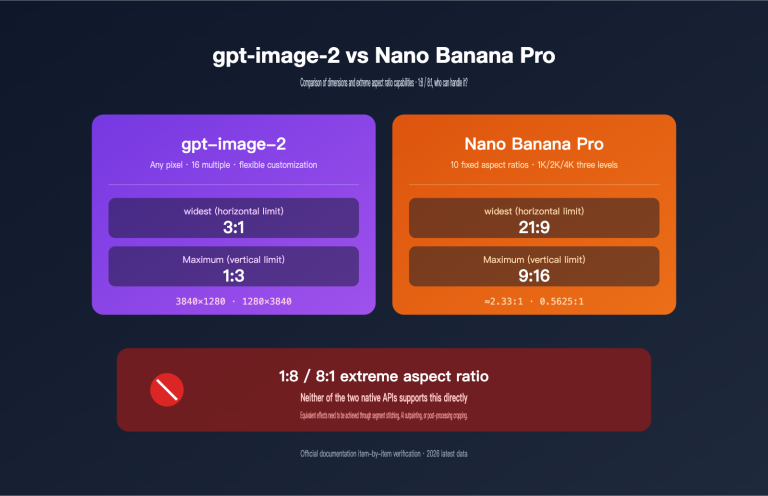

The maximum resolution for GPT Image 1.5 is 1536×1024. GPT Image 2 is expected to support native 4K output (2048×2048 or 4096×4096), along with a 16:9 widescreen aspect ratio, meeting the needs of professional content creation and commercial printing.

| Dimension | GPT Image 1.5 | GPT Image 2 (Expected) |

|---|---|---|

| Max Resolution | 1536×1024 | Native 4K |

| Aspect Ratio | 1:1, 3:2, 2:3 | New 16:9 Widescreen |

| Output Quality | High | Near-photorealistic |
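For a sense of scale, the sketch below compares total pixel counts between the current maximum and the two rumored sizes, and converts the edge length to inches for print. The 300 DPI figure is a common print convention assumed here, not something stated in the leaks, and the helper names are illustrative.

```python
def pixel_ratio(new: tuple[int, int], old: tuple[int, int]) -> float:
    """Ratio of total pixel counts between two (width, height) sizes."""
    return (new[0] * new[1]) / (old[0] * old[1])


def print_edge_inches(pixels: int, dpi: int = 300) -> float:
    """Physical edge length in inches when `pixels` are printed at `dpi`."""
    return pixels / dpi


CURRENT_MAX = (1536, 1024)  # GPT Image 1.5 maximum, per the table above

# Rumored GPT Image 2 sizes; unconfirmed.
for candidate in [(2048, 2048), (4096, 4096)]:
    ratio = pixel_ratio(candidate, CURRENT_MAX)
    edge = print_edge_inches(candidate[0])
    print(f"{candidate[0]}x{candidate[1]}: {ratio:.2f}x the pixels, "
          f"{edge:.1f} in per side at 300 DPI")
```

Measured in total pixels rather than edge length, 2048×2048 is roughly 2.7 times the current maximum and 4096×4096 is roughly 10.7 times.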

Upgrade 2: 99%+ Text Rendering Accuracy

Text rendering is a signature capability of the GPT Image series. While GPT Image 1.5 has already achieved about 95% accuracy for English text, it still struggles with non-Latin scripts like CJK (Chinese, Japanese, Korean) and Arabic. GPT Image 2 is expected to boost text rendering accuracy to over 99% and provide full support for multilingual text.

This upgrade is particularly important for Chinese users—it means that generating images containing accurate Chinese text will finally become reliable.

Upgrade 3: Character Consistency

Currently, GPT Image 1.5 struggles to maintain consistent character appearances across multiple generations. GPT Image 2 is expected to support cross-image character consistency, making scenarios like continuous illustrations, comic series, and brand mascot generation practical.

Upgrade 4: Region-based Control

GPT Image 1.5's composition relies entirely on text prompts. GPT Image 2 may introduce region-based prompting, allowing users to specify the content of different areas within the frame for more precise compositional control.

Upgrade 5: Generation Speed Under 3 Seconds

GPT Image 1.5 already achieved a 4x speed increase compared to the first generation. With a brand-new architecture, GPT Image 2 is expected to complete high-quality image generation in under 3 seconds, further shortening the creative cycle.

Summary of the 5 Major Upgrades

| Capability Dimension | GPT Image 1.5 (Current) | GPT Image 2 (Expected) | Improvement |

|---|---|---|---|

| Max Resolution | 1536×1024 | Native 4K (2048+) | 2-4x |

| English Text Accuracy | ~95% | 99%+ | +4pts |

| CJK Text Accuracy | Poor | Expected to be Good | Quantum Leap |

| Character Consistency | Not Supported | Cross-image consistent | New Capability |

| Composition Control | Text prompts only | Region-based prompts | New Capability |

| Generation Speed | ~5-10 seconds | <3 seconds | 2-3x |

| Aspect Ratio | 3 types | New 16:9 | More options |

💡 Recommendation: If you are currently using DALL-E 3 or GPT Image 1, we suggest migrating to GPT Image 1.5 as soon as possible. The DALL-E series will be retired on May 12, 2026, and GPT Image 1.5 offers significant improvements in both quality and speed. You can switch between versions via the APIYI (apiyi.com) platform.

Current API Pricing for GPT Image 1.5 (For Reference)

While waiting for the official release of GPT Image 2, understanding the current pricing for GPT Image 1.5 helps in gauging future trends.

Billing by Image

| Quality | 1024×1024 | 1024×1536 / 1536×1024 |

|---|---|---|

| Low | $0.009 | $0.013 |

| Medium | $0.034 | $0.050 |

| High | $0.133 | $0.200 |
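As a rough budgeting aid, the per-image prices above can be turned into a batch estimate. This is a minimal sketch: the prices are copied from the table, while the function name `batch_cost` and the dictionary layout are choices made for this example.

```python
# Per-image prices in USD, copied from the table above.
PRICE_PER_IMAGE = {
    "low":    {"1024x1024": 0.009, "1024x1536": 0.013, "1536x1024": 0.013},
    "medium": {"1024x1024": 0.034, "1024x1536": 0.050, "1536x1024": 0.050},
    "high":   {"1024x1024": 0.133, "1024x1536": 0.200, "1536x1024": 0.200},
}


def batch_cost(quality: str, size: str, count: int) -> float:
    """Estimated USD cost of generating `count` images at one quality and size."""
    if count < 0:
        raise ValueError("count must be non-negative")
    try:
        unit_price = PRICE_PER_IMAGE[quality][size]
    except KeyError:
        raise ValueError(f"no listed price for quality={quality!r}, size={size!r}") from None
    # Round to 4 decimals so sub-cent unit prices are not lost.
    return round(unit_price * count, 4)
```

For example, `batch_cost("low", "1024x1024", 1000)` gives the cost of a thousand low-quality square images, and swapping `"low"` for `"high"` shows how quickly the tier choice dominates the bill.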

Billing by Token

| Token Type | Price |

|---|---|

| Image Input | $8.00/M tokens |

| Image Input (Cached) | $2.00/M tokens |

| Image Output | $32.00/M tokens |

| Text Input | $5.00/M tokens |

| Text Output | $10.00/M tokens |
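Token billing works out to a sum over token types. The sketch below applies the rates from the table to a usage dictionary. The key names and the example token counts are hypothetical, chosen only to illustrate the arithmetic, and are not taken from any API response format.

```python
# USD per one million tokens, copied from the table above.
PRICE_PER_MILLION = {
    "image_input": 8.00,
    "image_input_cached": 2.00,
    "image_output": 32.00,
    "text_input": 5.00,
    "text_output": 10.00,
}


def token_cost(usage: dict[str, int]) -> float:
    """Sum the USD cost of a request from its token count per token type."""
    total = 0.0
    for token_type, tokens in usage.items():
        if token_type not in PRICE_PER_MILLION:
            raise ValueError(f"unknown token type: {token_type!r}")
        total += tokens / 1_000_000 * PRICE_PER_MILLION[token_type]
    return round(total, 6)


# Hypothetical usage figures for one request.
example_usage = {"text_input": 200, "image_output": 4000}
print(f"Estimated cost: ${token_cost(example_usage):.4f}")
```

Because image output tokens are priced well above text tokens, the output image usually accounts for most of a request's cost under this scheme.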

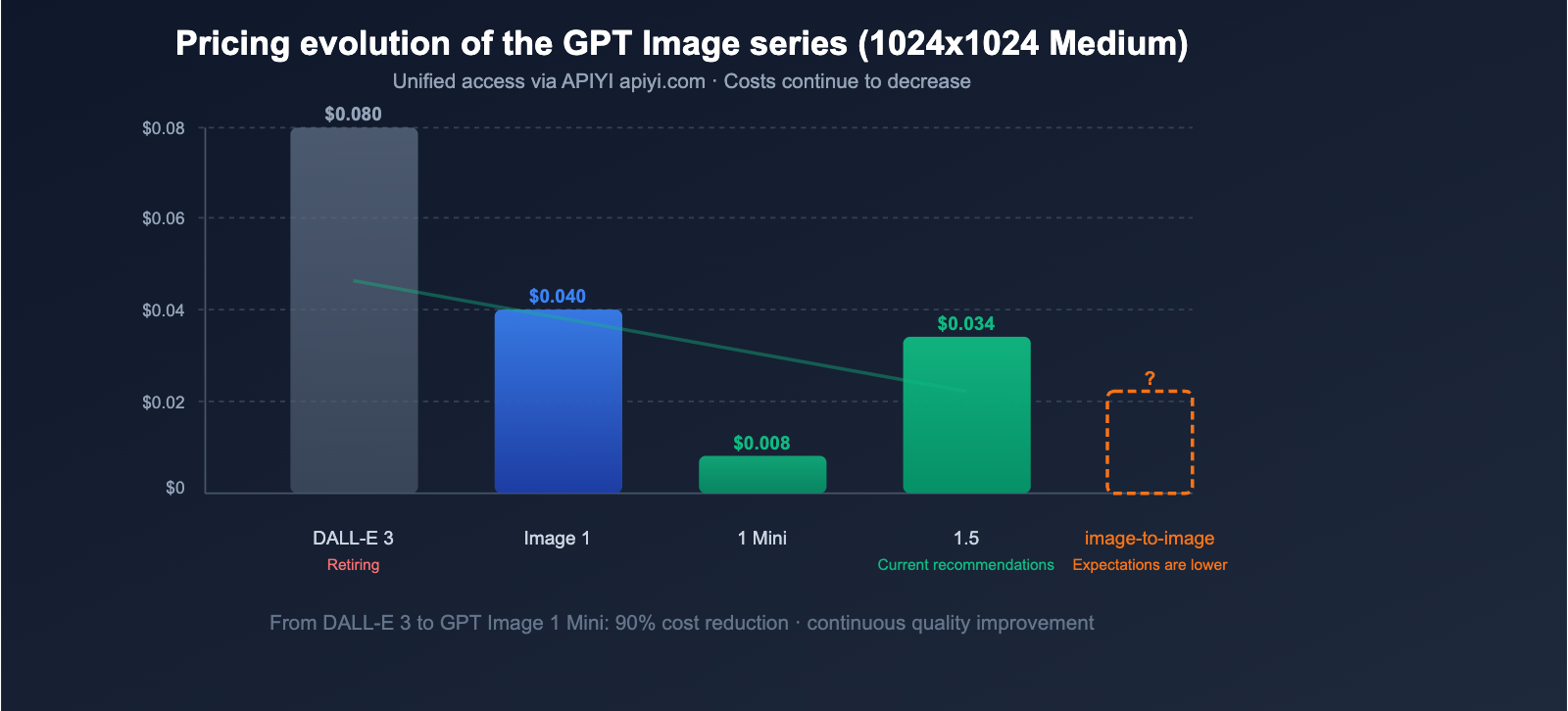

Pricing Trend Analysis

From DALL-E 3 to GPT Image 1.5, OpenAI's image generation costs have been on a steady downward trend:

| Model | 1024×1024 (Standard) | Relative Cost |

|---|---|---|

| DALL-E 3 | $0.040-$0.080 | Baseline |

| GPT Image 1 | ~$0.040 (Medium) | On par, significantly better quality |

| GPT Image 1 Mini | ~$0.008 | 80% Reduction |

| GPT Image 1.5 | $0.034 (Medium) | Lower price + 4x speed |

GPT Image 2 is expected to continue this trend, potentially introducing a new "turbo" pricing tier.

💰 Cost Optimization: Currently, GPT Image 1.5 Low quality is only $0.009 per image, making bulk generation extremely cost-effective. You can flexibly manage your strategy for different quality tiers by using the APIYI (apiyi.com) platform.

Quick Start Guide to the GPT Image API

While we wait for the release of GPT Image 2, developers can start building applications using GPT Image 1.5 today. If GPT Image 2 keeps the same API, as expected, migrating later should be as simple as updating the model name.

Text-to-Image Invocation Example

```python
import openai

client = openai.OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://api.apiyi.com/v1",  # Unified interface via APIYI
)

# Generate an image
result = client.images.generate(
    model="gpt-image-1.5",
    prompt="A Shiba Inu wearing a spacesuit standing on the lunar surface, with the blue Earth in the background, realistic style",
    size="1536x1024",
    quality="high",
    n=1,
)

# Get image data (base64-encoded string)
image_base64 = result.data[0].b64_json
```
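The example above leaves the image as a base64 string. A small helper along these lines writes it to disk. The name `save_b64_image` and the output filename are illustrative, not part of the SDK.

```python
import base64
from pathlib import Path


def save_b64_image(b64_data: str, path: str) -> int:
    """Decode a base64 string, write the bytes to `path`, and return the byte count."""
    raw = base64.b64decode(b64_data)
    Path(path).write_bytes(raw)
    return len(raw)


# Usage with the response from the example above:
# save_b64_image(result.data[0].b64_json, "shiba_moon.png")
```

Choose a file extension that matches the output format you requested, since the helper writes the decoded bytes unchanged.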

Image Editing (Inpainting) Example

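```python
# Localized image editing (reuses the `client` created in the previous example)
with open("original.png", "rb") as image_file, open("mask.png", "rb") as mask_file:
    result = client.images.edit(
        model="gpt-image-1.5",
        image=image_file,
        mask=mask_file,
        prompt="Replace the background with a beach at sunset",
        size="1024x1024",
    )
```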
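The edit call needs a `mask.png`. Below is a minimal sketch that builds one with only the standard library. It assumes the convention OpenAI documents for image edits, where fully transparent mask pixels mark the region to change and the mask matches the source image's dimensions. The function name and box format are choices made for this example.

```python
import struct
import zlib


def _chunk(kind: bytes, data: bytes) -> bytes:
    """Build one PNG chunk: length, type, data, then CRC over type + data."""
    crc = zlib.crc32(kind + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + kind + data + struct.pack(">I", crc)


def make_mask_png(width: int, height: int, box: tuple[int, int, int, int]) -> bytes:
    """Return RGBA PNG bytes: opaque black everywhere, transparent inside `box`.

    `box` is (left, top, right, bottom) in pixels; right and bottom are exclusive.
    """
    left, top, right, bottom = box
    if not (0 <= left <= right <= width and 0 <= top <= bottom <= height):
        raise ValueError("box must lie inside the image")
    opaque, clear = b"\x00\x00\x00\xff", b"\x00\x00\x00\x00"
    # Each scanline starts with filter byte 0 (no filtering).
    solid_row = b"\x00" + opaque * width
    cut_row = b"\x00" + opaque * left + clear * (right - left) + opaque * (width - right)
    rows = b"".join(cut_row if top <= y < bottom else solid_row for y in range(height))
    ihdr = struct.pack(">IIBBBBB", width, height, 8, 6, 0, 0, 0)  # 8-bit RGBA
    return (b"\x89PNG\r\n\x1a\n" + _chunk(b"IHDR", ihdr)
            + _chunk(b"IDAT", zlib.compress(rows)) + _chunk(b"IEND", b""))


# Example: mark the top half of a 1024x1024 image as the editable region.
# with open("mask.png", "wb") as f:
#     f.write(make_mask_png(1024, 1024, (0, 0, 1024, 512)))
```

In practice an image library such as Pillow is more convenient for irregular masks. The hand-rolled writer here just keeps the example dependency-free.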

Key Parameters

| Parameter | Type | Description | Options |
|---|---|---|---|
| model | string | Model ID | gpt-image-1.5 / gpt-image-1 |
| prompt | string | Text description | Natural language description |
| size | string | Output dimensions | 1024x1024 / 1536x1024 / 1024x1536 / auto |
| quality | string | Quality level | low / medium / high |
| n | int | Number of images | 1 (currently only single image supported) |
| output_format | string | Output format | png / jpeg / webp |
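One way to catch typos before a paid request is to validate options against the values in the table above. This is a sketch under that assumption: the allowed sets mirror the table only, and `build_generate_kwargs` is a hypothetical helper, not an SDK function.

```python
# Allowed values as listed in the parameter table above.
ALLOWED = {
    "model": {"gpt-image-1.5", "gpt-image-1"},
    "size": {"1024x1024", "1536x1024", "1024x1536", "auto"},
    "quality": {"low", "medium", "high"},
    "output_format": {"png", "jpeg", "webp"},
}


def build_generate_kwargs(prompt: str, **options: str) -> dict:
    """Validate options against the table and return kwargs for images.generate()."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    # Defaults: the model used in this article, one image per request.
    kwargs = {"model": "gpt-image-1.5", "prompt": prompt, "n": 1}
    for name, value in options.items():
        if name not in ALLOWED:
            raise ValueError(f"unsupported parameter: {name}")
        if value not in ALLOWED[name]:
            raise ValueError(f"{name}={value!r} is not one of {sorted(ALLOWED[name])}")
        kwargs[name] = value
    return kwargs


# Usage with the client from the earlier example:
# result = client.images.generate(**build_generate_kwargs("A red bicycle", quality="low"))
```

Keeping the allowed values in one dictionary also gives a single place to update if a later model accepts additional sizes or formats.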

All GPT Image outputs carry C2PA metadata identifying them as AI-generated, and the models support transparent backgrounds (PNG alpha).

Tips for Text Rendering in GPT Image

Text rendering is a core strength of the GPT Image series. Here are some practical tips to improve rendering accuracy:

| Tip | Description | Example |

|---|---|---|

| Quote text clearly | Wrap the desired text in quotes | "The image says 'Welcome Home'" |

| Specify font style | Describe visual characteristics | "Bold sans-serif font" |

| Specify position | Describe where the text should appear | "Title centered at the top" |

| Limit text length | Keep it under 20 characters per pass | Generate long text in multiple steps |

| Use English | English rendering is currently most reliable | GPT Image 2 will improve multilingual support |
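The tips above can be folded into a small prompt helper. The sketch below quotes the text, states font and position, and splits long text into pieces of at most 20 characters for separate passes. Both function names and the exact prompt wording are illustrative choices, not a documented prompt format.

```python
MAX_TEXT_CHARS = 20  # per-pass limit suggested in the table above


def split_text(text: str, limit: int = MAX_TEXT_CHARS) -> list[str]:
    """Split text on spaces into pieces of at most `limit` characters.

    A single word longer than `limit` is kept whole rather than broken.
    """
    pieces: list[str] = []
    current = ""
    for word in text.split():
        candidate = f"{current} {word}".strip()
        if len(candidate) <= limit or not current:
            current = candidate
        else:
            pieces.append(current)
            current = word
    if current:
        pieces.append(current)
    return pieces


def text_prompt(scene: str, text: str, font: str, position: str) -> str:
    """Compose a prompt that quotes the text and states its font and position."""
    return f"{scene}. The image says '{text}' in {font}, {position}."


print(text_prompt("A cozy front porch at dusk", "Welcome Home",
                  "bold sans-serif font", "centered at the top"))
print(split_text("Grand opening sale this weekend only"))
```

Each piece returned by `split_text` can then be rendered in its own generation or edit pass, following the "multiple steps" suggestion in the table.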

🚀 Get Started: We recommend using the APIYI (apiyi.com) platform to access the GPT Image API. It supports OpenAI-compatible interfaces and will provide immediate support for GPT Image 2 upon release.

GPT Image 2 vs. Competitors: An Outlook

The AI image generation landscape is highly competitive in 2026, and GPT Image 2 will face challenges from several directions.

Comparison of Leading Image Generation Models

| Model | Vendor | Architecture | Text Rendering | Max Resolution | Pricing Model |

|---|---|---|---|---|---|

| GPT Image 2 (Expected) | OpenAI | New standalone | 99%+ | Native 4K | Token/Image |

| GPT Image 1.5 | OpenAI | Autoregressive | ~95% | 1536×1024 | Token/Image |

| Imagen 3 | Google | Diffusion | Good | 1024×1024 | Token |

| FLUX 1.1 Pro | Black Forest | Diffusion | Excellent | 2048×2048 | Per Image |

| Ideogram 3.0 | Ideogram | Diffusion | Excellent | 2048×2048 | Per Image |

| Midjourney V7 | Midjourney | Diffusion | Improving | 2048×2048 | Subscription |

The core advantages of the GPT Image series lie in: text rendering precision, world knowledge (knowing what specific objects/brands look like), native image editing, and deep integration with the ChatGPT ecosystem.

Expected Use Cases for GPT Image 2

The capability upgrades in GPT Image 2 will unlock several previously difficult application scenarios:

| Use Case | Key Dependency | Current Feasibility | GPT Image 2 Expectation |

|---|---|---|---|

| Chinese Posters/Banners | CJK text rendering | ❌ High error rate | ✅ 99%+ precision |

| Sequential Comics/Art | Face consistency | ❌ Different every time | ✅ Consistent across images |

| 4K Commercial Printing | High resolution | ❌ Max 1536px | ✅ Native 4K |

| E-commerce Batch Gen | Speed + Quality | ⚠️ Usable | ✅ <3s + Higher quality |

| UI/UX Mockups | Precise layout | ⚠️ Limited | ✅ Region-level control |

| Multilingual Materials | Multilingual text | ❌ Poor for non-Latin | ✅ Full language support |

| Brand IP Merch | Face consistency + HD | ❌ Hard to achieve | ✅ Fully supported |

For Chinese developers and content creators, the breakthrough in CJK text rendering will be the most valuable upgrade in GPT Image 2.

Autoregressive vs. Diffusion: Fundamental Architectural Differences

The autoregressive architecture used by the GPT Image series differs fundamentally from the diffusion models used by DALL-E, Midjourney, and FLUX:

| Dimension | Diffusion Models (DALL-E/MJ/FLUX) | Autoregressive Models (GPT Image) |

|---|---|---|

| Generation Method | Gradual denoising from noise | Token-by-token, like writing text |

| Text Rendering | Weaker (doesn't understand semantics) | Extremely strong (inherits LLM ability) |

| World Knowledge | Limited (training data only) | Rich (inherits LLM knowledge) |

| Image Editing | Requires additional models | Native support |

| Prompt Understanding | Good | Excellent (LLM-level) |

| Generation Speed | Faster (parallel denoising) | Slower (sequential generation) |
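The "Generation Speed" row comes down to loop structure. The toy sketch below is not how either production model works. It uses random numbers in place of a neural network, purely to show that autoregressive generation takes one sequential step per token, while diffusion takes a fixed number of steps that each update every position at once.

```python
import random


def autoregressive_generate(n_tokens: int, vocab: int = 256,
                            seed: int = 0) -> tuple[list[int], int]:
    """Emit tokens one at a time; each new token depends on the ones before it."""
    rng = random.Random(seed)
    tokens: list[int] = []
    for _ in range(n_tokens):                  # one sequential step per token
        context = sum(tokens)                  # toy stand-in for reading the prefix
        tokens.append((context + rng.randrange(vocab)) % vocab)
    return tokens, n_tokens                    # sequential steps grow with output size


def diffusion_generate(n_values: int, steps: int = 20,
                       seed: int = 0) -> tuple[list[float], int]:
    """Start from noise and refine all positions together over a fixed step count."""
    rng = random.Random(seed)
    target = [0.5] * n_values                  # toy stand-in for the clean image
    image = [rng.random() for _ in range(n_values)]
    for _ in range(steps):                     # each step touches every position
        image = [x + 0.3 * (t - x) for x, t in zip(image, target)]
    return image, steps                        # sequential steps independent of size


_, ar_steps = autoregressive_generate(4096)
_, diff_steps = diffusion_generate(4096)
print(f"autoregressive: {ar_steps} sequential steps, diffusion: {diff_steps}")
```

Real systems narrow this gap with caching and parallel decoding, so the step counts here illustrate the trend rather than measured latency.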

💡 Technical Insight: The "new standalone architecture" of GPT Image 2 may be a hybrid of autoregressive and diffusion methods, combining the strengths of both. Through the APIYI (apiyi.com) platform, you can invoke both GPT Image and diffusion models like FLUX to directly compare the real-world results of these two architectures.

DALL-E Migration Guide: Must Complete by May 12

DALL-E 2 and DALL-E 3 will be officially retired on May 12, 2026. All developers must complete their migration before this deadline.

Migration Path

| Current Model | Recommended Migration | Difficulty |

|---|---|---|

| DALL-E 2 | GPT Image 1.5 | Low (API compatible) |

| DALL-E 3 | GPT Image 1.5 | Low (Model name swap) |

| GPT Image 1 | GPT Image 1.5 | Very Low (Direct replacement) |

Migration Notes

- API Compatibility: The GPT Image series uses the same /v1/images/generations endpoint; you only need to update the model parameter.

- Parameter Differences: GPT Image 1.5 accepts quality values of low/medium/high, whereas DALL-E 3 uses standard/hd.

- Billing Changes: Billing shifts from DALL-E's per-image model to a dual-billing structure (per-token + per-image) for GPT Image.

- Output Formats: GPT Image adds support for WebP format and transparent backgrounds.
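The notes above can be sketched as a small request rewriter. The quality and size pairings are assumptions made for illustration: the article only says the value sets differ, and the size mapping simply picks the GPT Image size with the same orientation as each DALL-E 3 size. Check both against the official documentation before relying on them.

```python
# Hypothetical pairings, not an official mapping.
QUALITY_MAP = {"standard": "medium", "hd": "high"}
SIZE_MAP = {"1792x1024": "1536x1024", "1024x1792": "1024x1536"}


def migrate_request(dalle_kwargs: dict, target_model: str = "gpt-image-1.5") -> dict:
    """Return a copy of DALL-E request kwargs rewritten for a GPT Image model."""
    migrated = dict(dalle_kwargs)          # leave the caller's dict untouched
    migrated["model"] = target_model
    quality = migrated.get("quality")
    if quality in QUALITY_MAP:
        migrated["quality"] = QUALITY_MAP[quality]
    size = migrated.get("size")
    if size in SIZE_MAP:
        migrated["size"] = SIZE_MAP[size]
    return migrated


old_request = {"model": "dall-e-3", "prompt": "A lighthouse at dawn",
               "quality": "hd", "size": "1792x1024"}
print(migrate_request(old_request))
```

Values that already fit the GPT Image options, such as a 1024x1024 size, pass through unchanged, so the same function can wrap existing call sites during a gradual rollout.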

🎯 Migration Tip: Use the APIYI (apiyi.com) platform to test your migration. You can compare the output differences between DALL-E and GPT Image without affecting your production environment. The platform supports a unified interface for multiple models, making the switch incredibly easy.

FAQ

Q1: When will GPT Image 2 be officially released?

There is no official release date yet. Based on the Arena beta testing progress and historical release patterns, it is expected to arrive in mid-to-late 2026. Given the roughly 9-month gap between GPT Image 1 and 1.5, we estimate a summer release. Once it is released, the APIYI (apiyi.com) platform will provide immediate support.

Q2: Should I wait for GPT Image 2 or use GPT Image 1.5 now?

We recommend using GPT Image 1.5 immediately. It is currently the most powerful image generation model from OpenAI, with Low quality costing only $0.009 per image. If GPT Image 2 keeps the same API, as expected, migrating later should only require a model name swap. Waiting will only cause you to miss the migration window before DALL-E retires.

Q3: What does the new architecture of GPT Image 2 mean?

GPT Image 1/1.5 is based on the image generation capabilities of the GPT-4o multimodal model. GPT Image 2 is reportedly a brand-new, independent architecture that no longer relies on GPT-4o. This could mean more focused image generation optimizations, higher resolution limits, and lower inference costs. You can use the APIYI (apiyi.com) platform to quickly compare the actual differences between the old and new architectures once version 2 is released.

Q4: Does the GPT Image series support Chinese text rendering?

GPT Image 1.5 has limited support for Chinese text rendering and is prone to typos or garbled characters. GPT Image 2 is expected to significantly improve rendering accuracy for non-Latin scripts (including Chinese, Japanese, Korean, and Arabic), which is a major benefit for Chinese content creators.

Summary

The beta testing of GPT Image 2 marks a new era for OpenAI's image generation capabilities. The anticipated upgrades (a new independent architecture, native 4K resolution, 99%+ multilingual text rendering, face consistency, and region-level control) could redefine the boundaries of AI image generation once they go live.

Key Takeaways:

- Status: Currently in beta testing, with 3 codenames spotted in the Arena.

- Architecture: A completely new, independent architecture, not a derivative of GPT-4o.

- Anticipated Upgrades: 4K resolution / 99%+ text accuracy / face consistency / region-level control / 3-second generation time.

- Current Recommendation: GPT Image 1.5 (at a low cost of $0.009/image) remains the best choice for now.

- Urgent Action: DALL-E 2/3 will be retired on May 12, 2026; please migrate as soon as possible.

- Expected Release: Mid-to-late 2026.

We recommend using APIYI (apiyi.com) to quickly integrate the full range of GPT Image models and gain immediate API access as soon as GPT Image 2 is officially released.

References

- OpenAI Image Generation API Documentation: developers.openai.com/api/docs/guides/image-generation

- OpenAI Model List: developers.openai.com/api/docs/models

- OpenAI API Pricing: developers.openai.com/api/docs/pricing

This article was written by the APIYI technical team. For more tutorials on using Large Language Models, please visit APIYI at apiyi.com.