Author's Note: OpenAI's latest gpt-5.5-pro model is now officially available via API, featuring a 1M context window and top-tier reasoning capabilities. This article provides a comprehensive breakdown of its technical specifications, pricing structure, SVIP group restrictions, and access solutions for domestic users.

OpenAI officially released GPT-5.5 on April 23, 2026, and opened the gpt-5.5-pro advanced reasoning version via API on April 24, 2026. This is the flagship model in the GPT-5.5 series, specifically designed for complex multi-step tasks, deep reasoning, and high-value scenarios. It offers a 1,050,000 token context window and a maximum output of 128K.

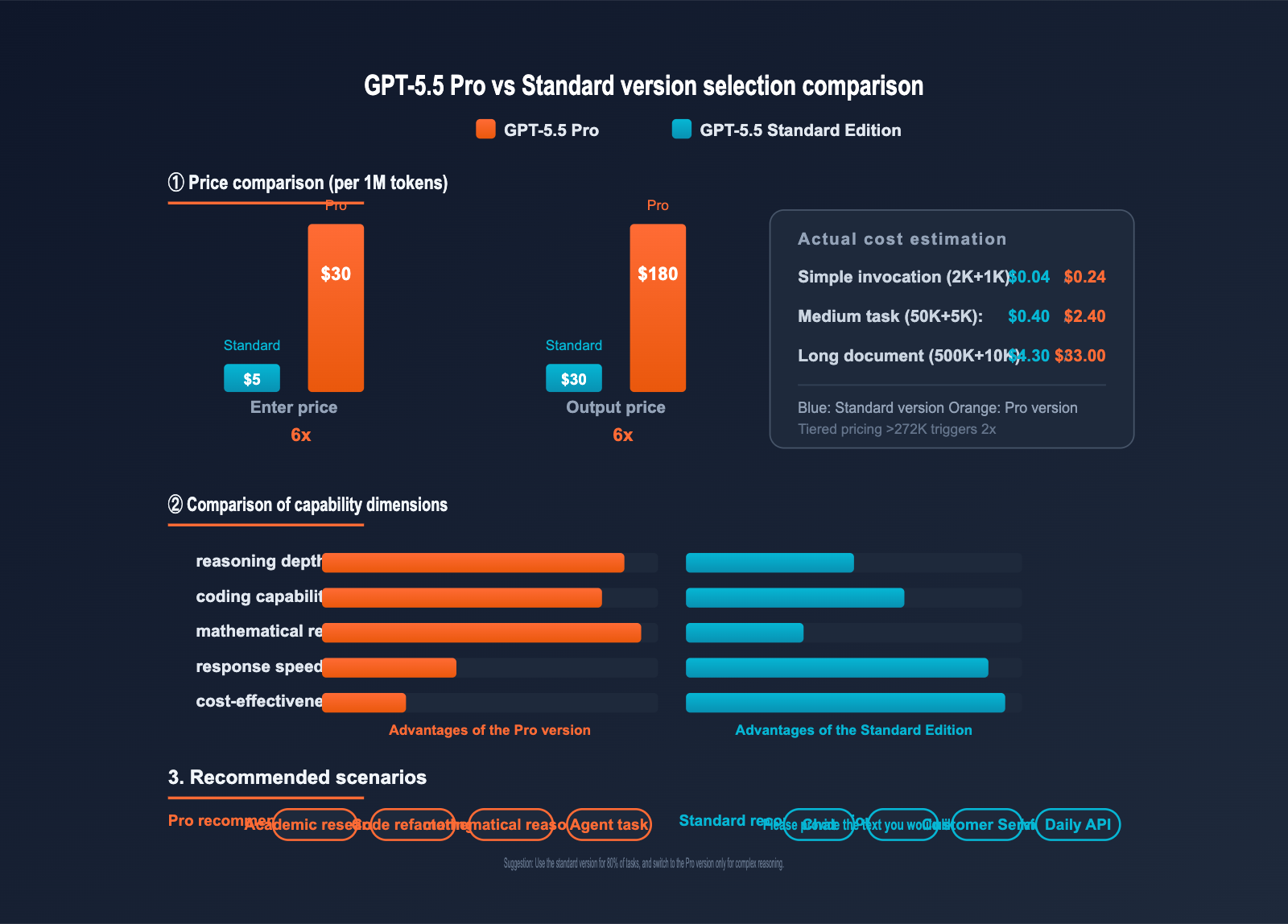

This is not just marketing hype: the official OpenAI documentation is live, and developers can call the API directly. However, at $30 / $180 per 1M tokens its pricing is 6 times that of the standard GPT-5.5, so a single call can cost several dollars, which makes cost control a real concern for domestic users.

Core Value: This article provides a complete guide to the GPT-5.5 Pro API, covering model specifications, pricing, benchmark data, and the SVIP group protection mechanism, along with a ready-to-run, minimalist code example.

Figure: GPT-5.5 Pro at a glance. Launched on 2026-04-24; official pricing $30 input / $180 output per 1M tokens; Terminal-Bench 2.0 82.7%; FrontierMath Tier 1-3 52.4%; 1.05M-token context window.

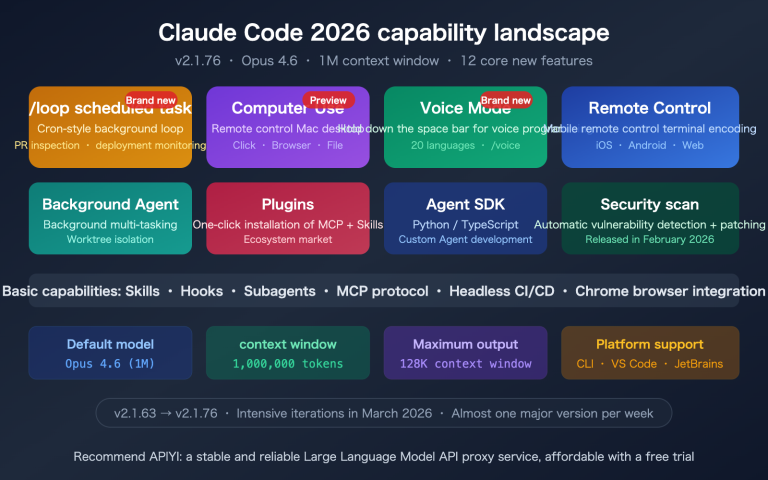

GPT-5.5 Pro API Key Highlights

| Feature | Description | Value |
|---|---|---|
| 1M+ Long Context | 1,050,000 tokens input + 128K output | Process entire books or massive codebases at once |
| Top-tier Reasoning | 52.4% on FrontierMath Tier 1-3 | Ideal for math, research, and complex decision-making |
| Agentic Coding King | 82.7% on Terminal-Bench 2.0 | Autonomously completes multi-step tasks lasting up to 20 hours |
| Multimodal Understanding | Text input/output + image input | Document parsing, UI understanding, visual reasoning |
| SVIP Group Access | Available only to APIYI SVIP groups | Prevents accidental high-cost model usage; keeps costs under control |

Key Differences: GPT-5.5 Pro vs. Standard Version

GPT-5.5 Pro is an optimized version of GPT-5.5, specifically tailored for deep reasoning and high-precision tasks. Officially described as "producing smarter and more precise responses," it trades increased compute for higher accuracy. The Pro version supports a full Reasoning Token mechanism, where the model generates internal, invisible reasoning chains before providing the final answer. This gives it a significant lead in high-difficulty benchmarks like FrontierMath and Expert-SWE.

In terms of pricing, the Pro version's input cost is 6 times that of the standard version ($30 vs $5), and so is its output cost ($180 vs $30). The cost of a single complex reasoning call can therefore jump from a few cents to several dollars, so deciding when to use the Pro version is the key to managing your API costs.

Figure: GPT-5.5 Pro capability matrix (gpt-5.5-pro, reasoning model). Core capabilities: Reasoning Token, Web Search, File Search, Code Interpreter, MCP integration. Key parameters: 1.05M-token context, 128K-token max output, knowledge cutoff Dec 2025. Benchmark scores: Terminal-Bench 2.0 82.7%, Expert-SWE 73.1%, GDPval (44 occupations) 84.9%, OSWorld-Verified 78.7%. Data source: OpenAI official GPT-5.5 release report (2026-04).

GPT-5.5 Pro API Quick Start

Minimal Python Example

Here is the simplest way to call GPT-5.5 Pro. It's fully compatible with the OpenAI SDK:

```python
import openai

client = openai.OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1"
)

response = client.chat.completions.create(
    model="gpt-5.5-pro",
    messages=[
        {"role": "user", "content": "Prove the equivalent proposition of the Riemann hypothesis: the real part of all non-trivial zeros is 1/2."}
    ]
)

print(response.choices[0].message.content)
```

Minimal cURL Example

```bash
curl https://vip.apiyi.com/v1/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer YOUR_API_KEY" \
  -d '{
    "model": "gpt-5.5-pro",
    "messages": [
      {"role": "user", "content": "Analyze this 200K token codebase and refactor"}
    ]
  }'
```

Full production-ready example (includes error handling and cost estimation):

```python
import openai
from typing import Dict, List

# Pro version pricing (USD per 1M tokens); the tier is selected by input size
PRICE_INPUT_BASE = 30.0    # input price when input <= 272K tokens
PRICE_OUTPUT_BASE = 180.0  # output price when input <= 272K tokens
PRICE_INPUT_HIGH = 60.0    # input price when input > 272K tokens
PRICE_OUTPUT_HIGH = 270.0  # output price when input > 272K tokens


def call_gpt55_pro(
    messages: List[Dict],
    api_key: str,
    max_tokens: int = 4096,
    estimate_cost: bool = True
) -> Dict:
    """
    Production-ready call for GPT-5.5 Pro, including cost estimation and error handling.

    Args:
        messages: List of messages
        api_key: API key
        max_tokens: Maximum output tokens
        estimate_cost: Whether to print cost estimation

    Returns:
        Dictionary containing response content and actual cost,
        or a dictionary with an "error" key if the call fails
    """
    client = openai.OpenAI(
        api_key=api_key,
        base_url="https://vip.apiyi.com/v1"
    )

    try:
        response = client.chat.completions.create(
            model="gpt-5.5-pro",
            messages=messages,
            max_tokens=max_tokens
        )

        usage = response.usage
        input_tokens = usage.prompt_tokens
        output_tokens = usage.completion_tokens

        # Tiered pricing calculation: one tier applies to the whole request
        if input_tokens <= 272_000:
            input_cost = input_tokens / 1_000_000 * PRICE_INPUT_BASE
            output_cost = output_tokens / 1_000_000 * PRICE_OUTPUT_BASE
        else:
            input_cost = input_tokens / 1_000_000 * PRICE_INPUT_HIGH
            output_cost = output_tokens / 1_000_000 * PRICE_OUTPUT_HIGH

        total_cost = input_cost + output_cost

        if estimate_cost:
            print(f"📊 Input tokens: {input_tokens:,}")
            print(f"📊 Output tokens: {output_tokens:,}")
            print(f"💰 Call cost: ${total_cost:.4f}")

        return {
            "content": response.choices[0].message.content,
            "input_tokens": input_tokens,
            "output_tokens": output_tokens,
            "cost_usd": total_cost
        }

    # RateLimitError is a subclass of APIError, so it must be caught first
    except openai.RateLimitError:
        return {"error": "Rate limit exceeded, please check your SVIP group quota."}
    except openai.APIError as e:
        return {"error": f"API error: {str(e)}"}


# Usage example
result = call_gpt55_pro(
    messages=[
        {"role": "system", "content": "You are a mathematics expert."},
        {"role": "user", "content": "Derive the partial differential form of the Black-Scholes equation."}
    ],
    api_key="YOUR_API_KEY"
)

# On failure the function returns an "error" key instead of "content"
if "error" in result:
    print(result["error"])
else:
    print(result["content"])
```

🎯 Quick Start Tip: GPT-5.5 Pro calls can be expensive. We recommend obtaining SVIP group permissions via the APIYI (apiyi.com) platform before you start. The platform is fully compatible with the OpenAI SDK, offers a 10% bonus on $100+ recharges ($100 buys $110 of credit, effectively about 9% off the official price), and provides direct domestic access without needing a VPN.

GPT-5.5 Pro API Pricing Explained

Official Tiered Pricing Structure

OpenAI uses a long-context tiered pricing mechanism for the GPT-5.5 series: prices adjust automatically when the input tokens in a single request exceed 272K.

| Input Range | Prompt Price (per 1M tokens) | Completion Price (per 1M tokens) |
|---|---|---|
| 0 – 272K tokens | $30.00 | $180.00 |
| > 272K tokens | $60.00 (2x) | $270.00 (1.5x) |

⚠️ Important Note: Tiered pricing is calculated based on the entire request, not just the portion that exceeds the limit. This means if your input exceeds 272K, the entire request's input and output will be billed at the higher rate. We recommend evaluating chunking strategies for long document analysis.
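The whole-request billing rule above can be sketched in a few lines of Python. This is a minimal estimate based only on the rates and the 272K threshold quoted in the table; the function name `estimate_pro_cost` is illustrative and not part of any SDK, and real invoices may differ (for example, through surcharges not modeled here).

```python
# Rates from the pricing table above (USD per 1M tokens).
TIER_THRESHOLD = 272_000      # input tokens; above this the high tier applies
BASE_RATES = (30.0, 180.0)    # (input, output) at or below the threshold
HIGH_RATES = (60.0, 270.0)    # (input, output) above the threshold


def estimate_pro_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one gpt-5.5-pro request.

    The tier is chosen by input size alone and then applied to the
    whole request, input and output alike.
    """
    if input_tokens <= TIER_THRESHOLD:
        in_rate, out_rate = BASE_RATES
    else:
        in_rate, out_rate = HIGH_RATES
    return (input_tokens * in_rate + output_tokens * out_rate) / 1_000_000


# One extra input token moves the entire request to the high tier.
print(f"{estimate_pro_cost(272_000, 10_000):.2f}")  # 9.96
print(f"{estimate_pro_cost(272_001, 10_000):.2f}")  # 19.02
```

The two calls differ by a single input token, yet the estimated cost nearly doubles, which is why staying under the threshold matters for long documents.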

Price Comparison: Pro vs. Standard

| Model | Input Price | Output Price | Price Multiplier | Context Window |
|---|---|---|---|---|
| gpt-5.5 | $5.00 / 1M | $30.00 / 1M | 1x (Base) | 1M tokens |
| gpt-5.5-pro | $30.00 / 1M | $180.00 / 1M | 6x | 1.05M tokens |

Actual cost estimation examples:

- Simple call (2K input + 1K output): Standard $0.04 vs. Pro $0.24

- Medium task (50K input + 5K output): Standard $0.40 vs. Pro $2.40

- Long document analysis (500K input + 10K output, billed at the >272K tier): Standard $5.45 vs. Pro $32.70

💡 Cost Optimization Tip: For routine tasks that don't require deep reasoning, we recommend using the GPT-5.5 Standard version. The value of the Pro version is only realized in scenarios where you genuinely need top-tier reasoning capabilities. You can seamlessly switch between both versions on the same platform via APIYI (apiyi.com) to manage your costs effectively.

GPT-5.5 Pro API Performance Benchmark

Official Benchmark Data

OpenAI has released the performance results for the GPT-5.5 series across several authoritative benchmarks. The Pro version shows particularly significant advantages in mathematics and complex tasks:

| Benchmark | Evaluation Metric | GPT-5.5 Pro Score | Notes |
|---|---|---|---|
| Terminal-Bench 2.0 | Terminal command execution | 82.7% | Core ability to complete long tasks autonomously |
| Expert-SWE | 20-hour programming task | 73.1% | Complex codebase refactoring |
| SWE-Bench Pro | Real-world GitHub Issue | 58.6% | Production environment code fixes |
| GDPval (44 professions) | Knowledge work coverage | 84.9% | Cross-domain professional tasks |
| OSWorld-Verified | Computer operation | 78.7% | Computer Use automation |
| FrontierMath Tier 1-3 | High-difficulty math | 52.4% | Top-tier performance in math reasoning |
| FrontierMath Tier 4 | Extremely difficult math | 39.6% | Breakthrough exclusive to Pro version |

Benchmark Analysis

Terminal-Bench 2.0 at 82.7%: This indicates that GPT-5.5 Pro can autonomously complete complex, multi-step operations in the terminal, including environment configuration, dependency installation, and code debugging. For agentic coding workflows, the Pro version offers significantly higher stability than the standard version.

FrontierMath Tier 4 at 39.6%: FrontierMath Tier 4 represents the most challenging level of mathematical evaluation, featuring problems at the Fields Medal level. The Pro version is the first publicly available model to break the 35% threshold in Tier 4, marking a major advancement in AI's capability in frontier mathematical research.

GPT-5.5 Pro vs. Standard Edition Comparison

| Comparison Dimension | GPT-5.5 Pro | GPT-5.5 Standard | Recommended Choice |
|---|---|---|---|
| Mathematical Reasoning | FrontierMath 52.4% | Under 30% | Pro |
| Coding Ability | Expert-SWE 73.1% | Expert-SWE ~55% | Pro (Complex tasks) |
| General Conversation | Slower, higher cost | Fast, lower cost | Standard |
| Long Document Summary | 1.05M context | 1M context | Either |
| Customer Service/Translation | Overkill | Perfectly adequate | Standard |
| Research/Academic | Top-tier reasoning depth | Average reasoning depth | Pro |
| Agent Workflow | Terminal 82.7% | Terminal ~70% | Pro (High complexity) |
| Cost Per Request | $0.24-$32.70+ | $0.04-$5.45+ | Choose as needed |

Selection Decision Advice

Pro Edition Benchmarking: GPT-5.5 Pro maintains a lead in high-difficulty benchmarks like FrontierMath and Expert-SWE. However, its 6x price makes it unsuitable for routine tasks: the extra cost only translates into real value when a task truly requires top-tier reasoning depth (scientific research, complex code refactoring, or 20-hour-level Agent tasks).

Standard Edition Benchmarking: The GPT-5.5 Standard edition excels in cost-effectiveness and speed. However, it lacks the capability for extremely difficult tasks like FrontierMath Tier 4. For 80% of daily model invocation scenarios, the Standard edition remains the more sensible choice.

📊 Selection Advice: Start by using the GPT-5.5 Standard edition for the roughly 80% of tasks that are routine, and only switch to the Pro version for complex reasoning scenarios that the Standard edition cannot handle. On the APIYI (apiyi.com) platform, switching only requires changing the `model` parameter; no other code changes are needed.
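The "Standard first, Pro only when needed" advice can be sketched as a small escalation helper. This is an illustrative pattern rather than an official one: `ask` is a hypothetical wrapper around your own API client, and `is_good_enough` is a task-specific check that you supply (for example, running unit tests against generated code). A stand-in `fake_ask` is used here so the sketch runs without network access.

```python
from typing import Callable, Tuple

STANDARD_MODEL = "gpt-5.5"
PRO_MODEL = "gpt-5.5-pro"


def answer_with_escalation(
    prompt: str,
    ask: Callable[[str, str], str],
    is_good_enough: Callable[[str], bool],
) -> Tuple[str, str]:
    """Try the Standard model first and escalate to Pro only if needed.

    `ask(model, prompt)` is a hypothetical wrapper around your API client.
    Returns (model_used, answer).
    """
    answer = ask(STANDARD_MODEL, prompt)
    if is_good_enough(answer):
        return STANDARD_MODEL, answer
    # Only the model name changes; the rest of the request stays the same.
    return PRO_MODEL, ask(PRO_MODEL, prompt)


def fake_ask(model: str, prompt: str) -> str:
    # Stand-in for a real API call, used only to demonstrate the flow.
    return "draft" if model == STANDARD_MODEL else "verified answer"


model_used, answer = answer_with_escalation(
    "Refactor this module", fake_ask, lambda a: a != "draft"
)
print(model_used, answer)
```

Note that an escalated request pays for both calls, so this pattern pays off only when most prompts pass the check on the Standard model.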

GPT-5.5 Pro API Use Cases

The high cost of GPT-5.5 Pro means it is only suitable for specific high-value scenarios:

- Academic Research and Mathematical Reasoning: FrontierMath Tier 4 level math problems, physical derivations, and theorem proving.

- Ultra-long Codebase Refactoring: Refactoring at the architectural level with a single input of 200K+ tokens of a complete codebase.

- Deep Scientific Research: Chemical synthesis path design, complex bioinformatics analysis, and medical diagnostic reasoning.

- Computer Use Automation: Multi-step tasks requiring hours of operation, with OSWorld 78.7% stability assurance.

- Multi-step Agent Workflows: Complex tasks involving search, research, document generation, and code execution.

- High-value Decision-making Applications: Legal contract analysis, investment research reports, and business strategy deduction.

🎯 Scenario Assessment: If your task can be completed by GPT-4o-mini or the GPT-5.5 Standard edition, don't use the Pro version. The value of the Pro version is only realized in scenarios that the "Standard edition cannot solve." We recommend using the APIYI (apiyi.com) platform to test with the Standard edition first, and only upgrade to the Pro version once you've confirmed that the capabilities are insufficient.

Integration Guide for GPT-5.5 Pro on APIYI

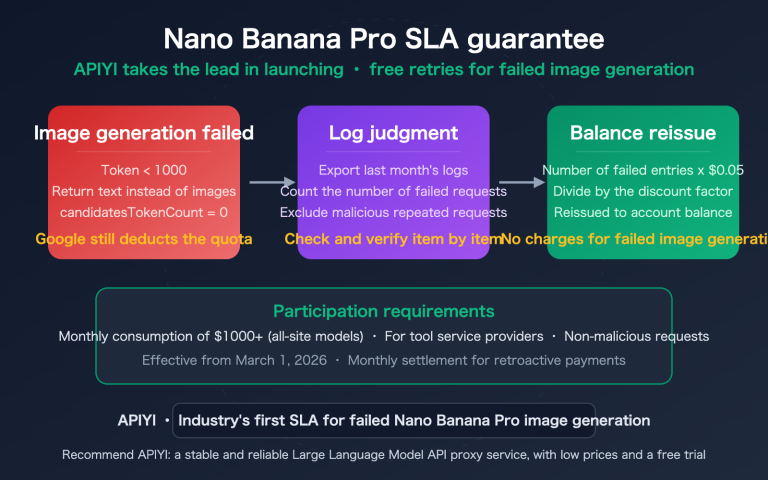

Why is access limited to the SVIP group?

Given that the cost for a single model invocation of GPT-5.5 Pro can reach several US dollars, the APIYI platform has implemented a group isolation mechanism:

- ✅ SVIP Group: Full access to `gpt-5.5-pro` model invocation.

- ❌ Default Group: Access is currently restricted to prevent accidental charges for new users.

The core reasoning behind this design is simple: many users are unfamiliar with the price difference between the Pro version and the standard version when first integrating the API. A single incorrect code execution could potentially exhaust an entire month's budget. By using SVIP group isolation, we ensure that only users who fully understand the model costs can access the Pro version.
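Teams that want the same kind of protection inside their own codebase could mirror the idea with a client-side allowlist. The sketch below is purely hypothetical: the `ALLOWED_MODELS` mapping, `check_model_access`, and `ModelNotAllowedError` are illustrative names, and this is not how APIYI implements its group isolation.

```python
# Hypothetical group-to-model allowlist. The group names mirror the article,
# but this is a client-side illustration, not APIYI's actual mechanism.
ALLOWED_MODELS = {
    "default": {"gpt-5.5"},
    "svip": {"gpt-5.5", "gpt-5.5-pro"},
}


class ModelNotAllowedError(PermissionError):
    """Raised when a group tries to call a model it has not been granted."""


def check_model_access(group: str, model: str) -> None:
    """Raise ModelNotAllowedError unless `group` may call `model`."""
    allowed = ALLOWED_MODELS.get(group, set())
    if model not in allowed:
        raise ModelNotAllowedError(
            f"group '{group}' is not allowed to call '{model}'"
        )


# Call this before building the request so a typo in the model name
# cannot silently route traffic to the expensive model.
check_model_access("svip", "gpt-5.5-pro")  # passes silently
try:
    check_model_access("default", "gpt-5.5-pro")
except ModelNotAllowedError as exc:
    print(exc)
```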

How to apply for the SVIP group

- Log in to the APIYI (apiyi.com) user dashboard.

- Contact customer support and provide a brief description of your use case.

- Once support verifies your requirements, they will grant you SVIP group access.

- Specify the `group=svip` parameter during your API invocation (or use the dedicated endpoint).

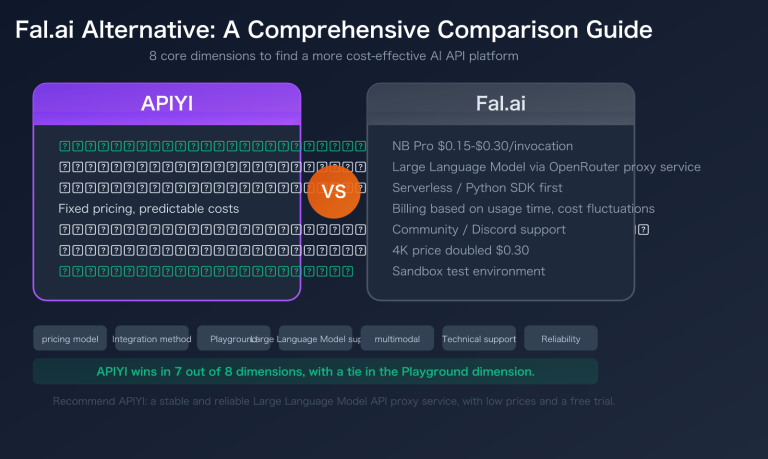

APIYI vs. Official Pricing Comparison

| Item | OpenAI Official | APIYI (apiyi.com) |
|---|---|---|
| Base Price | $30 / $180 per 1M | $30 / $180 per 1M (Same) |
| Top-up Bonus | None | Deposit $100, get $10 free (10%) |
| Effective Cost | 100% of list price | ~91% of list price (approx. 9% off) |
| Domestic Access | Requires VPN | Direct connection, no VPN needed |
| Payment Methods | International Credit Card | RMB, Alipay, WeChat Pay |
| SDK Compatibility | OpenAI Native | Fully compatible with OpenAI SDK |
| Minimum Deposit | $5 | Starting from $1 |

💰 Cost Optimization: With the APIYI (apiyi.com) 10% bonus on $100 deposits, $100 buys $110 of credit, which works out to about 9% off the official price. For teams that use GPT-5.5 Pro frequently, this adds up to a meaningful reduction in API costs over the year.

Frequently Asked Questions (FAQ)

Q1: What is GPT-5.5 Pro? What are the key differences from the GPT-5.5 standard version?

GPT-5.5 Pro is a high-level reasoning version launched by OpenAI on 2026-04-24, optimized for deep reasoning and high-precision tasks. Key difference: The Pro version costs $30/$180 per 1M tokens (6x the standard version), but it scores significantly higher on difficult tasks like FrontierMath and Expert-SWE. The Pro version supports a deeper Reasoning Token mechanism, which generates longer reasoning chains internally.

Q2: When was GPT-5.5 Pro released? Which platforms support it?

OpenAI officially released the GPT-5.5 series on 2026-04-23, and the gpt-5.5-pro model became available in the API on 2026-04-24. It can currently be accessed via the official OpenAI API, OpenRouter, and aggregation platforms like APIYI (apiyi.com). Note that APIYI only provides access via the SVIP group; the Default group does not have access.

Q3: Why does APIYI only open GPT-5.5 Pro to the SVIP group?

This is a cost-protection mechanism for APIYI. Since a single GPT-5.5 Pro invocation can cost several dollars, new users might accidentally incur high charges during initial testing. By isolating it to the SVIP group, we ensure that only users who understand the cost structure can access it, preventing budget loss for beginners. Users who need access can contact APIYI support to apply for SVIP permissions.

Q4: Which use cases are best for GPT-5.5 Pro? When should I use it instead of the standard version?

The Pro version is only recommended for high-value scenarios:

- Academic Research: FrontierMath Tier 4 level mathematics/scientific problems.

- Complex Code Refactoring: Full-scale refactoring of 200K+ token codebases.

- Multi-step Agent Tasks: Automation tasks involving hours of "Computer Use."

- Deep Decision-making: Legal contract review and investment research reports.

Rule of thumb: Test with the standard version first; if it works, don't switch to Pro. The Pro version is 6x the price of the standard version and should only be used when the standard version's capabilities are insufficient.

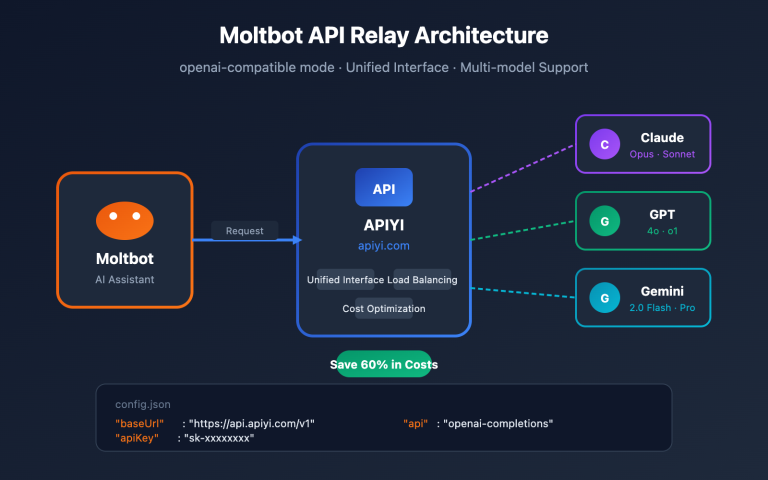

Q5: How do I call GPT-5.5 Pro via APIYI? Do I need to change the base_url?

APIYI is fully compatible with the OpenAI SDK. To call it:

- Visit APIYI (apiyi.com), register, and apply for SVIP group access.

- Obtain your API key.

- Change the `base_url` in your code to `https://vip.apiyi.com/v1`.

- Set the `model` parameter to `gpt-5.5-pro`.

```python
import openai

client = openai.OpenAI(
    api_key="YOUR_KEY",
    base_url="https://vip.apiyi.com/v1"
)

response = client.chat.completions.create(
    model="gpt-5.5-pro",
    messages=[...]
)
```

Depositing $100 gives you a 10% bonus, which is effectively about a 9% discount compared to official pricing.

Q6: How does billing change when the 1M context window exceeds 272K tokens?

The GPT-5.5 series uses tiered billing: when a single request's input exceeds 272K tokens, the input price for the entire request becomes 2x ($60/1M), and the output price becomes 1.5x ($270/1M). Note that tiered billing applies to the entire request, not just the portion that exceeds the limit. For long document analysis, we recommend a chunking strategy: split documents into requests of around 250K tokens to avoid triggering the higher tier.
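The chunking recommendation can be sketched as a greedy packer that groups paragraphs into requests of at most 250K tokens each. This is a minimal illustration under stated assumptions: `chunk_documents` and `rough_token_count` are hypothetical helpers, and the 4-characters-per-token estimate is a crude heuristic rather than the model's real tokenizer, so leave extra headroom in practice.

```python
from typing import Callable, List


def rough_token_count(text: str) -> int:
    # Crude heuristic (about 4 characters per token). This is an assumption,
    # not the model's tokenizer; swap in a real counter if you have one.
    return max(1, len(text) // 4)


def chunk_documents(
    paragraphs: List[str],
    count_tokens: Callable[[str], int] = rough_token_count,
    max_tokens: int = 250_000,
) -> List[List[str]]:
    """Greedily pack paragraphs into chunks of at most `max_tokens` each."""
    chunks: List[List[str]] = []
    current: List[str] = []
    current_tokens = 0
    for paragraph in paragraphs:
        n = count_tokens(paragraph)
        if n > max_tokens:
            raise ValueError("a single paragraph exceeds the chunk budget")
        # Close the current chunk if this paragraph would overflow it.
        if current and current_tokens + n > max_tokens:
            chunks.append(current)
            current, current_tokens = [], 0
        current.append(paragraph)
        current_tokens += n
    if current:
        chunks.append(current)
    return chunks


# Five paragraphs of ~100 tokens each, packed under a 250-token budget.
demo = chunk_documents(["a" * 400] * 5, max_tokens=250)
print([len(chunk) for chunk in demo])  # [2, 2, 1]
```

Chunking keeps each request in the lower price tier, but the model no longer sees the whole document at once, so it suits tasks where each chunk can be analyzed largely on its own.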

Q7: What are the known limitations of GPT-5.5 Pro?

Key limitations include:

- No Streaming Support: You must wait for the full response to get results, which is not ideal for real-time interactive scenarios.

- No Prompt Caching Discount: Unlike the standard version, the Pro version does not offer prompt caching discounts.

- Slower Response Time: Due to deep reasoning, a single invocation typically takes 30 seconds to several minutes.

- Input-only Image Support: Does not support image generation (must be paired with other models).

- Regional Data Residency +10% Surcharge: Using data residency endpoints incurs additional fees.

For scenarios requiring high real-time performance, we recommend using the GPT-5.5 standard version.
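Because responses arrive only once the model has finished, callers may want to bound how long they wait. The sketch below shows one generic way to do that with the Python standard library; `call_with_deadline` is a hypothetical helper, and `slow_fake_call` stands in for a real model call. If your HTTP client or SDK already offers a timeout setting, that is usually the simpler option.

```python
import concurrent.futures
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_deadline(fn: Callable[[], T], timeout_s: float) -> T:
    """Run a blocking call in a worker thread and stop waiting after timeout_s.

    The worker thread is not killed on timeout; it finishes in the
    background, so the underlying request may still complete.
    """
    executor = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    future = executor.submit(fn)
    try:
        return future.result(timeout=timeout_s)
    except concurrent.futures.TimeoutError:
        raise TimeoutError(f"no response within {timeout_s} seconds") from None
    finally:
        # Do not block on a worker that is still running.
        executor.shutdown(wait=False)


def slow_fake_call() -> str:
    # Stand-in for a long-running model call.
    time.sleep(0.2)
    return "done"


print(call_with_deadline(slow_fake_call, timeout_s=1.0))
```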

Q8: How can I precisely control costs when calling GPT-5.5 Pro?

Four key strategies for cost control:

- Tiered Scheduling: Use the standard version for routine tasks and reserve the Pro version for complex tasks only.

- Set `max_tokens` Limits: Prevent excessively long outputs; we recommend setting a reasonable limit of 1K-8K tokens depending on the task.

- Monitor Token Usage: Track input/output tokens in your production code in real-time and estimate costs.

- Avoid the 272K Tier: Process long documents in chunks to avoid triggering the 2x price tier.
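The token-monitoring strategy can be sketched as a small running tracker that accumulates estimated spend and flags when a budget is exceeded. `CostTracker` is a hypothetical helper, not part of any SDK; it assumes the base-tier Pro rates ($30 / $180 per 1M tokens), so it underestimates any request whose input exceeds 272K tokens.

```python
from dataclasses import dataclass


@dataclass
class CostTracker:
    """Accumulate estimated spend across calls (base-tier rates assumed)."""

    budget_usd: float
    input_rate: float = 30.0    # USD per 1M input tokens (base tier)
    output_rate: float = 180.0  # USD per 1M output tokens (base tier)
    spent_usd: float = 0.0

    def record(self, input_tokens: int, output_tokens: int) -> float:
        """Add one call's estimated cost to the running total and return it."""
        cost = (
            input_tokens * self.input_rate + output_tokens * self.output_rate
        ) / 1_000_000
        self.spent_usd += cost
        return cost

    def over_budget(self) -> bool:
        return self.spent_usd > self.budget_usd


# Feed in the token counts reported in each response's usage data.
tracker = CostTracker(budget_usd=1.00)
for _ in range(5):
    tracker.record(input_tokens=2_000, output_tokens=1_000)
print(f"spent ${tracker.spent_usd:.2f}, over budget: {tracker.over_budget()}")
```

In production the `over_budget()` check would typically gate further Pro calls or trigger an alert rather than just print.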

By using the APIYI (apiyi.com) platform, you can also take advantage of the 10% top-up bonus to further reduce your actual costs.

GPT-5.5 Pro API Key Takeaways

- Top-tier Reasoning Benchmark: Breaks through 39.6% on FrontierMath Tier 4, setting the current record for public models.

- 1M+ Ultra-long Context Window: Supports 1.05M tokens of input and 128K of output—you can process an entire technical book in one go.

- Agentic Powerhouse: Achieves 82.7% on Terminal-Bench 2.0, capable of autonomously handling 20-hour-long coding tasks.

- Pricing at 6x the Standard Version: $30/$180 per 1M tokens; precise scheduling is essential to manage costs.

- SVIP Group Protection: Exclusively available to APIYI SVIP members to prevent beginners from accidentally incurring high costs of several dollars per request.

- About 9% Off for Domestic Access: Recharge 100 and get 10 free via APIYI (apiyi.com), for an effective cost of roughly 91% of the official price.

- Full SDK Compatibility: Simply set the `base_url` to `https://vip.apiyi.com/v1` in your OpenAI SDK client to get started.

Summary

Here are the core takeaways for the GPT-5.5 Pro API:

- Capability Positioning: Designed specifically for deep reasoning and high-value tasks, it is currently OpenAI's most powerful model in terms of reasoning.

- Pricing Structure: $30/$180 per 1M tokens. Note that when a request's input exceeds 272K tokens, the entire request is billed at the higher tier (2x input, 1.5x output), so precise scheduling is a must.

- Access Method: Call via the APIYI (apiyi.com) SVIP group. Enjoy a "recharge 100, get 10 free" offer and stable, direct domestic access without needing a VPN.

GPT-5.5 Pro isn't your everyday model; it’s a professional-grade tool built for complex reasoning, scientific research, massive codebase refactoring, and multi-step agent tasks. For 80% of standard API calls, the GPT-5.5 standard version remains the more economical choice. Before jumping into the Pro version, we recommend evaluating whether your task truly requires top-tier reasoning capabilities.

We recommend using the APIYI (apiyi.com) platform to quickly integrate GPT-5.5 Pro, where you'll benefit from SVIP group cost protection, a 10% recharge bonus, and reliable, direct domestic connectivity.

Related Articles

If you're interested in the GPT-5.5 Pro API, we recommend checking out these resources:

- 📘 GPT-5.5 Standard API Integration Guide – Get to know the entry-level version of the GPT-5.5 series and best practices for 80% of use cases.

- 📊 GPT-5.5 Pro vs. Claude Opus 4.7: A Deep Dive – Master the capability differences and selection criteria for these two flagship reasoning models.

- 🚀 OpenAI Agent Workflow in Action: GPT-5.5 Pro + Computer Use – Explore production-grade deployments for long-running, multi-step automation tasks.

📚 References

- Official OpenAI GPT-5.5 Pro Model Documentation: Model specifications, API endpoints, and pricing information.
  - Link: developers.openai.com/api/docs/models/gpt-5.5-pro
  - Note: Get the latest and most authoritative technical parameters for GPT-5.5 Pro.

- OpenAI GPT-5.5 Series Launch Blog: Model capabilities, benchmark data, and use cases.
  - Link: openai.com/index/introducing-gpt-5-5
  - Note: Official positioning and capability overview for the GPT-5.5 series.

- APIYI GPT-5.5 Pro Integration Documentation: Domestic access solutions and SVIP group application process.
  - Link: docs.apiyi.com
  - Note: A practical integration guide tailored for developers in China.

- OpenAI Pricing Page: Complete pricing table and tiered billing rules.
  - Link: developers.openai.com/api/docs/pricing
  - Note: The latest billing standards for all models.

Author: APIYI Technical Team

Technical Discussion: Feel free to share your experience with GPT-5.5 Pro in the comments. For more information on model integration, visit the APIYI documentation center at docs.apiyi.com.