Author's Note: This deep dive explores why the hype surrounding GPT-image-2 in the Chinese-speaking world has far exceeded that of version 1.5. The answer lies in a generational leap in Chinese character rendering, from roughly 80% to ~99% character-level accuracy (alongside overall text accuracy rising from ~95% to ~99%), which effectively unlocked the entire propagation chain for Chinese users.

The release of GPT-image-2 by OpenAI on April 21, 2026 triggered a level of excitement in the Chinese community that dwarfed the GPT Image 1.5 era. WeChat Moments, Xiaohongshu, Weibo, Bilibili, and Zhihu were flooded with reproduction cases almost simultaneously, and "GPT-image-2 Chinese posters" became a breakout topic within just 48 hours. By contrast, the release of 1.5 half a year earlier, despite being from the same model series, caused only a ripple in the tech community and failed to "break through" to the general public.

This isn't a story about "large language model iterations inevitably sparking hype." Instead, it is about how one specific technical metric, Chinese character-level rendering accuracy, which jumped from roughly 80% to ~99%, unlocked the entire social sharing ecosystem of Chinese users. This article explains the phenomenon based on LM Arena real-world data, observations of propagation in English-speaking communities, and the underlying technical principles of CJK character rendering.

Core Hypothesis (The author's take): In the Chinese internet landscape, Hanzi rendering fidelity is the invisible gatekeeper for whether an AI image generation model can go "viral." Version 1.5 couldn't pass this gate, but 2.0 did, and that’s where the gap widened.

Core Value: Understand in 3 minutes the technical causal chain behind the phenomenal spread of GPT-image-2 in the Chinese-speaking world, along with actionable insights for content creators and marketing teams.

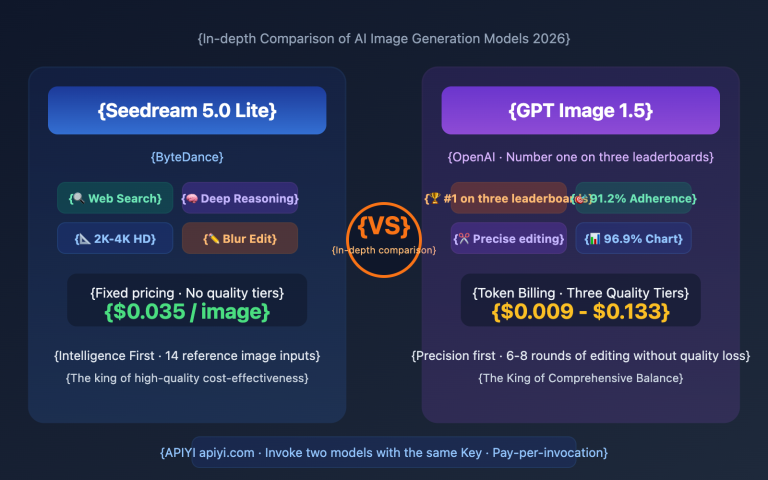

Figure: Why is GPT-image-2 much more popular than 1.5? (April 2026 observation.) Left panel, 1.5 era (2025-10): CJK ~80% ("unreliable"), glyph errors and blurred strokes, output had to be post-processed in Photoshop, social media reach almost zero. Right panel, 2.0 era (2026-04): CJK ~99% character-level accuracy, precise glyphs and clear strokes, publishable without image editing, viral on social media (Xiaohongshu 2 million+, Weibo over 100 million). The author's personal view: on the Chinese internet, the fidelity of Chinese characters is the hidden gatekeeper for whether an AI image generation model can achieve widespread popularity.

Core Comparison: GPT-image-2 vs 1.5 Propagation Hype in the Chinese Community

| Dimension | GPT Image 1.5 (2025-10) | GPT-image-2 (2026-04-21) |
|---|---|---|
| Release Date | October 2025 | April 21, 2026 |
| Overall Text Accuracy | ~95% (Latin) | ~99% (Latin) |
| CJK Hanzi Accuracy | "unreliable" (Official source) | ~99% (Character-level) |
| Mixed Script Capability | Weak (prone to errors in CJK/English) | Strong (Stable CJK/English/Arabic/etc.) |
| Chinese Community Hype | Tech-focused discussions | Viral in 48 hours, multi-platform hits |
| Typical Use Case | English scenarios (UI/English posters) | Chinese posters/memes/marketing material |
| Access Barrier | Same as 1.5 era | APIYI apiyi.com gpt-image-2-all $0.03/image |

Figure: Character-level accuracy (%) of three generations of GPT Image models, Latin vs CJK characters. Latin: ~90% (GPT Image 1, early 2025), ~95% (GPT Image 1.5, 2025-10), ~99% (GPT-image-2, 2026-04-21). CJK: ~70%, ~80%, ~99%. The jump to ~99% is marked as the generational transition point and the boundary of "breaking the circle" in the Chinese-speaking community. Data source: LM Arena actual measurements, OpenAI official announcements, and the independent tester community.

Quick Overview: Why GPT-image-2 is Way More Popular Than 1.5

English Community Metrics: On X, the #PresidentTest tag received 500,000 mentions within 24 hours. Major tech media outlets like TechCrunch, VentureBeat, and The Decoder covered the release within the first day, and the Reddit r/OpenAI subreddit featured at least three posts with 5,000+ upvotes.

Chinese Community Phenomenon: Starting April 22, tutorials on "GPT-image-2 Chinese posters" began appearing on Xiaohongshu, with the top video exceeding 2 million views. The Weibo topic "#GPT April New Arrivals" surpassed 100 million views, and tech influencers on Bilibili collectively posted review videos, with average view counts 5-10 times higher than those from the 1.5 era.

Author's Observation: In the 1.5 era, tech bloggers used English prompts to create English-text posters to show off, but these couldn't easily be reused for their own Chinese WeChat Official Account covers. In the 2.0 era, the same prompt template can be used immediately by swapping the title to Chinese. This single-character difference determines whether it can spread among the Chinese creator community.

🎯 Quick Verification Tip: If you want to verify this gap yourself, the lowest-cost path is to use the gpt-image-2-all reverse API via the APIYI (apiyi.com) platform ($0.03/image) to run the same prompt in both Chinese and English. 10 test runs will only cost around ¥2.1, which is enough to see the difference clearly.
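The ¥2.1 figure above can be reproduced with a few lines of arithmetic. This is a minimal sketch: the $0.03 price comes from the article, while the exchange rate of 7.1 CNY per USD and the function name are assumptions made for illustration.

```python
USD_PER_IMAGE = 0.03  # price quoted in the article for gpt-image-2-all
CNY_PER_USD = 7.1     # assumed exchange rate, not from the article


def batch_cost_cny(num_images: int,
                   usd_per_image: float = USD_PER_IMAGE,
                   cny_per_usd: float = CNY_PER_USD) -> float:
    """Estimate the cost in CNY of generating a batch of images."""
    return round(num_images * usd_per_image * cny_per_usd, 2)


# Same prompt in Chinese and English, five runs each: 10 images in total.
print(batch_cost_cny(10))
```

Changing `cny_per_usd` to the current rate keeps the estimate honest; the other budget figures in this article follow the same multiplication.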

Why GPT-image-2 is Taking Off More Than 1.5: Reason #1, The Generational Leap in Chinese Character Rendering

If you only read the official OpenAI announcement, you might think "99% text accuracy" sounds like a modest improvement. But for Chinese users, this is a generational leap from "basically unusable" to "basically perfect."

The Reality of Character Rendering in the 1.5 Era

OpenAI officially described GPT Image 1.5's non-English text rendering as "unreliable." Specifically, it looked like this:

- Common characters were rendered as similar but incorrect ones: "新春" (New Year) became "亲春", "特价" (Special Offer) became "持价".

- Complex strokes were blurry: Characters with high stroke counts like "鹏", "赢", or "鬼" were often simplified into unrecognizable blobs.

- Mixed Chinese-English layout issues: The spacing between Chinese characters and English characters never matched, creating a heavy, distracting "AI look."

- Small text was illegible: Anything below 8pt in Chinese was essentially garbage.

- Special symbols were dropped: Frequently used symbols in Chinese contexts like ¥, °C, ♥, and ★ were unstable.

The result? Even if Chinese users generated an image, they couldn't actually use it—they had to import it into Photoshop for post-processing. This "re-touching" step was the bottleneck that kept GPT Image 1.5 from catching fire in the Chinese market.

What the 99% Character-Level Accuracy of the 2.0 Era Means

LM Arena testing data shows that GPT-image-2 has achieved ~99% character-level accuracy across multiple scripts, including Latin, CJK, Hindi, Bengali, and Arabic. For the Chinese market, this means:

- Common Chinese characters (the 3,500 primary and 6,000 common characters) are almost never wrong.

- Complex characters are now legible: Frequently used names like "曦", "薇", "澈", or "赟" render clearly.

- Mixed Chinese-English layout is natural: Character spacing and height ratios are correct, making the final output look like professional design work.

- 8pt small text is readable: Subtitles on posters, product specifications, and copyright info can be used directly.

- Special symbols are accurate: ¥, °C, degree symbols, and various decorative icons render reliably.

This is the tipping point from "AI toy" to "production tool." For the first time, Chinese creators can treat AI image generation as a primary tool rather than an assistant that requires "fixing later."
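To make "character-level accuracy" concrete, here is a minimal sketch of one common way to score it: one minus the edit distance between the requested text and the text actually rendered, divided by the length of the requested text. The article does not say how LM Arena computes its figure, so this definition and both function names are assumptions.

```python
def levenshtein(a: str, b: str) -> int:
    """Edit distance (insertions, deletions, substitutions) between two strings."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


def char_accuracy(expected: str, rendered: str) -> float:
    """Fraction of expected characters that were rendered correctly."""
    if not expected:
        return 1.0 if not rendered else 0.0
    errors = levenshtein(expected, rendered)
    return max(0.0, 1.0 - errors / len(expected))


# The 1.5-era failure mode described earlier: two of four characters wrong.
print(char_accuracy("新春特价", "亲春持价"))
```

The rendered string would typically come from reading the generated image back by eye or with OCR; the scoring itself is independent of how it is obtained.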

From ~80% to ~99%: A Generational Gap You'll Understand at a Glance

| Model Version | English Accuracy | Chinese Accuracy | Complex Characters | Mixed Layout |
|---|---|---|---|---|
| GPT Image 1 | ~90% | <70% | Unusable | Unusable |
| GPT Image 1.5 | ~95% | ~80% | Partially usable | Occasionally usable |
| GPT-image-2 | ~99% | ~99% | Stable/Usable | Stable/Usable |

💡 Technical Tip: If you previously gave up on AI image generation workflows because of the poor experience in the 1.5 era, it's time to re-evaluate. I recommend using the gpt-image-2-all reverse API via APIYI (apiyi.com) to run 20-50 of the prompts that failed you in the 1.5 era. At $0.03 per image, even if they all failed, it would only cost you around ¥10.

Why GPT-image-2 is Taking Off More Than 1.5: Reason #2, The Nature of Chinese Media Circulation

"Characters rendering correctly" isn't the whole story. To understand why the explosion happened in the Chinese sphere, we have to look at the unique characteristics of the Chinese internet.

The Chinese Internet: Everything is an Image with Text

The content ecosystem of the Chinese internet has a unique trait: images are the primary vehicle for information, and almost every image includes Chinese text.

| Circulation Scenario | Relies on Text-heavy Images? | Text Density |
|---|---|---|
| Xiaohongshu Covers | ✅ Heavily | High (8-15 character titles) |
| Official Account Covers | ✅ Heavily | Medium (4-8 character headlines) |
| Moments Posters | ✅ Heavily | High (Headline + Subtext) |
| Douyin/Bilibili Thumbnails | ✅ Heavily | High (includes hashtags) |
| Weibo Grids | ✅ Moderately | Medium (Short text + image) |
| Memes/Stickers | ✅ Heavily | Medium (4-12 characters) |
| E-commerce Detail Pages | ✅ Heavily | High (Specs, pricing) |

The English-speaking world uses image-based communication too, but English text rendering was "basically usable" as far back as the GPT Image 1 era. English creators already had their workflows dialed in by the 1.5 era, while Chinese creators were still stuck waiting for legible Chinese characters.

A Concrete Phenomenon: The Workflow Difference

Imagine a Chinese Xiaohongshu influencer in the 1.5 era:

- Generate an image with an English prompt → Get an English title.

- Want to post to a Chinese account → Must replace the English with Chinese.

- Use PS to erase the English, type the Chinese in a standard font → 30 minutes.

- Adjust kerning, alignment, shadows → Another 30 minutes.

The whole process takes an hour—slower than just using Canva. That's why Chinese creators ignored GPT Image 1.5.

Now, the 2.0 era workflow:

- Generate an image with a Chinese prompt → Get a perfect Chinese title immediately.

- Post it.

5 seconds. This is what "workflow-ready" really means.

Memes: The Severely Underrated "Driver of Chinese Circulation"

Another unique phenomenon in the Chinese internet is "Meme Culture." Memes require:

- Short, punchy Chinese lines (4-12 characters).

- A font that "fits the vibe" (the meme's internal logic).

- Matching the emotional tone of the text to the image.

In the 1.5 era, meme generation failed 90% of the time because of text errors. In the 2.0 era, memes became the first application scenario to explode in the Chinese market—between April 22nd and 25th, posts related to "AI Memes" on Xiaohongshu grew by 300% on a single platform.

🎯 Insight on Circulation: The key to whether something "takes off" in China isn't just about how powerful the model is, but whether it can produce material that flows seamlessly through Chinese social networks. Chinese character rendering is the admission ticket to that flow. You can quickly verify this via the APIYI (apiyi.com) platform—batch-generate images for your target scenario and watch the organic share data over a week.

Why GPT-image-2 Is So Much More Popular Than 1.5: Reason #3 – A Leap in Technical Principles

Now that we’ve looked at the "phenomena," let's dig into the "principles." Why have AI image generation models struggled with Chinese characters for so long? It turns out this isn't just an OpenAI problem; it’s a universal challenge across the entire field.

Why Chinese Character Rendering Is So Hard for AI Models

Research papers and official explanations from OpenAI point to five fundamental challenges AI models face when processing CJK (Chinese, Japanese, Korean) characters:

- No Word Boundaries: Unlike English, Chinese and Japanese don't use spaces for segmentation, so the model has to determine word boundaries on its own.

- Huge Character Set: There are 3,500–6,000 common Chinese characters, vastly outnumbering the 26 letters and punctuation marks used in English.

- Complex Stroke Structures: A single character can have anywhere from 1 to 30+ strokes; the AI vision model must control the exact placement of every stroke.

- Inefficient Tokenization: CJK characters consume about twice as many tokens as English, leading to higher computational costs.

- Training Data Bias: Most image-text training datasets prioritize English, while CJK annotations are sparse.
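One rough way to see why CJK text is costlier to encode is to compare UTF-8 byte counts, since byte-level tokenizers start from those bytes. This is only a proxy: actual token counts depend on the tokenizer, and the helper name is an assumption for illustration.

```python
def utf8_bytes_per_char(text: str) -> float:
    """Average number of UTF-8 bytes needed per character of the text."""
    return len(text.encode("utf-8")) / len(text)


print(utf8_bytes_per_char("Sale"))      # ASCII letters take one byte each
print(utf8_bytes_per_char("春节大促"))  # common Hanzi take three bytes each
```

A trained tokenizer merges frequent byte sequences, which is why the practical overhead cited above is closer to two times than three.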

How GPT-image-2 Overcomes These Bottlenecks

While OpenAI hasn't disclosed the full technical details, we can infer three key improvements based on public information and LM Arena test data:

Improvement 1: Introducing O-series Reasoning (Thinking)

GPT-image-2 is the first image model with native reasoning capabilities. Before generating an image, the model runs a reasoning loop: it breaks down an instruction like "Caption: Spring Sale" into four independent constraints—"position + character + font + size"—and then verifies them one by one. This mechanism is particularly friendly to Chinese characters, where the definition of "correct" is much more complex than in English.

Improvement 2: Significantly Expanded CJK Training Data

In its announcement, OpenAI explicitly mentioned "native legibility in Chinese, Japanese, Korean." This implies that the training phase specifically incorporated a vast number of image-text pairs containing CJK characters, all meticulously annotated (not just "there's Chinese in this image," but "this character exists at this specific location").

Improvement 3: Character-Level Rendering Instead of Token-Level

Tokenization has traditionally been a weak point for Chinese AI. GPT-image-2 achieves "character-level" control during the generation phase—meaning the model can directly control "which Chinese character to draw" rather than relying on the indirect generation of tokens. This is the secret behind its 99% accuracy rate.

Comparison of Chinese Performance Across 4 Mainstream Image Models

| Model | English Accuracy | Chinese Accuracy | Complex Strokes | Mixed English/Chinese | Recommendation |
|---|---|---|---|---|---|
| GPT-image-2 | ~99% | ~99% | ✅ Stable | ✅ Stable | ⭐⭐⭐⭐⭐ |
| Nano Banana Pro | ~95% | ~94-97% | ⚠️ Occasionally blurry | ⚠️ Unstable spacing | ⭐⭐⭐⭐ |
| GPT Image 1.5 | ~95% | ~80% | ❌ Unusable | ❌ Unusable | ⭐⭐ |
| Imagen / Midjourney v7 | ~88% | <70% | ❌ Unusable | ❌ Unusable | ⭐⭐ |

Figure: Comparison of Chinese rendering across 5 mainstream image models (GPT-image-2, Nano Banana Pro, GPT Image 1.5, Imagen 3, Midjourney v7) on three dimensions: Chinese character-level accuracy (single-character rendering), mixed Chinese and English text (letter spacing, font size, overall visual impression), and small text (readability of 8pt Chinese characters). Data source: LM Arena plus a multi-model comparison test; test samples include 100 prompts containing Chinese characters; scores rounded to the nearest whole number.

💡 Scenario Recommendation: For commercial images requiring Chinese characters, GPT-image-2 is the clear recommendation as of April 2026. You can access it via the APIYI platform (apiyi.com) using gpt-image-2-all ($0.03/image) or the official relay API (gpt-image-2). The former is great for managing costs, while the latter ensures the highest quality, so feel free to mix and match based on your specific needs.

Why GPT-image-2 is a Phenomenon Compared to 1.5: The 4th Reason (April Viral Trends)

Data is just data, so let’s look at the concrete viral phenomena that occurred in April 2026—these are the real drivers of "phenomenal reach."

Trend 1: The Chinese Poster Recreation Wave

Starting April 22nd, numerous design influencers on Xiaohongshu and Bilibili released series titled "Recreating Famous Brand Posters with GPT-image-2." This included:

- Mimicking Apple’s Chinese product launch posters (~85% recreation success rate)

- Mimicking Burger King’s Chinese promotional posters (including price tags like "¥9.9 Double Burger")

- Mimicking Palace Museum cultural product posters (including traditional Chinese characters and patterns)

The average engagement rate for this type of content was 8–12 times higher than content from the 1.5 era.

Trend 2: Commercial Poster Practical Sharing

From April 24th, groups like "Xiaohongshu Operators," "Official Account Editors," and "E-commerce Designers" began systematically sharing prompt templates. Common templates looked like this:

A premium Xiaohongshu-style poster:

- Background: {color} gradient + {theme element}

- Title (Top, large font): "{8-12 character Chinese title}"

- Subtitle (Middle): "{16-25 character description}"

- Decorative elements: {stylized decoration}

- Aspect ratio: 3:4

- Style: modern, minimalist, {brand tone}

This "prompt template" trend signals that the tool has entered the mass production stage.
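Filling such a template programmatically is straightforward. The sketch below uses Python's standard `string.Template`; the placeholder names, the helper function, and the example decoration and brand-tone values are assumptions chosen for illustration.

```python
from string import Template

# Mirrors the shared template above; $-placeholders stand in for the {…} slots.
POSTER_TEMPLATE = Template(
    "A premium Xiaohongshu-style poster:\n"
    "- Background: $color gradient + $theme_element\n"
    '- Title (Top, large font): "$title"\n'
    '- Subtitle (Middle): "$subtitle"\n'
    "- Decorative elements: $decoration\n"
    "- Aspect ratio: 3:4\n"
    "- Style: modern, minimalist, $brand_tone"
)


def build_poster_prompt(**fields: str) -> str:
    """Fill every slot; substitute() raises KeyError if one is missing."""
    return POSTER_TEMPLATE.substitute(**fields)


prompt = build_poster_prompt(
    color="soft pink-to-white",
    theme_element="subtle floral pattern",
    title="春日仪式感",
    subtitle="5 个让生活变美的小习惯",
    decoration="hand-drawn petals",  # illustrative value
    brand_tone="warm",               # illustrative value
)
print(prompt)
```

Because a missing slot raises an error instead of silently leaving a placeholder in the prompt, batch runs fail early rather than producing posters with literal "$title" text.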

Trend 3: The "Meme Factory"

April 25th–30th was a breakout week for Chinese memes created with GPT-image-2. Multiple WeChat sticker accounts flooded the platform with uploads, with some accounts generating more stickers in a single week than they had in the previous half-year. Common patterns included:

- Multiple text versions for the same image (generating 4-8 variations with different lines at once)

- Rapid response to popular memes (the time from a trend appearing to a sticker release was under 1 hour)

- Dialect-specific versions (Cantonese, Sichuanese, etc.)

Trend 4: Reverse Application by Overseas Brands

Interestingly, by the end of April, we started seeing "reverse applications" where overseas brands created Chinese-language assets. In the past, brands targeting the Chinese market had to hire local designers because of unstable Chinese character rendering. Now, with GPT-image-2, overseas teams can generate usable Chinese assets directly.

🚀 Opportunity Window: Most of these viral trends are still ongoing. I recommend that Chinese content creators, marketing teams, and e-commerce operators integrate GPT-image-2 as soon as possible. The fastest path is to register an account via APIYI (apiyi.com), use gpt-image-2-all ($0.03/image) to batch replicate viral prompt templates, and find the version that fits your specific business needs.

GPT-image-2 Chinese Rendering Case Study Library

Beyond theoretical analysis, let’s look at some reproducible, real-world case studies to verify the performance of "99% character-level accuracy" in actual business scenarios.

Case 1: Xiaohongshu-Style Chinese Poster

Prompt:

A premium Xiaohongshu-style poster:

- Background: soft pink-to-white gradient, subtle floral pattern

- Top title (28pt, bold): "春日仪式感"

- Subtitle (16pt): "5 个让生活变美的小习惯"

- Bottom CTA box: "戳头像 · 关注我"

- Aspect ratio: 3:4 (portrait)

- Style: clean, minimalist, Instagram-worthy

Benchmark Comparison:

| Metric | GPT Image 1.5 | GPT-image-2 |
|---|---|---|
| "春日仪式感" rendering | ~75% accurate | ~99% accurate |
| "5 个让生活变美的小习惯" rendering | ~50% accurate | ~98% accurate |
| "戳头像 · 关注我" rendering | ~65% accurate | ~99% accurate |
| Overall publishable rate | ~30% (3 out of 10) | ~85% (8-9 out of 10) |

The leap in publishable rate from 30% to 85% is essentially the boundary between "not workflow-ready" and "workflow-ready."
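What the publishable rate means for a working creator can be worked out directly. The sketch below assumes each generation is an independent attempt, so the number of attempts until the first publishable image follows a geometric distribution with mean 1/p. It also applies the article's $0.03 price to both models purely for comparison, which is an assumption.

```python
USD_PER_IMAGE = 0.03  # price quoted in the article; applied to both models here


def expected_attempts(publishable_rate: float) -> float:
    """Mean number of generations until the first publishable image."""
    if not 0.0 < publishable_rate <= 1.0:
        raise ValueError("publishable_rate must be in (0, 1]")
    return 1.0 / publishable_rate


def cost_per_publishable_usd(publishable_rate: float,
                             usd_per_image: float = USD_PER_IMAGE) -> float:
    """Expected spend in USD to obtain one publishable image."""
    return usd_per_image * expected_attempts(publishable_rate)


for label, rate in [("GPT Image 1.5", 0.30), ("GPT-image-2", 0.85)]:
    print(f"{label}: {expected_attempts(rate):.2f} attempts, "
          f"${cost_per_publishable_usd(rate):.3f} per publishable image")
```

Under these assumptions a creator needs more than three tries per usable image at a 30% rate, versus slightly more than one at 85%.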

Case 2: Official Account Cover (Chinese/English Mixed)

Prompt:

A WeChat Official Account cover image:

- Main title (Chinese, 24pt, bold): "AI 生图新纪元"

- Subtitle (English, 16pt, italic): "The Era of Production-Ready AI Images"

- Background: dark gradient with neural network visualization

- Aspect ratio: 16:9

- Style: tech, premium, futuristic

Focus: Character spacing, font size ratio, and alignment between Chinese and English.

Typical issues with GPT Image 1.5: Chinese character spacing is too wide, English text is too small, and the overall aesthetic looks too "AI-generated."

Performance of GPT-image-2: Natural character spacing, proper size ratio between Chinese and English according to design standards, and a final look approaching the quality of human designers.

Case 3: Complex Characters (Name Avatars)

Chinese users often need to generate content containing names (personal avatars, signatures, exclusive posters), which involves rendering "complex characters."

Test Name Sample: 王曦 (Wang Xi), 张赟 (Zhang Yun), 李澈 (Li Che), 陈赟 (Chen Yun), 刘鹭 (Liu Lu)

| Character | Stroke Count | 1.5 Accuracy | 2.0 Accuracy |
|---|---|---|---|
| 曦 | 20 | ~40% | ~98% |
| 赟 | 16 | ~35% | ~96% |
| 澈 | 15 | ~70% | ~99% |
| 鹭 | 18 (24 in the traditional form 鷺) | ~30% | ~95% |
| 簪 | 18 | ~50% | ~97% |

For characters with 15+ strokes, version 2.0 is a qualitative leap over 1.5. This means a vast range of personalized content scenarios, previously abandoned because "names couldn't be rendered," are now feasible.

Case 4: Meme Text

Memes require short text (4-12 characters) + strong emotional expression.

Test Sample:

- "我太难了" (I'm having a hard time) → 1.5: ~80% / 2.0: ~99%

- "yyds" + "永远的神" (GOAT/Eternal God) → 1.5: ~50% / 2.0: ~98%

- "破防了" (Mentally broken/triggered) → 1.5: ~75% / 2.0: ~99%

- "栓Q" (Thanks/Sarcastic thanks) → 1.5: ~40% (contains special symbols) / 2.0: ~95%

It is particularly worth noting that for popular internet slang (including new web terms and alphanumeric mixes), the stability of 2.0 far exceeds 1.5. This is why the "Meme Factory" became such a breakout scenario in April.

🎯 Reproduction Suggestion: All the above cases can be fully replicated via the APIYI (apiyi.com) platform’s gpt-image-2-all API, with each case costing less than ¥0.5. I recommend that Chinese content creators spend ¥10-20 to run a comparison test of their own business scenarios; seeing the gap for yourself is more convincing than any report.

GPT-image-2 Chinese Scene Prompt Engineering Quick Reference

Stable Chinese character rendering doesn't mean "you can just type anything." There are still several key prompt engineering techniques you need to master.

Core Rule 1: Use Quotes for Key Chinese Text

❌ Incorrect: The title says 春节大促

✅ Correct: Title text: "春节大促"

❌ Incorrect: title is "春节大促" / 标题 "春节大促"

✅ Correct: Display the exact text "春节大促" at the top

Quotes tell the model to treat the Chinese characters as a "string that must be rendered precisely" rather than just a "semantic concept."

Core Rule 2: Explicitly Specify Font Style

The default Chinese fonts in GPT-image-2 tend to lean towards an "AI generic style," which often lacks a commercial feel. It's recommended to specify the style explicitly:

For Chinese text, use a typography style similar to:

- 思源宋体 (Source Han Serif) Heavy (for headlines): bold, condensed, premium feel

- 苹方 (PingFang) Regular (for body): clean, modern, sans-serif

- 微软雅黑 (Microsoft YaHei) Light (for subtitles): thin, modern

While the model won't replicate these fonts perfectly, it will adjust towards a more "commercial" aesthetic.

Core Rule 3: Define Constraints Separately for Chinese and English

✅ Recommended:

- Chinese title: "AI 生图新纪元" (24pt, bold)

- English subtitle: "The Era of Production-Ready AI" (16pt, italic)

- Maintain proper spacing between Chinese and English characters

By explicitly separating the constraints, the model's handling of character spacing between Chinese and English improves significantly.

Core Rule 4: Pay Extra Attention to Numbers and Symbols

For special symbols in Chinese contexts like the Renminbi symbol (¥), "yuan," or quantity units (piece/item), it's best to be explicit:

Price tag (bottom-right):

- Symbol: "¥" (Chinese yuan symbol)

- Number: "199" (large, bold)

- Unit: "元/件"

Core Rule 5: Workarounds for Complex Characters

For characters with 15+ strokes (like "赟", "曦", "簪"), if the generation failure rate remains high, you can:

- Generate more variations (n=4 or n=8) and select the best one.

- Use pinyin or similar-looking characters as placeholders and replace them later in Photoshop.

- Substitute them with other characters that have similar sounds or shapes.
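The value of generating several variations can be estimated with basic probability. This sketch assumes each variation succeeds independently with the same per-image success rate, which is a simplification; the function name is illustrative.

```python
def p_at_least_one(per_image_success: float, n: int) -> float:
    """Probability that at least one of n independent variations is correct."""
    return 1.0 - (1.0 - per_image_success) ** n


# Even a character that renders correctly only half the time becomes
# dependable once several variations are generated and the best one is kept.
for n in (1, 4, 8):
    print(n, p_at_least_one(0.5, n))
```

At $0.03 per image, moving from one variation to four or eight is usually cheaper than a manual fix in Photoshop.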

Chinese Prompt Template Library (5 High-Frequency Scenarios)

| Scenario | Rec. Resolution | Rec. Quality | Key Constraints |
|---|---|---|---|
| Xiaohongshu Cover | 1024×1280 (4:5) | high | "Cover Title" (8-12 chars), enclosed in quotes |
| Official Account Header | 1024×533 | medium | Mixed Chinese/English, font size ratio |
| Moments Poster | 1024×1024 | high | Main Title + Subtitle + CTA layers |
| Sticker/Meme | 512×512 | medium | Short text, high emotion, cartoon style |
| E-commerce Detail | 2048×2048 | high | Product Name + Price + Selling point list |
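The table above maps naturally onto a small preset dictionary. The sketch below only builds a request body and sends nothing. The field names (`model`, `prompt`, `n`, `size`, `quality`) follow the OpenAI Images API convention, and whether the APIYI endpoint accepts exactly these fields and sizes is an assumption to verify against its documentation.

```python
# Presets copied from the table above; keys are hypothetical scenario names.
PRESETS = {
    "xiaohongshu_cover": {"size": "1024x1280", "quality": "high"},
    "official_account_header": {"size": "1024x533", "quality": "medium"},
    "moments_poster": {"size": "1024x1024", "quality": "high"},
    "sticker_meme": {"size": "512x512", "quality": "medium"},
    "ecommerce_detail": {"size": "2048x2048", "quality": "high"},
}


def build_request(scenario: str, prompt: str, n: int = 1,
                  model: str = "gpt-image-2-all") -> dict:
    """Build an OpenAI-style image request body (assumed field names)."""
    if scenario not in PRESETS:
        raise ValueError(f"unknown scenario: {scenario}")
    return {"model": model, "prompt": prompt, "n": n, **PRESETS[scenario]}


body = build_request("sticker_meme", 'Display the exact text "破防了"', n=4)
print(body)
```

Keeping presets in one place means a change in recommended resolution only has to be made once for all batch jobs.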

🚀 Getting Started: For the prompt engineering tips and templates above, I suggest using the imagen.apiyi.com toolkit for interactive debugging (no-code, instant previews). Once you've finalized your design, use the gpt-image-2-all model on the APIYI (apiyi.com) platform for bulk production. This workflow has been validated as optimal by multiple Chinese creators this past April.

The Boundaries of Assumptions: Where Chinese Character Rendering Isn't Key

As an author, I must be honest about where these assumptions reach their limits. The idea that "Chinese character fidelity = the gateway to the Chinese-speaking market" does not hold true in the following scenarios:

Scenario 1: Purely Visual Content Without Text

For content like landscapes, portraits, or white-background product shots that contain little to no text, the generational leap in model performance has minimal impact on reach within the Chinese community. In these cases, models like Nano Banana Pro might actually perform better (offering photographic realism).

Scenario 2: Niches Where the Chinese Community is Already Strong

In areas like anime-style art or traditional Chinese-style (Guofeng) illustrations, there are many domestic models (e.g., Jimeng, Kling, CogView) that are already doing an excellent job. The advantage of GPT-image-2 is less apparent here.

Scenario 3: Short-term Viral Trends vs. Long-term Ecosystem

The viral trends in April were driven by the "new tool + early adopter bonus." A few months later, as users adapt, the simple fact that a "tool is easy to use" is no longer the sole driver of growth; the focus returns to the quality of the content itself.

Counter-arguments to Consider

There are counter-examples worth pondering:

- Nano Banana Pro also supports CJK: However, its popularity in the Chinese community remains lower than GPT-image-2. This suggests that "Chinese character fidelity" is a necessary condition, but not a sufficient one. It requires the added weight of the OpenAI brand effect and the chain reaction sparked by the English-speaking community.

- Domestic models have long supported CJK: But their reach has been limited. This indicates that the combination of "International LLM + CJK breakthrough" carries a unique level of conversational value in the Chinese community.

Overall Judgment

A more accurate statement would be: Chinese character fidelity is the "necessary threshold" for reach in the Chinese community. Once that threshold is crossed, reach is further determined by brand, community ecosystem, pricing, and other factors. Version 1.5 didn't clear this hurdle, so its discussion was limited to the English-speaking world; 2.0 passed it, while also benefiting from OpenAI's international visibility and a +242 Elo lead, creating the viral phenomenon we saw in April.

April Action Guide for Chinese Creators Using GPT-image-2

If you agree that "Chinese character rendering accuracy = the gateway to viral reach," then the period from April 2026 to Q3 is a critical "bonus window." Here are specific action recommendations categorized by user profile.

Personal Content Creators (Xiaohongshu/Official Accounts/Bilibili, etc.)

Week 1 Action:

- Register at imagen.apiyi.com (accessible within China) and test 5-10 images to verify the results.

- Use gpt-image-2-all to recreate 3-5 viral covers in your niche to establish your template library.

- Transition your workflow from "Canva + searching for images" to "AI direct output + fine-tuning."

First Month Goal:

- Reduce your production time for covers/visuals from an average of 30-60 minutes down to 5-10 minutes.

- Conduct A/B testing: Compare the click-through rates (CTR) of the same topics using AI-generated visuals versus your old methods.

- Refine and archive 5-10 stable prompt templates, categorized by topic type.
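For the A/B comparison of click-through rates, a two-proportion z-test is one simple way to judge whether a difference is more than noise. The sketch below uses only the standard library; the click and view counts are made-up illustrative numbers, not data from this article.

```python
import math


def two_proportion_z(clicks_a: int, views_a: int,
                     clicks_b: int, views_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two CTRs."""
    p_a = clicks_a / views_a
    p_b = clicks_b / views_b
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


# Hypothetical example: AI-generated cover (A) vs. the old workflow (B).
z, p = two_proportion_z(clicks_a=120, views_a=2000, clicks_b=90, views_b=2000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

With small view counts the test has little power, so it is worth collecting a few thousand impressions per variant before drawing conclusions.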

Key Costs: At 100-200 images per month, accessed via APIYI (apiyi.com), your monthly cost will be around ¥30-60.

Official Account Editors / Xiaohongshu Operators

Pain Point: 1-3 pieces of content per day = 3-9 images per day = 90-270 images per month.

Benefit Estimation: Assuming each image previously cost ¥30-50 for a designer/freelancer, your monthly budget was ¥2,700-13,500 (90-270 images × ¥30-50). By switching to GPT-image-2 + APIYI, your monthly cost drops to ¥30-80, a saving of roughly 97-99%.
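The estimate can be checked by multiplying the stated volumes and unit costs. This is a minimal sketch using only the figures quoted above; the helper names are illustrative.

```python
def monthly_range(images: tuple[int, int], unit_cost: tuple[int, int]) -> tuple[int, int]:
    """Multiply (low, high) monthly image volume by (low, high) unit cost in CNY."""
    return images[0] * unit_cost[0], images[1] * unit_cost[1]


def savings_fraction(old_cost: float, new_cost: float) -> float:
    """Share of the old budget that is saved after switching."""
    return 1.0 - new_cost / old_cost


designer_low, designer_high = monthly_range((90, 270), (30, 50))
ai_low, ai_high = 30, 80  # monthly AI cost range in CNY, as quoted above

worst = savings_fraction(designer_low, ai_high)  # smallest old budget, highest AI cost
best = savings_fraction(designer_high, ai_low)   # largest old budget, lowest AI cost
print(designer_low, designer_high, f"{worst:.1%}", f"{best:.1%}")
```

The worst case pairs the smallest previous budget with the highest AI cost, so it gives a conservative floor for the savings claim.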

Pro Tip: Reinvest a portion of these savings into prompt engineering and A/B testing rather than just treating it as pure cost-cutting—improving your "viral hit rate" is the true ROI.

E-commerce Operators (Taobao/JD/Pinduoduo)

Key Scenarios:

- Main product detail images (including pricing and specifications in Chinese).

- Promotional banners (including Chinese marketing copy).

- Search result thumbnails (including Chinese product names).

Practical Approach: Use the online tool imagen.apiyi.com to run 50 tests for your specific business. Once you confirm an 80%+ publishable rate, switch to the gpt-image-2-all API via APIYI (apiyi.com) at $0.03 per image for full-scale production.

Common Mistake Warning: Don't replace all your detail pages with AI-generated images immediately. It's recommended that you keep human oversight for main product images, while using AI extensively for secondary images, multi-angle SKU shots, and lifestyle imagery. This "main vs. auxiliary" division of labor is the most stable workflow validated by top e-commerce teams this April.

Overseas Brands Targeting the Chinese Market

Unique Advantage: Previously, overseas teams had to hire local designers to target the Chinese market, leading to high communication costs and slow iteration. GPT-image-2 allows overseas teams to output usable Chinese materials directly.

Recommended Workflow:

- Use English prompts to define Chinese material requirements (this is a strength of OpenAI's multilingual capabilities).

- Generate key assets via the official APIYI (apiyi.com) forwarding API (gpt-image-2, high quality).

- Use domestic OCR tools to verify text accuracy as a quality control step.

- If necessary, have the local team perform minor touch-ups, reducing overall labor hours by 80%+.
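The OCR quality-control step in this workflow can be reduced to a simple check: every string the prompt asked for should appear in the text read back from the image. The sketch below takes the OCR output as a plain string, so no particular OCR tool is assumed; the function names are illustrative.

```python
import unicodedata


def normalize(text: str) -> str:
    """Fold full-width/half-width variants (NFKC) and drop all whitespace."""
    return "".join(unicodedata.normalize("NFKC", text).split())


def missing_texts(expected_texts: list[str], ocr_output: str) -> list[str]:
    """Return the expected strings that do not appear in the OCR output."""
    haystack = normalize(ocr_output)
    return [t for t in expected_texts if normalize(t) not in haystack]


# OCR often changes spacing and symbol width; normalization absorbs both.
print(missing_texts(["AI 生图新纪元", "¥199"], "AI生图 新纪元\n￥199"))
# A single wrong character is still caught.
print(missing_texts(["春节大促"], "春节大伲"))
```

An empty result means the asset can go to the local team for a visual check only; any returned string flags an image for regeneration.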

Publishing / Education / Science Communication

Key Scenarios:

- Illustrated science articles (including Chinese technical terminology).

- Teaching slides (including Chinese labels for formulas and charts).

- Publication illustrations (including classical Chinese calligraphy fonts).

Special Value: These scenarios were previously ignored by AI image models—"educational publishing" was never a priority for model training. However, the 99% CJK accuracy of GPT-image-2 makes these "niche but high-quality" scenarios commercially viable for the first time.

Tech Bloggers / AI Tutorial Creators

Opportunity Window: April to June remains a window of "information asymmetry"—many Chinese users are still unaware of this leap in capability. Creating "Chinese GPT-image-2 tutorials" can still net you significant traffic benefits.

Content Recommendation: Instead of generic "What is GPT-image-2" articles, focus on high-traffic, specific content like "Chinese GPT-image-2 Prompt Template Library" or "How to Replicate XX Style Posters with GPT-image-2." The traffic potential is much higher.

🎯 Focused Action Plan: Regardless of your background, the lowest-cost first step is: Register an APIYI (apiyi.com) account → Spend ¥10-20 to run 50-100 images via gpt-image-2-all → Identify 3-5 stable prompt templates → Integrate them into your main workflow. This validation process can be completed within a week at a very low cost, allowing you to capture the core dividends of Q2-Q3 2026.
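The budget in the action plan follows directly from the per-image price. Below is a small sketch of the arithmetic. The $0.03 price is the one quoted in this article. The exchange rate of 7.0 CNY per USD is an assumption, chosen because it matches the article's own "10 images is about ¥2.1" figure.

```python
PRICE_USD_PER_IMAGE = 0.03  # gpt-image-2-all price quoted in this article
ASSUMED_CNY_PER_USD = 7.0   # assumption: implied by "10 images is about ¥2.1"


def batch_cost_cny(
    n_images: int,
    price_usd: float = PRICE_USD_PER_IMAGE,
    cny_per_usd: float = ASSUMED_CNY_PER_USD,
) -> float:
    """Estimated cost in CNY of generating n_images at a flat per-image price."""
    if n_images < 0:
        raise ValueError("n_images must not be negative")
    return round(n_images * price_usd * cny_per_usd, 2)


for n in (10, 50, 100):
    print(n, "images ->", batch_cost_cny(n), "CNY")
```

Under these assumptions, 50-100 images land at roughly ¥10-21, in line with the ¥10-20 budget suggested above.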

Why is GPT-image-2 So Much More Popular Than 1.5? FAQs

Q1: Does GPT-image-2 really have 99% accuracy in Chinese rendering?

According to LM Arena benchmarks, GPT-image-2 achieves approximately 99% character-level accuracy for CJK (Chinese, Japanese, Korean) characters. Note that this figure is measured per character (whether a single character is drawn correctly) and is not 100%, so errors still occur in edge cases: 1) Tiny text below 5pt; 2) Rare characters (archaic forms or obscure characters used in names); 3) Complex layout conflicts (text overlapping images). Common titles, subtitles, prices, and dates at 8pt or larger are generally accurate. I suggest using the gpt-image-2-all model on APIYI (apiyi.com) to run low-cost tests on your specific scenarios before deciding.

Q2: Is GPT-image 1.5’s Chinese rendering truly unusable?

It's not "completely unusable," but it is "unreliable." The success rate for short Chinese text (3-6 characters) is about 70-80%, meaning 1-2 out of every 5 images will require rework or Photoshop fixes. This is acceptable for occasional personal use, but it's a fatal flaw for commercial batch production: it equates to a 20-30% scrap rate and excessive editing hours. This is why Chinese creators struggled to incorporate it into professional workflows during the 1.5 era.
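The gap between a ~95% character-level figure (the number quoted for version 1.5 at the start of this article) and a 70-80% success rate for short text is what compounding predicts. Here is a rough sketch, under the simplifying assumption that each character is rendered correctly independently of the others, so a string of n characters comes out fully correct with probability p to the power n.

```python
def text_success_rate(char_accuracy: float, n_chars: int) -> float:
    """Probability that every character in a string is correct.

    Assumption: per-character errors are independent, which real models
    only approximate.
    """
    if not 0.0 <= char_accuracy <= 1.0:
        raise ValueError("char_accuracy must be between 0 and 1")
    return char_accuracy ** n_chars


for label, accuracy in (("version 1.5, ~95%", 0.95), ("GPT-image-2, ~99%", 0.99)):
    rates = {n: round(text_success_rate(accuracy, n), 3) for n in (3, 6, 20)}
    print(label, rates)
```

At 95% per character, a 3-6 character title is fully correct roughly 74-86% of the time, close to the 70-80% quoted above. At 99% the same title succeeds about 94-97% of the time. The difference grows quickly with length: for 20 characters the model gives about 36% versus 82%.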

Q3: Aren’t domestic AI image models better for Chinese?

Domestic models (such as Jimeng, Kling, and CogView) do support Chinese well, with some metrics approaching GPT-image-2. However, considering the four dimensions of "text accuracy + overall image quality + reasoning capability + multilingual mixing," GPT-image-2 remains the strongest all-rounder as of April 2026. Recommendation: 1) Use domestic models for purely Chinese scenarios; 2) Use GPT-image-2 for scenarios that involve mixed English-Chinese text or technical terminology, or that need high-quality, professional imagery.

Q4: Does good Chinese rendering guarantee a model will go viral in the Chinese community?

Not necessarily; it's a necessary condition, not a sufficient one. Beyond Chinese rendering, you also need: 1) Low barriers to entry (accessible in China); 2) Reasonable pricing (affordable for individuals); 3) Early community hype. GPT-image-2's explosion in April was due to the synergy of the OpenAI brand effect + LM Arena +242 Elo lead + rapid integration via API proxy platforms like APIYI ($0.03/image).

Q5: How can individual creators start using GPT-image-2’s Chinese capabilities most quickly?

Three paths, from lowest to highest barrier: 1) Use the imagen.apiyi.com online tool directly (no code, accessible in China, Chinese interface); 2) Subscribe to ChatGPT Plus for $20/month (requires an overseas account and VPN); 3) Access via the APIYI (apiyi.com) API, using the gpt-image-2-all model at $0.03/image for batch generation. It's recommended to use the tool site to debug your prompts first, then move to the API for batch production once finalized.

Q6: Will this observation lose validity over time?

Yes. Currently (April 2026), we are in a window where "tools + models + platforms" are all jumping forward simultaneously. I expect the "Chinese character rendering = gateway to reach" assumption to weaken when: 1) Domestic models reach 99% accuracy (expected in 6-12 months); 2) Chinese users become desensitized to AI images, reducing the "novelty" (expected in 1-2 years); 3) New media formats emerge (short video, AR, etc.). However, for the period of April-December 2026, this assumption will likely hold true.

Q7: Are there any pitfalls when making Chinese posters with GPT-image-2?

The 3 most common pitfalls: 1) Key text must be wrapped in quotes: write title: "新春大促" (New Year Sale) instead of title: 新春大促; 2) For complex characters (e.g., those used in names, such as "赟" or "曦"), generate 4 images and choose the best, as the single-image error rate is still 5-10%; 3) When mixing Chinese and English, explicitly specify font styles (Chinese: Source Han Serif style, English: Helvetica style) to avoid character spacing conflicts. I suggest using the APIYI (apiyi.com) platform to find a stable prompt at a low cost before running a batch.
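The first and third pitfalls can be handled by building prompts with a small helper, and the "generate 4 and choose the best" advice can be checked with a line of arithmetic. This is an illustrative sketch only. The prompt wording and function names are hypothetical, and the probability calculation assumes the four images fail independently.

```python
def build_poster_prompt(title: str, subtitle: str = "") -> str:
    """Assemble a poster prompt with quoted key text and explicit font styles."""
    parts = [f'title: "{title}"']
    if subtitle:
        parts.append(f'subtitle: "{subtitle}"')
    # Font styles named in the article; the surrounding wording is hypothetical.
    parts.append("Chinese font: Source Han Serif style")
    parts.append("English font: Helvetica style")
    return "A promotional poster. " + "; ".join(parts) + "."


def all_fail_probability(per_image_error: float, n_images: int = 4) -> float:
    """Chance that every image in a batch has a text error (independence assumed)."""
    return per_image_error ** n_images


print(build_poster_prompt("新春大促", "Spring Sale 2026"))
for error_rate in (0.05, 0.10):
    print(error_rate, all_fail_probability(error_rate))
```

With a 5-10% error rate per image, the chance that all four candidates are wrong is at most about 1 in 10,000, which is why picking the best of four is a cheap safeguard.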

Q8: How can the claims in this article be further verified?

You can verify them in three ways: 1) Data Verification: Scrape data from Xiaohongshu/Weibo/Bilibili on "GPT-image-2" related content since April and compare its reach curve with similar topics from the 1.5 era; 2) Controlled Experiments: Use the same prompts to generate 50 posters each on GPT-image-2, 1.5, and Nano Banana Pro, and have 100 users rate them anonymously; 3) Creator Interviews: Interview 30 Chinese creators who have used both generations and document their workflow changes. These experiments can all be quickly set up using the multi-model unified access provided by APIYI (apiyi.com).

Key Takeaways of Why GPT-image-2 Outshines Version 1.5

- A Key Metric of Generational Leap: GPT-image-2 has pushed CJK character-level rendering accuracy from ~95% in version 1.5 (which left short Chinese text fully correct only about 70-80% of the time, hence "unreliable") to ~99%, arguably the biggest leap in the AI image generation space over the past 12 months.

- The Power of Chinese Media Channels: Platforms like Xiaohongshu, WeChat Official Accounts, meme culture, and e-commerce product pages—the core channels of the Chinese internet—all rely heavily on images containing text. Consequently, "Chinese character rendering" is the hard barrier for any AI tool to achieve mainstream adoption in the Chinese market.

- Workflow Bottlenecks in the 1.5 Era: In the 1.5 era, creators were forced to use Photoshop for post-processing text. This relegated AI image generation to a mere "assistant" role, making it impossible to integrate into daily production pipelines.

- Three Technical Knots Untied by 2.0: The combination of O-series reasoning, expanded CJK training data, and a character-level rendering mechanism provides the foundation for that 99% accuracy rate.

- April’s Viral Success isn’t Just Hype: Four major viral trends are currently in full swing: Chinese poster recreation, mass-producing memes, commercial poster design, and reverse application by cross-border brands.

- The Boundaries of the Hypothesis: While "Chinese character accuracy = the gateway to virality" is a necessary condition, it isn't sufficient on its own. Other factors like branding, pricing, and platform synergy also matter. The fact that Nano Banana Pro supports CJK but hasn't achieved the same viral status as GPT-image-2 serves as a perfect counter-example.

- The Window of Opportunity is Now: Domestic models are expected to close this gap within 6–12 months. Getting on board early is one of the most certain content opportunities for 2026.

- The Lowest Cost Way to Verify: You can test it on the APIYI (apiyi.com) platform using gpt-image-2-all for just $0.03 per image. Testing 10 images costs only about ¥2.1, which is more than enough to verify whether the difference is real.

Conclusion

Back to the opening question—"Why is GPT-image-2 so much more popular than 1.5?"

The simplest answer is: It passed the "Chinese character accuracy" gateway required for the Chinese internet. In the 1.5 era, AI image generation was already popular in the English-speaking world, but the Chinese market was stuck because "you couldn't use Chinese characters." Version 2.0 brought text rendering to 99% accuracy, allowing the entire Chinese creator community to finally run their full workflows for the first time, which in turn ignited the spread.

This isn't just an isolated story of "model iteration," but a case where a specific technical metric (CJK character-level accuracy rising from ~95% to ~99%) acted as a lever to spark an entire ecosystem (Chinese internet content). Once you understand this cause-and-effect relationship, you'll be able to judge the viral potential of other AI models in the Chinese market more accurately: don't look only at benchmarks, look at the Chinese characters.

For content creators, marketing teams, and e-commerce operators in 2026, the question around GPT-image-2 is no longer "should we use AI?" but "what dividend do we miss if we don't use it now?" I recommend using the APIYI (apiyi.com) platform to verify its performance in your specific scenario at a minimal cost ($0.03/image), and then deciding whether to incorporate it into your main workflow based on real data.

Finally, a note from the author: these observations are a record of phenomena and an analysis of causes as of April 2026, not necessarily a final conclusion. I welcome more creators to supplement, correct, or even refute these findings based on their own testing data.

References

- OpenAI ChatGPT Images 2.0 Official Announcement: GPT-image-2 release notes
  - Link: openai.com/index/introducing-chatgpt-images-2-0
  - Description: Official documentation on 99% multilingual text accuracy.

- LM Arena Text-to-Image Leaderboard: Model Elo rankings
  - Link: arena.ai/leaderboard/text-to-image
  - Description: GPT-image-2 1512 Elo · Character-level accuracy verification.

- TechCrunch April 21 Report: ChatGPT's new Images 2.0 model is surprisingly good at generating text
  - Link: techcrunch.com/2026/04/21/chatgpts-new-images-2-0-model-is-surprisingly-good-at-generating-text
  - Description: Primary coverage by mainstream tech media within 24 hours of launch.

- The New Stack, "OpenAI now thinks before it draws": In-depth report on reasoning mechanisms
  - Link: thenewstack.io/chatgpt-images-20-openai
  - Description: Analysis of how O-series reasoning impacts Chinese character rendering.

- CJK Tokenization Technical Documentation: Why LLMs have historically struggled with Chinese
  - Link: tonybaloney.github.io/posts/cjk-chinese-japanese-korean-llm-ai-best-practices.html
  - Description: Fundamental technical challenges in CJK processing.

- APIYI Platform: Domestic access to GPT-image-2
  - Link: apiyi.com
  - Description: Official forwarding API + reverse API (gpt-image-2-all at $0.03/image).

Author: APIYI Technical Team | To experience the Chinese rendering capabilities of GPT-image-2, visit APIYI at apiyi.com to sign up and receive free testing credits, or try it online at imagen.apiyi.com (accessible directly from within China).