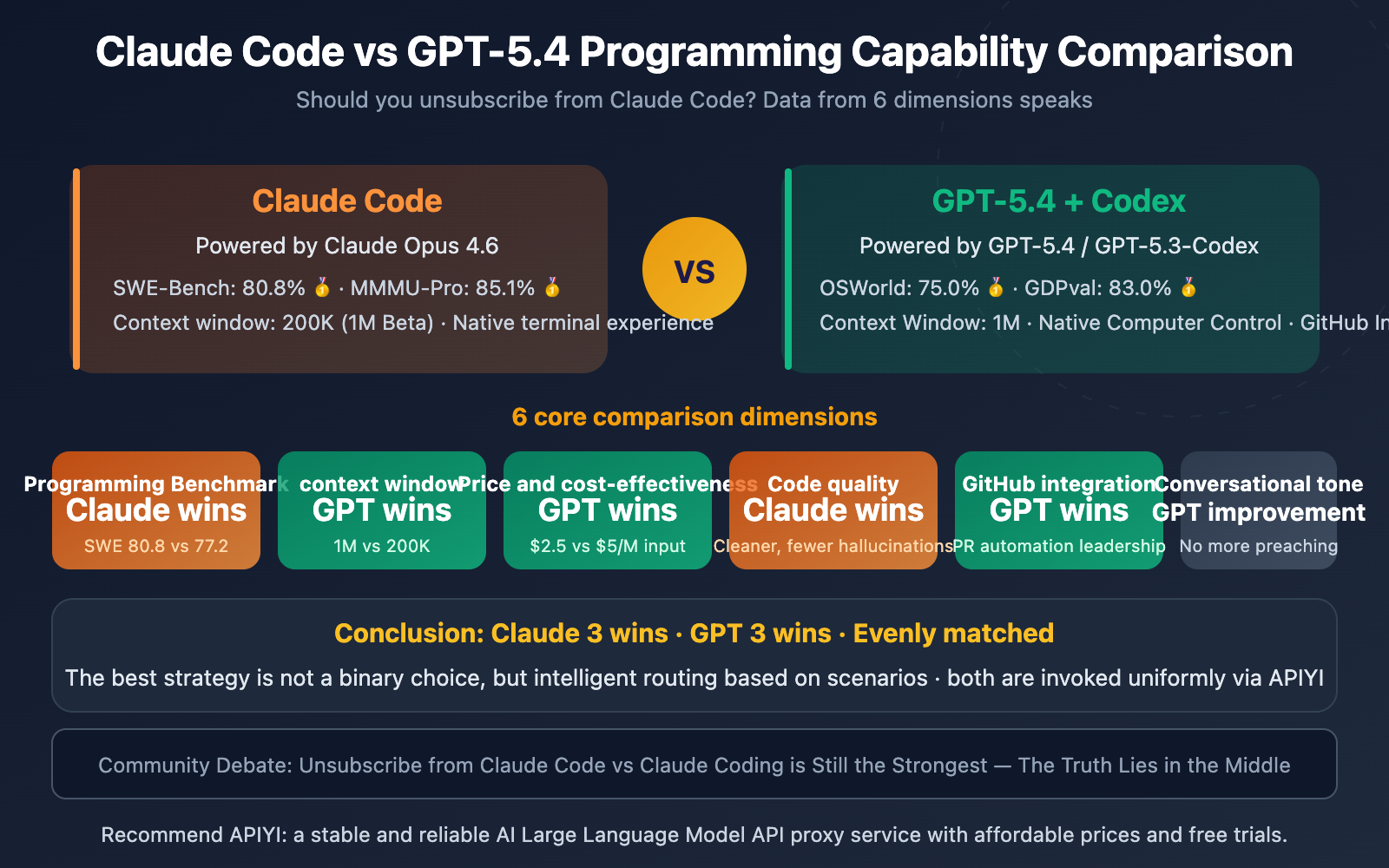

Author's Note: A neutral comparison of Claude Code and GPT-5.4's programming capabilities, code quality, context window, pricing, and developer experience to help you decide if it's time to switch.

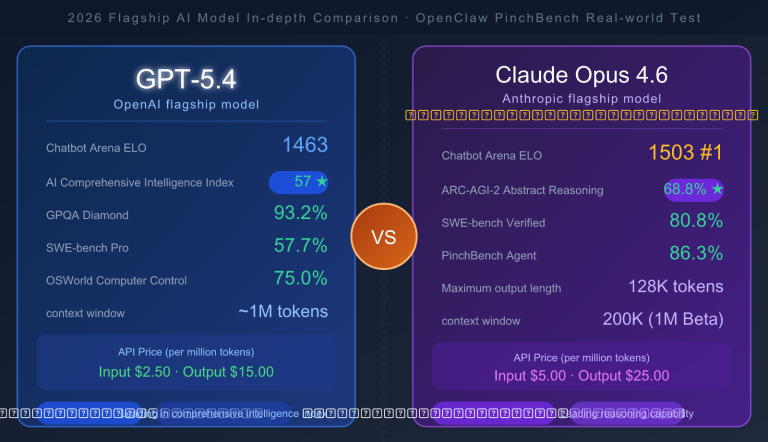

On the day GPT-5.4 was released, a certain sentiment started echoing across social media: "Unsubscribe from Claude Code!" The reasoning seemed solid—a 1M context window, leading capabilities across the board, and the long-standing "robotic tone" issue finally being addressed.

But reality isn't quite that simple. Benchmark data shows that Claude Opus 4.6 still leads GPT-5.4 on the SWE-Bench coding benchmark with 80.8% vs. 77.2%. Actual feedback from the developer community is even more divided.

Core Value: This article objectively compares Claude Code and GPT-5.4 across 6 dimensions to help you decide whether to switch—or if the smarter choice might be using both.

Claude Code vs. GPT-5.4 Core Data Comparison

| Dimension | Claude Code (Opus 4.6) | GPT-5.4 / Codex | Winner |

|---|---|---|---|

| SWE-Bench Coding | 80.8% | 77.2% | Claude |

| MMMU-Pro Visual Reasoning | 85.1% | 81.2% | Claude |

| GDPval Knowledge Work | 78.0% | 83.0% | GPT |

| OSWorld Computer Control | 72.7% | 75.0% | GPT |

| FrontierMath Mathematics | 27.2% | 47.6% | GPT |

| Terminal-Bench Terminal | 65.4% | 75.1% | GPT |

| Context Window | 200K (1M Beta) | 1,000K | GPT |

| API Input Price | $5.00/M | $2.50/M | GPT |

| API Output Price | $25.00/M | $15.00/M | GPT |

| Code Cleanliness | Cleaner, more standard | Standard | Claude |

| Refactoring & Debugging | Leading | Standard | Claude |

| GitHub PR Automation | Average | Deep Integration | GPT |

The score is Claude 4 wins, GPT 8 wins—but don't jump to conclusions just yet. In programming scenarios, the weight of SWE-Bench, code quality, and refactoring capabilities is far higher than knowledge work or computer control. Let's break it down one by one.

Claude Code vs GPT-5.4: A Deep Dive into Programming Capabilities

Dimension 1: Programming Benchmarks — Claude Code Takes the Lead

In the most watched programming benchmark, SWE-Bench Verified (which tests the ability to fix real-world GitHub issues), the results are in:

| Model | SWE-Bench Verified | SWE-Bench Pro |

|---|---|---|

| Claude Opus 4.6 | 80.8% 🥇 | — |

| Gemini 3.1 Pro | 80.6% | — |

| GPT-5.4 | 77.2% | 57.7% |

Claude Opus 4.6 leads GPT-5.4 by 3.6 percentage points. In production-grade code fixing scenarios—like understanding multi-file architectures and tracing complex dependency chains—Claude demonstrates a stronger grasp of code structure.

However, GPT-5.4 significantly outperforms Claude on Terminal-Bench 2.0 (tasks heavy on terminal operations), scoring 75.1% against Claude's 65.4%. If your workflow relies heavily on terminal commands, GPT might have the edge.

Dimension 2: Code Quality and Developer Experience — Claude Code is Cleaner

Feedback from across the dev community points to one consistent conclusion: Claude generates code that is cleaner, follows better patterns, and has fewer hallucinations.

Specifically:

- Refactoring Tasks: Claude performs better in complex refactoring and debugging.

- Architectural Understanding: When analyzing large repositories and layered architectures, Claude's reasoning chain is more stable with less "context drift."

- Generation Speed: Claude Code's initial generation is faster (roughly 1,200 lines in 5 minutes vs. Codex's 200 lines in 10 minutes).

GPT-5.4’s strengths lie more in documentation generation and writing boilerplate code—tasks that don't require a deep, holistic understanding of the project's architecture.

Dimension 3: Context Window — GPT-5.4 Crushes It

This is GPT-5.4's biggest structural advantage:

| Capability | Claude Code | GPT-5.4 |

|---|---|---|

| Standard Context | 200K | 1,000K |

| Beta Context | 1M | — |

| Max Output | 32K | 128K |

A 1M token context window means you can feed an entire production-grade codebase into the model at once. Just a heads-up: requests exceeding 272K tokens are billed at 2x the input price and 1.5x the output price. In practice, most programming tasks don't actually need more than 200K context.

🎯 Pro Tip: The context window is GPT-5.4's killer feature, but it only really shines when you're dealing with massive codebases. For small to medium projects, Claude's 200K context combined with its superior architectural understanding might be the better bet. You can access both via the unified API proxy service at APIYI (apiyi.com).

Claude Code vs GPT-5.4: Price and Ecosystem Comparison

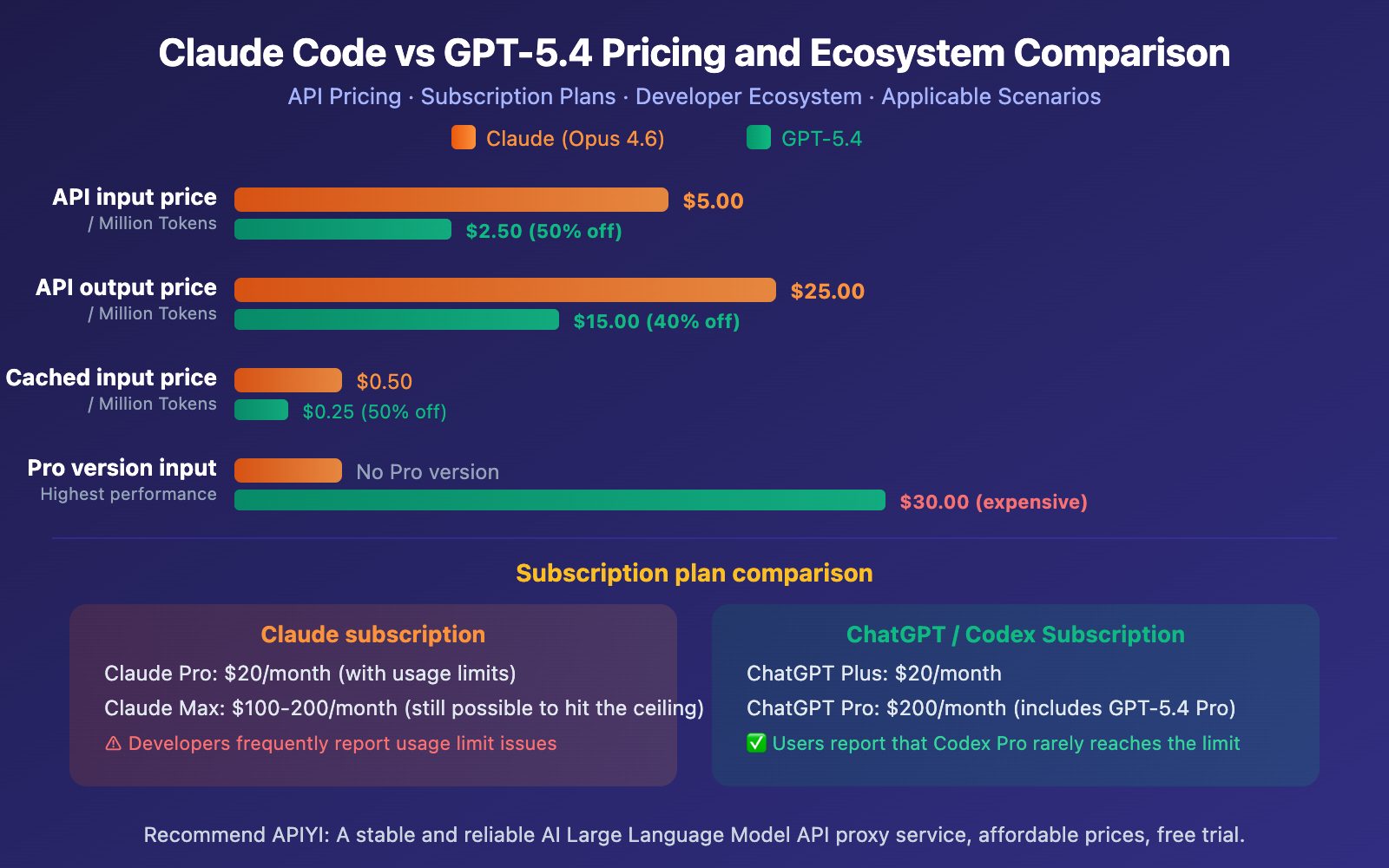

Dimension 4: Pricing — GPT-5.4 Offers Better Value

GPT-5.4 is consistently cheaper than Claude Opus 4.6 across the board for API pricing:

- Input: $2.50 vs $5.00/M (50% cheaper)

- Output: $15.00 vs $25.00/M (40% cheaper)

- Cached Input: $0.25 vs $0.50/M (50% cheaper)

On the subscription side, developers generally find Claude's usage limits to be much stricter. The $20/month Codex plan offers more generous quotas than the $17/month Claude Pro plan. Many developers report that they almost never hit the ceiling with Codex Pro, whereas Claude users frequently run into rate limits even on higher-tier plans.

Dimension 5: GitHub Integration — GPT Codex Clearly Ahead

This is an often-overlooked difference that has a huge impact on developer workflows.

According to developer feedback, Claude Code's GitHub PR reviews tend to "give long-winded comments but miss obvious bugs," while Codex provides "detection for bugs that are actually hard to find," including inline comments and actionable fix workflows. Codex's GitHub App also maintains consistent behavior between the CLI and the web interface.

Dimension 6: Conversational Tone — GPT-5.x is Sounding More Human

This is a common point of discussion on social media. The GPT-5 series has definitely evolved from being "too robotic" to sounding much more natural:

- GPT-5.0: Criticized for being a "cold robot."

- GPT-5.1: Added more warmth and conversational flow.

- GPT-5.3 Instant: Focused on being "less cringe," with hallucinations reduced by 26.8%.

- GPT-5.4: Inherits the tone improvements of 5.3 while boosting professional capabilities.

That said, Claude is still widely considered superior for natural dialogue and the readability of its code explanations. GPT-5.4 has closed the gap, but it's not quite there yet.

🎯 Cost Optimization: No matter which model you choose, using a unified access point like APIYI (apiyi.com) allows for more flexible billing. GPT-5.4 pricing is synced with the official rates ($2.50/$15.00), and you'll get a 10% bonus on top-ups of $100 or more.

Claude Code vs GPT-5.4 API Invocation Example

import openai

client = openai.OpenAI(

api_key="YOUR_API_KEY",

base_url="https://vip.apiyi.com/v1"

)

# Complex refactoring → Use Claude Opus 4.6 (Higher code quality)

refactor_result = client.chat.completions.create(

model="claude-opus-4-6",

messages=[{"role": "user", "content": "Refactor the dependency injection architecture of this module"}]

)

# Large codebase analysis → Use GPT-5.4 (1M context window)

analysis_result = client.chat.completions.create(

model="gpt-5.4",

messages=[{"role": "user", "content": "Analyze the entire project for security vulnerabilities"}]

)

Recommendation: You can call both Claude and GPT-5.4 simultaneously by registering an account at APIYI (apiyi.com). GPT-5.4 pricing is synced with the official site, and you'll get a 10% bonus on top-ups of $100 or more. Switching models based on the scenario is as simple as changing a single parameter.

FAQ

Q1: Should I unsubscribe from Claude Code?

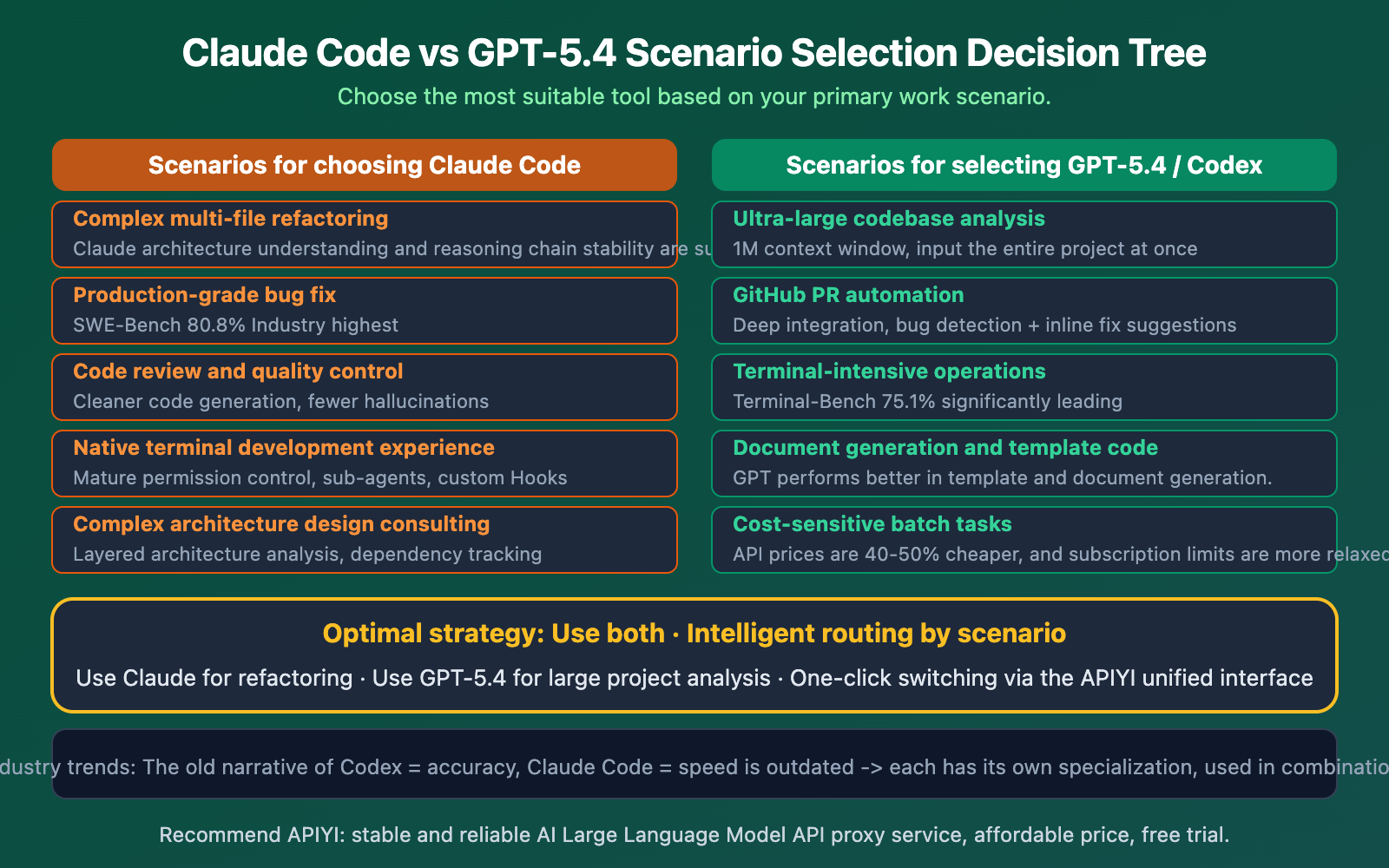

It depends on your primary workflow. If your core needs involve complex code refactoring and production-level bug fixes, Claude remains the strongest choice (leading with 80.8% on SWE-Bench). If you need an ultra-long context window, GitHub integration, and lower costs, GPT-5.4 / Codex has the edge. The best strategy isn't to choose one over the other, but to use both via API based on the specific task.

Q2: Is GPT-5.4’s coding capability truly leading across the board?

Not exactly. GPT-5.4 leads in dimensions like GDPval (knowledge work), OSWorld (computer control), and FrontierMath (mathematics). However, on the most critical coding benchmark, SWE-Bench, Claude Opus 4.6 maintains a lead with 80.8% vs 77.2%. In terms of code quality, refactoring capabilities, and architectural understanding, the developer community still tends to favor Claude. You can compare both through unified model invocation at APIYI (apiyi.com).

Q3: How can I use both Claude and GPT-5.4 simultaneously?

Register an account through APIYI (apiyi.com):

- Get a unified API key.

- Set the

base_urltohttps://vip.apiyi.com/v1. - Use

model="claude-opus-4-6"for refactoring tasks. - Use

model="gpt-5.4"for large project analysis. - Use

model="gpt-5.3-chat-latest"for daily tasks (the most cost-effective).

Get a 10% bonus on top-ups of $100 or more. One account covers all mainstream models.

Summary

The core takeaways for Claude Code vs. GPT-5.4:

- Claude still leads in coding benchmarks: With an SWE-bench score of 80.8% vs. 77.2%, its code quality is cleaner, and it's stronger at refactoring and debugging—so claims that you should "unsubscribe from Claude Code" are a bit premature.

- GPT-5.4 dominates in context and value: A 1M token context window (5x Claude's), API pricing that's 40-50% cheaper, and deeper GitHub integration make it perfect for massive projects and cost-sensitive scenarios.

- The best strategy is to use both: Use Claude for refactoring and bug fixes, GPT-5.4 for analyzing huge codebases and terminal operations, and GPT-5.3 Instant for daily tasks to save money.

Don't get swayed by "Unsubscribe from Claude Code" clickbait. Smart developers choose the right tool for the job—they don't just stick to one brand.

We recommend using APIYI (apiyi.com) to access both Claude and GPT-5.4. One API key calls all models, and you'll get a 10% bonus on top-ups of $100 or more.

📚 References

-

Claude Code vs. Codex Deep Dive: A detailed comparison from the developer's perspective by the Builder.io team.

- Link:

builder.io/blog/codex-vs-claude-code - Note: Includes hands-on comparisons of pricing, code quality, and GitHub integration.

- Link:

-

GPT-5.4 Targets Claude: Competitive Analysis: How GPT-5.4 is positioning itself against Claude.

- Link:

trendingtopics.eu/gpt-5-4-targets-anthropics-claude-with-premium-pricing-and-coding-muscle/ - Note: In-depth analysis of GPT-5.4 Pro's premium pricing and coding ambitions.

- Link:

-

GPT-5.4 vs. Opus 4.6 vs. Gemini 3.1 Pro: Full-Scale Comparison: Data from 12 benchmarks.

- Link:

digitalapplied.com/blog/gpt-5-4-vs-opus-4-6-vs-gemini-3-1-pro-best-frontier-model - Note: The most comprehensive comparison of the "Big Three," including competitiveness analysis and selection advice.

- Link:

-

Claude Sonnet 4.6 vs. GPT-5 Developer Benchmark: Real-world dev scenario testing by SitePoint.

- Link:

sitepoint.com/claude-sonnet-4-6-vs-gpt-5-the-2026-developer-benchmark/ - Note: Specific task data for refactoring, debugging, and documentation generation.

- Link:

Author: APIYI Tech Team

Tech Discussion: Feel free to join the discussion in the comments. For more resources, visit the APIYI documentation center at docs.apiyi.com.