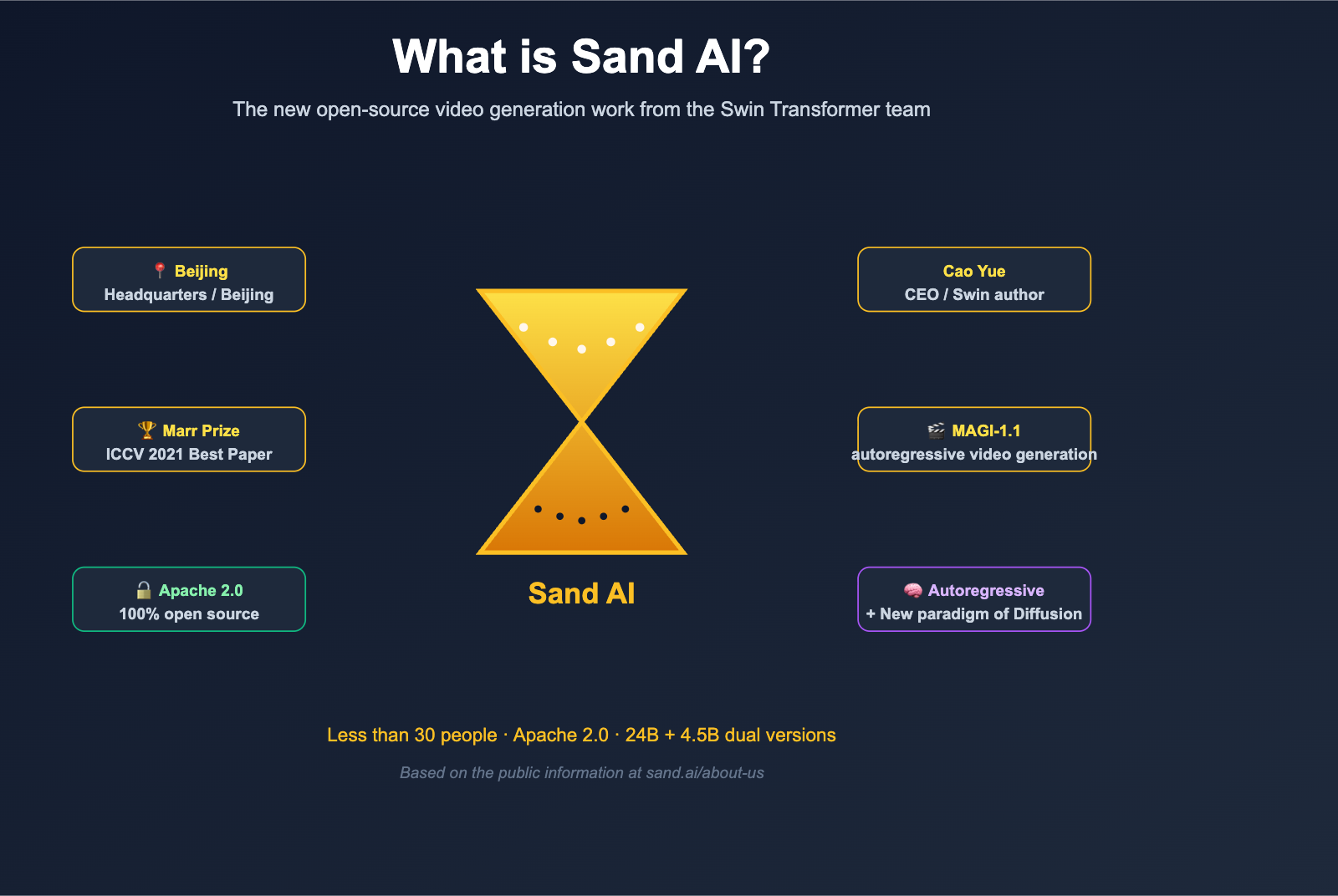

If you've seen the name Sand AI popping up on Hugging Face, GitHub, or AI Twitter lately and are curious about its MAGI-1 / MAGI-1.1 models, this article is for you. Unlike many "overnight" video model teams, Sand AI has serious technical pedigree: its CEO, Cao Yue (Yue Cao), is a core author of Swin Transformer. That paper won the ICCV 2021 Best Paper Award (Marr Prize), has been cited more than 30,000 times on Google Scholar, and is widely used in major products such as Microsoft Office 365, Azure, TikTok, and Kuaishou. In other words, Sand AI isn't just a team jumping on a trend; it's the result of the original Swin Transformer team applying a decade of visual modeling expertise to video generation.

What has the global community even more excited is that Sand AI didn't just build a competitive video generation model; it chose to fully open-source it. The complete weights, code, and inference tools for MAGI-1 are available under the Apache 2.0 license on GitHub and Hugging Face. In the 2025-2026 wave of open-source video models from China, Sand AI stands out as one of the few teams to successfully execute and open-source the autoregressive video generation path. This article covers five dimensions (company background, founder history, MAGI technical architecture, open-source strategy, and target audience) to give you a clear picture of what Sand AI is all about.

Sand AI Core Overview

Before we dive into the details, here’s a quick summary of the key facts about Sand AI.

| Dimension | Sand AI Public Info |
|---|---|
| Company Name | Sand AI (sand.ai) |
| Background | Founded by core author of Swin Transformer, Cao Yue |
| Headquarters | Beijing, China |
| Team Size | Under 30, average age under 30 |
| Mission | "Empowering everyone with AI," embracing open source and collaboration |
| CEO | Cao Yue (Yue Cao), former head of the Vision Model Research Center at BAAI |
| Flagship Product | MAGI / MAGI-1 / MAGI-1.1 autoregressive video generation model |
| Initial Release | April 21, 2025 (MAGI-1) |
| Latest Version | MAGI-1.1 (100% open source) |
| Model Specs | 24B and 4.5B parameter versions |
| License | Apache 2.0, GitHub SandAI-org/MAGI-1 + Hugging Face sand-ai/MAGI-1 |
| Core Innovation | Autoregressive Denoising Diffusion |
| Web Portal | magi.sand.ai/app/projects |
| API Platform | platform.sand.ai/docs |
| Main Competitors | Wan series, HunyuanVideo, Hailuo, Sora, etc. |

🎯 Quick Tip: If you want to remember Sand AI in one sentence, think of it as "an open-source startup bringing the visual modeling prowess of Swin Transformer to video generation." If you want to test the differences between the MAGI series and other video models right now, we recommend running a few established models like Sora 2, Veo 3.1, or Kling on a unified platform like APIYI (apiyi.com) first, then pulling MAGI-1.1 from sand.ai or Hugging Face to compare. You'll immediately notice the unique characteristics of the "autoregressive" approach.

Sand AI: Company Background and Team DNA

To understand why Sand AI was able to deliver a competitive video model right out of the gate, we have to look at the team's background.

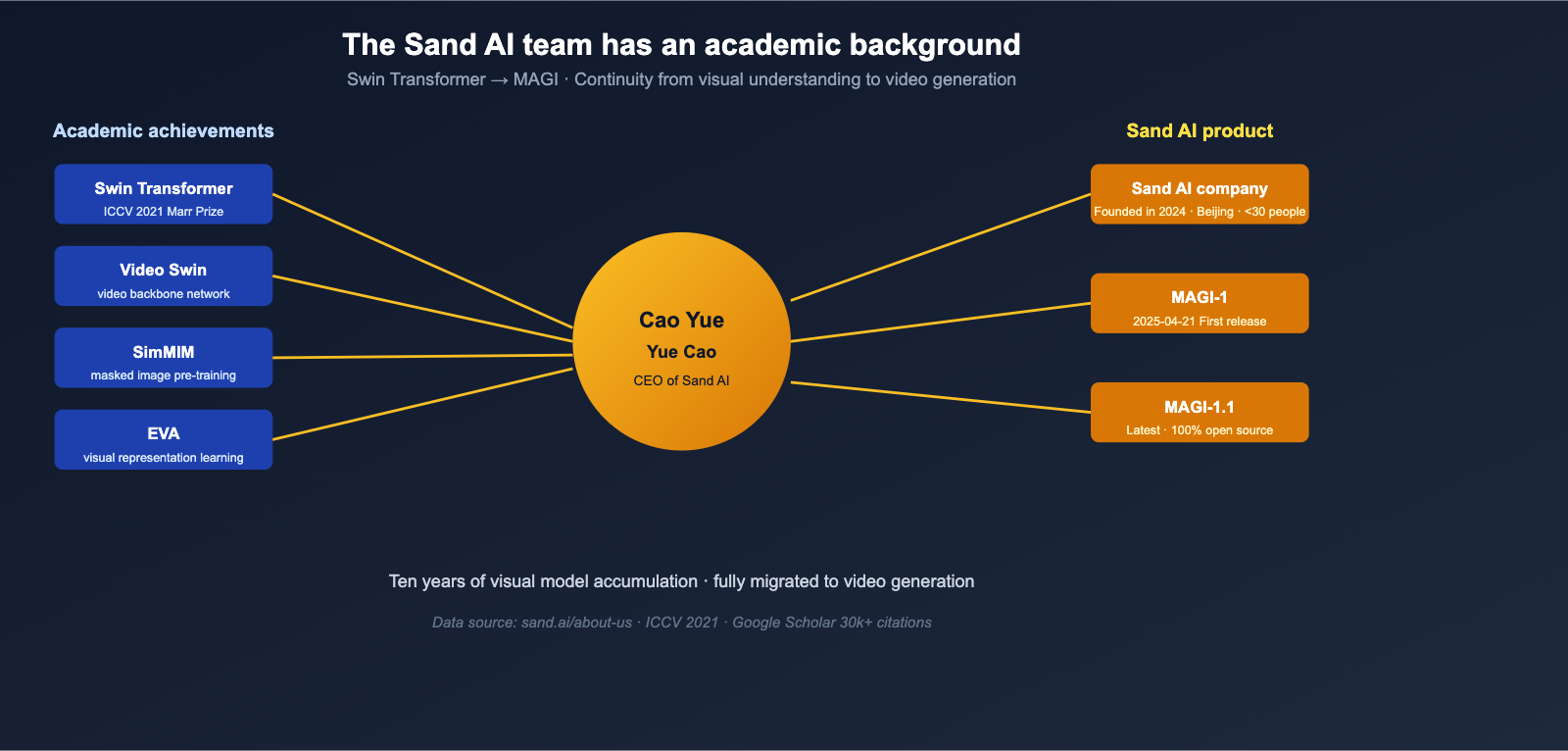

The Founder: Yue Cao, Father of Swin Transformer

Sand AI's CEO, Yue Cao, is a well-known name in the AI community, both in China and internationally. His core professional journey can be summarized as follows:

| Period | Experience |
|---|---|
| 2019-2022 | Senior Researcher at Microsoft Research Asia; core author of Swin Transformer |
| 2021 | Swin Transformer won the ICCV 2021 Best Paper Award (Marr Prize) |
| 2022-2023 | Co-founder of Lightyear AI, later acquired by Meituan |
| 2023-2024 | Head of the Visual Model Research Center at Beijing Academy of Artificial Intelligence (BAAI), focusing on foundational visual models and multimodal Large Language Models |
| 2024-Present | Founder and CEO of Sand AI |

The influence of Swin Transformer continues to this day—the paper has been cited over 30,000 times on Google Scholar and is widely used in visual understanding pipelines for products like Microsoft Office 365, Azure Cognitive Services, TikTok, and Kuaishou. It also served as the precursor to Video Swin Transformer. In a sense, Yue Cao himself represents the continuity of the technical path "from visual understanding to video generation."

Team Size: A "Hyper-Elite Small Team" of Under 30

The team structure at Sand AI is vastly different from most Large Language Model companies: The entire team consists of fewer than 30 people, covering product, marketing, engineering, and research, with an average age of under 30. This small-team structure is relatively rare in the recent AI startup boom, but it means:

- Short decision chains and rapid iteration;

- High coupling between engineering and research, allowing paper-level innovations to turn directly into products;

- No "departmental walls" like in big corporations; three people can get a new pipeline up and running.

This "small but tough" DNA is a key reason why Sand AI was able to release a highly polished model like MAGI-1 in April 2025.

Company Mission and Open Source Stance

On their "About Us" page, Sand AI defines their mission as: "Advance AI to benefit everyone," and explicitly states their commitment to "embracing open source, driving progress through open collaboration, and making frontier AI available to all." This isn't just a marketing slogan—Sand AI’s subsequent releases, MAGI-1 and MAGI-1.1, were fully open-sourced under the Apache 2.0 license. They released weights, inference code, and distilled versions on GitHub and Hugging Face, an open-source stance that is quite aggressive in the current video generation landscape.

Sand AI's Flagship Product MAGI: A New Paradigm for Autoregressive Video Generation

Now that we've covered the team, let's dive into the real highlight—Sand AI's flagship MAGI series. It differs fundamentally from mainstream approaches like Sora, Kling, Veo, and HunyuanVideo: It isn't a pure diffusion model that "generates the entire video at once," but rather combines "autoregressive" and "diffusion" techniques to generate video in chunks.

MAGI Key Facts

| Dimension | MAGI / MAGI-1 / MAGI-1.1 |

|---|---|

| First Release | April 21, 2025 |

| Latest Version | MAGI-1.1 (100% Open Source) |

| Parameter Specs | 24B (Full) + 4.5B (Lightweight) |

| Distilled Versions | 4.5B Distill + Distill+Quant (Released May 26, 2025) |

| Open Source License | Apache 2.0 |

| Repository | github.com/SandAI-org/MAGI-1 / huggingface.co/sand-ai/MAGI-1 |

| Video Duration | Currently 1-10 seconds, supports infinite extension |

| Frames per Segment | 24 frames per chunk, joint denoising |

| Concurrency | Processes up to 4 chunks simultaneously |

| Generation Time | Typically 1-2 minutes |

| Style Support | Realistic video + 3D semi-cartoon style |

| Control | Second-level timeline control + chunk-based prompting |

| Physics Understanding | Significantly leading in video continuation on the Physics-IQ benchmark |

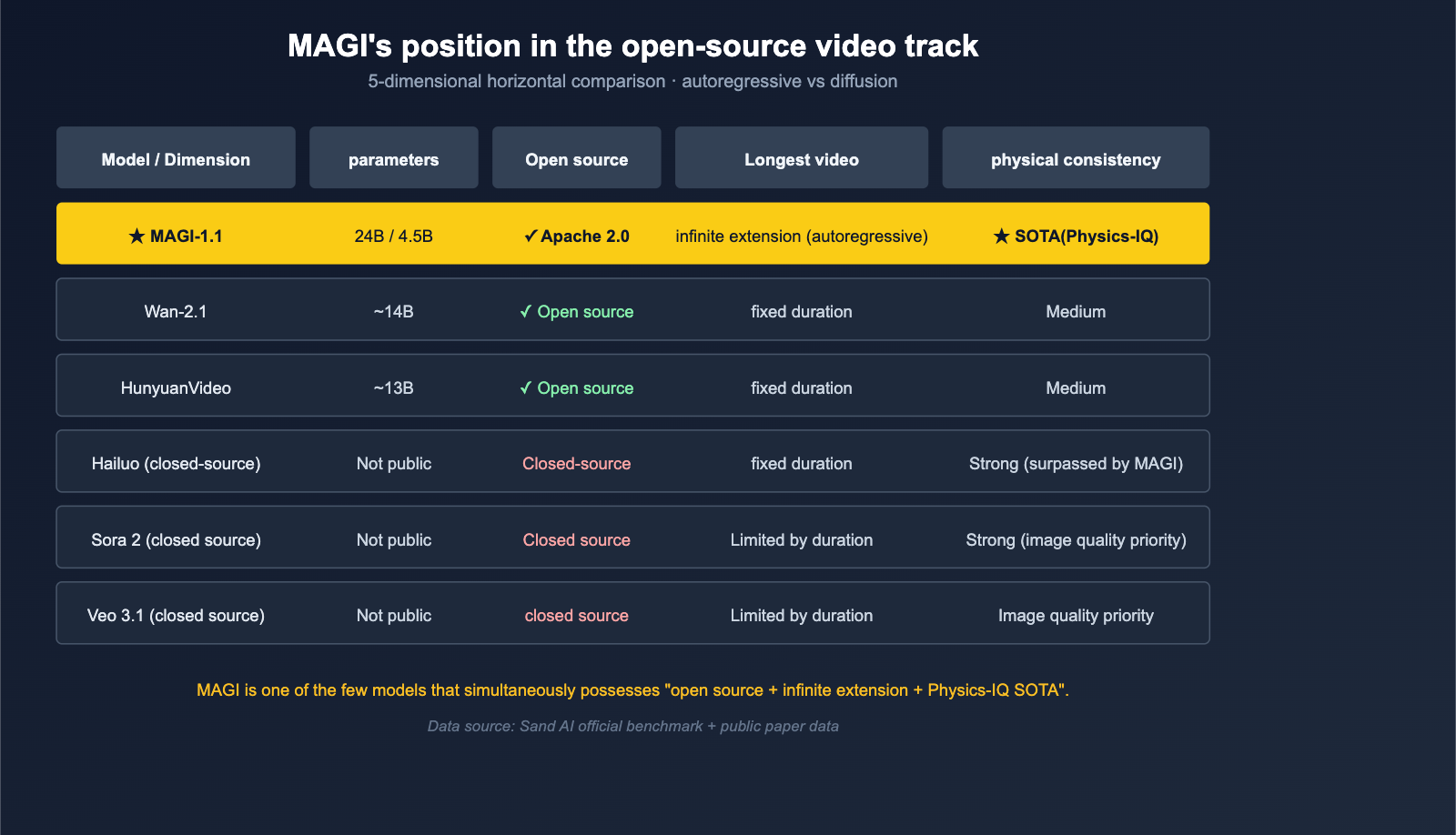

| Performance Positioning | SOTA among open-source models, outperforms Wan-2.1 / HunyuanVideo, surpasses closed-source Hailuo |

Autoregressive + Diffusion: Why It's a New Paradigm

Mainstream video diffusion models (Sora, Veo, Kling, etc.) typically denoise the entire video as a single tensor at once. While this approach is strong in image quality, it has two inherent flaws:

- Difficult to extend infinitely: The video length a model can generate at once is capped by VRAM and latency during inference;

- Weak physical consistency: Generating the whole sequence at once lacks the causal chain of "inferring the next frame based on the previous one."

MAGI takes the route of breaking the video into chunks of 24 frames, performing diffusion denoising within each chunk, and using autoregressive causal constraints between chunks. This means:

- Want a longer video? Just keep autoregressing further; there is no theoretical upper limit—which is why the sand.ai website emphasizes "infinite video extension capabilities";

- Want more realistic physics? Each chunk is conditioned on the chunks generated before it, giving the model a structural advantage on physical prediction benchmarks like Physics-IQ;

- Want finer control? You can feed a separate prompt for each chunk, creating a "segmented director" effect.
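The chunk-by-chunk idea above can be illustrated with a toy sketch. This is not MAGI's code: the "denoiser" here is a hypothetical stand-in that simply pulls noise toward a target, each frame is reduced to a single number, and `prompt_offsets` is an invented substitute for real per-chunk text prompts. Only the 24-frame chunk size comes from the article.

```python
import random

FRAMES_PER_CHUNK = 24  # chunk size stated in the article; the rest is illustrative


def toy_denoise(noisy_chunk, context, prompt_offset, steps):
    """Hypothetical stand-in for a learned denoiser.

    Moves every frame of the chunk toward a target built from the
    preceding context and this chunk's prompt.
    """
    target = context + prompt_offset
    chunk = list(noisy_chunk)
    for step in range(steps):
        blend = 1.0 / (steps - step)  # equals 1.0 on the final step
        chunk = [x + blend * (target - x) for x in chunk]
    return chunk


def generate_video(prompt_offsets, steps=8, seed=0):
    """Generate one chunk per prompt, each conditioned on earlier frames only."""
    rng = random.Random(seed)
    video = []
    for offset in prompt_offsets:
        # Causal conditioning: only frames that already exist are visible.
        context = video[-1] if video else 0.0
        noise = [rng.gauss(0.0, 1.0) for _ in range(FRAMES_PER_CHUNK)]
        video.extend(toy_denoise(noise, context, offset, steps))
    return video


video = generate_video([0.5, -0.2, 0.0])
print(len(video), "frames in", len(video) // FRAMES_PER_CHUNK, "chunks")
```

Extending the clip is just a matter of appending more entries to `prompt_offsets`, which mirrors why the autoregressive route has no built-in length cap, and giving each chunk its own entry mirrors chunk-wise prompting.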

This design has performed exceptionally well in Sand AI's internal tests: It beat strong competitors like Wan-2.1 and HunyuanVideo among open-source models, and even surpassed Hailuo in closed-source comparisons, with performance on the Physics-IQ benchmark "significantly better than all existing models."

Engineering Innovations in the MAGI Architecture

To make the autoregressive + diffusion approach actually work, Sand AI packed a whole suite of architectural modifications into MAGI:

| Module | Function |
|---|---|
| Block-Causal Attention | Creates causal connections between chunks, preventing future information leakage |
| Parallel Attention Block | Improves parallel efficiency within a single chunk |
| QK-Norm + GQA | Stabilizes training + reduces KV Cache burden |
| Sandwich Normalization in FFN | Further stabilizes large model training |
| SwiGLU | Enhances non-linear expressive power |
| Softcap Modulation | Controls extreme values in attention distribution |
| Transformer-based VAE | Faster decoding speed |

While none of these innovations are "breakthroughs" on their own, when stacked together, they give MAGI-1 the ability to handle long videos, strong physics, controllability, and scalability—four capabilities that are often difficult to balance simultaneously.
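To make the block-causal attention row more concrete, here is a minimal sketch of what such a mask could look like. It assumes the common formulation in which a token may attend to every token in its own chunk and in all earlier chunks; the function name and the tiny sizes are illustrative, and MAGI's actual implementation may differ.

```python
def block_causal_mask(num_chunks, tokens_per_chunk):
    """Return a boolean matrix where mask[q][k] is True if query token q
    may attend to key token k.

    Attention is unrestricted inside a chunk and causal across chunks,
    so no token can see a later chunk.
    """
    total = num_chunks * tokens_per_chunk
    return [
        [(k // tokens_per_chunk) <= (q // tokens_per_chunk) for k in range(total)]
        for q in range(total)
    ]


# Three chunks of two tokens each, printed as a grid (1 = visible).
for row in block_causal_mask(3, 2):
    print("".join("1" if visible else "0" for visible in row))
```

The printed grid is lower block-triangular: the first chunk sees only itself, while the last chunk sees everything before it. That is the property that prevents future information from leaking backward.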

🎯 Architecture Selection Advice: If your business requires "long video continuation" or "shot-level controllability," the autoregressive + diffusion paradigm of MAGI is worth considering. Before it provides a commercial API, you can use models already available for commercial use like Sora 2, Veo 3.1, or Kling 3.0 on APIYI (apiyi.com) to build your product prototype, and then seamlessly migrate once the MAGI commercial API matures.

How Sand AI Delivers MAGI to Developers

Having a powerful model is just the start; Sand AI has also designed a highly engineered delivery path. From casual users to developers and researchers, sand.ai provides three distinct entry points.

Three Ways to Use MAGI

| Entry Point | URL | Target Audience |
|---|---|---|
| Web App | magi.sand.ai/app/projects | Content creators / casual users; generate videos directly in the browser |
| API Platform | platform.sand.ai/docs | Developers looking to integrate MAGI into their own products |
| Open Source Repo | github.com/SandAI-org/MAGI-1 + huggingface.co/sand-ai/MAGI-1 | Researchers / self-hosting teams; run weights locally |

These three paths cover the entire spectrum of needs, from "no-code generation" to "engineering integration" and "full self-hosting." Compared to teams that "release papers without weights" or "provide demos without open-sourcing," Sand AI's approach is much more thorough.

The Engineering Significance of the 24B and 4.5B Versions

MAGI-1 offers both 24B and 4.5B parameter versions, which shows that Sand AI aims to cater to two distinct types of users:

- 24B Full Version: Geared toward researchers and enterprises with ample GPU resources who demand the highest image quality.

- 4.5B Lightweight Version: Designed for engineering teams balancing cost and latency. On May 26, 2025, Sand AI added 4.5B Distill and Distill+Quant variants, further reducing VRAM requirements.

This release cadence of "high-end and low-end dual models + continuous distillation" is essentially the most mature strategy for open-source Large Language Models in 2025-2026. In this regard, Sand AI is keeping pace with major open-source players like Mistral and Qwen.

Sand AI's Position and Insights in the Video Generation Track

By connecting the background, products, and delivery paths, Sand AI's position in the 2026 video generation track becomes quite clear.

Why It's Worth Watching

| Perspective | Sand AI's Differentiated Value |
|---|---|
| Academic Depth | Swin Transformer team DNA; continuity in network architecture innovation |
| Route Selection | Autoregression + Diffusion is a rare "third path," rather than a simple Sora clone |
| Open Source Commitment | Apache 2.0 + weights + code + Distill versions are all fully public |
| Product Form | Web / API / Self-hosting entry points are all available |
| Physical Understanding | Physics-IQ benchmark leads significantly; ideal for science, education, and research content |
| Long-form Video | The autoregressive route naturally supports infinite extension |

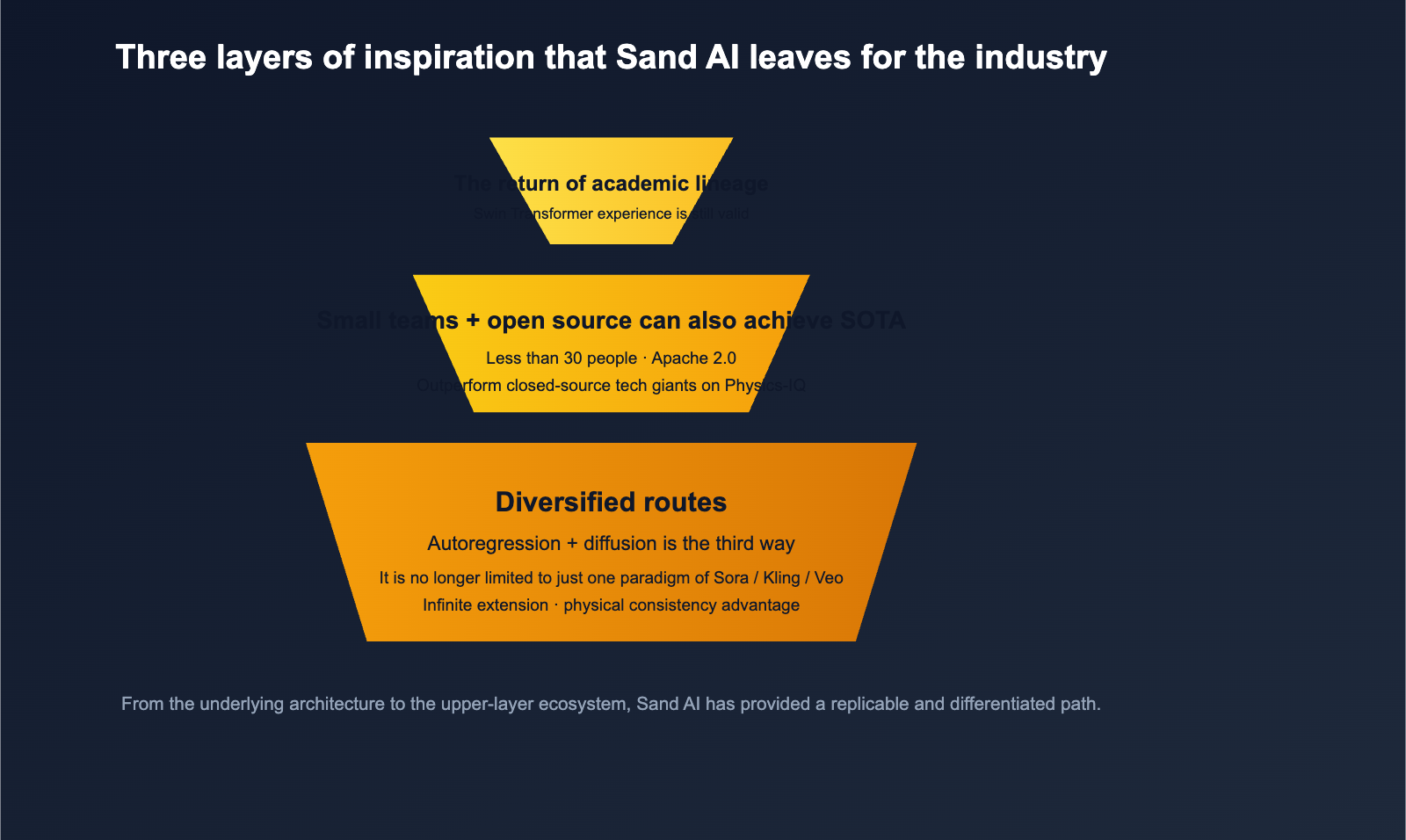

Three Industrial Insights

The rapid rise of Sand AI offers at least three insights for the entire video generation track:

- Diversification of Routes: Beyond Sora / Veo / Kling, the autoregressive + diffusion path is viable and holds structural advantages in physical consistency.

- Small Teams + Open Source Can Achieve SOTA: With fewer than 30 people and an Apache 2.0 license, they can still outperform many closed-source giants on Physics-IQ.

- The Return of Academic Pedigree: Training experience from "classic vision models" like the Swin Transformer still holds significant value in the era of video generation.

These three points serve as direct references for teams looking to enter the video generation space in 2026—you don't need 1,000 H100s to build a decent model, but you do need an engineering culture that "understands architecture, dares to open-source, and is willing to grind on physical consistency."

🎯 Ecosystem Integration Advice: For teams hoping to incorporate both "open-source and closed-source" video models into their products, we recommend managing models like Sora 2, Veo 3.1, Kling 3.0, and MAGI-1 under a unified interface. Before MAGI's commercial API is fully opened to the public, you can use APIYI (apiyi.com) to integrate already commercialized video models to validate your business flow while waiting for further openings on platform.sand.ai.
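A "unified interface" of this kind can be as small as a registry of interchangeable backends. The sketch below is purely illustrative: `VideoRouter`, `VideoResult`, and `fake_backend` are hypothetical names, and the placeholder backends fabricate a URI instead of calling any real service, since the actual request formats of MAGI or other providers are not covered in this article.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class VideoResult:
    backend: str  # which model produced the clip
    prompt: str   # the prompt that was sent
    uri: str      # where the finished clip would be stored


# A backend is any callable that turns a prompt into a clip URI.
Backend = Callable[[str], str]


class VideoRouter:
    """Hypothetical registry that sends one prompt to every registered model."""

    def __init__(self) -> None:
        self._backends: Dict[str, Backend] = {}

    def register(self, name: str, backend: Backend) -> None:
        self._backends[name] = backend

    def compare(self, prompt: str) -> List[VideoResult]:
        # Results come back in registration order.
        return [
            VideoResult(name, prompt, backend(prompt))
            for name, backend in self._backends.items()
        ]


def fake_backend(label: str) -> Backend:
    """Placeholder backend: fabricates a URI rather than generating video."""
    return lambda prompt: f"memory://{label}/{len(prompt)}.mp4"


router = VideoRouter()
router.register("magi-1", fake_backend("magi-1"))
router.register("other-model", fake_backend("other-model"))
for result in router.compare("a glass ball rolling down a ramp"):
    print(result.backend, result.uri)
```

Swapping a placeholder for a real client later only requires registering a different callable, so the comparison logic and the product code that consumes `VideoResult` stay unchanged.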

Who is Sand AI for (and who is it not for)?

Back to a very practical question: Should you be using Sand AI's MAGI right now? The answer depends on your specific needs for video generation.

Who it's for

| User Group | Why it's a good fit |
|---|---|
| Researchers / Authors | Fully open-source + new autoregressive paradigm, perfect for follow-up academic work |
| Self-hosting / Private deployment teams | Apache 2.0 + 4.5B distilled version, weights can be run locally |
| Physics/Education content creators | Leading Physics-IQ, excellent physical consistency |
| Long-form video creators | Autoregressive architecture naturally supports infinite extension |
| Product teams needing "shot-level control" | Supports second-level timelines + chunk-wise prompting |
| Chinese AI ecosystem participants | Beijing-based team, friendly to Chinese prompts |

Who it's not for

| User Group | Reason |
|---|---|
| No-code users just looking for "quick results" | UX of mature products like Sora 2 / Kling is still more lightweight |
| Small teams unwilling to self-host | The platform.sand.ai commercial API is still being refined |
| Those needing 4K + long duration + audio | Current focus is research/creative, not professional film post-production |
| Pure application layers indifferent to "weight licensing" | Calling closed-source APIs directly is less of a headache |

🎯 Trial Suggestion: If you just want to "see the results immediately," we recommend visiting magi.sand.ai to try the web app without logging in or with a quick registration. If you want to compare the real differences between Sand AI and other video models, you can use an API proxy service like APIYI (apiyi.com) to call Sora 2 / Veo 3.1 / Kling 3.0. By using the same set of prompts to generate videos in parallel, you can intuitively judge whether MAGI's autoregressive route is truly better suited for your business.

Sand AI FAQ

Q1: What is Sand AI? Is it in the same category as Stability AI or Midjourney?

Sand AI is an AI startup founded in Beijing, China, by Yue Cao, a core author of Swin Transformer. The core team consists of fewer than 30 people. Unlike Stability AI (which focuses on images) or Midjourney (which is closed-source and subscription-based), Sand AI focuses on video generation and has chosen a fully open-source (Apache 2.0) route. Its flagship products are the autoregressive video generation models MAGI-1 / MAGI-1.1.

Q2: What is the fundamental difference between MAGI-1 and Sora, Kling, or Veo?

The biggest difference lies in the technical approach: Mainstream models like Sora / Veo / Kling generate the entire video at once, whereas MAGI breaks the video into chunks of 24 frames each. It performs diffusion denoising within the chunks and uses autoregressive causal connections between them. This paradigm gives MAGI a structural advantage in "infinite video extension" and "physical consistency"—the official Sand.ai team has demonstrated significantly leading results on the Physics-IQ benchmark.

Q3: Is MAGI-1 truly fully open-source? Can it be used commercially?

Yes. MAGI-1 and MAGI-1.1 are open-sourced under the Apache 2.0 license on GitHub (SandAI-org/MAGI-1) and Hugging Face (sand-ai/MAGI-1), with all code, weights, and inference tools included. Apache 2.0 is a permissive open-source license that allows commercial use, modification, and closed-source derivatives, provided you include a copy of the license, retain the existing copyright and attribution notices, and mark any files you changed. This means you can use MAGI-1 in your own products or fine-tune it further.

Q4: What hardware is needed to run MAGI-1 locally?

The full version of MAGI-1 is a 24B parameter model, which requires professional-grade multi-GPU setups for local inference. If your hardware budget is limited, we recommend using the 4.5B Distill version or Distill+Quant version released by Sand AI in May 2025. The VRAM requirements are significantly lower, allowing it to run on a single high-end consumer GPU. If you just want to "see the effects," we suggest visiting magi.sand.ai to use the web app, which requires no local configuration.
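A quick back-of-the-envelope calculation shows why the 24B model calls for multiple GPUs. The sketch below only counts memory for the weights themselves; the byte widths (2 bytes for 16-bit weights, 1 byte for 8-bit quantization) are assumptions about how a checkpoint might be stored, not confirmed details of the released files, and real inference needs extra room for activations and caches.

```python
GB = 1e9  # decimal gigabytes


def weight_memory_gb(params_billion: float, bytes_per_param: float) -> float:
    """Memory needed just to hold the weights, ignoring activations and caches."""
    return params_billion * 1e9 * bytes_per_param / GB


# Byte widths are assumptions for illustration, not published checkpoint specs.
scenarios = [
    ("24B, 16-bit weights", 24.0, 2.0),
    ("4.5B, 16-bit weights", 4.5, 2.0),
    ("4.5B, 8-bit quantized", 4.5, 1.0),
]
for label, params, width in scenarios:
    print(f"{label}: about {weight_memory_gb(params, width):.1f} GB")
```

Under these assumptions the 24B weights alone come to roughly 48 GB, beyond a single consumer card, while a 4.5B model needs about 9 GB at 16 bits and about 4.5 GB at 8 bits. That is consistent with the advice to start with the distilled or quantized variants on limited hardware.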

Q5: Does Sand AI have a commercial API? How does it compare to Sora or Kling?

Sand AI's commercial API platform, platform.sand.ai, is already live, but its ecosystem maturity is still catching up to models like Sora and Kling that are already fully commercialized. If you are building a video generation product that needs to be "immediately available, have sufficient quotas, and support Chinese prompts," we suggest using an API proxy service like APIYI (apiyi.com) to call already commercialized video models like Sora 2, Veo 3.1, or Kling 3.0 to get your business running. Meanwhile, keep an eye on the release schedule of Sand AI's subsequent APIs and switch or integrate them in parallel when the time is right.

Q6: Is Sand AI worth paying attention to moving forward?

Absolutely. Two reasons: First, the academic pedigree of the Swin Transformer team means that subsequent MAGI iterations are likely to continue innovating at the architectural level rather than just stacking data. Second, Sand AI has chosen a differentiated path of "autoregressive + diffusion + fully open-source." If this path proves successful, it will influence the paradigm choices for the entire open-source video generation track for 2026-2027. Whether you are a researcher, product developer, or content creator, we recommend adding sand.ai to your watchlist.

Summary: The Final Answer to "What is Sand AI?"

Returning to our original question—"What is Sand AI?"—we can now provide a comprehensive answer: Sand AI is a lean, sub-30-person AI startup founded in Beijing by Cao Yue, a core author of the Swin Transformer. Their flagship product is the open-source autoregressive video generation model, MAGI-1 / MAGI-1.1. It has achieved performance on physical consistency benchmarks like Physics-IQ that surpasses most open-source and some closed-source models, and they’ve released the full set of weights and code under the Apache 2.0 license on GitHub and Hugging Face. They are a dark horse in the video generation space, characterized by "strong academic roots, innovative technical paths, and a commitment to radical open-source."

For developers and researchers, the true significance of Sand AI isn't just "another video model." Instead, it provides a replicable, differentiated path for the entire video generation industry: one that doesn't rely on massive compute, closed-source walled gardens, or marketing hype, but rather on academic rigor, architectural innovation, and complete transparency. If the pre-2025 video generation landscape was dominated by Sora, the emergence of Sand AI introduces the possibility that, by 2026, the open-source ecosystem can prove that "small teams can also achieve SOTA."

🎯 Final Recommendation: To stay on top of the latest developments from Sand AI and MAGI, we suggest three steps: 1) Follow updates from sand.ai and the sand-ai organization on Hugging Face; 2) Run your own real-world use cases through the magi.sand.ai web app to get a firsthand feel for the model; 3) Integrate MAGI alongside commercial models like Sora 2, Veo 3.1, and Kling 3.0 into a unified platform like APIYI (apiyi.com) to conduct a side-by-side comparison. This will help you determine its true value for your business based on your own internal benchmarks. Once you've completed this process, the answer to whether Sand AI belongs in your video generation tech stack will become clear.

Author: APIYI Team | Focusing on the deployment of Large Language Models and the open-source ecosystem. For more evaluations of video and multimodal models, please visit APIYI at apiyi.com.