If you’ve been hearing everyone talk about "Muse Spark" lately but aren't quite sure how it fits in with Llama 4, ChatGPT, or Claude, this guide is for you. Muse Spark is the brand-new flagship Large Language Model that Meta released on April 8, 2026. It’s the first fully self-developed model launched since the formation of Meta’s Superintelligence Labs (MSL), and it represents Meta’s most significant AI comeback attempt since the Llama 4 stumble.

Key Takeaways: By the end of this article, you’ll understand what Muse Spark is, how it differs from Llama 4, what the "Contemplating" mode means, and how you can start using it today.

What is Muse Spark: Key Points

In a nutshell: Muse Spark = The first self-developed multimodal reasoning model from Meta’s Superintelligence Labs.

It was built over nine months by a team led by Alexandr Wang (founder of Scale AI, who joined Meta in 2025 as Chief AI Officer), under the project codename "Avocado." Muse Spark marks a pivot for Meta: after the setbacks of the Llama series, the company is shifting from an "open-source parameter-stacking" approach to a "superintelligence + closed-source + self-developed" strategy.

| Feature | Description | Value |
|---|---|---|
| Developer | Meta Superintelligence Labs (MSL) | Meta's new flagship initiative |
| Release Date | April 8, 2026 | ~1 year after Llama 4 |
| Leader | Alexandr Wang (ex-Scale AI founder) | Meta Chief AI Officer |
| Codename | Avocado | 9 months of development |
| Model Family | First of the Muse family | More to come |
| Architecture | Native multimodal reasoning model | Supports tool use, visual CoT, multi-agent orchestration |
| Input | Text / Audio / Image | Multimodal perception |
| Output | Text (currently) | Potential for expansion |
| Killer Feature | Contemplating mode | Deep reasoning similar to OpenAI o1 |
| Open Source | ❌ Closed (Meta plans to open-source in the "future") | Strategic shift |

💡 Quick Take: If the Llama series was "Meta’s gift to the open-source community," then Muse Spark is "the core engine for Meta’s own business." Zuckerberg’s strategy here is crystal clear: make the model powerful first, then consider open-sourcing it. If you need to experience current flagship Large Language Models (like GPT-5, Claude Opus 4.6, or Gemini 3 Pro) right now, you can access them all via APIYI (apiyi.com), and we'll be sure to add Muse Spark as soon as its API is released.

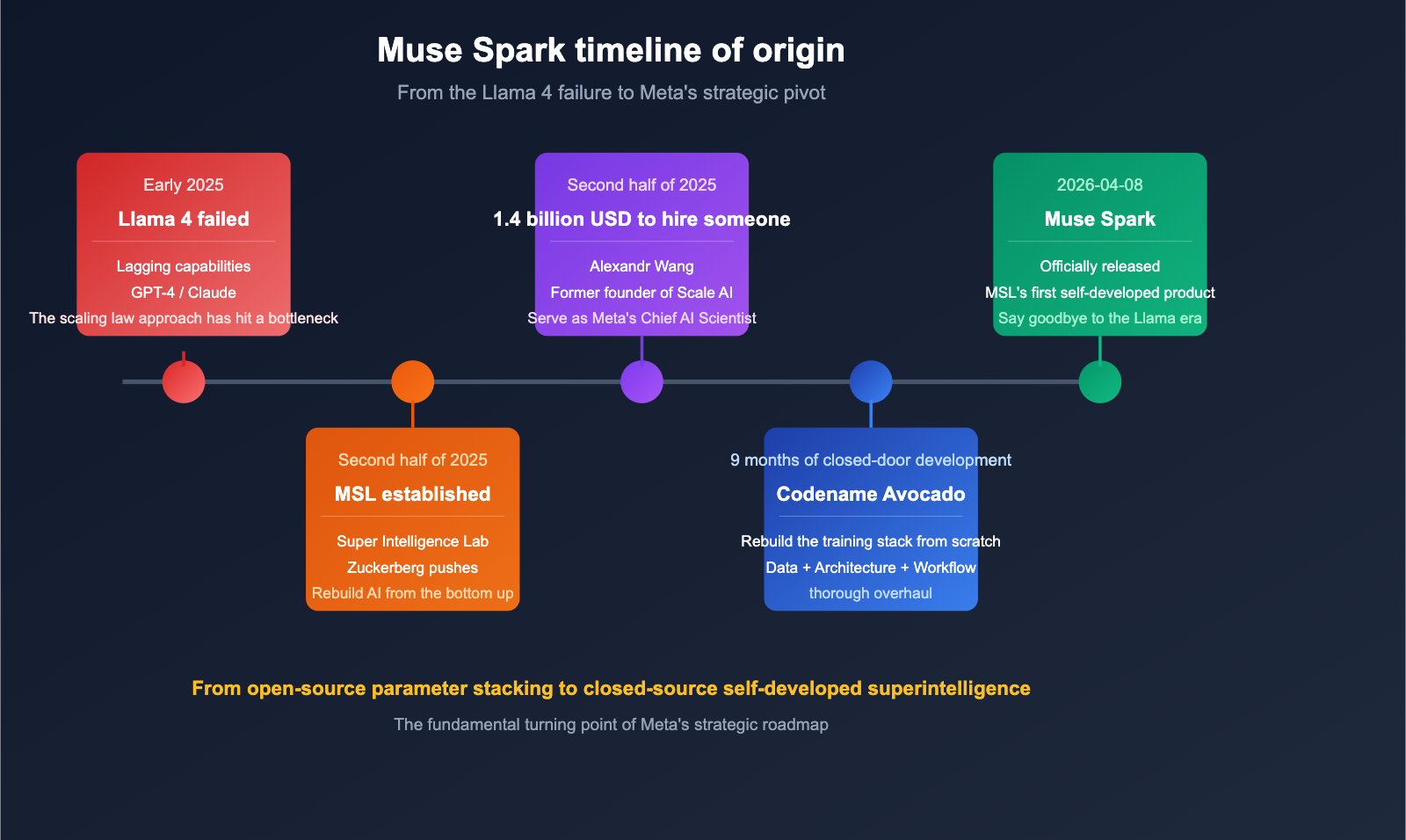

The Origin Story of Muse Spark

To understand why Muse Spark matters, you’ve got to look at the context of its birth. Over the past 18 months, Meta has undergone a massive shift in its AI strategy.

Phase 1: The Llama 4 Setback (Early 2025)

Meta’s Llama series was once the gold standard for open-source Large Language Models. However, the Llama 4 release in early 2025 lagged behind GPT-4, Claude 3.5, and Gemini 1.5 in several key areas. The community generally felt that the "parameter-stacking" approach used for Llama 4 had hit a wall.

Phase 2: Founding the Superintelligence Labs (MSL)

Reportedly frustrated with the progress of Llama 4 and feeling that Meta was falling behind competitors like OpenAI, Anthropic, and Google, Mark Zuckerberg took action. In the second half of 2025, Meta established the Meta Superintelligence Labs (MSL) with a mission to "rebuild Meta’s AI capabilities from the ground up."

Phase 3: The $14 Billion Deal That Brought In Alexandr Wang

To jumpstart MSL, Meta made a roughly $14 billion investment in Scale AI that brought its founder, Alexandr Wang, on board as Chief AI Officer to lead the lab. It was one of the largest "acqui-hire"-style deals in the history of the AI industry.

Phase 4: 9 Months of "Deep Work" → The Birth of Muse Spark

After Alexandr Wang took the helm, his team spent nine months of intense development on the project codenamed "Avocado," performing a "ground-up overhaul" of Meta’s AI training stack. The result of this effort was the release of Muse Spark on April 8, 2026.

🎯 Key Takeaway: Muse Spark isn't just another model; it represents a strategic pivot for Meta, away from "open-source parameter-stacking + community-driven" and toward "closed-source proprietary + superintelligence + business integration." This shift has profound implications for the entire AI open-source community.

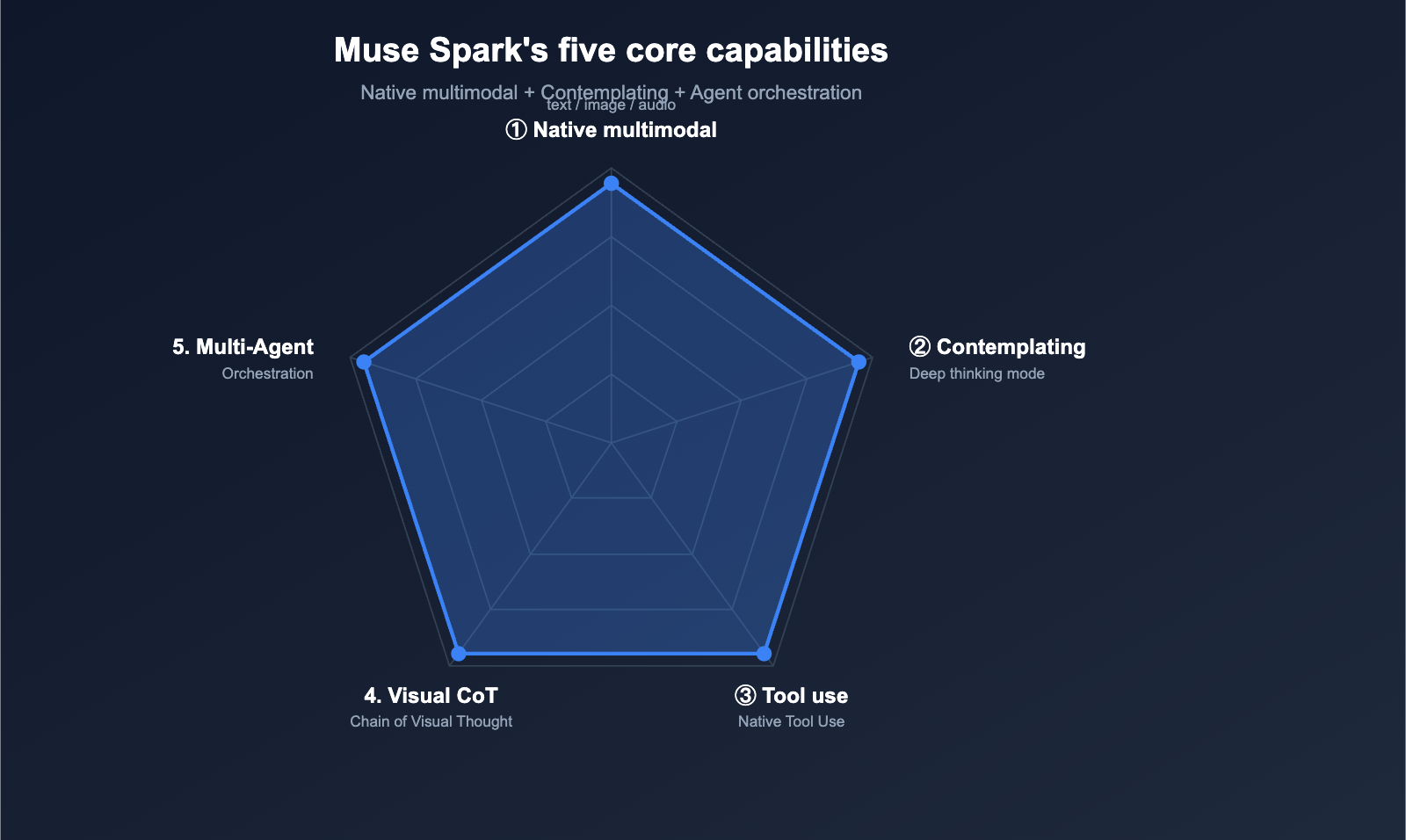

Core Capabilities of Muse Spark

Meta officially defines Muse Spark as a "native multimodal reasoning model that supports tool use, visual chain-of-thought, and multi-agent orchestration." Let's break down its six core capabilities.

Capability 1: Native Multimodal Perception

Muse Spark accepts text, audio, and images simultaneously. In a demo shared on its blog, Meta showed: "Meta AI can understand what you see — take a photo of a snack shelf at the airport, and it can identify and rank all the snacks based on their protein content."

This "see and rank" ability means Muse Spark doesn't just "describe images"; it can understand entities in an image, associate them with external knowledge, and perform reasoning-based ranking, bringing it closer to true multimodal intelligence.
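To make the three steps in that description concrete (recognize entities, attach external knowledge, then rank), here is a minimal Python sketch of the pipeline's shape. It is not Muse Spark's implementation: `detect_entities` is a hypothetical stub standing in for the vision step, and the protein table holds placeholder values rather than real nutrition facts.

```python
# Step 1 (hypothetical stub): entity names a vision model might detect in the photo.
def detect_entities(image_path: str) -> list[str]:
    """Placeholder for the perception step; a real system would run a vision model."""
    return ["pretzels", "trail mix", "beef jerky", "gummy bears"]


# Step 2: external knowledge to associate with each entity.
# Placeholder values (grams of protein per serving), for illustration only.
PROTEIN_G_PER_SERVING: dict[str, float] = {
    "pretzels": 3.0,
    "trail mix": 6.0,
    "beef jerky": 9.0,
    "gummy bears": 0.0,
}


def rank_by_protein(image_path: str) -> list[tuple[str, float]]:
    """Detect snacks, look up their protein, and rank from highest to lowest."""
    entities = detect_entities(image_path)
    # Skip anything the knowledge table does not cover instead of guessing a value.
    known = [
        (name, PROTEIN_G_PER_SERVING[name])
        for name in entities
        if name in PROTEIN_G_PER_SERVING
    ]
    # Step 3: reasoning-based ranking, here reduced to a sort on the looked-up value.
    return sorted(known, key=lambda pair: pair[1], reverse=True)


for rank, (name, grams) in enumerate(rank_by_protein("snack_shelf.jpg"), start=1):
    print(f"{rank}. {name}: {grams:g} g protein per serving")
```

Dropping entities the knowledge table does not cover is a deliberate choice: a ranking built on guessed values would look authoritative while being wrong.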

Capability 2: Contemplating Mode

This is Muse Spark's most highly anticipated feature, similar to the reasoning modes found in OpenAI's o1 / o3. When faced with complex problems, the model enters a "deep thinking" state, utilizing more tokens and time to arrive at a solution.
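Meta has not published how Contemplating mode works internally. To illustrate the general idea of trading extra test-time compute for accuracy, here is a minimal sketch of self-consistency (sample several reasoning passes, then take a majority vote), a well-known technique that is not necessarily what Muse Spark does. `noisy_reasoning_pass` is a hypothetical toy solver that is right 60% of the time.

```python
import random
from collections import Counter


def noisy_reasoning_pass(question: int, rng: random.Random) -> int:
    """Hypothetical stand-in for one sampled reasoning pass.

    The correct answer is question * 2; a single pass returns it 60% of the
    time and is off by one otherwise.
    """
    correct = question * 2
    if rng.random() < 0.6:
        return correct
    return correct + rng.choice([-1, 1])


def solve(question: int, passes: int, rng: random.Random) -> int:
    """Spend more test-time compute (more passes) and return the majority answer."""
    votes = Counter(noisy_reasoning_pass(question, rng) for _ in range(passes))
    return votes.most_common(1)[0][0]


def accuracy(passes: int, trials: int = 500, seed: int = 0) -> float:
    """Fraction of trials in which the majority vote equals the correct answer."""
    rng = random.Random(seed)
    hits = sum(solve(21, passes, rng) == 42 for _ in range(trials))
    return hits / trials


print(f"1 pass:    {accuracy(1):.2f}")
print(f"25 passes: {accuracy(25):.2f}")
```

The single-pass run is cheap but wrong about 40% of the time; the 25-pass run costs 25 times as many calls and is almost always right, which is the same cost/quality trade a "fast" versus "thinking" mode makes.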

Official benchmark results for Contemplating mode:

| Benchmark | Muse Spark Contemplating | Significance |
|---|---|---|
| Humanity's Last Exam | 58% | Among the hardest expert-written, multi-domain AI benchmarks available |
| FrontierScience Research | 38% | Frontier scientific reasoning capability |

These figures place it firmly in the "frontier model club," on par with Claude Opus 4.6, GPT-5, and Gemini 3 Pro.

Capability 3: Thought Compression

Muse Spark also introduces an intriguing feature called "Thought Compression": the model might use a large number of tokens to solve a problem the first time, but after "internalizing" the process, it uses significantly fewer tokens to solve similar problems in the future.

This acts as the "model's own learning curve": the more you use it, the more token-efficient and faster it becomes. This is a huge advantage for long-running agents and repetitive tasks.

Capability 4: Visual Chain-of-Thought + Tool Use + Multi-Agent Orchestration

Muse Spark natively supports three agent-oriented capabilities:

- Visual Chain-of-Thought: Explicit reasoning during image understanding, rather than just "answering at a glance."

- Tool Use: Native tool-calling interface, capable of connecting to the web, calculators, code execution, and more.

- Multi-Agent Orchestration: A single Muse Spark instance can orchestrate multiple sub-agents to handle complex tasks.

This combination of capabilities makes Muse Spark more than just a chat model; it's an agent engine that can be directly integrated into business systems.
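The sketch below shows the general shape of tool use combined with multi-agent orchestration, under loud assumptions: the tool registry, the `sub_agent` and `orchestrate` helpers, and the plan format are all hypothetical, and Meta has not published Muse Spark's tool-calling interface. In a real agent system the model itself would write the plan; here it is hard-coded so the example runs on its own.

```python
from typing import Callable

# Hypothetical tool registry: names and behaviors are illustrative only and
# are not Muse Spark's actual tool-calling interface.
TOOLS: dict[str, Callable[[str], str]] = {
    # Adds "+"-separated integers without using eval().
    "calculator": lambda expr: str(sum(int(term) for term in expr.split("+"))),
    "uppercase": lambda text: text.upper(),
}


def sub_agent(subtask: dict[str, str]) -> str:
    """Handle one subtask by calling the tool it names."""
    tool = TOOLS.get(subtask["tool"])
    if tool is None:
        # Report the failure as data so the orchestrator can re-plan.
        return f"error: unknown tool {subtask['tool']!r}"
    return tool(subtask["input"])


def orchestrate(plan: list[dict[str, str]]) -> dict[str, str]:
    """Dispatch each subtask in the plan to a sub-agent and collect results by id."""
    return {step["id"]: sub_agent(step) for step in plan}


plan = [
    {"id": "total", "tool": "calculator", "input": "12+30"},
    {"id": "title", "tool": "uppercase", "input": "snack report"},
    {"id": "search", "tool": "web_search", "input": "protein bars"},
]
print(orchestrate(plan))
# {'total': '42', 'title': 'SNACK REPORT', 'search': "error: unknown tool 'web_search'"}
```

Returning an error string for an unknown tool, instead of raising, keeps one failed subtask from aborting the whole plan and gives the orchestrating model something it can reason about on the next turn.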

Capability 5: Specialized Optimization for Healthcare

Meta has invested heavily in the healthcare domain, collaborating with over 1,000 doctors to curate training data. This allows the model to "generate interactive displays to interpret and explain health information." This is a unique focus, suggesting that Meta is betting big on the "AI personal health assistant" market.

Capability 6: An Order of Magnitude Improvement in Compute Efficiency

What has really surprised engineers is Muse Spark's training efficiency. Meta claims:

"Compared to Llama 4 Maverick, Muse Spark can achieve the same level of capability with one order of magnitude less compute."

This suggests that over the past nine months, Meta has rewritten its training stack, reconstructing data strategies, architecture, and training workflows from the ground up. This efficiency gain offers valuable insights for all researchers in the field.

Muse Spark vs. Llama 4 vs. Leading Flagship Models

| Comparison Dimension | Muse Spark | Llama 4 Maverick | GPT-5 | Claude Opus 4.6 |
|---|---|---|---|---|
| Developer | Meta MSL | Meta | OpenAI | Anthropic |
| Route | Closed-source | Open-source | Closed-source | Closed-source |
| Multimodal | Native (Text/Img/Audio) | Text-focused | Native | Native |
| Reasoning Mode | ✅ Contemplating | ❌ | ✅ | ✅ Extended Thinking |
| Tool Use | ✅ Native | ⚠ | ✅ | ✅ |
| Multi-Agent Orchestration | ✅ Native | ❌ | ⚠ | ⚠ |
| Training Compute Efficiency | 10x Higher | Baseline | — | — |
| Humanity's Last Exam | 58% (Contemplating) | < 20% | Same tier | Same tier |
| Current Access | meta.ai / Meta AI app | Open weights | API + ChatGPT | API + Claude |
| API Status | Private preview | Public | Public | Public |

🎯 Recommendation: For developers, the current "barrier" to Muse Spark is that its API is still in private preview, making it inaccessible for direct integration. If you need to use frontier Large Language Models in production today, the most pragmatic choices remain GPT-5, Claude Opus 4.6, or Gemini 3 Pro, all of which can be accessed via APIYI (apiyi.com). Once the Muse Spark API is released to the public, you can then perform a comparative evaluation.

Getting Started with Muse Spark

Although the Muse Spark API isn't public yet, you can start using it for free today.

Option 1: Web (Fastest)

The easiest way is to head straight to meta.ai:

- Visit meta.ai

- Log in with your Facebook or Instagram account

- Start chatting; Muse Spark is the default model

- Completely free (though Meta may apply rate limits)

Option 2: Meta AI Mobile App

Download the official Meta AI app (iOS / Android) and log in. The mobile version has a great advantage: you can take photos and upload them directly for Muse Spark to analyze. It's the most intuitive way to experience "native multimodal" capabilities.

Option 3: Social Platform Integration (Coming Soon)

Meta has announced that over the next few weeks, Muse Spark will be integrated into:

- WhatsApp chat

- Instagram Direct Messages

- Facebook Messenger

- Ray-Ban Meta AI glasses

This means if you're already using any of Meta's products, you'll soon be using Muse Spark "passively" as part of your daily workflow.

Option 4: API (Private Preview)

Muse Spark currently offers a private API preview, which is only available to select users. Regular developers cannot apply for access at this time. Meta has mentioned that they will "open up broader API access in the future," but hasn't provided a specific timeline.

💡 Practical Advice: Until the Muse Spark API is officially released, the most pragmatic workflow is: (1) Use the web or app version of meta.ai to experience Muse Spark's multimodal and "contemplating" capabilities; (2) In your production applications, use an API proxy service like APIYI (apiyi.com) to access currently available flagship models like GPT-5, Claude Opus 4.6, or Gemini 3 Pro; (3) Once the Muse Spark API is public, evaluate the migration benefits immediately.

Who is Muse Spark For?

Scenario 1: Meta Ecosystem Users

If you’re a heavy user of Facebook, Instagram, WhatsApp, or Messenger, Muse Spark is about to "seamlessly land" in these products. You won't need to do a thing; you'll be using it automatically within a few weeks.

Scenario 2: Multimodal Application Explorers

Muse Spark’s multimodal perception capabilities (especially image understanding + knowledge reasoning) are incredibly practical in certain scenarios—like shopping via photos, health queries, or visual learning. If you're researching products in these areas, I recommend trying it out on meta.ai first.

Scenario 3: Developers of Health-Related Apps

Meta has specifically optimized Muse Spark for the health sector (with training data curated in collaboration with over 1,000 doctors). If you're building AI applications in the health space, Muse Spark is definitely one to keep an eye on for the long term.

Scenario 4: AI Model Researchers

Muse Spark’s two key features—"an order of magnitude less compute" and "thought compression"—are technically fascinating. Even if you can't get an API key in the short term, researchers should definitely follow Meta’s upcoming papers and technical reports.

Scenario 5: Want Cutting-Edge Tech Without the Wait?

If you don't fit into any of the categories above but want to use a "top-tier model on par with Muse Spark" right now, you can directly access GPT-5, Claude Opus 4.6, or Gemini 3 Pro via APIYI (apiyi.com). These models are in the same league as Muse Spark on benchmarks like "Humanity's Last Exam" and have fully open, accessible APIs.

Muse Spark FAQ

Q1: Is Muse Spark the same as Llama 5?

No. Muse Spark is the first in Meta’s brand-new model family (the Muse series) and is not part of the Llama lineage. Meta has clearly chosen to move away from the Llama naming convention for two reasons: (1) The Llama series follows an open-source path, while Muse Spark is closed-source; (2) Muse Spark is the product of the rebuilt MSL training stack, making it technically distinct from Llama. Meta has stated they "might" open-source a version of Muse Spark in the future, but there’s no specific timeline.

Q2: Is Muse Spark really free?

Yes. On meta.ai and the Meta AI app, Muse Spark is currently free to use. Meta might impose rate limits on individual users to prevent abuse, but there is no charge for consumer access. This is a classic strategy where Meta trades a "free flagship model" for user engagement and data.

Q3: Does Muse Spark have an API? Can I use it to build apps?

Currently, Muse Spark only offers a private API preview, available only to selected users. Regular developers cannot apply for it directly. Meta has indicated that they will open up broader API access in the future, but they haven't provided a specific date. If you want to integrate a top-tier Large Language Model into your production apps today, the most pragmatic choice is to use APIYI (apiyi.com) to access flagship models like GPT-5, Claude Opus 4.6, or Gemini 3 Pro, which already have public APIs.

Q4: Is the “Contemplating” mode the same as OpenAI o1?

The underlying philosophy is the same: both increase the number of inference tokens spent at test time. The difference is that while OpenAI o1/o3 are separate branches of "specialized reasoning models," Muse Spark treats "Contemplating" as an optional mode within the same model. This means you don't have to switch between a "fast model" and a "thinking model"; one Muse Spark is enough. This design philosophy is closer to Anthropic Claude’s Extended Thinking.

Q5: What does a 58% score on “Humanity’s Last Exam” mean?

This is the level of the current "cutting-edge model club." "Humanity's Last Exam" is one of the industry's toughest AI benchmarks, built from expert-written questions covering fields like physics, mathematics, biology, humanities, and law. A score of 58% (in Contemplating mode) puts it in the same tier as Claude Opus 4.6 and GPT-5, far surpassing Llama 4 Maverick (which scores below 20%) and the Llama 3 series.

Q6: Can developers in China use Muse Spark?

You can access the web version of meta.ai (provided you handle the network requirements), but the Meta AI app and its integration with WhatsApp/Instagram are essentially unavailable in mainland China. For domestic developers, the most pragmatic approach is: (1) Use the web version to experience Muse Spark’s multimodal and "Contemplating" capabilities; (2) Integrate available flagship models like GPT-5, Claude Opus 4.6, or Gemini 3 Pro into your own products via APIYI (apiyi.com) to enjoy stable, low-latency, pay-as-you-go access; and (3) Keep an eye out for when the Muse Spark API becomes publicly available.

Summary

Muse Spark is undoubtedly one of the most significant milestones in the AI industry for 2026. It signals three major shifts:

- A Fundamental Pivot in Meta's Strategy: Moving from "open-source parameter scaling" to "closed-source, self-developed superintelligence," a complete change in direction.

- An Engineering Breakthrough in Training Efficiency: If the claim that it "achieves equivalent capabilities with an order of magnitude less compute" holds true, it will reshape training cost expectations across the entire industry.

- Multimodal Reasoning + Agent Orchestration as the New Standard: Muse Spark, GPT-5, Claude Opus 4.6, and Gemini 3 Pro are all converging toward a future of "native multimodal capabilities + contemplating + tool use + multi-agent orchestration."

🚀 Actionable Advice: If you want to experience Muse Spark today, here’s the fastest path: First, head over to meta.ai and log in with your Facebook account to start a conversation. Second, upload an image to test its multimodal perception. Third, keep your technical momentum by integrating flagship models with open APIs, such as GPT-5 or Claude Opus 4.6, via APIYI (apiyi.com). Once the Muse Spark API is officially released, you'll be able to seamlessly switch and compare performance on the APIYI platform to make the best choice for your needs.

Author: APIYI Team, dedicated to providing developers with stable access to mainstream Large Language Models. Visit apiyi.com to learn more.

References

- Meta AI Official Blog – Introducing Muse Spark
  Link: ai.meta.com/blog/introducing-muse-spark-msl
  Description: Official documentation on model architecture, capabilities, and benchmarks.

- Meta Press Release
  Link: about.fb.com/news/2026/04/introducing-muse-spark-meta-superintelligence-labs
  Description: Product positioning and launch details.

- TechCrunch – In-depth Report on Muse Spark
  Link: techcrunch.com/2026/04/08/meta-debuts-the-muse-spark-model-in-a-ground-up-overhaul-of-its-ai
  Description: Analysis of the "ground-up overhaul" strategic shift.

- CNBC – Meta's $14 Billion Deal and Muse Spark
  Link: cnbc.com/2026/04/08/meta-debuts-first-major-ai-model-since-14-billion-deal-to-bring-in-alexandr-wang.html
  Description: Background on Alexandr Wang joining Meta.

- Fortune – Analysis of Meta AI's Transformation
  Link: fortune.com/2026/04/08/meta-unveils-muse-spark-mark-zuckerberg-ai-push
  Description: Strategic roadmap and market reaction.

- 9to5Mac – Introduction to Contemplating Mode
  Link: 9to5mac.com/2026/04/08/goodbye-llama-meta-unveils-muse-spark-ai-with-new-contemplating-mode
  Description: Detailed breakdown of the "contemplating" mode features.

- VentureBeat – Goodbye Llama
  Link: venturebeat.com/technology/goodbye-llama-meta-launches-new-proprietary-ai-model-muse-spark-first-since
  Description: The transition from Llama to the Muse architecture.