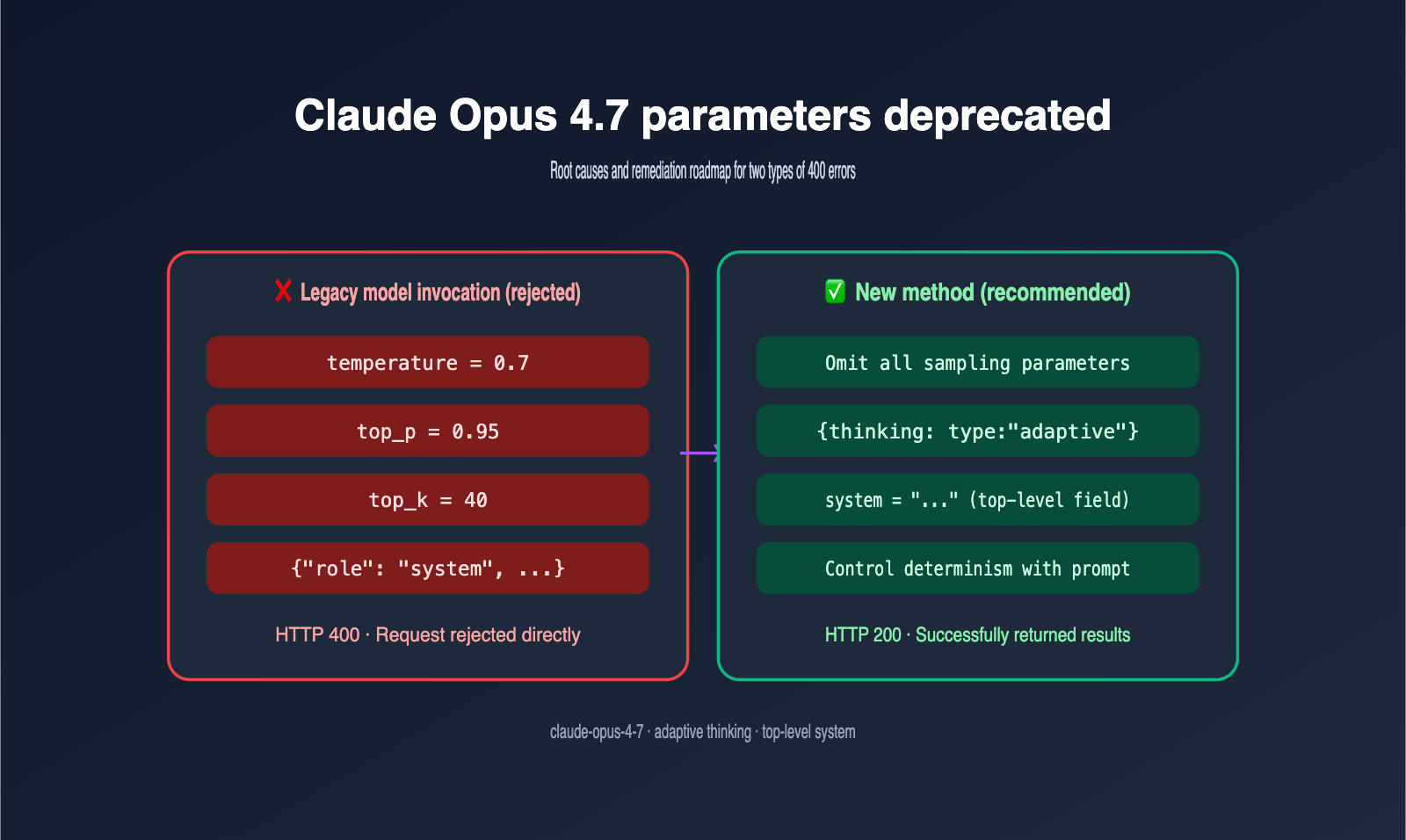

Author's Note: Claude Opus 4.7 has deprecated sampling parameters like temperature, top_p, and top_k, and now requires the system prompt to be passed as a top-level parameter rather than a message role. This article explains the root causes of two common 400 errors and provides code fixes.

After upgrading to Claude Opus 4.7, the biggest hurdle for developers isn't the model itself, but the request parameters. The first frequent error is `temperature is deprecated for this model`, and the second is `Unexpected role "system". The Messages API accepts a top-level system parameter, not "system" as an input message role.` Both are HTTP 400 errors. They seem unrelated, but they point to the same fact: Anthropic has performed a relatively aggressive API consolidation with Opus 4.7.

Many teams have long treated "setting temperature to 0" or "adding a system message" as a silver bullet for consistency, and Opus 4.7 has effectively blocked both paths. This guide breaks down the two issues by cause, error, and fix, and includes copy-pasteable migration code. After reading, you should be able to resolve your 400 errors in under 10 minutes and understand the logic behind Anthropic's design changes, which helps you avoid similar pitfalls in future model upgrades.

Which parameters have been deprecated in Claude Opus 4.7?

Before diving into the errors, let's look at the complete "deprecation list." The changes Anthropic made to Opus 4.7 are more extensive than they appear, and the impact is much greater than the deprecations seen in the 4.6 era.

| Parameter | Status | Behavior | Alternative |
|---|---|---|---|
| `temperature` | Deprecated | Setting any non-default value returns 400 | Omit entirely; control randomness via prompt |
| `top_p` | Deprecated | Same as above | Omit entirely |
| `top_k` | Deprecated | Same as above | Omit entirely |
| `thinking.budget_tokens` | Removed | Explicit budget returns 400 | `thinking: { type: "adaptive" }` |
| `reasoning_effort` (legacy) | Removed | Old field no longer effective | `output_config: { effort: "max" }` |
| `role: "system"` in `messages` | Unsupported | Always the case, but stricter in 4.7 | Top-level `system` parameter |

🎯 Must-read before upgrading: If you're using the Python SDK, any old code containing `temperature=0.7`, `top_p=0.95`, or `messages=[{"role": "system", ...}]` will throw a 400 error on Opus 4.7. We recommend accessing Opus 4.7 via APIYI (apiyi.com), as the platform provides graceful degradation for parameter compatibility, allowing for a smooth transition to the new interface.

The most easily overlooked item on the list is the thinking parameter. In the Opus 4.6 era, you could pass thinking: {"type": "enabled", "budget_tokens": 8000} to let the model think a bit longer, but this usage is rejected outright in Opus 4.7. The new version only accepts the adaptive type, where the model determines the reasoning intensity itself. Note that adaptive thinking is disabled by default and must be explicitly enabled.
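The change to the thinking field can be sketched as a small conversion helper. This is a minimal sketch built only from the field names quoted above; `migrate_thinking` is a hypothetical helper name, not part of any SDK.

```python
from typing import Any, Optional


def migrate_thinking(thinking: Optional[dict[str, Any]]) -> Optional[dict[str, Any]]:
    """Convert a 4.6-style thinking config to the adaptive form (hypothetical helper)."""
    if thinking is None:
        # Nothing was requested; adaptive thinking stays off unless enabled explicitly.
        return None
    if thinking.get("type") == "enabled":
        # Drop budget_tokens and switch to the adaptive type.
        return {"type": "adaptive"}
    # Any other config passes through, minus a stray budget_tokens key.
    return {k: v for k, v in thinking.items() if k != "budget_tokens"}


legacy = {"type": "enabled", "budget_tokens": 8000}
print(migrate_thinking(legacy))
```

Passing `None` through unchanged keeps the default behavior visible: the helper never switches thinking on for call sites that did not ask for it.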

Another hidden change is the tokenizer. Opus 4.7 uses a new tokenizer, and the same text will result in 0% to 35% more tokens on the new model compared to 4.6. This means your cost budget estimated for 4.6 will likely see a "silent price hike" on 4.7, so you'll need to double-check your billing.
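To put a number on that range, the arithmetic can be sketched as follows. The 0% to 35% bounds are the figures quoted above; `token_range` is a hypothetical helper, and the result is a planning range, not a measurement of your own prompts.

```python
# Upper bound of the expansion range quoted in the article (35% more tokens than 4.6).
MAX_EXPANSION_PCT = 35


def token_range(tokens_on_46: int) -> tuple[int, int]:
    """Return the (low, high) token count to plan for on 4.7 for the same text."""
    low = tokens_on_46  # 0% expansion
    # Integer ceiling division avoids floating-point rounding surprises.
    high = tokens_on_46 + (tokens_on_46 * MAX_EXPANSION_PCT + 99) // 100
    return low, high


print(token_range(10_000))
```

The same range applies to output length, so a `max_tokens` value that was just enough on 4.6 may need headroom up to the high end of the range.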

Why Claude Opus 4.7 Deprecated the Temperature Parameter

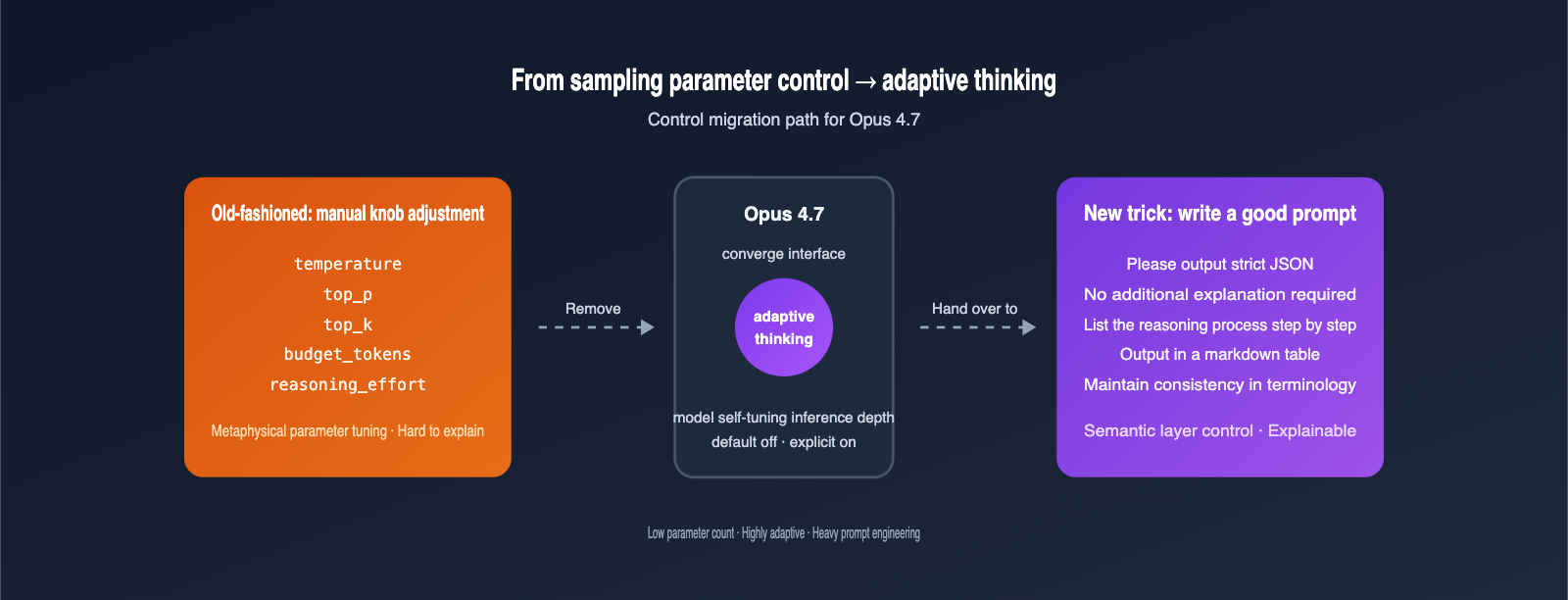

Understanding the logic behind this change will save you a lot of headaches. Anthropic's decision to "retire" the temperature, top_p, and top_k sampling parameters in Opus 4.7 essentially shifts the model from a "tunable parameter library" to an "adaptive black box."

From an architectural perspective, Opus 4.7 has handed control of inference intensity over to the adaptive thinking module, which inherently manages uncertainty. In other words, using sampling-layer methods to adjust randomness is no longer compatible with the model's internal reasoning logic; forcing these settings actually disrupts the new optimization path. Anthropic explicitly states in their documentation that, based on internal evaluations, adaptive thinking consistently outperforms the combination of extended thinking and manual temperature settings.

Looking at it from another angle, "removing the knobs" is a user-friendly upgrade for newcomers. In the past, when developers tuned LLMs, they often fell into the "is my temperature setting wrong?" trap—a metaphysical struggle where they could neither find the optimal value nor explain the performance variations. Opus 4.7 closes this "pseudo-optimization" path, allowing everyone to focus on prompt engineering and context management—the things that actually deliver stable, reliable results.

From an engineering standpoint, the deprecation of these three sampling parameters means Anthropic no longer encourages the "old-school" practice of relying on temperature to force stability. The recommended approach now is to use prompt engineering to explicitly state your requirements—such as "you must provide a deterministic answer" or "please output strict JSON"—letting the model constrain itself at the semantic level rather than being hard-controlled at the sampling level. We recommend that teams using APIYI (apiyi.com) to invoke Opus 4.7 gradually migrate code that previously relied on temperature=0 to a style that "explicitly requests determinism in the system prompt."

This approach actually contrasts with the "five-level reasoning effort" seen in GPT-5.5. OpenAI is "giving developers finer switches," while Anthropic is "taking the switches back and giving them to the model." Neither philosophy is right or wrong, but both clearly signal a move away from traditional hyperparameter tuning. The biggest takeaway for developers is this: the focus of future LLM tuning will shift from "turning knobs" to "writing better prompts and managing context."

It's worth noting that Anthropic's "aggressive convergence" didn't come out of nowhere. During the Opus 4.6 era, the documentation already marked extended thinking as deprecated and prompted developers to transition to adaptive thinking. If you started writing code using the recommended practices back then, this 4.7 upgrade is essentially zero-cost. Conversely, if you've been relying on the old "crank up temperature for creativity, turn it down for stability" playbook, this migration might be a bit painful.

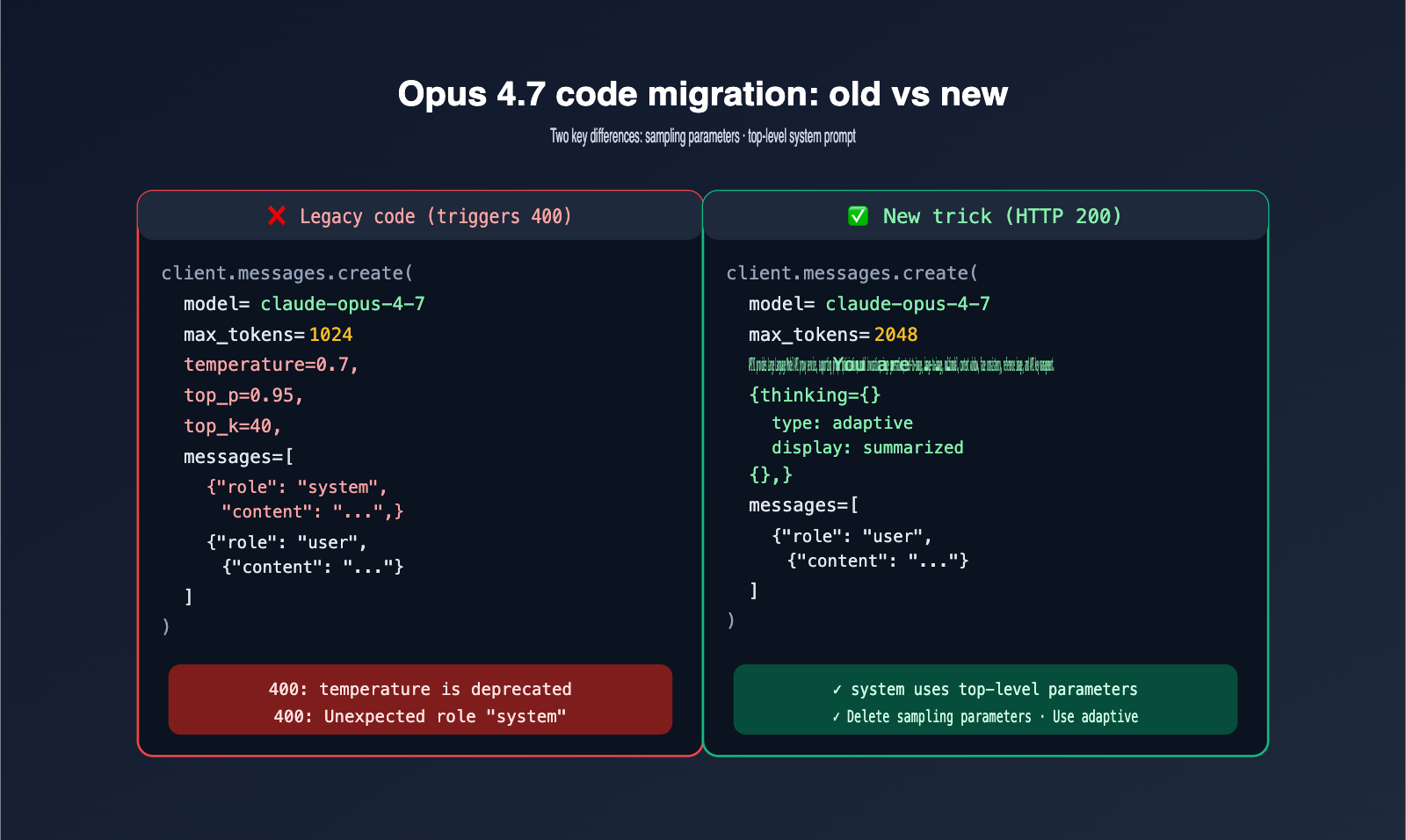

Fix for the Temperature Parameter 400 Error

Now that you know the reason, the fix is straightforward. Below is a minimal example that works reliably in China when paired with the APIYI (apiyi.com) base_url.

```python
# pip install anthropic
from anthropic import Anthropic

client = Anthropic(
    api_key="YOUR_APIYI_KEY",
    base_url="https://api.apiyi.com",  # Unified invocation of Opus 4.7 via APIYI
)

# ❌ Old code: Triggers a 400 error because temperature is deprecated
# response = client.messages.create(
#     model="claude-opus-4-7",
#     max_tokens=1024,
#     temperature=0.7,
#     top_p=0.95,
#     messages=[{"role": "user", "content": "Hello"}]
# )

# ✅ New code: Omit sampling parameters entirely
response = client.messages.create(
    model="claude-opus-4-7",
    max_tokens=1024,
    system="You must return strict JSON. No extra commentary.",
    messages=[{"role": "user", "content": "Hello"}],
)
```

🎯 Quick Fix Suggestion: Point your `base_url` to `https://api.apiyi.com` and use the Anthropic-compatible key provided by APIYI (apiyi.com). You only need to delete the sampling-parameter lines from your old code to get it running. If you're worried about not being able to update everything at once, APIYI (apiyi.com) provides smooth downgrades for deprecated parameters by default, giving you a buffer period for your migration.

The table below summarizes three typical migration strategies to help you choose the best approach.

| Old Usage | New Approach | Benefit |
|---|---|---|
| `temperature=0` for determinism | Use system prompt: "Return strict JSON, no extra text" | More stable output, saves tokens |
| `temperature=1` for creativity | Omit all sampling parameters, let the model run free | Closer to native 4.7 performance |
| `top_p` / `top_k` for sampling limits | Use `effort: "max"` with adaptive thinking | Use reasoning depth instead of sampling cuts |

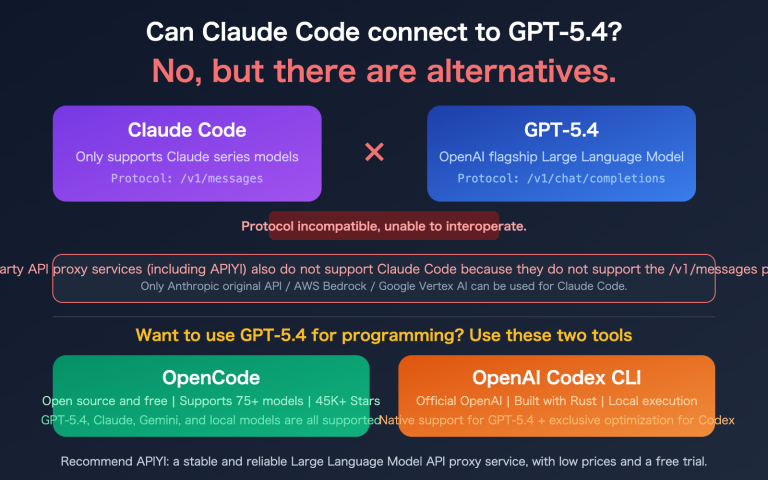

If you are using an OpenAI-compatible protocol (which many third-party frameworks use by default), you should also check if your SDK is hard-coding temperature=1.0 under the hood. There are already many issues in the community where frameworks hard-code default values, causing Opus 4.7 to reject requests. In such cases, either upgrade your framework or use the compatibility layer provided by APIYI (apiyi.com).
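If upgrading the framework is not an option right away, one workaround is to sanitize the payload just before it is sent. The snippet below is a minimal sketch under the assumption that you can intercept the request as a plain dictionary; `strip_sampling_params` is a hypothetical helper, not a framework API.

```python
from typing import Any

# Sampling parameters the article lists as deprecated on Opus 4.7.
SAMPLING_PARAMS = ("temperature", "top_p", "top_k")


def strip_sampling_params(payload: dict[str, Any]) -> dict[str, Any]:
    """Return a copy of the request payload without sampling parameters."""
    return {k: v for k, v in payload.items() if k not in SAMPLING_PARAMS}


framework_payload = {
    "model": "claude-opus-4-7",
    "max_tokens": 1024,
    "temperature": 1.0,  # injected by a framework default
    "messages": [{"role": "user", "content": "Hello"}],
}
clean = strip_sampling_params(framework_payload)
print(sorted(clean))
```

Returning a copy rather than mutating the input keeps the original payload available for logging, which helps when tracking down which layer injected the default.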

The Essence of the "System" Role Error and How to Fix It

The second most common 400 error has nothing to do with temperature; it’s an old issue that has been "amplified" in the new model. Anthropic's Messages API has never supported the system role within the messages array. However, many developers migrating from OpenAI's Chat Completions often write it that way out of habit, triggering the Unexpected role "system" error.

The key to understanding this is that Anthropic treats system as "session-level configuration" rather than "dialogue content." It must appear at the top level of the request body, not inside the messages array. The table below compares the differences between OpenAI and Anthropic.

| Feature | OpenAI Chat Completions | Anthropic Messages |
|---|---|---|
| `system` location | First item in `messages` array | Top-level `system` field |
| `system` count | Multiple allowed | One top-level field (a string; content blocks for advanced use) |
| 4.7 Error | None | `Unexpected role "system"` (400) |
| Migration effort | N/A | Low, just move the field |

🎯 Migration Tip: If your project uses "OpenAI / Claude dual-run" logic, I recommend wrapping it in an adapter: when calling OpenAI, place `system` inside `messages`; when calling Claude, move it to the top-level `system` field. By accessing both models via APIYI (apiyi.com), you can manage them under a single API key system, avoiding redundant configurations.
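A dual-run adapter of this kind can be quite small. The following is a minimal sketch; `build_chat_request` and the provider labels `"openai"` and `"anthropic"` are hypothetical names chosen for illustration, and the returned dictionary only covers the prompt fields, not the model name or token limits.

```python
from typing import Any


def build_chat_request(
    provider: str, system: str, messages: list[dict[str, str]]
) -> dict[str, Any]:
    """Place the system prompt where each provider expects it (hypothetical adapter)."""
    if provider == "openai":
        # Chat Completions style: the system prompt is the first message.
        return {"messages": [{"role": "system", "content": system}, *messages]}
    if provider == "anthropic":
        # Messages API style: the system prompt is a top-level field.
        return {"system": system, "messages": list(messages)}
    raise ValueError(f"unknown provider: {provider!r}")


user_turns = [{"role": "user", "content": "Write a quicksort in Python."}]
print(build_chat_request("anthropic", "You are a coding assistant.", user_turns))
```

Keeping the system prompt as a separate argument, rather than letting callers pass it inside `messages`, means the provider-specific placement lives in exactly one function.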

The fix is straightforward. Compare the incorrect and correct approaches below and follow the pattern:

```python
# ❌ Incorrect: Triggers Unexpected role "system" 400
response = client.messages.create(
    model="claude-opus-4-7",
    max_tokens=1024,
    messages=[
        {"role": "system", "content": "You are a coding assistant."},
        {"role": "user", "content": "Write a quicksort in Python."}
    ]
)

# ✅ Correct: system as a top-level parameter
response = client.messages.create(
    model="claude-opus-4-7",
    max_tokens=1024,
    system="You are a coding assistant.",
    messages=[
        {"role": "user", "content": "Write a quicksort in Python."}
    ]
)
```

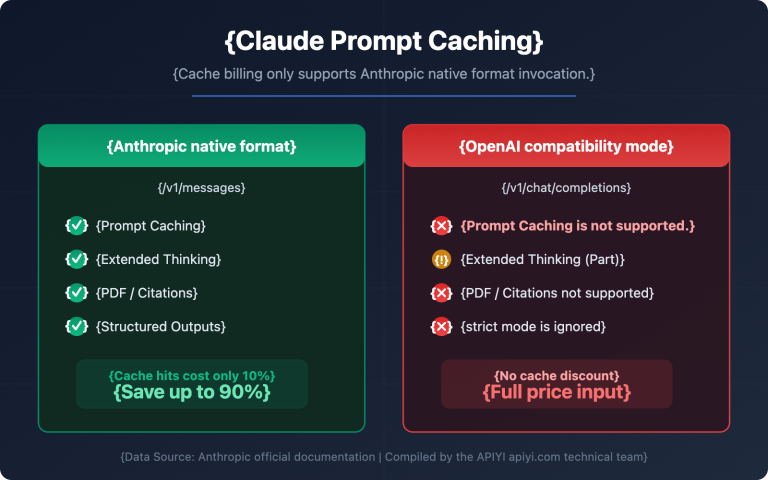

One extra note: if you really need to provide "multiple system instructions" to Claude, the correct way is to merge them into a single string, separated by newlines or numbering. While the Anthropic SDK supports content blocks for the system field, that’s an advanced use case; beginners should stick to the "single string" approach. There's a hidden benefit to this: merging into a single string makes it easier to trigger prompt caching on APIYI (apiyi.com), which will further reduce costs for long-running tasks.
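Merging several instructions into one numbered string might look like the sketch below. `merge_system_instructions` is a hypothetical helper; the numbering format is one reasonable choice, not a requirement of the API.

```python
def merge_system_instructions(instructions: list[str]) -> str:
    """Join several system instructions into one numbered string."""
    # Skip empty entries so the numbering stays contiguous.
    cleaned = [text.strip() for text in instructions if text.strip()]
    return "\n".join(f"{i}. {text}" for i, text in enumerate(cleaned, start=1))


system_prompt = merge_system_instructions(
    ["You are a coding assistant.", "Always return strict JSON."]
)
print(system_prompt)
```

The result can be passed directly as the top-level `system` argument. Keeping the instruction order stable between requests also keeps the merged string identical, which matters if you rely on caching of the prompt prefix.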

Complete Migration Template for Claude Opus 4.7

Combining the fixes for both types of errors, the code below is a "ready-to-run starter kit for Opus 4.7," featuring adaptive thinking, top-level system parameters, and cache-friendly formatting.

```python
from anthropic import Anthropic

client = Anthropic(
    api_key="YOUR_APIYI_KEY",
    base_url="https://api.apiyi.com",  # Call Opus 4.7 via APIYI
)

response = client.messages.create(
    model="claude-opus-4-7",
    max_tokens=2048,
    system="You are an expert Python engineer. Always return strict JSON.",
    thinking={
        "type": "adaptive",
        "display": "summarized",
    },
    messages=[
        {"role": "user", "content": "Refactor my quicksort to be O(n log n) average."}
    ],
)

# With thinking enabled, the first content block may be a thinking block
# rather than text, so print only the text blocks.
for block in response.content:
    if block.type == "text":
        print(block.text)
```

🎯 Production Advice: When calling Opus 4.7 via APIYI (apiyi.com), place stable `system` prompts and tool descriptions at the beginning to trigger 0.1x cache billing. This can reduce costs for repeated requests to just 10% of the original price, which is especially beneficial for long-running Agents and document generation tasks.

There are a few other details to keep in mind during migration. First, the thinking field is optional, but if your task is sensitive to reasoning depth, I recommend explicitly enabling adaptive thinking; otherwise, the model will default to a lightweight reasoning mode. Second, since the new tokenizer may count the same text as 0% to 35% more tokens, you should adjust your max_tokens budget accordingly to avoid truncation in long-output scenarios. Third, legacy parameters like thinking_budget should be removed; residual fields won't trigger errors but will be ignored, which might lead you to believe they are still active. Fourth, if your application calls both 4.6 and 4.7, it's best to track billing by model name to avoid cost attribution distortion caused by the mix of old and new tokenizers.

If you're maintaining a codebase that needs to be compatible with both Opus 4.6 and 4.7, the safest approach is to use an allowlist for parameters based on the model name. Skip "sampling parameters + legacy thinking fields" when calling 4.7, and keep them for 4.6, so you don't have to maintain two separate calling functions for different models.
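One way to sketch such an allowlist is shown below. The parameter sets are illustrative and should be extended to match the fields your code actually sends; `filter_params` is a hypothetical helper, and the 4.6 model ID `claude-opus-4-6` is an assumed placeholder, so substitute the ID you actually call.

```python
from typing import Any

# Fields accepted for every model in this sketch.
COMMON = {"model", "max_tokens", "system", "messages", "thinking"}

# Hypothetical per-model allowlists; "claude-opus-4-6" is an assumed placeholder ID.
ALLOWED_PARAMS = {
    "claude-opus-4-6": COMMON | {"temperature", "top_p", "top_k"},
    "claude-opus-4-7": COMMON,
}


def filter_params(params: dict[str, Any]) -> dict[str, Any]:
    """Keep only the parameters allowed for params['model'].

    Raises KeyError for a model that has no allowlist entry.
    """
    model = params["model"]
    filtered = {k: v for k, v in params.items() if k in ALLOWED_PARAMS[model]}
    thinking = filtered.get("thinking")
    if model == "claude-opus-4-7" and thinking and thinking.get("type") == "enabled":
        # Legacy budgeted thinking is replaced by the adaptive type on 4.7.
        filtered["thinking"] = {"type": "adaptive"}
    return filtered


shared = {
    "max_tokens": 1024,
    "temperature": 0.2,
    "thinking": {"type": "enabled", "budget_tokens": 8000},
    "messages": [{"role": "user", "content": "Hello"}],
}
print(filter_params({"model": "claude-opus-4-7", **shared}))
```

Failing loudly on an unknown model is a deliberate choice here: a new model ID forces a conscious decision about which parameters it accepts instead of silently inheriting another model's list.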

The error lookup table below summarizes the most common 400 errors encountered during the Opus 4.7 upgrade, helping you locate and fix them directly based on the error text.

| Error Keyword | Meaning | Fix |
|---|---|---|
| `temperature is deprecated` | Deprecated `temperature` field used | Remove `temperature` from request body |
| `top_p is deprecated` | Deprecated `top_p` field used | Remove `top_p`, let model adapt |
| `top_k is deprecated` | Deprecated `top_k` field used | Remove `top_k`, let model adapt |
| `Unexpected role "system"` | `system` inside `messages` array | Move to top-level `system` field |
| `Invalid budget_tokens` | Legacy extended thinking budget used | Use adaptive thinking, no budget needed |
| `Unknown parameter reasoning_effort` | Legacy reasoning intensity field used | Use `output_config: {effort: "max"}` |
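The lookup table can also be encoded so that logs or error handlers surface the fix automatically. This is a minimal sketch: the keywords are taken from the table, the exact server wording may differ, and `suggest_fix` is a hypothetical helper.

```python
from typing import Optional

# (keyword, fix) pairs mirroring the lookup table; matching is case-insensitive.
ERROR_FIXES = [
    ("temperature is deprecated", "Remove temperature from the request body."),
    ("top_p is deprecated", "Remove top_p from the request body."),
    ("top_k is deprecated", "Remove top_k from the request body."),
    ('unexpected role "system"', "Move the system prompt to the top-level system field."),
    ("budget_tokens", "Use adaptive thinking without a budget."),
    ("reasoning_effort", "Use output_config with an effort value instead."),
]


def suggest_fix(error_message: str) -> Optional[str]:
    """Return the suggested fix for a 400 error message, or None if unrecognized."""
    lowered = error_message.lower()
    for keyword, fix in ERROR_FIXES:
        if keyword in lowered:
            return fix
    return None


print(suggest_fix("temperature is deprecated for this model"))
```

Matching on substrings rather than full messages keeps the helper tolerant of small wording changes in the error text.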

Claude Opus 4.7 Parameter Deprecation FAQ

Q1: Why did Opus 4.7 deprecate so many parameters at once?

The core reason is that adaptive thinking has taken over the responsibilities previously handled by temperature, top_p, top_k, and thinking budget—specifically, controlling randomness and reasoning depth. Anthropic’s internal evaluations show that adaptive thinking consistently outperforms manual tuning, so they’ve decided to consolidate the entry point.

Q2: Can I set temperature to 1.0 to "bypass" the error?

No. Opus 4.7 checks for sampling parameters based on presence, not the value provided. If these keys appear in your request body, the system identifies them as non-default configurations and returns a 400 error. The correct approach is to omit these fields entirely from your request and let the SDK use the model's default sampling behavior.

Q3: Will using the OpenAI SDK via APIYI to call Opus 4.7 trigger a temperature error?

It depends on your SDK version and the framework you're using. The OpenAI SDK typically includes temperature=1.0 by default. If you forward this directly to the Anthropic backend, Opus 4.7 will still reject it. However, when calling via APIYI (apiyi.com), the platform handles these common compatibility issues gracefully and automatically filters out the deprecated fields for you.

Q4: Is the system error unique to 4.7? Did previous Claude models not have this?

Not exactly. The Anthropic Messages API has never allowed system to be included in the messages array. However, Opus 4.7 has stricter validation, whereas some earlier models might have been more "lenient." The best practice has always been to place system at the top level of the request body. Once you migrate it to the top level, it will work correctly across all Claude models.

Q5: For code migrated from OpenAI, what's the minimum number of lines I need to change to run Opus 4.7?

Usually, there are three steps: 1) Change the model to claude-opus-4-7; 2) Move the system entry from messages to the top-level system field; 3) Remove sampling parameters like temperature and top_p. If you point your base_url to https://api.apiyi.com, you can usually get the whole project running in under 10 minutes. If your project has dozens of call points, I recommend creating a unified call_claude() utility function to centralize these changes—that way, if the API changes again in the future, you only have to update it in one place.
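A unified `call_claude()` wrapper along those lines might look like this sketch. It assumes `client` is an `anthropic.Anthropic` instance (or anything exposing `messages.create`); the default `max_tokens` of 1024 and the decision to silently drop sampling parameters are illustrative choices, not recommendations from Anthropic.

```python
from typing import Any

# Sampling parameters that older call sites may still pass.
SAMPLING_PARAMS = {"temperature", "top_p", "top_k"}


def call_claude(client: Any, system: str, user_text: str, **options: Any) -> Any:
    """Single entry point for Opus 4.7 calls (hypothetical wrapper)."""
    # Drop deprecated sampling parameters instead of forwarding them.
    safe_options = {k: v for k, v in options.items() if k not in SAMPLING_PARAMS}
    model = safe_options.pop("model", "claude-opus-4-7")
    safe_options.setdefault("max_tokens", 1024)
    return client.messages.create(
        model=model,
        system=system,  # top-level field, never a message role
        messages=[{"role": "user", "content": user_text}],
        **safe_options,
    )
```

A call such as `call_claude(client, "You are a coding assistant.", "Write a quicksort in Python.", temperature=0.7)` then goes out without the `temperature` field, so legacy call sites keep working while they are cleaned up one by one.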

Q6: Is adaptive thinking enabled by default? Should I turn it on explicitly?

It's disabled by default. If your task is sensitive to reasoning depth (such as mathematical reasoning, code refactoring, or complex planning), I recommend explicitly passing thinking: {type: "adaptive"}. You can also pair this with output_config: {effort: "max"} to unlock the model's full reasoning potential. Just keep in mind that this increases token usage, so you'll need to balance quality against cost.

Q7: Is calling Opus 4.7 stable within China?

Directly connecting to Anthropic's API can be affected by network conditions, and long-running tasks are particularly prone to interruptions. Calling Opus 4.7 via APIYI (apiyi.com) solves these stability issues for domestic access. The platform is running reliably, and when combined with the 0.1x cache billing, it can significantly reduce your costs.

Q8: How much does the new tokenizer increase costs, and how should I handle it?

The new tokenizer results in a token expansion of 0% to 35% for different texts, averaging about 10% to 15%. The most practical way to handle this is to move cacheable system prompts and tool descriptions to the front to trigger the 0.1x cache billing; this will actually lower your per-request cost rather than increasing it.
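Whether caching offsets the tokenizer expansion depends on how much of each request is served from cache. The arithmetic can be sketched as below, assuming cached input tokens are billed at 0.1x as stated above and ignoring output tokens and any surcharge for writing to the cache; `relative_input_cost` is a hypothetical helper.

```python
def relative_input_cost(
    expansion: float, cached_fraction: float, cache_rate: float = 0.1
) -> float:
    """Input cost on 4.7 relative to an uncached 4.6 request (1.0 means unchanged).

    expansion: token multiplier from the new tokenizer, e.g. 1.35 for +35%.
    cached_fraction: share of input tokens served from cache, between 0 and 1.
    """
    if not 0.0 <= cached_fraction <= 1.0:
        raise ValueError("cached_fraction must be between 0 and 1")
    # Cached tokens cost cache_rate; the rest cost the full rate.
    blended_rate = cached_fraction * cache_rate + (1.0 - cached_fraction)
    return expansion * blended_rate


for fraction in (0.0, 0.5, 0.9):
    print(fraction, relative_input_cost(1.35, fraction))
```

Under these assumptions, even the worst-case 35% expansion breaks even once roughly 29% of the input tokens are cache hits, since 1.35 × (1 − 0.9f) = 1 gives f ≈ 0.29. Requests with little or no cacheable prefix will still see the increase.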

Key Takeaways: Claude Opus 4.7 Parameter Deprecation

- Opus 4.7 has completely deprecated the three major sampling parameters: `temperature`, `top_p`, and `top_k`. Passing any value for these will trigger a 400 error.
- Extended thinking has been removed; only adaptive thinking is supported, and it is disabled by default, so you must enable it explicitly.
- The `Unexpected role "system"` error reflects a long-standing rule of the Messages API: `system` must be placed at the top level, not within the message roles.
- The new tokenizer results in 0% to 35% more tokens for the same text compared to 4.6, so you'll need to recalculate your budgets and `max_tokens`.
- The minimum fix requires only three steps: remove sampling parameters, move `system` to the top level, and update the model name to `claude-opus-4-7`.
- Calling Opus 4.7 via APIYI (apiyi.com) provides graceful parameter compatibility, 0.1x cache billing, and stable domestic access.
- Using adaptive thinking combined with `output_config: {effort: "max"}` is the standard way to achieve the best reasoning performance on Opus 4.7.

Summary

The deprecation of parameters in Claude Opus 4.7 might look like a "breaking change" at first glance, but it's actually a pivotal step in Anthropic's evolution of the model from a "detail-exposed tool" into an "adaptive black box." For developers, this means that the stability previously achieved through "switches" like temperature or thinking budget needs to be gradually shifted toward a combination of prompt engineering and adaptive thinking. While this brings some migration costs in the short term, it’ll lead to cleaner code and more stable model performance in the long run. This evolutionary path isn't isolated; mainstream Large Language Models are all trending toward "fewer parameters, stronger adaptability," and the sooner you adapt, the more you'll benefit.

If you're currently upgrading to Opus 4.7 or evaluating the impact of this change on your production environment, we recommend testing the new version via APIYI (apiyi.com). Our platform already provides stable support for Opus 4.7 and includes compatibility downgrades for the deprecated parameters. Combined with our 0.1x caching rate, it’s currently the most cost-effective path for your migration.

May you encounter fewer pitfalls and get off work early.

— The APIYI Technical Team. For more hands-on AI model tutorials, visit APIYI at apiyi.com.