Author's Note: Seed 2.0 Lite 260228 is officially live on the BytePlus ModelArk platform, supporting a 256K context window with tiered pricing as low as $0.25/M tokens. This article details the model's capabilities, pricing strategy, and API integration.

A new member joins the ByteDance Seed 2.0 family: seed-2-0-lite-260228 is now officially available on the BytePlus ModelArk platform. It is the latest version of Seed 2.0 Lite, offered to developers worldwide through BytePlus's official channel.

Core Value: By the end of this article, you'll understand the full technical specs of Seed 2.0 Lite 260228, its tiered pricing mechanism, and how to quickly integrate this cost-effective model via API.

Seed 2.0 Lite 260228 Key Highlights

| Key Point | Description | Value |
|---|---|---|
| Model Version | seed-2-0-lite-260228 (Feb 28, 2026 version) | Latest version via BytePlus official channel |
| Context Window | Supports up to 256K tokens | Ideal for long documents and complex multi-turn dialogues |
| Input Pricing (tiered) | 0-128K: $0.25/M, 128K-256K: $0.50/M | Extremely low cost for short-text scenarios |
| Output Pricing (tiered) | 0-128K: $2.00/M, 128K-256K: $4.00/M | About 16% below Pro's $2.37/M in the first tier |
| Multimodal | Supports text, image, and video understanding | A single model covering multiple input formats |

Deep Dive: Seed 2.0 Lite 260228 Features

seed-2-0-lite-260228 is an updated release from the ByteDance Seed team, published in late February 2026. Compared to the initial Seed 2.0 series launch on February 14, the 260228 version brings further optimizations in stability and inference efficiency. International developers can access it through the BytePlus ModelArk platform.
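The `260228` suffix in the model ID encodes the release date (February 28, 2026) in YYMMDD form. As a minimal sketch, the suffix can be parsed into a date so that version strings can be compared or sorted. The helper name `parse_model_version` is hypothetical, and treating two-digit years as 20xx is an assumption.

```python
import re
from datetime import date


def parse_model_version(model_id: str) -> date:
    """Extract the YYMMDD suffix of a model ID such as 'seed-2-0-lite-260228'."""
    match = re.search(r"-(\d{2})(\d{2})(\d{2})$", model_id)
    if match is None:
        raise ValueError(f"No YYMMDD version suffix in {model_id!r}")
    yy, mm, dd = (int(part) for part in match.groups())
    # Assumption: two-digit years belong to the 2000s.
    return date(2000 + yy, mm, dd)


print(parse_model_version("seed-2-0-lite-260228"))  # 2026-02-28
```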

BytePlus is ByteDance's global technical service arm, and ModelArk is its flagship Large Language Model PaaS platform. By accessing Seed 2.0 Lite through the BytePlus official proxy, developers get the same model capabilities as ByteDance's domestic services, but with international API standards and billing systems.

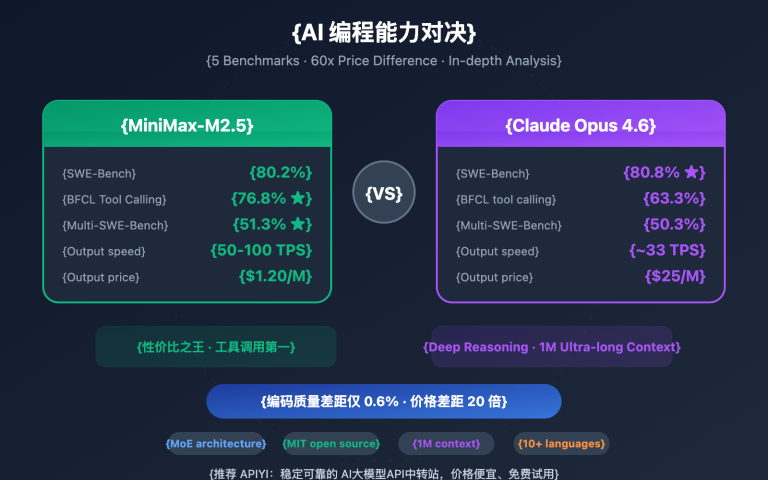

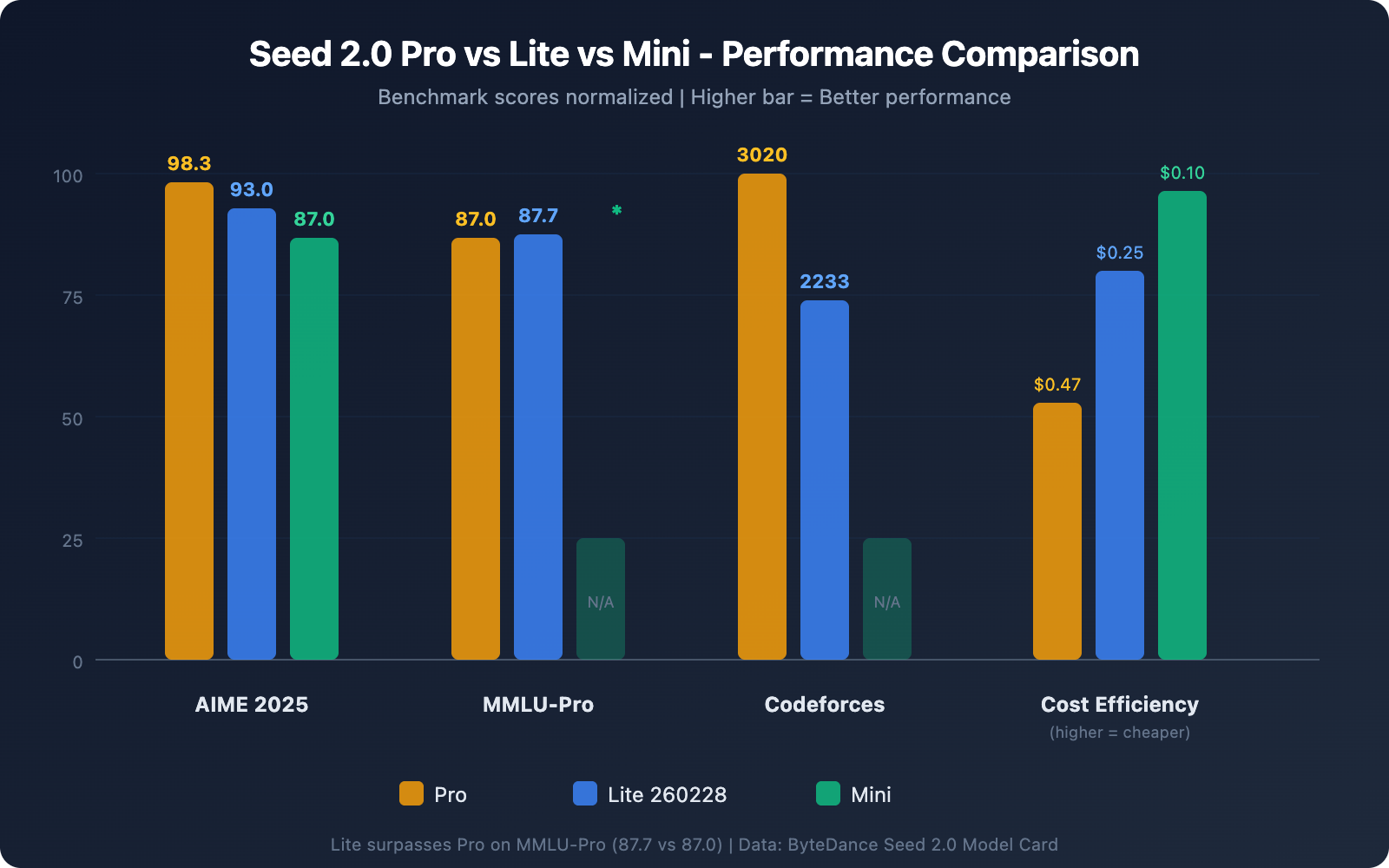

Seed 2.0 Lite Benchmark Performance

Seed 2.0 Lite delivers near-flagship performance across several authoritative benchmarks. It scored 93.0 on the AIME 2025 mathematical reasoning test and 87.7 on the MMLU-Pro knowledge understanding test (even slightly edging out the Pro version's 87.0). It also achieved a 2233 rating on Codeforces and a 73.5 on the SWE-Bench software engineering test. This means the Lite version is a viable, high-performance alternative to the Pro version for most real-world applications.

Seed 2.0 Lite 260228 Tiered Pricing Explained

seed-2-0-lite-260228 uses a pay-as-you-go Chat billing model and introduces a tiered pricing mechanism, applying different rates based on the input token count.

Seed 2.0 Lite 260228 Pricing Table

| Input Range | Prompt Price (Input) | Completion Price (Output) | Use Case |
|---|---|---|---|
| 0 – 128K tokens | $0.2500 / 1M tokens | $2.0000 / 1M tokens | Daily conversations, short document processing |
| 128K – 256K tokens | $0.5000 / 1M tokens | $4.0000 / 1M tokens | Long document analysis, code review |

Seed 2.0 Lite 260228 Pricing Analysis

The tiered design favors typical workloads. Most API calls send fewer than 128K input tokens, where input costs just $0.25/M tokens, a highly competitive rate in today's Large Language Model market. Even in the long-context tier (128K-256K), the $0.50/M input price is a fraction of GPT-4o's $2.50/M or Claude Sonnet 4's $3.00/M.

Here's a cost comparison between Seed 2.0 Lite 260228 and other mainstream models:

Seed 2.0 Lite 260228 vs. Mainstream Model Price Comparison

| Model | Input Price (/M tokens) | Output Price (/M tokens) | Context Window | Available Platforms |
|---|---|---|---|---|
| Seed 2.0 Lite 260228 | $0.25 | $2.00 | 256K | BytePlus, APIYI |
| Seed 2.0 Pro | $0.47 | $2.37 | 256K | BytePlus, APIYI |
| Seed 2.0 Mini | $0.10 | $0.30 | 256K | BytePlus, APIYI |
| GPT-4o | $2.50 | $10.00 | 128K | OpenAI, APIYI |
| Claude Sonnet 4 | $3.00 | $15.00 | 200K | Anthropic, APIYI |
| DeepSeek-V3 | $0.27 | $1.10 | 128K | DeepSeek, APIYI |

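From a pricing perspective, Seed 2.0 Lite's input price is close to DeepSeek-V3's ($0.25 vs. $0.27 per million tokens) while offering a larger 256K context window, although its $2.00/M output price is higher than DeepSeek-V3's $1.10/M. Compared to GPT-4o and Claude Sonnet 4, Seed 2.0 Lite's input cost is roughly one-tenth of theirs.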
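To make the comparison concrete, the sketch below prices one workload against every row of the table. The prices are copied from the table above (first tier only for Seed 2.0 Lite). The workload of 100M input and 20M output tokens is a hypothetical figure chosen for illustration, and `workload_cost` is an illustrative helper name.

```python
# Prices in USD per 1M tokens: (input, output), copied from the comparison table.
PRICES = {
    "Seed 2.0 Lite 260228": (0.25, 2.00),
    "Seed 2.0 Pro": (0.47, 2.37),
    "Seed 2.0 Mini": (0.10, 0.30),
    "GPT-4o": (2.50, 10.00),
    "Claude Sonnet 4": (3.00, 15.00),
    "DeepSeek-V3": (0.27, 1.10),
}

# Hypothetical monthly workload (an assumption, not a measured figure).
INPUT_TOKENS = 100_000_000
OUTPUT_TOKENS = 20_000_000


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a workload at the table's flat per-million rates."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# Print models from cheapest to most expensive for this workload.
for model in sorted(PRICES, key=lambda m: workload_cost(m, INPUT_TOKENS, OUTPUT_TOKENS)):
    cost = workload_cost(model, INPUT_TOKENS, OUTPUT_TOKENS)
    print(f"{model:<22} ${cost:>9,.2f}")
```

Under this assumed mix, Seed 2.0 Lite comes to about $65 against $450 for GPT-4o. DeepSeek-V3 comes to about $49 because of its lower output price, so the ranking depends on how output-heavy the workload is.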

Cost Optimization Suggestion: For budget-sensitive projects, Seed 2.0 Lite 260228 is a very competitive choice. You can access the entire Seed 2.0 series through the APIYI (apiyi.com) platform, making it easy to switch between Pro, Lite, and Mini to find the perfect balance between performance and cost.

Seed 2.0 Lite 260228 Quick Start

Minimalist Example

Here's the simplest way to call Seed 2.0 Lite 260228 through an OpenAI-compatible interface:

```python
import openai

client = openai.OpenAI(
    api_key="YOUR_API_KEY",
    base_url="https://vip.apiyi.com/v1",  # APIYI unified interface
)

response = client.chat.completions.create(
    model="seed-2-0-lite-260228",
    messages=[{"role": "user", "content": "Tell me about your core capabilities"}],
)

print(response.choices[0].message.content)
```

Full implementation, including multimodal (image) input:

```python
import base64
from pathlib import Path
from typing import Optional

import openai


def call_seed_lite(
    prompt: str,
    model: str = "seed-2-0-lite-260228",
    system_prompt: Optional[str] = None,
    image_path: Optional[str] = None,
    max_tokens: int = 2000,
) -> str:
    """
    Invoke the Seed 2.0 Lite 260228 model.

    Args:
        prompt: User input text
        model: Model name
        system_prompt: Optional system prompt
        image_path: Optional image path (multimodal)
        max_tokens: Maximum output tokens

    Returns:
        Model response content
    """
    client = openai.OpenAI(
        api_key="YOUR_API_KEY",
        base_url="https://vip.apiyi.com/v1",
    )

    messages = []
    if system_prompt:
        messages.append({"role": "system", "content": system_prompt})

    # Build user message
    if image_path:
        # Inline the image as a base64 data URL (this example assumes a PNG file)
        image_data = base64.b64encode(Path(image_path).read_bytes()).decode()
        messages.append({
            "role": "user",
            "content": [
                {"type": "text", "text": prompt},
                {"type": "image_url", "image_url": {
                    "url": f"data:image/png;base64,{image_data}"
                }},
            ],
        })
    else:
        messages.append({"role": "user", "content": prompt})

    response = client.chat.completions.create(
        model=model,
        messages=messages,
        max_tokens=max_tokens,
    )
    return response.choices[0].message.content


# Text conversation example
result = call_seed_lite(
    prompt="Analyze the time complexity of this code",
    system_prompt="You are a senior algorithm engineer",
)
print(result)

# Multimodal example (image understanding)
result = call_seed_lite(
    prompt="Describe the content of this image",
    image_path="example.png",
)
print(result)
```
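The full example labels every image as `image/png`. For JPEG or other formats, one option is to derive the MIME type from the file extension before building the data URL. The sketch below does this with the standard library only. `build_user_message` is a hypothetical helper, not part of any SDK, and which image formats the model accepts is not stated in this article.

```python
import base64
import mimetypes
from pathlib import Path
from typing import Optional


def build_user_message(prompt: str, image_path: Optional[str] = None) -> dict:
    """Build an OpenAI-style user message, optionally with an inline image."""
    if image_path is None:
        return {"role": "user", "content": prompt}

    path = Path(image_path)
    # Guess the MIME type from the file extension, e.g. ".jpg" -> "image/jpeg".
    mime_type, _ = mimetypes.guess_type(path.name)
    if mime_type is None or not mime_type.startswith("image/"):
        raise ValueError(f"Not a recognized image file: {image_path}")

    encoded = base64.b64encode(path.read_bytes()).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": prompt},
            {"type": "image_url", "image_url": {
                "url": f"data:{mime_type};base64,{encoded}"
            }},
        ],
    }
```

The returned dictionary can be appended to the `messages` list in place of the hand-built user message.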

Quick Start: We recommend getting your API key and free test credits through the APIYI (apiyi.com) platform. You can call Seed 2.0 Lite 260228 immediately without needing a BytePlus overseas account. The platform supports OpenAI-compatible formats, allowing you to finish integration in just 5 minutes.


Seed 2.0 Lite 260228 vs. Pro/Mini Versions

Choosing the right model from the Seed 2.0 series depends on your specific use case and budget. Here's a breakdown of the core differences between the three models:

Seed 2.0 Series Model Selection Guide

| Dimension | Seed 2.0 Pro | Seed 2.0 Lite 260228 | Seed 2.0 Mini |
|---|---|---|---|
| AIME 2025 | 98.3 | 93.0 | 87.0 |
| MMLU-Pro | 87.0 | 87.7 | – |
| Codeforces | 3020 | 2233 | – |
| SWE-Bench | – | 73.5 | – |
| Input Price | $0.47/M | $0.25/M | $0.10/M |
| Output Price | $2.37/M | $2.00/M | $0.30/M |
| Context Window | 256K | 256K | 256K |
| Best For | Frontier reasoning, competition-level tasks | General production, best value choice | High-concurrency batching, light tasks |

Selection Advice:

- Go with Pro: If your tasks involve top-tier mathematical reasoning or complex programming challenges, Pro's AIME 98.3 and Codeforces 3020 scores make it worth the investment.

- Go with Lite 260228: For daily development, document processing, information synthesis, and code reviews in production environments, Lite can handle most tasks at roughly half of Pro's input price ($0.25/M vs. $0.47/M), with a smaller saving on output ($2.00/M vs. $2.37/M).

- Go with Mini: For high-concurrency, low-latency scenarios like customer service systems or batch data processing, the $0.10/M tokens input cost is incredibly attractive.
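The selection advice above can be approximated in code as "pick the cheapest model that clears a quality bar". The sketch below uses the AIME 2025 scores and input prices from the selection table. Using AIME alone as the quality bar is a simplifying assumption, and `cheapest_meeting` is a hypothetical helper name.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    aime_2025: float     # benchmark score from the selection table
    input_price: float   # USD per 1M input tokens
    output_price: float  # USD per 1M output tokens


OPTIONS = [
    ModelOption("Seed 2.0 Pro", 98.3, 0.47, 2.37),
    ModelOption("Seed 2.0 Lite 260228", 93.0, 0.25, 2.00),
    ModelOption("Seed 2.0 Mini", 87.0, 0.10, 0.30),
]


def cheapest_meeting(min_aime: float) -> ModelOption:
    """Return the lowest-input-price model whose AIME 2025 score is at least min_aime."""
    candidates = [m for m in OPTIONS if m.aime_2025 >= min_aime]
    if not candidates:
        raise ValueError(f"No model reaches an AIME 2025 score of {min_aime}")
    return min(candidates, key=lambda m: m.input_price)


print(cheapest_meeting(90.0).name)  # Seed 2.0 Lite 260228
```

In practice the threshold would come from an evaluation on your own tasks rather than from a single public benchmark.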

Pro Tip: Not sure which one to pick? You can test Seed 2.0 Pro, Lite, and Mini simultaneously on the APIYI (apiyi.com) platform. The unified API format lets you complete a side-by-side evaluation in minutes to find the perfect fit for your business.

Seed 2.0 Lite 260228: Use Case Analysis

With its 256K context window and multimodal capabilities, Seed 2.0 Lite 260228 covers a wide range of application scenarios:

Long Document Processing: The 256K context window can handle approximately 200,000 Chinese characters in one go, making it perfect for contract reviews, academic paper analysis, and technical document summarization. For input ranges under 128K, it costs just $0.25 per million tokens—making long document processing far more affordable than models like GPT-4o.

Coding Assistance: A Codeforces rating of 2233 shows that Lite possesses professional-grade programming skills, capable of handling medium-to-high difficulty algorithm problems and daily development tasks. Combined with its SWE-Bench score of 73.5 in software engineering tests, Lite is well-equipped for code reviews, bug fixes, and feature implementation.

Multimodal Content Understanding: The Seed 2.0 series has fully upgraded its multimodal understanding, supporting text, image, and video inputs. Lite 260228 can be used for chart analysis, product screenshot recognition, and video content extraction—any scenario requiring visual comprehension.

Cost-Effective Agent Applications: As an Agent-ready model, Seed 2.0 Lite supports tool calling and multi-step reasoning. When building AI Agent applications, Lite's low cost and stable performance make it an ideal choice for production environments.
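As a rough sketch of what a tool-calling round trip might look like through an OpenAI-compatible interface, the example below defines one tool, lets the model request it, runs it locally, and sends the result back. `get_weather` and its canned data are hypothetical, `client` is expected to be an `openai.OpenAI` instance configured as in the Quick Start, and it is an assumption that the gateway forwards the standard `tools` parameter for this model.

```python
import json


def get_weather(city: str) -> dict:
    """Hypothetical tool for illustration: returns canned weather data."""
    return {"city": city, "forecast": "sunny", "temp_c": 22}


# Tool schema in the OpenAI chat-completions "tools" format.
TOOLS = [{
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the current weather for a city",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}]

TOOL_REGISTRY = {"get_weather": get_weather}


def execute_tool_call(name: str, arguments_json: str) -> str:
    """Run a locally registered tool and return its result as a JSON string."""
    func = TOOL_REGISTRY[name]
    return json.dumps(func(**json.loads(arguments_json)))


def run_agent_turn(client, user_text: str, model: str = "seed-2-0-lite-260228") -> str:
    """One tool-calling round trip: ask, run any requested tools, ask again."""
    messages = [{"role": "user", "content": user_text}]
    first = client.chat.completions.create(model=model, messages=messages, tools=TOOLS)
    reply = first.choices[0].message
    if not reply.tool_calls:
        return reply.content  # the model answered directly

    messages.append(reply)  # keep the assistant's tool request in the history
    for call in reply.tool_calls:
        messages.append({
            "role": "tool",
            "tool_call_id": call.id,
            "content": execute_tool_call(call.function.name, call.function.arguments),
        })
    final = client.chat.completions.create(model=model, messages=messages, tools=TOOLS)
    return final.choices[0].message.content
```

A production agent would loop until the model stops requesting tools and would validate the arguments before executing anything. This sketch handles a single round only.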

Technical Tip: Seed 2.0 Lite 260228 is particularly suited for production deployments that need to balance performance and cost. The APIYI (apiyi.com) platform provides a unified OpenAI-compatible interface, supporting the full Seed 2.0 series alongside mainstream models like GPT, Claude, and Gemini, allowing you to switch between models with ease.

FAQ

Q1: What’s the difference between seed-2-0-lite-260228 and previous Seed 2.0 Lite versions?

"260228" refers to the latest version released on February 28, 2026. Compared to the initial February 14 release, this version features optimizations in reasoning stability and response speed. The numbers in the model ID represent the version date; we recommend using the latest version for the best experience. When calling the model via APIYI (apiyi.com), simply use the model name seed-2-0-lite-260228.

Q2: How is the tiered pricing calculated?

Tiered pricing is determined by the total input tokens in a single request. If the input is within the 0-128K range, the prompt price is $0.25/M tokens and the output is $2.00/M tokens. If the input falls between 128K-256K, the prompt price doubles to $0.50/M tokens and the output to $4.00/M tokens. Since most daily model invocations involve inputs well below 128K, your actual costs will usually fall into the lowest tier.
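The rule described above can be sketched as a small estimator. It assumes that "128K" and "256K" mean 128,000 and 256,000 tokens, that an input of exactly 128K tokens is billed at the lower tier, and that the tier rate applies to the whole request. None of these boundary details are confirmed here, so check the official billing documentation before relying on the numbers.

```python
TIER_BOUNDARY = 128_000  # assumption: "128K" means 128,000 tokens
MAX_CONTEXT = 256_000    # assumption: "256K" means 256,000 tokens

# (input price, output price) in USD per 1M tokens, from the pricing table.
LOW_TIER = (0.25, 2.00)
HIGH_TIER = (0.50, 4.00)


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request under the tiered pricing rule."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("Token counts must be non-negative")
    if input_tokens > MAX_CONTEXT:
        raise ValueError("Input exceeds the 256K context window")
    # The tier is chosen by input size and applies to both input and output rates.
    # Assumption: exactly 128K input tokens still counts as the lower tier.
    in_price, out_price = LOW_TIER if input_tokens <= TIER_BOUNDARY else HIGH_TIER
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


print(f"${estimate_cost(10_000, 1_000):.4f}")   # short chat turn: $0.0045
print(f"${estimate_cost(200_000, 2_000):.4f}")  # long-document request: $0.1080
```

Because both rates double once the input crosses the boundary, a request slightly above 128K tokens costs about twice as much as one slightly below it.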

Q3: How can I quickly integrate the Seed 2.0 Lite 260228 API?

The fastest way to get started:

- Visit APIYI (apiyi.com) to register an account and get your API key and free test credits.

- Use the Python code examples provided in this article, setting the `model` parameter to `seed-2-0-lite-260228`.

- Call it directly through the OpenAI-compatible interface. There is no need to configure the BytePlus SDK separately.

Summary

Key takeaways for Seed 2.0 Lite 260228:

- Official Release: Formally launched via the BytePlus ModelArk platform. The version number `seed-2-0-lite-260228` marks the February 28, 2026 update.

- Ultimate Cost-Effectiveness: Input for the 0-128K range is just $0.25/M tokens, 90% cheaper than GPT-4o. It even slightly outperforms the Pro version on MMLU-Pro.

- 256K Long Context Window: Supports processing approximately 200,000 Chinese characters in one go. Tiered pricing makes short-text scenarios even more affordable.

- Multimodal + Agent Capabilities: Supports text, image, and video understanding, along with tool-calling capabilities, making it perfect for building production-grade AI applications.

For developers needing to strike a balance between performance and cost, Seed 2.0 Lite 260228 is one of the most noteworthy mid-range models of 2026. We recommend trying it out via APIYI (apiyi.com), which offers free credits and a unified interface for the entire Seed 2.0 series.


Author: APIYI Technical Team

Technical Discussion: Feel free to discuss your experience with Seed 2.0 Lite in the comments. For more API integration guides, visit the APIYI Documentation Center at docs.apiyi.com.