On May 11, 2026, several Reddit users spotted a model card labeled "Omni" within the Gemini app interface. The description read: "Create with Gemini Omni: meet our new video model, remix your videos, edit directly in chat, try templates, and more." While Google hasn't made an official announcement yet, this leak has thrust Gemini Omni into the spotlight, just one week before Google I/O 2026, scheduled for May 19-20.

This article synthesizes the latest reports from English-language media outlets including 9to5google, TestingCatalog, ChromeUnboxed, Digit, and WaveSpeed. We've distilled what these outlets have reported about the Gemini Omni video model into 8 key signals, covering product positioning, core capabilities, performance boundaries, and release pacing. For developers and content teams looking to gauge the technical roadmap before the conference, consider this a level-headed intelligence briefing rather than a collection of rumors.

Core Value: Understand the positioning, capabilities, performance, and release timeline of Gemini Omni in 3 minutes, and master actionable advice ahead of I/O 2026.

Quick Overview of Gemini Omni Video Model

To understand Gemini Omni, you first need to separate facts from speculation. The table below consolidates core information cross-checked across the English-language outlets listed above to help you avoid getting lost in fragmented leaks.

| Item | Details |
|---|---|
| First Spotted | 2026-05-11, Omni model card appeared in Gemini app UI |
| Source | Reddit user screenshots, followed by reports from 9to5google and TestingCatalog |
| Model Type | Multimodal model for integrated video generation and editing |
| Key Description | "Create with Gemini Omni: meet our new video model" |
| Demos Shown | Blackboard math proof scene, seaside restaurant conversation scene |
| Current Tier | Likely from the Flash tier; Pro tier has not been leaked |
| Usage Signal | Two video generations consumed 86% of the AI Pro daily quota |
| Expected Launch | Google I/O 2026, May 19-20, Mountain View |

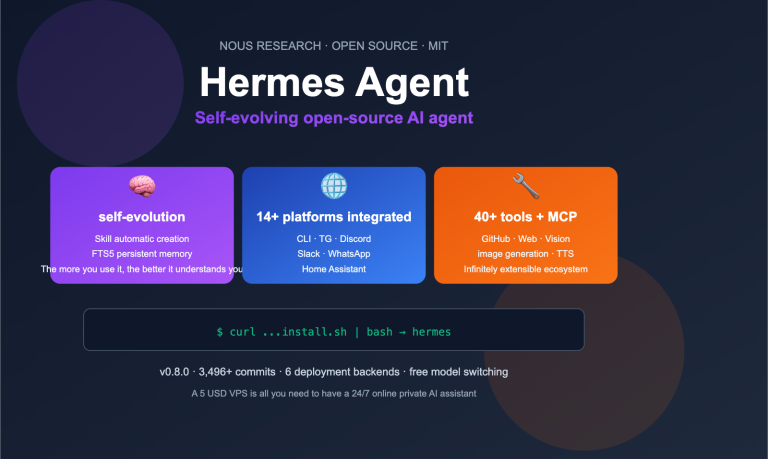

It's important to note that the leaked UI card only suggests that Google has moved Omni into limited, staged-rollout testing; it doesn't mean all capabilities will be available to all users on the day of I/O. For developers tracking Gemini Omni, we recommend registering an account at APIYI (apiyi.com) and preparing a unified base_url for your interface. Once Google officially releases it, you'll be able to switch models within your existing codebase immediately, saving the overhead of building a separate invocation pipeline.
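One way to read "switch models within your existing codebase" is to keep the endpoint and model name in configuration rather than in code. The sketch below is a minimal illustration under that assumption. The environment variable names, the placeholder URL, and the model identifiers are all hypothetical; no Omni model ID has been published.

```python
import os
from typing import Mapping

# Hypothetical defaults for illustration; replace with your provider's real values.
DEFAULT_BASE_URL = "https://api.example.invalid/v1"
DEFAULT_MODEL = "veo-3.1"


def load_video_config(env: Mapping[str, str] = os.environ) -> dict:
    """Read the endpoint and model name from the environment, with fallbacks."""
    return {
        "base_url": env.get("VIDEO_API_BASE_URL", DEFAULT_BASE_URL),
        "model": env.get("VIDEO_MODEL", DEFAULT_MODEL),
    }


# Today: no overrides, so the default model is used.
today = load_video_config({})
# After a launch: only the environment changes, not the calling code.
later = load_video_config({"VIDEO_MODEL": "omni-placeholder-id"})
print(today["model"], "->", later["model"])
```

With this layout, moving to a new model is a deployment setting rather than a code change, which is the property the paragraph above is after.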

5 Key Known Capabilities of the Gemini Omni Video Model

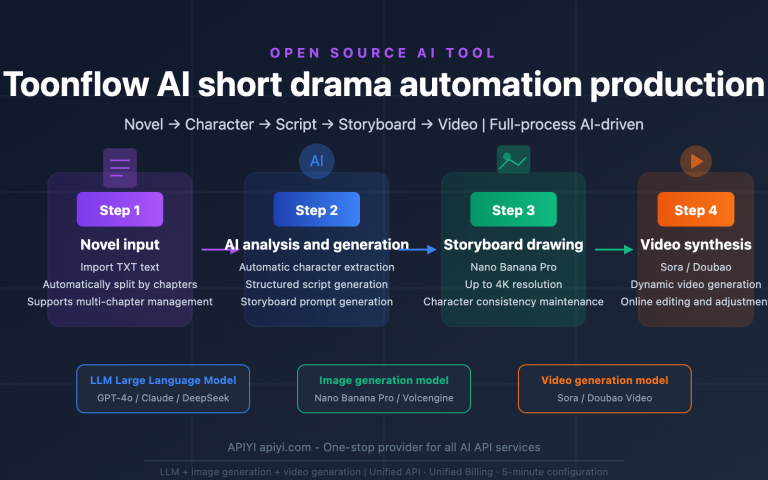

Gemini Omni isn't just a "text-to-video" tool. Based on UI descriptions and early demos, it integrates generation, editing, templates, and chat-based interaction into a unified system. The following five capabilities have been confirmed by multiple media outlets, though they remain in a state of rapid evolution.

First is chat-based video editing. Users can state their modification requests directly in the chat box—such as swapping a subject, changing the scene, or rewriting a specific action—and the model will regenerate the footage based on existing clips, rather than forcing users to manually edit on a timeline. This capability directly challenges traditional post-production tools and is a key differentiator between Omni and Veo 3.1.

Second is watermark removal and object replacement. Early testers have reported that Omni’s performance on "remove watermark" and "swap object" commands is significantly better than its base image generation, making these features a unique selling point. Given the sensitivity of these operations, Google will likely implement copyright and compliance checks upon official release.

Third is native joint audio-video generation. Interpretations from WaveSpeed and GeminiOmniAI point in the same direction: Omni outputs both the video and synchronized spatial audio in a single inference pass, rather than generating video first and layering sound later. This joint modeling approach can reduce common AI video issues like lip-sync errors and inconsistent ambient sound.

Fourth is long-form script context. Multiple media outlets have noted that Omni accepts longer prompts and script contexts than Veo 3, making it well-suited for multi-shot storytelling or long-form product explanations. Combined with the Gemini series' signature strength in long context management, if this capability holds true, it will significantly widen the gap between Omni and short-video-focused models like Sora.

Fifth is reference image-driven consistency. Omni supports using a reference image as an anchor for identity, lighting, and color, ensuring that generated actions retain the visual characteristics of the original character or scene. This is ideal for brand advertising, IP-based video, and digital human content.

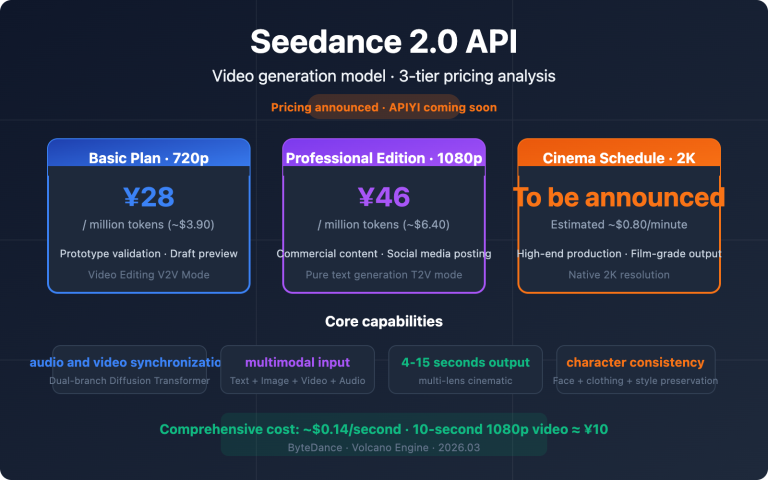

💡 Quick Start Tip: Before Gemini Omni is officially released, you can use mainstream video models like Veo 3.1, Seedance 2, and Hailuo on the APIYI (apiyi.com) platform to refine your prompt engineering. This allows for a smooth transition once Omni goes live, minimizing your trial-and-error costs.

Speculation on the Gemini Omni Flash and Pro Dual-Tier Architecture

Both TestingCatalog and WaveSpeed have noted that while the leaked UI only shows one "Omni" name, the model card naming conventions, parameter options, and speed metrics are highly consistent with the "Flash + Pro" structure seen in other Gemini family members. The table below outlines the speculated differences between these two product lines to help developers plan their future model selection.

| Tier | Speculated Positioning | Speculated Characteristics | Use Cases |
|---|---|---|---|
| Gemini Omni Flash | High-frequency production | Fast speed, lower cost per clip, medium visual quality | Social media short videos, ad A/B testing, bulk content |
| Gemini Omni Pro | High-quality production | Slower inference, refined image quality, superior native audio | Brand films, long-form video scripts, cinematic shots |

There are two main clues suggesting that the currently public demos are from the Flash tier: first, the visual quality of the early math blackboard and restaurant scenes does not exceed the level of Veo 3.1; second, the Pro tier is typically announced alongside high-compute inference features like "Deep Think." Once Google announces the Pro tier and its pricing at I/O 2026, developers will be able to determine whether they need to utilize both product lines for different scenarios.

For teams building video generation applications, a more practical approach is to use the multi-model aggregation interface on APIYI (apiyi.com) as a foundation. By building your business-side prompt management, parameter handling, and callback workflows as a "model-agnostic" middle layer, you can switch to new capabilities—like Omni Flash or Pro—simply by updating the model field without needing to take your system offline.
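A "model-agnostic" middle layer can be sketched as a business-level job object plus a registry of per-model payload builders. This is an illustration only: the `VideoJob` fields, the payload shape, and the model labels (including the Omni placeholder) are assumptions made for the example, not any provider's real schema.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class VideoJob:
    """Business-level request, independent of any provider."""
    prompt: str
    duration_s: int
    callback_url: str


PayloadBuilder = Callable[[VideoJob], dict]


def default_builder(model: str) -> PayloadBuilder:
    """Build a payload in an invented, generic shape (not a real provider schema)."""
    def build(job: VideoJob) -> dict:
        return {
            "model": model,
            "prompt": job.prompt,
            "duration": job.duration_s,
            "callback": job.callback_url,
        }
    return build


# Registry of supported models; the names are labels chosen for this sketch.
BUILDERS: Dict[str, PayloadBuilder] = {
    "veo-3.1": default_builder("veo-3.1"),
    "seedance-2": default_builder("seedance-2"),
}


def build_request(job: VideoJob, model: str) -> dict:
    """Translate a job into a payload for the chosen model."""
    if model not in BUILDERS:
        raise ValueError(f"unknown model: {model}")
    return BUILDERS[model](job)


job = VideoJob("Professor writes trig identities", 8, "https://example.invalid/hook")
# Adding a new tier later is one registry entry; callers are unchanged.
BUILDERS["omni-flash-placeholder"] = default_builder("omni-flash-placeholder")
payload = build_request(job, "omni-flash-placeholder")
print(payload["model"])
```

If a future model needs a different payload shape, it gets its own builder function in the registry while `build_request` and the calling code stay the same.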

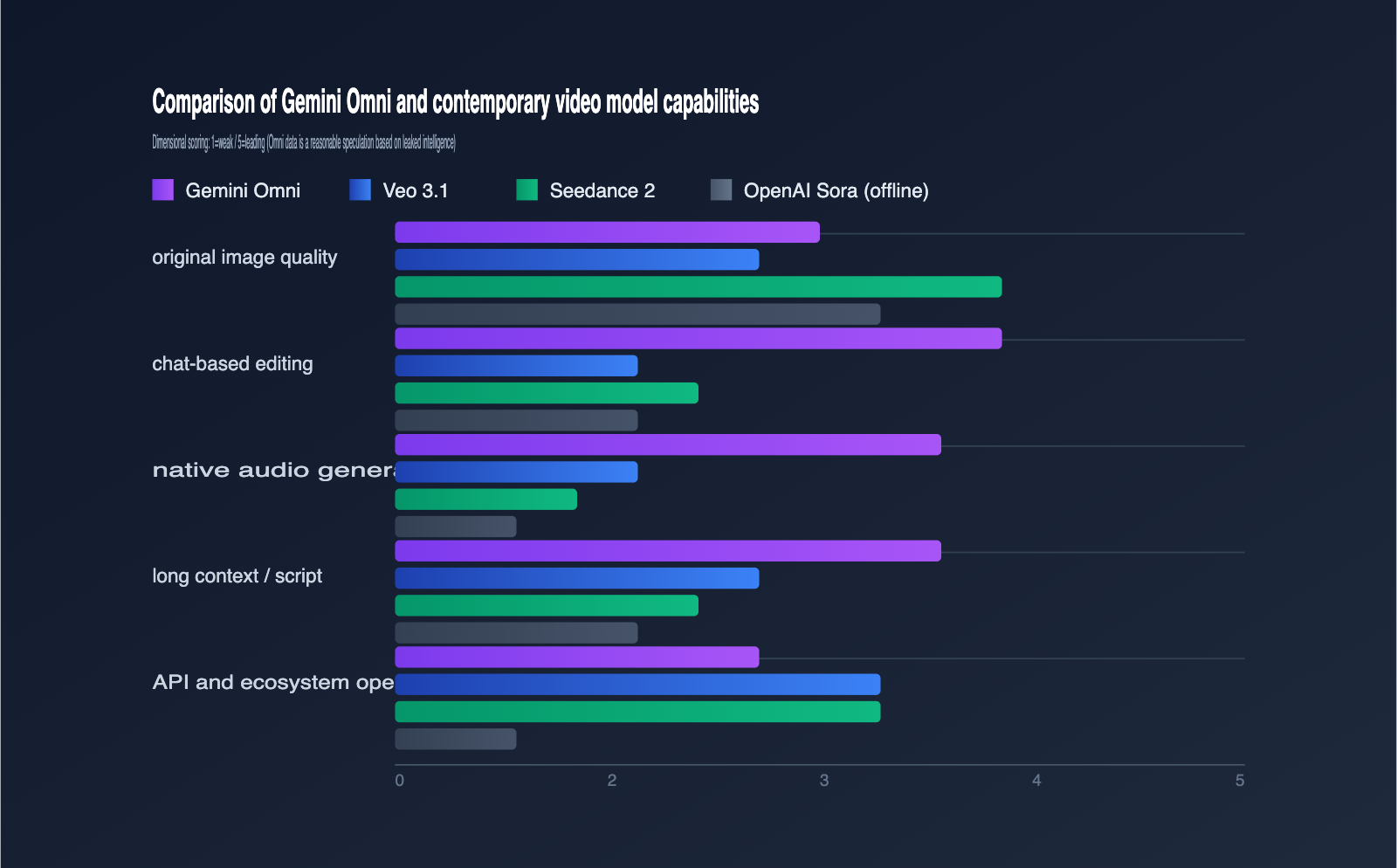

Analysis of the Relationship Between Gemini Omni, Veo 3.1, Seedance 2, and Sora

To understand Gemini Omni's market position, you have to view it within the current landscape of video models. The comparison table below summarizes the capability differences of the most notable models as of May 12, 2026. Note that data related to Omni is still speculative.

| Dimension | Gemini Omni | Veo 3.1 | Seedance 2 | OpenAI Sora |
|---|---|---|---|---|
| Primary Positioning | Video Gen + Chat-based Editing | Video Generation | High-Fidelity Video Gen | Discontinued early 2026 |
| Raw Image Quality | Above Average (est.) | Average | Industry Benchmark | High (historical) |
| Chat-based Editing | Key Highlight | Not Supported | Limited Support | No longer iterating |
| Native Audio | Single-inference sync (est.) | Native audio generation | Post-processing | No native audio |
| API Availability | Expected with I/O | Vertex AI / Gemini API | Volcengine | Closed |
| Commercial License | TBD | Available | Available | Suspended |

Gemini Omni's real "killer feature" isn't replacing models like Seedance 2 that win on raw image quality; it's using Gemini's multimodal capabilities to compress the "generate → edit → regenerate" workflow into a chat window. For developers, this means the product form of video generation applications may shift from "editor + model" to "chat + model."

The gap left in the content ecosystem after OpenAI shut down Sora in early 2026 provides a perfect opportunity for Gemini Omni to step up. If your team is still evaluating which video generation ecosystem to bet on, I recommend using the unified API proxy service at APIYI (apiyi.com) to integrate both Veo 3.1 and Seedance 2 first. You can then add an Omni call chain once it's officially released, allowing you to defer your final selection until after the conference.

Gemini Omni Demo Observations and Usage Boundaries

Beyond the capability list and tier speculation, another clue worth noting is the performance and usage data from early demos. 9to5google reported on two public demos covering difficult tasks like text rendering and long-take storytelling.

| Demo Theme | Prompt Key Elements | Observations |
|---|---|---|
| Math Blackboard | Professor writing trig identities | Text rendering is stable, minor stitching artifacts |
| Seaside Restaurant | Two men enjoying pasta at a high-end restaurant | Natural camera depth, lighting, and mood |
| Usage Sample | Two video prompts | Consumed 86% of daily AI Pro quota |

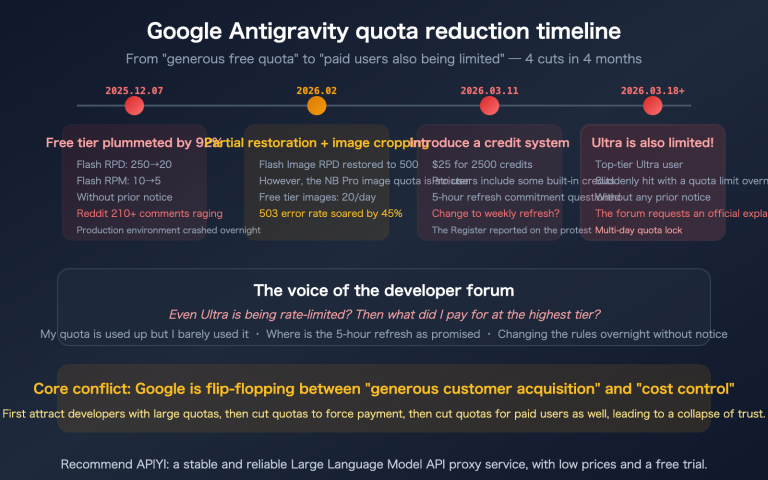

Usage data is the detail most easily overlooked in this leak. Two videos consuming 86% of a daily quota suggests that a single Omni generation costs far more compute than a request to standard models like Imagen 4 or Gemini 2.5 Flash, although one sample is not enough to pin down the exact cost. Google has already explicitly stated in another announcement that it will introduce "explicit usage limits" for Gemini accounts, suggesting that Omni will likely follow this restrictive quota strategy upon release.
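To make the quota figure concrete, here is the back-of-the-envelope arithmetic as a short sketch. It assumes both generations cost the same and that quota is consumed linearly, neither of which the leak confirms.

```python
import math
from typing import Tuple


def generations_per_day(quota_used_pct: float, generations: int) -> Tuple[float, int]:
    """Estimate per-generation cost and the number of full generations a day allows."""
    per_generation = quota_used_pct / generations
    max_per_day = math.floor(100 / per_generation)
    return per_generation, max_per_day


per_generation, max_per_day = generations_per_day(quota_used_pct=86, generations=2)
print(f"{per_generation:.0f}% per generation, at most {max_per_day} per day")
# prints "43% per generation, at most 2 per day"
```

Under these assumptions, an AI Pro account would get two Omni videos per day, with the remaining 14% of quota too small for a third.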

For smaller teams, the most pragmatic approach is not to tie your video generation to a single channel. I recommend using the APIYI (apiyi.com) platform to call the Gemini series, splitting your daily budget into a mix of models: use Veo 3.1 or Seedance 2 for high-frequency content, and reserve Omni for key demonstrations. This way, you can enjoy Omni's differentiated capabilities without having your cash flow throttled by a single platform's quota policy.
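The "reserve Omni for key demonstrations" idea can be expressed as a small daily planner. This is a sketch under stated assumptions: the priority scores, the number of Omni slots, and the model labels are hypothetical inputs you would supply yourself.

```python
from typing import List, Tuple


def plan_day(jobs: List[Tuple[str, int]], omni_slots: int) -> List[Tuple[str, str]]:
    """Assign the highest-priority jobs to Omni and the rest to a cheaper model.

    jobs: (job_name, priority) pairs; a higher priority means more important.
    omni_slots: how many Omni generations the daily quota allows.
    """
    ranked = sorted(jobs, key=lambda job: job[1], reverse=True)
    plan = []
    for index, (name, _priority) in enumerate(ranked):
        model = "omni" if index < omni_slots else "veo-3.1"
        plan.append((name, model))
    return plan


jobs = [("teaser", 1), ("launch-demo", 9), ("social-clip", 2), ("investor-reel", 7)]
plan = plan_day(jobs, omni_slots=2)
for name, model in plan:
    print(f"{name}: {model}")
```

Here the two highest-priority jobs (`launch-demo` and `investor-reel`) go to Omni and the other two fall back to the cheaper model.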

The Impact of the Gemini Omni Video Model on Developers and the Industry

By synthesizing these signals, we can evaluate the potential impact of Gemini Omni from both developer and industry perspectives. This isn't just a dry recap of technical specs or over-hyped optimism; it's a reasoned inference based on the intelligence we have so far.

Impact on Video Generation App Developers

The first wave of impact hits teams building video generation SaaS. Omni makes chat-based editing a first-class citizen, which means traditional video editor UIs are no longer a "must-have." Developers now need to rethink whether to use a conversational interface as the sole entry point or keep a timeline as a fallback.

The second wave involves AI video content creators and MCNs. Native audio-video joint generation will significantly reduce the workload for post-production synthesis, though tight daily quotas will limit the volume of video a single person can produce. A more robust strategy is to use Omni as a "key shot enhancer," while relying on lower-cost models for routine content.

If your product relies on video generation APIs, I suggest you start doing a few things on the APIYI (apiyi.com) platform now: first, unify the encapsulation layer for all model invocations; second, build a prompt A/B testing library; and third, prepare three backup presets—Omni, Veo, and Seedance—for your key business workflows to avoid quota fluctuations on launch day.
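The three backup presets can be wired together as an ordered fallback chain. The sketch below is illustrative only: `QuotaExceeded`, the preset labels, and `fake_call` are stand-ins invented for this example, and a real integration would replace `fake_call` with the provider's actual client.

```python
from typing import Callable, Sequence, Tuple


class QuotaExceeded(Exception):
    """Raised by the caller-supplied function when a model's quota is used up."""


def generate_with_fallback(
    prompt: str,
    presets: Sequence[str],
    call: Callable[[str, str], str],
) -> Tuple[str, str]:
    """Try each preset in order; return (model, result) from the first that succeeds."""
    failures = []
    for model in presets:
        try:
            return model, call(model, prompt)
        except QuotaExceeded as exc:
            failures.append(f"{model}: {exc}")
    raise RuntimeError("all presets failed: " + "; ".join(failures))


def fake_call(model: str, prompt: str) -> str:
    """Stand-in for a real API call; pretends the Omni quota is exhausted."""
    if model == "omni":
        raise QuotaExceeded("daily limit reached")
    return f"video from {model}"


used_model, result = generate_with_fallback(
    "seaside dinner", ["omni", "veo", "seedance"], fake_call
)
print(used_model, result)  # prints "veo video from veo"
```

Only quota errors trigger the fallback here; other exceptions propagate, so a malformed prompt fails fast instead of being retried on every model.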

Impact on the AI Video Industry Landscape

Since OpenAI Sora exited the spotlight, the leadership position in the AI video space has been rotating between Veo, Seedance, and Runway Gen-4. If Gemini Omni ships with the native audio-video generation and long script context described in the leaks, it will effectively extend Google's "multimodal moat" into the video generation field, putting significant pressure on other vendors.

From an ecosystem perspective, it's highly likely that Google will distribute Omni through three channels simultaneously: the Gemini App, Vertex AI, and AI Studio. This means Omni will appear in consumer-grade chats while also being embedded into existing products as a developer API and enterprise agent tool. If your team needs to manage invocation entry points centrally within your organization, you can use APIYI (apiyi.com) to consolidate multiple invocation channels—like Omni, Veo, and Seedance—under a single bill and audit log.

Gemini Omni Video Model Timeline Around I/O 2026

To help your team plan your integration, I've organized the current public intelligence by date below. Note that only the May 11 leak has been reported so far; the internal testing window is inferred from it, and everything dated after May 12 is projected.

| Stage | Date | Key Event |
|---|---|---|
| Limited Testing | Before 2026-05-11 | Google internal testing of Omni model card |
| UI Leak | 2026-05-11 | Reddit screenshots surfaced, followed by coverage in major English-language media |
| Intelligence Gathering | 2026-05-12 to 2026-05-18 | Vendors and creators focus on analysis and pre-launch hype |
| Official Launch | 2026-05-19 to 2026-05-20 | Google I/O 2026 keynote and developer access |
| API Availability | After 2026-05-20 | Gemini API / Vertex AI / AI Studio gradually open |
| Proxy Access in China | Synchronized with API launch | Aggregation platforms like APIYI (apiyi.com) follow up with configurations |

FAQ

Q1: Will Gemini Omni really be released at I/O 2026?

Based on Google's naming conventions and the pace of leaks, I/O 2026 is the most logical release window. However, whether the API will be available on May 19th depends on Google's official announcements at the event. We recommend setting your expectations for May 19-20, while allowing for a one-week buffer for a gradual rollout.

Q2: What is the relationship between Gemini Omni and Veo 3.1?

There are currently three main schools of thought: Omni is the new public name for Veo, Omni is a separate model from Veo, or Omni is a higher-level omni-model that unifies image and video. Given the descriptions in the leaked UI, the third possibility is the most likely, though we still need official confirmation from Google.

Q3: Can developers in China use Gemini Omni?

As long as Google opens up Omni access via the Gemini API and Vertex AI, developers in China can connect through API proxy services like APIYI (apiyi.com). We suggest configuring the base_url for the Gemini series on the platform in advance to avoid last-minute scrambling on launch day.

Q4: The early demos don’t look as good as Seedance 2; does that mean Omni isn’t powerful?

It's not that simple. Many media outlets speculate that the current demos are from the Flash tier, and the Omni Pro version hasn't been revealed yet. Furthermore, Omni's true differentiators are its editing capabilities and native audio integration—raw image quality isn't its primary battlefield.

Q5: Should I wait for Omni, or use another video model now?

We recommend using Veo 3.1 as your general-purpose solution, Seedance 2 for high-quality requirements, and Hailuo for cost-sensitive projects. You can access all three models through APIYI (apiyi.com) and simply add a fourth invocation chain once Omni officially launches.

Summary

The early leaks of Gemini Omni have pushed the conversation around video models to a fever pitch ahead of Google I/O 2026. Based on what we know, its core selling point isn't just image quality, but the combination of conversational editing, native audio-video integration, and long script context. The goal is to shift the video generation workflow from complex editors directly into the chat box.

Before May 19th, the smartest strategy isn't to guess the details, but to build your video generation infrastructure. By standardizing your multi-model interface, prompt library, and usage monitoring, you'll keep switching costs low when Omni arrives. We suggest teams use aggregation platforms like APIYI (apiyi.com) to prepare their deployment, keeping the effort required to integrate Gemini Omni to just 1-2 days.

Author: APIYI Technical Team

Contact: Get the latest integration guide for Gemini Omni via APIYI (apiyi.com) as soon as it goes live.

Last Updated: 2026-05-12