title: "Sora-2 API Sunset: Migration Guide and Best Alternatives for 2026"

description: "OpenAI has officially announced the sunset of the Sora-2 API. Learn about the timeline, impact, and how to migrate to top-tier alternatives like SeeDance 2.0 and Wan 2.7."

tags: [AI, Sora, API, Video Generation, Migration]

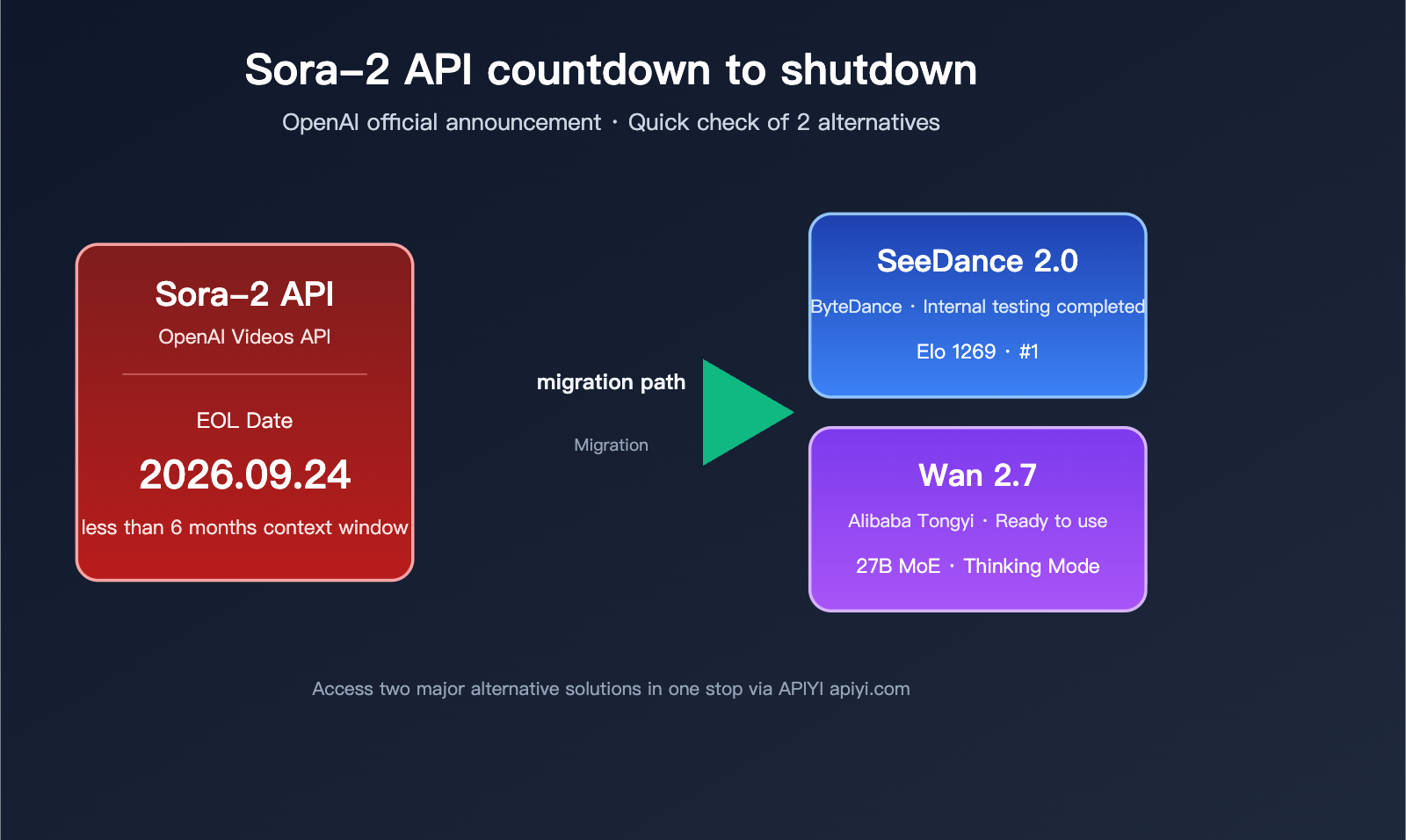

On March 24, 2026, OpenAI announced via a developer notification that the Sora-2 API will be shut down on September 24, 2026. Any video generation workload that relies on sora-2, sora-2-pro, or the Videos API must therefore be migrated within six months of that notice.

Even more concerning is that the so-called "reverse-engineered Sora APIs" circulating over the past six months have become largely unusable following OpenAI's tightened risk controls. Continuing to rely on those will only lead to further operational pitfalls. This article provides a complete breakdown of the Sora-2 API sunset timeline, the scope of the impact, and recommends two production-ready alternatives based on real-world testing: ByteDance's SeeDance 2.0 and Alibaba's Wan 2.7.

If you're currently using the Sora-2 API for video content production, we recommend comparing SeeDance 2.0 and Wan 2.7 via the APIYI (apiyi.com) platform. The platform has unified access to multiple mainstream video generation models, which can significantly reduce your migration costs.

Sora-2 API Sunset Timeline and Scope of Impact

Official Sunset Milestones for Sora-2 API

OpenAI has adopted a two-phase shutdown for Sora: the consumer-facing web and app products go first, and the API follows roughly five months later, which gives developers six months from the announcement to migrate. This is one of the gentler "soft-landing" retirements in OpenAI's history, but the window is still tight for teams running video generation in production.

| Milestone | Event | Affected Parties | Buffer Period |
|---|---|---|---|
| 2026-03-24 | Official Notification | All Sora-2 API users | T+0 |
| 2026-04-26 | Sora Web & App Shutdown | End-users, content creators | T+33 days |
| 2026-09-24 | Sora-2 API Sunset | All API-integrated services | T+184 days |
| From 2026-09-25 | API returns 410 Gone | Non-migrated services | n/a |

OpenAI explicitly advises in its Help Center that if you wish to retain previously generated video content from Sora, you must export your data before the app shuts down on April 26. However, for developers integrated via API, the real deadline is September 24.

It's worth noting that OpenAI has not promised any possibility of an "extension." Based on the execution of several model sunsets since 2025, shutting down on the scheduled date has become standard procedure, so you shouldn't count on any delays.
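The buffer figures in the timeline table are plain date arithmetic, and the same calculation can feed a countdown in your own monitoring. The sketch below uses only the dates stated above; the function names are illustrative.

```python
from datetime import date

ANNOUNCED = date(2026, 3, 24)
APP_SHUTDOWN = date(2026, 4, 26)
API_SUNSET = date(2026, 9, 24)


def buffer_days(milestone: date, start: date = ANNOUNCED) -> int:
    """Days between the announcement and a milestone (the T+N column)."""
    return (milestone - start).days


def days_remaining(today: date, deadline: date = API_SUNSET) -> int:
    """Days left before the deadline; negative once it has passed."""
    return (deadline - today).days


print(f"App shutdown: T+{buffer_days(APP_SHUTDOWN)} days")
print(f"API sunset:   T+{buffer_days(API_SUNSET)} days")
```

Calling `days_remaining(date.today())` from a scheduled job is one simple way to keep the deadline visible to the team.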

Full List of Models Affected by the Sora-2 API Sunset

Many teams assume only the sora-2 alias is being retired, but this shutdown affects the entire suite of Videos API models:

| Model ID | Type | Status | Migration Suggestion |
|---|---|---|---|
| sora-2 | Standard alias | Sunset | SeeDance 2.0 / Wan 2.7 |
| sora-2-pro | HD alias | Sunset | SeeDance 2.0 (1080p) |
| sora-2-2025-10-06 | Standard snapshot | Sunset | SeeDance 2.0 |
| sora-2-2025-12-08 | Standard snapshot | Sunset | SeeDance 2.0 |
| sora-2-pro-2025-10-06 | Pro snapshot | Sunset | Wan 2.7 (incl. Thinking Mode) |
| Videos API (Full suite) | API endpoint | Retired | Use aggregation platforms |

In short, OpenAI is removing every video generation API entry point. From September 25 onwards, all requests to endpoints under the videos path will return 410 Gone or a deprecation error. This differs significantly from previous GPT-series model sunsets, where dated snapshots were retired but the aliases kept working. This is an exit from an entire business line.

🎯 Key Takeaway: This means you cannot simply "swap the model name" to complete your migration; you must switch to an entirely different video generation API. We recommend completing your integration testing for the new solution by the end of June. You can use an aggregation platform to compare SeeDance 2.0 and Wan 2.7 side-by-side to avoid the stress of a last-minute migration.
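As a starting point for that planning, the affected-model table can be encoded as a lookup so that services can flag retired model IDs before a request is ever sent. This is a minimal sketch: the replacement identifiers `seedance-2.0` and `wan-2.7` are the names used in this article's examples and may differ on your platform, and the helper names are illustrative.

```python
from typing import Dict, List

# Copied from the affected-model table; targets are this article's model names.
SUNSET_MODELS: Dict[str, List[str]] = {
    "sora-2": ["seedance-2.0", "wan-2.7"],
    "sora-2-pro": ["seedance-2.0"],
    "sora-2-2025-10-06": ["seedance-2.0"],
    "sora-2-2025-12-08": ["seedance-2.0"],
    "sora-2-pro-2025-10-06": ["wan-2.7"],
}


def is_sunset(model_id: str) -> bool:
    """True if the model ID is on the retirement list."""
    return model_id in SUNSET_MODELS


def suggested_replacements(model_id: str) -> List[str]:
    """Suggested targets for a retired model, or an empty list if unaffected."""
    return list(SUNSET_MODELS.get(model_id, []))


def is_retired_response(status_code: int) -> bool:
    """HTTP 410 Gone means the endpoint is permanently removed; do not retry."""
    return status_code == 410
```

Treating 410 as non-retryable matters because a generic retry loop would otherwise keep hitting an endpoint that will never come back.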

The Real Reason Behind the Sora-2 API Sunset

OpenAI has not provided a detailed explanation, but analysis from sources such as The Decoder, Futurum Group, and Wikipedia points to three reasons:

- Unsustainable Compute Costs: The GPU time required for a single video generation call is dozens of times higher than that of a text model, and commercial revenue has failed to cover these costs. After the launch of Sora-2, OpenAI had to impose strict per-user quotas, which in turn stifled developer integration.

- High Content Safety Risks: Even with watermarking and content moderation, Sora faced frequent deepfake and copyright controversies, with legal and compliance costs far exceeding the value of the model itself.

- Strategic Focus: OpenAI is prioritizing compute resources for its core GPT, Operator, and Agent businesses; video is no longer a core strategic direction.

These reasons matter when choosing an alternative: a vendor that treats video generation as a core strategic pillar is far more likely to keep investing in it. ByteDance and Alibaba both do, which is why their product iteration is currently faster than OpenAI's and why they are well-positioned to take over the Sora-2 user base in 2026.

Why "Reverse-Engineered Sora APIs" Are No Longer a Viable Option


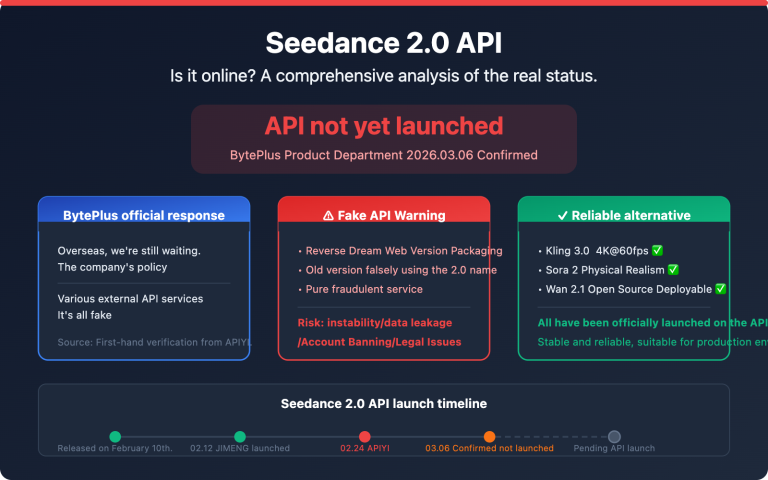

The State of Reverse-Engineered Sora APIs in 2026

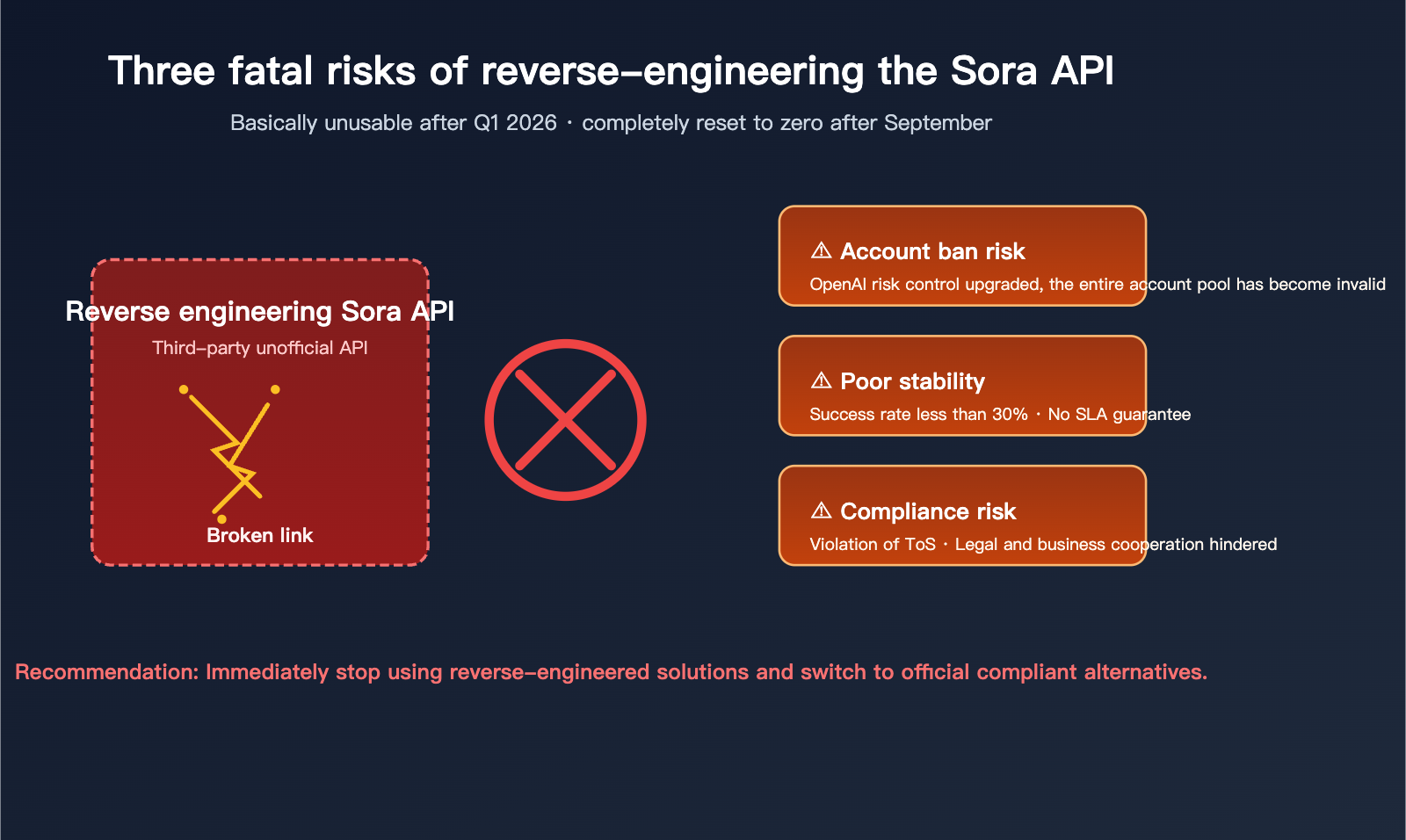

Over the past six months, the Chinese tech community has seen a proliferation of "reverse-engineered" interfaces claiming to provide access to "Sora-2" without an OpenAI account, priced at a fraction of the official cost. However, based on feedback from numerous developer communities, these interfaces have become essentially unusable since Q1 2026:

- In January 2026, OpenAI upgraded its Cloudflare security rules, causing most reverse-engineered interfaces to return 403 errors.

- Some providers rely on account pools, but OpenAI has tightened its crackdown on bulk abnormal accounts, leading to entire pools being invalidated.

- Even when they occasionally work, generation speeds are incredibly slow, and the success rate is under 30%, making them completely unsuitable for production.

- An increasing number of these service providers are "vanishing" or pivoting, leaving users at risk of losing their prepaid balances.

More importantly, since OpenAI has decided to shut down the official API on September 24th, the "upstream" for these reverse-engineered interfaces will disappear entirely. This path will be a dead end after September.

4 Reasons Why You Should Stop Investing in Reverse-Engineered Solutions

| Risk Dimension | Specific Manifestation | Business Consequence |
|---|---|---|
| Stability Risk | Interfaces can be blocked at any time; no SLA | Production environments face constant downtime |
| Compliance Risk | Violates OpenAI ToS; may involve account trading | Legal and partnership obstacles |
| Sunk Costs | Official shutdown in September renders them useless | Wasted resources on secondary migrations |
| Data Risk | Third-party proxies can access your prompts and content | Leakage of trade secrets |

⚠️ Recommendation: If your team is currently using any form of reverse-engineered Sora API, stop new feature development immediately and prioritize evaluating official, compliant alternatives. We recommend accessing SeeDance 2.0 or Wan 2.7 through compliant aggregation platforms to ensure service stability and avoid future compliance headaches.

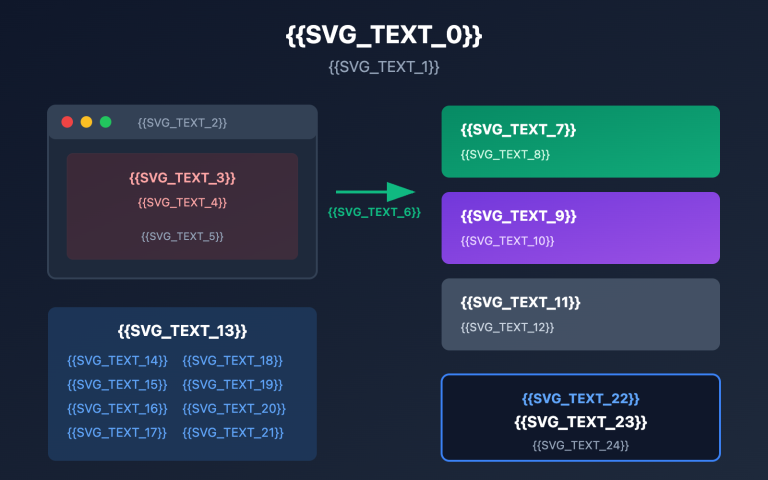

Sora-2 API Alternative 1: SeeDance 2.0

Core Positioning of SeeDance 2.0

SeeDance 2.0 is the flagship video generation model officially released by ByteDance's Doubao model team on February 9, 2026. It entered public beta on the BytePlus ModelArk platform on April 14 and became available via the fal platform on April 9. On the Artificial Analysis video model leaderboard, SeeDance 2.0 achieved an Elo score of 1269, surpassing Google Veo 3, OpenAI Sora 2, and Runway Gen-4.5, making it the current top-ranked model on public leaderboards.

Its biggest differentiator is its unified multimodal audio-video joint generation architecture. Instead of generating a video and then adding audio in a separate step, it outputs up to 15 seconds of cinematic audio-visual content in a single inference pass. Sora-2 also ships native audio (see the core metrics comparison later in this article), so the practical difference lies in the joint-generation design and in accepting audio as a reference input, which Sora-2 does not support.

SeeDance 2.0 Key Capabilities

| Capability Dimension | SeeDance 2.0 Performance | Comparison to Sora-2 |
|---|---|---|
| Max Duration | 15 seconds | On par |
| Synchronized Audio | Native audio-video joint generation | Sora-2 has native audio but no audio reference input |
| Input Modality | Text / Image / Audio / Video | Sora-2 (Text + Image only) |
| Camera Control | Director-level camera instructions | Sora-2 (Weaker) |
| Physical Realism | Industry-leading | On par |
| Artificial Analysis Elo | 1269 (#1) | ~1180 |
| Public Access Channels | BytePlus ModelArk / fal / Aggregators | OpenAI Official (Shutting down) |
| Chinese Prompt Understanding | Excellent | Average |

SeeDance 2.0 is currently available for internal testing via the APIYI (apiyi.com) platform. If you are looking for a replacement for Sora-2, you can contact our customer support to request a trial quota and access SeeDance 2.0 using the OpenAI-compatible protocol.

SeeDance 2.0 Invocation Example

Here is a simple example of how to call SeeDance 2.0 using the OpenAI-compatible interface:

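    from openai import OpenAI

    # Method and parameter names follow the platform's published example;
    # verify them against its documentation and your installed SDK version.
    client = OpenAI(
        api_key="your-api-key",
        base_url="https://api.apiyi.com/v1",
    )

    response = client.videos.generate(
        model="seedance-2.0",
        prompt="An orange cat jumps from a windowsill onto a sofa, sunset light streams through the blinds, camera slowly follows the cat",
        duration=10,          # seconds
        audio=True,           # request natively generated audio
        aspect_ratio="16:9",
    )

    print(response.video_url)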

📌 Full Invocation Example (Including Error Handling and Polling)

    import time

    from openai import OpenAI

    client = OpenAI(
        api_key="your-api-key",
        base_url="https://api.apiyi.com/v1",
    )

    def generate_seedance_video(prompt: str, duration: int = 10, timeout_s: int = 600):
        """Submit a task and poll until it completes, fails, or times out."""
        try:
            # Initiate the video generation task
            task = client.videos.generate(
                model="seedance-2.0",
                prompt=prompt,
                duration=duration,
                audio=True,
                aspect_ratio="16:9",
                resolution="1080p",
            )

            # Poll for completion, giving up after timeout_s seconds
            deadline = time.monotonic() + timeout_s
            while time.monotonic() < deadline:
                status = client.videos.retrieve(task.id)
                if status.state == "completed":
                    return status.video_url
                if status.state == "failed":
                    raise RuntimeError(f"Task failed: {status.error}")
                time.sleep(5)
            raise TimeoutError(f"Task {task.id} did not finish within {timeout_s}s")
        except Exception as e:
            print(f"Invocation error: {e}")
            return None

    url = generate_seedance_video(
        prompt="A vintage red double-decker bus drives through the streets of London after the rain, reflections of streetlights on the windows",
        duration=12,
    )

    print(url)
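The polling step is easier to test when it is separated from the SDK. The helper below is a sketch under that assumption: it takes any zero-argument callable that returns the task state, and it reuses the state names `completed` and `failed` from the example above. The `sleep` and `clock` parameters exist only so the loop can be exercised without real waiting.

```python
import time
from typing import Callable


def poll_until_done(
    fetch_state: Callable[[], str],
    timeout_s: float = 600.0,
    interval_s: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> str:
    """Call fetch_state until it returns a terminal state or time runs out."""
    deadline = clock() + timeout_s
    while True:
        state = fetch_state()
        if state in ("completed", "failed"):
            return state
        # Stop before sleeping if the next check would land past the deadline.
        if clock() + interval_s > deadline:
            raise TimeoutError(f"still '{state}' after {timeout_s} seconds")
        sleep(interval_s)
```

In production code, `fetch_state` could wrap whatever status call your platform exposes, for example a lambda that returns the state field of the retrieved task.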

SeeDance 2.0 Use Cases

- Branded Short Videos: Native synchronized audio + cinematic camera movement, perfect for commercials and brand assets.

- Multimodal Content Creation: Supports audio and video as reference inputs, ideal for IP-based content derivatives.

- Character Consistency: Excellent multimodal reference capabilities, suitable for serialized content production.

- Overseas Social Media: Native support for 16:9 / 9:16 / 1:1 aspect ratios, directly optimized for TikTok, YouTube, and Instagram.

- Music Videos: Audio-visual joint generation ensures that the visuals are naturally aligned with the rhythm of the music.

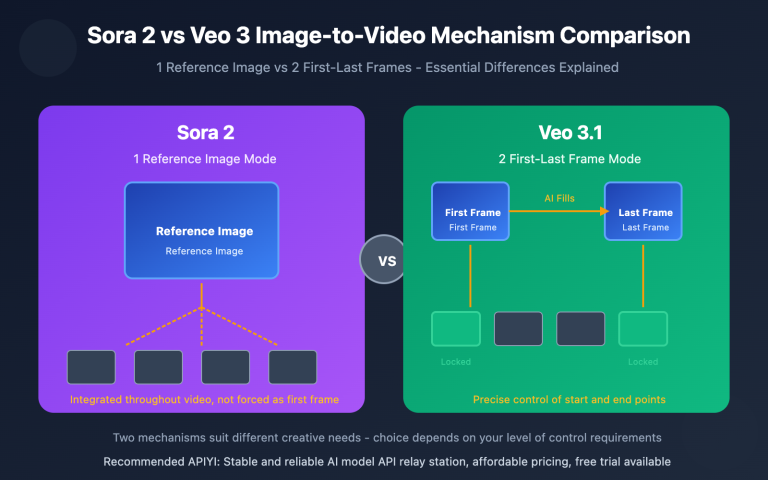

Sora-2 API Alternative 2: Wan 2.7

Core Positioning of Wan 2.7

Wan 2.7 is a next-generation video generation model released by Alibaba Tongyi Lab in March 2026. It is built on a 27B-parameter MoE (Mixture-of-Experts) architecture and uses Diffusion Transformer + Flow Matching technology. It is available via the Alibaba Cloud DashScope and WaveSpeedAI platforms, making it the first MoE video model from a Chinese vendor to offer a public API.

Unlike SeeDance 2.0, which focuses on multimodal fusion, Wan 2.7's two unique selling points are: Thinking Mode and precise start/end frame control. It can "think" through the storyboard structure before generating the video and allows you to specify both the starting and ending keyframes—features currently missing from the Sora-2 API.

Wan 2.7 Key Capabilities Overview

| Capability Dimension | Wan 2.7 Performance | vs. Sora-2 |
|---|---|---|
| Model Architecture | 27B MoE | Not Public |
| Max Duration | 15 seconds | Tied |
| Max Resolution | 1080p | Tied |
| Thinking Mode | ✅ Supports storyboard planning | ❌ Not supported |
| Start/End Control | ✅ Specify both start & end | ❌ Start only |
| 9-Grid Reference | ✅ Up to 9 reference images | ❌ Not supported |
| Subject + Audio Ref | ✅ Supports combined refs | ❌ Not supported |
| Prompt Length Limit | 5000 characters | ~1000 characters |
| Public API Channels | Alibaba Cloud DashScope / WaveSpeedAI / Aggregators | OpenAI Official (Sunset) |
| Unit Price Reference | From $0.10/sec | From $0.50/sec |

🎯 Scenario Recommendation: If your video generation workflow requires precise start and end frames (e.g., brand intros/outros, countdowns), Wan 2.7's control capabilities are something Sora-2 simply can't match. We recommend calling Wan 2.7 through an API proxy service to avoid the hassle of handling Alibaba Cloud's SDK and signature mechanisms yourself.

Wan 2.7 Invocation Example

    from openai import OpenAI

    # Using APIYI for seamless integration
    client = OpenAI(
        api_key="your-api-key",
        base_url="https://api.apiyi.com/v1",
    )

    # first_frame_url / last_frame_url pin the opening and closing keyframes;
    # thinking_mode asks the model to plan the storyboard before rendering.
    response = client.videos.generate(
        model="wan-2.7",
        prompt="Cyberpunk-style neon streets, camera slowly pans up from the ground to an aerial view of the city",
        first_frame_url="https://example.com/start.png",
        last_frame_url="https://example.com/end.png",
        thinking_mode=True,
        duration=10,
    )

    print(response.video_url)

📌 Wan 2.7 Multi-Image Reference Full Example (9-Grid Input + Thinking Mode)

    from openai import OpenAI

    client = OpenAI(
        api_key="your-api-key",
        base_url="https://api.apiyi.com/v1",
    )

    # Nine reference images: ref1.png through ref9.png
    reference_images = [
        f"https://example.com/ref{i}.png" for i in range(1, 10)
    ]

    response = client.videos.generate(
        model="wan-2.7",
        # Adjacent string literals are concatenated, so each piece ends with a space
        prompt=(
            "The protagonist travels through 9 different scenes, "
            "each corresponding to one of the reference images, "
            "ensuring consistent character appearance and clothing throughout."
        ),
        reference_images=reference_images,
        thinking_mode=True,
        duration=15,
        resolution="1080p",
        audio=True,
    )

    print(response.video_url)
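Because each generation call is billed, it can be worth rejecting obviously invalid requests before they reach the API. The sketch below checks a request against the limits listed in the capability table (15 seconds, 9 reference images, 5000 prompt characters). The function name is illustrative, and the platform may enforce additional rules not covered here.

```python
from typing import List, Optional

# Limits taken from the Wan 2.7 capability table in this article.
MAX_DURATION_S = 15
MAX_REFERENCE_IMAGES = 9
MAX_PROMPT_CHARS = 5000


def validate_wan_request(
    prompt: str,
    duration: int,
    reference_images: Optional[List[str]] = None,
) -> List[str]:
    """Return a list of problems; an empty list means the request looks valid."""
    problems = []
    if not prompt.strip():
        problems.append("prompt is empty")
    if len(prompt) > MAX_PROMPT_CHARS:
        problems.append(f"prompt exceeds {MAX_PROMPT_CHARS} characters")
    if not 1 <= duration <= MAX_DURATION_S:
        problems.append(f"duration must be between 1 and {MAX_DURATION_S} seconds")
    if reference_images is not None and len(reference_images) > MAX_REFERENCE_IMAGES:
        problems.append(f"at most {MAX_REFERENCE_IMAGES} reference images are supported")
    return problems
```

Returning all problems at once, rather than raising on the first, makes it easier to surface every issue to the caller in a single round trip.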

Wan 2.7 Use Cases

- Brand Intros/Outros: Precise start/end frame control eliminates the need for post-production alignment.

- Consistent Character Series: 9-grid reference images ensure characters don't drift across multiple shots.

- Complex Storyboarding: Thinking Mode automatically plans the camera structure, perfect for short dramas and commercials.

- Instructional Editing: Supports editing existing videos based on natural language commands.

- 9-Grid Narrative: Input multiple key frames at once to generate a coherent, narrative-driven video.

SeeDance 2.0 vs. Wan 2.7 vs. Sora-2: A Comprehensive Comparison

Core Metrics Comparison

| Comparison Dimension | Sora-2 (Sunset Soon) | SeeDance 2.0 | Wan 2.7 |
|---|---|---|---|
| Provider | OpenAI | ByteDance | Alibaba Tongyi |
| Release Date | 2025-10 | 2026-02-09 | 2026-03 |
| Service Status | Sunset on 2026-09-24 | Public Beta | Public Beta |
| Max Duration | 15s | 15s | 15s |
| Max Resolution | 1080p | 1080p | 1080p |
| Native Audio | ✅ | ✅ (Joint generation) | ✅ |
| Multimodal Input | Text + Image | Text + Image + Audio + Video | Text + Multi-reference images |
| Start/End Frame Control | ❌ Start only | ❌ | ✅ Bidirectional |
| Thinking Mode | ❌ | ❌ | ✅ |
| Artificial Analysis Elo | ~1180 | 1269 (#1) | Not yet ranked |
| Chinese Support | Average | Excellent | Excellent |
| Mainland China Compliance | ❌ | ✅ | ✅ |
| Unit Price Reference | $0.50/sec | $0.30/sec | From $0.10/sec |

Scenario-Based Selection Guide

| Business Scenario | Recommended Solution | Reason |
|---|---|---|
| Brand Advertising Shorts | SeeDance 2.0 | Synchronized audio + cinematic camera movement |
| Short Dramas/Series | Wan 2.7 | 9-grid reference ensures character consistency |
| Intro/Outro Customization | Wan 2.7 | Precise start/end frame control |
| Multimodal Remixing | SeeDance 2.0 | Supports audio/video reference inputs |
| Chinese Prompt-Driven | Both | Both outperform Sora-2 in Chinese understanding |
| Quality-First | SeeDance 2.0 | #1 on Artificial Analysis |
| Cost-Sensitive/Batch | Wan 2.7 | Starts at $0.10/sec, clear price advantage |
| Overseas Social Media | SeeDance 2.0 | Native support for multiple aspect ratios |
| E-commerce Videos | Wan 2.7 | Subject reference ensures product consistency |

💡 Selection Advice: If you can only pick one in the short term, SeeDance 2.0 is ideal for "quality-first" scenarios, while Wan 2.7 excels in "control-first" and "cost-first" scenarios. We recommend enabling access to both models via an API proxy service to route requests dynamically based on your specific business needs, ensuring maximum flexibility during the transition.
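One way to implement that dynamic routing is a small rule function keyed on what each job needs. The sketch below is an assumption about how a team might encode the selection guide: the `VideoJob` fields and the rule order are illustrative, and the model names are the ones used in this article's examples.

```python
from dataclasses import dataclass


@dataclass
class VideoJob:
    prompt: str
    needs_end_frame: bool = False               # e.g. brand intros/outros
    has_audio_or_video_reference: bool = False  # multimodal remixing
    cost_sensitive: bool = False                # large batch workloads


def pick_model(job: VideoJob) -> str:
    """Route a job by its hard requirements first, then by cost, then by quality."""
    if job.needs_end_frame:
        return "wan-2.7"        # only option with start and end frame control
    if job.has_audio_or_video_reference:
        return "seedance-2.0"   # only option accepting audio/video references
    if job.cost_sensitive:
        return "wan-2.7"        # lower per-second price
    return "seedance-2.0"       # quality-first default
```

Hard capability requirements come before cost in this ordering because a cheaper model that cannot satisfy the request is not a real option.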

Cost Estimation (10s 1080p Video)

| Model | Estimated Cost/Run | Estimated Monthly (10k runs) | Note |
|---|---|---|---|
| Sora-2 | ~$5.00 | ~$50,000 | Unavailable after Sept |
| SeeDance 2.0 | ~$3.00 | ~$30,000 | Includes native audio |
| Wan 2.7 | ~$1.00 | ~$10,000 | Entry-level price |

Note: Data is based on public information; actual pricing may vary. Using an API proxy service often provides better rates than direct vendor accounts.
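The figures in the table follow directly from the per-second reference prices quoted earlier, so you can rerun the estimate for your own clip length and volume. This sketch uses `Decimal` to avoid floating-point rounding in currency math; the prices are the article's reference values, not quotes.

```python
from decimal import Decimal

# Reference prices per second of generated video, from the comparison table.
PRICE_PER_SECOND = {
    "sora-2": Decimal("0.50"),
    "seedance-2.0": Decimal("0.30"),
    "wan-2.7": Decimal("0.10"),
}


def cost_per_run(model: str, seconds: int) -> Decimal:
    """Estimated cost of one generation of the given length."""
    return PRICE_PER_SECOND[model] * seconds


def monthly_cost(model: str, seconds: int, runs: int) -> Decimal:
    """Estimated monthly spend for a fixed clip length and run count."""
    return cost_per_run(model, seconds) * runs


for name in PRICE_PER_SECOND:
    print(f"{name}: ${cost_per_run(name, 10)} per run, ${monthly_cost(name, 10, 10_000)} per month")
```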

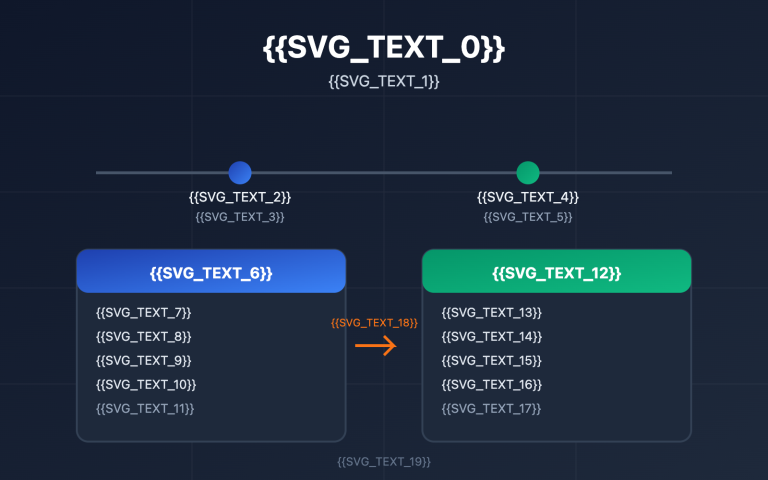

Quick Start: Migrating from Sora-2 API

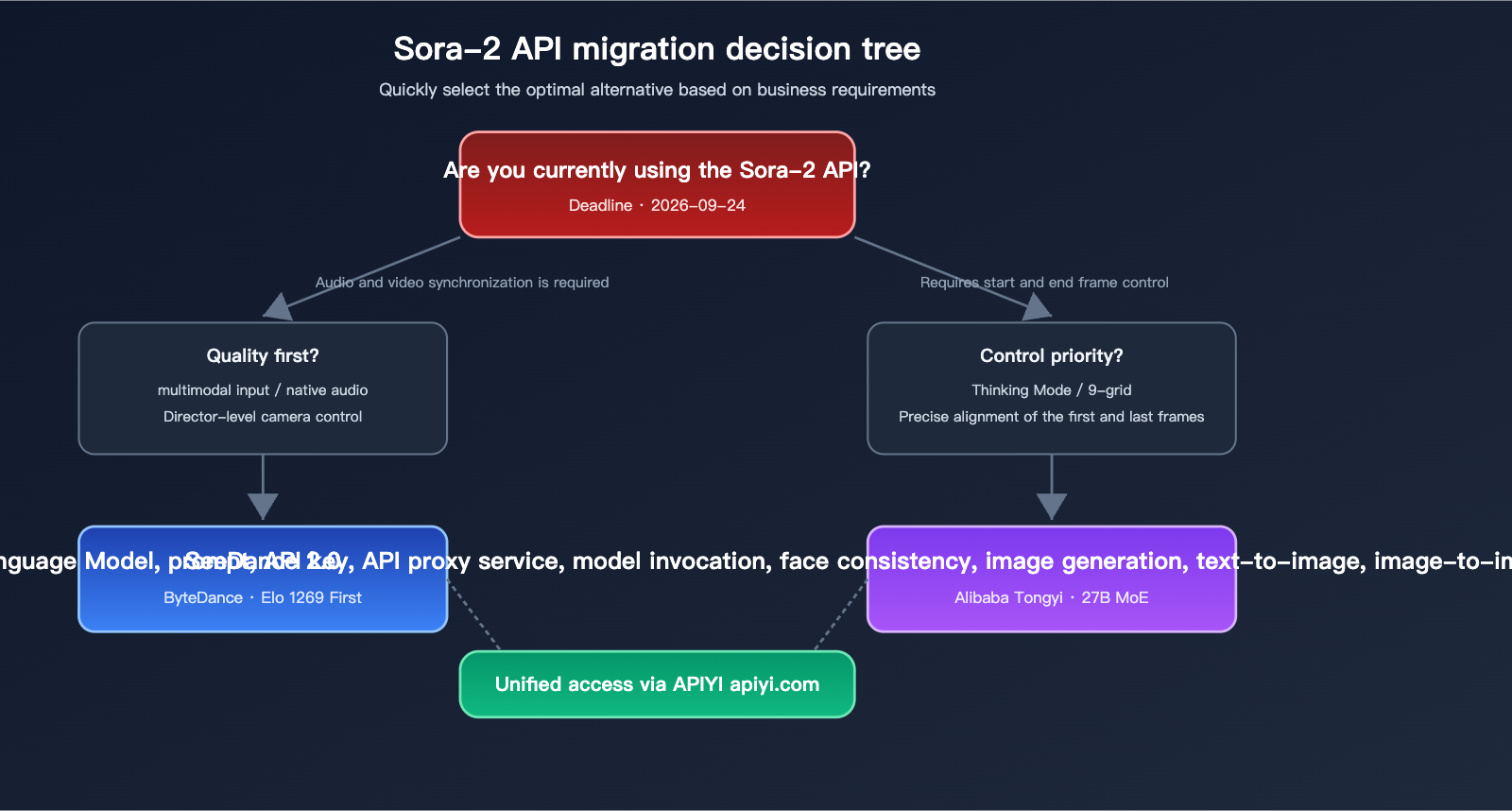

Migration Decision Tree

Migrating from the Sora-2 API can be broken down into four steps:

- Audit Existing Calls: Identify all code paths calling sora-2 or the Videos API; document your prompt templates, input parameters, and output handling logic.

- Select Target Model: Determine your primary model based on the selection guide above.

- Replace Base URL and Model Name: Swap the OpenAI base URL with your API proxy service URL (e.g., https://api.apiyi.com/v1) and update the model name to seedance-2.0 or wan-2.7.

- Regression Testing: Run a set of representative prompts to verify the full pipeline, comparing output quality and latency.
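The audit step can be partly automated with a text scan. The sketch below is a rough heuristic rather than a complete static analysis: it looks for quoted Sora-2 model IDs and for any method call on a `.videos` attribute, and both patterns are assumptions about how the calls appear in your code.

```python
import re
from typing import List, Tuple

# Quoted model IDs such as "sora-2", 'sora-2-pro', "sora-2-2025-10-06".
SORA_PATTERN = re.compile(r"""["'](sora-2(?:-pro)?(?:-\d{4}-\d{2}-\d{2})?)["']""")
# Any method call on a `.videos` attribute, e.g. client.videos.create(
VIDEOS_CALL_PATTERN = re.compile(r"\.videos\.\w+\(")


def find_sora_references(source: str) -> List[Tuple[int, str]]:
    """Return (line_number, what_was_found) pairs for one file's contents."""
    hits = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for match in SORA_PATTERN.finditer(line):
            hits.append((lineno, match.group(1)))
        if VIDEOS_CALL_PATTERN.search(line):
            hits.append((lineno, "videos-api-call"))
    return hits
```

To scan a repository, read each source file and pass its text to `find_sora_references`; model IDs loaded from configuration or environment variables will not be caught and need a separate check.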

Unified Access via API Proxy Service

    from openai import OpenAI

    # Initialize with APIYI proxy
    client = OpenAI(
        api_key="your-api-key",
        base_url="https://api.apiyi.com/v1",
    )

    def generate_video(prompt: str, prefer: str = "seedance"):
        """Generate a 10-second clip with audio on the preferred model."""
        # Toggle between models easily
        model = "seedance-2.0" if prefer == "seedance" else "wan-2.7"
        return client.videos.generate(
            model=model,
            prompt=prompt,
            duration=10,
            audio=True,
        )

    video_a = generate_video("Product ad: smart speaker playing music", prefer="seedance")
    video_b = generate_video("Brand intro: logo emerging from light flares", prefer="wan")

The benefit of this approach is that one SDK, one API key, and one base URL give you access to both alternatives, and switching between them is a one-field change to model. Moving off model="sora-2" itself still means pointing the client at the new base URL and re-checking model-specific parameters such as duration, aspect ratio, and audio.

Migration Checklist

| Phase | Task | Est. Time |
|---|---|---|
| Audit | List all Sora-2 call entry points | 0.5 days |
| Audit | Prepare 50-100 representative prompts | 1 day |
| Setup | Register for API proxy service | 0.5 days |
| Setup | Update base_url and model fields | 1 day |
| Test | Run regression test suite | 2-3 days |
| Test | Quality review by product/design teams | 1 week |
| Rollout | Shift 10%-30% traffic | 1 week |
| Rollout | Shift to 100% traffic | - |
| Cleanup | Remove legacy Sora-2 code | 0.5 days |

Following this timeline, the migration takes 4-6 weeks. Starting by early June leaves roughly a 2-month buffer before the September 24 deadline.
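For the rollout phases in the checklist, a deterministic hash bucket is one common way to send a fixed share of traffic to the new model. This is a sketch, not a feature of any particular platform: the function names are illustrative, and `request_id` can be any stable key such as a user or job ID.

```python
import hashlib


def bucket(request_id: str) -> int:
    """Map an ID to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def choose_model(
    request_id: str,
    rollout_percent: int,
    new_model: str = "seedance-2.0",
    old_model: str = "sora-2",
) -> str:
    """Send roughly rollout_percent of IDs to the new model, the rest to the old."""
    return new_model if bucket(request_id) < rollout_percent else old_model
```

Because the bucket depends only on the ID, a given user stays on the same model as the percentage rises from 10 to 30 to 100, which keeps A/B comparisons clean.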

🎯 Pro-tip: Don't wait until September. Start immediately by cloning your existing Sora-2 calls, pointing them to https://api.apiyi.com/v1, and running parallel experiments to make data-driven decisions.

Cost Control During Migration

Video generation is significantly more expensive than text models. To keep your API bill in check:

- Start Small: Test with 720p/5s clips first, then scale to 1080p/15s once the prompt is optimized.

- Cache Reuse: Implement content hashing for identical prompts/parameters to avoid redundant generation.

- Aggregated Billing: Use an API proxy service to avoid minimum spend thresholds across multiple vendors.

- Batch Processing: Where the provider offers a batch mode, use it for overnight generation; savings of 30%-50% are possible.

- Prompt Engineering: Precise prompts reduce the need for re-generations, which can cut costs by 20%-40%.
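The cache-reuse item can be sketched as a content hash over the model, prompt, and parameters. This is a minimal in-memory version under the assumption that reusing an earlier result for an identical request is acceptable for your use case; `generate` stands in for whatever function actually calls the API, and a production version would persist the cache.

```python
import hashlib
import json
from typing import Callable, Dict


def cache_key(model: str, prompt: str, **params) -> str:
    """Stable hash of a request; parameter order does not matter."""
    payload = json.dumps(
        {"model": model, "prompt": prompt, "params": params},
        sort_keys=True,
        ensure_ascii=False,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class CachedGenerator:
    """Wrap a generate function so identical requests are only billed once."""

    def __init__(self, generate: Callable[..., str]):
        self._generate = generate
        self._cache: Dict[str, str] = {}
        self.misses = 0

    def __call__(self, model: str, prompt: str, **params) -> str:
        key = cache_key(model, prompt, **params)
        if key not in self._cache:
            self.misses += 1
            self._cache[key] = self._generate(model=model, prompt=prompt, **params)
        return self._cache[key]
```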

Common Pitfalls & Solutions

| Pitfall | Cause | Solution |
|---|---|---|
| Aspect Ratio Mismatch | Different default aspect ratios | Explicitly set aspect_ratio |
| Duration Limits | Different billing/duration units | Specify duration explicitly |
| Missing Audio | Different audio interface | Explicitly set audio=True |
| Prompt Drift | Different model preferences | Optimize prompts per model |
| Callback Differences | Polling vs. Webhook | Use the proxy service's unified webhook |
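Several of these pitfalls come from relying on defaults, so one option is a small builder that always emits explicit values. The sketch assumes your existing Sora-2 calls describe output with a `WIDTHxHEIGHT` size string and a seconds value, and that the target accepts the `duration`, `aspect_ratio`, `resolution`, and `audio` fields used in this article's examples. Check both assumptions against your code and the platform documentation.

```python
from math import gcd
from typing import Dict, Union


def size_to_aspect_ratio(size: str) -> str:
    """Convert a 'WIDTHxHEIGHT' string such as '1280x720' to a ratio like '16:9'."""
    width, height = (int(part) for part in size.lower().split("x"))
    divisor = gcd(width, height)
    return f"{width // divisor}:{height // divisor}"


def build_request(
    model: str,
    prompt: str,
    size: str = "1280x720",
    seconds: Union[int, str] = 8,
    audio: bool = True,
) -> Dict[str, object]:
    """Build a request dict with every pitfall-prone field set explicitly."""
    width, height = (int(part) for part in size.lower().split("x"))
    return {
        "model": model,
        "prompt": prompt,
        "duration": int(seconds),
        "aspect_ratio": size_to_aspect_ratio(size),
        "resolution": f"{min(width, height)}p",
        "audio": audio,
    }
```

Routing every call through one builder also gives you a single place to adjust if a model later changes its defaults.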

FAQ: Common Questions Regarding the Sora-2 API Sunset

Q1: Can I still use videos I've already generated after the Sora-2 API is shut down?

Yes. Video files themselves are unaffected by the API sunset once they are downloaded to local storage or to your own object storage (OSS, for example). However, if you were previously referencing the video_url returned by OpenAI, these links will stop working after the API is retired. Make sure to migrate all your videos to your own object storage before September 24th. If you have a large volume of files, you can use the video proxy capabilities on the APIYI (apiyi.com) platform to download them in bulk; the platform will automatically handle concurrency limits and retry failed requests.

Q2: Will OpenAI release a Sora-3 API in the future?

OpenAI has not provided a timeline for Sora-3. From a strategic perspective, OpenAI is currently prioritizing compute resources for its core GPT line and Agent business, making the likelihood of a Sora-3 video generation API in the near term quite low. Analyses from Wikipedia and the Futurum Group also point to the fact that Sora's commercial performance fell short of expectations, which is the core reason for this shutdown. We recommend not basing your business planning on the hope that "Sora-3 might return"—make sure to complete your migration to SeeDance 2.0 or Wan 2.7.

Q3: Can SeeDance 2.0 and Wan 2.7 be called reliably from mainland China?

Yes. The models are operated by ByteDance and Alibaba, respectively, with service nodes located in mainland China, so network stability is significantly better than for cross-border calls to OpenAI. If you previously had to use proxies or overseas servers for Sora-2, you can simplify your network architecture after migrating. Calling the models through a compliant aggregation platform based in mainland China addresses both stability and invoicing compliance in one go.

Q4: Is reverse-engineering the Sora API still a viable transition strategy?

We don't recommend it. The stability of reverse-engineered APIs has dropped sharply since Q1 2026, and once the official source disappears after September, reverse-engineered interfaces will inevitably stop working entirely. Continuing to invest in reverse-engineered solutions is like adding new code to a tech stack you know is being deprecated; it will only increase your migration costs later. The most economical approach is to migrate directly to SeeDance 2.0 or Wan 2.7.

Q5: How should I evaluate the actual performance of alternative solutions during migration?

We recommend a three-dimensional assessment: visual quality (subjective scoring), prompt adherence (objective comparison), and unit cost (financial calculation). The specific approach is to extract 50 representative prompts from your historical Sora-2 calls, rerun them on SeeDance 2.0 and Wan 2.7, and have your product team conduct a blind test. We suggest using an aggregation platform for these tests to unify billing and logging, which will help you quickly make the best choice for your business.
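Once the blind-test scores are collected, a per-model summary makes the three dimensions easy to compare side by side. The sketch below assumes each rating is a dict with `model`, `quality`, `adherence`, and `cost_usd` keys; the field names and scoring scale are illustrative and should match whatever your reviewers actually record.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, List


def summarize(ratings: List[dict]) -> Dict[str, dict]:
    """Average quality, adherence, and cost per model."""
    grouped = defaultdict(list)
    for row in ratings:
        grouped[row["model"]].append(row)
    return {
        model: {
            "quality": mean(r["quality"] for r in rows),
            "adherence": mean(r["adherence"] for r in rows),
            "cost_usd": mean(r["cost_usd"] for r in rows),
            "samples": len(rows),
        }
        for model, rows in grouped.items()
    }
```

Keeping the sample count in the summary helps spot cases where one model was scored on far fewer prompts than the other.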

Q6: Will the API interface specifications change after migrating to an aggregation platform?

Aggregation platforms usually adopt OpenAI-compatible protocols, which means you can keep your existing code framework for calling Sora-2; you only need to modify the base_url and model fields. Request parameters related to video generation (prompt, duration, audio, resolution, etc.) may vary slightly between models, but the aggregation platform will handle parameter alignment. You can check the platform documentation for specific differences.

Q7: Should I migrate immediately, or wait until September?

We strongly recommend starting tests immediately and completing the migration by the end of June. There are three reasons for this:

- As the September 24th deadline approaches, all Sora-2 users will be crowding the migration window, making resources on aggregation platforms and alternative models much tighter.

- Early migration allows you to fully compare the two alternatives and avoid a last-minute rush.

- Developers who migrate early can usually secure better trial quotas and pricing terms.

Q8: Which is more friendly to Chinese prompts, SeeDance 2.0 or Wan 2.7?

Both are significantly better than Sora-2 at understanding Chinese. SeeDance 2.0 is more nuanced in expressing emotions and describing actions in Chinese, while Wan 2.7 supports 5,000-character long prompts and a "Thinking Mode," making it more stable for complex Chinese narrative scenarios. If your prompts are usually under 200 characters, choose SeeDance 2.0; if you frequently need to describe complex storyboards, choose Wan 2.7.

Q9: How can I ensure no service interruptions during the migration?

The safest approach is dual-writing and dual-reading: during the transition, call both Sora-2 and the target model, save both outputs, and use A/B testing on the frontend to route traffic. This way, if the target model encounters issues, you can immediately roll back to Sora-2 (before September 24th), minimizing risk. Aggregation platforms generally support dynamic routing based on the model field, making the cost of implementing dual-writing/reading very low.
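The rollback half of that strategy can be sketched as a wrapper that tries the target model first and falls back to the legacy call only while it still exists. The callables here are placeholders for your own functions that invoke each API, and the cutoff mirrors the September 24 date stated above.

```python
from datetime import date
from typing import Callable, Optional, Tuple

SORA_SUNSET = date(2026, 9, 24)


def generate_with_rollback(
    prompt: str,
    target: Callable[[str], str],
    legacy: Callable[[str], str],
    today: Optional[date] = None,
) -> Tuple[str, str]:
    """Return (source, result), preferring the target model."""
    today = today or date.today()
    try:
        return "target", target(prompt)
    except Exception:
        if today >= SORA_SUNSET:
            raise  # the legacy path is gone, so surface the original error
        return "legacy", legacy(prompt)
```

Returning the source alongside the result lets you log how often the fallback fires, which is a useful signal for deciding when the target model is ready for 100% of traffic.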

Summary: The Best Path After the Sora-2 API Sunset

OpenAI has locked the Sora-2 API sunset date for September 24, 2026, leaving less than six months for all video generation businesses to transition. The "cheap transition" route of reverse-engineered APIs has lost its value under the dual pressure of tightened risk control and the official shutdown; continuing to invest in it will only pile up more migration costs.

Fortunately, the 2026 video generation landscape has formed a very healthy, multi-polar structure: SeeDance 2.0 has become the quality ceiling with an Artificial Analysis Elo of 1269, excelling in multimodal input and native audio-video joint generation; Wan 2.7 has established a unique competitive advantage with its Thinking Mode and start/end frame control, making it suitable for scenarios requiring precise visual control. Both solutions are significantly superior to the soon-to-be-retired Sora-2 in terms of Chinese support, domestic compliance, and call stability.

Our advice is: Don't put all your eggs in one basket. Use the APIYI (apiyi.com) platform to access both SeeDance 2.0 and Wan 2.7, and route dynamically based on specific business requests—use SeeDance 2.0 for quality-first content and Wan 2.7 for scenes requiring precise control. This dual-model architecture ensures stability during the migration period and allows for a seamless transition of your original Sora-2 business after September.

The most critical point: Start now. Waiting until August or September to start your migration will make model adaptation, prompt tuning, and quota applications much more difficult than they are today. We recommend launching a PoC test this week, cloning your existing Sora-2 calling code for parallel testing, and using real business data to drive your final decision, ensuring you have at least two months of stable operation with your chosen alternative by the time the September 24th deadline arrives.

Author: APIYI Team — Focused on AI Large Language Model API proxy and video generation model aggregation services.