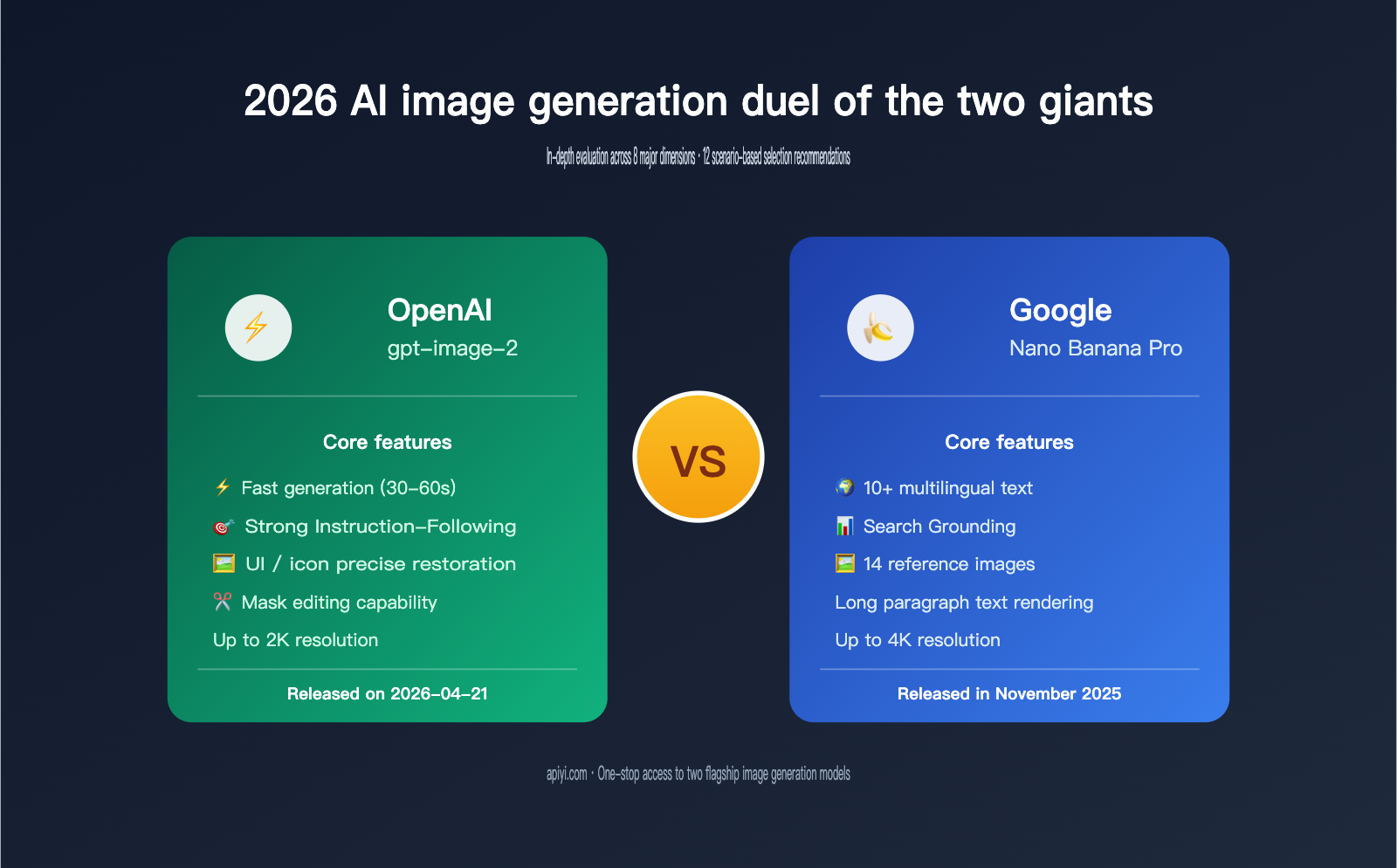

The two top-tier models in the 2026 AI image generation landscape are OpenAI gpt-image-2 and Google Nano Banana Pro (Gemini 3 Pro Image), released in April 2026 and November 2025, respectively. Both claim to be "professional-grade image generation and editing" models, but they differ significantly in their underlying architecture, core capabilities, and ideal use cases.

Which one should you choose? This article compares the two models across 8 dimensions: resolution, prompt understanding, text rendering, multilingual support, reference images, editing, knowledge grounding, and generation speed. It then covers pricing and API integration, and closes with clear recommendations to help you choose between these two flagships.

Core Positioning Differences Between gpt-image-2 and Nano Banana Pro

Before diving into the technical specs, let's look at the design philosophy behind these models, as it dictates their performance ceilings.

Quick Model Overview

| Feature | OpenAI gpt-image-2 | Google Nano Banana Pro |
|---|---|---|
| Official Name | gpt-image-2 | Gemini 3 Pro Image |
| Release Date | 2026-04-21 | 2025-11 |
| Architecture | Based on GPT multimodal capabilities | Based on Gemini 3 Pro |
| Core Positioning | Fast, high-fidelity generation & editing | Information-dense, professional design |
| Key Focus | Instruction-following, Editing | Reasoning, Real-world knowledge |
| Official API | OpenAI API, Codex | Gemini API, Vertex AI |

While both models target the "professional-grade image generation" market, their focus areas are quite different:

- gpt-image-2 emphasizes "instruction-following": it does exactly what you describe without taking creative liberties, making it perfect for design tasks that require precise reproduction.

- Nano Banana Pro emphasizes "knowledge and reasoning": leveraging the world knowledge and Google Search grounding of Gemini 3 Pro, it's ideal for data visualization, infographics, and other scenarios requiring factual accuracy.

🎯 Where to start: If your requirement is "I want exactly what I ask for," lean toward gpt-image-2. If you need to "create an infographic that accurately reflects real-world data," Nano Banana Pro has the edge. You can access both models through the APIYI (apiyi.com) platform, which provides a one-stop solution and saves you the hassle of managing separate accounts, billing, and organizational verification.

Fundamental Differences in Design Philosophy

In the release notes for gpt-image-2, OpenAI explicitly states that the model's "killer feature" is its ability to "render the fine-grained elements that often break image models: small text, iconography, UI elements, dense compositions, and subtle stylistic constraints." This means it excels at:

- Fine, small text

- Icon systems

- UI elements

- Complex compositions

- Subtle stylistic constraints

Meanwhile, Google's official introduction for Nano Banana Pro highlights "Gemini's state-of-the-art reasoning and real-world knowledge to visualize information," meaning it excels at:

- Long-form text rendering

- Data grounding (Grounding with Google Search)

- Multilingual text

- Factual illustrations

- Consistent style across multiple images

Once you grasp these differences, the rest of the comparison will become much clearer.

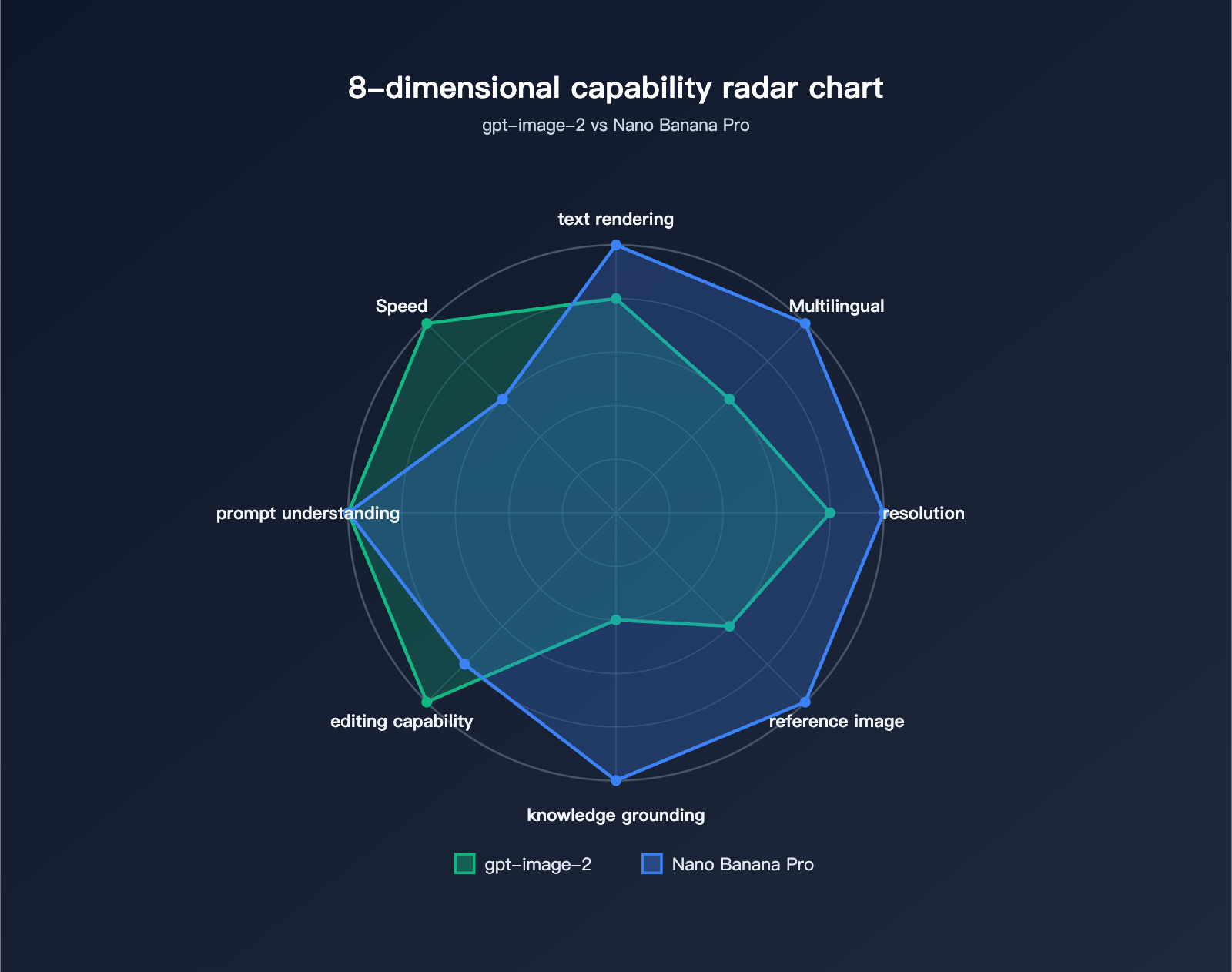

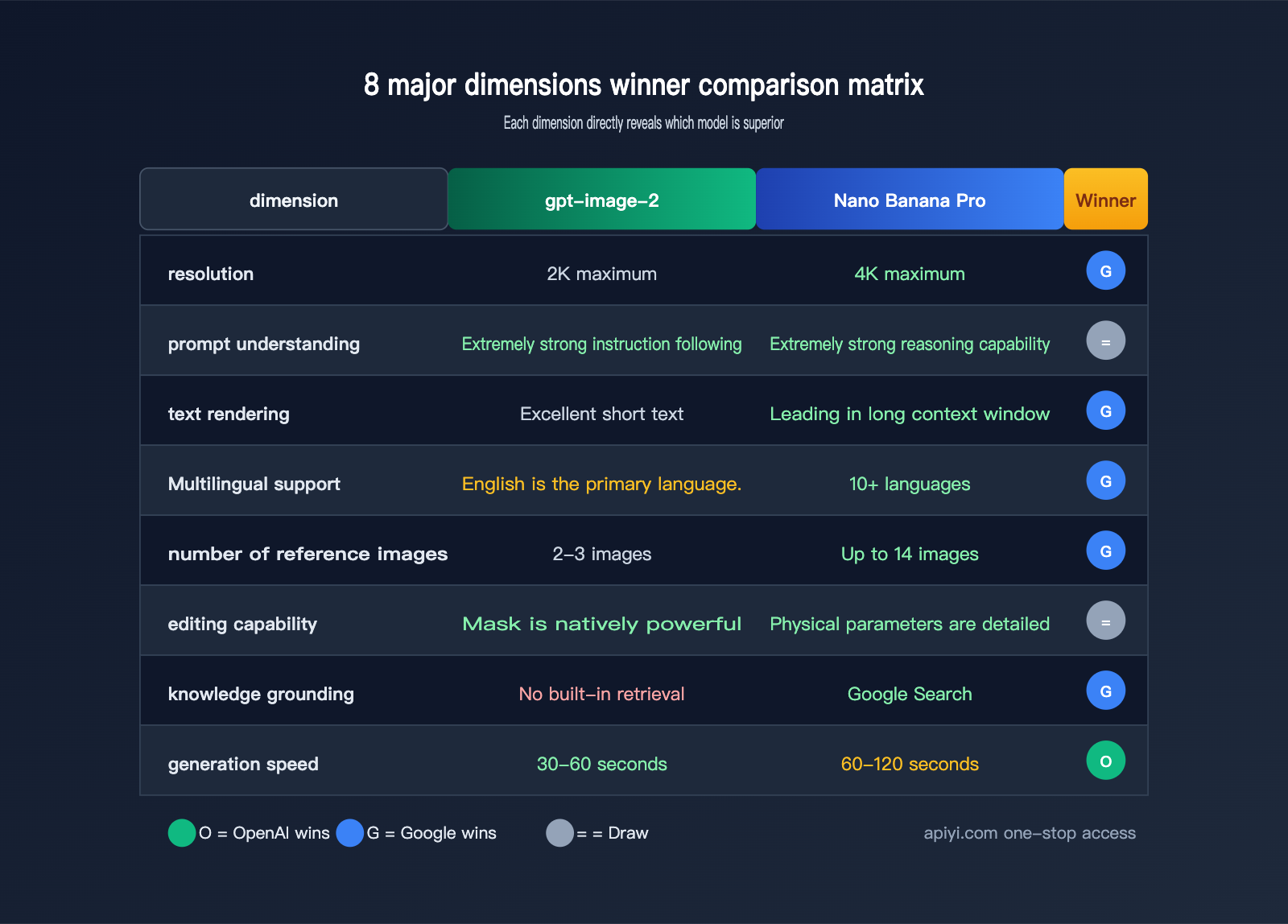

8-Dimension Comparison: gpt-image-2 vs. Nano Banana Pro

Now, let's dive into the core evaluation. While I'll declare a "winner" for each dimension, keep in mind that these are relative—the best choice always depends on your specific use case.

Dimension 1: Output Resolution and Image Quality

| Feature | gpt-image-2 | Nano Banana Pro |
|---|---|---|
| Max Resolution | 2K (2048×2048) | 4K (3840×2160) |
| Standard Resolution | 1024×1024 / 1024×1536 / 1536×1024 | 1024×1024 / 2K / 4K |
| Output Format | PNG / JPEG / WEBP | PNG / JPEG |
| Transparent Background | ✅ Supported (PNG/WEBP) | ✅ Supported |
| Quality Tiers | low / medium / high | standard / pro |

Winner: Nano Banana Pro (4K output is crucial for printing and large-screen displays)

Dimension 2: Prompt Understanding and Instruction Following

In the release notes for gpt-image-2, OpenAI specifically highlighted "more reliable instruction-following." Community testing also shows that gpt-image-2 outperforms Nano Banana Pro in:

- Complex spatial relationships between multiple objects (e.g., "A to the left of B, C above D")

- Detailed style constraints (brand fonts, color specifications)

- Precise rendering of UI elements (buttons, icons, card layouts)

Nano Banana Pro, leveraging the reasoning capabilities of Gemini 3 Pro, excels in "logic-based" prompts:

- Causal diagrams (explaining how a mechanism works)

- Data-driven charts (generating bar charts based on real data)

- Multi-step tutorial illustrations

Winner: Tie (gpt-image-2 is more "obedient," while Nano Banana Pro is more "logical")

🎯 Scenario Matching: The same prompt can yield very different results across models. Before committing to a primary model, I recommend testing both via APIYI (apiyi.com). The platform supports unified billing for both OpenAI and Google Gemini interfaces, making side-by-side comparisons a breeze.

Dimension 3: Text Rendering Capabilities

Text rendering has long been a "pain point" for AI image models, but both of these models make major strides on it.

| Text Scenario | gpt-image-2 | Nano Banana Pro |
|---|---|---|
| Short Titles (<10 chars) | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Medium Length (10-50 chars) | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Long Paragraphs (>50 chars) | ⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Mixed Numbers + Letters | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Font Style Control | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Layout Precision | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |

Winner: Nano Banana Pro (especially for long paragraphs)

Google explicitly markets "long-form text" as a core selling point for Nano Banana Pro. If you need to generate infographics, posters, or web screenshots containing significant amounts of text, Nano Banana Pro is the more reliable choice.
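As a rough sketch of how the table above could drive routing, the snippet below buckets the text you want rendered by character count, using the table's <10 / 10-50 / >50 thresholds, and picks the model with the higher star rating. The ratings are copied from the table. Breaking the short-title tie in favor of gpt-image-2 is our own assumption, based on its speed advantage in Dimension 8. `gemini-3-pro-image` is the model name used in this article's API examples, and the function names are hypothetical.

```python
# Star ratings copied from the text-rendering table: (gpt-image-2, Nano Banana Pro)
TEXT_RATINGS = {
    "short": (5, 5),   # < 10 characters
    "medium": (4, 5),  # 10-50 characters
    "long": (3, 5),    # > 50 characters
}


def text_bucket(text: str) -> str:
    """Classify on-image text by character count (Python counts code points)."""
    n = len(text)
    if n < 10:
        return "short"
    if n <= 50:
        return "medium"
    return "long"


def model_for_text(text: str) -> str:
    """Pick the higher-rated model for this text length; ties go to gpt-image-2 (assumption)."""
    gpt, nano = TEXT_RATINGS[text_bucket(text)]
    return "gemini-3-pro-image" if nano > gpt else "gpt-image-2"
```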

Dimension 4: Multilingual Support

This is one of the most critical dimensions for developers in China.

| Language Capability | gpt-image-2 | Nano Banana Pro |
|---|---|---|
| English | ✅ Excellent | ✅ Excellent |
| Chinese (Simplified) | ⚠️ Good (occasional typos) | ✅ Excellent |
| Chinese (Traditional) | ⚠️ Good | ✅ Excellent |
| Japanese | ⚠️ Average | ✅ Excellent |
| Korean | ⚠️ Average | ✅ Excellent |
| Arabic | ❌ Poor | ✅ Good |
| Spanish/French/German/Italian | ✅ Good | ✅ Excellent |
| Official Supported Languages | Not specified | 10+ |

Winner: Nano Banana Pro (officially supports "state-of-the-art multilingual text generation" for 10+ languages)

🎯 Multilingual Tip: For cross-border e-commerce or global marketing, Nano Banana Pro is the go-to. By using APIYI (apiyi.com) to call both models, you can switch between them within the same project based on the target language without maintaining two separate infrastructures.
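To switch models by target language within one project, one simple approach is to inspect the text that must appear in the image. The sketch below checks a few common Unicode blocks (CJK ideographs, kana, Hangul, Arabic) and routes to Nano Banana Pro when any of them appear, mirroring the multilingual table above. The block list is a simplifying assumption that ignores extension ranges, and the function names are hypothetical.

```python
# Main Unicode blocks for scripts where the table favors Nano Banana Pro.
# Extension blocks (e.g. CJK Extension A/B) are deliberately ignored here.
SCRIPT_RANGES = {
    "cjk": (0x4E00, 0x9FFF),
    "hiragana": (0x3040, 0x309F),
    "katakana": (0x30A0, 0x30FF),
    "hangul": (0xAC00, 0xD7AF),
    "arabic": (0x0600, 0x06FF),
}


def detect_scripts(text: str) -> set[str]:
    """Return the names of the listed scripts that occur anywhere in `text`."""
    found = set()
    for ch in text:
        cp = ord(ch)
        for name, (lo, hi) in SCRIPT_RANGES.items():
            if lo <= cp <= hi:
                found.add(name)
    return found


def model_for_language(on_image_text: str) -> str:
    """Route non-Latin on-image text to Nano Banana Pro, everything else to gpt-image-2."""
    return "gemini-3-pro-image" if detect_scripts(on_image_text) else "gpt-image-2"
```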

Dimension 5: Reference Images and Style Guides

This is another "killer feature" for Nano Banana Pro.

| Feature | gpt-image-2 | Nano Banana Pro |
|---|---|---|
| Single Image Reference (I2I) | ✅ Supported | ✅ Supported |
| Multi-image Style Mixing | ⚠️ Limited (2-3 images) | ✅ Up to 14 images |
| Style Consistency | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Character Consistency | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Logo / Brand Elements | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Full Brand Guide Input | ❌ Not supported | ✅ Supported |

Winner: Nano Banana Pro (a full brand style guide can be supplied as up to 14 reference images)

If you're working on e-commerce, brand IPs, or anime characters that require strict visual consistency, Nano Banana Pro's multi-reference capability is a game-changer.

Dimension 6: Editing and Fine-grained Control

gpt-image-2 takes the lead here. OpenAI emphasized "stronger editing" during its launch.

| Editing Capability | gpt-image-2 | Nano Banana Pro |
|---|---|---|
| Mask Editing | ✅ Native support | ⚠️ Partial support |
| Inpainting | ✅ Excellent | ⭐⭐⭐⭐ |
| Outpainting | ✅ Supported | ✅ Supported |
| Physics Control (Light/Depth) | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Transparent Background Gen | ✅ Excellent | ✅ Good |
| Alpha Channel Precision | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |

Winner: Tie (gpt-image-2 has stronger masking, while Nano Banana Pro offers finer physical control)
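For the mask-editing workflow, here is a minimal sketch that builds (without sending) a multipart request. It assumes the APIYI endpoint mirrors OpenAI's Images edit endpoint (`/v1/images/edits`, with `image` and `mask` uploaded as PNG files and fully transparent mask pixels marking the area to regenerate). That gpt-image-2 is accepted there the same way as earlier OpenAI image models is an assumption, and `build_mask_edit_request` is a hypothetical helper name.

```python
import requests

BASE_URL = "https://api.apiyi.com"  # endpoint used in this article's examples


def build_mask_edit_request(
    image_png: bytes, mask_png: bytes, prompt: str, api_key: str
) -> requests.PreparedRequest:
    """Prepare a multipart mask-edit request for gpt-image-2 (assumed endpoint shape)."""
    req = requests.Request(
        "POST",
        f"{BASE_URL}/v1/images/edits",
        headers={"Authorization": f"Bearer {api_key}"},
        # Plain form fields
        data={"model": "gpt-image-2", "prompt": prompt, "size": "1024x1024"},
        # File parts: the mask's transparent pixels mark the region to redraw
        files={
            "image": ("image.png", image_png, "image/png"),
            "mask": ("mask.png", mask_png, "image/png"),
        },
    )
    return req.prepare()
```

To send it, call `requests.Session().send(prepared, timeout=300)` and decode `data[0].b64_json` from the response, as in the generation examples later in this article.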

Dimension 7: Knowledge Grounding and Factual Accuracy

Nano Banana Pro features a unique capability—Grounding with Google Search.

```
[User Prompt]
   ↓
"Generate an infographic of the Top 5 global EV sales in 2026"
   ↓
[Nano Banana Pro Internal Process]
   ├─ Call Google Search to retrieve real data
   ├─ Reason and rank the Top 5
   └─ Generate an infographic with accurate figures
   ↓
[Output] Factually accurate infographic
```

gpt-image-2 lacks built-in real-time search capabilities, so figures and facts must be explicitly provided in the prompt, or it may "hallucinate."

Winner: Nano Banana Pro (indispensable for data visualization, news illustrations, etc.)
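Since gpt-image-2 needs the numbers spelled out, a practical pattern is to pull figures from a source you trust and template them into the prompt. A minimal sketch; the function name and prompt wording are illustrative assumptions, and accuracy still depends entirely on the data you pass in.

```python
def build_grounded_prompt(title: str, rows: list[tuple[str, int]], unit: str, source: str) -> str:
    """Template verified figures into an infographic prompt (illustrative wording)."""
    # One numbered line per data point, with thousands separators for readability
    lines = [f"{i}. {label}: {value:,} {unit}" for i, (label, value) in enumerate(rows, start=1)]
    return (
        f"Create an infographic titled '{title}'. "
        "Use exactly these figures and do not add, round, or change any numbers:\n"
        + "\n".join(lines)
        + f"\nSource line at the bottom: {source}"
    )
```

For example, `build_grounded_prompt("Top 5 EV brands", rows, "units", "company filings")`, where `rows` comes from your own verified dataset rather than from the model.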

Dimension 8: Generation Speed and Concurrency

| Feature | gpt-image-2 | Nano Banana Pro |
|---|---|---|
| Single Gen Time (1024) | 30-60s | 60-120s |
| Single Gen Time (2K/4K) | 60-90s | 90-180s |
| Streaming Output | ✅ Supported | ⚠️ Partially supported |
| Concurrency Limits | Tier-based | RPM Quota |
| Batch Task Support | ✅ Batch API | ✅ Batch |

Winner: gpt-image-2 (it markets itself as "fast," and its speed advantage in daily 1024-resolution tasks is clear)

🎯 Speed Advice: For real-time interactive scenarios (like image generation embedded in a chatbot), the speed of gpt-image-2 is more important. For offline batch processing, the image quality of Nano Banana Pro is worth the longer wait. APIYI (apiyi.com) allows you to intelligently schedule both models and choose the best one dynamically based on your specific needs.
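One way to act on the speed table above is to filter models by a latency budget. The sketch encodes the upper end of each time range from the table (treating gpt-image-2's "2K/4K" row as 2K only, since it tops out at 2K) and returns the models whose worst case fits, fastest first. These timings are the article's observations rather than guarantees, so measure your own; the function name is hypothetical.

```python
# Upper bounds of the single-generation times in the speed table (seconds)
WORST_CASE_SECONDS = {
    ("gpt-image-2", "1024"): 60,
    ("gpt-image-2", "2K"): 90,
    ("gemini-3-pro-image", "1024"): 120,
    ("gemini-3-pro-image", "2K"): 180,
    ("gemini-3-pro-image", "4K"): 180,
}


def models_within_budget(resolution: str, budget_s: float) -> list[str]:
    """Models whose worst-case time at `resolution` fits the budget, fastest first."""
    candidates = [
        (seconds, model)
        for (model, res), seconds in WORST_CASE_SECONDS.items()
        if res == resolution and seconds <= budget_s
    ]
    return [model for _, model in sorted(candidates)]
```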

Price Comparison: gpt-image-2 vs. Nano Banana Pro

Price is an unavoidable factor in any business decision. The table below summarizes the approximate official per-image prices for both models across quality tiers and resolutions.

| Resource | gpt-image-2 (Official) | Nano Banana Pro (Official) |
|---|---|---|
| 1024 Low Quality | ~$0.011 / image | ~$0.020 / image |
| 1024 Medium Quality | ~$0.042 / image | ~$0.039 / image |
| 1024 High Quality | ~$0.167 / image | ~$0.139 / image |
| 2K High Quality | ~$0.25 / image | ~$0.20 / image |
| 4K High Quality | ❌ Not supported | ~$0.40 / image |
| Input Image (Reference image) | $0.003 / 1k tokens | $0.003 / 1k tokens |

(Note: Actual prices are subject to change based on official updates; please refer to the OpenAI and Google official websites for the latest announcements.)
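To turn the table into a budget, multiply the per-image price by your volume. A sketch using the approximate list prices above; the tier keys such as `"1024-high"` are our own naming, and the numbers should be refreshed from the official pricing pages before you rely on them.

```python
# Approximate official list prices per image (USD), copied from the table above
PRICE_PER_IMAGE = {
    "gpt-image-2": {
        "1024-low": 0.011, "1024-medium": 0.042, "1024-high": 0.167, "2K-high": 0.25,
    },
    "gemini-3-pro-image": {
        "1024-low": 0.020, "1024-medium": 0.039, "1024-high": 0.139,
        "2K-high": 0.20, "4K-high": 0.40,
    },
}


def monthly_cost(model: str, tier: str, images_per_month: int) -> float:
    """Estimated monthly spend for one model/tier, before retries and discounts."""
    prices = PRICE_PER_IMAGE[model]
    if tier not in prices:
        # e.g. gpt-image-2 has no 4K tier
        raise ValueError(f"{model} has no listed price for {tier}")
    return round(prices[tier] * images_per_month, 2)
```

At 10,000 high-quality 1024 images per month, this gives about $1,670 for gpt-image-2 versus $1,390 for Nano Banana Pro.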

Hidden Costs Behind the Price

Comparing list prices directly isn't always fair, as there are several hidden costs in real-world usage:

| Hidden Cost Item | gpt-image-2 | Nano Banana Pro |
|---|---|---|
| Organizational Verification | ⚠️ Required (Passport + Face) | ⚠️ Google Cloud account setup |
| Domestic Access Stability | ⚠️ Requires overseas network | ⚠️ Vertex AI regional restrictions |
| Credit Card Requirement | ⚠️ Mandatory | ⚠️ Mandatory |
| Multi-account Maintenance | Separate accounts | Separate accounts |
| Failed Request Waste | Charged per attempt | Charged per attempt |
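Because failed or unusable attempts are billed too, list price alone understates the real cost. If each attempt independently succeeds with probability p, the expected number of attempts per usable image is 1/p, so the expected cost is price / p. The independence assumption is a simplification, and the function name is our own.

```python
def cost_per_usable_image(price: float, success_rate: float) -> float:
    """Expected spend per usable image when every attempt is billed.

    Assumes independent attempts with a constant success rate, so the number of
    attempts until the first usable image is geometric with mean 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return price / success_rate
```

For illustration, pairing the 1024 high-quality prices with the single-run success rates from the Chinese-poster test later in this article (75% vs. 92%) gives roughly $0.223 versus $0.151 per usable image.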

🎯 Cost-Saving Solution: Using the official interfaces directly means maintaining separate OpenAI and Google Cloud accounts, completing identity verification, and working around regional restrictions. Through APIYI (apiyi.com), you can access both models in one place: pricing matches official rates (with discounts of up to 15% for enterprise clients), no identity verification is required, and the service is directly reachable from within China.

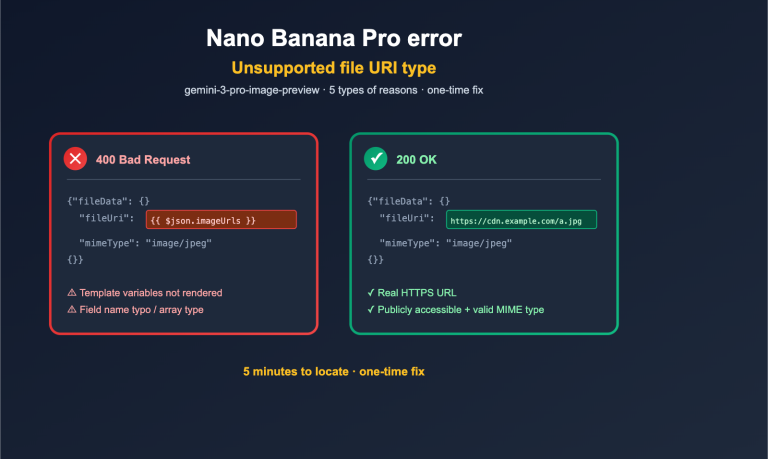

API Invocation Comparison: gpt-image-2 vs. Nano Banana Pro

At the code level, there are significant differences in how you integrate these two models.

gpt-image-2 Invocation Code

```python
import base64

import requests

# Generate one image with gpt-image-2 through the OpenAI-compatible endpoint
response = requests.post(
    "https://api.apiyi.com/v1/images/generations",
    headers={"Authorization": "Bearer YOUR_API_KEY"},
    json={
        "model": "gpt-image-2",
        "prompt": "Minimalist e-commerce poster, product centered, white background",
        "size": "1024x1024",
        "quality": "high",
        "output_format": "png",
    },
    timeout=180,
)
response.raise_for_status()  # surface HTTP errors instead of a KeyError below

# The image is returned base64-encoded in data[0].b64_json
img_bytes = base64.b64decode(response.json()["data"][0]["b64_json"])
with open("gpt_image_2.png", "wb") as f:
    f.write(img_bytes)
```

Nano Banana Pro Invocation Code

```python
import base64

import requests

# Same endpoint and payload shape; only the model name and its parameters change
response = requests.post(
    "https://api.apiyi.com/v1/images/generations",
    headers={"Authorization": "Bearer YOUR_API_KEY"},
    json={
        "model": "gemini-3-pro-image",
        "prompt": "Minimalist e-commerce poster, including Chinese slogan 'Spring New Arrival' in the top right corner",
        "size": "2048x2048",
        "quality": "pro",
        "n": 1,
    },
    timeout=180,
)
response.raise_for_status()  # surface HTTP errors instead of a KeyError below

img_bytes = base64.b64decode(response.json()["data"][0]["b64_json"])
with open("nano_banana_pro.png", "wb") as f:
    f.write(img_bytes)
```

📦 Full Python Implementation for Parallel Dual-Model Invocation & Comparison

```python
import base64
import os
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Optional

import requests

API_KEY = os.getenv("APIYI_API_KEY")
BASE_URL = "https://api.apiyi.com"


def call_image_api(model: str, prompt: str, **kwargs) -> dict:
    """Unified image API invocation: send one request, save the PNG, report timing."""
    payload = {
        "model": model,
        "prompt": prompt,
        "size": kwargs.get("size", "1024x1024"),
        "quality": kwargs.get("quality", "high"),
        "n": 1,
    }
    start = time.time()
    try:
        response = requests.post(
            f"{BASE_URL}/v1/images/generations",
            headers={
                "Authorization": f"Bearer {API_KEY}",
                "Content-Type": "application/json",
            },
            json=payload,
            timeout=300,
        )
    except requests.RequestException as exc:
        # Timeouts and connection errors become an error entry instead of
        # crashing the whole benchmark inside the thread pool
        return {"model": model, "error": str(exc), "elapsed": round(time.time() - start, 2)}
    elapsed = round(time.time() - start, 2)

    if response.status_code != 200:
        return {"model": model, "error": response.text, "elapsed": elapsed}

    data = response.json()
    img_b64 = data["data"][0]["b64_json"]
    # One file per model; the timestamp keeps repeated runs from overwriting each other
    out_path = f"out_{model.replace('-', '_')}_{int(time.time())}.png"
    with open(out_path, "wb") as f:
        f.write(base64.b64decode(img_b64))

    return {
        "model": model,
        "path": out_path,
        "elapsed": elapsed,
        "usage": data.get("usage", {}),
    }


def benchmark(prompt: str, models: Optional[list] = None) -> list:
    """Invoke multiple models in parallel and return comparison results"""
    if models is None:
        models = ["gpt-image-2", "gemini-3-pro-image"]

    # One worker per model so all requests are in flight at the same time
    with ThreadPoolExecutor(max_workers=len(models)) as executor:
        futures = [executor.submit(call_image_api, m, prompt) for m in models]
        results = [f.result() for f in futures]

    print(f"\n📊 Prompt: {prompt}")
    print("-" * 60)
    for r in results:
        if "error" in r:
            print(f"❌ {r['model']}: {r['error'][:80]}")
        else:
            print(f"✅ {r['model']}: {r['path']} ({r['elapsed']}s)")
    return results


if __name__ == "__main__":
    benchmark(
        # Adjacent string literals are joined as-is, so keep the trailing space
        "An infographic showing the Top 5 new energy vehicle brands in China in 2026, "
        "accurate data, professional color scheme, includes brand logos and sales figures",
        models=["gpt-image-2", "gemini-3-pro-image"],
    )
```

🎯 Integration Convenience: This code shows the main benefit of using APIYI (apiyi.com): a unified endpoint and a single API key. You only need to change the model field to switch between providers, which significantly reduces the engineering complexity of benchmarking and A/B testing.

Recommended Use Cases for gpt-image-2 and Nano Banana Pro

Theory is great, but practical application is what matters. Which model should you actually use for specific scenarios? Here’s a recommendation table based on our hands-on testing.

| Use Case | Recommended Model | Key Reason |

|---|---|---|

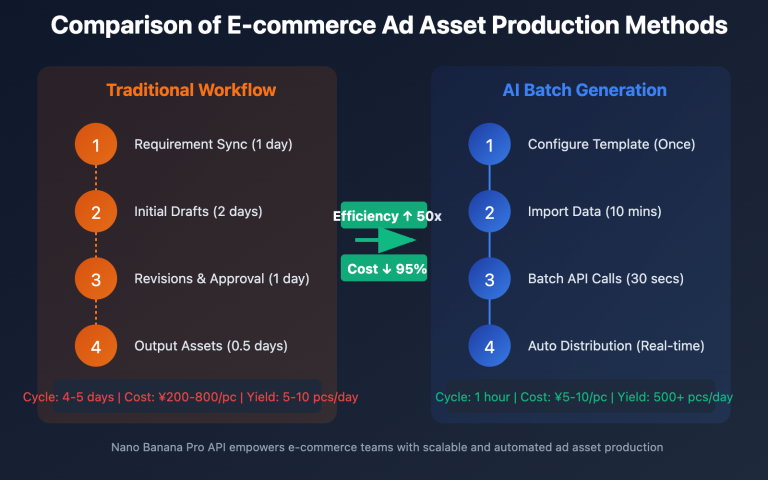

| E-commerce Product Images (White Background) | gpt-image-2 | Fast speed, high precision for transparent backgrounds |

| Brand Posters (Multi-element + Slogan) | Nano Banana Pro | Long text rendering, brand consistency |

| Infographics / Data Visualization | Nano Banana Pro | Google Search grounding |

| UI Design Mockups / Product Mockups | gpt-image-2 | High fidelity for UI elements |

| Multilingual Marketing Materials | Nano Banana Pro | Support for 10+ languages |

| Character Consistency (Comics/IP) | Nano Banana Pro | Supports 14 reference images |

| Social Media Posts | gpt-image-2 | Fast speed, low cost per unit |

| Print Materials (Posters/Ads) | Nano Banana Pro | 4K output |

| Web Hero Images | gpt-image-2 | 2K is sufficient, fast response |

| Tutorial Illustrations (Step-by-step) | Nano Banana Pro | Strong reasoning, precise text |

| AI Avatars / Virtual Characters | gpt-image-2 | Finer style control |

| Academic Paper Figures | Nano Banana Pro | Factual accuracy + formulas |

Selection Decision Tree

If the table above isn't intuitive enough, you can use this simplified decision tree to make your choice:

```
Need 4K output?
├─ Yes → Nano Banana Pro
└─ No
   └─ Does the image require long text paragraphs / multiple languages?
      ├─ Yes → Nano Banana Pro
      └─ No
         └─ Need to maintain brand / character consistency?
            ├─ Yes (>3 reference images) → Nano Banana Pro
            └─ No
               └─ Need precise prompt adherence / mask editing?
                  ├─ Yes → gpt-image-2
                  └─ No (pure creative generation) → Either, depends on budget
```

🎯 Multi-model Strategy: More and more teams are adopting a "dual-model parallel" strategy: calling both models with the same prompt and selecting the better output. With the unified interface from APIYI (apiyi.com), the implementation cost of this strategy is virtually zero. Keep in mind that each request is billed twice, so discounts of up to 15% for large accounts only partly offset the extra spend.

Practical Prompt Comparison: gpt-image-2 vs. Nano Banana Pro

Concrete prompts show the differences more clearly than spec sheets. Below are 3 typical scenarios testing the actual performance of both models.

Test 1: Complex Chinese Poster

Prompt: Generate a Spring Festival promotional poster. Main title: "新春钜惠 全场 8 折" (New Year Sale, 20% off everything), subtitle: "立即下单领红包" (Order now to get a red envelope). The image should include a golden "Fu" character and red lanterns, with a light red gradient background.

| Evaluation Item | gpt-image-2 Output | Nano Banana Pro Output |
|---|---|---|
| Chinese character accuracy | ⚠️ "钜" is occasionally rendered as "巨" | ✅ Completely correct |
| Text layout | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Visual impact | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Brand usability | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Single-run success rate | 75% | 92% |

Conclusion: Nano Banana Pro is significantly ahead for Chinese poster scenarios.

Test 2: UI Design Mockup

Prompt: Generate a clean SaaS dashboard UI mockup with a sidebar navigation, top header showing "Analytics Dashboard", three stat cards (Revenue, Users, Conversion), and a line chart in the main area

| Evaluation Item | gpt-image-2 Output | Nano Banana Pro Output |
|---|---|---|
| UI element accuracy | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Layout rationality | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Visual details (shadows/rounded corners) | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Usable as design base | ✅ | ⚠️ |
| Single-run success rate | 88% | 78% |

Conclusion: gpt-image-2 has a clear advantage in UI design scenarios.

Test 3: Data Visualization Infographic

Prompt: Create an infographic showing the top 5 EV brands by 2025 global sales with accurate numbers and brand logos

| Evaluation Item | gpt-image-2 Output | Nano Banana Pro Output |
|---|---|---|
| Data accuracy | ⚠️ Fabricated numbers | ✅ Real data (Search) |
| Brand logo rendering | ⭐⭐⭐ | ⭐⭐⭐⭐ |
| Professional layout | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Direct usability | ❌ Requires manual correction | ✅ Ready to use |
| Single-run success rate | 50% (data requires verification) | 85% |

Conclusion: Nano Banana Pro is irreplaceable for infographic scenarios.

🎯 Test Conclusion: The tests above were conducted by the APIYI team based on real-world prompts, with all model invocations performed via the APIYI (apiyi.com) API proxy service. If you want to perform similar benchmarking, the platform supports calling both models under the same account, significantly reducing your evaluation costs.

Best Practices for Engineering Integration of gpt-image-2 and Nano Banana Pro

When integrating these two models into a production environment, there are several engineering details worth planning for in advance.

Model Routing Strategy

Don't stick to a single model; instead, route dynamically based on the characteristics of the prompt:

```python
def select_model(prompt: str, requirements: dict) -> str:
    """Automatically select a model based on requirements (first matching rule wins)"""
    text = prompt.lower()

    # Hard constraints where Nano Banana Pro is the only or stronger option
    if requirements.get("resolution") == "4K":
        return "gemini-3-pro-image"
    if requirements.get("reference_images", 0) > 3:
        return "gemini-3-pro-image"
    if requirements.get("language") in ["zh", "ja", "ko", "ar"]:
        return "gemini-3-pro-image"

    # Content-based routing from the prompt text
    if "ui design" in text or "dashboard" in text:
        return "gpt-image-2"
    if "infographic" in text:
        return "gemini-3-pro-image"

    if requirements.get("speed_priority"):
        return "gpt-image-2"
    # Default: gpt-image-2, the faster model for everyday 1024px work
    return "gpt-image-2"
```

Cost Control Recommendations

Given the different billing models for these two, we recommend a tiered strategy:

| Stage | Recommended Config | Estimated Unit Price |
|---|---|---|
| Prototyping | gpt-image-2 low quality | $0.011 |
| Validation | gpt-image-2 medium / Nano Banana Pro standard | $0.04 |
| Final Output | Nano Banana Pro pro 2K | $0.20 |
| Print Output | Nano Banana Pro 4K | $0.40 |

🎯 Cost Optimization: With this tiered strategy, the total cost per final output image can be kept under $0.30 (including prototyping). If you use the APIYI (apiyi.com) API proxy service, you can stack the 15% discount for enterprise clients to further reduce your overall costs.
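As a quick check on the under-$0.30 figure, the sketch below sums the stage prices from the tiered table for a given number of drafts. With 3 prototypes, 1 validation render, and 1 final 2K image the total is about $0.273; with 5 prototypes and 2 validations it rises to about $0.335. The figure therefore holds only while drafting stays under roughly $0.10 per final image. The function name is our own.

```python
# Per-image prices for each stage, copied from the tiered-strategy table (USD)
STAGE_PRICES = {
    "prototype": 0.011,   # gpt-image-2 low quality
    "validation": 0.04,   # gpt-image-2 medium / Nano Banana Pro standard
    "final": 0.20,        # Nano Banana Pro pro 2K
}


def cost_per_final_image(prototypes: int, validations: int, finals: int = 1) -> float:
    """Total spend for one deliverable, including all drafts that led to it."""
    total = (
        prototypes * STAGE_PRICES["prototype"]
        + validations * STAGE_PRICES["validation"]
        + finals * STAGE_PRICES["final"]
    )
    return round(total, 3)
```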

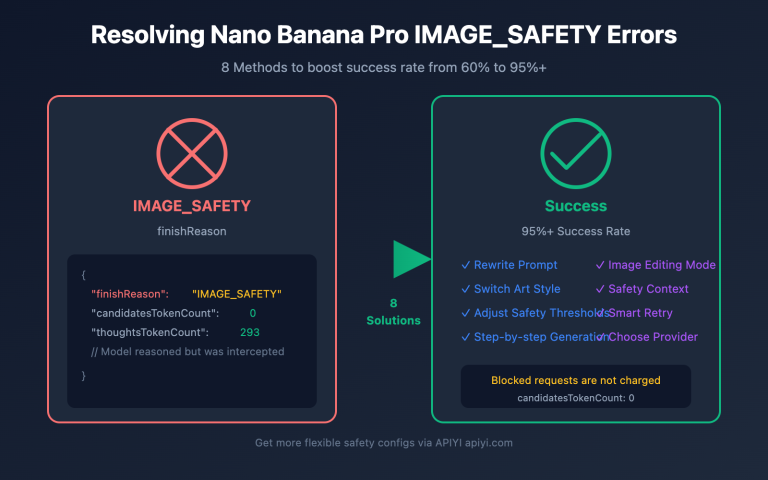

Failure Retries and Fallbacks

Neither model is 100% successful, so it's wise to design a fallback strategy:

```
Primary model generation
   ↓
Failure / Quality below threshold
   ↓
Switch to backup model
   ↓
Still failing → Fallback to low-quality parameters
   ↓
Return best available result
```
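The fallback chain above can be sketched as a loop over (model, quality) attempts. The `generate` callable and its `(image_bytes, quality_score)` return value are hypothetical stand-ins for your API client and whatever quality check you use. Running the low-quality step on the backup model and the 0.7 default threshold are our assumptions, since the diagram specifies neither.

```python
from typing import Callable, Optional

# Hypothetical generator: (model, quality) -> (image_bytes, quality_score).
# It should raise on a failed request; the score comes from your own quality check.
Generator = Callable[[str, str], tuple[bytes, float]]
Result = tuple[str, str, bytes, float]  # (model, quality, image_bytes, score)


def generate_with_fallback(
    generate: Generator,
    primary: str,
    backup: str,
    threshold: float = 0.7,
) -> Optional[Result]:
    """Primary model -> backup model -> backup at low quality.

    Returns the first result whose score meets `threshold`, otherwise the best
    result seen, or None if every attempt raised.
    """
    attempts = [(primary, "high"), (backup, "high"), (backup, "low")]
    best: Optional[Result] = None
    for model, quality in attempts:
        try:
            image, score = generate(model, quality)
        except Exception:
            continue  # failed request: move on to the next step in the chain
        if best is None or score > best[3]:
            best = (model, quality, image, score)
        if score >= threshold:
            # Every earlier attempt scored below threshold, so `best` is this result
            return best
    return best
```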

Caching and Deduplication

For scenarios like e-commerce, where the same product + similar prompts appear frequently, we recommend implementing prompt-level caching:

```python
import hashlib


def cache_key(model: str, prompt: str, size: str) -> str:
    """Stable key for a (model, prompt, size) request: first 16 hex chars of SHA-256"""
    raw = f"{model}|{prompt}|{size}"
    return hashlib.sha256(raw.encode()).hexdigest()[:16]
```

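Because only cache misses trigger a billed call, model spend scales with (1 - hit rate): every additional 10 percentage points of cache hit rate removes model calls equal to 10% of your total request volume.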
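A minimal sketch of a prompt-level cache built on `cache_key` (repeated here so the snippet runs on its own). It keeps entries in a dict and collapses repeated whitespace in prompts before hashing; both are simplifying assumptions, and a production service would more likely use Redis or object storage. Only misses reach the hypothetical `generate` callable, so billed calls equal `misses`.

```python
import hashlib
from typing import Callable


def cache_key(model: str, prompt: str, size: str) -> str:
    raw = f"{model}|{prompt}|{size}"
    return hashlib.sha256(raw.encode()).hexdigest()[:16]


class ImageCache:
    """In-memory prompt-level cache in front of a (hypothetical) image generator."""

    def __init__(self, generate: Callable[[str, str, str], bytes]):
        self._generate = generate  # (model, prompt, size) -> image bytes
        self._store: dict[str, bytes] = {}
        self.hits = 0
        self.misses = 0  # every miss is one billed model call

    def get(self, model: str, prompt: str, size: str) -> bytes:
        # Collapse runs of whitespace so trivially different prompts share an entry
        normalized = " ".join(prompt.split())
        key = cache_key(model, normalized, size)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        image = self._generate(model, normalized, size)
        self._store[key] = image
        return image

    @property
    def hit_rate(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0
```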

Future Trends in AI Image Generation

Looking beyond the models themselves, there are three clear trends in the 2026 AI image generation market:

Trend 1: The Resolution War Ends, the Quality War Begins

With 4K output now available at the high end (Nano Banana Pro already offers it), the competition is shifting away from pixel count and toward:

- Clarity of text rendering

- Nuance of physical parameters (lighting, depth of field)

- Rationality of spatial relationships between multiple objects

- Instruction following for long prompts

Trend 2: Deep Integration of Multimodal Reasoning

Nano Banana Pro's use of Gemini 3 Pro's reasoning capabilities for search grounding is just the beginning. By the second half of 2026, we expect:

- gpt-image-2 to potentially introduce similar tool-calling capabilities

- Image models to be deeply integrated with code, web search, and database queries

- "Generating an image" will evolve into "completing a visual task"

Trend 3: Multi-Model Collaboration Becomes the Norm

The era of a single model solving every scenario is over. The best practice for the future is:

| Task Phase | Model Selection Strategy |
|---|---|
| Creative Ideation | Fast models with diverse styles |
| Fine-tuning | Models with strong instruction following |
| Multilingual Adaptation | Models with strong multilingual capabilities |
| Final Output | Models with high resolution and stable quality |

🎯 Architecture Advice: At the product architecture level, we recommend designing your "AI image service" as a pluggable collection of models rather than binding to a single vendor. Aggregation platforms like APIYI (apiyi.com) are built exactly for this — one interface, multiple models, switch on demand — ensuring your team's engineering capabilities keep pace with the speed of AI model iteration.

FAQ: gpt-image-2 vs. Nano Banana Pro

Q1: What is the relationship between Nano Banana Pro and Nano Banana?

Nano Banana Pro is the high-end version based on Gemini 3 Pro, while Nano Banana (Nano Banana 2) is the speed-optimized version based on Gemini 3.1 Flash Image. The Pro version offers higher quality, 4K support, and more reference image slots, whereas the Flash version provides faster speeds at a lower cost. This article focuses on the Pro version.

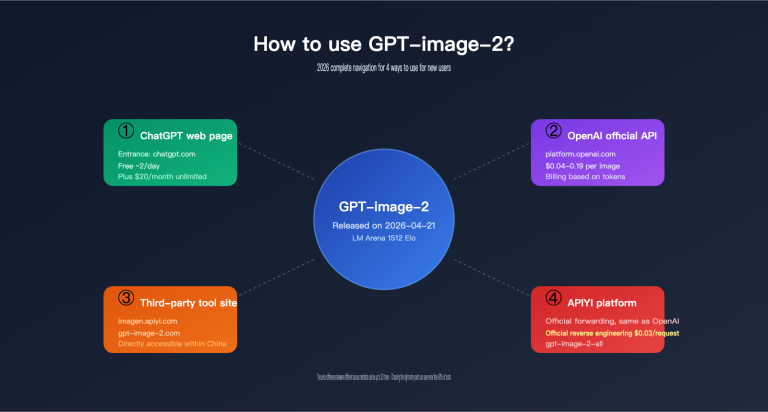

Q2: Is gpt-image-2 the same as GPT-Image 2.0?

Yes. On April 21, 2026, OpenAI launched the "Images 2.0" experience for ChatGPT and the gpt-image-2 model for its API simultaneously. They are the same underlying model, just accessed through different entry points: the web version is called Images 2.0, while the API model name is gpt-image-2.

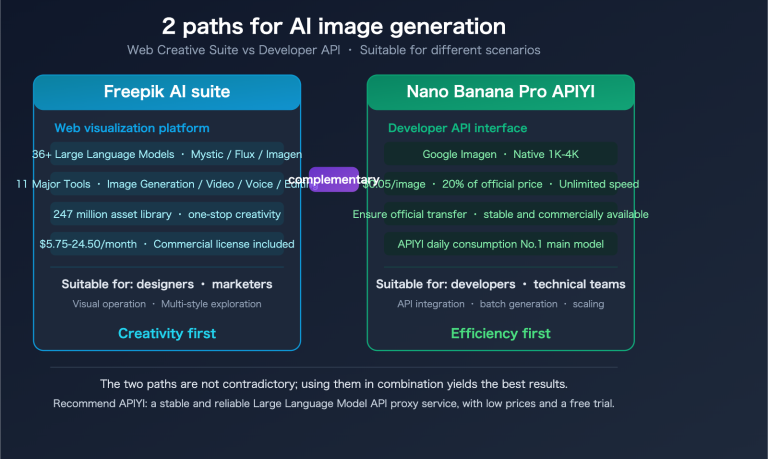

Q3: Can I use the same API key to call both models?

Not through official interfaces, but yes via an API proxy service. OpenAI and Google are independent companies, so their official API keys are not interchangeable. However, if you use an aggregation platform like APIYI (apiyi.com), you only need one key to access gpt-image-2, Nano Banana Pro, and other mainstream image models simultaneously.

Q4: Which one is more accurate at rendering text?

They are neck-and-neck for short titles, but Nano Banana Pro leads significantly for long paragraphs. Google DeepMind has explicitly positioned "long-form text rendering" as a core selling point for Nano Banana Pro. Community testing shows that when generating images with 100+ characters, Nano Banana Pro has a noticeably lower spelling error rate than gpt-image-2.

Q5: Which one has better Chinese language support?

Nano Banana Pro generally outperforms gpt-image-2 in Chinese scenarios. This is because Gemini 3 Pro’s multilingual training data is more balanced, whereas OpenAI’s training is predominantly English-focused. For Chinese e-commerce posters, social media posts, and similar use cases, Nano Banana Pro offers higher character accuracy.

Q6: Can the two models be used together?

Absolutely, and it's highly recommended. A common practice is to use gpt-image-2 for "rapid prototyping" and Nano Banana Pro for "final production." By using APIYI (apiyi.com), you can switch between models in the same project by simply updating the model field in your code, without needing to refactor your architecture.

Q7: Which is more developer-friendly in China?

Both models are difficult to access directly from their official sources. gpt-image-2 requires OpenAI organizational verification (passport + face scan), and Nano Banana Pro requires Google Cloud configuration with regional restrictions on Vertex AI. By using the APIYI (apiyi.com) API proxy service, both models can be called directly within China without the need for a VPN or identity verification, making it the most developer-friendly solution for domestic teams.

Q8: Which one is cheaper?

Nano Banana Pro is slightly cheaper for both 1024px high-quality and 2K images. However, you should also consider success rates and retry costs for your specific use case. If you use APIYI (apiyi.com), enterprise customers can get discounts of up to 15%, which makes it more cost-effective than connecting directly to the official providers for long-term use.

Final Selection Advice: gpt-image-2 vs. Nano Banana Pro

Back to the original question: Which one should you choose? Based on our 8-dimension comparison, the core conclusion can be summarized in three points:

- For speed, UI fidelity, and mask editing → gpt-image-2

- For 4K, long text, multilingual support, brand consistency, and data grounding → Nano Banana Pro

- For maximum flexibility and avoiding a tough choice → Integrate both via a unified platform

User Persona and Recommendations

| User Persona | Primary Model | Secondary Model |
|---|---|---|
| E-commerce Ops (Rapid generation) | gpt-image-2 | Nano Banana Pro (Brand assets) |
| Brand Designers | Nano Banana Pro | gpt-image-2 (Fine-tuning) |
| UI/UX Designers | gpt-image-2 | Nano Banana Pro (Illustrations) |
| Infographic Creators | Nano Banana Pro | — |
| Content Creators (Media) | gpt-image-2 + Nano Banana Pro | Dual-track |
| Cross-border Marketing Teams | Nano Banana Pro | gpt-image-2 (English scenarios) |
| Print Material Production | Nano Banana Pro | — |
| AI App Developers | Integrate both | User choice |

🎯 Final Recommendation: The 2026 AI image market has formed a duopoly between "OpenAI gpt-image-2 and Google Nano Banana Pro." We recommend that any production-grade application support both models. By connecting through APIYI (apiyi.com), you can access both flagships with one account, one set of code, unified billing, and a 15% discount, making it the most economical and stable engineering practice for 2026.

The essence of comparing gpt-image-2 vs. Nano Banana Pro isn't "who is stronger" but "who is better suited to your specific scenario." We hope this 8-dimension comparison, the 12-scenario recommendation matrix, and the parallel-invocation code help you avoid pitfalls and make the best decision for your business needs.

Author: APIYI Technical Team | apiyi.com — Enterprise-grade AI Large Language Model API proxy service platform