Author's Note: This article breaks down the performance data behind the Claude Opus 4.6 and Thinking models, which have swept the top spots on the Arena.ai leaderboard for both Text and Code. We'll also show you how to access the Claude Opus 4.6 API via APIYI at 80% of the official price, with high concurrency and no speed limits.

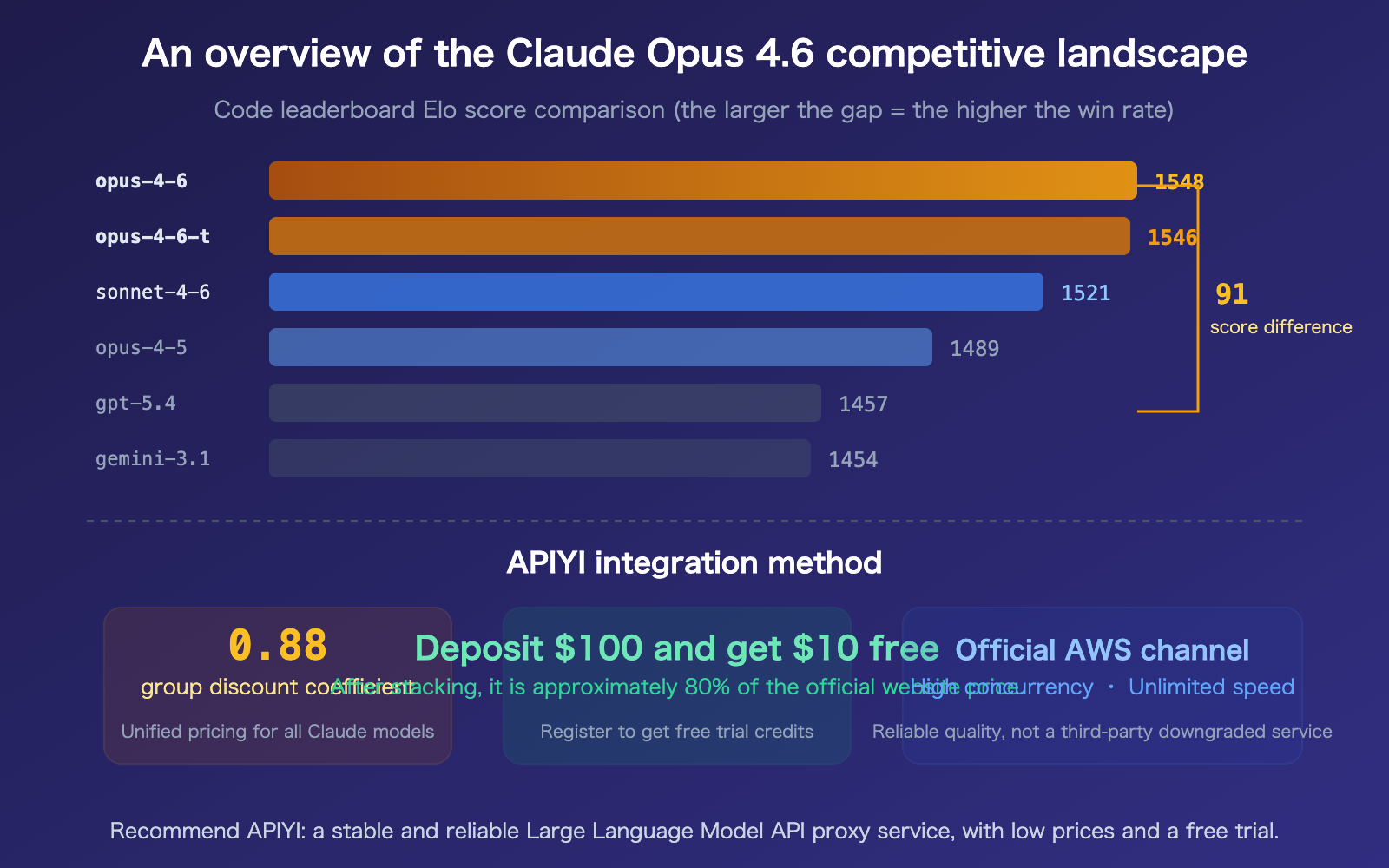

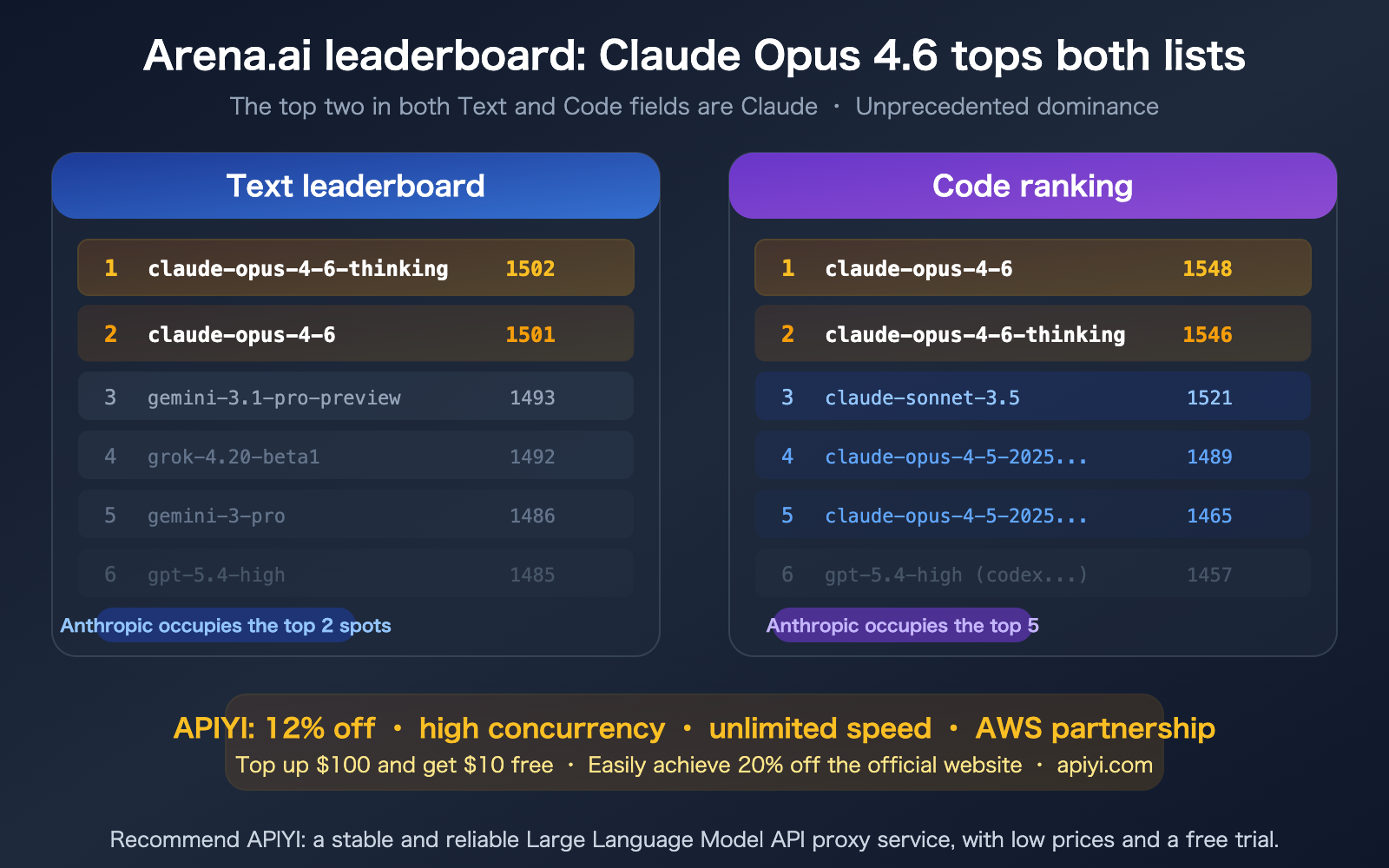

The latest Arena.ai leaderboard is out—the Claude Opus 4.6 series has swept the top two spots on both the Text and Code leaderboards. On the Text leaderboard, claude-opus-4-6-thinking took the top spot with 1502 points, followed closely by claude-opus-4-6 with 1501 points. On the Code leaderboard, claude-opus-4-6 secured first place with 1548 points, with Anthropic models occupying four of the top five positions. This is a rare display of total dominance in the AI model landscape. In this article, we'll analyze this data and show you how to access this top-tier model through APIYI at a 20% discount.

Core Value: Understand the dominant position of Claude Opus 4.6 on industry-standard leaderboards and discover the most cost-effective way to integrate its API.

Decoding the Claude Opus 4.6 Arena Leaderboard Data

Arena.ai (formerly LMSYS Chatbot Arena) is one of the most authoritative third-party platforms for AI model evaluation. It uses a blind, human-preference voting mechanism where users interact with two anonymous models and vote for the better one, with rankings determined by the Elo rating system.

Claude Opus 4.6 Text Leaderboard Data

| Rank | Model | Score | Votes | Provider |

|---|---|---|---|---|

| 1 | claude-opus-4-6-thinking | 1502 | 11,801 | Anthropic |

| 2 | claude-opus-4-6 | 1501 | 12,546 | Anthropic |

| 3 | gemini-3.1-pro-preview | 1493 | 14,677 | |

| 4 | grok-4.20-beta1 | 1492 | 7,396 | xAI |

| 5 | gemini-3-pro | 1486 | 41,762 | |

| 6 | gpt-5.4-high | 1485 | 4,965 | OpenAI |

The two versions of Claude Opus 4.6 (Standard and Thinking) have claimed the top two spots with scores of 1502 and 1501, leading the third-place Gemini 3.1 Pro by 9 points. In the Elo system, a 9-point gap translates to roughly a 55-57% win rate advantage—a solid and reliable lead.

Claude Opus 4.6 Code Leaderboard Data

| Rank | Model | Score | Votes | Provider |

|---|---|---|---|---|

| 1 | claude-opus-4-6 | 1548 | 4,059 | Anthropic |

| 2 | claude-opus-4-6-thinking | 1546 | 3,317 | Anthropic |

| 3 | claude-sonnet-4-6 | 1521 | 5,876 | Anthropic |

| 4 | claude-opus-4-5-20251101 | 1489 | 13,259 | Anthropic |

| 5 | claude-opus-4-5-20251101 | 1465 | 13,313 | Anthropic |

| 6 | gpt-5.4-high (codex-harne…) | 1457 | 1,486 | OpenAI |

The data on the Code leaderboard is even more striking: the top five spots are all held by Anthropic's Claude models. Claude Opus 4.6 leads the sixth-place GPT-5.4 by a massive 91 points—in the Elo system, this represents nearly a 63% win rate advantage, which is an overwhelming lead.

🎯 Leaderboard Takeaway: Claude Opus 4.6's lead in coding capability is significantly wider than its lead in text tasks. This explains why Claude Code has become a leader in the coding Agent market—the underlying model's coding ability is simply undisputed.

You can access this top-tier model at an 88% discount via APIYI (apiyi.com).

Why Claude Opus 4.6 Tops Both Leaderboards

Core Technical Advantages of Claude Opus 4.6

The reason Claude Opus 4.6 dominates both leaderboards is Anthropic's focused compute strategy—100% of their GPU resources are dedicated to model inference, rather than being split across image or video generation.

| Capability Dimension | Claude Opus 4.6 | Competitor Comparison |

|---|---|---|

| SWE-bench | 80.8% (Code Repair) | GPT-5.4 ~75% |

| ARC-AGI-2 | 68.8% (Reasoning) | Leads contemporary models |

| MRCR v2 (1M) | 76% (Long Context Retrieval) | Sonnet 4.5 only 18.5% |

| BigLaw Bench | 90.2% (Legal Reasoning) | Highest in Claude series |

| Terminal-Bench 2.0 | 65.4% (Terminal Ops) | Industry-leading |

| Context Window | 1M Tokens (No long-context surcharges) | One of the largest in the industry |

| Max Output | 128K Tokens | Highest in the industry |

Claude Opus 4.6 Standard vs. Thinking

Looking at the Arena leaderboard, there's an interesting trend:

- Text Leaderboard: The Thinking version has a slight edge (1502 vs 1501)—deep reasoning provides a marginal advantage in text tasks.

- Code Leaderboard: The Standard version has a slight edge (1548 vs 1546)—direct responses can be more precise for coding tasks.

The gap between them is minimal (1-2 points), suggesting that the base capabilities of Claude Opus 4.6 are already so strong that the incremental benefit of the Thinking mode is limited—the model is already "thinking" effectively, so an explicit Thinking mode isn't always necessary.

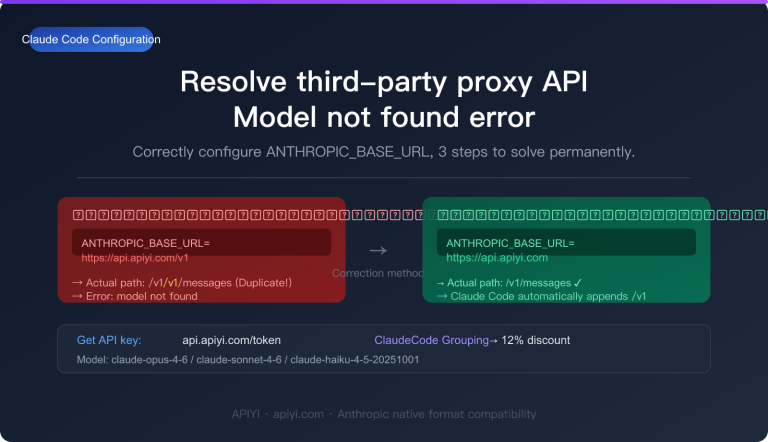

title: "Quick Start: Integrating Claude Opus 4.6 via APIYI"

description: "Learn how to integrate the top-ranked Claude Opus 4.6 model using APIYI with just a few lines of code. Save 20% on costs with our optimized API proxy service."

tags: [APIYI, Claude, LLM, API, Tutorial]

Quick Start: Integrating Claude Opus 4.6 via APIYI

Minimalist Example: Access the Top-Ranked Model in 3 Lines of Code

import openai

client = openai.OpenAI(

api_key="YOUR_API_KEY", # Get yours at apiyi.com

base_url="https://vip.apiyi.com/v1"

)

response = client.chat.completions.create(

model="claude-opus-4-6", # #1 on the Arena Code Leaderboard

messages=[

{"role": "user", "content": "Analyze the performance bottlenecks in this code and suggest optimizations."}

],

max_tokens=16000

)

print(response.choices[0].message.content)

View Code for the Thinking Version

import openai

client = openai.OpenAI(

api_key="YOUR_API_KEY",

base_url="https://vip.apiyi.com/v1"

)

# Using the Thinking version (#1 on the Arena Text Leaderboard)

response = client.chat.completions.create(

model="claude-opus-4-6-thinking",

messages=[

{"role": "user", "content": "Design a high-concurrency message queue system architecture."}

],

max_tokens=32000

)

print(response.choices[0].message.content)

The Thinking version performs deeper internal reasoning, making it perfect for complex architectural design, mathematical derivations, and in-depth analysis tasks.

Integration Tip: Use

claude-opus-4-6(#1 on the Code leaderboard) for general coding tasks, andclaude-opus-4-6-thinking(#1 on the Text leaderboard) for complex reasoning. APIYI (apiyi.com) supports both models with a flat 12% discount (0.88x) across the board.

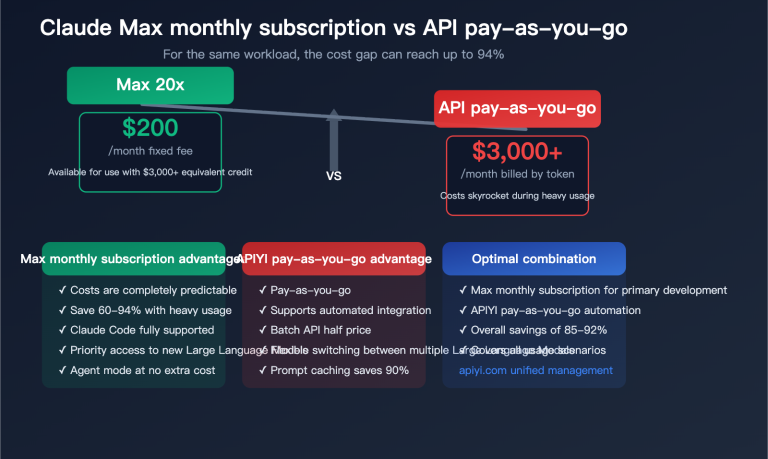

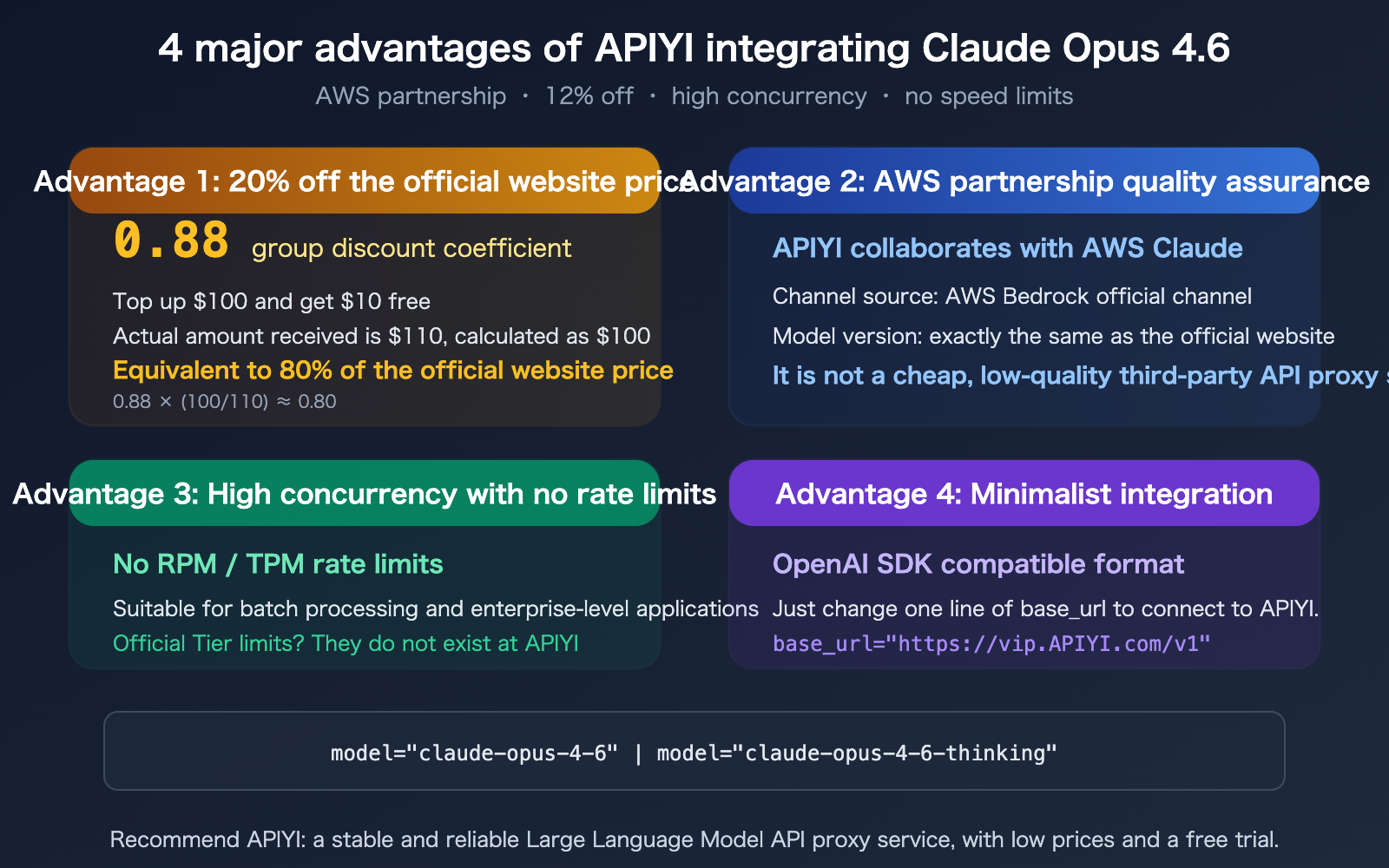

APIYI Claude Opus 4.6 Pricing Breakdown

Why APIYI Claude Opus 4.6 is More Cost-Effective

| Billing Item | Official Anthropic Price | APIYI Price (12% off) | With Top-up Bonus |

|---|---|---|---|

| Input Token | $5.00/M | $4.40/M | ~$4.00/M |

| Output Token | $25.00/M | $22.00/M | ~$20.00/M |

| Cache Write | $6.25/M | $5.50/M | ~$5.00/M |

| Cache Hit | $0.50/M | $0.44/M | ~$0.40/M |

Top-up Bonus Calculation:

- Top up $100, get $10 free (total $110 balance).

- 0.88x group discount + 10% top-up bonus → Effective discount of ~0.80x (20% off official rates).

- You save 20% compared to calling the official API directly for the same usage volume.

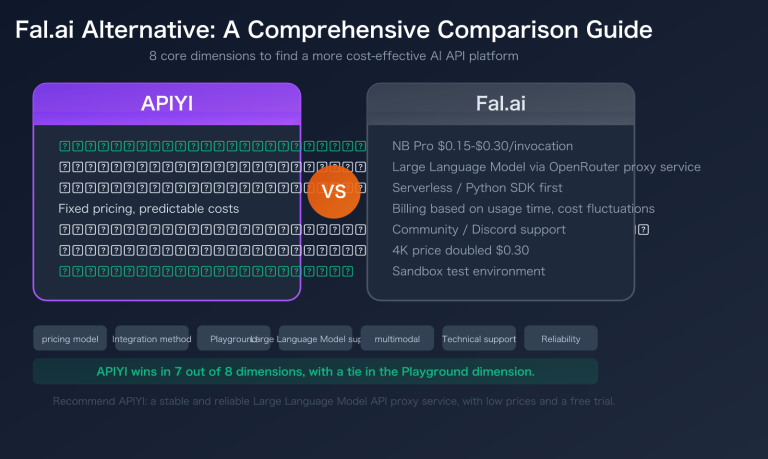

How APIYI Achieves Lower Prices

APIYI partners with AWS to provide access to Claude models via AWS Bedrock. By combining AWS volume discounts with APIYI's operational efficiency, we pass the savings directly to you. The model version and quality are identical to the official offering—no downgrades or alternative channels.

🎯 Cost Tip: If your monthly Claude API spend exceeds $100, switching to APIYI (apiyi.com) can save you over $20 per month. The larger your project, the more you save. Register now to receive free credits and try it out before you commit.

FAQ

Q1: Is there any difference between APIYI’s Claude Opus 4.6 and calling the official API directly?

The model is exactly the same—APIYI connects to Claude via the official AWS Bedrock channel, not through third-party reverse engineering or downgraded services. The model version, reasoning capabilities, and output quality are identical to those on the official Anthropic platform. The only difference is the integration method: APIYI provides an OpenAI-compatible format, so you can connect by changing just one line of base_url, without needing to register for an Anthropic account or configure AWS credentials.

Q2: How is the 0.88 discount calculated? Can it be combined with recharge bonuses?

Yes, they can be combined. The 0.88 group price is a base discount that applies to all Claude Opus 4.6 requests. The $10 bonus for every $100 recharged is an additional offer; when combined, the total discount is approximately 80% of the official price. For example: $100 worth of usage on the official site will only cost you about $80 on APIYI.

Q3: What exactly does “high concurrency with no rate limits” mean?

The official Anthropic API has strict rate limits (RPM and TPM) that vary by tier and require manual requests for increases. APIYI removes these restrictions—you can send as many concurrent requests as you need, making it perfect for batch data processing, automated testing, and enterprise-level applications.

Q4: Is the Arena leaderboard scoring mechanism reliable?

Arena.ai (formerly LMSYS Chatbot Arena) is currently one of the most recognized third-party evaluation platforms in the AI community. It uses blind human testing—users interact with two anonymous models and vote for the better one, which helps eliminate brand bias. The Elo rating system has been built up over tens of thousands of votes, ensuring high statistical reliability. The vote counts for Claude Opus 4.6 (12,546 votes on the Text leaderboard and 4,059 on the Code leaderboard) also provide a sufficient sample size.

Summary

Key takeaways from Claude Opus 4.6 topping the Arena leaderboards:

- Top of both Text and Code leaderboards:

claude-opus-4-6-thinkingtook the #1 spot on the Text leaderboard (1502 points), whileclaude-opus-4-6took the #1 spot on the Code leaderboard (1548 points). Anthropic models currently hold all top five spots on the Code leaderboard. - Significant lead in coding capabilities: On the Code leaderboard, Claude Opus 4.6 leads GPT-5.4 by a massive 91 points (Elo), cementing its undisputed dominance in the coding domain.

- APIYI offers the best integration: 0.88 discount + 10% recharge bonus = 20% off overall. You get reliable quality via AWS, no rate limits for high concurrency, and an OpenAI-compatible format that lets you connect with just one line of code.

We recommend accessing the top-ranked Claude Opus 4.6 via APIYI at apiyi.com—register to get free credits, and get $10 extra when you recharge $100, making it easy to save 20% compared to the official site.

📚 References

-

Arena.ai Leaderboard: Authoritative third-party blind test ranking for AI models.

- Link:

arena.ai/leaderboard - Description: Real-time updated leaderboard across multiple dimensions like Text, Code, etc.

- Link:

-

Official Claude Opus 4.6 Announcement: The official model release announcement from Anthropic.

- Link:

anthropic.com/news/claude-opus-4-6 - Description: Includes benchmark data and technical specifications.

- Link:

-

Claude Opus 4.6 Performance Analysis: In-depth analysis by an independent evaluation agency.

- Link:

artificialanalysis.ai/models/claude-opus-4-6-adaptive - Description: Includes data on latency, throughput, and price comparisons.

- Link:

-

APIYI Documentation Center: API integration guide for Claude Opus 4.6.

- Link:

docs.apiyi.com - Description: Includes integration tutorials, pricing details, and sample code.

- Link:

Author: APIYI Technical Team

Technical Discussion: Feel free to join the discussion in the comments. For more resources, visit the APIYI documentation center at docs.apiyi.com.