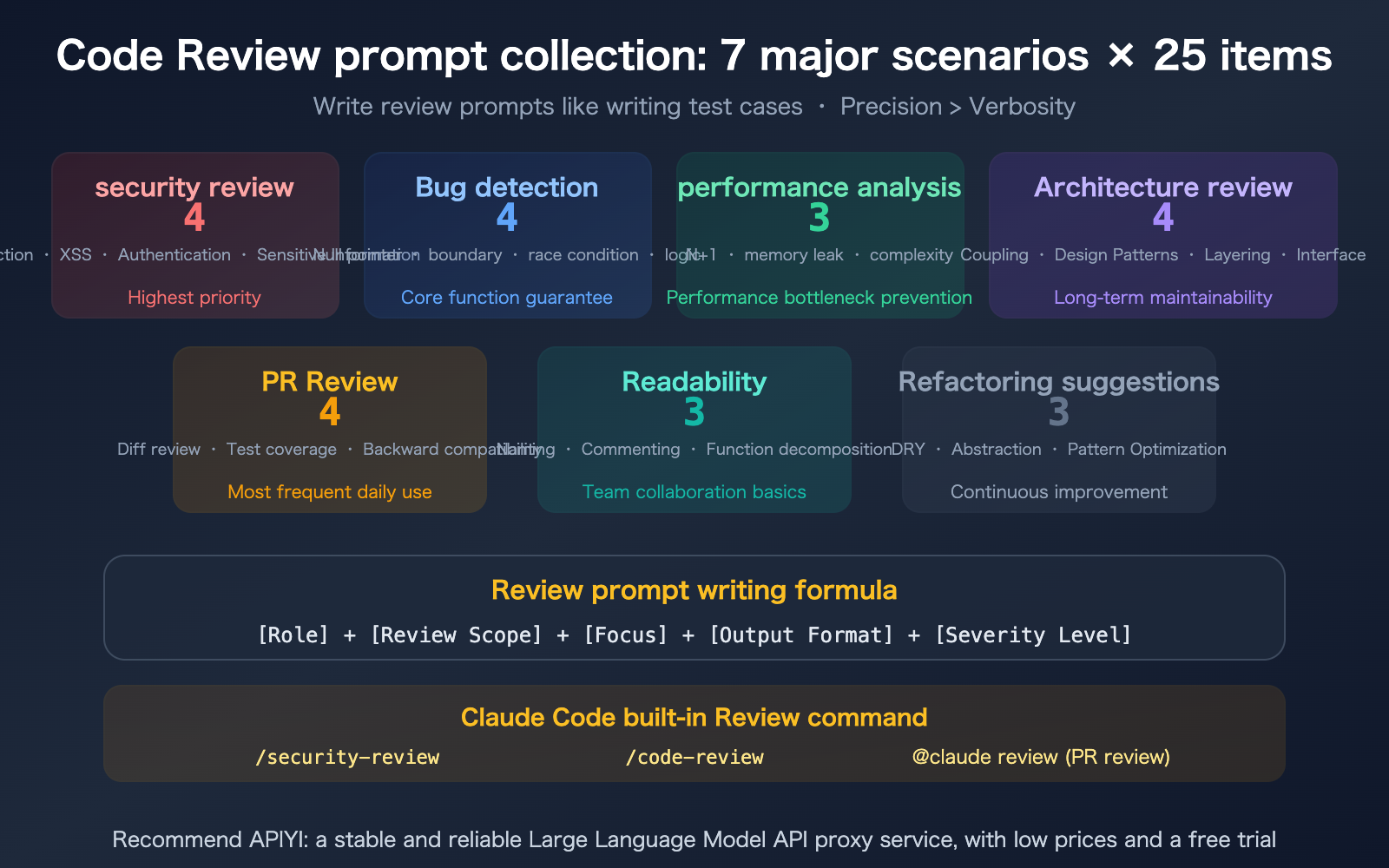

Author's Note: I've compiled 25 field-tested Claude Code code review prompts covering 7 key scenarios, including security audits, performance analysis, architecture reviews, bug detection, and PR reviews, complete with a formula for crafting your own.

Claude Code comes with a built-in /security-review command and a multi-agent system for code reviews, but the default output can often be too verbose, nitpicking every little detail. A good review prompt should be as precise as a test case—defining the scope, setting priorities, and requiring specific line numbers and remediation suggestions. This article collects 25 code review prompts across 7 scenarios, from security audits to architecture reviews, all ready for you to copy and use.

Core Value: 25 prompts covering the most frequent code review scenarios, plus a prompt-writing formula and examples of good vs. bad practices.

Review Prompt Engineering Formula

A good review prompt is as precise as a test case. A bad review prompt is like a vague Slack message.

The Five-Element Formula

[Role] Act as a senior {language/domain} engineer.

[Scope] Review the {changes} in the {file/directory/PR}.

[Focus] Focus on {security/performance/logic/architecture}.

[Format] Output format: {numbered list/table/inline comments}.

[Severity] Label severity levels: {Critical/High/Medium/Low}.
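To make the formula concrete, here is a minimal TypeScript sketch that assembles the five elements into a single prompt string. The `ReviewPromptSpec` type and `buildReviewPrompt` function are hypothetical helpers for illustration and are not part of Claude Code. Something like this could be useful if you generate review prompts in an API-based pipeline.

```typescript
// Hypothetical types for the five elements of the formula.
type Severity = "Critical" | "High" | "Medium" | "Low";

interface ReviewPromptSpec {
  role: string; // [Role], e.g. "senior backend engineer"
  scope: string; // [Scope], e.g. "the recent git diff in src/auth/"
  focus: string[]; // [Focus], e.g. ["SQL injection", "authentication bypass"]
  format: string; // [Format], e.g. "numbered list with file:line and fix"
  severities: Severity[]; // [Severity] labels the reviewer must use
}

// Assemble the elements in formula order, one sentence per line.
function buildReviewPrompt(spec: ReviewPromptSpec): string {
  if (spec.focus.length === 0) {
    // A prompt with no focus degrades into "give me some feedback".
    throw new Error("A review prompt needs at least one focus area");
  }
  return [
    `Act as a ${spec.role}.`,
    `Review ${spec.scope}.`,
    `Focus on ${spec.focus.join(", ")}.`,
    `Output format: ${spec.format}.`,
    `Label severity levels: ${spec.severities.join("/")}.`,
  ].join("\n");
}

const prompt = buildReviewPrompt({
  role: "senior backend engineer",
  scope: "the recent git diff in src/auth/",
  focus: ["SQL injection", "authentication bypass"],
  format: "numbered list, each item including file:line number, issue description, and fix suggestion",
  severities: ["Critical", "High", "Medium", "Low"],
});
console.log(prompt);
```

Failing fast on an empty focus list enforces the rule that a good prompt always names what to look for.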

| Element | Bad Example | Good Example |
|---|---|---|
| Role | (Not specified) | "Act as a senior backend engineer" |
| Scope | "Take a look at this code" | "Review the recent git diff in src/auth/" |
| Focus | "Give me some feedback" | "Focus on SQL injection and authentication bypass" |
| Format | (Random output) | "Numbered list, each item including file:line number, issue description, and fix suggestion" |
| Severity | (Not requested) | "Label as Critical/High/Medium/Low" |

Scenario 1: Security Review (4 Prompts)

Security review is the highest priority in Code Review. While Claude Code comes with a built-in /security-review command, custom prompts can go much deeper.

Prompt #1: OWASP Top 10 Comprehensive Scan

Act as a security audit engineer and review all files recently modified in the src/ directory.

Check against the OWASP Top 10 (2017 edition):

1. Injection (SQL/NoSQL/Command Injection)

2. Broken Authentication

3. Sensitive Data Exposure

4. XML External Entities (XXE)

5. Broken Access Control

6. Security Misconfiguration

7. Cross-Site Scripting (XSS)

8. Insecure Deserialization

9. Using Components with Known Vulnerabilities

10. Insufficient Logging and Monitoring

Output format: Numbered list, each item including [file:line number] [severity level] [issue description] [fix suggestion].

Only report actual issues, do not report theoretical risks.
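For reference, this TypeScript sketch shows the kind of Injection finding Prompt #1 should surface. The `users` table and both functions are hypothetical, and no database is called. The `{ text, values }` object mirrors the parameterized-query shape that drivers such as node-postgres accept with `$1` placeholders.

```typescript
// Parameterized query: constant SQL text plus a separate array of values.
interface ParameterizedQuery {
  text: string;
  values: unknown[];
}

// VULNERABLE: user input is spliced into the SQL string, so an input like
// "x' OR '1'='1" changes the meaning of the WHERE clause.
function findUserUnsafe(email: string): string {
  return `SELECT id, email FROM users WHERE email = '${email}'`;
}

// FIXED: the SQL text never changes; the driver sends the value separately,
// so the input cannot be parsed as SQL.
function findUserSafe(email: string): ParameterizedQuery {
  return { text: "SELECT id, email FROM users WHERE email = $1", values: [email] };
}

const attack = "x' OR '1'='1";
console.log(findUserUnsafe(attack)); // SELECT id, email FROM users WHERE email = 'x' OR '1'='1'
console.log(findUserSafe(attack));
```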

Prompt #2: API Endpoint Security Review

Review all API route files (routes/, controllers/), focusing on:

- Endpoints missing authentication middleware

- Whether input validation and sanitization are applied to parameters

- Risks of mass assignment

- Whether rate limiting is configured

- Whether error responses leak internal information

Output table: Endpoint Path | Issue | Severity Level | Fix Plan
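Mass assignment is easy to miss in review because the vulnerable code is short. Here is a minimal sketch. It assumes a hypothetical `User` record whose `role` field must never come from the request body. Both update functions are illustrative, not taken from any framework.

```typescript
// Hypothetical user record; `role` must never be set from request input.
interface User {
  id: number;
  name: string;
  email: string;
  role: "user" | "admin";
}

type UpdatableField = "name" | "email";
const UPDATABLE_FIELDS: readonly UpdatableField[] = ["name", "email"];

// VULNERABLE: copies every key from the request body, so a client can send
// { "role": "admin" } and escalate privileges.
function updateUserUnsafe(user: User, body: Record<string, unknown>): User {
  return { ...user, ...body } as User;
}

// FIXED: only allow-listed string fields are copied; everything else is ignored.
function updateUserSafe(user: User, body: Record<string, unknown>): User {
  const updated: User = { ...user };
  for (const field of UPDATABLE_FIELDS) {
    const value = body[field];
    if (typeof value === "string") {
      updated[field] = value;
    }
  }
  return updated;
}
```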

Prompt #3: Sensitive Information Leak Detection

Scan the entire project to check if the following sensitive information is hardcoded or accidentally exposed:

- API keys, secrets, tokens

- Database connection strings

- Private keys and certificates

- Internal IPs and domain names

- Passwords or credentials in comments

The scope includes: source code, configuration files, .env.example, docker-compose.yml, and README.

Label the file path and line number for every finding.
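To show what "file path and line number for every finding" looks like in practice, here is a crude regex-based version of this scan in TypeScript. The patterns are illustrative assumptions that cover only a few formats. Dedicated scanners such as gitleaks ship much larger rule sets, so treat this as a sanity check rather than a replacement.

```typescript
// A few illustrative detection rules (assumptions, not an exhaustive list).
const SECRET_PATTERNS: { name: string; regex: RegExp }[] = [
  { name: "AWS access key ID", regex: /\bAKIA[0-9A-Z]{16}\b/ },
  { name: "Private key block", regex: /-----BEGIN [A-Z ]*PRIVATE KEY-----/ },
  { name: "Connection string with password", regex: /\b[a-z][a-z0-9+.-]*:\/\/[^\s:@\/]+:[^\s@\/]+@/i },
  { name: "Hardcoded secret assignment", regex: /\b(?:api[_-]?key|secret|token|password)\b\s*[:=]\s*["'][^"']{8,}["']/i },
];

interface Finding {
  line: number; // 1-based line number, ready for a file:line report
  rule: string;
}

// Check every line against every rule. The regexes have no /g flag,
// so RegExp.test() keeps no state between calls.
function scanForSecrets(source: string): Finding[] {
  const findings: Finding[] = [];
  source.split("\n").forEach((text, index) => {
    for (const { name, regex } of SECRET_PATTERNS) {
      if (regex.test(text)) findings.push({ line: index + 1, rule: name });
    }
  });
  return findings;
}
```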

Prompt #4: Authentication and Authorization Logic Review

Act as a security expert and review the authentication and authorization code:

1. Is the JWT token verification logic complete (expiration, signature, tampering)?

2. Is secure hashing (bcrypt/argon2) used for password storage?

3. Is there a risk of session fixation attacks in session management?

4. Is the CORS configuration too permissive?

5. Does the OAuth callback verify the state parameter?

Only report Critical and High severity issues, and include code examples for fixes.
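Item 5 (OAuth `state`) is a common gap. Here is a minimal sketch of the check, assuming Node.js and a session store that is not shown. It generates an unguessable value before the redirect and compares it on callback. The function names are hypothetical, and in production you would also delete the stored state after one use.

```typescript
import { randomBytes, timingSafeEqual } from "node:crypto";

// Generate an unguessable state value to store in the user's session
// before redirecting to the OAuth provider.
function createOAuthState(): string {
  return randomBytes(32).toString("hex");
}

// On callback, reject unless the returned state matches the stored one.
// A missing or mismatched state suggests a CSRF / login-forgery attempt.
function verifyOAuthState(expected: string | undefined, received: string | undefined): boolean {
  if (!expected || !received) return false;
  const a = Buffer.from(expected, "utf8");
  const b = Buffer.from(received, "utf8");
  // timingSafeEqual throws when lengths differ, so compare lengths first.
  if (a.length !== b.length) return false;
  return timingSafeEqual(a, b);
}
```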

Scenario 2: Bug Detection (4 Prompts)

Prompt #5: Null Pointers and Boundary Conditions

Review the recently modified files and look for the following potential bugs:

- Accessing properties without checking for null/undefined

- Out-of-bounds array access

- Division by zero errors

- Unhandled empty strings

- Unhandled NaN from parseInt/parseFloat

For each finding, provide the trigger condition (what input causes a crash) and the fix code.
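This sketch fixes three of the patterns Prompt #5 targets: NaN from `parseInt`, unchecked null/undefined access, and division by zero. The function names and fallback behavior are assumptions chosen for illustration.

```typescript
// Parse a page number from untrusted input (e.g. a query string).
// parseInt("abc", 10) and parseInt("", 10) both return NaN, so fall back
// to a default instead of letting NaN flow downstream.
// Note: parseInt("12abc", 10) still returns 12; use Number(raw) if
// trailing garbage should be rejected.
function parsePage(raw: string | undefined | null, fallback = 1): number {
  const value = Number.parseInt(raw ?? "", 10);
  return Number.isInteger(value) && value >= 1 ? value : fallback;
}

interface Profile {
  name?: string | null;
}

// Optional chaining (?.) avoids a TypeError on null/undefined, and the
// truthiness check also catches empty or whitespace-only names.
function displayName(profile: Profile | null | undefined): string {
  const name = profile?.name?.trim();
  return name ? name : "Anonymous";
}

// Return null for an empty list instead of dividing by zero (which yields NaN).
function average(values: number[]): number | null {
  return values.length === 0 ? null : values.reduce((sum, v) => sum + v, 0) / values.length;
}
```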

Prompt #6: Async and Concurrency Issues

Review all asynchronous code in the project (async/await, Promises, callbacks) and check for:

- Promises missing .catch() error handling

- Potential race conditions

- await inside loops causing serial execution (use Promise.all when iterations are independent)

- Callback hell that can be refactored

- Whether transactions correctly handle rollbacks

Mark as [File:Line Number] [Issue] [Impact] [Fix Plan]
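Here is a minimal TypeScript sketch of the "await inside loops" finding. It uses a hypothetical `fetchPrice` that simulates a 50 ms call. The `peak` counter records how many calls are in flight at once, which makes the difference between the serial and concurrent versions visible.

```typescript
// Counters for observing concurrency (illustration only).
let active = 0;
let peak = 0;

// Hypothetical async lookup that takes about 50 ms.
async function fetchPrice(id: number): Promise<number> {
  active++;
  peak = Math.max(peak, active);
  await new Promise<void>((resolve) => setTimeout(resolve, 50));
  active--;
  return id * 10;
}

// Serial: each iteration waits for the previous one (about n * 50 ms).
async function pricesSerial(ids: number[]): Promise<number[]> {
  const results: number[] = [];
  for (const id of ids) {
    results.push(await fetchPrice(id));
  }
  return results;
}

// Concurrent: all lookups start together (about 50 ms total). Only valid when
// iterations are independent; Promise.all rejects as soon as any call rejects.
async function pricesConcurrent(ids: number[]): Promise<number[]> {
  return Promise.all(ids.map((id) => fetchPrice(id)));
}
```

Promise.all preserves input order in its results, so the two versions return identical arrays.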

Prompt #7: Logic Error Hunter

Carefully read the business logic of the following functions and look for:

- Whether if/else branches cover all cases

- Whether loop termination conditions are correct

- Whether comparison operators are correct (== vs ===, > vs >=)

- Whether variable scope is correct

- Whether return values are defined for all paths

Don't focus on code style; focus only on logical correctness.
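This TypeScript sketch shows two of the comparison bugs Prompt #7 asks about. The quantity check and the free-shipping rule ("orders of 100 or more ship free") are hypothetical examples.

```typescript
type Quantity = number | string | null | undefined;

// BUGGY: loose equality coerces types, and 0 == "" is true,
// so a real quantity of 0 is treated as "missing".
function isMissingLoose(qty: Quantity): boolean {
  return qty == null || qty == "";
}

// FIXED: strict equality compares without coercion.
function isMissingStrict(qty: Quantity): boolean {
  return qty === null || qty === undefined || qty === "";
}

// BUGGY: the spec says "100 or more", but `>` excludes exactly 100.
function freeShippingBuggy(total: number): boolean {
  return total > 100;
}

// FIXED: the boundary value is included.
function freeShippingFixed(total: number): boolean {
  return total >= 100;
}
```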

Prompt #8: Error Handling Review

Review the project's error handling mechanism:

1. Do try/catch blocks catch specific exceptions rather than generic Errors?

2. Do catch blocks swallow errors (empty catch)?

3. Are errors correctly propagated to the upper layers?

4. Are user-facing error messages friendly (not exposing internal info)?

5. Do critical operations (payments, data changes) have failure rollback mechanisms?

Sort the output by severity level.
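This sketch illustrates items 1 to 3. It contrasts an empty catch with catching one specific, expected error and rethrowing the rest. `PaymentDeclinedError` and `chargeCard` are hypothetical stand-ins for a real payment client.

```typescript
// Hypothetical domain error so callers can catch this case specifically.
class PaymentDeclinedError extends Error {
  constructor(message: string) {
    super(message);
    this.name = "PaymentDeclinedError";
    // Keeps instanceof working if this is compiled to an ES5 target.
    Object.setPrototypeOf(this, PaymentDeclinedError.prototype);
  }
}

// Hypothetical payment call used only for illustration.
function chargeCard(amountCents: number): string {
  if (amountCents <= 0) throw new RangeError("amount must be positive");
  if (amountCents > 50000) throw new PaymentDeclinedError("limit exceeded");
  return "charge_ok";
}

// BAD: the empty catch swallows every error, including programming bugs.
function checkoutSwallow(amountCents: number): string | undefined {
  try {
    return chargeCard(amountCents);
  } catch {
    // Empty catch: the caller cannot tell "declined" from "bug in our code".
  }
  return undefined;
}

// BETTER: handle the one expected failure with a user-safe message and
// rethrow everything else so it reaches upper layers and logging.
function checkout(amountCents: number): string {
  try {
    return chargeCard(amountCents);
  } catch (err) {
    if (err instanceof PaymentDeclinedError) {
      return "Your card was declined. Please try another payment method.";
    }
    throw err;
  }
}
```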

Scenario 3: Performance Analysis (3 Prompts)

Prompt #9: Database Query Performance

Review all database query code (models/, repositories/, ORM calls) and check for:

- N+1 query problems (queries executed inside loops)

- Queried fields (e.g., in WHERE or JOIN clauses) that lack indexes

- Whether SELECT * should be replaced with specific fields

- Whether large data queries have pagination

- Whether there are repeated queries that can be optimized with caching

For each issue, estimate the performance impact (Low/Medium/High) and provide the optimized code.
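This sketch shows the N+1 pattern and its usual fix against an in-memory stand-in for a database, so the query count can be checked. In a real ORM, the batched call would typically be a single `WHERE id IN (...)` query or an eager-loading option. The functions and data here are hypothetical.

```typescript
interface Order { id: number; customerId: number }
interface Customer { id: number; name: string }

// In-memory stand-in for a customers table; queryCount counts round trips.
const customersTable: Customer[] = [
  { id: 1, name: "Ada" },
  { id: 2, name: "Grace" },
];
let queryCount = 0;

function findCustomerById(id: number): Customer | undefined {
  queryCount++; // one query per call
  return customersTable.find((c) => c.id === id);
}

function findCustomersByIds(ids: number[]): Customer[] {
  queryCount++; // one query, e.g. WHERE id IN (...)
  const wanted = new Set(ids);
  return customersTable.filter((c) => wanted.has(c.id));
}

// N+1: one query per order (plus the query that loaded the orders).
function customerNamesNPlusOne(orders: Order[]): string[] {
  return orders.map((o) => findCustomerById(o.customerId)?.name ?? "unknown");
}

// Batched: collect unique IDs, fetch once, then join in memory via a Map.
function customerNamesBatched(orders: Order[]): string[] {
  const ids = Array.from(new Set(orders.map((o) => o.customerId)));
  const byId = new Map(findCustomersByIds(ids).map((c) => [c.id, c] as const));
  return orders.map((o) => byId.get(o.customerId)?.name ?? "unknown");
}
```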

Prompt #10: Memory and Resource Leaks

Review the project for potential memory and resource leaks:

- Are event listeners removed when components unmount?

- Are timers (setInterval/setTimeout) cleared?

- Are database connections closed correctly?

- Are file handles released in finally blocks?

- Are large arrays/objects dereferenced after use?

Focus on React component useEffect cleanup and Node.js stream processing.
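For the event-listener and timer items, the key idea is that every setup step has a matching teardown. This is a minimal Node.js sketch using `EventEmitter`. `subscribeToPrices` is a hypothetical function that returns its own cleanup. That is the same contract React's `useEffect` expects, so a component could return this cleanup directly from its effect.

```typescript
import { EventEmitter } from "node:events";

// Shared, long-lived emitter (think: a WebSocket client or a global store).
const bus = new EventEmitter();

// Setup returns a cleanup that undoes everything setup did.
function subscribeToPrices(onPrice: (p: number) => void): () => void {
  bus.on("price", onPrice);
  const timer = setInterval(() => bus.emit("heartbeat"), 1000);
  return () => {
    bus.off("price", onPrice);
    clearInterval(timer);
  };
}

// LEAKY: nothing is returned, so every "mount" adds another listener that
// is never removed (and keeps onPrice and its closure alive).
function subscribeLeaky(onPrice: (p: number) => void): void {
  bus.on("price", onPrice);
}
```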

Prompt #11: Algorithm Complexity Review

Review the recently modified functions and analyze their time and space complexity:

- Are there O(n²) or higher complexity implementations that can be optimized?

- Are there places where linear searches can be replaced by hash tables?

- Are there unnecessary deep copies?

- Should string concatenation inside loops use StringBuilder/join?

- Is the appropriate algorithm used for sorting?

Mark as Current Complexity → Optimizable Complexity → Specific Optimization Plan.
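This TypeScript sketch shows a typical "linear search replaceable by a hash table" finding. The function names are hypothetical. Both versions return the same duplicates in the same order; only the complexity differs.

```typescript
// O(n^2): indexOf and includes are linear scans, run once per element.
function findDuplicatesQuadratic(items: string[]): string[] {
  const dupes: string[] = [];
  items.forEach((item, i) => {
    if (items.indexOf(item) !== i && !dupes.includes(item)) dupes.push(item);
  });
  return dupes;
}

// O(n): Set membership checks are O(1) on average.
function findDuplicatesLinear(items: string[]): string[] {
  const seen = new Set<string>();
  const dupes = new Set<string>(); // Set preserves insertion order
  for (const item of items) {
    if (seen.has(item)) dupes.add(item);
    else seen.add(item);
  }
  return Array.from(dupes);
}
```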

Scenario 4: Architecture Review (4 Prompts)

Prompt #12: Dependency and Coupling Analysis

Analyze the module dependencies in src/:

1. Draw a dependency graph between modules (which module imports which)

2. Identify circular dependencies

3. Identify the most coupled modules (those most depended upon by others)

4. Suggest which dependencies should be decoupled via interfaces/abstractions

Output: Dependency table + List of circular dependencies + Decoupling suggestions
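If you want to double-check Claude's list of circular dependencies, the underlying check is a standard depth-first search. Here is a minimal sketch over a hypothetical module graph. It reports one cycle per back edge it encounters, which is enough to flag circular imports, though it does not enumerate every distinct cycle.

```typescript
// Module graph as an adjacency list: module -> modules it imports.
type DependencyGraph = Record<string, string[]>;

// DFS with three states: unvisited (absent), "visiting" (on the current
// path), and "done". Reaching a "visiting" module means a cycle.
function findCycles(graph: DependencyGraph): string[][] {
  const state = new Map<string, "visiting" | "done">();
  const path: string[] = [];
  const cycles: string[][] = [];

  function visit(node: string): void {
    state.set(node, "visiting");
    path.push(node);
    for (const dep of graph[node] ?? []) {
      const s = state.get(dep);
      if (s === "visiting") {
        // The cycle is the part of the current path starting at dep.
        cycles.push([...path.slice(path.indexOf(dep)), dep]);
      } else if (s === undefined) {
        visit(dep);
      }
    }
    path.pop();
    state.set(node, "done");
  }

  for (const node of Object.keys(graph)) {
    if (!state.has(node)) visit(node);
  }
  return cycles;
}
```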

Prompt #13: Layered Architecture Compliance Check

Check if the code adheres to layered architecture principles:

- Does the Controller layer contain business logic? (It should only handle routing and parameter validation)

- Does the Service layer operate directly on the database? (It should go through a Repository)

- Does the Model/Entity layer contain HTTP-related logic?

- Are there any cross-layer calls? (e.g., Controller calling Repository directly)

List each file that violates these principles and the specific code location.

Prompt #14: API Design Review

Review all API endpoints from the perspective of RESTful API design best practices:

- Do URL names follow REST conventions (plural nouns, hierarchical relationships)?

- Are HTTP methods used correctly (GET for read-only, POST for create, PUT/PATCH for update, DELETE for delete)?

- Is the response format consistent (error codes, pagination, date formats)?

- Is API versioning implemented properly?

- Are there any redundant endpoints that could be merged?

Output: Improvement suggestions table (Current → Suggestion → Reason)

Prompt #15: Technical Debt Assessment

Perform a comprehensive assessment of the project's technical debt:

1. Outdated dependencies and framework versions

2. Deprecated API calls

3. Hardcoded configuration values (should use environment variables)

4. Copy-pasted duplicate code blocks

5. Critical modules lacking unit tests

6. Overly complex functions (cyclomatic complexity > 15)

Sort by urgency: Blocker (must fix immediately) > High > Medium > Low

Scenario 5: PR Review (4 Prompts)

Prompt #16: PR Diff Quick Review

Review the diff between the current branch and main, and evaluate it from the perspective of a senior engineer:

1. What is the purpose of this PR (inferred from the diff)?

2. Is the change complete (are there any missing files or logic)?

3. Does it introduce new bugs or regressions?

4. Is test coverage sufficient?

5. Are there any unnecessary changes (debug code, formatting noise)?

Report only High and Critical level issues. Do not nit-pick code style.

Prompt #17: Backward Compatibility Check

Review all changes in the current PR and check for backward-incompatible modifications:

- Have public API signatures or return values changed?

- Are there any breaking changes to the database schema?

- Has the configuration file format changed?

- Have functions used by other modules been deleted?

- Have environment variable names or formats been altered?

For each incompatibility, assess the impact scope and migration plan.

Prompt #18: Test Sufficiency Review

Compare the code changes in the current PR with the test changes:

1. Do all new functions have corresponding unit tests?

2. Have existing tests been updated for modified logic?

3. Are boundary conditions and exception paths covered by tests?

4. Do integration tests cover the new API endpoints?

5. Is the test data realistic (not just placeholder values like 123 or abc)?

List suggested missing test cases: Function Name | Missing Test Scenario | Priority

Prompt #19: Commit Quality Review

Review the commit history of the current PR:

1. Do the commit messages clearly describe the changes?

2. Is each commit atomic (one commit per purpose)?

3. Are there trivial commits that should be squashed?

4. Are there temporary commits like "fix typo" or "wip" that should be cleaned up?

5. Is the commit order logical (infrastructure before business logic)?

Suggest which commits need to be reorganized and the final commit structure.

Scenario 6: Readability (3 Prompts)

Prompt #20: Naming Review

Review the naming of all variables, functions, and classes in the recently modified files:

- Are there any ambiguous names (e.g., data, info, temp, res, obj)?

- Is there excessive abbreviation (e.g., usr → user, btn → button)?

- Do boolean names start with is/has/should?

- Do function names start with a verb that accurately describes the behavior?

- Do class names accurately describe their responsibilities using nouns?

For every poor naming choice, suggest a better alternative.

Prompt #21: Comment Quality Review

Review the quality of comments in the code:

- Are there "what" comments that should be "why" comments?

- Are there outdated comments (inconsistent with the code)?

- Are there comments that should be extracted into function names?

- Is there a lack of explanatory comments for complex business logic?

- Do public APIs have JSDoc/docstrings?

Do not suggest adding obvious comments (e.g., "// increment counter").

Prompt #22: Function Splitting Suggestions

Review all functions longer than 30 lines and evaluate whether they should be split:

- Does the function have multiple responsibilities (doing several unrelated things)?

- Is the nesting level deeper than 3 layers?

- Does it have more than 4 parameters?

- Is there repetitive logic that can be extracted?

Provide a specific splitting plan: Original function → List of split functions → Responsibility of each function.

Scenario 7: Refactoring Suggestions (3 Prompts)

Prompt #23: DRY Violation Detection

Scan the project for duplicate code:

- Identify duplicated code blocks longer than 3 lines.

- Identify code with similar logic but different implementations.

- Identify common patterns that can be extracted into utility functions.

For each group of duplicates, provide the implementation code for the extracted utility function.

Prompt #24: Design Pattern Optimization

Review the code from the perspective of a design pattern expert:

- Should excessive if/else or switch statements be replaced with the Strategy pattern?

- Should complex object creation be replaced with the Factory/Builder pattern?

- Should repetitive boilerplate code be replaced with the Template Method pattern?

- Should deeply nested callbacks be replaced with the Chain of Responsibility pattern?

- Should global state management be replaced with the Observer pattern?

Only suggest these if the pattern improvement significantly reduces complexity; avoid over-engineering.
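In TypeScript, the Strategy pattern often needs no classes at all: a typed map from a key to a function replaces the switch. Here is a minimal sketch with hypothetical shipping rules. With only three cases the switch is arguably fine, which is exactly the over-engineering judgment this prompt asks Claude to make.

```typescript
type ShippingMethod = "standard" | "express" | "pickup";

// Before: every new method means editing this switch.
function shippingCostSwitch(method: ShippingMethod, weightKg: number): number {
  switch (method) {
    case "standard":
      return 5 + weightKg * 1;
    case "express":
      return 15 + weightKg * 2;
    case "pickup":
      return 0;
  }
}

// After: each strategy is a small function. Record<ShippingMethod, ...>
// makes the compiler flag any method that has no strategy.
type ShippingStrategy = (weightKg: number) => number;

const shippingStrategies: Record<ShippingMethod, ShippingStrategy> = {
  standard: (w) => 5 + w * 1,
  express: (w) => 15 + w * 2,
  pickup: () => 0,
};

function shippingCost(method: ShippingMethod, weightKg: number): number {
  return shippingStrategies[method](weightKg);
}
```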

Prompt #25: Legacy Code Modernization

Review legacy code in the project and identify parts that can be rewritten using modern syntax:

- var → const/let

- Callbacks → async/await

- for loops → map/filter/reduce

- String concatenation → Template literals

- require → import

- Class components → Function components + Hooks (React)

Provide a comparison of the code before and after refactoring, and assess the refactoring risk (Low/Medium/High).
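For the "Callbacks → async/await" item, Node's `util.promisify` can wrap any function that follows the `(err, value)` callback convention. This sketch uses a hypothetical `readConfig` API and shows the nested-callback version and the async/await version side by side.

```typescript
import { promisify } from "node:util";

// Hypothetical Node-style API: callback(err, value) is the last argument.
function readConfig(name: string, callback: (err: Error | null, value?: string) => void): void {
  setTimeout(() => {
    if (name === "") callback(new Error("name required"));
    else callback(null, `config:${name}`);
  }, 10);
}

// Before: nested callbacks, with error handling repeated at every level.
function loadBothCallback(done: (err: Error | null, result?: string[]) => void): void {
  readConfig("app", (err1, app) => {
    if (err1) return done(err1);
    readConfig("db", (err2, db) => {
      if (err2) return done(err2);
      done(null, [app!, db!]);
    });
  });
}

// After: promisify wraps the callback API once; errors become rejections
// that a single try/catch (or .catch) can handle.
const readConfigAsync = promisify(readConfig);

async function loadBoth(): Promise<string[]> {
  const app = await readConfigAsync("app");
  const db = await readConfigAsync("db");
  return [app!, db!];
}
```

Because the async version changes the function's signature, every caller has to change too. That is one reason the prompt asks for a risk rating alongside each rewrite.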

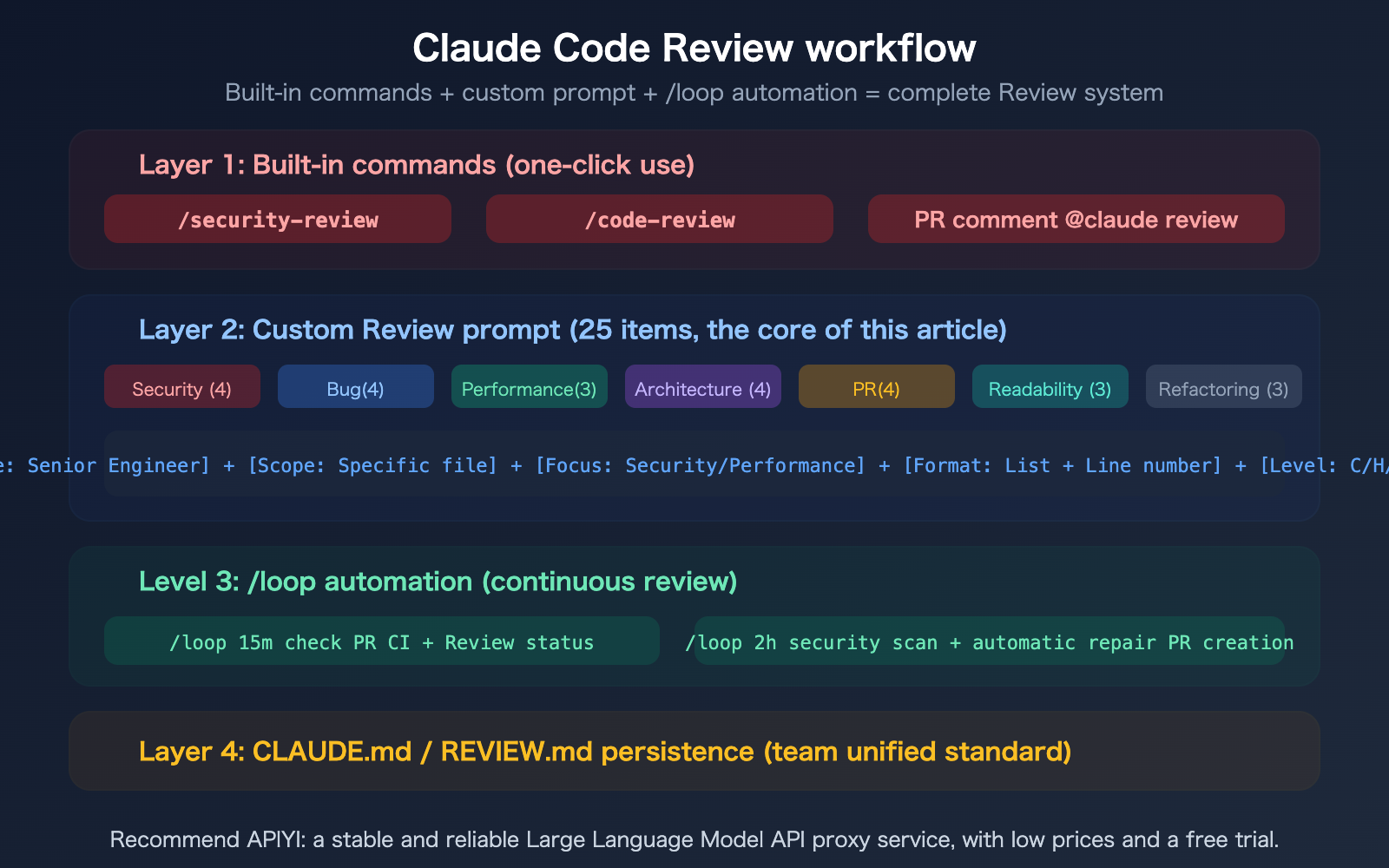

🎯 Usage Tip: It's recommended to save your most frequently used review prompts in CLAUDE.md or .claude/skills/ to standardize them across your team. You can use /loop to automate security audits and PR reviews.

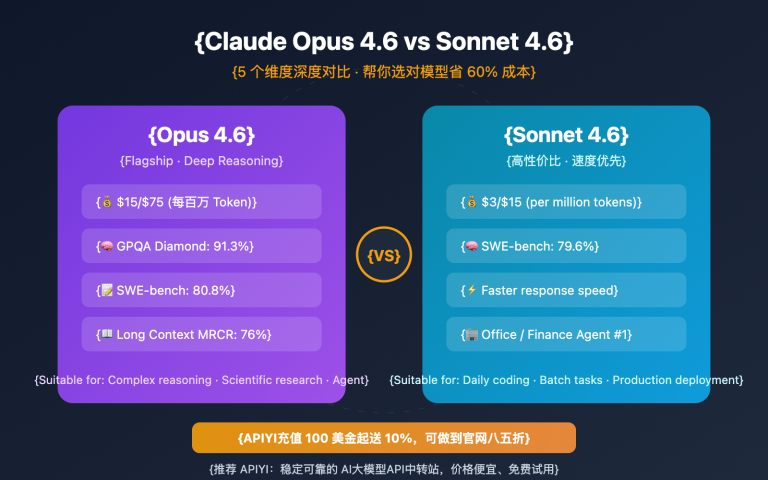

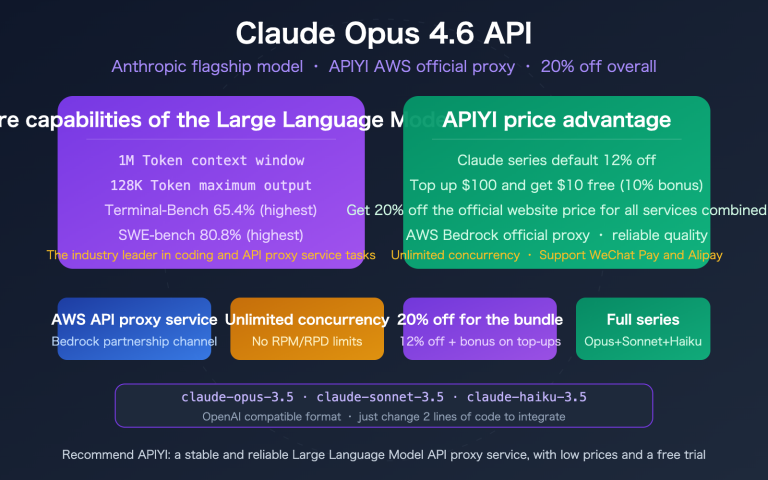

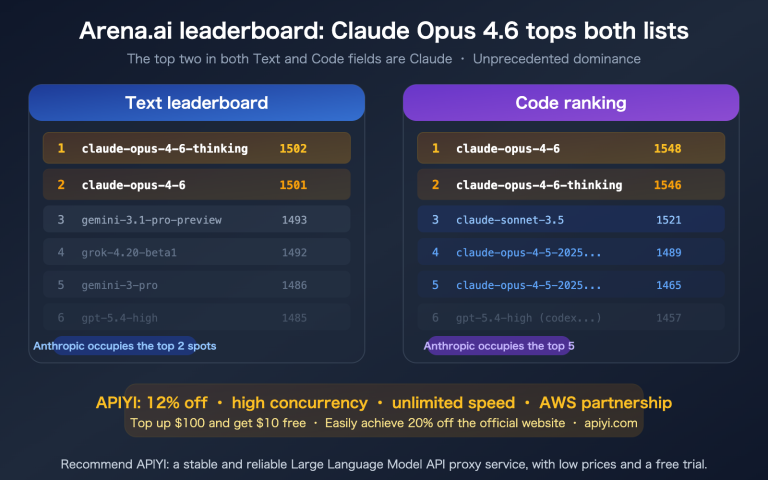

If you're building an automated review system via API, we recommend using APIYI (apiyi.com) to access Claude Opus 4.6 at a 20% discount.

FAQ

Q1: What if the default /code-review is too verbose?

Create a REVIEW.md file in your project root or add review rules to your CLAUDE.md to clearly tell Claude what to focus on and what to ignore. For example: "Only report Critical and High severity issues. Do not nit-pick code style or naming. Do not suggest adding comments." Claude Code will automatically read this file during every review.

Q2: How can I save prompts as reusable Skills?

Save your security review prompt in .claude/skills/security-review/SKILL.md and set user-invocable: true. This will register it as a /security-review slash command. You can then simply type /security-review to execute it without having to copy and paste every time. You can save multiple prompts as individual Skills.

Q3: Can PR reviews be automatically posted as GitHub comments?

Yes. There are two ways: 1) Type @claude review in a PR comment, and Claude will automatically analyze the diff and post its findings as inline comments; 2) Use the /code-review --comment command, and Claude will post the review results as a PR comment. In March 2026, Anthropic released a dedicated Code Review multi-agent system that can coordinate a team of specialized agents to review PRs from multiple perspectives, including security, logic, and testing.

Q4: How many tokens do review prompts consume?

It depends on the scope of the review. A single-file review typically takes about 2,000–5,000 tokens, while a full-project security scan might take 10,000–30,000 tokens. It's recommended to limit the review scope to specific files or directories to avoid wasting tokens on "scanning all files." You can significantly reduce your review costs by accessing Claude Opus 4.6 at a 20% discount via APIYI (apiyi.com).

Summary

Key takeaways for Claude Code review prompts:

- 25 prompts covering 7 scenarios: Security review (4), Bug detection (4), Performance analysis (3), Architecture review (4), PR review (4), Readability (3), and Refactoring suggestions (3)—ready for you to copy and use.

- The 5-element formula for great prompts: Role + Scope + Focus + Format + Severity. Be as precise as a test case, not as vague as a Slack message.

- Three-tier review system: Built-in commands (/security-review) → Custom prompts (the 25 in this article) → /loop for automated, continuous reviews.

We recommend accessing the Claude Opus 4.6 API at a 20% discount via APIYI (apiyi.com) to build your automated code review system.

📚 References

- Claude Code Code Review Official Documentation: Complete guide to the built-in review functionality.
  - Link: code.claude.com/docs/en/code-review
  - Description: Covers PR reviews, multi-agent systems, and customization methods.

- Claude Code Security Review: Official security review solution from Anthropic.
  - Link: github.com/anthropics/claude-code-security-review
  - Description: Includes the full implementation of the /security-review command.

- 7 Claude PR Review Prompts: Community-verified review prompts.
  - Link: rephrase-it.com/blog/7-claude-pr-review-prompts-for-2026
  - Description: Features structured prompt templates for PR reviews.

- APIYI Documentation Center: Get 20% off Claude Opus 4.6 API access.
  - Link: docs.apiyi.com
  - Description: The optimal API solution for building automated review systems.

Author: APIYI Technical Team

Technical Discussion: Feel free to join the discussion in the comments section. For more resources, visit the APIYI documentation center at docs.apiyi.com.