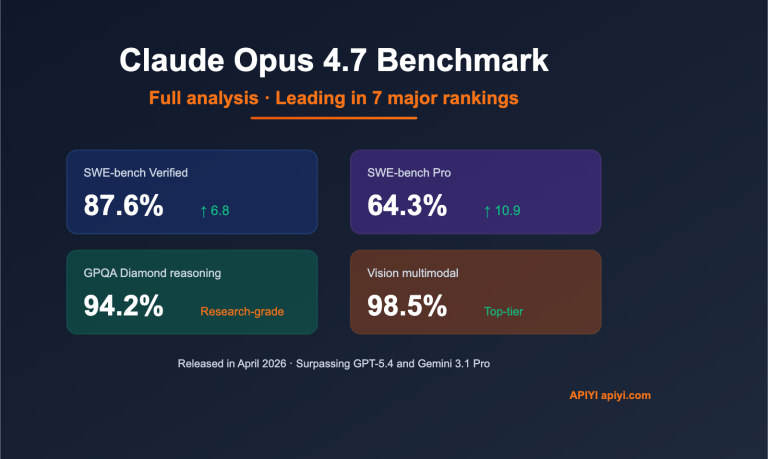

Claude Opus 4.7 Benchmark Full Analysis: Empirical Data Showing a Lead over GPT-5.4 Across 7 Major Leaderboards

Author's Note: A deep dive into the Claude Opus 4.7 benchmarks: 87.6% on SWE-bench Verified, 64.3% on SWE-bench Pro, and 94.2% on GPQA Diamond. It wipes the floor with GPT-5.4 and Gemini 3.1 Pro. An API invocation guide is included. Anthropic officially released Claude Opus 4.7 on April 16, 2026, taking the lead in 7 out of…