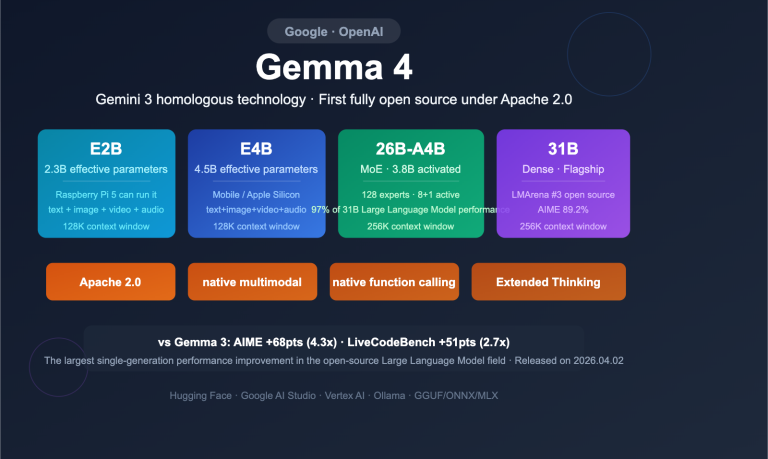

A Complete Guide to Google Gemma 4: 4 Open-Source Models, the Apache 2.0 License, and 6 Core Upgrades

Google has officially released Gemma 4, the first release in the Gemma family under the fully open-source Apache 2.0 license. Its four models cover the full range of deployment targets, from the Raspberry Pi to the data center. As the open-source version of the technology behind Gemini 3, Gemma 4 delivers an across-the-board performance lead over Gemma 3…